Abstract

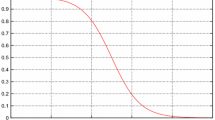

Estimation of probability density functions (pdfs) is a major topic in pattern recognition. Parametric techniques rely on an arbitrary assumption about the form of the underlying, unknown distribution. Nonparametric techniques remove this assumption. In particular, the Parzen Window (PW) estimates the pdf as a combination of local window functions centered on the patterns of a training sample. Although effective, PW suffers from several limitations. Artificial neural networks (ANNs) are, in principle, an alternative family of nonparametric models. ANNs are widely used to estimate probabilities (e.g., class-posterior probabilities), but so far they have not been exploited to estimate pdfs. This paper introduces a simple neural-based algorithm for unsupervised, nonparametric estimation of pdfs, relying on PW. The approach overcomes the limitations of PW, possibly leading to improved pdf models. An experimental demonstration of the behavior of the algorithm with respect to PW is presented, using random samples drawn from a standard exponential pdf.
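For readers unfamiliar with PW, the following is a minimal sketch of the estimator the abstract describes, evaluated on a sample from the standard exponential pdf as in the paper's experiment. It assumes a Gaussian window and the textbook width schedule h_n = h1 / sqrt(n). The function names and the default h1 = 1.0 are illustrative choices, not taken from the paper, whose own kernel and width may differ. The neural algorithm itself is not reproduced here.

```python
import math
import random
from typing import Sequence


def gaussian_window(u: float) -> float:
    """Standard normal window phi(u); it integrates to 1 over the real line."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)


def parzen_estimate(x: float, sample: Sequence[float], h1: float = 1.0) -> float:
    """Parzen Window estimate of p(x) from a 1-D training sample.

    Assumption: Gaussian window with width h_n = h1 / sqrt(n), a common
    textbook schedule; this is an illustrative choice, not the paper's.
    """
    n = len(sample)
    h_n = h1 / math.sqrt(n)
    # Average of n windows of width h_n, one centered on each training pattern.
    return sum(gaussian_window((x - xi) / h_n) for xi in sample) / (n * h_n)


def exponential_pdf(x: float) -> float:
    """Standard exponential density (rate 1), used here as the ground truth."""
    return math.exp(-x) if x >= 0.0 else 0.0


if __name__ == "__main__":
    rng = random.Random(0)
    # random.expovariate(1.0) draws from the standard exponential distribution.
    sample = [rng.expovariate(1.0) for _ in range(1000)]
    for x in (0.0, 0.5, 1.0, 2.0, 4.0):
        pw = parzen_estimate(x, sample)
        print(f"x={x:.1f}  PW={pw:.3f}  true={exponential_pdf(x):.3f}")
```

Some well-known weaknesses of PW (not necessarily the ones the paper targets) are visible directly in this sketch. Every evaluation touches all n stored patterns, so memory and evaluation cost grow linearly with the sample size. The result depends on the arbitrary choice of h1. Near x = 0 the estimate is also biased downward (roughly halved for small h_n), because windows centered on patterns close to zero spread part of their mass onto negative x, where the exponential pdf is zero.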

Copyright information

© 2006 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Trentin, E. (2006). Simple and Effective Connectionist Nonparametric Estimation of Probability Density Functions. In: Schwenker, F., Marinai, S. (eds) Artificial Neural Networks in Pattern Recognition. ANNPR 2006. Lecture Notes in Computer Science, vol 4087. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11829898_1

DOI: https://doi.org/10.1007/11829898_1

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-37951-5

Online ISBN: 978-3-540-37952-2

eBook Packages: Computer Science, Computer Science (R0)