Abstract

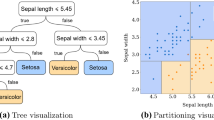

Probability trees (or Probability Estimation Trees, PETs) are decision trees with probability distributions in the leaves. Several alternative approaches for learning probability trees have been proposed, but no thorough comparison of these approaches exists.
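A probability tree differs from an ordinary classification tree only in what its leaves store: a class distribution instead of a single label. The minimal Python sketch below illustrates this idea. The names `Node`, `leaf_from_labels` and `predict_proba` are hypothetical, and the optional Laplace correction (one pseudo-count per class, the estimate associated with the C4.4 approach) is an illustrative assumption, not a description of how Tilde computes its leaf distributions.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Node:
    """Probability-tree node: internal nodes hold a test, leaves hold a class distribution."""
    test: Optional[Callable[[dict], bool]] = None  # None marks a leaf
    yes: Optional["Node"] = None                   # branch taken when test(example) is True
    no: Optional["Node"] = None                    # branch taken when test(example) is False
    dist: Dict[str, float] = field(default_factory=dict)


def leaf_from_labels(labels: List[str], classes: List[str], laplace: bool = True) -> Node:
    """Build a leaf whose distribution estimates P(class | leaf) from training labels.

    Hypothetical helper: with laplace=True each class gets one pseudo-count,
    so no class ever receives probability exactly 0.
    """
    counts = Counter(labels)
    n, k = len(labels), len(classes)
    if laplace:
        dist = {c: (counts[c] + 1) / (n + k) for c in classes}
    elif n == 0:
        dist = {c: 1 / k for c in classes}  # no data reached this leaf: fall back to uniform
    else:
        dist = {c: counts[c] / n for c in classes}  # plain relative frequencies
    return Node(dist=dist)


def predict_proba(node: Node, example: dict) -> Dict[str, float]:
    """Route an example down to a leaf and return that leaf's class distribution."""
    while node.test is not None:
        node = node.yes if node.test(example) else node.no
    return node.dist


if __name__ == "__main__":
    classes = ["pos", "neg"]
    tree = Node(
        test=lambda e: e["size"] > 3,  # hypothetical attribute test
        yes=leaf_from_labels(["pos", "pos", "neg"], classes),
        no=leaf_from_labels(["neg", "neg"], classes),
    )
    print(predict_proba(tree, {"size": 5}))  # {'pos': 0.6, 'neg': 0.4}
```

The Laplace-corrected leaf reached by `{"size": 5}` holds two `pos` and one `neg` training label, giving (2 + 1)/(3 + 2) = 0.6 for `pos` rather than the unsmoothed 2/3.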

In this paper we experimentally compare the main approaches using the relational decision tree learner Tilde, on both non-relational and relational datasets. In addition to the main existing approaches, we consider a novel variant of an existing approach based on the Bayesian Information Criterion (BIC). Our main conclusion is that, overall, trees built using the C4.5 approach or the C4.4 approach (C4.5 without post-pruning) have the best predictive performance. If the number of classes is low, however, BIC performs equally well. An additional advantage of BIC is that its trees are considerably smaller than those built with the C4.5 or C4.4 approach.
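The abstract does not spell out the paper's novel BIC variant, so the sketch below shows only the standard Bayesian Information Criterion (Schwarz) used as a split criterion: a candidate split is kept only if it increases the maximum log-likelihood minus half the number of free parameters times log N. The function names, the relative-frequency leaf estimates, and the choice of N as the total number of training examples are all assumptions for illustration.

```python
import math
from collections import Counter
from typing import List, Sequence


def leaf_loglik(labels: Sequence[str]) -> float:
    """Maximum log-likelihood of labels under one leaf distribution:
    sum over classes c of n_c * log(n_c / n), using relative frequencies."""
    n = len(labels)
    return sum(c * math.log(c / n) for c in Counter(labels).values())


def bic(partition: List[Sequence[str]], num_classes: int, n_total: int) -> float:
    """Standard BIC of a set of leaves: log-likelihood - (params / 2) * log(N).

    Each leaf contributes num_classes - 1 free probabilities.
    """
    loglik = sum(leaf_loglik(part) for part in partition if part)  # skip empty leaves
    params = len(partition) * (num_classes - 1)
    return loglik - 0.5 * params * math.log(n_total)


def split_improves_bic(
    labels: Sequence[str],
    left: Sequence[str],
    right: Sequence[str],
    num_classes: int,
    n_total: int,
) -> bool:
    """Hypothetical stopping rule: accept the binary split only if BIC increases."""
    return bic([left, right], num_classes, n_total) > bic([labels], num_classes, n_total)


if __name__ == "__main__":
    node = ["a"] * 10 + ["b"] * 10
    # A split that separates the classes perfectly is worth its extra parameters.
    print(split_improves_bic(node, ["a"] * 10, ["b"] * 10, 2, 20))
    # A split that leaves both children 50/50 only adds parameters, so it is rejected.
    print(split_improves_bic(node, ["a"] * 5 + ["b"] * 5, ["a"] * 5 + ["b"] * 5, 2, 20))
```

Because every additional leaf costs (k − 1)/2 · log N, where k is the number of classes, a split must buy a substantial likelihood gain to be accepted. This is consistent with the abstract's observation that BIC trees are considerably smaller.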

References

ILPnet2 applications descriptions, http://www-ai.ijs.si/~ilpnet2/apps/

Blockeel, H., De Raedt, L.: Top-down induction of first order logical decision trees. Artificial Intelligence 101(1-2), 285–297 (1998)

Fierens, D., Ramon, J., Blockeel, H., Bruynooghe, M.: A comparison of approaches for learning first-order logical Probability Estimation Trees. In: Kramer, S., Pfahringer, B. (eds.) ILP 2005. LNCS (LNAI), vol. 3625, pp. 121–135. Springer, Heidelberg (2005)

Fierens, D., Ramon, J., Blockeel, H., Bruynooghe, M.: A comparison of approaches for learning probability trees. Technical Report CW 418, Department of Computer Science, Katholieke Universiteit Leuven (2005)

Friedman, N., Goldszmidt, M.: Learning Bayesian networks with local structure. In: Learning and Inference in Graphical Models. MIT Press, Cambridge (1998)

Knobbe, A.J.: Data mining for adaptive system management. In: Proceedings of the 1st International Conference and exhibition on the Practical Application of Knowledge Discovery and Data Mining, PADD 1997 (1997)

Kramer, S., De Raedt, L., Helma, C.: Molecular feature mining in HIV data. In: Proceedings of the 7th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD 2001), pp. 136–143 (2001)

Merz, C., Murphy, P.: UCI repository of machine learning databases. University of California, Department of Information and Computer Science, Irvine, CA (1996), http://www.ics.uci.edu/~mlearn/mlrepository.html

Michie, D., Muggleton, S., Page, D., Srinivasan, A.: To the international computing community: A new east-west challenge. Technical report, Oxford University Computing Laboratory, Oxford, UK (1994), Available at ftp.comlab.ox.ac.uk

Neville, J., Jensen, D., Friedland, L., Hay, M.: Learning relational probability trees. In: Proceedings of the 9th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2003 (2003)

Provost, F., Domingos, P.: Tree induction for probability-based ranking. Machine Learning 52, 199–216 (2003)

Schwarz, G.: Estimating the dimension of a model. Annals of Statistics 6, 461–464 (1978)

Srinivasan, A., King, R., Bristol, D.: An assessment of ILP-assisted models for toxicology and the PTE-3 experiment. In: Džeroski, S., Flach, P.A. (eds.) ILP 1999. LNCS (LNAI), vol. 1634, p. 291. Springer, Heidelberg (1999)

Copyright information

© 2005 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Fierens, D., Ramon, J., Blockeel, H., Bruynooghe, M. (2005). A Comparison of Approaches for Learning Probability Trees. In: Gama, J., Camacho, R., Brazdil, P.B., Jorge, A.M., Torgo, L. (eds) Machine Learning: ECML 2005. ECML 2005. Lecture Notes in Computer Science, vol 3720. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11564096_54

DOI: https://doi.org/10.1007/11564096_54

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-29243-2

Online ISBN: 978-3-540-31692-3

eBook Packages: Computer Science, Computer Science (R0)