Abstract

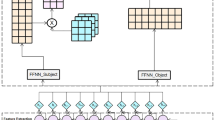

Traditional Chinese Medicine (TCM) texts contain knowledge accumulated over thousands of years, which makes extracting that knowledge an important problem. Named Entity Recognition (NER) is an effective tool for extracting knowledge from TCM texts. However, these texts contain many rare characters and homophones, and entity attribution is more complex, which makes NER on TCM text (TCMNER) more challenging. To address this issue, this paper introduces MC-TCMNER, a novel method that leverages the multi-modal features of Chinese characters and incorporates a training strategy based on contrastive learning. Experiments show that the proposed method achieves an F1 score of 94.05% on the TCMNER dataset and 52.84% on the C-CLUE benchmark, demonstrating the effectiveness of MC-TCMNER. Furthermore, because comprehensive datasets for TCMNER are scarce, we publicly release a TCMNER dataset that we collected and annotated.
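For readers unfamiliar with how an F1 score is typically obtained for NER, the following is a minimal sketch of entity-level F1 over BIO-tagged sequences. It assumes exact-match scoring (span boundaries and type must both agree) and a BIO tagging scheme; the abstract does not state the paper's exact evaluation protocol, and the labels `HERB` and `SYM` as well as the function names are hypothetical, chosen only for illustration.

```python
def extract_spans(tags):
    """Collect (start, end, type) entity spans from a BIO tag sequence."""
    spans = set()
    start, etype = None, None
    # A trailing "O" sentinel closes any entity that runs to the end.
    for i, tag in enumerate(list(tags) + ["O"]):
        continues = tag.startswith("I-") and etype == tag[2:]
        if start is not None and not continues:
            spans.add((start, i, etype))  # end index is exclusive
            start, etype = None, None
        if tag.startswith("B-"):
            start, etype = i, tag[2:]
    return spans


def entity_f1(gold_tags, pred_tags):
    """Exact-match entity-level F1 (assumed protocol, not the paper's stated one)."""
    gold, pred = extract_spans(gold_tags), extract_spans(pred_tags)
    true_positives = len(gold & pred)
    precision = true_positives / len(pred) if pred else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical example: the second entity is predicted with a wrong boundary.
gold = ["B-HERB", "I-HERB", "O", "B-SYM", "I-SYM"]
pred = ["B-HERB", "I-HERB", "O", "B-SYM", "O"]
score = entity_f1(gold, pred)
```

In this example one of two gold entities is matched exactly, so precision and recall are both 0.5 and the F1 score is 0.5. Under exact-match scoring a boundary error counts as both a missed entity and a spurious one.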



Acknowledgment

This work was supported by the Industry-University-Research Cooperation Project of Fujian Science and Technology Planning (No. 2022H6012), the Science and Technology Research Project of Jiangxi Provincial Department of Education (No. GJJ 2206003), and the Natural Science Foundation of Fujian Province of China (No. 2021J011169, No. 2022J011224).


Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Cao, S., Wu, Q. (2024). MC-TCMNER: A Multi-modal Fusion Model Combining Contrast Learning Method for Traditional Chinese Medicine NER. In: Rudinac, S., et al. MultiMedia Modeling. MMM 2024. Lecture Notes in Computer Science, vol 14556. Springer, Cham. https://doi.org/10.1007/978-3-031-53311-2_25


DOI: https://doi.org/10.1007/978-3-031-53311-2_25


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-53310-5

Online ISBN: 978-3-031-53311-2

eBook Packages: Computer Science (R0)