Abstract

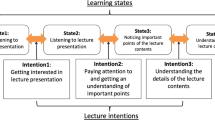

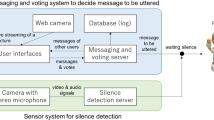

In lectures with presentation slides, such as e-Learning lectures on video, it is important for lecturers to control their non-verbal behavior, including gaze, gesture, and paralanguage, to direct learners’ attention to the slide or oral contents they intend to emphasize. However, even experienced lecturers find it difficult to use and maintain non-verbal behavior appropriately so as to promote learners’ interest and understanding. This paper proposes robot lecture, in which a communication robot substitutes for a human lecturer and reconstructs the lecturer’s non-verbal behavior to enhance the lecture. Towards such reconstruction, we have designed a model of non-verbal behavior in lecture and developed a robot lecture system that follows the model to detect and reconstruct insufficient or inappropriate behavior and then conducts the reconstructed lecture. This paper describes a case study with the system, whose purpose was to ascertain the benefits of robot lecture by comparing three conditions: a video lecture conducted by a human, a robot lecture that simply reproduces the original, and a robot lecture that reconstructs the original. The results suggest that the robot lecture involving reconstruction promotes learners’ understanding of the lecture slides more than either the video lecture or the robot lecture involving simple reproduction.

Acknowledgments

This work is supported in part by JSPS KAKENHI Grant Number 17H01992.

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Kashihara, A., Ishino, T., Goto, M. (2019). Robot Lecture for Enhancing Non-verbal Behavior in Lecture. In: Isotani, S., Millán, E., Ogan, A., Hastings, P., McLaren, B., Luckin, R. (eds) Artificial Intelligence in Education. AIED 2019. Lecture Notes in Computer Science, vol 11626. Springer, Cham. https://doi.org/10.1007/978-3-030-23207-8_24

DOI: https://doi.org/10.1007/978-3-030-23207-8_24

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-23206-1

Online ISBN: 978-3-030-23207-8

eBook Packages: Computer Science, Computer Science (R0)