Abstract

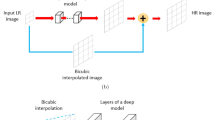

Machine learning has become a popular method across many branches of industry and has been successfully applied to a wide range of practical tasks. Optical character recognition (OCR), one of the most challenging tasks in computer vision, has made significant progress thanks to machine learning. Modern OCR systems provide high-accuracy predictions for both scanned documents and real-scene images. Despite this, such models still struggle with low-quality images, especially in extreme cases of heavily compressed images. The traditional way to overcome this weakness of deep learning models is to extend the training dataset so that it covers such distortions. However, this requires retraining the model and cannot guarantee the same accuracy on the original dataset. We tackle the problem from another perspective. In this paper, we investigate how a super-resolution preprocessing step can help an OCR model recognize text in low-quality images without modifying the model itself. We built a super-resolution system trained on our custom synthetic dataset and carefully analyzed which loss functions are suitable for text images. Finally, we show that the custom-trained super-resolution system achieves much better results in terms of both restored image quality and text recognition accuracy.
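The abstract evaluates the super-resolution (SR) preprocessing step on two axes: restored image quality and text recognition accuracy. It does not name the exact metrics. The sketch below therefore assumes two common choices. For image quality it uses peak signal-to-noise ratio (PSNR). For recognition it uses character-level accuracy, defined as one minus the normalized Levenshtein edit distance. It then compares OCR on raw versus super-resolved inputs. The `ocr` and `super_resolve` arguments are hypothetical placeholders for a text recognizer and an SR model, not the authors' code.

```python
import math
from typing import Callable, List, Sequence, Tuple

# Grayscale image as rows of pixel values in [0, max_value] (assumed format).
Image = List[List[float]]


def psnr(reference: Image, restored: Image, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two same-sized grayscale images."""
    if len(reference) != len(restored) or any(
        len(r) != len(s) for r, s in zip(reference, restored)
    ):
        raise ValueError("images must have the same shape")
    errors = [
        (a - b) ** 2
        for row_ref, row_res in zip(reference, restored)
        for a, b in zip(row_ref, row_res)
    ]
    mse = sum(errors) / len(errors)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and substitutions."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # delete ca
                current[j - 1] + 1,            # insert cb
                previous[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        previous = current
    return previous[-1]


def character_accuracy(ground_truth: str, prediction: str) -> float:
    """1 - edit_distance / len(ground_truth), clipped at 0 (assumed metric)."""
    if not ground_truth:
        return 1.0 if not prediction else 0.0
    return max(0.0, 1.0 - levenshtein(ground_truth, prediction) / len(ground_truth))


def compare_pipelines(
    samples: Sequence[Tuple[object, str]],
    ocr: Callable[[object], str],            # hypothetical text recognizer
    super_resolve: Callable[[object], object],  # hypothetical SR model
) -> Tuple[float, float]:
    """Mean character accuracy of OCR on raw vs. super-resolved inputs."""
    if not samples:
        raise ValueError("need at least one sample")
    raw = [character_accuracy(text, ocr(img)) for img, text in samples]
    sr = [character_accuracy(text, ocr(super_resolve(img))) for img, text in samples]
    return sum(raw) / len(raw), sum(sr) / len(sr)
```

The SR step is applied only before recognition, and the OCR model stays fixed. This mirrors the paper's stated goal of improving results without retraining the recognizer. Under this setup, a higher second value returned by `compare_pipelines` indicates that the preprocessing helped.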




Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Baranov, M., Serhii, I., Shvetsov, D., Shcherbyna, Y. (2024). Application of Super Resolution for Optical Character Recognition in Low Quality Images. In: Yang, XS., Sherratt, R.S., Dey, N., Joshi, A. (eds) Proceedings of Eighth International Congress on Information and Communication Technology. ICICT 2023. Lecture Notes in Networks and Systems, vol 695. Springer, Singapore. https://doi.org/10.1007/978-981-99-3043-2_11


DOI: https://doi.org/10.1007/978-981-99-3043-2_11


Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-3042-5

Online ISBN: 978-981-99-3043-2

eBook Packages: Engineering, Engineering (R0)