Abstract

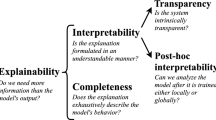

Automated navigation technology has become integral to intelligent transportation and smart city systems. Several international technology organizations have recognized the potential of autonomous vehicular systems and are working towards their full development for mainstream use. From deep learning algorithms for road object detection to intrusion detection systems for CAN bus monitoring, a self-driving vehicle depends on multiple in-vehicle modules working simultaneously to navigate correctly while ensuring the physical safety and digital privacy of the user. Transparency about the vehicle's decision-making can assure the user of its credibility and reliability. This paper introduces explainable artificial intelligence (XAI), which aims to connect the decision-making processes of Autonomous Vehicle Systems (AVS) with human trust. It provides insight into the role of XAI in autonomous vehicles and seeks to increase human trust in AI-based solutions in this sector. The paper also traces the trajectory of transportation advancements and the current state of the industry. A comparative quantitative and qualitative analysis of simulations of XAI and vehicular smart systems showcases the developments achieved. Visual explanatory methods and an intrusion detection classifier were created as part of this research and achieved significant results relative to extant works.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Madhav, A.V.S., Tyagi, A.K. (2023). Explainable Artificial Intelligence (XAI): Connecting Artificial Decision-Making and Human Trust in Autonomous Vehicles. In: Singh, P.K., Wierzchoń, S.T., Tanwar, S., Rodrigues, J.J.P.C., Ganzha, M. (eds) Proceedings of Third International Conference on Computing, Communications, and Cyber-Security. Lecture Notes in Networks and Systems, vol 421. Springer, Singapore. https://doi.org/10.1007/978-981-19-1142-2_10

DOI: https://doi.org/10.1007/978-981-19-1142-2_10

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-1141-5

Online ISBN: 978-981-19-1142-2

eBook Packages: Engineering, Engineering (R0)