Abstract

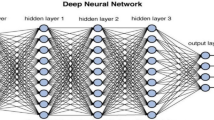

Deep learning has recently achieved good results in a range of fields such as emotion detection, medical image processing, and speech recognition. In pursuit of more precise results, many researchers add new types of network layers and increase the size of the neural network. However, this leads to deeper and more intricate models, and training and evaluating them requires intensive computation and substantial computing resources that general-purpose processors cannot provide. Hardware accelerators such as Field Programmable Gate Arrays (FPGAs) have therefore been employed to accelerate neural networks; with their reconfigurability and low power consumption, FPGAs are used to improve the throughput of deep learning networks at a reasonable cost. This paper reviews the typical technologies and methods used in recent years to accelerate deep learning networks on FPGAs, analyzes their advantages and disadvantages, and gives feasible suggestions for future research directions. It is intended as a reference for researchers in the fields of deep learning acceleration and hardware optimization.

Acknowledgements

This research is supported by (1) the National Natural Science Foundation of China under Grant (Youth) No. 52001039 (2020-2022) and (2) the Shandong Natural Science Foundation of China under Grant No. ZR2019LZH005 (2020-2022).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Lv, Z., Zhang, J. (2022). A Survey of FPGA-Based Deep Learning Acceleration Research. In: Yao, J., Xiao, Y., You, P., Sun, G. (eds) The International Conference on Image, Vision and Intelligent Systems (ICIVIS 2021). Lecture Notes in Electrical Engineering, vol 813. Springer, Singapore. https://doi.org/10.1007/978-981-16-6963-7_5

DOI: https://doi.org/10.1007/978-981-16-6963-7_5

Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-6962-0

Online ISBN: 978-981-16-6963-7

eBook Packages: Engineering, Engineering (R0)