Abstract

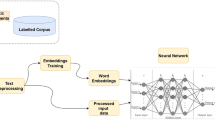

The online environment is conducive to building connections with people all around the world, across geographical boundaries. However, opening participation to everyone comes at the cost of exposure to abusive language and toxic comments. Barring people from discussions just because some users misbehave is not a viable option. The framework proposed in this research uses deep learning to recognize toxic online comments. These comments are then categorized using natural language processing tools.

Change history

29 March 2022

Retraction Note to: Chapter “Using Bidirectional LSTMs with Attention for Categorization of Toxic Comments” in: A. Khanna et al. (eds.), International Conference on Innovative Computing and Communications, Advances in Intelligent Systems and Computing 1387, https://doi.org/10.1007/978-981-16-2594-7_49

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Tobias, Z., Bose, S. (2022). RETRACTED CHAPTER: Using Bidirectional LSTMs with Attention for Categorization of Toxic Comments. In: Khanna, A., Gupta, D., Bhattacharyya, S., Hassanien, A.E., Anand, S., Jaiswal, A. (eds) International Conference on Innovative Computing and Communications. Advances in Intelligent Systems and Computing, vol 1387. Springer, Singapore. https://doi.org/10.1007/978-981-16-2594-7_49

DOI: https://doi.org/10.1007/978-981-16-2594-7_49

Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-2593-0

Online ISBN: 978-981-16-2594-7

eBook Packages: Intelligent Technologies and Robotics, Intelligent Technologies and Robotics (R0)