Abstract

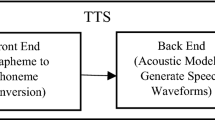

Recent progress in text-to-speech (TTS) synthesis technology has allowed computers to read any written text aloud in a voice that is artificial but almost indistinguishable from real human speech. This improvement in the quality of synthetic speech has broadened the range of applications of TTS technology. This chapter explains the mechanism of a state-of-the-art TTS system, after briefly introducing some conventional speech synthesis methods along with their advantages and weaknesses. A TTS system consists of two main components, text analysis and speech signal generation, each of which is detailed in its own section. The text analysis section describes what kinds of linguistic features need to be extracted from text, and then presents one of the latest studies at NICT, in which linguistic features are extracted automatically from plain text by applying an advanced deep learning technique. The later sections detail state-of-the-art speech signal generation using deep neural networks, and then introduce a pioneering study recently conducted at NICT, in which leading-edge deep neural networks that directly generate speech waveforms are combined with subband decomposition signal processing to enable rapid generation of high-quality, human-sounding speech.

K. Tachibana belonged to NICT at the time of writing.

Notes

1. “Vocoder” is a term coined from “voice coder.” It generally refers to the whole process of encoding and decoding speech signals for transmission, compression, encryption, etc., but in the field of TTS, “vocoder” often refers only to the decoding part, where speech waveforms are reconstructed from their parameterized representations.

Copyright information

© 2020 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Shiga, Y., Ni, J., Tachibana, K., Okamoto, T. (2020). Text-to-Speech Synthesis. In: Kidawara, Y., Sumita, E., Kawai, H. (eds) Speech-to-Speech Translation. SpringerBriefs in Computer Science. Springer, Singapore. https://doi.org/10.1007/978-981-15-0595-9_3

DOI: https://doi.org/10.1007/978-981-15-0595-9_3

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-15-0594-2

Online ISBN: 978-981-15-0595-9

eBook Packages: Computer Science, Computer Science (R0)