Abstract

This paper examines the impulse control of a standard Brownian motion under a discounted criterion. In contrast with the dynamic programming approach, this paper first imbeds the stochastic control problem into an infinite-dimensional linear program over a space of measures and derives a simpler nonlinear optimization problem that has a familiar interpretation. Optimal solutions are obtained for initial positions in a restricted range. Duality theory in linear programming is then used to establish optimality for arbitrary initial positions.

This research was supported in part by the National Science Foundation under grant DMS-1108782 and by grant award 246271 from the Simons Foundation.

Keywords

- Impulse control

- Discounted criterion

- Infinite dimensional linear programming

- Expected occupation measures

1 Introduction

When one seeks to control a stochastic process and every intervention incurs a strictly positive cost, one must select a sequence of separate intervention times and amounts. The resulting stochastic problem is therefore an impulse control problem in which the decision maker seeks to either maximize a reward or minimize a cost. This paper continues the examination of the impulse control of Brownian motion. It considers a discounted cost criterion while a companion paper [5] studies the long-term average criterion. The aim of the paper is to illustrate a solution approach which first imbeds the stochastic control problem into an infinite-dimensional linear program over a space of measures and then reduces the linear program to a simpler nonlinear optimization. Contrasting with the long-term average paper, the dependence of the value function on the initial position of the process requires the use of duality in linear programming to obtain a complete solution.

Impulse control problems have been extensively studied using a quasi-variational approach; now classical works include [1, 3] while the recent paper [2] examines a Brownian inventory model. This paper extends a linear programming approach used on optimal stopping problems [4]. See [5] for additional references.

Let \(W\) be a standard Brownian motion process with natural filtration \(\{\mathcal{F}_t\}\). An impulse control policy consists of a pair of sequences \((\tau ,Y):=\{(\tau _k,Y_k): k\in \mathbb {N}\}\) in which \(\tau _k\) is the \(\{\mathcal{F}_t\}\)-stopping time of the \(k\)th impulse and the \(\mathcal{F}_{\tau _k}\)-measurable variable \(Y_k\) gives the \(k\)th impulse size. The sequence \(\{\tau _k: k\in \mathbb {N}\}\) is required to be non-decreasing, a natural assumption in that intervention \(k+1\) must occur no earlier than intervention \(k\). For a policy \((\tau ,Y)\), the impulse-controlled Brownian motion process is given by
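Assuming the usual additive form, in which the process starts at \(x_0\) and the impulse \(Y_k\) is added at time \(\tau_k\), this reads:

```latex
X(t) = x_0 + W(t) + \sum_{k=1}^{\infty} I_{\{\tau_k \le t\}}\, Y_k, \qquad t \ge 0.
```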

The goal is to control the (discounted) second moment of \(X\) subject to (discounted) fixed and proportional costs for interventions. Let \((\tau ,Y)\) be an impulse control policy. Define \(c_0(x) = x^2\). Let \(k_1 > 0\) denote the fixed costs incurred for each intervention and let \(k_2 \ge 0\) be a cost proportional to the size of the intervention. Define the impulse cost function \(c_1(y,z) = k_1 + k_2|z-y|\), in which \(y\) denotes the pre-jump location of \(X\) (typically far from \(0\)) and \(z\) denotes the post-jump location of \(X\) which is thought to be close to \(0\). Let \(\alpha > 0\) denote the discount rate. The objective function is
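In terms of \(c_0\), \(c_1\) and \(\alpha\), and assuming the running and impulse costs are simply added, criterion (1) would be:

```latex
J(\tau,Y;x_0) = \mathbb{E}_{x_0}\!\left[ \int_0^\infty e^{-\alpha t}\, c_0(X(t))\, dt
  + \sum_{k=1}^{\infty} e^{-\alpha \tau_k}\, c_1\big(X(\tau_k-), X(\tau_k)\big) \right]. \tag{1}
```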

The controller must balance the desire to keep the process \(X\) near \(0\) so as to have a small second moment against the desire to limit the number and/or sizes of interventions so as to have a small impulse cost. Since the goal is to minimize the objective function, impulse control policies having \(J(\tau ,Y;x_0)= \infty \) are undesirable. We therefore restrict attention to the impulse policies for which \(J(\tau ,Y;x_0)\) is finite. Denote this class of admissible controls by \(\mathcal{A}\).

We make five important observations about impulse policies. Firstly, “\(0\)-impulses” which do not change the state only increase the cost and so can be excluded from consideration. Secondly, the symmetry of the dynamics and costs means that any impulse \((\tau _k,Y_k)\) which would cause \(\mathrm{sgn}(X(\tau _k)) = -\mathrm{sgn}(X(\tau _k-))\) on a set of positive probability can be replaced, at no greater cost (strictly smaller cost when \(k_2 >0\)), by an impulse for which \(\tilde{X}(\tau _k) = \mathrm {sgn}(X(\tau _k-))|X(\tau _k)|\). Thus we can also restrict analysis to those policies for which all impulses keep the process on the same side of \(0\). Next, any policy \((\tau ,Y)\) with \(\lim _{k\rightarrow \infty } \tau _k =:\tau _\infty < \infty \) on a set of positive probability will have infinite cost, since infinitely many fixed costs \(k_1\) are incurred by the finite time \(\tau _\infty \); so for every admissible policy \(\tau _k \rightarrow \infty \) \(a.s.\) as \(k\rightarrow \infty \). Next let \((\tau ,Y)\) be a policy for which there is some \(k\) such that \(\tau _k = \tau _{k+1}\) on a set of positive probability. Again due to the presence of the fixed intervention cost \(k_1\), the total cost up to time \(\tau _{k+1}\) will be at least \(k_1 \mathbb {E}[e^{-\alpha \tau _k} I(\tau _k = \tau _{k+1})]\) smaller by combining these interventions into a single intervention on this set. Hence we may restrict policies to those for which \(\tau _k < \tau _{k+1}\) \(a.s.\) for each \(k\).

The final observation is similar. Suppose \((\tau ,Y)\) is a policy such that on a set \(G\) of positive probability \(\tau _k < \infty \) and \(|X(\tau _k)| > |X(\tau _k-)|\) for some \(k\). Consider a modification of this impulse policy and resulting process \(\tilde{X}\) which simply fails to implement this impulse on \(G\). Define the stopping time \(\sigma = \inf \{t >\tau _k: |X(t)| \le |\tilde{X}(t)|\}\). Notice that the running costs accrued by \(\tilde{X}\) over \([\tau _k,\sigma )\) are smaller than those accrued by \(X\). Finally, at time \(\sigma \), introduce an intervention on the set \(G\) which moves the \(\tilde{X}\) process so that \(\tilde{X}(\sigma ) = X(\sigma )\). This intervention will incur a cost which is smaller than the cost for the process \(X\) at time \(\tau _k\). As a result, we may restrict the impulse control policies to those for which every impulse decreases the distance of the process from the origin.

2 Restricted Problem and Measure Formulation

The solution of the impulse control problem is obtained by first considering a subclass of the admissible impulse control pairs.

Condition 1

Let \(\mathcal{A}_1 \subset \mathcal{A}\) be those policies \((\tau ,Y)\) such that the resulting process \(X\) is bounded; that is, for \((\tau ,Y) \in \mathcal{A}_1\), there exists some \(M < \infty \) such that \(|X(t)| \le M\) for all \(t \ge 0\).

Note that for each \(M > 0\), any impulse control which has the process jump closer to \(0\) whenever \(|X(t-)|=M\) is in the class \(\mathcal{A}_1\) so this collection is non-empty. The bound is not required to be uniform for all \((\tau ,Y) \in \mathcal{A}_1\). The restricted impulse control problem is one of minimizing \(J(\tau ,Y;x_0)\) over all policies \((\tau ,Y) \in \mathcal{A}_1\).

We capture the expected behavior of the process and impulses with discounted measures. Let \((\tau ,Y) \in \mathcal{A}_1\) be given and consider \(f\in C^2(\mathbb {R})\). Then upon letting \(t\rightarrow \infty \) after taking expectations, the general Dynkin’s formula results in
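A form of this identity (2) that is consistent with the definitions of \(A\) and \(B\) given just after it, and with the way (2) is later applied to \(\phi\), \(\psi\) and \(g_0\), is the following. The arrangement of terms is an assumption.

```latex
f(x_0) = \mathbb{E}_{x_0}\!\left[ \int_0^\infty e^{-\alpha s}\, \big(\alpha f - Af\big)(X(s))\, ds
  + \sum_{k=1}^{\infty} e^{-\alpha \tau_k}\, Bf\big(X(\tau_k-), X(\tau_k)\big) \right] \tag{2}
```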

in which the transversality condition \(\lim _{t\rightarrow \infty } \mathbb {E}_{x_0}\left[ e^{-\alpha t} f(X(t))\right] = 0\) follows from the boundedness of \(X\). Note the generator of the Brownian motion process is \(Af(x) = (1/2) f''(x)\). To simplify notation, define \(Bf(y,z) = f(y) - f(z)\).

Define the discounted expected occupation measure \(\mu _0\) and the discounted impulse measure \(\mu _1\) such that for each \(G, G_1, G_2 \subset \mathbb {R}\),
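Assuming the standard definitions of such measures, with \(I_G\) denoting the indicator of \(G\), (3) would read:

```latex
\mu_0(G) = \mathbb{E}_{x_0}\!\left[ \int_0^\infty e^{-\alpha t}\, I_G(X(t))\, dt \right],
\qquad
\mu_1(G_1 \times G_2) = \mathbb{E}_{x_0}\!\left[ \sum_{k=1}^{\infty} e^{-\alpha \tau_k}\,
  I_{G_1 \times G_2}\big(X(\tau_k-), X(\tau_k)\big) \right]. \tag{3}
```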

Notice that the total mass of \(\mu _0\) is \(1/\alpha \) while \(\mu _1\) is a finite measure since \(J(\tau ,Y;x_0)\) is finite. Rewriting the objective function and Dynkin’s formula in terms of these measures imbeds the impulse control problem in the linear program
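A sketch of this program (4) follows. The class of test functions and the exact side conditions on the measures are assumptions, chosen to match the surrounding text.

```latex
\begin{array}{ll}
\text{Minimize} & \displaystyle \int c_0\, d\mu_0 + \int c_1\, d\mu_1 \\[1ex]
\text{subject to} & \displaystyle \int (\alpha f - Af)\, d\mu_0 + \int Bf\, d\mu_1 = f(x_0)
   \quad \text{for all } f \in C^2(\mathbb{R}), \\[1ex]
 & \mu_0(\mathbb{R}) = 1/\alpha, \qquad \mu_1 \text{ a finite measure}.
\end{array} \tag{4}
```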

We now wish to introduce an auxiliary linear program derived from (4) which only has the \(\mu _1\) measure as its variable and has fewer constraints. Define \(\phi (x) = e^{-\sqrt{2\alpha }x}\) and \(\psi (x) = e^{\sqrt{2\alpha }x}\). Notice that \(\phi \) is a strictly decreasing solution while \(\psi \) is a strictly increasing solution of the homogeneous equation \(\alpha f - Af = 0\). For each \((\tau ,Y)\in \mathcal{A}_1\), the resulting process \(X\) is bounded so we can use both \(\phi \) and \(\psi \) in (2). This results in the two constraints
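Since \(\alpha \phi - A\phi = 0\) and \(\alpha \psi - A\psi = 0\), the \(\mu_0\) integral drops out and, with \(Bf(y,z) = f(y) - f(z)\), the pair (5) would be:

```latex
\int B\phi\, d\mu_1 = \phi(x_0), \qquad \int B\psi\, d\mu_1 = \psi(x_0). \tag{5}
```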

which only constrain the measure \(\mu _1\). Note that the monotonicity and positivity of both \(\phi \) and \(\psi \) require the support of \(\mu _1\) to be such that the two integrals in (5) are positive. We can also take advantage of the symmetry inherent in the problem. Define \(p_0(x) = \cosh (\sqrt{2\alpha }x)\). Then averaging the two constraints (5) yields
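Because \(p_0 = (\phi + \psi)/2\) and \(B\) is linear, the averaged constraint is presumably:

```latex
\int Bp_0\, d\mu_1 = p_0(x_0).
```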

Using \(g_0(x) = (\alpha x^2 + 1)/\alpha ^2\) in (2), where again the boundedness of \(X\) implies that the transversality condition is satisfied, yields
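A direct computation gives \(\alpha g_0(x) - Ag_0(x) = x^2 + 1/\alpha - 1/\alpha = x^2 = c_0(x)\), so the resulting identity (6) would be:

```latex
\int c_0\, d\mu_0 + \int Bg_0\, d\mu_1 = g_0(x_0). \tag{6}
```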

Let \([c_1-Bg_0]\) denote the difference of the two functions \(c_1\) and \(Bg_0\). Using (6) in (1) establishes that

and hence that
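Substituting the \(g_0\) identity for \(\int c_0\, d\mu_0\) in the cost, the resulting expression is presumably:

```latex
J(\tau,Y;x_0) = \int c_0\, d\mu_0 + \int c_1\, d\mu_1
             = g_0(x_0) + \int [c_1 - Bg_0]\, d\mu_1 .
```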

so the objective function value only depends on the measure \(\mu _1\). Since the objective function for every \((\tau ,Y) \in \mathcal{A}_1\) contains the same additive constant \(g_0(x_0)\), this term may be ignored for the purposes of optimization, but it must be included to obtain the correct value of the objective function. Now form the auxiliary linear program
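A sketch of this auxiliary program (8) follows. Whether the constant \(g_0(x_0)\) is carried inside the objective is an assumption; it does not affect the minimizer.

```latex
\begin{array}{ll}
\text{Minimize} & \displaystyle g_0(x_0) + \int [c_1 - Bg_0]\, d\mu_1 \\[1ex]
\text{subject to} & \displaystyle \int Bp_0\, d\mu_1 = p_0(x_0), \qquad \mu_1 \text{ a finite measure}.
\end{array} \tag{8}
```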

Let \(V_1(x_0)\) denote the value of the impulse control problem over policies in \(\mathcal{A}_1\), \(V_{lp}\) denote the value of (4) and \(V_{aux}\) denote the value of (8). The following proposition is immediate.

Proposition 2

\(V_{aux}(x_0) \le V_{lp}(x_0) \le V_1(x_0)\).

Remark 3

Our analysis will involve other auxiliary linear programs as well. One will replace the single constraint in (8) with the pair of constraints (5) while another will limit the constraints in (4) to a single function. Each auxiliary program will provide a lower bound on \(V_{lp}(x_0)\) and hence on \(V_1(x_0)\).
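The two facts about the test functions used in this section, namely that \(\phi\), \(\psi\) and \(p_0\) solve \(\alpha f - Af = 0\) while \(\alpha g_0 - Ag_0 = c_0\), can be checked numerically. The following is a minimal sketch using a central second difference for \(f''\); the value of `alpha`, the step `h` and the sample points are illustrative assumptions, not values from the paper.

```python
import math

def residual(f, x, alpha, h=1e-3):
    """Return alpha*f(x) - (1/2) f''(x), with f'' from a central second difference."""
    second = (f(x + h) - 2.0 * f(x) + f(x - h)) / (h * h)
    return alpha * f(x) - 0.5 * second

alpha = 0.7                      # illustrative discount rate (assumption)
r = math.sqrt(2.0 * alpha)

phi = lambda x: math.exp(-r * x)                    # decreasing solution
psi = lambda x: math.exp(r * x)                     # increasing solution
p0 = lambda x: math.cosh(r * x)                     # symmetric combination
g0 = lambda x: (alpha * x * x + 1.0) / alpha ** 2   # particular solution for c0(x) = x^2

points = [-1.5, -0.3, 0.0, 0.8, 2.0]

# Largest residual of the homogeneous equation over phi, psi, p0.
hom = max(abs(residual(f, x, alpha)) for f in (phi, psi, p0) for x in points)

# Largest deviation of alpha*g0 - A g0 from c0(x) = x^2.
inhom = max(abs(residual(g0, x, alpha) - x * x) for x in points)

print("homogeneous residual:", hom)
print("g0 deviation from x^2:", inhom)
```

Both printed numbers should be small, limited only by the finite-difference error.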

2.1 Nonlinear Optimization and Partial Solution

Recall, the admissible impulse policies can be (and are) limited to those for which impulses move \(X\) closer to the origin. As a result, the integrand \(Bp_0 > 0\) and the constraint of (8) implies that the feasible measures \(\mu _1\) of (8) are those for which \(Bp_0/p_0(x_0)\) is a probability density. For a feasible \(\mu _1\), let \(\tilde{\mu }_1\) be the probability measure \(\frac{Bp_0}{p_0(x_0)}\, \mu _1\). Thus we can write the objective function as
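With \(d\mu_1 = \frac{p_0(x_0)}{Bp_0}\, d\tilde{\mu}_1\), and leaving aside the constant \(g_0(x_0)\), the rewritten objective is presumably:

```latex
\int [c_1 - Bg_0]\, d\mu_1
  = p_0(x_0) \int \frac{[c_1 - Bg_0](y,z)}{Bp_0(y,z)}\, \tilde{\mu}_1(dy \times dz).
```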

Since the goal is to minimize the cost, a lower bound is given by the minimal value of \(F\) scaled by the constant \(p_0(x_0)\), where
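Reading the integrand off the change of measure, \(F\) is presumably the ratio:

```latex
F(y,z) = \frac{c_1(y,z) - Bg_0(y,z)}{Bp_0(y,z)} .
```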

Moreover, should the infimum be attained at some pair \((y_*,z_*)\), then the probability measure \(\tilde{\mu }_1(\cdot )\) putting unit point mass on \((y_*,z_*)\) would achieve the lower bound and identify an optimal \(\mu _1\) measure for the auxiliary linear program. To solve the stochastic problem, one would need to connect the measure \(\mu _1\) back to an admissible impulse control policy in the class \(\mathcal{A}_1\) in such a way that the resulting \(\mu _1\) measure would be given by (3).

Remark 4

The objective function \(p_0(x_0) F\) has a natural interpretation. First observe that \(Bp_0(y,z) = \cosh (\sqrt{2\alpha }y) - \cosh (\sqrt{2\alpha }z)\) so
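One way to write the resulting expression, assuming \(|z| < |y|\) so that the geometric series converges, is the following. The ordering of the factors is an assumption.

```latex
p_0(x_0)\, F(y,z) = \big[ c_1(y,z) - Bg_0(y,z) \big]\,
  \frac{\cosh(\sqrt{2\alpha}\, x_0)}{\cosh(\sqrt{2\alpha}\, y)}\,
  \sum_{n=0}^{\infty} \left( \frac{\cosh(\sqrt{2\alpha}\, z)}{\cosh(\sqrt{2\alpha}\, y)} \right)^{n} .
```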

It can be shown that the first fraction gives the expected discount for the time it takes \(X\) to reach \(\{\pm y\}\) when starting at \(x_0\). The ratio \(\frac{\cosh (\sqrt{2\alpha }z)}{\cosh (\sqrt{2\alpha }y)}\) then gives the expected discount for the time it takes \(X\) to again reach \(\{\pm y\}\) but this time starting at \(\pm z\) so the sum represents the expected discounting for infinitely many cycles. By symmetry, the initial term gives the cost for impulsing from \(\pm y\) to \(\pm z\) along with the second moment. The minimization therefore optimizes the expected cost over a particular class of impulse policies. We emphasize that the linear program imbedding is not restricted to these policies.

Proposition 5

There exist pairs \((y_*,z_*)\) and \((-y_*,-z_*)\) such that
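That is, presumably with the infimum taken over pairs with \(|z| < |y|\) lying on the same side of \(0\):

```latex
F(y_*,z_*) = F(-y_*,-z_*) = \inf_{(y,z)} F(y,z).
```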

Moreover, the minimizing pair \((y_*,z_*)\) having nonnegative components is unique.

Proof

First observe
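Writing out \(c_1\), \(Bg_0\) and \(Bp_0\) explicitly, and assuming \(F = [c_1 - Bg_0]/Bp_0\), one finds:

```latex
F(y,z) = \frac{k_1 + k_2 |y - z| - \frac{1}{\alpha}\,(y^2 - z^2)}
              {\cosh(\sqrt{2\alpha}\, y) - \cosh(\sqrt{2\alpha}\, z)}
```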

so there exist pairs \((y,z)\) for which \(F(y,z) < 0\) since the difference of the quadratic terms is negative and will dominate the constant and linear terms in the numerator. A straightforward asymptotic analysis shows that \(F(y,z)\) is asymptotically nonnegative when \(y\rightarrow \infty \), \(z \rightarrow \infty \) or \(|y-z| \rightarrow 0\). Therefore \(F\) achieves its minimum at some point \((y_*,z_*)\).

Notice that \(F\) is symmetric about \(0\) in that \(F(-y,-z)=F(y,z)\) so it is sufficient to analyze \(F\) on the domain \(0 \le z \le y\). The first-order optimality conditions on \(F\) are
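On this domain \(|y-z| = y-z\). With the shorthand \(N\) and \(D\) for the numerator and denominator of \(F\) (names introduced here only for this sketch), the quotient rule gives:

```latex
\begin{align*}
N(y,z) &= k_1 + k_2 (y - z) - \tfrac{1}{\alpha}(y^2 - z^2), \qquad
D(y,z) = \cosh(\sqrt{2\alpha}\, y) - \cosh(\sqrt{2\alpha}\, z), \\
\frac{\partial F}{\partial y} = 0 &\iff
  \Big(k_2 - \tfrac{2y}{\alpha}\Big) D(y,z) - \sqrt{2\alpha}\, \sinh(\sqrt{2\alpha}\, y)\, N(y,z) = 0, \\
\frac{\partial F}{\partial z} = 0 &\iff
  \Big(\tfrac{2z}{\alpha} - k_2\Big) D(y,z) + \sqrt{2\alpha}\, \sinh(\sqrt{2\alpha}\, z)\, N(y,z) = 0 .
\end{align*}
```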

The minimizing pair \((y_*,z_*)\) will be interior to the region since \(\frac{\partial F}{\partial z}(y_*,0) = \frac{-k_2}{\cosh (\sqrt{2\alpha }\, y_*) - 1} < 0.\)

Simple algebra now leads to the following system of nonlinear equations for \((y_*,z_*)\):

The fact that the minimal value of \(F\) is negative implies \(y_*>z_*> k_2\alpha /2\). Solving for \(-\alpha \sqrt{2\alpha }\,F(y_*,z_*)\) in each equation shows that at an optimal pair \((y_*,z_*)\),
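Dividing each first-order condition by \(D \sinh(\cdot)\) and using \(F = N/D\), where \(N\) and \(D\) denote the numerator and denominator of \(F\), the relation is presumably:

```latex
\frac{2 y_* - k_2 \alpha}{\sinh(\sqrt{2\alpha}\, y_*)}
  = \frac{2 z_* - k_2 \alpha}{\sinh(\sqrt{2\alpha}\, z_*)}
  = -\alpha \sqrt{2\alpha}\; F(y_*, z_*) .
```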

A straightforward analysis of the function \(h(x)=[2x-k_2\alpha ]/\sinh (\sqrt{2\alpha }\, x)\) on the domain \([k_2\alpha /2,\infty )\) shows that the level sets of \(h\) consist of two-point sets and so on the region \(0\le z \le y\), the pair \((y_*,z_*)\) is unique.\(~\square \)
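As an illustration of Proposition 5, the minimizing pair can be approximated numerically. The sketch below assumes the form \(F = [c_1 - Bg_0]/Bp_0\) on \(0 \le z < y\) and uses a crude grid search that is re-centred and halved each round; the search routine and the parameter values \(\alpha = 1\), \(k_1 = 1\), \(k_2 = 0.5\) are illustrative assumptions, not taken from the paper.

```python
import math

def F(y, z, alpha, k1, k2):
    """Assumed form of F = [c1 - B g0] / B p0, with B f(y, z) = f(y) - f(z)."""
    r = math.sqrt(2.0 * alpha)
    den = math.cosh(r * y) - math.cosh(r * z)
    if den <= 0.0:               # outside 0 <= z < y, or numerically degenerate
        return math.inf
    num = k1 + k2 * abs(y - z) - (y * y - z * z) / alpha
    return num / den

def h(x, alpha, k2):
    """The function h(x) = [2x - k2*alpha] / sinh(sqrt(2*alpha) x) from the proof."""
    return (2.0 * x - k2 * alpha) / math.sinh(math.sqrt(2.0 * alpha) * x)

def minimize_F(alpha, k1, k2, y_max=10.0, n=60, rounds=25):
    """Grid search on 0 <= z < y <= y_max; the window is re-centred and halved each round."""
    lo_y, hi_y, lo_z, hi_z = 0.0, y_max, 0.0, y_max
    best_val, best_y, best_z = math.inf, None, None
    for _ in range(rounds):
        for i in range(n + 1):
            y = lo_y + (hi_y - lo_y) * i / n
            for j in range(n + 1):
                z = lo_z + (hi_z - lo_z) * j / n
                if z < y:
                    val = F(y, z, alpha, k1, k2)
                    if val < best_val:
                        best_val, best_y, best_z = val, y, z
        wy, wz = (hi_y - lo_y) / 4.0, (hi_z - lo_z) / 4.0
        lo_y, hi_y = max(0.0, best_y - wy), best_y + wy
        lo_z, hi_z = max(0.0, best_z - wz), best_z + wz
    return best_y, best_z, best_val

alpha, k1, k2 = 1.0, 1.0, 0.5    # illustrative parameters (assumption)
y_star, z_star, F_star = minimize_F(alpha, k1, k2)
print("y* ~", y_star, " z* ~", z_star, " F(y*, z*) ~", F_star)
print("h(y*) ~", h(y_star, alpha, k2), " h(z*) ~", h(z_star, alpha, k2))
```

At the computed pair, \(h(y_*)\) and \(h(z_*)\) should agree with each other and with \(-\alpha\sqrt{2\alpha}\,F(y_*,z_*)\) up to the accuracy of the search.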

Now that the lower bound given in (9) is determined, it is important to connect an optimizing \(\mu _1^*\) with an admissible impulse control policy \((\tau ,Y) \in \mathcal{A}_1\). The existence of two minimizing pairs \((y_*,z_*)\) and \((-y_*,-z_*)\) allows many auxiliary-LP-feasible measures \(\mu _1\) to place point masses at these two points and still achieve the lower bound. This observation leads to a solution to the restricted stochastic impulse control problem.

Theorem 6

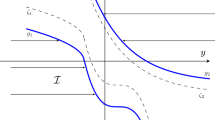

Let \((y_*,z_*)\) be the pair having positive components that minimizes \(F\) as identified in Proposition 5. Consider initial positions \(-y_* \le x_0 \le y_*\). Define the impulse control policy \((\tau ^*,Y^*)\) as follows:
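A form of the first intervention consistent with the proof, in which \(\mu_1^*\) is concentrated on \((\pm y_*, \pm z_*)\), is the following. The exact notation is an assumption.

```latex
\tau_1^* = \inf\{ t \ge 0 : |x_0 + W(t)| = y_* \}, \qquad
Y_1^* = -\,\mathrm{sgn}\big(X(\tau_1^*-)\big)\, (y_* - z_*).
```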

and for \(k=2,3,4,\ldots \), define
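Under the same assumption that each impulse moves the process from \(\pm y_*\) to \(\pm z_*\), the later interventions would be:

```latex
\tau_k^* = \inf\{ t > \tau_{k-1}^* : |X(t-)| = y_* \}, \qquad
Y_k^* = -\,\mathrm{sgn}\big(X(\tau_k^*-)\big)\, (y_* - z_*).
```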

Then \((\tau ^*,Y^*)\) is an optimal impulse control pair for the restricted stochastic impulse control problem and the corresponding optimal value is
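Combining the constant \(g_0(x_0)\) with the lower bound \(p_0(x_0) F(y_*,z_*)\), the value (11) is presumably:

```latex
V_1(x_0) = g_0(x_0) + p_0(x_0)\, F(y_*,z_*)
         = \frac{\alpha x_0^2 + 1}{\alpha^2} + \cosh(\sqrt{2\alpha}\, x_0)\, F(y_*,z_*). \tag{11}
```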

Proof

The measure \(\mu _1^*\) defined from \((\tau ^*,Y^*)\) using (3) is concentrated on the two points \((-y_*,-z_*)\) and \((y_*,z_*)\). Since the process resulting from the admissible impulse control pair \((\tau ^*,Y^*)\) remains bounded, conditions (5) can be used to obtain the masses:

Recall \(\phi (x) = e^{-\sqrt{2\alpha }\, x}\) and \(\psi (x) = e^{\sqrt{2\alpha }\,x}\) so \(\phi (-x) = \psi (x)\) and \(\psi (-x) = \phi (x)\). As a result these expressions simplify to

It is now straightforward to verify that \(J(\tau ^*,Y^*;x_0)\) equals the value in (11).\(~\square \)
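The masses in this proof come from solving the two constraints (5) as a \(2\times 2\) linear system. The sketch below does this in closed form, assuming \(Bf(y,z) = f(y) - f(z)\); the function name `impulse_masses` and the sample values of `x0`, `y`, `z` are hypothetical placeholders rather than the optimizers of \(F\).

```python
import math

def impulse_masses(x0, y, z, alpha):
    """Masses of mu_1 at (y, z) and (-y, -z) solving the two constraints (5).

    Uses B f(y, z) = f(y) - f(z), phi(x) = exp(-r x), psi(x) = exp(r x), r = sqrt(2 alpha).
    """
    r = math.sqrt(2.0 * alpha)
    phi = lambda x: math.exp(-r * x)
    psi = lambda x: math.exp(r * x)
    a = psi(y) - psi(z)      # B psi(y, z), which equals B phi(-y, -z)
    b = phi(y) - phi(z)      # B phi(y, z), which equals B psi(-y, -z)
    det = a * a - b * b
    # Solve  m_plus*b + m_minus*a = phi(x0),  m_plus*a + m_minus*b = psi(x0).
    m_plus = (a * psi(x0) - b * phi(x0)) / det
    m_minus = (a * phi(x0) - b * psi(x0)) / det
    return m_plus, m_minus

# Placeholder inputs (assumptions, not the optimal pair).
m_plus, m_minus = impulse_masses(x0=0.5, y=2.0, z=0.4, alpha=1.0)
print("mass at (y, z):", m_plus, " mass at (-y, -z):", m_minus)
```

Adding the two constraints shows that the total mass times \(Bp_0(y,z)\) equals \(p_0(x_0)\), which is the averaged constraint of the auxiliary program.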

2.2 Full Solution

Theorem 6 solves the problem for initial positions \(x_0\) with \(|x_0| \le y_*\). The issue is now one of determining the optimal value and an optimal impulse control pair when \(|x_0| > y_*\). From an intuitive point of view, \(|x_0| < y_*\) has an optimal control which waits until the state process first hits \(\pm y_*\) before having an impulse so one might expect an impulse to occur immediately when \(|x_0| \ge y_*\). Since two impulses at the same instant are no better than one, one would anticipate that the after-jump location might be \(z \in (-y_*,y_*)\). The cost of an immediate jump from \(x_0\) to \(z\) followed by using an optimal impulse control is
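Using the impulse cost \(c_1\) and the value from Theorem 6 at the post-jump location, this cost is presumably:

```latex
g(z) = k_1 + k_2 |x_0 - z| + g_0(z) + p_0(z)\, F(y_*,z_*), \qquad |z| < y_* .
```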

Solving \(g'(z) = 0\) to find a minimizer results in
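Taking \(x_0 > y_*\) and \(0 \le z < y_*\) for definiteness (an assumption made here for the sketch), differentiation gives:

```latex
g'(z) = -k_2 + \frac{2z}{\alpha} + \sqrt{2\alpha}\, \sinh(\sqrt{2\alpha}\, z)\, F(y_*,z_*) = 0
\quad \iff \quad
\frac{2z - k_2 \alpha}{\sinh(\sqrt{2\alpha}\, z)} = -\alpha \sqrt{2\alpha}\; F(y_*,z_*),
```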

which is the first order condition (10) for which both \(y_*\) and \(z_*\) are solutions. An impulse to \(y_*\) would be followed by an immediate jump to \(z_*\) and incur two fixed costs whereas a single jump directly to \(z_*\) would cost less. This line of reasoning indicates that a single jump to \(z_*\) could be an optimal initial impulse.

The goal is to verify that this intuitive reasoning is correct. Define
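Based on the description that follows, the definition is presumably:

```latex
\widehat{V}(x) =
\begin{cases}
  g_0(x) + p_0(x)\, F(y_*,z_*), & |x| \le y_*, \\[1ex]
  k_1 + k_2 (|x| - z_*) + g_0(z_*) + p_0(z_*)\, F(y_*,z_*), & |x| > y_* .
\end{cases}
```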

For \(|y| > y_*\), the function \(\widehat{V}\) is the cost associated with the process starting at initial position \(y\), having an instantaneous jump from \(y\) to \( \mathrm {sgn}(y)\, z_*\) and then using the optimal impulse control policy of Theorem 6 thereafter. The following lemma is fairly straightforward so its proof is left to the reader.

Lemma 7

\(\widehat{V} \in C^1(\mathbb {R}) \cap C^2(\mathbb {R}\backslash \{\pm y_*\}).\)
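A sketch of the verification at \(x = y_*\) follows, assuming \(\widehat{V}(x) = g_0(x) + p_0(x) F(y_*,z_*)\) for \(|x| \le y_*\) and \(\widehat{V}(x) = k_1 + k_2(|x| - z_*) + \widehat{V}(z_*)\) for \(|x| > y_*\), and writing \(F_* = F(y_*,z_*)\). Continuity uses the definition of \(F_*\) as the ratio \([c_1 - Bg_0]/Bp_0\) at \((y_*,z_*)\); the matching of derivatives uses the first-order condition \((2y_* - k_2\alpha)/\sinh(\sqrt{2\alpha}\,y_*) = -\alpha\sqrt{2\alpha}\,F_*\). The case \(x = -y_*\) follows by symmetry.

```latex
\begin{align*}
\widehat{V}(y_*-) - \widehat{V}(y_*+)
  &= Bg_0(y_*,z_*) + F_*\, Bp_0(y_*,z_*) - c_1(y_*,z_*) = 0, \\
\widehat{V}'(y_*-)
  &= \frac{2 y_*}{\alpha} + \sqrt{2\alpha}\, \sinh(\sqrt{2\alpha}\, y_*)\, F_*
   = \frac{2 y_*}{\alpha} - \frac{2 y_* - k_2 \alpha}{\alpha}
   = k_2 = \widehat{V}'(y_*+).
\end{align*}
```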

The function \(\widehat{V}\) therefore has sufficient regularity to use in (2). We now consider the new auxiliary linear program
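A sketch of this program (12), obtained by keeping only the constraint of (4) generated by \(f = \widehat{V}\), follows. The side conditions on the measures are assumptions.

```latex
\begin{array}{ll}
\text{Minimize} & \displaystyle \int c_0\, d\mu_0 + \int c_1\, d\mu_1 \\[1ex]
\text{subject to} & \displaystyle \int (\alpha \widehat{V} - A\widehat{V})\, d\mu_0
   + \int B\widehat{V}\, d\mu_1 = \widehat{V}(x_0), \\[1ex]
 & \mu_0,\ \mu_1 \text{ finite (nonnegative) measures}
\end{array} \tag{12}
```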

and its dual (having sole variable \(w\))
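Pairing the single equality constraint with a scalar multiplier \(w\), the dual (13) would read as follows. This is a reconstruction matching the two families of constraints discussed in the proof of Theorem 8.

```latex
\begin{array}{ll}
\text{Maximize} & \widehat{V}(x_0)\, w \\[1ex]
\text{subject to} & w\, \big[\alpha \widehat{V} - A\widehat{V}\big](x) \le c_0(x) \quad \text{for all } x, \\[1ex]
 & w\, B\widehat{V}(y,z) \le c_1(y,z) \quad \text{for all } (y,z).
\end{array} \tag{13}
```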

Observe that each linear program has feasible points with costs that are finite. A straightforward weak duality argument therefore shows that each value of (13) corresponding to a feasible variable \(w\) is no greater than any value of (12) for a feasible pair of measures and hence the value of (13) is a lower bound on the value of the restricted impulse control problem. Since \(\widehat{V}(x_0) > 0\), one seeks as large a positive value as possible for \(w\).

Theorem 8

The optimal value of (13) is \(\widehat{V}(x_0)\) which is achieved when \(w_* = 1\).

Proof

By symmetry, it is sufficient to examine \(x,y,z \ge 0\). Notice that for \(0 \le x < y_*\), \(\alpha \widehat{V}(x) - A\widehat{V}(x) = x^2 = c_0(x) \ge 0\) and hence the dual variable \(w\) cannot exceed \(1\). The question is whether \(w=1\) is feasible for (13) so examine the rest of the constraints with \(w=1\).

For \(x > y_*\), \(A\widehat{V}(x) = 0\) so the first constraint of (13) requires
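With \(w = 1\) and \(\widehat{V}(x) = k_1 + k_2(x - z_*) + \widehat{V}(z_*)\) for \(x > y_*\) (the linear form assumed from the description of \(\widehat{V}\)), this requirement is presumably:

```latex
0 \le x^2 - \alpha \big[ k_1 + k_2 (x - z_*) + \widehat{V}(z_*) \big] .
```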

Since the right-hand expression is an increasing function for \(x \in [k_2\alpha /2,\infty )\), it suffices to verify its nonnegativity with \(x=y_*\):

in which (10) is used to obtain the last expression. This inequality can be rewritten as

Since \((y_*,z_*)\) is a minimizing pair of the function \(F\), (14) holds and the first family of constraints of (13) is satisfied with \(w=1\).

Consider now the second family of constraints with \(w=1\). There are several cases to examine. When \(0 \le y \le z\), monotonicity of \(\widehat{V}\) on this range shows the condition is trivially satisfied. Next, for \(0\le z \le y \le y_*\), the constraint can be rewritten as
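Since the constraint is \(B\widehat{V}(y,z) \le c_1(y,z)\) with \(B\widehat{V}(y,z) = \widehat{V}(y) - \widehat{V}(z)\), the rewritten form is presumably:

```latex
\widehat{V}(y) \le k_1 + k_2 (y - z) + \widehat{V}(z) .
```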

The right-hand expression gives the cost of an immediate jump from \(y\) to \(z\) followed by an optimal impulse control policy thereafter whereas the left-hand side gives the optimal cost. Hence this inequality is satisfied. Now consider \(y_* \le z < y\) and observe that \(\widehat{V}(y) - \widehat{V}(z) = k_2(y - z) < k_1 + k_2(y - z).\) Finally, for \(0 \le z < y_* < y\) and again using the definition of \(\widehat{V}\), the second set of constraints in (13) is equivalent to

or equivalently

This last inequality is true by the optimality of both the pair \((y_*,z_*)\) and the function \(V_1\) on \([-y_*,y_*]\) since the bracketed quantity on the right-hand side gives the cost associated with an initial impulse to \(z\) from \(y_*\) along with optimal impulse control policy starting from \(z\). Thus the second family of constraints in (13) holds when \(w=1\).\(~\square \)

We now have the following result.

Theorem 9

Let \((y_*,z_*)\) be the optimizing pair for \(F\) having positive components. Define the impulse control policy \((\tau ^*,Y^*)\) as follows:
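A form of the first intervention consistent with the discussion above, in which an initial position with \(|x_0| \ge y_*\) triggers an immediate jump to \(\mathrm{sgn}(x_0)\, z_*\), is the following. The notation, including the convention \(X(0-) = x_0\), is an assumption.

```latex
\tau_1^* = \inf\{ t \ge 0 : |x_0 + W(t)| \ge y_* \}, \qquad
Y_1^* = -\,\mathrm{sgn}\big(X(\tau_1^*-)\big)\, \big( |X(\tau_1^*-)| - z_* \big).
```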

and for \(k=2,3,4,\ldots \), define
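After the first intervention the process starts each cycle inside \((-y_*,y_*)\), so the later interventions would presumably be:

```latex
\tau_k^* = \inf\{ t > \tau_{k-1}^* : |X(t-)| = y_* \}, \qquad
Y_k^* = -\,\mathrm{sgn}\big(X(\tau_k^*-)\big)\, (y_* - z_*).
```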

Then \((\tau ^*,Y^*)\) is an optimal impulse control pair for the restricted stochastic impulse control problem and the corresponding optimal value is \(\widehat{V}(x_0)\).

Proof

The particular choice of \((\tau ^*,Y^*)\) implies \(\widehat{V}(x_0) \le V_{lp}(x_0) \le V_1(x_0) \le J(\tau ^*,Y^*;x_0) = \widehat{V}(x_0)\).\(~\square \)

2.3 Solution for General Admissible Impulse Controls

The solution of Sect. 2.2 is restricted to those impulse control policies under which the process \(X\) remains bounded. It is necessary to show that no lower cost can be obtained by any policy which allows the process to be unbounded.

Theorem 10

The impulse control policy \((\tau ^*,Y^*)\) of Theorem 9 is optimal in the class of all admissible policies and \(\widehat{V}(x_0)\) is the optimal value.

Proof

This argument establishes that \(\widehat{V}(x_0)\) is a lower bound on \(J(\tau ,Y;x_0)\) for every admissible impulse control policy. Theorem 9 then gives the existence of an optimal policy whose cost equals the lower bound.

Choose \((\tau ,Y) \in \mathcal{A}\) and let \(X\) be the resulting controlled process. Suppose there exists some \(K > 0\) such that \(\liminf _{t\rightarrow \infty } \mathbb {E}_{x_0}[e^{-\alpha t} \widehat{V}(X(t))] \ge K\). Note that

so the linearity of \(\widehat{V}\) on \(\{x: |x| \ge y_*\}\) implies that \(\mathbb {E}_{x_0}[|X(t)| I_{\{|X(t)|\ge y_*\}}]\) is asymptotically bounded below by \(K e^{\alpha t}\) as \(t \rightarrow \infty \). Hence by Jensen’s inequality for \(\epsilon > 0\) and \(t\) large,

Using this estimate in (1) shows \(J(\tau ,Y;x_0) = \infty \).

Now suppose \(J(\tau ,Y;x_0) < \infty \) so \(\liminf _{t\rightarrow \infty } \mathbb {E}_{x_0}[e^{-\alpha t} \widehat{V}(X(t))] = 0.\) Then there exists a sequence \(\{t_j:j\in \mathbb {N}\}\) such that \(\lim _{j\rightarrow \infty } \mathbb {E}_{x_0}[e^{-\alpha t_j} \widehat{V}(X(t_j)) ] = 0\). Note that \(\widehat{V}'\) is bounded, being continuous on \([-y_*,y_*]\) and equal to \(\pm k_2\) outside this interval, so \( \int _0^t e^{-\alpha s} \widehat{V}'(X(s))\, dW(s)\), \(t\ge 0\), is a martingale. Thus the dual constraints, in conjunction with the finiteness of the expected cost, imply that Dynkin’s formula holds when \(t=t_j\) for each \(j\). Hence

Letting \(j \rightarrow \infty \), an application of the monotone convergence theorem on the first expectation and the convergence to \(0\) of the second expectation establishes that \(\widehat{V}(x_0)\) is a lower bound on the expected cost \(J(\tau ,Y;x_0)\).\(~\square \)

References

1. Bensoussan, A., Lions, J.-L.: Impulse Control and Quasi-Variational Inequalities. Bordas Editions. Gauthier-Villars, Paris (1984)

2. Dai, J.G., Yao, D.: Optimal control of Brownian inventory models with convex inventory cost, Part 2: Discount-optimal controls. Stoch. Syst. 3(2), 500–573 (2013)

3. Harrison, J.M., Sellke, T.M., Taylor, A.J.: Impulse control of Brownian motion. Math. Oper. Res. 8(3), 454–466 (1983)

4. Helmes, K., Stockbridge, R.H.: Construction of the value function and optimal rules in optimal stopping of one-dimensional diffusions. Adv. Appl. Probab. 42(1), 158–182 (2010)

5. Helmes, K., Stockbridge, R.H., Zhu, C.: Impulse control of standard Brownian motion: long-term average criterion. In: Helmes, K., Stockbridge, R.H., Zhu, C. (eds.) System Modeling and Optimization, vol. 443, pp. 148–157 (2014)

© 2014 IFIP International Federation for Information Processing

Cite this paper

Helmes, K., Stockbridge, R.H., Zhu, C. (2014). Impulse Control of Standard Brownian Motion: Discounted Criterion. In: Pötzsche, C., Heuberger, C., Kaltenbacher, B., Rendl, F. (eds) System Modeling and Optimization. CSMO 2013. IFIP Advances in Information and Communication Technology, vol 443. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-662-45504-3_15

DOI: https://doi.org/10.1007/978-3-662-45504-3_15

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-662-45503-6

Online ISBN: 978-3-662-45504-3

eBook Packages: Computer Science, Computer Science (R0)