Abstract

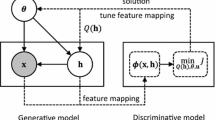

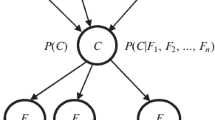

Generative score spaces provide a principled way to exploit generative information, e.g., the data distribution and hidden variables, in discriminative classifiers. The underlying methodology is to derive measures, or score functions, from generative models. The derived score functions span the so-called score space and provide features of a fixed dimension for discriminative classification. In this paper, we propose a simple yet effective score space that consists essentially of the sufficient statistics of the adopted generative models and does not involve the parameters of those models. We further propose a discriminative learning method for the score space that uses label information by constraining the classification margin over the score space. The form of the score function allows simple learning rules to be formulated; these are essentially the learning rules of the generative model with an extra posterior imposed over its hidden variables. Experimental evaluation on two generative models shows that the score space approach, coupled with the proposed discriminative learning method, is competitive with state-of-the-art classification methods.
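To make the idea of a sufficient-statistics score space concrete, the following is a minimal sketch, not the paper's implementation. It assumes a diagonal-covariance Gaussian mixture as the generative model (the abstract does not name the two models used in the experiments), and the function names `gmm_posteriors` and `sufficient_statistics_score` are hypothetical. The score vector stacks the posterior-expected sufficient statistics of the mixture, so samples with different numbers of observations map to features of the same dimension. The model parameters enter only through the posterior over the hidden component, not through the form of the score function.

```python
import numpy as np


def gmm_posteriors(X, weights, means, variances):
    """Posterior q(z = k | x_n) for a diagonal-covariance Gaussian mixture.

    X: (N, D) observations; weights: (K,); means, variances: (K, D).
    """
    diff = X[:, None, :] - means[None, :, :]  # (N, K, D)
    # Log density of each observation under each component.
    log_pdf = -0.5 * np.sum(
        diff ** 2 / variances + np.log(2.0 * np.pi * variances), axis=2
    )  # (N, K)
    log_joint = np.log(weights) + log_pdf
    # Subtract the row maximum before exponentiating for numerical stability.
    log_joint -= log_joint.max(axis=1, keepdims=True)
    post = np.exp(log_joint)
    return post / post.sum(axis=1, keepdims=True)


def sufficient_statistics_score(X, weights, means, variances):
    """Map a variable-length sample X to a fixed-length score vector.

    Assumed construction: expected sufficient statistics of the mixture
    under the posterior over the hidden component, summed over observations.
    """
    post = gmm_posteriors(X, weights, means, variances)  # (N, K)
    s0 = post.sum(axis=0)      # expected component counts, shape (K,)
    s1 = post.T @ X            # expected first moments, shape (K, D)
    s2 = post.T @ (X ** 2)     # expected second moments, shape (K, D)
    return np.concatenate([s0, s1.ravel(), s2.ravel()])
```

With K components and D-dimensional observations, the resulting vector has K + 2KD entries regardless of how many observations a sample contains, so it can be passed directly to a standard discriminative classifier such as an SVM.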

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Li, X., Wang, B., Liu, Y., Lee, T.S. (2013). Learning Discriminative Sufficient Statistics Score Space for Classification. In: Blockeel, H., Kersting, K., Nijssen, S., Železný, F. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2013. Lecture Notes in Computer Science, vol 8190. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-40994-3_4

DOI: https://doi.org/10.1007/978-3-642-40994-3_4

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-40993-6

Online ISBN: 978-3-642-40994-3

eBook Packages: Computer Science, Computer Science (R0)