Abstract

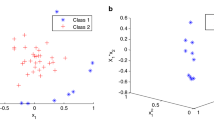

The imbalanced nature of some real-world data is receiving a lot of attention from the pattern recognition and machine learning communities, in both theoretical and practical aspects, and has given rise to several promising approaches for handling it. However, preprocessing methods operate in the original input space, which introduces distortions when they are combined with kernel classifiers, since these operate in the feature space induced by a kernel function. This paper explores the notion of the empirical feature space (a Euclidean space that is isomorphic to the feature space and therefore preserves its structure) to derive a kernel-based synthetic over-sampling technique built on borderline instances, which are considered crucial for establishing the decision boundary. The proposed methodology therefore maintains the main properties of the kernel mapping while reinforcing the decision boundaries induced by a kernel machine. The results show that the proposed method outperforms the same borderline over-sampling method applied in the original input space.
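The idea summarised above can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract gives no algorithmic details, so the sketch assumes the usual eigendecomposition construction of the empirical feature space and a Borderline-SMOTE style selection rule. The function names (`rbf_kernel`, `empirical_feature_space`, `borderline_oversample`), the parameters `gamma`, `m`, `k` and `n_new`, and the toy data are all hypothetical choices made for illustration.

```python
import numpy as np

def rbf_kernel(A, B, gamma=1.0):
    # Squared Euclidean distances between rows of A and rows of B.
    d2 = (A**2).sum(1)[:, None] + (B**2).sum(1)[None, :] - 2.0 * A @ B.T
    return np.exp(-gamma * np.maximum(d2, 0.0))

def empirical_feature_space(K, tol=1e-10):
    # Eigendecomposition K = P diag(lam) P^T; keep clearly positive eigenvalues.
    lam, P = np.linalg.eigh(K)
    keep = lam > tol * lam.max()
    # Rows of Z are the training points in the empirical feature space,
    # so Z @ Z.T reproduces K up to the discarded eigenvalues.
    return P[:, keep] * np.sqrt(lam[keep])

def borderline_oversample(Z, y, minority=1, m=5, k=3, n_new=10, seed=0):
    # Assumed rule (Borderline-SMOTE style): a minority point is "borderline"
    # when at least half, but not all, of its m nearest neighbours are majority.
    rng = np.random.default_rng(seed)
    D = np.linalg.norm(Z[:, None, :] - Z[None, :, :], axis=2)
    np.fill_diagonal(D, np.inf)  # a point is not its own neighbour
    min_idx = np.flatnonzero(y == minority)
    danger = []
    for i in min_idx:
        nn = np.argsort(D[i])[:m]
        n_major = np.sum(y[nn] != minority)
        if m / 2 <= n_major < m:
            danger.append(i)
    if not danger or len(min_idx) < 2:
        return np.empty((0, Z.shape[1]))
    synthetic = []
    for _ in range(n_new):
        i = rng.choice(danger)
        # Interpolate towards one of the k nearest minority neighbours of i.
        cand = min_idx[min_idx != i]
        nn_min = cand[np.argsort(D[i, cand])[:k]]
        j = rng.choice(nn_min)
        synthetic.append(Z[i] + rng.random() * (Z[j] - Z[i]))
    return np.asarray(synthetic)

# Toy imbalanced data set (hypothetical): 40 majority and 10 minority points.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (40, 2)), rng.normal(1.5, 1.0, (10, 2))])
y = np.array([0] * 40 + [1] * 10)

K = rbf_kernel(X, X, gamma=0.5)
Z = empirical_feature_space(K)
S = borderline_oversample(Z, y)

# Kernel matrix of the augmented set: a plain dot product in the
# empirical feature space, usable with a precomputed-kernel classifier.
Z_aug = np.vstack([Z, S])
K_aug = Z_aug @ Z_aug.T
```

In this sketch the synthetic points exist only in the empirical feature space, so no input-space pre-image is needed: the augmented kernel matrix `K_aug` is obtained from dot products and its top-left block matches the original `K`.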

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Pérez-Ortiz, M., Gutiérrez, P.A., Hervás-Martínez, C. (2013). Borderline Kernel Based Over-Sampling. In: Pan, JS., Polycarpou, M.M., Woźniak, M., de Carvalho, A.C.P.L.F., Quintián, H., Corchado, E. (eds) Hybrid Artificial Intelligent Systems. HAIS 2013. Lecture Notes in Computer Science, vol 8073. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-40846-5_47

DOI: https://doi.org/10.1007/978-3-642-40846-5_47

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-40845-8

Online ISBN: 978-3-642-40846-5

eBook Packages: Computer Science, Computer Science (R0)