Abstract

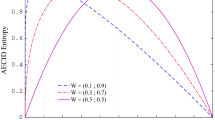

We present new results on the performance of Minimum Error Entropy (MEE) decision trees, which use a novel node split criterion. The results were obtained in a comparative study with popular alternative algorithms on 42 real-world datasets, using careful validation and statistical methods. The evidence gathered from this body of results shows that the error performance of MEE trees compares well with that of the alternative algorithms. An important aspect to emphasize is that MEE trees generalize better on average without sacrificing error performance.

Copyright information

© 2011 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

de Sá, J.P.M., Sebastião, R., Gama, J., Fontes, T. (2011). New Results on Minimum Error Entropy Decision Trees. In: San Martin, C., Kim, SW. (eds) Progress in Pattern Recognition, Image Analysis, Computer Vision, and Applications. CIARP 2011. Lecture Notes in Computer Science, vol 7042. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-25085-9_42

DOI: https://doi.org/10.1007/978-3-642-25085-9_42

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-25084-2

Online ISBN: 978-3-642-25085-9

eBook Packages: Computer Science, Computer Science (R0)