Abstract

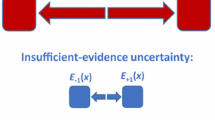

Uncertainty sampling is an effective active learning method that is computationally efficient compared with other active learning methods such as loss-reduction methods. However, unlike loss-reduction methods, uncertainty sampling cannot minimize total misclassification costs when different errors incur different costs. This paper introduces a method for cost-sensitive uncertainty sampling that makes use of self-training. We show that, even when misclassification costs are equal, this self-training approach reduces loss faster as a function of the number of points labeled, and yields more reliable posterior probability estimates, than standard uncertainty sampling. We also show why other, more naive methods of modifying uncertainty sampling to minimize total misclassification costs will not always work well.
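
As background for the contrast drawn above, here is a minimal Python sketch of plain uncertainty sampling and of the standard cost-sensitive decision threshold. It assumes a binary classifier that already outputs posterior estimates P(y=1|x) for each unlabeled point; the function names and the `threshold` parameter are hypothetical, and the code does not implement the paper's self-training method. Plain uncertainty sampling queries the point whose posterior is closest to 0.5. A cost-minimizing classifier instead predicts positive when p > cost_fp / (cost_fp + cost_fn).

```python
from typing import Sequence


def uncertainty_sample(posteriors: Sequence[float], threshold: float = 0.5) -> int:
    """Return the index of the unlabeled point whose estimated P(y=1|x) is
    closest to `threshold` (hypothetical parameter). With the default 0.5
    this is standard uncertainty sampling."""
    return min(range(len(posteriors)), key=lambda i: abs(posteriors[i] - threshold))


def cost_sensitive_threshold(cost_fp: float, cost_fn: float) -> float:
    """Bayes-optimal threshold on P(y=1|x): predicting positive costs
    (1 - p) * cost_fp in expectation and predicting negative costs
    p * cost_fn, so predict positive when p > cost_fp / (cost_fp + cost_fn)."""
    return cost_fp / (cost_fp + cost_fn)


def expected_cost(p: float, cost_fp: float, cost_fn: float) -> float:
    """Expected misclassification cost of the cost-minimizing decision
    for a point with posterior p."""
    return min(p * cost_fn, (1 - p) * cost_fp)


if __name__ == "__main__":
    pool = [0.1, 0.45, 0.8, 0.62]          # illustrative posteriors, not data from the paper
    print(uncertainty_sample(pool))        # nearest to 0.5
    t = cost_sensitive_threshold(1.0, 4.0)  # false negatives 4x as costly
    print(t, uncertainty_sample(pool, threshold=t))
```

Suppose a false negative costs four times as much as a false positive. The decision threshold then moves from 0.5 to 0.2, so the point that plain uncertainty sampling queries is no longer the one nearest the cost-sensitive decision boundary. Shifting the query threshold this way is shown only for illustration. It is not the self-training method the paper proposes.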

This is an extended abstract of an article published in the journal Machine Learning [1].

References

[1] Liu, A., Jun, G., Ghosh, J.: A Self-training Approach to Cost Sensitive Uncertainty Sampling. Machine Learning (2009). doi:10.1007/s10994-009-5131-9

Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Liu, A., Jun, G., Ghosh, J. (2009). A Self-training Approach to Cost Sensitive Uncertainty Sampling. In: Buntine, W., Grobelnik, M., Mladenić, D., Shawe-Taylor, J. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2009. Lecture Notes in Computer Science, vol 5781. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-04180-8_10

DOI: https://doi.org/10.1007/978-3-642-04180-8_10

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-04179-2

Online ISBN: 978-3-642-04180-8

eBook Packages: Computer Science, Computer Science (R0)