Abstract

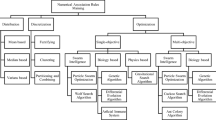

In this paper, we present an evaluation of learning algorithms for selecting suitable ones in a rule evaluation support tool for the post-processing of mined results. Post-processing of mined results is one of the key steps in the data mining process. However, it is difficult for human experts to completely evaluate several thousand rules mined from a large, noisy dataset. To reduce the cost of such a rule evaluation task, we have developed a rule evaluation support method with rule evaluation models, which are learned from objective indices of the mined classification rules and from a human expert's evaluation of each rule. To enhance the adaptability of rule evaluation models, we introduced a constructive meta-learning system to choose suitable learning algorithms. We then performed a case study on meningitis data mining as an actual problem. The obtained results demonstrate the applicability of the proposed rule evaluation support method.
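The abstract describes two ingredients: rule evaluation models that map the objective indices of each mined rule to a human expert's label, and a step that chooses a suitable learning algorithm for those models. The sketch below illustrates that pipeline under stated assumptions. It computes only four common indices (support, precision, recall, lift) from a rule's contingency counts, uses three toy candidate learners written with the standard library, and replaces the paper's constructive meta-learning system with plain leave-one-out selection among fixed candidates. All names (`RuleCounts`, `objective_indices`, `select_learner`, and so on), the rule counts, and the labels ("I" for interesting, "NI" for not interesting) are hypothetical. This is not the authors' implementation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Vector = List[float]
Model = Callable[[Vector], str]


@dataclass
class RuleCounts:
    """Contingency counts for a hypothetical rule 'antecedent -> class'."""
    n_ac: int  # instances matching the antecedent AND having the rule's class
    n_a: int   # instances matching the antecedent
    n_c: int   # instances having the rule's class
    n: int     # all instances in the dataset


def objective_indices(r: RuleCounts) -> Vector:
    """An assumed small subset of objective indices: support, precision, recall, lift."""
    support = r.n_ac / r.n
    precision = r.n_ac / r.n_a if r.n_a else 0.0  # also called confidence
    recall = r.n_ac / r.n_c if r.n_c else 0.0
    prior = r.n_c / r.n
    lift = precision / prior if prior else 0.0
    return [support, precision, recall, lift]


def _sq_dist(a: Vector, b: Vector) -> float:
    return sum((p - q) ** 2 for p, q in zip(a, b))


# Toy candidate learners: each takes training data and returns a predictor.
def majority(X: List[Vector], y: List[str]) -> Model:
    label = max(sorted(set(y)), key=y.count)  # ties go to the alphabetically first label
    return lambda x: label


def one_nn(X: List[Vector], y: List[str]) -> Model:
    def predict(x: Vector) -> str:
        nearest = min(range(len(X)), key=lambda j: _sq_dist(X[j], x))
        return y[nearest]
    return predict


def nearest_centroid(X: List[Vector], y: List[str]) -> Model:
    centroids = {}
    for label in sorted(set(y)):
        rows = [xi for xi, yi in zip(X, y) if yi == label]
        centroids[label] = [sum(col) / len(rows) for col in zip(*rows)]
    return lambda x: min(centroids, key=lambda lab: _sq_dist(centroids[lab], x))


def loo_accuracy(learner, X: List[Vector], y: List[str]) -> float:
    """Leave-one-out accuracy of a learner against the expert's labels."""
    hits = 0
    for i in range(len(X)):
        model = learner(X[:i] + X[i + 1:], y[:i] + y[i + 1:])
        hits += model(X[i]) == y[i]
    return hits / len(X)


def select_learner(learners: Dict[str, Callable], X, y) -> Tuple[str, Dict[str, float]]:
    """Pick the candidate with the best LOO accuracy (the first listed wins ties)."""
    scores = {name: loo_accuracy(fn, X, y) for name, fn in learners.items()}
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical mined rules with hypothetical expert evaluations.
rules = [
    (RuleCounts(30, 40, 50, 200), "I"),
    (RuleCounts(28, 35, 50, 200), "I"),
    (RuleCounts(25, 30, 60, 200), "I"),
    (RuleCounts(5, 40, 100, 200), "NI"),
    (RuleCounts(6, 50, 100, 200), "NI"),
    (RuleCounts(4, 45, 90, 200), "NI"),
]
X = [objective_indices(r) for r, _ in rules]
y = [label for _, label in rules]
best, scores = select_learner(
    {"majority": majority, "1nn": one_nn, "centroid": nearest_centroid}, X, y
)
print(best, scores)  # 1nn {'majority': 0.0, '1nn': 1.0, 'centroid': 1.0}
```

The indices are deliberately left unscaled here, so lift dominates the distances used by the two distance-based learners. A more careful setup would normalize each index and evaluate a larger pool of candidate algorithms before choosing one.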




Copyright information

© 2007 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Abe, H., Tsumoto, S., Ohsaki, M., Yamaguchi, T. (2007). Evaluating a Constructive Meta-learning Algorithm for a Rule Evaluation Support Method Based on Objective Indices. In: Apolloni, B., Howlett, R.J., Jain, L. (eds) Knowledge-Based Intelligent Information and Engineering Systems. KES 2007. Lecture Notes in Computer Science, vol 4693. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-74827-4_117


DOI: https://doi.org/10.1007/978-3-540-74827-4_117

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-74826-7

Online ISBN: 978-3-540-74827-4

eBook Packages: Computer Science, Computer Science (R0)