Abstract

Numerical value range partitioning is an inherent part of inductive learning. In classification problems, a common partition ranking method is to use an attribute evaluation function to assign a goodness score to each candidate. Optimal cut point selection constitutes a potential efficiency bottleneck, which is often circumvented by using heuristic methods.

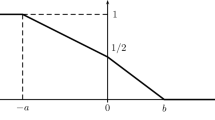

This paper aims at improving the efficiency of optimal multisplitting. We analyze convex and cumulative evaluation functions, which account for the majority of commonly used goodness criteria. We derive an analytical bound that, during the search for the optimal multisplit, lets us filter out all partitions that contain a specific subpartition as their prefix. Thus, the search space of the algorithm can be restricted without losing optimality.
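To make the idea of prefix-based pruning concrete, the following is a minimal sketch and not the algorithm of this paper. It assumes size-weighted class entropy as one example of a cumulative criterion (the score of a partition is the sum of per-interval costs) and uses a deliberately simple bound: because every interval cost is non-negative, a prefix whose accumulated cost already matches or exceeds the best complete partition found so far cannot lead to a better one. The names `interval_cost` and `best_multisplit` are hypothetical, the input is assumed to be the class labels sorted by attribute value, and ties between equal attribute values are ignored.

```python
from collections import Counter
from math import log2


def interval_cost(labels):
    """Size-weighted class entropy of one interval: |S| * H(S)."""
    n = len(labels)
    counts = Counter(labels)
    return -sum(c * log2(c / n) for c in counts.values())


def best_multisplit(labels, k):
    """Search partitions of the label sequence into k non-empty intervals.

    Returns (cost, cuts), where cuts are the end indices of the first k-1
    intervals. A prefix is abandoned once its accumulated cost is no better
    than the best complete partition found so far.
    """
    n = len(labels)
    best = [float("inf"), None]

    def extend(start, parts_left, cost_so_far, cuts):
        if cost_so_far >= best[0]:
            return  # prune: costs are non-negative, no extension can win
        if parts_left == 1:
            total = cost_so_far + interval_cost(labels[start:])
            if total < best[0]:
                best[0], best[1] = total, list(cuts)
            return
        # Leave at least one example for each remaining interval.
        for end in range(start + 1, n - parts_left + 2):
            extend(end, parts_left - 1,
                   cost_so_far + interval_cost(labels[start:end]),
                   cuts + [end])

    extend(0, k, 0.0, [])
    return best[0], best[1]


if __name__ == "__main__":
    cost, cuts = best_multisplit(list("aaabbbccc"), 3)
    print(cost, cuts)
```

The pruning here still returns an optimal partition, since it only discards prefixes that provably cannot improve on the current best. The analytical bound described above is a stronger, criterion-specific test and is not reproduced in this sketch.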

We compare the partition candidate pruning algorithm with the best existing optimization algorithms for multisplitting. On average, it evaluates only approximately 25% and 50% of the numbers of partition candidates evaluated by the comparison methods. In terms of time, this amounts to up to 50% less evaluation time per attribute.

Copyright information

© 1999 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Elomaa, T., Rousu, J. (1999). Speeding Up the Search for Optimal Partitions. In: Żytkow, J.M., Rauch, J. (eds) Principles of Data Mining and Knowledge Discovery. PKDD 1999. Lecture Notes in Computer Science, vol 1704. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-48247-5_10

DOI: https://doi.org/10.1007/978-3-540-48247-5_10

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-66490-1

Online ISBN: 978-3-540-48247-5

eBook Packages: Springer Book Archive