Abstract

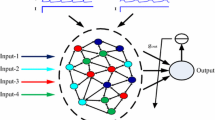

Liquid state machines have been applied successfully to large-scale spatio-temporal pattern recognition problems. Current supervised learning algorithms for liquid state machines of spiking neurons generally adjust only the synaptic weights in the output layer; the synaptic weights of the input and hidden layers are generated when the network structure is initialized and do not change afterwards. The hidden layer is therefore a static network, which neglects the dynamic characteristics of liquid state machines. To address this, a new supervised learning algorithm for liquid state machines of spiking neurons is proposed. It is based on bidirectional modification and adjusts the synaptic weights in the input and hidden layers as well as those in the output layer. The algorithm is applied to spike train learning, and the experimental results show that it can effectively learn spike train patterns under different learning parameter settings.
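To make the conventional setup concrete, the following is a minimal sketch of readout-only training, in which the input and hidden weights are fixed at initialization and only the output weights are updated. It is not the algorithm proposed in this paper: the discrete-time leaky integrate-and-fire update, the firing-rate readout, the delta rule, and all names (`make_lsm`, `liquid_state`, `train_readout`, `readout_error`) and parameter values are assumptions chosen for illustration.

```python
import random


def random_matrix(rng, rows, cols):
    """Return a rows x cols matrix of weights drawn uniformly from [-0.5, 0.5]."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def make_lsm(n_in, n_hidden, n_out, seed=0):
    """Create a toy network; all three weight groups are random at initialization."""
    rng = random.Random(seed)
    return {
        "input": random_matrix(rng, n_hidden, n_in),       # input -> hidden
        "hidden": random_matrix(rng, n_hidden, n_hidden),  # hidden -> hidden
        "output": random_matrix(rng, n_out, n_hidden),     # hidden -> output
    }


def liquid_state(net, inputs, leak=0.5, threshold=0.5):
    """Drive the hidden layer with binary input spikes (one list per time step)
    and return each hidden neuron's firing rate in [0, 1].
    Simplified discrete-time leaky integrate-and-fire dynamics (an assumption)."""
    n_hidden = len(net["hidden"])
    v = [0.0] * n_hidden        # membrane potentials
    spikes = [0] * n_hidden     # hidden spikes from the previous step
    counts = [0] * n_hidden
    for x in inputs:
        new_v, new_spikes = [], []
        for i in range(n_hidden):
            drive = sum(w * xj for w, xj in zip(net["input"][i], x))
            drive += sum(w * s for w, s in zip(net["hidden"][i], spikes))
            vi = leak * v[i] + drive
            if vi >= threshold:     # fire and reset
                new_spikes.append(1)
                new_v.append(0.0)
                counts[i] += 1
            else:
                new_spikes.append(0)
                new_v.append(vi)
        v, spikes = new_v, new_spikes
    steps = max(1, len(inputs))
    return [c / steps for c in counts]


def readout_error(net, state, target):
    """Sum of squared errors of the linear readout for one liquid state."""
    outputs = [sum(w * s for w, s in zip(row, state)) for row in net["output"]]
    return sum((t - y) ** 2 for t, y in zip(target, outputs))


def train_readout(net, state, target, lr=0.05):
    """One delta-rule step that touches only net["output"];
    net["input"] and net["hidden"] are never modified."""
    for k, row in enumerate(net["output"]):
        y = sum(w * s for w, s in zip(row, state))
        err = target[k] - y
        for j in range(len(row)):
            row[j] += lr * err * state[j]
```

In this sketch the hidden layer acts as a fixed nonlinear filter of the input spikes. An algorithm that also modifies the input and hidden weights, as the abstract describes, would additionally need update rules for `net["input"]` and `net["hidden"]`, which are not shown here.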

Acknowledgements

The work is supported by the National Natural Science Foundation of China under Grant No. 61762080, and the Medium and Small Scale Enterprises Technology Innovation Foundation of Gansu Province under Grant No. 17CX2JA038.

Copyright information

© 2018 Springer International Publishing AG, part of Springer Nature

About this paper

Cite this paper

Lin, X., Li, Q., Li, D. (2018). Supervised Learning Algorithm for Multi-spike Liquid State Machines. In: Huang, D.-S., Bevilacqua, V., Premaratne, P., Gupta, P. (eds) Intelligent Computing Theories and Application. ICIC 2018. Lecture Notes in Computer Science, vol 10954. Springer, Cham. https://doi.org/10.1007/978-3-319-95930-6_23

DOI: https://doi.org/10.1007/978-3-319-95930-6_23

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-95929-0

Online ISBN: 978-3-319-95930-6

eBook Packages: Computer Science, Computer Science (R0)