Abstract

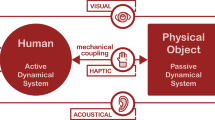

The dynamical response of a musical instrument plays a vital role in determining its playability. This is because, for instruments where there is a physical coupling between the sound-producing mechanism of the instrument and the player’s body (as with any acoustic instrument), energy can be exchanged across points of contact. Most instruments are strong enough to push back; they are springy, have inertia, and store and release energy on a scale that is appropriate and well matched to the player’s body. Haptic receptors embedded in skin, muscles, and joints are stimulated to relay force and motion signals to the player. We propose that the performer-instrument interaction is, in practice, a dynamic coupling between a mechanical system and a biomechanical instrumentalist. We take a stand on what is actually under the control of the musician, claiming it is not the instrument that is played, but the dynamic system formed by the instrument coupled to the musician’s body. In this chapter, we suggest that the robustness, immediacy, and potential for virtuosity associated with acoustic instrument performance are derived, in no small measure, from the fact that such interactions engage both the active and passive elements of the sensorimotor system and from the musician’s ability to learn to control and manage the dynamics of this coupled system. This, we suggest, is very different from an interaction with an instrument whose interface only supports information exchange. Finally, we suggest that a musical instrument interface that incorporates dynamic coupling likely supports the development of higher levels of skill and musical expressiveness.

1 Introduction

The mechanics of a musical instrument’s interface—what the instrument feels like—determines a great deal of its playability. What the instrument provides to be held, manipulated by mouth or hand, or otherwise controlled has obvious but also many subtle implications for how it can be used for musical expression. One means to undertake an analysis of playability and interface mechanics is in terms of the mechanical energy that is exchanged between a player’s body and the instrument. For acoustic instruments, mechanical energy injected by the player is transformed into acoustic energy through a process of resonance excitation. For electronic instruments, electrical energy is generally transformed into acoustic energy through a speaker, but controlled by interactions involving the player’s body and some physical portion of the instrument.

Importantly, there exists the possibility for mechanical energy stored in the physical part of the instrument to be returned to the player’s body. This possibility exists for both acoustic and electronic instruments, though in acoustic instruments it is in fact a likelihood. This likelihood exists because most acoustic instruments are strong enough to push back; they are springy, have inertia, and store and return energy on a scale that is roughly matched to the scale at which the player’s body stores and returns energy. Given that energy storage and return in the player’s body is determined by passive elements in muscle and tissues, one can say that the scale at which interface elements of the instrument are springy and have mass is similar to the scale at which muscles and tissues of the player are springy and have mass. That is, the mechanics of most acoustic instruments are roughly impedance matched to the biomechanics of the player’s body. Impedance matching facilitates the exchange of energy between passive elements within the instrument and passive elements that are part of the biomechanics of the player. Thus the player’s joints are moved or backdriven by the instrument, muscle stiffness is loaded, and the inertial dynamics of body segments are excited. In turn, haptic receptors embedded in skin, muscles, and joints are stimulated and relay force and motion signals to the player. It is also no accident that the parts of the body that interact with instruments—lips, fingers, hands—are the most highly populated by haptic receptors.

In this chapter, we propose that performer-instrument interaction is a dynamic coupling between a mechanical system and a biomechanical instrumentalist. This repositions the challenge of playing an instrument as a challenge of “playing” the coupled dynamics in which the body is already involved. We propose that interactions in which both the active and passive elements of the sensorimotor system (see Chap. 3) are engaged form a backdrop for musical creativity that is much more richly featured than the set of actions one might impose on an instrument considered in isolation from the player’s body. We further propose that the robustness, immediacy, and potential for virtuosity associated with acoustic instrument performance are derived, in no small measure, from the fact that such interactions engage both the active and passive elements of the sensorimotor system and from the musician’s ability to learn to control and manage the dynamics of this coupled system. This, we suggest, is very different from an interaction with an electronic instrument whose interface is only designed to support information exchange.

We also suggest that a musical instrument interface that incorporates dynamic coupling supports the development of higher levels of skill and musical expressiveness. To elaborate these proposals concretely, we will adopt a modeling approach that explicitly considers the role of the musician’s body in the process of extracting behaviors from a musical instrument. We will describe the springiness, inertia, and damping in both the body and the instrument in an attempt to capture how an instrument becomes an extension of the instrumentalist’s body. And insofar as the body might be considered an integral part of the process of cognition, so too does an instrument become a part of the process of finding solutions to musical problems and producing expressions of musical ideas.

2 A Musician Both Drives and Is Driven by Their Instrument

The standard perspective on the mechanics of acoustic instruments holds that energy is transformed from the mechanical to the acoustic domain—mechanical energy passes from player to instrument and is transformed by the instrument, at least in part, to acoustic energy that emanates from the instrument into the air. Models that describe the process by which mechanical excitation produces an acoustic response have been invaluable for instrument design and manufacture and have played a central role in the development of sound synthesis techniques, including modal synthesis [1] and especially waveguide synthesis [2] and physical modeling synthesis algorithms [3,4,5]. The role of the player in such descriptions is to provide the excitation or to inject energy. Using this energy-based model, the question of “control,” or how the player extracts certain behaviors including acoustic responses from the instrument reduces to considering how the player modulates the amount and timing of energy injected.

While an energy-based model provides a good starting point, we argue here that a musician does more than modulate the amount and timing of excitation. Elaborating further on the process of converting mechanical into acoustic energy, we might consider that not all energy injected is converted into acoustic energy. A portion of the energy is dissipated in the process of conversion or in the mechanical action of the instrument and a portion might be reflected back to the player. As an example, in Fig. 2.1, we show that a portion of the energy injected into the piano action by the player at the key is converted to sound, another portion is dissipated, and yet another portion is returned back to the player at the mechanical contact.

But a model that involves an injection of mechanical energy by the player does not imply that all energy passes continuously in one direction, nor even that the energy passing between player and instrument is under instantaneous control of the player. There might also exist energy exchanges between the player’s body and the instrument whose time course is instead governed by the coupling of mechanical energy storage elements in the player’s body and the instrument. Conceivably, energy may even oscillate back and forth between the player and instrument, as governed by the coupled dynamics. For example, multiple strikes of a drumstick on a snare drum are easily achieved with minimal and discrete muscle actions because potential energy may be stored and returned in not only the drumhead but also in the finger grip of the drummer. To drive these bounce oscillations, the drummer applies a sequence of discrete muscle actions at a much slower rate than the rate at which the drumstick bounces. Then to control the bounce oscillation rate, players modulate the stiffness of the joints in their hand and arm [6].

We see, then, that energy exchanges across a mechanical contact between musician and instrument yield new insights into the manner in which a player extracts behavior from an acoustic instrument. Cadoz and Wanderley, in defining the functions of musical gesture, refer to this exchange of mechanical energy as the “ergotic” function, the function which requires the player to do work upon the instrument mechanism [7]. Chapter 8 describes a software–hardware platform that addresses such issues. We extend this description here to emphasize that the instrument is a system which, once excited, will also “do work” on the biomechanical system that is the body of the player. In particular, we shall identify passive elements in the biomechanics of the player’s body upon which the instrument can “do work,” or within which energy returned from the instrument can be stored, without volitional neural control by the player’s brain. The drumming example elaborated above already gives a flavor of this analysis. It is now important to consider the biomechanics of the player’s body.

Note that relative to virtually all acoustic musical instruments, the human body has a certain give, or bends under load. Such bending under load occurs even when the body is engaged in manually controlling an instrument. In engineering terms, the human body is said to be backdrivable. And this backdrivability is part of the match in mechanical impedance between body and instrument. Simple observations support this claim, such as excursions that take place at the hand without volitional control if the load from an instrument is unexpectedly applied or removed. Think for example of the sudden slip of the bowing hand when the bow-string interaction fails because of a lack of rosin [8]. It follows that significant power is exchanged between the player and instrument, even when the player is passive. Such power exchanges cannot be captured by representing the player as a motion source (an agent capable of specifying a motion trajectory without regard to the force required) or a force source (an agent capable of specifying a force trajectory without regard to the motion required). Because so much of the passive mechanics of the player’s body is involved, the contact between human and machine turns out to be a poor place at which to divide the human/machine system into manageable parts for the purposes of modeling.

If good playability were to be equated with high control authority and the backdrivable biomechanics ignored, then an instrument designer might maximize instrument admittance while representing the player as a motion source or maximize instrument impedance while representing the player as a force source. Indeed, this approach to instrument design has, on the one hand, produced the gestural control interface that provides no force feedback and, on the other hand, produced the touch screen that provides no motion feedback. But here we reject representations of the player as motion or force source and label approaches which equate playability with high control authority as misdirected. We contend that the gestural control interface lacking force feedback and the touch screen are failures of musical instrument interface design (Chap. 12 discusses the use of touch screen devices with tactile feedback for pattern-based music composition and mixing). We claim that increasing a player’s control authority does not amount to increasing the ability of the player to express their motor intent. Instead, the impedance of the instrument should be matched to that of the player, to maximize power transfer between player and machine and thereby increase the ability of the player to express their motor (or musical) intent. Our focus on motor intent and impedance rather than control authority amounts to a fundamental change for the field of human motor control and has significant implications for the practice of designing musical instruments and other machines intended for human use.
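The link between impedance matching and power transfer can be illustrated with a deliberately simplified, purely dissipative analogy. This is our own sketch, not a model from the chapter: a constant force acts on a contact point that is restrained by the source's own damping and by a load damper, so both share one velocity. All names and numerical values below are hypothetical.

```python
def load_power(force, b_source, b_load):
    """Power dissipated in the load damper, in watts."""
    # Source and load dampers share one velocity at the contact point.
    velocity = force / (b_source + b_load)
    return b_load * velocity ** 2


FORCE = 1.0      # N, hypothetical source force
B_SOURCE = 2.0   # N*s/m, hypothetical damping on the source side

# Sweep the load damping from far too compliant to far too stiff.
candidate_loads = [0.02, 0.2, 2.0, 20.0, 200.0]
powers = {b: load_power(FORCE, B_SOURCE, b) for b in candidate_loads}
best_load = max(powers, key=powers.get)

for b_load, power in powers.items():
    print(f"b_load = {b_load:7.2f} N*s/m -> {power * 1000:.2f} mW")
print("most power delivered at b_load =", best_load)
```

In this toy setting the delivered power peaks when the load damping equals the source damping and falls off in both directions, which mirrors the two extremes criticized above: an interface that is far too compliant and one that is far too stiff. Real players and instruments also store energy in springs and masses, which this sketch ignores.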

3 The Coupled Dynamics: A New Perspective on Control

In this chapter, we are particularly interested in answering how a musician controls an instrument. To competently describe this process, our model must capture two energy-handling processes in addition to the process by which mechanical energy is converted into acoustic energy: First, how energy is handled by the instrument interface, and second, how it is handled by the player’s body. Thereafter, we will combine these models to arrive at a complete system model in which not only energy exchanges, but also information exchanges can be analyzed, and questions of playability and control can be addressed.

For certain instruments, the interface mechanics have already been modeled to describe what the instrument feels like to the player. Examples include models that capture the touch response of the piano action [9, 10] and feel of the drum head [11].

To capture the biomechanics of the player, suitable models are available from many sources, though an appropriately reduced model may be a challenge to find. In part, we seek a model describing what the player’s body “feels like” to the instrument, the complement of a model that describes what the instrument feels like to the player. We aim to describe the mechanical response of the player’s body to mechanical excitation at the contact with the instrument. Models that are competent without being overly complex may be determined by empirical means, or by system identification. Hajian and Howe [12] determined the response of the fingertip to a pulse force and Hasser and Cutkosky determined the response of a thumb/forefinger pinch grip to a pulse torque delivered through a knob [13]. Both of these works proposed parametric models in place of non-parametric models, showing that simple second-order models with mass, stiffness, and damping elements fit the data quite well. More detailed models are certainly available from the field of biomechanics, where characterizations of the driving point impedance of various joints in the body can be helpful for determining state of health. Models that can claim an anatomical or physiological basis are desirable, but such models run the risk of contributing complexity that would complicate the treatment of questions of control and playability.
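A second-order model of this kind can be sketched in a few lines. The code below is a minimal illustration that assumes a single mass, spring, and damper driven by a short force pulse. The parameter values are round numbers chosen for convenience and are not the values identified in [12] or [13]; the function and constant names are ours.

```python
import math

# Hypothetical lumped parameters for a fingertip-like second-order system.
MASS = 0.005       # kg
DAMPING = 1.0      # N*s/m
STIFFNESS = 200.0  # N/m


def pulse_response(pulse_force=1.0, pulse_time=0.01, duration=0.2, dt=1e-4):
    """Displacement history of m*x'' + b*x' + k*x = f(t) for a force pulse."""
    x, v = 0.0, 0.0
    history = []
    steps = int(round(duration / dt))
    for i in range(steps):
        t = i * dt
        f = pulse_force if t < pulse_time else 0.0
        a = (f - DAMPING * v - STIFFNESS * x) / MASS
        v += a * dt   # semi-implicit Euler: update velocity first,
        x += v * dt   # then advance position with the new velocity
        history.append(x)
    return history


omega_n = math.sqrt(STIFFNESS / MASS)                  # natural frequency, rad/s
zeta = DAMPING / (2.0 * math.sqrt(STIFFNESS * MASS))   # damping ratio
displacement = pulse_response()
peak = max(displacement)

print(f"natural frequency: {omega_n / (2 * math.pi):.1f} Hz, damping ratio: {zeta:.2f}")
print(f"peak displacement: {peak * 1000:.2f} mm")
```

Fitting the three parameters to measured pulse responses is the system identification step described above; the sketch only shows the forward model that such a fit would use.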

Models that describe what the instrument and body feel like to each other are both models of driving-point impedance. They each describe relationships between force and velocity at the point of contact between player and instrument. The driving-point impedance of the instrument expresses the force response of the instrument to a velocity imposed by the player, and the driving-point impedance of the player expresses the force response of the player to a velocity imposed by the instrument. Of course, only one member of the pair can impose a force at the contact. The other subsystem must respond with velocity to the force imposed at the contact; thus, its model must be expressed as a driving-point admittance. This restriction as to which variable may be designated an input and which an output is called a causality restriction (see, e.g., [14]). The designation is an essentially arbitrary choice that must be made by the analyst. Let us choose to model the player as an admittance (imposing velocity at the contact) and the instrument as an impedance (imposing force at the contact).
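As a concrete illustration of the two causalities, the sketch below evaluates the driving-point impedance of a lumped mass, spring, and damper at a single frequency, together with the corresponding admittance. The lumped form and the parameter values are assumptions made for illustration only.

```python
import math


def impedance(omega, mass, damping, stiffness):
    """Driving-point impedance F/v of a mass-spring-damper at omega (rad/s)."""
    return complex(damping, omega * mass - stiffness / omega)


def admittance(omega, mass, damping, stiffness):
    """Driving-point admittance v/F, the reciprocal of the impedance."""
    return 1.0 / impedance(omega, mass, damping, stiffness)


# Hypothetical parameters for one subsystem (player or instrument).
M, B, K = 0.1, 0.5, 1000.0        # kg, N*s/m, N/m
omega_n = math.sqrt(K / M)        # undamped natural frequency, rad/s

Z = impedance(omega_n, M, B, K)
Y = admittance(omega_n, M, B, K)

print(f"Z at resonance: {Z.real:.3f} + {Z.imag:.3f}j N*s/m")
print(f"|Y| at resonance: {abs(Y):.3f} m/(N*s)")
```

Both functions describe the same physical subsystem; which one is used depends only on whether velocity or force has been designated as that subsystem's input at the contact.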

Driving-point impedance models that describe what the body or instrument feel like to each other provide most, but not all of what is needed to describe how a player controls an instrument. A link to muscle action in the player and a link to the process by which mechanical energy is converted into acoustic energy in the instrument are still required. In particular, our driving-point admittance model of the player must be elaborated with input/output models that account for the processing of neural and mechanical signals in muscle. In addition, our driving-point impedance model of the instrument must be elaborated with an input/output model that accounts for the excitation of a sound generation process. If our driving-point admittance and impedance models are lumped parameter models in terms of mechanical mass, spring, and damping elements, then we might expect the same parameters to appear in the input/output models that we use to capture the effect of muscle action and the process of converting mechanical into acoustic energy.

Let us represent the process inside the instrument that transforms mechanical input into mechanical response as an operator G (see Fig. 2.2). This is the driving-point impedance of the instrument. And let the process that transforms mechanical input into acoustic response be called P. Naturally, in an acoustic instrument both G and P are realized in mechanical components. In a digital musical instrument, P is often realized in software as an algorithm. In a motorized musical instrument, even G can be realized in part through software [15].

Fig. 2.2 Musician and instrument may both be represented as multi-input, multi-output systems. Representing the instrument in this way, an operator G transforms mechanical excitation into mechanical response. An operator P transforms mechanical excitation into acoustic response. Representing the player, let H indicate the biomechanics of the player’s body that determines the mechanical response to a mechanical excitation. The motor output of the player also includes a process M, in which neural signals are converted into mechanical action. The response of muscle M to neural excitation combines with the response of H to excitation from the instrument to produce the action of the musician on the instrument. The brain produces neural activation of muscle by monitoring both haptic and acoustic sensation. Blue arrows indicate neural signaling and neural processing, red arrows indicate mechanical signals, and green arrows indicate acoustic signals.

As described above, in P, there is generally a change in the frequency range that describes the input and output signals. The input signal, or excitation, occupies a low-frequency range, usually compatible with human motor action. The relatively high-frequency range of the output is determined in an acoustic instrument by a resonating instrument body or air column that is driven by the actions of the player on the instrument. Basically, motor actions of the player are converted into acoustic frequencies in the process P. On the other hand, G does not usually involve a change in frequency range.

Boldly, we represent the musician as well, naming the processes (operators) that transform input to output inside the nervous system and body of the musician. Here we identify both neural and mechanical signals, and we identify processes that transform neural signals, processes that transform mechanical signals (called biomechanics), transducers that convert mechanical into neural signals (mechanoreceptors and proprioceptors), and transducers that convert neural into mechanical signals (muscles). Sect. 3.3.1 provides a description of such mechanisms. Let us collect those parts of the musician’s body that are passive, or have only to do with biomechanics, in the operator H. Biomechanics encompasses stiffness and damping in muscles and mass in bones and flesh. That is, biomechanics includes the capacity to store and return mechanical energy in either potential (stiffness) or kinetic (inertial) forms and to dissipate energy in damping elements. Naturally, there are other features in the human body that produce a mechanical response to a mechanical input and that involve transducers (sensory organs and muscles), including reflex loops and sensorimotor loops. Sensorimotor loops generally engage the central nervous system and often some kind of cognitive or motor processing. These we have highlighted in Fig. 2.2 as a neural input into the brain and as a motor command that the brain produces in response. We also show the brain as the basis for responding to an acoustic input with a neural command to muscle. Finally, we represent muscle as the operator M that converts neural excitation into a motor action. The ears transform acoustic energy into neural signals available for processing and the brain in turn generates muscle commands that incite the action of the musician on the instrument. Figure 2.2 also represents the action of the musician on the instrument as the combination of muscle actions through M and response to backdrive by the instrument through H. Note that the model in Fig. 2.2 makes certain assumptions about superposition, though not all operators need be linear.

Fig. 2.3 Instrument playing considered as a control design problem. (a) The musician, from the position of controller in a feedback loop, imposes their control actions on the instrument while monitoring the acoustic and haptic response of the instrument. (b) From the perspective of dynamic coupling, the “plant” upon which the musician imposes control actions is the system formed by the instrument and the musician’s own body (biomechanics).

This complete model brings us into position to discuss questions in control, that is, how a musician extracts desired behaviors from an instrument. We are particularly interested in how the musician formulates a control action that elicits a desired behavior or musical response from an instrument. We will attempt to unravel the processes in the formulation of a control action, including processes that depend on immediately available sensory input (feedback control) and processes that rely on memory and learning (open-loop control).

As will already be apparent, the acoustic response of an instrument is not the only signal available to the player as feedback. In addition, the haptic response functions as feedback, carrying valuable information about the behavior of the instrument and complementing the acoustic feedback. Naturally, the player, as controller in a feedback loop, can modify his or her actions on the instrument based on a comparison of the desired sound and the actual sound coming from the instrument. But the player can also modify his or her actions based on a comparison of the feel of the instrument and a desired or expected feel. A music teacher quite often describes a desired feel from the instrument, encouraging a pupil to adjust actions on the instrument until such a mechanical response can be recognized in the haptic response. One of the premises of this volume is that this second, haptic, channel plays a vital role in determining the “playability” of an instrument, i.e., in providing a means for the player to “feel” how the instrument behaves in response to their actions.

In the traditional formulation, the instrument is the system under control or the “plant” in the feedback control system (see Fig. 2.3a). As controller, the player aims to extract a certain behavior from the instrument by imposing actions and monitoring responses. But given that the haptic response impinges on the player across the same mechanical contact as the control action imposed by the player, an inner feedback loop is closed involving only mechanical variables. Neural signals and the brain of the instrument player are not involved. The mechanical contact and the associated inner feedback loop involve the two variables force and velocity, whose product is power and is the basis for energy exchanges between player and instrument. That is, the force and motion variables that we identify at the mechanical contact between musician and instrument are special in that they transmit not only information but also mechanical energy. That energy may be expressed as the time integral of power, the product of force and velocity at the mechanical contact. As our model developed above highlights, a new dynamical system arises when the body’s biomechanics are coupled to the instrument mechanics. We shall call this new dynamical system the coupled dynamics. The inner feedback loop, which is synonymous with the coupled dynamics, is the new “plant” under control (see Fig. 2.3b). The outer feedback loop involves neural control and still has access to feedback in both haptic and audio channels.
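Because power is the product of force and velocity at the contact, the energy exchanged over an interval is the integral of that product over time. The sketch below makes this concrete under simple assumptions of our own: the player imposes a sinusoidal velocity on an instrument modeled as a spring and damper, and positive power denotes flow from player to instrument. All values are hypothetical.

```python
import math

STIFFNESS = 500.0   # N/m, hypothetical instrument spring
DAMPING = 1.0       # N*s/m, hypothetical instrument damper
V_PEAK = 0.1        # m/s, peak velocity imposed at the contact
FREQ = 2.0          # Hz, frequency of the imposed motion
N = 10000           # integration steps over one cycle

omega = 2.0 * math.pi * FREQ
period = 1.0 / FREQ
dt = period / N

net_energy = 0.0        # net energy delivered to the instrument, J
returned_energy = 0.0   # energy handed back to the player, J

for i in range(N):
    t = i * dt
    velocity = V_PEAK * math.cos(omega * t)
    position = (V_PEAK / omega) * math.sin(omega * t)
    force = STIFFNESS * position + DAMPING * velocity
    power = force * velocity          # instantaneous power at the contact, W
    net_energy += power * dt          # energy is the time integral of power
    if power < 0.0:
        returned_energy -= power * dt

print(f"net energy into instrument over one cycle: {net_energy * 1000:.3f} mJ")
print(f"energy returned to the player in that cycle: {returned_energy * 1000:.3f} mJ")
```

Over a full cycle the spring contributes no net energy, so the net figure reflects only what the damper dissipates, while the returned figure shows that part of each cycle is spent with the instrument doing work on the player.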

In considering the “control problem,” we see that the coupled dynamics is a different system, possibly more complex, than the instrument by itself. Paradoxically, the musician’s brain is faced with a greater challenge when controlling the coupled dynamical system that includes the combined body and instrument dynamics. There are new degrees of freedom (DoF) to be managed—dynamic modes that involve exchanges of potential and kinetic energy between body and instrument. But something unique takes place when the body and instrument dynamics are coupled. A feedback loop is closed and the instrument becomes an extension of the body. The instrument interface disappears and the player gains a new means to effect change in their environment. This sense of immediacy is certainly at play when a skilled musician performs on an acoustic instrument.

But musical instruments are not generally designed by engineers. Rather, they are designed by craftsmen and musicians, usually by way of many iterations of artistry and skill. Oftentimes that skill is handed down through generations in a process of apprenticeship that lacks engineering analysis altogether. Modern devices designed by engineers, on the other hand, might function as extensions of the brain, but not so much as extensions of the body. While there is no rule that says a device containing a microprocessor must present a vanishingly small or astronomically large mechanical impedance to its player, it can be said that digital instrument designers to date have been largely unaware of the alternatives. Is it possible to design a digital instrument whose operation profits from power exchanges with its human player? We aim to capture the success of devices designed through craftsmanship and apprenticeship in models and analyses and thereby inform the design of new instruments that feature digital processing and perhaps embedded control.

4 Inner and Outer Loops in the Interaction Between Player and Instrument

Our new perspective, in which the “plant” under control by the musician is the dynamical system determined conjointly by the biomechanics of the musician and the mechanics of the instrument, yields a new perspective on the process of controlling and learning to control an instrument. Consider for a moment the superior access that the musician has to feedback from the dynamics of the coupled system relative to feedback from the instrument. The body is endowed with haptic sensors in the lips and fingertips, but also richly endowed with haptic and proprioceptive sensors in the muscles, skin, and joints. Motions of the body that are determined in part by muscle action but also in part by actions of the instrument on the body may easily be sensed. A comparison between such sensed signals and expected sensations, based on known commands to the muscles, provides the capability of estimating states internal to the instrument. See, for example, [16].

The haptic feedback thus available carries valuable information for the musician about the state of the instrument. The response might even suggest alternative actions or modes of interaction to the musician. For example, the feel of let-off in the piano action (after which the hammer is released) and the feel of the subsequent return of the hammer onto the repetition lever and key suggest the availability of a rapid repetition to the pianist.

Let us consider cases in which the coupled dynamics provides the means to achieve oscillatory behaviors with characteristic frequencies that are outside the range of human volitional control. Every mechanical contact closes a feedback loop, and closing a feedback loop between two systems capable of storing and returning energy creates a new dynamic behavior. Speaking mechanically, if the new mode is underdamped, it would be called a new resonance or vibration mode. On the one hand, the force and motion variables support the exchange of mechanical energy; on the other hand, they create a feedback loop that is characterized by a resonance. Since we have identified a mechanical subsystem in both the musician and the instrument, it is noteworthy that these dynamics are potentially quite fast. There is no neural transmission nor cognitive processing that takes place in this pure mechanical loop.
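The appearance of a new mode on contact can be sketched with the simplest possible case, assumed here purely for illustration: player and instrument are each a mass on a grounded spring, and contact joins the two masses rigidly so that they share one velocity. Damping is ignored and all values are hypothetical.

```python
import math


def natural_frequency_hz(mass, stiffness):
    """Undamped natural frequency of a mass on a grounded spring, in Hz."""
    return math.sqrt(stiffness / mass) / (2.0 * math.pi)


# Hypothetical lumped parameters.
M_PLAYER, K_PLAYER = 0.02, 200.0     # kg, N/m (finger-like subsystem)
M_INSTR, K_INSTR = 0.08, 7200.0      # kg, N/m (key-like subsystem)

f_player = natural_frequency_hz(M_PLAYER, K_PLAYER)
f_instr = natural_frequency_hz(M_INSTR, K_INSTR)

# With a rigid contact the masses add and the grounded springs act in parallel.
f_coupled = natural_frequency_hz(M_PLAYER + M_INSTR, K_PLAYER + K_INSTR)

print(f"player alone:     {f_player:.1f} Hz")
print(f"instrument alone: {f_instr:.1f} Hz")
print(f"coupled system:   {f_coupled:.1f} Hz")
```

The coupled frequency belongs to neither subsystem on its own, and in this model it shifts whenever the player's stiffness changes, which is one simple reading of how stiffening or relaxing the hand can retune an oscillation without driving it cycle by cycle.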

Given that neural conduction velocities and the speed of cognitive processes may be quite slow compared to the rates at which potential and kinetic energy can be exchanged between two interconnected mechanical elements, certain behaviors in the coupled musician-instrument dynamics can be attributed to an inner loop, not involving closed-loop control by the musician’s nervous system. In particular, neural conduction delays and cognitive processing times on the order of 100 ms would preclude stable control of a lightly damped oscillator at more than about 5 Hz [17], yet rapid piano trills exceeding 10 Hz are often used in music [18]. The existence of compliance in the muscles of the finger and the rebound of the piano key are evidently involved in an inner loop, while muscle activation is likely the output of a feedforward control process.
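A rough back-of-envelope check, offered as our own illustration rather than as the analysis in [17], shows why a delay of about 100 ms points to a limit near 5 Hz. A pure time delay adds a phase lag that grows linearly with frequency, and once the lag reaches 180 degrees a corrective action arrives in phase with the error it was meant to oppose.

```python
def delay_phase_lag_deg(freq_hz, delay_s):
    """Phase lag, in degrees, added by a pure time delay at a given frequency."""
    return 360.0 * freq_hz * delay_s


def inversion_frequency_hz(delay_s):
    """Frequency at which a pure delay alone contributes 180 degrees of lag."""
    return 1.0 / (2.0 * delay_s)


LOOP_DELAY = 0.1   # s, the order-of-magnitude delay quoted in the text

for freq in (1.0, 5.0, 10.0):
    lag = delay_phase_lag_deg(freq, LOOP_DELAY)
    print(f"{freq:4.1f} Hz -> {lag:5.1f} degrees of lag from the delay")

f_180 = inversion_frequency_hz(LOOP_DELAY)
print(f"delay alone reaches 180 degrees at {f_180:.1f} Hz")
```

The heuristic ignores every other source of lag in the loop, so it is an optimistic bound at best; its only purpose is to show that a trill above 10 Hz sits well outside what a 100 ms feedback path could stabilize cycle by cycle.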

As we say, the musician is not playing the musical instrument but instead playing the coupled dynamics of his or her own body and instrument. Many instruments support musical techniques which are quite evidently examples of the musician driving oscillations that arise from the coupled dynamics of body and instrument mechanics. For example, the spiccato technique in which a bow is “bounced” on a string involves driving oscillatory dynamics that arise from the exchange of kinetic and potential energy in the dynamics of the hand, the bow and hairs, and the strings. Similarly, the exchange of kinetic and potential energy underlies the existence of oscillatory dynamics in a drum roll, as described above. It is not necessary for the drummer to produce muscle action at the frequency of these oscillations, only to synchronize driving action to these oscillations [6].

The interesting question to be considered next is whether the perspective we have introduced here may have implications for the design of digital musical instruments: whether design principles might emerge that make a musical instrument an extension of the human body and a means for the musician to express their musical ideas. It is possible that answering such a question might also be the key to codifying certain emerging theories in the fields of human motor control and cognitive science. While it has long been appreciated that the best machine interface is one that “disappears” from consciousness, a theory to explain such phenomena has so far been lacking.

The concept of dynamic coupling introduced here also suggests a means for a musician to learn to control an instrument. First, we observe that humans are very adept at controlling their bodies when not coupled to objects in the environment. Given that the new control challenge presented when the body is coupled to an instrument in part involves dynamics that were already learned, it can be said that the musician already has some experience even before picking up an instrument for the first time. Also, to borrow a term from robotics, the body is hyper-redundantly actuated and equipped with a multitude of sensors. From such a perspective, it makes sense to let the body be backdriven by the instrument, because only then do the redundant joints become engaged in controlling the instrument.

An ideal musical instrument is a machine that extends the human body. From this perspective, it is the features in a musical instrument’s control interface that determine whether the instrument can express the player’s motor intent and support the development of manual skill. We propose that questions of digital instrument design can be addressed by carefully considering the coupling between a neural system, a biomechanical system, and an instrument, and even the environment in which the musical performance takes place. These questions can be informed by thinking carefully about a neural system that “knows” how to harness the mechanics of the body and object dynamics and a physical system that can “compute in hardware” in service of a solution to a motor problem.

The human perceptual system is attuned not only to extracting structure from signals (or even pairs of signals) but also to extracting structure from pairs of signals known to be excitations and responses (inputs and outputs). What the perceptual system extracts in that case is what the psychologist J. J. Gibson refers to as “invariants” [19]. According to Gibson, our perceptual system is oriented not to the sensory field (which he terms the “ambient array”) but to the structure in the sensory field, the set of signals which are relevant in the pursuit of a specific goal. For example, in catching a ball, the “signal” of relevance is the size of the looming image on the retina and indeed the shape of that image; together these encode both the speed and angle of the approaching ball. Similarly, in controlling a drum roll, the signal of relevance is the rebound from the drumhead which must be sustained at a particular level to ensure an even roll. The important thing to note is that for the skilled player, there is no awareness of the proximal or bodily sensation of the signal. Instead, the external or “distal” object is taken to be the signal’s source. In classical control, such a structured signal is represented by its generator or a representation of a system known to generate such a structured signal.

Consider, for a moment, a musician who experiences a rapid oscillation-like behavior arising from the coupling of his or her own body and an instrument, perhaps the bounce of a bow on a string, or the availability of a rapid re-strike on a piano key due to the function of the repetition lever. Such an experience can generally be evoked again and again by the musician learning to harness such a behavior and develop it into a reliable technique, even if it is not quite reliable at first. The process of evoking the behavior, by timing one’s muscle actions, would almost certainly have something to do with driving the behavior, even while the behavior’s dynamics might involve rapid communication of energy between body and instrument as described above. Given that the behavior is invariant to the mechanical properties of body and instrument (insofar as those properties are constant), it seems quite plausible that the musician would develop a kind of internal description or internal model of the dynamics of the behavior. That internal model will likely also include the possibilities for driving the behavior and the associated sensitivities.

In his pioneering work on human motor control, Nicolai Bernstein has described how the actions of a blacksmith are planned and executed in combination with knowledge of the dynamics of the hammer, workpiece, and anvil [20]. People who are highly skilled at wielding tools are able to decouple certain components of planned movements, thereby making available multiple “loops” or levels of control which they can “tighten” or “loosen” at will. In the drumming example cited above, we have seen that players can similarly control the impedance of their hand and arm to control the height of stick bounces (the speed of the drum roll), while independently controlling the overall movement amplitude (the loudness of the drum roll).
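The link between hand impedance and roll speed can be illustrated with a toy calculation. The sketch below is not the model used in the drum roll study cited above; it assumes a hypothetical one-degree-of-freedom system in which the stick tip is a point mass pressed toward the drumhead by a linear spring that stands in for grip stiffness, with a fixed coefficient of restitution at each impact. All parameter values are illustrative assumptions.

```python
import math


def bounce_intervals(stiffness, mass=0.05, preload_depth=0.01,
                     launch_speed=1.0, restitution=0.8, n_bounces=5):
    """Times between successive stick-head impacts for a toy 1-DoF model.

    The stick tip is a point mass pressed toward the drumhead (x = 0) by a
    linear spring whose rest position lies preload_depth below the head.
    Between impacts the motion is simple harmonic, so each flight time has
    the closed form T = (2 / w) * atan2(v, w * d), with w = sqrt(k / m).
    """
    w = math.sqrt(stiffness / mass)
    speed = launch_speed
    intervals = []
    for _ in range(n_bounces):
        intervals.append((2.0 / w) * math.atan2(speed, w * preload_depth))
        speed *= restitution  # energy lost at each impact with the head
    return intervals


if __name__ == "__main__":
    for k in (100.0, 400.0):  # assumed grip stiffness values in N/m
        times_ms = [round(1000.0 * t, 1) for t in bounce_intervals(k)]
        print(f"stiffness {k:5.0f} N/m -> intervals (ms): {times_ms}")
```

In this sketch, raising the stiffness shortens every interval between impacts without any change in the timing of muscle commands, while the launch speed, which depends on the overall stroke, can be set separately.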

Interestingly, the concept of an internal model has become very influential in the field of human motor behavior in recent years [21] and model-based control has become an important sub-discipline in control theory. There is therefore much potential for research concerned with exploring the utility of model-based control for musical instruments, especially from the perspective that the model internalized by the musician is one that describes the mechanical interactions between his or her own body and the musical instrument. This chapter is but a first step in this direction. Before leaving the questions we have raised here, however, we will briefly turn our attention to how the musician might learn to manage such coupled dynamics, proposing that the robustness, immediacy, and potential for virtuosity associated with acoustic instrument performance are derived in large part from engaging interactions that involve both the active and passive elements of the sensorimotor system.

5 Implications of a Coupled Dynamics Perspective on Learning to Play an Instrument

At the outset of this chapter, we proposed that successful acoustic instruments are those which are well matched, in terms of their mechanical impedance, to the capabilities of our bodies. In other words, for an experienced musician, the amount of work they need to do to produce a desired sound is within a range that will not exhaust their muscles on the one hand but which will provide sufficient push-back to support control on the other. But what about the case for someone learning an instrument? What role does the dynamic behavior of the instrument play in the process of learning? Even if we do not play an instrument ourselves, we are probably all familiar with the torturous sound of someone learning to bow a violin, or with our own exhausting attempts to get a note out of a garden hose. This is what it sounds and feels like to struggle with the coupled dynamics of our bodies and an instrument whose dynamical behavior we have not yet mastered. And yet violins can be played, and hoses can produce notes, so the question is how does someone learn to master these behaviors?

Musical instruments represent a very special class of objects. They are designed to be manipulated and to respond, through sound, to the finest nuances of movement. As examples of tools that require fine motor control, they are hard to beat. And, as with any tool requiring fine motor control, a musician must be sensitive to how the instrument responds to an alteration in applied action with the tiniest changes in sound and the tiniest changes in haptic feedback. Indeed, a large part of acquiring skill as a musician is being able to predict, for a given set of movements and responses, the sound that the instrument will make and to adjust movements, in anticipation or in real time, when these expectations are not met.

The issue, as Bernstein points out, is that there are often many ways of achieving the same movement goal [20]. In terms of biomechanics, joints and muscles can be organized to achieve an infinite number of angles, velocities, and movement trajectories, while at the neurophysiological level, many motor neurons can synapse onto a single muscle and, conversely, many muscle fibers can be controlled by one motor unit (see Sect. 3.2 for more details concerning the hand). This results in a biological system for movement coordination that is highly adaptive and that can support us in responding flexibly to perturbations in the environment. In addition, as Bernstein’s observations of blacksmiths wielding hammers demonstrated, our ability to reconfigure our bodies in response to the demands of a task goal extends to incorporating the dynamics of the wielded tool into planned movement trajectories [20, 22]. Indeed, it is precisely this ability to adapt our movements in response to the dynamics of both the task and the task environment that allows us to acquire new motor skills.

Given this state of affairs, how do novice musicians (or indeed experienced musicians learning new pieces) select from all the possible ways of achieving the same musical outcome? According to Bernstein’s [20] theory of graded skill acquisition, early stages of skill acquisition are associated with “freezing” some biomechanical degrees of freedom (DoF), such as joint angles. Conversely, later (higher) stages are characterized by a more differentiated use of DoF (“freeing”), allowing more efficient and flexible/functional performance. This supposition is consistent with experimental results in which participants adopted a high impedance during early stages of learning (perhaps removing DoF from the coupled dynamics) and transitioned to a lower impedance once the skill was mastered [23].

More recently, Ranganathan and Newell [24, 25] proposed that in understanding how and why learning could be transferred from one context to another, it was imperative to uncover the dynamics of the task being performed and to determine the “essential” and “non-essential” task variables. They define non-essential variables as the whole set of parameters available to the performer and suggest that modifications to these parameters lead to significant changes in task performance. For example, in throwing an object the initial angle and velocity would be considered non-essential variables, because changes to these values will lead to significant changes in the task outcome. The essential variables are a subset of the available working parameters that are bound together by a common function. In the case of throwing an object, this would be the function that relates the goal of this particular throwing task to the required throwing angle and velocity [26]. The challenge, as Pacheco and Newell point out, is that in many tasks this information is not immediately available. Therefore, the learner needs to engage in a process of discovery or “exploration” of the available dynamic behaviors to uncover, from the many possible motor solutions, which will be the most robust. But finding a motor solution is only the first step since learning will only occur when that movement pattern is stabilized through practice [27].
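The redundancy in the throwing example can be made concrete with idealized projectile motion. The sketch below is an illustration only, assuming a point mass released at ground level over flat ground with no air resistance; the function names and the 5 m target are hypothetical and are not taken from the cited studies. Under these assumptions the landing distance is v² sin(2θ)/g, so a whole family of angle and speed pairs reaches the same target.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def throw_range(speed, angle_deg):
    """Horizontal distance of an idealized throw (flat ground, no drag)."""
    return speed ** 2 * math.sin(2.0 * math.radians(angle_deg)) / G


def speed_for_target(target_m, angle_deg):
    """Release speed that lands exactly on the target at a given angle."""
    return math.sqrt(G * target_m / math.sin(2.0 * math.radians(angle_deg)))


if __name__ == "__main__":
    target = 5.0  # hypothetical target distance in metres
    for angle in (20, 35, 45, 60, 75):
        v = speed_for_target(target, angle)
        print(f"angle {angle:2d} deg, speed {v:5.2f} m/s -> "
              f"lands at {throw_range(v, angle):.2f} m")
```

Each printed pair is a different motor solution to the same task. Changing the angle or the speed on its own moves the landing point, whereas moving along the curve defined by `speed_for_target` leaves the outcome unchanged, which is the kind of relation a learner has to discover through exploration.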

In contrast to exploration, stabilization is characterized as a process of making movement patterns repeatable, a process which Pacheco and Newell point out can be operationalized as a negative feedback loop, where both the non-essential and essential execution variables are corrected from trial to trial. Crucially, Pacheco and Newell determined that, for learning and transfer to be successful, the time spent in the exploration phase and the time spent in the stabilization phase must be roughly equal [26].
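Stabilization as trial-to-trial negative feedback can be sketched in a few lines. The example below is a deliberately simplified illustration rather than the procedure used in [26]: it assumes a throw at a fixed 45 degree release angle over flat ground with no air resistance, a single corrected variable (release speed), and a hypothetical proportional correction gain.

```python
G = 9.81  # gravitational acceleration, m/s^2


def practice_throws(target_m, first_speed, gain=0.2, n_trials=30):
    """Correct release speed after each trial in proportion to the miss.

    Assumes a fixed 45-degree release on flat ground with no drag, so the
    throw lands at speed**2 / G. Returns the signed error of every trial.
    """
    speed = first_speed
    errors = []
    for _ in range(n_trials):
        error = speed ** 2 / G - target_m   # positive means overshoot
        errors.append(error)
        speed -= gain * error               # negative feedback on the miss
    return errors


if __name__ == "__main__":
    errors = practice_throws(target_m=10.0, first_speed=8.0)
    for trial in (0, 5, 10, 29):
        print(f"trial {trial + 1:2d}: error {errors[trial]:+.4f} m")
```

With these assumed values the miss shrinks from trial to trial, so the movement becomes repeatable. A gain that is too large would make the corrections overshoot, so the rate of correction is itself something the learner must get right.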

As yet, we have little direct evidence of these phases of learning of motor skill in the context of playing acoustic musical instruments. A study by Rodger et al., however, suggests that exploration and stabilization phases of learning may be present as new musical skills are acquired. In a longitudinal study, they recorded the ancillary (or non-functional) body movements of intermediate-level clarinetists before and after learning a new piece of music. Their results demonstrated that the temporal control of ancillary body movements made by participants was stronger in performances after the music had been learned and was closer to the measures of temporal control found for an expert musician’s movements [28]. While these findings provide evidence that the temporal control of musicians’ ancillary body movements stabilizes with musical learning, the lack of an easy way to measure the forces exchanged across the mechanical coupling between player and instrument means that we cannot yet empirically demonstrate the role that learning to manage the exchange of energy across this contact might play in supporting the exploration and stabilization of movements as skill is acquired. Nevertheless, the fact that haptic feedback plays a role for the musician in modeling an instrument’s behavior has already been demonstrated experimentally using simulated strings [29] and membranes [11, 30]. In both cases, performance of simple playing tasks was shown to be more accurate when a virtual haptic playing interface was present that modeled the touch response of the instrument (see also Chap. 6).

As a final point, we suggest that interacting with a digital musical instrument that has simulated dynamical behavior is very different from interacting with an instrument with a digitally mediated playing interface that only supports information exchange. As an extreme example, while playing keyboard music on a touch screen might result in a performance that retains note and timing information, it is very difficult, if not impossible, for a player to perform at speed or to do so without constantly visually monitoring the position of their hands. Not only does the touch screen lack the mechanical properties of a keyboard instrument, it also lacks the incidental tactile cues such as the edges of keys and the differentiated height of black and white keys that are physical “anchors” available as confirmatory cues for the player.

In summary, a musical instrument interface that incorporates dynamic coupling not only provides instantaneous access to a second channel of information about its state, but, because of the availability of cues that allow for the exploration and selection of multiple parameters available for control of its state, such an interface is also likely to support the development of higher levels of skill and musical expressiveness.

6 Conclusions

In this chapter, we have placed particular focus on the idea that the passive dynamics of the body of a musician play an integral role in the process of making music through an instrument. Our thesis, namely that performer-instrument interaction is, in practice, a dynamic coupling between a mechanical system and a biomechanical instrumentalist, repositions the challenge of playing an instrument as a challenge of “playing” the coupled dynamics in which the body is already involved. The idea that an instrument becomes an extension of the player’s body is quite concrete when the coupled dynamics of instrument and player are made explicit in a model. From a control engineering perspective, the coupled body-instrument dynamics form an inner feedback loop; the dynamics of this inner loop are to be driven by an outer loop encompassing the player’s central nervous system. This new perspective becomes a call to arms for the design of digital musical instruments. It places a focus on the haptic feedback available from an instrument, the role of energy storage and return in the mechanical dynamics of the instrument interface, and the possibilities for control of fast dynamic processes otherwise precluded by the use of feedback with loop delay.

This perspective also provides a new scaffold for thought on learning and skill acquisition, as we have only briefly explored. When approached from this perspective, skill acquisition is about refining control of one’s own body, as extended by the musical instrument through dynamic coupling. Increasing skill becomes a question of refining control or generalizing previously acquired skills. Thus, soft-assembly of skill can contribute to the understanding of learning to play instruments that express musical ideas. The open question remains: what role does the player’s perception of the coupled dynamics play in the process of becoming a skilled performer? Answering this question will require us to step inside the coupled dynamics of the player/instrument system. With the advent of new methods for on-body sensing of fine motor actions and new methods for embedding sensors in smart materials, the capacity to perform such observations is now within reach.

References

1. Van Den Doel, K., Pai, D.: Modal Synthesis for Vibrating Objects, pp. 1–8. Audio Anecdotes. A K Peters, Natick, MA (2003)

2. Bilbao, S., Smith, J.O.: Finite difference schemes and digital waveguide networks for the wave equation: Stability, passivity, and numerical dispersion. IEEE Trans. Speech Audio Process. 11(3), 255–266 (2003)

3. van Walstijn, M., Campbell, D.M.: Discrete-time modeling of woodwind instrument bores using wave variables. J. Acoust. Soc. Am. 113(Aug 2002), 575–585 (2003)

4. Smith, J.O.: Physical modeling using digital waveguides. Comput. Music J. 16(4), 74–91 (1992)

5. De Poli, G., Rocchesso, D.: Physically based sound modelling. Organ. Sound 3(1), 61–76 (1998)

6. Hajian, A.Z., Sanchez, D.S., Howe, R.D.: Drum roll: increasing bandwidth through passive impedance modulation. In: Proceedings of the 1997 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS ’97), vol. 3, pp. 2294–2299 (1997)

7. Cadoz, C., Wanderley, M.M.: Gesture-music. In: Wanderley, M.M., Battier, M. (eds.) Trends in Gestural Control of Music, pp. 71–94 (2000)

8. Smith, J.H., Woodhouse, J.: Tribology of rosin. J. Mech. Phys. Solids 48(8), 1633–1681 (2000)

9. Gillespie, R.B., Yu, B., Grijalva, R., Awtar, S.: Characterizing the feel of the piano action. Comput. Music J. 35(1), 43–57 (2011)

10. Izadbakhsh, A., McPhee, J., Birkett, S.: Dynamic modeling and experimental testing of a piano action mechanism with a flexible hammer shank. J. Comput. Nonlinear Dyn. 3(July), 31004 (2008)

11. Berdahl, E., Smith, J.O., Niemeyer, G.: Feedback control of acoustic musical instruments: collocated control using physical analogs. J. Acoust. Soc. Am. 131(1), 963 (2012)

12. Hajian, A.Z., Howe, R.D.: Identification of the mechanical impedance at the human finger tip. J. Biomech. Eng. 119(1), 109–114 (1997)

13. Hasser, C.J., Cutkosky, M.R.: System identification of the human hand grasping a haptic knob. In: Proceedings of the 10th Symposium on Haptic Interfaces for Virtual Environment and Teleoperator Systems (HAPTICS), pp. 171–180 (2002)

14. Karnopp, D.C., Margolis, D.L., Rosenberg, R.C.: System Dynamics: A Unified Approach (1990)

15. Gillespie, R.B.: The virtual piano action: design and implementation. In: Proceedings of the International Computer Music Conference (ICMC), pp. 167–170 (1994)

16. von Holst, E., Mittelstaedt, H.: The principle of reafference: Interactions between the central nervous system and the peripheral organs. Naturwissenschaften 1950, 41–72 (1971)

17. Miall, R.C., Wolpert, D.M.: Forward models for physiological motor control. Neural Netw. 9(8), 1265–1279 (1996)

18. Moore, G.P.: Piano trills. Music Percept. Interdiscip. J. 9(3), 351–359 (1992)

19. Gibson, J.: Observations on active touch. Psychol. Rev. 69(6), 477–491 (1962)

20. Bernstein, N.I.: The Coordination and Regulation of Movements. Pergamon, Oxford (1967)

21. Wolpert, D.M., Ghahramani, Z.: Computational principles of movement neuroscience. Nat. Neurosci. 3(Suppl.), 1212–1217 (2000)

22. Latash, M.L.: Neurophysiological Basis of Movement. Human Kinetics (2008)

23. Osu, R., et al.: Short- and long-term changes in joint co-contraction associated with motor learning as revealed from surface EMG. J. Neurophysiol. 88(2), 991–1004 (2002)

24. Ranganathan, R., Newell, K.M.: Influence of motor learning on utilizing path redundancy. Neurosci. Lett. 469(3), 416–420 (2010)

25. Ranganathan, R., Newell, K.M.: Emergent flexibility in motor learning. Exp. Brain Res. 202(4), 755–764 (2010)

26. Pacheco, M.M., Newell, K.M.: Transfer as a function of exploration and stabilization in original practice. Hum. Mov. Sci. 44, 258–269 (2015)

27. Kelso, J.A.S.: Dynamic Patterns: The Self-Organization of Brain and Behavior. MIT Press (1997)

28. Rodger, M.W.M., O’Modhrain, S., Craig, C.M.: Temporal guidance of musicians’ performance movement is an acquired skill. Exp. Brain Res. 226(2), 221–230 (2013)

29. O’Modhrain, S.M., Chafe, C.: Incorporating haptic feedback into interfaces for music applications. In: ISORA, World Automation Conference (2000)

30. Berdahl, E., Niemeyer, G., Smith, J.O.: Using haptics to assist performers in making gestures to a musical instrument. In: Proceedings of the Conference on New Interfaces for Musical Expression (NIME), pp. 177–182 (2009)

Rights and permissions

Open Access This chapter is licensed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license and indicate if changes were made. The images or other third party material in this book are included in the book's Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the book's Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

Copyright information

© 2018 The Author(s)

About this chapter

Cite this chapter

O’Modhrain, S., Gillespie, R.B. (2018). Once More, with Feeling: Revisiting the Role of Touch in Performer-Instrument Interaction. In: Papetti, S., Saitis, C. (eds) Musical Haptics. Springer Series on Touch and Haptic Systems. Springer, Cham. https://doi.org/10.1007/978-3-319-58316-7_2

DOI: https://doi.org/10.1007/978-3-319-58316-7_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-58315-0

Online ISBN: 978-3-319-58316-7

eBook Packages: Computer Science, Computer Science (R0)