5.1 Introduction of Basic Concepts

5.1.1 Linear Versus Nonlinear Equations

Algebraic equations

A linear, scalar, algebraic equation in x has the form

\[ ax+b=0, \]

for arbitrary real constants a and b. The unknown is a number x. All other algebraic equations, e.g., \(x^{2}+ax+b=0\), are nonlinear. The typical feature in a nonlinear algebraic equation is that the unknown appears in products with itself, like \(x^{2}\) or \(e^{x}=1+x+\frac{1}{2}x^{2}+\frac{1}{3!}x^{3}+\cdots\).

We know how to solve a linear algebraic equation, \(x=-b/a\), but there are no general methods for finding the exact solutions of nonlinear algebraic equations, except for very special cases (quadratic equations constitute a primary example). A nonlinear algebraic equation may have no solution, one solution, or many solutions. The tools for solving nonlinear algebraic equations are iterative methods, where we construct a sequence of linear equations, which we know how to solve, and hope that the solutions of the linear equations converge to a solution of the nonlinear equation we want to solve. Typical methods for nonlinear algebraic equations are Newton’s method, the Bisection method, and the Secant method.

Differential equations

The unknown in a differential equation is a function and not a number. In a linear differential equation, all terms involving the unknown function are linear in the unknown function or its derivatives. Linear here means that the unknown function, or a derivative of it, is multiplied by a number or a known function. All other differential equations are nonlinear.

The easiest way to see if an equation is nonlinear is to spot nonlinear terms where the unknown function or its derivatives are multiplied by each other. For example, in

\[ u^{\prime}(t)=-a(t)u(t)+b(t), \]

the terms involving the unknown function u are linear: \(u^{\prime}\) contains the derivative of the unknown function multiplied by unity, and au contains the unknown function multiplied by a known function. However,

\[ u^{\prime}(t)=u(t)(1-u(t)), \]

is nonlinear because of the term \(-u^{2}\) where the unknown function is multiplied by itself. Also

\[ \frac{\partial u}{\partial t}+u\frac{\partial u}{\partial x}=0, \]

is nonlinear because of the term \(uu_{x}\) where the unknown function appears in a product with its derivative. (Note here that we use different notations for derivatives: \(u^{\prime}\) or \(du/dt\) for a function u(t) of one variable, \(\frac{\partial u}{\partial t}\) or \(u_{t}\) for a function of more than one variable.)

Another example of a nonlinear equation is

\[ u^{\prime\prime}+\sin(u)=0, \]

because \(\sin(u)\) contains products of u, which becomes clear if we expand the function in a Taylor series:

\[ \sin(u)=u-\frac{1}{6}u^{3}+\ldots \]

Mathematical proof of linearity

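To really prove mathematically that some differential equation in an unknown u is linear, show for each term T(u) that with \(u=au_{1}+bu_{2}\) for constants a and b,

\[ T(au_{1}+bu_{2})=aT(u_{1})+bT(u_{2}). \]

For example, the term \(T(u)=(\sin^{2}t)u^{\prime}(t)\) is linear because

\[ T(au_{1}+bu_{2})=(\sin^{2}t)(au_{1}(t)+bu_{2}(t))^{\prime}=a(\sin^{2}t)u_{1}^{\prime}(t)+b(\sin^{2}t)u_{2}^{\prime}(t)=aT(u_{1})+bT(u_{2}). \]

However, \(T(u)=\sin u\) is nonlinear because

\[ T(au_{1}+bu_{2})=\sin(au_{1}+bu_{2})\neq a\sin u_{1}+b\sin u_{2}. \]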

5.1.2 A Simple Model Problem

A series of forthcoming examples will explain how to tackle nonlinear differential equations with various techniques. We start with the (scaled) logistic equation as model problem:

\[ u^{\prime}(t)=u(t)(1-u(t)). \tag{5.1} \]

This is a nonlinear ordinary differential equation (ODE) which will be solved by different strategies in the following. Depending on the chosen time discretization of (5.1), the mathematical problem to be solved at every time level will either be a linear algebraic equation or a nonlinear algebraic equation. In the former case, the time discretization method transforms the nonlinear ODE into linear subproblems at each time level, and the solution is straightforward to find since linear algebraic equations are easy to solve. However, when the time discretization leads to nonlinear algebraic equations, we cannot (except in very rare cases) solve these without turning to approximate, iterative solution methods.

The next subsections introduce various methods for solving nonlinear differential equations, using (5.1) as model. We shall go through the following set of cases:

- explicit time discretization methods (with no need to solve nonlinear algebraic equations)
- implicit Backward Euler time discretization, leading to nonlinear algebraic equations solved by
  - an exact analytical technique
  - Picard iteration based on manual linearization
  - a single Picard step
  - Newton’s method
- implicit Crank-Nicolson time discretization and linearization via a geometric mean formula

Thereafter, we compare the performance of the various approaches. Despite the simplicity of (5.1), the conclusions reveal typical features of the various methods in much more complicated nonlinear PDE problems.

5.1.3 Linearization by Explicit Time Discretization

Time discretization methods are divided into explicit and implicit methods. Explicit methods lead to a closed-form formula for finding new values of the unknowns, while implicit methods give a linear or nonlinear system of equations that couples (all) the unknowns at a new time level. Here we shall demonstrate that explicit methods constitute an efficient way to deal with nonlinear differential equations.

The Forward Euler method is an explicit method. When applied to (5.1), sampled at \(t=t_{n}\), it results in

\[ \frac{u^{n+1}-u^{n}}{\Delta t}=u^{n}(1-u^{n}), \]

which is a linear algebraic equation for the unknown value \(u^{n+1}\) that we can easily solve:

\[ u^{n+1}=u^{n}+\Delta t\,u^{n}(1-u^{n}). \]

In this case, the nonlinearity in the original equation poses no difficulty in the discrete algebraic equation. Any other explicit scheme in time will also give only linear algebraic equations to solve. For example, a typical 2nd-order Runge-Kutta method for (5.1) leads to the following formulas:

\[ u^{*}=u^{n}+\Delta t\,u^{n}(1-u^{n}),\qquad u^{n+1}=u^{n}+\Delta t\,\frac{1}{2}\left(u^{n}(1-u^{n})+u^{*}(1-u^{*})\right). \]

The first step is linear in the unknown \(u^{*}\). Then \(u^{*}\) is known in the next step, which is linear in the unknown \(u^{n+1}\).
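The explicit schemes can be tried out with a few lines of Python. The following is a minimal sketch, not taken from the book's software: the function names, the initial value \(u(0)=0.1\), and the time step are assumptions made for illustration. It applies the Forward Euler scheme and a 2nd-order Runge-Kutta scheme (here the Heun variant with a Forward Euler predictor) to the logistic equation and measures the error against the known exact solution.

```python
import math

def forward_euler_logistic(u0, dt, Nt):
    """Forward Euler for u' = u(1 - u): every step is an explicit formula."""
    u = [u0]
    for n in range(Nt):
        u.append(u[n] + dt*u[n]*(1 - u[n]))
    return u

def rk2_logistic(u0, dt, Nt):
    """2nd-order Runge-Kutta (Heun variant) for u' = u(1 - u)."""
    u = [u0]
    for n in range(Nt):
        u_star = u[n] + dt*u[n]*(1 - u[n])   # Forward Euler predictor
        u.append(u[n] + 0.5*dt*(u[n]*(1 - u[n]) + u_star*(1 - u_star)))
    return u

def exact_logistic(u0, t):
    """Exact solution of u' = u(1 - u) with u(0) = u0."""
    return u0*math.exp(t)/(1 - u0 + u0*math.exp(t))

# Assumed illustration values (not from the text)
u0, dt, Nt = 0.1, 0.1, 50
u_fe = forward_euler_logistic(u0, dt, Nt)
u_rk2 = rk2_logistic(u0, dt, Nt)
err_fe = max(abs(u_fe[n] - exact_logistic(u0, n*dt)) for n in range(Nt + 1))
err_rk2 = max(abs(u_rk2[n] - exact_logistic(u0, n*dt)) for n in range(Nt + 1))
print("max error Forward Euler:", err_fe)
print("max error RK2:          ", err_rk2)
```

No equation solving appears anywhere in the loops: the nonlinear term is always evaluated at values that are already known.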

5.1.4 Exact Solution of Nonlinear Algebraic Equations

Switching to a Backward Euler scheme for (5.1),

\[ \frac{u^{n}-u^{n-1}}{\Delta t}=u^{n}(1-u^{n}), \tag{5.2} \]

results in a nonlinear algebraic equation for the unknown value \(u^{n}\). The equation is of quadratic type:

\[ \Delta t(u^{n})^{2}+(1-\Delta t)u^{n}-u^{n-1}=0, \]

and may be solved exactly by the well-known formula for such equations. Before we do so, however, we will introduce a shorter, and often cleaner, notation for nonlinear algebraic equations at a given time level. The notation is inspired by the natural notation (i.e., variable names) used in a program, especially in more advanced partial differential equation problems. The unknown in the algebraic equation is denoted by u, while \(u^{(1)}\) is the value of the unknown at the previous time level (in general, \(u^{(\ell)}\) is the value of the unknown \(\ell\) levels back in time). The notation will be frequently used in later sections. What is meant by u should be evident from the context: u may either be 1) the exact solution of the ODE/PDE problem, 2) the numerical approximation to the exact solution, or 3) the unknown solution at a certain time level.

The quadratic equation for the unknown \(u^{n}\) in (5.2) can, with the new notation, be written

\[ F(u)=\Delta t\,u^{2}+(1-\Delta t)u-u^{(1)}=0. \tag{5.3} \]

The solution is readily found to be

\[ u=\frac{1}{2\Delta t}\left(-1+\Delta t\pm\sqrt{(1-\Delta t)^{2}+4\Delta t\,u^{(1)}}\right). \tag{5.4} \]

Now we encounter a fundamental challenge with nonlinear algebraic equations: the equation may have more than one solution. How do we pick the right solution? This is in general a hard problem. In the present simple case, however, we can analyze the roots mathematically and provide an answer. The idea is to expand the roots in a series in \(\Delta t\) and truncate after the linear term since the Backward Euler scheme will introduce an error proportional to \(\Delta t\) anyway. Let \(r_{1}\) be the root with a minus sign in front of the square root in (5.4) and \(r_{2}\) the root with a plus sign. Taylor series expansions of the roots in \(\Delta t\) (which can be computed with sympy) read

\[ r_{1}=-\frac{1}{\Delta t}+1-u^{(1)}+\Delta t\left((u^{(1)})^{2}-u^{(1)}\right)+\mathcal{O}(\Delta t^{2}),\qquad r_{2}=u^{(1)}+\Delta t\left(u^{(1)}-(u^{(1)})^{2}\right)+\mathcal{O}(\Delta t^{2}). \]

We see that the \(r_{1}\) root behaves as \(-1/\Delta t\) and will therefore blow up as \(\Delta t\rightarrow 0\)! Since we know that u takes on finite values, actually it is less than or equal to 1, only the \(r_{2}\) root is of relevance in this case: as \(\Delta t\rightarrow 0\), \(u\rightarrow u^{(1)}\), which is the expected result.

For those who are not well experienced with approximating mathematical formulas by series expansion, an alternative method of investigation is simply to compute the limits of the two roots as \(\Delta t\rightarrow 0\) and see if a limit appears unreasonable:

\[ \lim_{\Delta t\rightarrow 0}r_{1}=-\infty,\qquad\lim_{\Delta t\rightarrow 0}r_{2}=u^{(1)}. \]
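The behavior of the two roots can also be probed numerically, without any symbolic tools. The sketch below is only an illustration: the function name `be_roots` and the value \(u^{(1)}=0.1\) are assumptions. It evaluates both roots of \(\Delta t\,u^{2}+(1-\Delta t)u-u^{(1)}=0\) for a decreasing sequence of \(\Delta t\) values.

```python
import math

def be_roots(u_1, dt):
    """Return both roots of dt*u**2 + (1 - dt)*u - u_1 = 0."""
    disc = math.sqrt((1 - dt)**2 + 4*dt*u_1)
    r1 = (-(1 - dt) - disc)/(2*dt)   # minus sign in front of the square root
    r2 = (-(1 - dt) + disc)/(2*dt)   # plus sign in front of the square root
    return r1, r2

u_1 = 0.1   # assumed value at the previous time level
for dt in [1e-1, 1e-2, 1e-3, 1e-4]:
    r1, r2 = be_roots(u_1, dt)
    print(f"dt={dt:g}  r1={r1:.4e}  r2={r2:.6f}")
```

As \(\Delta t\) decreases, one root grows in magnitude without bound while the other stays close to the value at the previous time level, so the latter is the physically relevant one.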

5.1.5 Linearization

When the time integration of an ODE results in a nonlinear algebraic equation, we must normally find its solution by defining a sequence of linear equations and hope that the solutions of these linear equations converge to the desired solution of the nonlinear algebraic equation. Usually, this means solving the linear equation repeatedly in an iterative fashion. Alternatively, the nonlinear equation can sometimes be approximated by one linear equation, and consequently there is no need for iteration.

Constructing a linear equation from a nonlinear one requires linearization of each nonlinear term. This can be done manually as in Picard iteration, or fully algorithmically as in Newton’s method. Examples will best illustrate how to linearize nonlinear problems.

5.1.6 Picard Iteration

Let us write (5.3) in a more compact form

\[ F(u)=au^{2}+bu+c=0, \]

with \(a=\Delta t\), \(b=1-\Delta t\), and \(c=-u^{(1)}\). Let \(u^{-}\) be an available approximation of the unknown u. Then we can linearize the term \(u^{2}\) simply by writing \(u^{-}u\). The resulting equation, \(\hat{F}(u)=0\), is now linear and hence easy to solve:

\[ F(u)\approx\hat{F}(u)=au^{-}u+bu+c=0. \]

Since the equation \(\hat{F}=0\) is only approximate, the solution u does not equal the exact solution \(u_{\mbox{\footnotesize e}}\) of the exact equation \(F(u_{\mbox{\footnotesize e}})=0\), but we can hope that u is closer to \(u_{\mbox{\footnotesize e}}\) than \(u^{-}\) is, and hence it makes sense to repeat the procedure, i.e., set \(u^{-}=u\) and solve \(\hat{F}(u)=0\) again. There is no guarantee that u is closer to \(u_{\mbox{\footnotesize e}}\) than \(u^{-}\), but this approach has proven to be effective in a wide range of applications.

The idea of turning a nonlinear equation into a linear one by using an approximation \(u^{-}\) of u in nonlinear terms is a widely used approach that goes under many names: fixed-point iteration, the method of successive substitutions, nonlinear Richardson iteration, and Picard iteration. We will stick to the latter name.

Picard iteration for solving the nonlinear equation arising from the Backward Euler discretization of the logistic equation can be written as

\[ u=-\frac{c}{au^{-}+b},\quad u^{-}\ \leftarrow\ u. \]

The \(\leftarrow\) symbol means assignment (we set \(u^{-}\) equal to the value of u). The iteration is started with the value of the unknown at the previous time level: \(u^{-}=u^{(1)}\).

Some prefer an explicit iteration counter as superscript in the mathematical notation. Let \(u^{k}\) be the computed approximation to the solution in iteration k. In iteration k + 1 we want to solve

\[ au^{k}u^{k+1}+bu^{k+1}+c=0\quad\Rightarrow\quad u^{k+1}=-\frac{c}{au^{k}+b},\quad k=0,1,\ldots \]

Since we need to perform the iteration at every time level, the time level counter is often also included:

\[ au^{n,k}u^{n,k+1}+bu^{n,k+1}-u^{n-1}=0\quad\Rightarrow\quad u^{n,k+1}=\frac{u^{n-1}}{au^{n,k}+b},\quad k=0,1,\ldots, \]

with the start value \(u^{n,0}=u^{n-1}\) and the final converged value \(u^{n}=u^{n,k}\) for sufficiently large k.

However, we will normally apply a mathematical notation in our final formulas that is as close as possible to what we aim to write in a computer code and then it becomes natural to use u and \(u^{-}\) instead of \(u^{k+1}\) and \(u^{k}\) or \(u^{n,k+1}\) and \(u^{n,k}\).

Stopping criteria

The iteration method can typically be terminated when the change in the solution is smaller than a tolerance \(\epsilon_{u}\):

\[ |u-u^{-}|\leq\epsilon_{u}, \]

or when the residual in the equation is sufficiently small (\(<\epsilon_{r}\)),

\[ |F(u)|=|au^{2}+bu+c|<\epsilon_{r}. \]

A single Picard iteration

Instead of iterating until a stopping criterion is fulfilled, one may iterate a specific number of times. Just one Picard iteration is popular as this corresponds to the intuitive idea of approximating a nonlinear term like \((u^{n})^{2}\) by \(u^{n-1}u^{n}\). This follows from the linearization \(u^{-}u^{n}\) and the initial choice of \(u^{-}=u^{n-1}\) at time level \(t_{n}\). In other words, a single Picard iteration corresponds to using the solution at the previous time level to linearize nonlinear terms. The resulting discretization becomes (using proper values for a, b, and c)

\[ \frac{u^{n}-u^{n-1}}{\Delta t}=u^{n}(1-u^{n-1}), \tag{5.5} \]

which is a linear algebraic equation in the unknown \(u^{n}\), making it easy to solve for \(u^{n}\) without any need for an alternative notation.

We shall later refer to the strategy of taking one Picard step, or equivalently, linearizing terms with use of the solution at the previous time step, as the Picard1 method. It is a widely used approach in science and technology, but with some limitations if \(\Delta t\) is not sufficiently small (as will be illustrated later).

Notice

Equation (5.5) does not correspond to a ‘‘pure’’ finite difference method where the equation is sampled at a point and derivatives replaced by differences (because the \(u^{n-1}\) term on the right-hand side must then be \(u^{n}\)). The best interpretation of the scheme (5.5) is a Backward Euler difference combined with a single (perhaps insufficient) Picard iteration at each time level, with the value at the previous time level as start for the Picard iteration.

5.1.7 Linearization by a Geometric Mean

We consider now a Crank-Nicolson discretization of (5.1). This means that the time derivative is approximated by a centered difference,

\[ [D_{t}u=u(1-u)]^{n+\frac{1}{2}}, \]

written out as

\[ \frac{u^{n+1}-u^{n}}{\Delta t}=u^{n+\frac{1}{2}}-(u^{n+\frac{1}{2}})^{2}. \tag{5.6} \]

The term \(u^{n+\frac{1}{2}}\) is normally approximated by an arithmetic mean,

\[ u^{n+\frac{1}{2}}\approx\frac{1}{2}(u^{n}+u^{n+1}), \]

such that the scheme involves the unknown function only at the time levels where we actually intend to compute it. The same arithmetic mean applied to the nonlinear term gives

\[ (u^{n+\frac{1}{2}})^{2}\approx\frac{1}{4}(u^{n}+u^{n+1})^{2}, \]

which is nonlinear in the unknown \(u^{n+1}\). However, using a geometric mean for \((u^{n+\frac{1}{2}})^{2}\) is a way of linearizing the nonlinear term in (5.6):

\[ (u^{n+\frac{1}{2}})^{2}\approx u^{n}u^{n+1}. \]

Using an arithmetic mean on the linear \(u^{n+\frac{1}{2}}\) term in (5.6) and a geometric mean for the second term results in a linearized equation for the unknown \(u^{n+1}\):

\[ \frac{u^{n+1}-u^{n}}{\Delta t}=\frac{1}{2}(u^{n}+u^{n+1})-u^{n}u^{n+1}, \]

which can readily be solved:

\[ u^{n+1}=\frac{1+\frac{1}{2}\Delta t}{1+\Delta t\,u^{n}-\frac{1}{2}\Delta t}\,u^{n}. \]

This scheme can be coded directly, and since there is no nonlinear algebraic equation to iterate over, we skip the simplified notation with u for \(u^{n+1}\) and \(u^{(1)}\) for \(u^{n}\). The technique of using a geometric average is an example of transforming a nonlinear algebraic equation to a linear one, without any need for iterations.

The geometric mean approximation is often very effective for linearizing quadratic nonlinearities. Both the arithmetic and geometric mean approximations have truncation errors of order \(\Delta t^{2}\) and are therefore compatible with the truncation error \(\mathcal{O}(\Delta t^{2})\) of the centered difference approximation for \(u^{\prime}\) in the Crank-Nicolson method.

Applying the operator notation for the means and finite differences, the linearized Crank-Nicolson scheme for the logistic equation can be compactly expressed as

\[ [D_{t}u=\overline{u}^{t}-\overline{u^{2}}^{t,g}]^{n+\frac{1}{2}}. \]

Remark

If we use an arithmetic instead of a geometric mean for the nonlinear term in (5.6), we end up with a nonlinear term \((u^{n+1})^{2}\). This term can be linearized as \(u^{-}u^{n+1}\) in a Picard iteration approach and in particular as \(u^{n}u^{n+1}\) in a Picard1 iteration approach. The latter gives a scheme almost identical to the one arising from a geometric mean (the difference in \(u^{n+1}\) being \(\frac{1}{4}\Delta tu^{n}(u^{n+1}-u^{n})\approx\frac{1}{4}\Delta t^{2}u^{\prime}u\), i.e., a difference of size \(\Delta t^{2}\)).

5.1.8 Newton’s Method

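The Backward Euler scheme (5.2) for the logistic equation leads to a nonlinear algebraic equation (5.3). Now we write any nonlinear algebraic equation in the general and compact form

\[ F(u)=0. \]

Newton’s method linearizes this equation by approximating F(u) by its Taylor series expansion around a computed value \(u^{-}\) and keeping only the linear part:

\[ F(u)=F(u^{-})+F^{\prime}(u^{-})(u-u^{-})+\frac{1}{2}F^{\prime\prime}(u^{-})(u-u^{-})^{2}+\cdots\approx F(u^{-})+F^{\prime}(u^{-})(u-u^{-})=\hat{F}(u). \]

The linear equation \(\hat{F}(u)=0\) has the solution

\[ u=u^{-}-\frac{F(u^{-})}{F^{\prime}(u^{-})}. \]

Expressed with an iteration index in the unknown, Newton’s method takes on the more familiar mathematical form

\[ u^{k+1}=u^{k}-\frac{F(u^{k})}{F^{\prime}(u^{k})},\quad k=0,1,\ldots \]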

It can be shown that the error in iteration k + 1 of Newton’s method is proportional to the square of the error in iteration k, a result referred to as quadratic convergence. This means that for small errors the method converges very fast, and in particular much faster than Picard iteration and other iteration methods. (The proof of this result is found in most textbooks on numerical analysis.) However, the quadratic convergence appears only if \(u^{k}\) is sufficiently close to the solution. Further away from the solution the method can easily converge very slowly or diverge. The reader is encouraged to do Exercise 5.3 to get a better understanding of the behavior of the method.

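Application of Newton’s method to the logistic equation discretized by the Backward Euler method is straightforward as we have

\[ F(u)=au^{2}+bu+c,\quad a=\Delta t,\ b=1-\Delta t,\ c=-u^{(1)}, \]

and then

\[ F^{\prime}(u)=2au+b. \]

The iteration method becomes

\[ u=u^{-}-\frac{a(u^{-})^{2}+bu^{-}+c}{2au^{-}+b},\quad u^{-}\ \leftarrow\ u. \tag{5.7} \]

At each time level, we start the iteration by setting \(u^{-}=u^{(1)}\). Stopping criteria as listed for the Picard iteration can be used also for Newton’s method.

An alternative mathematical form, where we write out a, b, and c, and use a time level counter n and an iteration counter k, takes the form

\[ u^{n,k+1}=u^{n,k}-\frac{\Delta t(u^{n,k})^{2}+(1-\Delta t)u^{n,k}-u^{n-1}}{2\Delta t\,u^{n,k}+1-\Delta t},\quad u^{n,0}=u^{n-1}, \tag{5.8} \]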

for \(k=0,1,\ldots\). A program implementation is much closer to (5.7) than to (5.8), but the latter is better aligned with the established mathematical notation used in the literature.
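To observe the quadratic convergence in practice, one may record the error in each Newton iteration for a single Backward Euler step. The following sketch is an illustration only: the function name `newton_be_logistic`, the values \(u^{(1)}=0.1\) and \(\Delta t=0.9\), and the tight residual tolerance are assumptions. The root of the quadratic equation with a plus sign in front of the square root serves as reference solution.

```python
import math

def newton_be_logistic(u_1, dt, eps_r=1e-12, max_iter=20):
    """Newton's method for a*u**2 + b*u + c = 0 at one time level."""
    a, b, c = dt, 1 - dt, -u_1

    def F(u):
        return a*u**2 + b*u + c

    def dF(u):
        return 2*a*u + b

    u_ = u_1                 # start with the value at the previous time level
    history = [u_]
    k = 0
    while abs(F(u_)) > eps_r and k < max_iter:
        u_ = u_ - F(u_)/dF(u_)
        history.append(u_)
        k += 1
    return u_, history

u_1, dt = 0.1, 0.9           # assumed illustration values
u, history = newton_be_logistic(u_1, dt)
u_ref = (-(1 - dt) + math.sqrt((1 - dt)**2 + 4*dt*u_1))/(2*dt)
errors = [abs(v - u_ref) for v in history]
for k, e in enumerate(errors):
    print(f"iteration {k}: error {e:.3e}")
```

Once the iterate is close to the solution, the number of correct digits roughly doubles from one iteration to the next.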

5.1.9 Relaxation

One iteration in Newton’s method or Picard iteration consists of solving a linear problem \(\hat{F}(u)=0\). Sometimes convergence problems arise because the new solution u of \(\hat{F}(u)=0\) is ‘‘too far away’’ from the previously computed solution \(u^{-}\). A remedy is to introduce a relaxation, meaning that we first solve \(\hat{F}(u^{*})=0\) for a suggested value \(u^{*}\) and then we take u as a weighted mean of what we had, \(u^{-}\), and what our linearized equation \(\hat{F}=0\) suggests, \(u^{*}\):

\[ u=\omega u^{*}+(1-\omega)u^{-}. \]

The parameter ω is known as a relaxation parameter, and a choice ω < 1 may prevent divergent iterations.

Relaxation in Newton’s method can be directly incorporated in the basic iteration formula:

\[ u=u^{-}-\omega\frac{F(u^{-})}{F^{\prime}(u^{-})}. \]

5.1.10 Implementation and Experiments

The program logistic.py contains implementations of all the methods described above. Below is an extract of the file showing how the Picard and Newton methods are implemented for a Backward Euler discretization of the logistic equation.
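A minimal sketch of such an implementation is shown here. It is not the actual contents of logistic.py: the function name `BE_logistic`, its arguments, and the use of plain Python lists are assumptions made for illustration. The residual \(|F(u)|<\epsilon_{r}\) is used as stopping criterion, and a relaxation parameter `omega` is included for both iteration methods.

```python
def BE_logistic(u0, dt, Nt, choice='Picard', eps_r=1e-3, omega=1.0,
                max_iter=1000):
    """Backward Euler for u' = u(1 - u).

    choice: 'Picard', 'Picard1' (a single Picard step), or 'Newton'.
    Returns the solution and the number of iterations at each time level.
    """
    if choice == 'Picard1':
        choice, max_iter = 'Picard', 1
    if choice not in ('Picard', 'Newton'):
        raise ValueError('unknown choice: %s' % choice)

    u = [u0]
    iterations = []
    for n in range(1, Nt + 1):
        a, b, c = dt, 1 - dt, -u[n-1]

        def F(v):
            return a*v**2 + b*v + c

        def dF(v):
            return 2*a*v + b

        u_ = u[n-1]          # start value: solution at previous time level
        k = 0
        while abs(F(u_)) > eps_r and k < max_iter:
            if choice == 'Picard':
                u_star = -c/(a*u_ + b)
                u_ = omega*u_star + (1 - omega)*u_
            else:            # Newton
                u_ = u_ - omega*F(u_)/dF(u_)
            k += 1
        u.append(u_)
        iterations.append(k)
    return u, iterations

# Assumed experiment: coarse time step, with and without relaxation
u_picard, it_picard = BE_logistic(0.1, 0.9, 10, 'Picard')
u_relax, it_relax = BE_logistic(0.1, 0.9, 10, 'Picard', omega=0.5)
u_newton, it_newton = BE_logistic(0.1, 0.9, 10, 'Newton')
print('Picard:          ', it_picard)
print('Picard, omega=.5:', it_relax)
print('Newton:          ', it_newton)
```

The printed lists give the iteration counts per time level, which makes it easy to compare the methods for different choices of \(\Delta t\), \(\epsilon_{r}\), and ω.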

The Crank-Nicolson method utilizing a linearization based on the geometric mean gives a simpler algorithm:
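The following sketch illustrates how short the algorithm becomes. The function names, the initial value \(u(0)=0.1\), and the small convergence check are assumptions and not necessarily how logistic.py is organized. Each time step applies the update \(u^{n+1}=u^{n}(1+\frac{1}{2}\Delta t)/(1+\Delta t\,u^{n}-\frac{1}{2}\Delta t)\), which follows from replacing \((u^{n+\frac{1}{2}})^{2}\) by \(u^{n}u^{n+1}\) in the Crank-Nicolson scheme.

```python
import math

def CN_logistic(u0, dt, Nt):
    """Crank-Nicolson for u' = u(1 - u) with a geometric mean for u**2."""
    u = [u0]
    for n in range(Nt):
        u.append((1 + 0.5*dt)/(1 + dt*u[n] - 0.5*dt)*u[n])
    return u

def exact_logistic(u0, t):
    """Exact solution of u' = u(1 - u) with u(0) = u0."""
    return u0*math.exp(t)/(1 - u0 + u0*math.exp(t))

def max_error(dt, u0=0.1, T=5.0):
    """Largest error over all time levels in [0, T]."""
    Nt = int(round(T/dt))
    u = CN_logistic(u0, dt, Nt)
    return max(abs(u[n] - exact_logistic(u0, n*dt)) for n in range(Nt + 1))

e_coarse = max_error(0.1)
e_fine = max_error(0.05)
print('error ratio when halving dt:', e_coarse/e_fine)
```

For a second-order scheme, halving \(\Delta t\) should reduce the error by a factor of about four.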

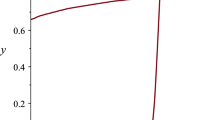

We may run experiments with the model problem (5.1) and the different strategies for dealing with nonlinearities as described above. For a quite coarse time resolution, \(\Delta t=0.9\), use of a tolerance \(\epsilon_{r}=0.1\) in the stopping criterion introduces an iteration error, especially in the Picard iterations, that is visibly much larger than the time discretization error due to a large \(\Delta t\). This is illustrated by comparing the upper two plots in Fig. 5.1. The one to the right has a stricter tolerance \(\epsilon_{r}=10^{-3}\), which causes all the curves corresponding to Picard and Newton iteration to be on top of each other (and no changes can be visually observed by reducing \(\epsilon_{r}\) further). The reason why Newton’s method does much better than Picard iteration in the upper left plot is that Newton’s method with one step comes far below the \(\epsilon_{r}\) tolerance, while the Picard iteration needs on average 7 iterations to bring the residual down to \(\epsilon_{r}=10^{-1}\), which gives insufficient accuracy in the solution of the nonlinear equation. It is obvious that the Picard1 method gives significant errors in addition to the time discretization unless the time step is as small as in the lower right plot.

The BE exact curve corresponds to using the exact solution of the quadratic equation at each time level, so this curve is only affected by the Backward Euler time discretization. The CN gm curve corresponds to the theoretically more accurate Crank-Nicolson discretization, combined with a geometric mean for linearization. This curve appears more accurate, especially if we take the plot in the lower right with a small \(\Delta t\) and an appropriately small \(\epsilon_{r}\) value as the exact curve.

When it comes to the need for iterations, Fig. 5.2 displays the number of iterations required at each time level for Newton’s method and Picard iteration. The smaller \(\Delta t\) is, the better starting value we have for the iteration, and the faster the convergence is. With \(\Delta t=0.9\) Picard iteration requires on average 32 iterations per time step, but this number is dramatically reduced as \(\Delta t\) is reduced.

However, introducing relaxation and a parameter ω = 0.8 immediately reduces the average of 32 to 7, indicating that for the large \(\Delta t=0.9\), Picard iteration takes steps that are too long. An approximately optimal value for ω in this case is 0.5, which results in an average of only 2 iterations! An even more dramatic impact of ω appears when \(\Delta t=1\): Picard iteration does not converge in 1000 iterations, but ω = 0.5 again brings the average number of iterations down to 2.

Remark

The simple Crank-Nicolson method with a geometric mean for the quadratic nonlinearity gives visually more accurate solutions than the Backward Euler discretization. Even with a tolerance of \(\epsilon_{r}=10^{-3}\), all the methods for treating the nonlinearities in the Backward Euler discretization give graphs that cannot be distinguished. So for accuracy in this problem, the time discretization is much more crucial than \(\epsilon_{r}\). Ideally, one should estimate the error in the time discretization, as the solution progresses, and set \(\epsilon_{r}\) accordingly.

5.1.11 Generalization to a General Nonlinear ODE

Let us see how the various methods in the previous sections can be applied to the more generic model

\[ u^{\prime}=f(u,t), \]

where f is a nonlinear function of u.

Explicit time discretization

Explicit ODE methods like the Forward Euler scheme, Runge-Kutta methods and Adams-Bashforth methods all evaluate f at time levels where u is already computed, so nonlinearities in f do not pose any difficulties.

Backward Euler discretization

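Approximating \(u^{\prime}\) by a backward difference leads to a Backward Euler scheme, which can be written as

\[ F(u^{n})=u^{n}-\Delta t\,f(u^{n},t_{n})-u^{n-1}=0, \]

or alternatively

\[ F(u)=u-\Delta t\,f(u,t_{n})-u^{(1)}=0. \]

A simple Picard iteration, not knowing anything about the nonlinear structure of f, must approximate \(f(u,t_{n})\) by \(f(u^{-},t_{n})\):

\[ \hat{F}(u)=u-\Delta t\,f(u^{-},t_{n})-u^{(1)}. \]

The iteration starts with \(u^{-}=u^{(1)}\) and proceeds with repeating

\[ u^{*}=\Delta t\,f(u^{-},t_{n})+u^{(1)},\quad u=\omega u^{*}+(1-\omega)u^{-},\quad u^{-}\ \leftarrow\ u, \]

until a stopping criterion is fulfilled.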

Explicit vs implicit treatment of nonlinear terms

Evaluating f for a known \(u^{-}\) is referred to as explicit treatment of f, while if \(f(u,t)\) has some structure, say \(f(u,t)=u^{3}\), parts of f can involve the unknown u, as in the manual linearization \((u^{-})^{2}u\), and then the treatment of f is ‘‘more implicit’’ and ‘‘less explicit’’. This terminology is inspired by time discretization of \(u^{\prime}=f(u,t)\), where evaluating f for known u values gives explicit schemes, while treating f or parts of f implicitly, makes f contribute to the unknown terms in the equation at the new time level.

Explicit treatment of f usually means stricter conditions on \(\Delta t\) to achieve stability of time discretization schemes. The same applies to iteration techniques for nonlinear algebraic equations: the ‘‘less’’ we linearize f (i.e., the more we keep of u in the original formula), the faster the convergence may be.

We may say that \(f(u,t)=u^{3}\) is treated explicitly if we evaluate f as \((u^{-})^{3}\), partially implicit if we linearize as \((u^{-})^{2}u\) and fully implicit if we represent f by \(u^{3}\). (Of course, the fully implicit representation will require further linearization, but with \(f(u,t)=u^{2}\) a fully implicit treatment is possible if the resulting quadratic equation is solved with a formula.)

For the ODE \(u^{\prime}=-u^{3}\) with \(f(u,t)=-u^{3}\) and coarse time resolution \(\Delta t=0.4\), Picard iteration with \((u^{-})^{2}u\) requires 8 iterations with \(\epsilon_{r}=10^{-3}\) for the first time step, while \((u^{-})^{3}\) leads to 22 iterations. After about 10 time steps both approaches are down to about 2 iterations per time step, but this example shows a potential of treating f more implicitly.

A trick to treat f implicitly in Picard iteration is to evaluate it as \(f(u^{-},t)u/u^{-}\). For a polynomial f, \(f(u,t)=u^{m}\), this corresponds to \((u^{-})^{m}u/u^{-}=(u^{-})^{m-1}u\). Sometimes this more implicit treatment has no effect, as with \(f(u,t)=\exp(-u)\) and \(f(u,t)=\ln(1+u)\), but with \(f(u,t)=\sin(2(u+1))\), the \(f(u^{-},t)u/u^{-}\) trick leads to 7, 9, and 11 iterations during the first three steps, while \(f(u^{-},t)\) demands 17, 21, and 20 iterations. (Experiments can be done with the code ODE_Picard_tricks.py .)
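The effect of a more implicit treatment can be explored with a small experiment for \(u^{\prime}=-u^{3}\). The sketch below is an illustration and not the code in ODE_Picard_tricks.py: the function name `picard_be_step`, the start value \(u^{(1)}=1\), and the use of the residual as stopping criterion are assumptions. It performs one Backward Euler step with Picard iteration, either with f evaluated as \(-(u^{-})^{3}\) or linearized as \(-(u^{-})^{2}u\).

```python
def picard_be_step(u_1, dt, variant, eps_r=1e-3, max_iter=1000):
    """One Backward Euler step for u' = -u**3 solved by Picard iteration.

    variant='explicit': f is evaluated as -(u_)**3
    variant='partial':  f is linearized as -(u_)**2*u
    Returns the new value and the number of iterations.
    """
    def F(u):
        # Residual of the Backward Euler equation u - dt*f(u) - u_1 = 0
        return u + dt*u**3 - u_1

    u_ = u_1
    k = 0
    while abs(F(u_)) > eps_r and k < max_iter:
        if variant == 'explicit':
            u_ = u_1 - dt*u_**3
        elif variant == 'partial':
            u_ = u_1/(1 + dt*u_**2)
        else:
            raise ValueError('unknown variant: %s' % variant)
        k += 1
    return u_, k

# Assumed start value u_1 = 1 and the coarse time step dt = 0.4
u_explicit, k_explicit = picard_be_step(1.0, 0.4, 'explicit')
u_partial, k_partial = picard_be_step(1.0, 0.4, 'partial')
print('explicit treatment:  ', k_explicit, 'iterations')
print('partially implicit:  ', k_partial, 'iterations')
```

The exact iteration counts depend on the start value and the tolerance, but the partially implicit variant needs clearly fewer iterations in this setting.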

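Newton’s method applied to a Backward Euler discretization of \(u^{\prime}=f(u,t)\) requires computation of the derivative

\[ F^{\prime}(u)=1-\Delta t\frac{\partial f}{\partial u}(u,t_{n}). \]

Starting with the solution at the previous time level, \(u^{-}=u^{(1)}\), we can just use the standard formula

\[ u=u^{-}-\omega\frac{F(u^{-})}{F^{\prime}(u^{-})}=u^{-}-\omega\frac{u^{-}-\Delta t\,f(u^{-},t_{n})-u^{(1)}}{1-\Delta t\frac{\partial}{\partial u}f(u^{-},t_{n})}. \tag{5.11} \]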

Crank-Nicolson discretization

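The standard Crank-Nicolson scheme with arithmetic mean approximation of f takes the form

\[ \frac{u^{n+1}-u^{n}}{\Delta t}=\frac{1}{2}\left(f(u^{n+1},t_{n+1})+f(u^{n},t_{n})\right). \]

We can write the scheme as a nonlinear algebraic equation

\[ F(u)=u-u^{(1)}-\Delta t\frac{1}{2}f(u,t_{n+1})-\Delta t\frac{1}{2}f(u^{(1)},t_{n})=0. \tag{5.12} \]

A Picard iteration scheme must in general employ the linearization

\[ \hat{F}(u)=u-u^{(1)}-\Delta t\frac{1}{2}f(u^{-},t_{n+1})-\Delta t\frac{1}{2}f(u^{(1)},t_{n}), \]

while Newton’s method can apply the general formula (5.11) with F(u) given in (5.12) and

\[ F^{\prime}(u)=1-\frac{1}{2}\Delta t\frac{\partial f}{\partial u}(u,t_{n+1}). \]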

5.1.12 Systems of ODEs

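We may write a system of ODEs

\[ \frac{d}{dt}u_{0}(t)=f_{0}(u_{0}(t),u_{1}(t),\ldots,u_{N}(t),t),\quad\ldots,\quad\frac{d}{dt}u_{N}(t)=f_{N}(u_{0}(t),u_{1}(t),\ldots,u_{N}(t),t), \]

as

\[ u^{\prime}=f(u,t), \]

if we interpret u as a vector \(u=(u_{0}(t),u_{1}(t),\ldots,u_{N}(t))\) and f as a vector function with components \((f_{0}(u,t),f_{1}(u,t),\ldots,f_{N}(u,t))\).

Most solution methods for scalar ODEs, including the Forward and Backward Euler schemes and the Crank-Nicolson method, generalize in a straightforward way to systems of ODEs simply by using vector arithmetics instead of scalar arithmetics, which corresponds to applying the scalar scheme to each component of the system. For example, here is a backward difference scheme applied to each component,

\[ \frac{u_{i}^{n}-u_{i}^{n-1}}{\Delta t}=f_{i}(u^{n},t_{n}),\quad i=0,\ldots,N, \]

which can be written more compactly in vector form as

\[ \frac{u^{n}-u^{n-1}}{\Delta t}=f(u^{n},t_{n}). \]

This is a system of algebraic equations,

\[ u^{n}-\Delta t\,f(u^{n},t_{n})-u^{n-1}=0, \]

or written out

\[ u_{i}^{n}-\Delta t\,f_{i}(u^{n},t_{n})-u_{i}^{n-1}=0,\quad i=0,\ldots,N. \]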

Example

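We shall address the 2 × 2 ODE system for oscillations of a pendulum subject to gravity and air drag. The system can be written as

\[ \dot{\omega}=-\sin\theta-\beta\omega|\omega|,\qquad\dot{\theta}=\omega, \]

where β is a dimensionless parameter (this is the scaled, dimensionless version of the original, physical model). The unknown components of the system are the angle \(\theta(t)\) and the angular velocity \(\omega(t)\). We introduce \(u_{0}=\omega\) and \(u_{1}=\theta\), which leads to

\[ u_{0}^{\prime}=f_{0}(u,t)=-\sin u_{1}-\beta u_{0}|u_{0}|,\qquad u_{1}^{\prime}=f_{1}(u,t)=u_{0}. \]

A Crank-Nicolson scheme reads

\[ \frac{u_{0}^{n+1}-u_{0}^{n}}{\Delta t}=-\sin u_{1}^{n+\frac{1}{2}}-\beta u_{0}^{n+\frac{1}{2}}|u_{0}^{n+\frac{1}{2}}|\approx-\sin\left(\frac{1}{2}(u_{1}^{n+1}+u_{1}^{n})\right)-\beta\frac{1}{4}(u_{0}^{n+1}+u_{0}^{n})|u_{0}^{n+1}+u_{0}^{n}|, \]

\[ \frac{u_{1}^{n+1}-u_{1}^{n}}{\Delta t}=u_{0}^{n+\frac{1}{2}}\approx\frac{1}{2}(u_{0}^{n+1}+u_{0}^{n}). \]

This is a coupled system of two nonlinear algebraic equations in two unknowns \(u_{0}^{n+1}\) and \(u_{1}^{n+1}\).

Using the notation \(u_{0}\) and \(u_{1}\) for the unknowns \(u_{0}^{n+1}\) and \(u_{1}^{n+1}\) in this system, writing \(u_{0}^{(1)}\) and \(u_{1}^{(1)}\) for the previous values \(u_{0}^{n}\) and \(u_{1}^{n}\), multiplying by \(\Delta t\) and moving the terms to the left-hand sides, gives

\[ u_{0}-u_{0}^{(1)}+\Delta t\,\sin\left(\frac{1}{2}(u_{1}+u_{1}^{(1)})\right)+\frac{1}{4}\Delta t\beta(u_{0}+u_{0}^{(1)})|u_{0}+u_{0}^{(1)}|=0, \tag{5.18} \]

\[ u_{1}-u_{1}^{(1)}-\frac{1}{2}\Delta t(u_{0}+u_{0}^{(1)})=0. \tag{5.19} \]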

Obviously, we have a need for solving systems of nonlinear algebraic equations, which is the topic of the next section.

5.2 Systems of Nonlinear Algebraic Equations

Implicit time discretization methods for a system of ODEs, or a PDE, lead to systems of nonlinear algebraic equations, written compactly as

\[ F(u)=0, \]

where u is a vector of unknowns \(u=(u_{0},\ldots,u_{N})\), and F is a vector function: \(F=(F_{0},\ldots,F_{N})\). The system at the end of Sect. 5.1.12 fits this notation with N = 1, \(F_{0}(u)\) given by the left-hand side of (5.18), while \(F_{1}(u)\) is the left-hand side of (5.19).

Sometimes the equation system has a special structure because of the underlying problem, e.g.,

\[ A(u)u=b(u), \]

with A(u) as an \((N+1)\times(N+1)\) matrix function of u and b as a vector function: \(b=(b_{0},\ldots,b_{N})\).

We shall next explain how Picard iteration and Newton’s method can be applied to systems like \(F(u)=0\) and \(A(u)u=b(u)\). The exposition has a focus on ideas and practical computations. More theoretical considerations, including quite general results on convergence properties of these methods, can be found in Kelley [8].

5.2.1 Picard Iteration

We cannot apply Picard iteration to nonlinear equations unless there is some special structure. For the commonly arising case \(A(u)u=b(u)\) we can linearize the product \(A(u)u\) to \(A(u^{-})u\) and b(u) as \(b(u^{-})\). That is, we use the most recently computed approximation in A and b to arrive at a linear system for u:

\[ A(u^{-})u=b(u^{-}). \]

A relaxed iteration takes the form

\[ A(u^{-})u^{*}=b(u^{-}),\quad u=\omega u^{*}+(1-\omega)u^{-}. \]

In other words, we solve a system of nonlinear algebraic equations as a sequence of linear systems.

Algorithm for relaxed Picard iteration

Given \(A(u)u=b(u)\) and an initial guess \(u^{-}\), iterate until convergence:

1. solve \(A(u^{-})u^{*}=b(u^{-})\) with respect to \(u^{*}\)
2. \(u=\omega u^{*}+(1-\omega)u^{-}\)
3. \(u^{-}\ \leftarrow\ u\)

‘‘Until convergence’’ means that the iteration is stopped when the change in the unknown, \(||u-u^{-}||\), or the residual \(||A(u)u-b||\), is sufficiently small, see Sect. 5.2.3 for more details.

5.2.2 Newton’s Method

The natural starting point for Newton’s method is the general nonlinear vector equation \(F(u)=0\). As for a scalar equation, the idea is to approximate F around a known value \(u^{-}\) by a linear function \(\hat{F}\), calculated from the first two terms of a Taylor expansion of F. In the multi-variate case these two terms become

\[ F(u^{-})+J(u^{-})\cdot(u-u^{-}), \]

where J is the Jacobian of F, defined by

\[ J_{i,j}=\frac{\partial F_{i}}{\partial u_{j}}. \]

So, the original nonlinear system is approximated by

\[ \hat{F}(u)=F(u^{-})+J(u^{-})\cdot(u-u^{-})=0, \]

which is linear in u and can be solved in a two-step procedure: first solve \(J\delta u=-F(u^{-})\) with respect to the vector \(\delta u\) and then update \(u=u^{-}+\delta u\). A relaxation parameter can easily be incorporated:

\[ u=\omega(u^{-}+\delta u)+(1-\omega)u^{-}=u^{-}+\omega\delta u. \]

Algorithm for Newton’s method

Given \(F(u)=0\) and an initial guess \(u^{-}\), iterate until convergence:

1. solve \(J\delta u=-F(u^{-})\) with respect to \(\delta u\)
2. \(u=u^{-}+\omega\delta u\)
3. \(u^{-}\ \leftarrow\ u\)
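The three steps of the algorithm can be sketched in plain Python as follows. Everything in this example is an assumption made for illustration: the helper `solve_linear` (a small Gaussian elimination used so that no external library is needed), the function `newton_system`, and the test system \(u_{0}^{2}+u_{1}^{2}-4=0\), \(u_{0}-u_{1}=0\), which is not taken from the text. In practice one would normally use a linear algebra library for the linear solve.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]   # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col]/M[col][col]
            for j in range(col, n + 1):
                M[r][j] -= factor*M[col][j]
    x = [0.0]*n
    for i in range(n - 1, -1, -1):                      # back substitution
        s = sum(M[i][j]*x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s)/M[i][i]
    return x

def norm(v):
    """Euclidean vector norm."""
    return sum(vi*vi for vi in v)**0.5

def newton_system(F, J, u0, omega=1.0, eps_r=1e-10, max_iter=50):
    """Newton's method with relaxation for the vector equation F(u) = 0."""
    u_ = list(u0)
    k = 0
    while norm(F(u_)) > eps_r and k < max_iter:
        du = solve_linear(J(u_), [-Fi for Fi in F(u_)])   # step 1
        u_ = [ui + omega*dui for ui, dui in zip(u_, du)]  # steps 2 and 3
        k += 1
    return u_, k

# Hypothetical 2 x 2 test system with solution (sqrt(2), sqrt(2))
def F_example(u):
    return [u[0]**2 + u[1]**2 - 4, u[0] - u[1]]

def J_example(u):
    return [[2*u[0], 2*u[1]],
            [1.0, -1.0]]

u_sol, k_sol = newton_system(F_example, J_example, [1.0, 2.0])
print(u_sol, 'found in', k_sol, 'iterations')
```

The stopping test here uses the absolute residual only; the criteria in Sect. 5.2.3 can be substituted for it.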

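For the special system with structure \(A(u)u=b(u)\),

\[ F_{i}=\sum_{k}A_{i,k}(u)u_{k}-b_{i}(u), \]

one gets

\[ J_{i,j}=\sum_{k}\frac{\partial A_{i,k}}{\partial u_{j}}u_{k}+A_{i,j}-\frac{\partial b_{i}}{\partial u_{j}}. \]

We realize that the Jacobian needed in Newton’s method consists of \(A(u^{-})\) as in the Picard iteration plus two additional terms arising from the differentiation. Using the notation \(A^{\prime}(u)\) for \(\partial A/\partial u\) (a quantity with three indices: \(\partial A_{i,k}/\partial u_{j}\)), and \(b^{\prime}(u)\) for \(\partial b/\partial u\) (a quantity with two indices: \(\partial b_{i}/\partial u_{j}\)), we can write the linear system to be solved as

\[ (A+A^{\prime}u-b^{\prime})\delta u=-Au+b, \]

or

\[ (A(u^{-})+A^{\prime}(u^{-})u^{-}-b^{\prime}(u^{-}))\delta u=-A(u^{-})u^{-}+b(u^{-}). \]

Rearranging the terms demonstrates the difference from the system solved in each Picard iteration:

\[ \underbrace{A(u^{-})(u^{-}+\delta u)-b(u^{-})}_{\mbox{Picard system}}+\gamma(A^{\prime}(u^{-})u^{-}-b^{\prime}(u^{-}))\delta u=0. \]

Here we have inserted a parameter γ such that γ = 0 gives the Picard system and γ = 1 gives the Newton system. Such a parameter can be handy in software to easily switch between the methods.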

Combined algorithm for Picard and Newton iteration

Given A(u), b(u), and an initial guess \(u^{-}\), iterate until convergence:

1. solve \((A(u^{-})+\gamma(A^{\prime}(u^{-})u^{-}-b^{\prime}(u^{-})))\delta u=-A(u^{-})u^{-}+b(u^{-})\) with respect to \(\delta u\)
2. \(u=u^{-}+\omega\delta u\)
3. \(u^{-}\ \leftarrow\ u\)

γ = 1 gives a Newton method while γ = 0 corresponds to Picard iteration.

5.2.3 Stopping Criteria

Let \(||\cdot||\) be the standard Euclidean vector norm. Four termination criteria are much in use:

- Absolute change in solution: \(||u-u^{-}||\leq\epsilon_{u}\)
- Relative change in solution: \(||u-u^{-}||\leq\epsilon_{u}||u_{0}||\), where \(u_{0}\) denotes the start value of \(u^{-}\) in the iteration
- Absolute residual: \(||F(u)||\leq\epsilon_{r}\)
- Relative residual: \(||F(u)||\leq\epsilon_{r}||F(u_{0})||\)

To prevent divergent iterations from running forever, one terminates the iterations when the current number of iterations k exceeds a maximum value \(k_{\max}\).

The relative criteria are most used since they are not sensitive to the characteristic size of u. Nevertheless, the relative criteria can be misleading when the initial start value for the iteration is very close to the solution, since an unnecessary reduction in the error measure is enforced. In such cases the absolute criteria work better. It is common to combine the absolute and relative measures of the size of the residual, as in

where ϵ rr is the tolerance in the relative criterion and ϵ ra is the tolerance in the absolute criterion. With a very good initial guess for the iteration (typically the solution of a differential equation at the previous time level), the term \(||F(u_{0})||\) is small and ϵ ra is the dominating tolerance. Otherwise, \(\epsilon_{rr}||F(u_{0})||\) and the relative criterion dominates.

With the change in solution as criterion we can formulate a combined absolute and relative measure of the change in the solution:

The ultimate termination criterion, combining the residual and the change in solution with a test on the maximum number of iterations, can be expressed as
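A combined test of this kind is easy to wrap in a small helper. The sketch below assumes the residual test \(||F(u)||\leq\epsilon_{rr}||F(u_{0})||+\epsilon_{ra}\), the analogous test \(||u-u^{-}||\leq\epsilon_{ur}||u_{0}||+\epsilon_{ua}\) on the change in solution, and the iteration limit \(k>k_{\max}\). The function name `should_stop`, its argument names, and the default tolerances are hypothetical.

```python
import numpy as np

def should_stop(F_u, F_u0, du, u0, k,
                eps_rr=1e-6, eps_ra=1e-10,
                eps_ur=1e-6, eps_ua=1e-10, k_max=100):
    """Return (stop, reason) for the combined termination criterion."""
    if np.linalg.norm(F_u) <= eps_rr*np.linalg.norm(F_u0) + eps_ra:
        return True, "residual"
    if np.linalg.norm(du) <= eps_ur*np.linalg.norm(u0) + eps_ua:
        return True, "change in solution"
    if k > k_max:
        return True, "max iterations"
    return False, ""

# Small residual relative to the initial one: the residual test triggers
print(should_stop(F_u=[1e-9, 0], F_u0=[1.0, 1.0], du=[0.1, 0.1],
                  u0=[1.0, 1.0], k=3))
```

Returning the reason along with the flag makes it possible to warn the user when the loop ended only because \(k_{\max}\) was reached.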

5.2.4 Example: A Nonlinear ODE Model from Epidemiology

A very simple model for the spreading of a disease, such as a flu, takes the form of a 2 × 2 ODE system

\[ S^{\prime}=-\beta SI,\qquad I^{\prime}=\beta SI-\nu I, \]

where S(t) is the number of people who can get ill (susceptibles) and I(t) is the number of people who are ill (infected). The constants β > 0 and ν > 0 must be given along with initial conditions \(S(0)\) and \(I(0)\).

Implicit time discretization

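A Crank-Nicolson scheme leads to a 2 × 2 system of nonlinear algebraic equations in the unknowns \(S^{n+1}\) and \(I^{n+1}\):

\[ \frac{S^{n+1}-S^{n}}{\Delta t}=-\frac{\beta}{2}(S^{n}I^{n}+S^{n+1}I^{n+1}), \]

\[ \frac{I^{n+1}-I^{n}}{\Delta t}=\frac{\beta}{2}(S^{n}I^{n}+S^{n+1}I^{n+1})-\frac{\nu}{2}(I^{n}+I^{n+1}). \]

Introducing S for \(S^{n+1}\), \(S^{(1)}\) for \(S^{n}\), I for \(I^{n+1}\) and \(I^{(1)}\) for \(I^{n}\), we can rewrite the system as

\[ F_{S}(S,I)=S-S^{(1)}+\frac{1}{2}\Delta t\beta(S^{(1)}I^{(1)}+SI)=0, \tag{5.28} \]

\[ F_{I}(S,I)=I-I^{(1)}-\frac{1}{2}\Delta t\beta(S^{(1)}I^{(1)}+SI)+\frac{1}{2}\Delta t\nu(I^{(1)}+I)=0. \tag{5.29} \]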

A Picard iteration

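We assume that we have approximations \(S^{-}\) and \(I^{-}\) to S and I, respectively. A way of linearizing the only nonlinear term SI is to write \(I^{-}S\) in the \(F_{S}=0\) equation and \(S^{-}I\) in the \(F_{I}=0\) equation, which also decouples the equations. Solving the resulting linear equations with respect to the unknowns S and I gives

\[ S=\frac{S^{(1)}-\frac{1}{2}\Delta t\beta S^{(1)}I^{(1)}}{1+\frac{1}{2}\Delta t\beta I^{-}},\qquad I=\frac{I^{(1)}+\frac{1}{2}\Delta t\beta S^{(1)}I^{(1)}-\frac{1}{2}\Delta t\nu I^{(1)}}{1-\frac{1}{2}\Delta t\beta S^{-}+\frac{1}{2}\Delta t\nu}. \]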

Before a new iteration, we must update \(S^{-}\ \leftarrow\ S\) and \(I^{-}\ \leftarrow\ I\).

Newton’s method

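The nonlinear system (5.28)–(5.29) can be written as \(F(u)=0\) with \(F=(F_{S},F_{I})\) and \(u=(S,I)\). The Jacobian becomes

\[ J=\begin{pmatrix}\frac{\partial F_{S}}{\partial S}&\frac{\partial F_{S}}{\partial I}\\ \frac{\partial F_{I}}{\partial S}&\frac{\partial F_{I}}{\partial I}\end{pmatrix}=\begin{pmatrix}1+\frac{1}{2}\Delta t\beta I&\frac{1}{2}\Delta t\beta S\\ -\frac{1}{2}\Delta t\beta I&1-\frac{1}{2}\Delta t\beta S+\frac{1}{2}\Delta t\nu\end{pmatrix}. \]

The Newton system \(J(u^{-})\delta u=-F(u^{-})\) to be solved in each iteration is then

\[ \begin{pmatrix}1+\frac{1}{2}\Delta t\beta I^{-}&\frac{1}{2}\Delta t\beta S^{-}\\ -\frac{1}{2}\Delta t\beta I^{-}&1-\frac{1}{2}\Delta t\beta S^{-}+\frac{1}{2}\Delta t\nu\end{pmatrix}\begin{pmatrix}\delta S\\ \delta I\end{pmatrix}=-\begin{pmatrix}F_{S}(S^{-},I^{-})\\ F_{I}(S^{-},I^{-})\end{pmatrix}. \]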

Remark

For this particular system of ODEs, explicit time integration methods work very well. Even a Forward Euler scheme is fine, but (as also experienced more generally) the 4-th order Runge-Kutta method is an excellent balance between high accuracy, high efficiency, and simplicity.
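To make the two iteration strategies concrete, here is a minimal sketch of one Crank-Nicolson time step solved by Picard iteration and by Newton’s method. It assumes the model \(S^{\prime}=-\beta SI\), \(I^{\prime}=\beta SI-\nu I\) and an arithmetic mean of the product SI in time; the function names, tolerances, and parameter values are hypothetical.

```python
import numpy as np

def F_SI(S, I, S1, I1, dt, beta, nu):
    """Residuals (F_S, F_I) of the Crank-Nicolson equations."""
    FS = S - S1 + 0.5*dt*beta*(S1*I1 + S*I)
    FI = I - I1 - 0.5*dt*beta*(S1*I1 + S*I) + 0.5*dt*nu*(I1 + I)
    return np.array([FS, FI])

def step_picard(S1, I1, dt, beta, nu, tol=1e-12, max_iter=100):
    S_, I_ = S1, I1                      # start from previous time level
    for _ in range(max_iter):
        # Linearize S*I as I_*S in F_S=0 and S_*I in F_I=0 (decoupled)
        S = (S1 - 0.5*dt*beta*S1*I1)/(1 + 0.5*dt*beta*I_)
        I = (I1 + 0.5*dt*beta*S1*I1 - 0.5*dt*nu*I1)/(
            1 - 0.5*dt*beta*S_ + 0.5*dt*nu)
        if abs(S - S_) + abs(I - I_) <= tol:
            break
        S_, I_ = S, I
    return S, I

def step_newton(S1, I1, dt, beta, nu, tol=1e-12, max_iter=20):
    u = np.array([S1, I1], dtype=float)
    for _ in range(max_iter):
        F = F_SI(u[0], u[1], S1, I1, dt, beta, nu)
        if np.linalg.norm(F) <= tol:
            break
        J = np.array([[1 + 0.5*dt*beta*u[1], 0.5*dt*beta*u[0]],
                      [-0.5*dt*beta*u[1],
                       1 - 0.5*dt*beta*u[0] + 0.5*dt*nu]])
        u = u + np.linalg.solve(J, -F)
    return u[0], u[1]

# Hypothetical parameters, population scaled to 1
S1, I1, dt, beta, nu = 0.99, 0.01, 0.1, 2.0, 0.5
Sp, Ip = step_picard(S1, I1, dt, beta, nu)
Sn, In = step_newton(S1, I1, dt, beta, nu)
print(Sp, Ip, Sn, In)
```

Both functions solve the same nonlinear equations, so they should agree to within the iteration tolerance.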

5.3 Linearization at the Differential Equation Level

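The attention is now turned to nonlinear partial differential equations (PDEs) and application of the techniques explained above for ODEs. The model problem is a nonlinear diffusion equation for \(u(\boldsymbol{x},t)\):

\[ \frac{\partial u}{\partial t}=\nabla\cdot(\alpha(u)\nabla u)+f(u), \tag{5.30} \]

supplemented with boundary and initial conditions.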

In the present section, our aim is to discretize this problem in time and then present techniques for linearizing the time-discrete PDE problem “at the PDE level” such that we transform the nonlinear stationary PDE problem at each time level into a sequence of linear PDE problems, which can be solved using any method for linear PDEs. This strategy avoids the solution of systems of nonlinear algebraic equations. In Sect. 5.4 we shall take the opposite (and more common) approach: discretize the nonlinear problem in time and space first, and then solve the resulting nonlinear algebraic equations at each time level by the methods of Sect. 5.2. Very often, the two approaches are mathematically identical, so there is no preference from a computational efficiency point of view. The details of the ideas sketched above will hopefully become clear through the forthcoming examples.

5.3.1 Explicit Time Integration

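The nonlinearities in the PDE are trivial to deal with if we choose an explicit time integration method for (5.30), such as the Forward Euler method:

\[ [D_{t}^{+}u=\nabla\cdot(\alpha(u)\nabla u)+f(u)]^{n}, \]

or written out,

\[ \frac{u^{n+1}-u^{n}}{\Delta t}=\nabla\cdot(\alpha(u^{n})\nabla u^{n})+f(u^{n}), \]

which is a linear equation in the unknown \(u^{n+1}\) with solution

\[ u^{n+1}=u^{n}+\Delta t\,\nabla\cdot(\alpha(u^{n})\nabla u^{n})+\Delta t\,f(u^{n}). \]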

The disadvantage with this discretization is the strict stability criterion \(\Delta t\leq h^{2}/(6\max\alpha)\) for the case f = 0 and a standard 2nd-order finite difference discretization in 3D space with mesh cell sizes \(h=\Delta x=\Delta y=\Delta z\).

5.3.2 Backward Euler Scheme and Picard Iteration

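A Backward Euler scheme for (5.30) reads

\[ [D_{t}^{-}u=\nabla\cdot(\alpha(u)\nabla u)+f(u)]^{n}. \]

Written out,

\[ \frac{u^{n}-u^{n-1}}{\Delta t}=\nabla\cdot(\alpha(u^{n})\nabla u^{n})+f(u^{n}). \tag{5.33} \]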

This is a nonlinear PDE for the unknown function \(u^{n}(\boldsymbol{x})\). Such a PDE can be viewed as a time-independent PDE where \(u^{n-1}(\boldsymbol{x})\) is a known function.

We introduce a Picard iteration with k as iteration counter. A typical linearization of the \(\nabla\cdot(\alpha(u^{n})\nabla u^{n})\) term in iteration k + 1 is to use the previously computed \(u^{n,k}\) approximation in the diffusion coefficient: \(\alpha(u^{n,k})\). The nonlinear source term is treated similarly: \(f(u^{n,k})\). The unknown function \(u^{n,k+1}\) then fulfills the linear PDE

\[ \frac{u^{n,k+1}-u^{n-1}}{\Delta t}=\nabla\cdot(\alpha(u^{n,k})\nabla u^{n,k+1})+f(u^{n,k}). \tag{5.34} \]

The initial guess for the Picard iteration at this time level can be taken as the solution at the previous time level: \(u^{n,0}=u^{n-1}\).

We can alternatively apply the implementation-friendly notation where u corresponds to the unknown we want to solve for, i.e., \(u^{n,k+1}\) above, and \(u^{-}\) is the most recently computed value, \(u^{n,k}\) above. Moreover, \(u^{(1)}\) denotes the unknown function at the previous time level, \(u^{n-1}\) above. The PDE to be solved in a Picard iteration then looks like

\[ \frac{u-u^{(1)}}{\Delta t}=\nabla\cdot(\alpha(u^{-})\nabla u)+f(u^{-}). \]

At the beginning of the iteration we start with the value from the previous time level: \(u^{-}=u^{(1)}\), and after each iteration, \(u^{-}\) is updated to u.
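The Picard loop at one time level can be sketched as follows for a 1D version of the problem. Everything specific here is an assumption made for illustration: homogeneous Dirichlet conditions at both ends, a centered finite difference in space with an arithmetic mean of α between mesh points, a dense linear solve, and the names `picard_backward_euler`, `alpha`, `f`.

```python
import numpy as np

def picard_backward_euler(u1, alpha, f, dx, dt, tol=1e-8, max_iter=100):
    """One Backward Euler step: (u - u1)/dt = (alpha(u_) u')' + f(u_)."""
    N = len(u1) - 1
    u_ = u1.copy()                         # initial guess: previous time level
    for k in range(1, max_iter + 1):
        a = alpha(u_)
        am = 0.5*(a[:-1] + a[1:])          # alpha at midpoints i+1/2
        A = np.zeros((N + 1, N + 1))
        b = np.zeros(N + 1)
        A[0, 0] = A[N, N] = 1.0            # Dirichlet rows: u = 0
        for i in range(1, N):
            A[i, i-1] = -dt/dx**2*am[i-1]
            A[i, i+1] = -dt/dx**2*am[i]
            A[i, i] = 1 + dt/dx**2*(am[i-1] + am[i])
            b[i] = u1[i] + dt*f(u_[i])
        u = np.linalg.solve(A, b)          # linear problem with lagged alpha, f
        if np.linalg.norm(u - u_) <= tol:
            return u, k
        u_ = u                             # u_ <- u
    raise RuntimeError("Picard iteration did not converge")

alpha = lambda u: 1 + u**2                 # hypothetical coefficient
f = lambda u: 0.0*u                        # no source term
x = np.linspace(0, 1, 21)
dx = x[1] - x[0]
u_prev = np.sin(np.pi*x)
u_new, iters = picard_backward_euler(u_prev, alpha, f, dx, dt=0.01)
print(iters, u_new.max())
```

A production code would store the tridiagonal matrix in a sparse or banded format; the dense matrix is used only to keep the sketch short.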

Remark on notation

The previous derivations of the numerical scheme for time discretizations of PDEs have, strictly speaking, a somewhat sloppy notation, but it is much used and convenient to read. A more precise notation must distinguish clearly between the exact solution of the PDE problem, here denoted \(u_{\text{e}}(\boldsymbol{x},t)\), and the exact solution of the spatial problem, arising after time discretization at each time level, where (5.33) is an example. The latter is here represented as \(u^{n}(\boldsymbol{x})\) and is an approximation to \(u_{\text{e}}(\boldsymbol{x},t_{n})\). Then we have another approximation \(u^{n,k}(\boldsymbol{x})\) to \(u^{n}(\boldsymbol{x})\) when solving the nonlinear PDE problem for \(u^{n}\) by iteration methods, as in (5.34).

In our notation, u is a synonym for \(u^{n,k+1}\) and \(u^{(1)}\) is a synonym for \(u^{n-1}\), inspired by what are natural variable names in a code. We will usually state the PDE problem in terms of u and quickly redefine the symbol u to mean the numerical approximation, while \(u_{\text{e}}\) is not explicitly introduced unless we need to talk about the exact solution and the approximate solution at the same time.

5.3.3 Backward Euler Scheme and Newton’s Method

At time level n, we have to solve the stationary PDE (5.33). In the previous section, we saw how this can be done with Picard iterations. Another alternative is to apply the idea of Newton’s method in a clever way. Normally, Newton’s method is defined for systems of algebraic equations, but the idea of the method can be applied at the PDE level too.

Linearization via Taylor expansions

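Let \(u^{n,k}\) be an approximation to the unknown \(u^{n}\). We seek a better approximation of the form

\[ u^{n}=u^{n,k}+\delta u. \tag{5.36} \]

The idea is to insert (5.36) in (5.33), Taylor expand the nonlinearities and keep only the terms that are linear in \(\delta u\) (which makes (5.36) an approximation for \(u^{n}\)). Then we can solve a linear PDE for the correction \(\delta u\) and use (5.36) to find a new approximation

\[ u^{n,k+1}=u^{n,k}+\delta u \]

to \(u^{n}\). Repeating this procedure gives a sequence \(u^{n,k+1}\), \(k=0,1,\ldots\) that hopefully converges to the goal \(u^{n}\).

Let us carry out all the mathematical details for the nonlinear diffusion PDE discretized by the Backward Euler method. Inserting (5.36) in (5.33) gives

\[ \frac{u^{n,k}+\delta u-u^{n-1}}{\Delta t}=\nabla\cdot(\alpha(u^{n,k}+\delta u)\nabla(u^{n,k}+\delta u))+f(u^{n,k}+\delta u). \tag{5.37} \]

We can Taylor expand \(\alpha(u^{n,k}+\delta u)\) and \(f(u^{n,k}+\delta u)\):

\[ \alpha(u^{n,k}+\delta u)=\alpha(u^{n,k})+\alpha^{\prime}(u^{n,k})\delta u+\mathcal{O}(\delta u^{2})\approx\alpha(u^{n,k})+\alpha^{\prime}(u^{n,k})\delta u, \]

\[ f(u^{n,k}+\delta u)=f(u^{n,k})+f^{\prime}(u^{n,k})\delta u+\mathcal{O}(\delta u^{2})\approx f(u^{n,k})+f^{\prime}(u^{n,k})\delta u. \]

Inserting the linear approximations of α and f in (5.37) results in

\[ \begin{aligned}\frac{u^{n,k}+\delta u-u^{n-1}}{\Delta t}&=\nabla\cdot(\alpha(u^{n,k})\nabla u^{n,k})+f(u^{n,k})\\ &\quad+\nabla\cdot(\alpha(u^{n,k})\nabla\delta u)+\nabla\cdot(\alpha^{\prime}(u^{n,k})\delta u\nabla u^{n,k})\\ &\quad+\nabla\cdot(\alpha^{\prime}(u^{n,k})\delta u\nabla\delta u)+f^{\prime}(u^{n,k})\delta u.\end{aligned} \]

The term \(\alpha^{\prime}(u^{n,k})\delta u\nabla\delta u\) is of order \(\delta u^{2}\) and therefore omitted since we expect the correction \(\delta u\) to be small (\(\delta u\gg\delta u^{2}\)). Reorganizing the equation gives a PDE for \(\delta u\) that we can write in short form as

\[ \delta F(\delta u;u^{n,k})=-F(u^{n,k}), \]

where

\[ F(u^{n,k})=\frac{u^{n,k}-u^{n-1}}{\Delta t}-\nabla\cdot(\alpha(u^{n,k})\nabla u^{n,k})-f(u^{n,k}), \]

\[ \delta F(\delta u;u^{n,k})=\frac{1}{\Delta t}\delta u-\nabla\cdot(\alpha(u^{n,k})\nabla\delta u)-\nabla\cdot(\alpha^{\prime}(u^{n,k})\delta u\nabla u^{n,k})-f^{\prime}(u^{n,k})\delta u. \]

Note that \(\delta F\) is a linear function of \(\delta u\), and F contains only terms that are known, such that the PDE for \(\delta u\) is indeed linear.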

Observations

The notational form \(\delta F=-F\) resembles the Newton system \(J\delta u=-F\) for systems of algebraic equations, with \(\delta F\) as \(J\delta u\). The unknown vector in a linear system of algebraic equations enters the system as a linear operator in terms of a matrix-vector product (\(J\delta u\)), while at the PDE level we have a linear differential operator instead (\(\delta F\)).

Similarity with Picard iteration

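We can rewrite the PDE for \(\delta u\) in a slightly different way too if we define \(u^{n,k}+\delta u\) as \(u^{n,k+1}\).

\[ \begin{aligned}\frac{u^{n,k+1}-u^{n-1}}{\Delta t}&=\nabla\cdot(\alpha(u^{n,k})\nabla u^{n,k+1})+f(u^{n,k})\\ &\quad+\nabla\cdot(\alpha^{\prime}(u^{n,k})\delta u\nabla u^{n,k})+f^{\prime}(u^{n,k})\delta u.\end{aligned} \]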

Note that the first line is the same PDE as arises in the Picard iteration, while the remaining terms arise from the differentiations that are an inherent ingredient in Newton’s method.

Implementation

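For coding we want to introduce u for \(u^{n}\), \(u^{-}\) for \(u^{n,k}\) and \(u^{(1)}\) for \(u^{n-1}\). The formulas for F and \(\delta F\) are then more clearly written as

\[ F(u^{-})=\frac{u^{-}-u^{(1)}}{\Delta t}-\nabla\cdot(\alpha(u^{-})\nabla u^{-})-f(u^{-}), \]

\[ \delta F(\delta u;u^{-})=\frac{1}{\Delta t}\delta u-\nabla\cdot(\alpha(u^{-})\nabla\delta u)-\nabla\cdot(\alpha^{\prime}(u^{-})\delta u\nabla u^{-})-f^{\prime}(u^{-})\delta u. \]

The form that orders the PDE as the Picard iteration terms plus the Newton method’s derivative terms becomes

\[ \frac{u-u^{(1)}}{\Delta t}=\nabla\cdot(\alpha(u^{-})\nabla u)+f(u^{-})+\gamma\left(\nabla\cdot(\alpha^{\prime}(u^{-})(u-u^{-})\nabla u^{-})+f^{\prime}(u^{-})(u-u^{-})\right). \]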

The Picard and full Newton versions correspond to γ = 0 and γ = 1, respectively.

Derivation with alternative notation

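Some may prefer to derive the linearized PDE for \(\delta u\) using the more compact notation. We start with inserting \(u^{n}=u^{-}+\delta u\) to get

\[ \frac{u^{-}+\delta u-u^{(1)}}{\Delta t}=\nabla\cdot(\alpha(u^{-}+\delta u)\nabla(u^{-}+\delta u))+f(u^{-}+\delta u). \]

Taylor expanding,

\[ \alpha(u^{-}+\delta u)\approx\alpha(u^{-})+\alpha^{\prime}(u^{-})\delta u,\qquad f(u^{-}+\delta u)\approx f(u^{-})+f^{\prime}(u^{-})\delta u, \]

and inserting these expressions (omitting the term that is quadratic in \(\delta u\)) gives a less cluttered PDE for \(\delta u\):

\[ \frac{u^{-}+\delta u-u^{(1)}}{\Delta t}=\nabla\cdot(\alpha(u^{-})\nabla u^{-})+f(u^{-})+\nabla\cdot(\alpha(u^{-})\nabla\delta u)+\nabla\cdot(\alpha^{\prime}(u^{-})\delta u\nabla u^{-})+f^{\prime}(u^{-})\delta u. \]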

5.3.4 Crank-Nicolson Discretization

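A Crank-Nicolson discretization of (5.30) applies a centered difference at \(t_{n+\frac{1}{2}}\):

\[ [D_{t}u=\nabla\cdot(\alpha(u)\nabla u)+f(u)]^{n+\frac{1}{2}}. \]

The standard technique is to apply an arithmetic average for quantities defined between two mesh points, e.g.,

\[ u^{n+\frac{1}{2}}\approx\frac{1}{2}(u^{n}+u^{n+1}). \]

However, with nonlinear terms we have many choices of formulating an arithmetic mean:

\[ [f(u)]^{n+\frac{1}{2}}\approx f\left(\tfrac{1}{2}(u^{n}+u^{n+1})\right), \]

\[ [f(u)]^{n+\frac{1}{2}}\approx\tfrac{1}{2}(f(u^{n})+f(u^{n+1})), \]

\[ [\alpha(u)\nabla u]^{n+\frac{1}{2}}\approx\alpha\left(\tfrac{1}{2}(u^{n}+u^{n+1})\right)\nabla\left(\tfrac{1}{2}(u^{n}+u^{n+1})\right), \]

\[ [\alpha(u)\nabla u]^{n+\frac{1}{2}}\approx\tfrac{1}{2}(\alpha(u^{n})+\alpha(u^{n+1}))\nabla\left(\tfrac{1}{2}(u^{n}+u^{n+1})\right), \]

\[ [\alpha(u)\nabla u]^{n+\frac{1}{2}}\approx\tfrac{1}{2}(\alpha(u^{n})\nabla u^{n}+\alpha(u^{n+1})\nabla u^{n+1}). \]

A big question is whether there are significant differences in accuracy between taking the products of arithmetic means or taking the arithmetic mean of products. Exercise 5.6 investigates this question, and the answer is that the approximation is \(\mathcal{O}(\Delta t^{2})\) in both cases.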
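The second-order claim is easy to check numerically on a toy case. The sketch below uses the hypothetical choices \(u(t)=e^{t}\), \(f(u)=u^{2}\) and \(t_{n}=0\), and compares \(f\) evaluated at the mean of u with the mean of the \(f\) values; it is only an illustration, not a substitute for the analysis in the exercise.

```python
import numpy as np

u = np.exp                       # hypothetical smooth solution u(t) = e^t
f = lambda v: v**2               # hypothetical nonlinearity

dts = np.array([0.1, 0.05, 0.025, 0.0125])
exact = f(u(dts/2))              # f(u) at the midpoint t = dt/2 (t_n = 0)

# Two ways of averaging between t_n = 0 and t_{n+1} = dt
f_of_mean = f(0.5*(u(0.0) + u(dts)))
mean_of_f = 0.5*(f(u(0.0)) + f(u(dts)))

E1 = np.abs(f_of_mean - exact)
E2 = np.abs(mean_of_f - exact)

# Observed convergence rates r in E = C*dt^r from consecutive experiments
r1 = np.log(E1[1:]/E1[:-1])/np.log(dts[1:]/dts[:-1])
r2 = np.log(E2[1:]/E2[:-1])/np.log(dts[1:]/dts[:-1])
print(r1, r2)
```

Both sequences of rates should approach 2 as \(\Delta t\) is reduced, although the error constants differ.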

5.4 1D Stationary Nonlinear Differential Equations

Section 5.3 presented methods for linearizing time-discrete PDEs directly prior to discretization in space. We can alternatively carry out the discretization in space of the time-discrete nonlinear PDE problem and get a system of nonlinear algebraic equations, which can be solved by Picard iteration or Newton’s method as presented in Sect. 5.2. This latter approach will now be described in detail.

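We shall work with the 1D problem

\[ -(\alpha(u)u^{\prime})^{\prime}+au=f(u),\quad x\in(0,L),\quad\alpha(u(0))u^{\prime}(0)=C,\quad u(L)=D. \tag{5.50} \]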

The problem (5.50) arises from the stationary limit of a diffusion equation,

as \(t\rightarrow\infty\) and \(\partial u/\partial t\rightarrow 0\). Alternatively, the problem (5.50) arises at each time level from implicit time discretization of (5.51). For example, a Backward Euler scheme for (5.51) leads to

Introducing u(x) for \(u^{n}(x)\), \(u^{(1)}\) for \(u^{n-1}\), and defining f(u) in (5.50) to be f(u) in (5.52) plus \(u^{n-1}/\Delta t\), gives (5.50) with \(a=1/\Delta t\).

5.4.1 Finite Difference Discretization

The nonlinearity in the differential equation (5.50) poses no more difficulty than a variable coefficient, as in the term \((\alpha(x)u^{\prime})^{\prime}\). We can therefore use a standard finite difference approach when discretizing the Laplace term with a variable coefficient:

\[ [-D_{x}\alpha D_{x}u+au=f]_{i}. \]

Writing this out for a uniform mesh with points \(x_{i}=i\Delta x\), \(i=0,\ldots,N_{x}\), leads to

\[ -\frac{1}{\Delta x^{2}}\left(\alpha_{i+\frac{1}{2}}(u_{i+1}-u_{i})-\alpha_{i-\frac{1}{2}}(u_{i}-u_{i-1})\right)+au_{i}=f(u_{i}). \tag{5.53} \]

This equation is valid at all the mesh points \(i=0,1,\ldots,N_{x}-1\). At \(i=N_{x}\) we have the Dirichlet condition \(u_{i}=D\). The only difference from the case with \((\alpha(x)u^{\prime})^{\prime}\) and f(x) is that now α and f are functions of u and not only of x: \((\alpha(u(x))u^{\prime})^{\prime}\) and \(f(u(x))\).

The quantity \(\alpha_{i+\frac{1}{2}}\), evaluated between two mesh points, needs a comment. Since α depends on u and u is only known at the mesh points, we need to express \(\alpha_{i+\frac{1}{2}}\) in terms of \(u_{i}\) and \(u_{i+1}\). For this purpose we use an arithmetic mean, although a harmonic mean is also common in this context if α features large jumps. There are two choices of arithmetic means:

\[ \alpha_{i+\frac{1}{2}}\approx\alpha\left(\tfrac{1}{2}(u_{i}+u_{i+1})\right), \tag{5.54} \]

\[ \alpha_{i+\frac{1}{2}}\approx\tfrac{1}{2}(\alpha(u_{i})+\alpha(u_{i+1})). \tag{5.55} \]

Equation (5.53) with the latter approximation then looks like

\[ -\frac{1}{2\Delta x^{2}}\left((\alpha(u_{i})+\alpha(u_{i+1}))(u_{i+1}-u_{i})-(\alpha(u_{i-1})+\alpha(u_{i}))(u_{i}-u_{i-1})\right)+au_{i}=f(u_{i}), \tag{5.56} \]

or written more compactly,

\[ [-D_{x}\overline{\alpha}^{x}D_{x}u+au=f]_{i}. \]

At mesh point i = 0 we have the boundary condition \(\alpha(u)u^{\prime}=C\), which is discretized by

\[ [\alpha(u)D_{2x}u=C]_{0}, \]

meaning

\[ \alpha(u_{0})\frac{u_{1}-u_{-1}}{2\Delta x}=C. \tag{5.57} \]

The fictitious value \(u_{-1}\) can be eliminated with the aid of (5.56) for i = 0. Formally, (5.56) should be solved with respect to \(u_{i-1}\) and that value (for i = 0) should be inserted in (5.57), but it is algebraically much easier to do it the other way around. Alternatively, one can use a ghost cell \([-\Delta x,0]\) and update the \(u_{-1}\) value in the ghost cell according to (5.57) after every Picard or Newton iteration. Such an approach means that we use a known \(u_{-1}\) value in (5.56) from the previous iteration.

5.4.2 Solution of Algebraic Equations

The structure of the equation system

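The nonlinear algebraic equations (5.56) are of the form \(A(u)u=b(u)\) with

\[ A_{i,i}=\frac{1}{2\Delta x^{2}}(\alpha(u_{i-1})+2\alpha(u_{i})+\alpha(u_{i+1}))+a, \]

\[ A_{i,i-1}=-\frac{1}{2\Delta x^{2}}(\alpha(u_{i-1})+\alpha(u_{i})), \]

\[ A_{i,i+1}=-\frac{1}{2\Delta x^{2}}(\alpha(u_{i})+\alpha(u_{i+1})), \]

\[ b_{i}=f(u_{i}). \]

The matrix A(u) is tridiagonal: \(A_{i,j}=0\) for j > i + 1 or j < i − 1.

The above expressions are valid for internal mesh points \(1\leq i\leq N_{x}-1\). For i = 0 we need to express \(u_{i-1}=u_{-1}\) in terms of \(u_{1}\) using (5.57):

\[ u_{-1}=u_{1}-\frac{2\Delta x}{\alpha(u_{0})}C. \tag{5.58} \]

This value must be inserted in \(A_{0,0}\). The expression for \(A_{i,i+1}\) applies for i = 0, and \(A_{i,i-1}\) does not enter the system when i = 0.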

Regarding the last equation, its form depends on whether we include the Dirichlet condition \(u(L)=D\), meaning \(u_{N_{x}}=D\), in the nonlinear algebraic equation system or not. Suppose we choose \((u_{0},u_{1},\ldots,u_{N_{x}-1})\) as unknowns, later referred to as systems without Dirichlet conditions. The last equation corresponds to \(i=N_{x}-1\). It involves the boundary value \(u_{N_{x}}\), which is substituted by D. If the unknown vector includes the boundary value, \((u_{0},u_{1},\ldots,u_{N_{x}})\), later referred to as system including Dirichlet conditions, the equation for \(i=N_{x}-1\) just involves the unknown \(u_{N_{x}}\), and the final equation becomes \(u_{N_{x}}=D\), corresponding to \(A_{i,i}=1\) and \(b_{i}=D\) for \(i=N_{x}\).

Picard iteration

The obvious Picard iteration scheme is to use previously computed values of \(u_{i}\) in A(u) and b(u), as described more in detail in Sect. 5.2. With the notation \(u^{-}\) for the most recently computed value of u, we have the system \(F(u)\approx\hat{F}(u)=A(u^{-})u-b(u^{-})\), with \(F=(F_{0},F_{1},\ldots,F_{m})\), \(u=(u_{0},u_{1},\ldots,u_{m})\). The index m is \(N_{x}\) if the system includes the Dirichlet condition as a separate equation and \(N_{x}-1\) otherwise. The matrix \(A(u^{-})\) is tridiagonal, so the solution procedure is to fill a tridiagonal matrix data structure and the right-hand side vector with the right numbers and call a Gaussian elimination routine for tridiagonal linear systems.
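A minimal sketch of this Picard procedure is given below for the system without the Dirichlet condition as a separate equation. It assumes the problem \(-(\alpha(u)u^{\prime})^{\prime}+au=f(u)\) on \((0,L)\) with \(\alpha(u(0))u^{\prime}(0)=C\) and \(u(L)=D\), the arithmetic mean of α values at neighboring points, and elimination of the fictitious value via \(u_{-1}=u_{1}-2\Delta x\,C/\alpha(u_{0})\). The name `picard_1d`, the dense matrix storage, and the test case are hypothetical simplifications.

```python
import numpy as np

def picard_1d(alpha, f, a, C, D, L, Nx, tol=1e-10, max_iter=200):
    dx = L/Nx
    c = 1.0/(2*dx**2)
    u_ = np.full(Nx + 1, float(D))             # initial guess
    for k in range(1, max_iter + 1):
        al = alpha(u_)
        al_m1 = alpha(u_[1] - 2*dx*C/al[0])    # alpha at the fictitious point
        A = np.zeros((Nx, Nx))                 # unknowns u_0, ..., u_{Nx-1}
        b = np.zeros(Nx)
        # i = 0: the u_{-1} term is moved into the u_1 coefficient and b_0
        A[0, 0] = c*(al_m1 + 2*al[0] + al[1]) + a
        A[0, 1] = -c*(al_m1 + 2*al[0] + al[1])
        b[0] = f(u_[0]) - (al_m1 + al[0])*C/(al[0]*dx)
        for i in range(1, Nx):
            A[i, i-1] = -c*(al[i-1] + al[i])
            A[i, i] = c*(al[i-1] + 2*al[i] + al[i+1]) + a
            b[i] = f(u_[i])
            if i + 1 < Nx:
                A[i, i+1] = -c*(al[i] + al[i+1])
            else:                              # u_{Nx} = D goes to the rhs
                b[i] += c*(al[i] + al[i+1])*D
        u = np.append(np.linalg.solve(A, b), D)
        if np.linalg.norm(u - u_) <= tol:
            return u, k
        u_ = u
    raise RuntimeError("Picard iteration did not converge")

# Hypothetical test: alpha = 1 + u^2, a = 0, f = 0 gives constant flux C,
# so the exact solution satisfies u + u^3/3 = C*(x - L) + D + D^3/3.
C, D, L, Nx = 1.0, 1.0, 1.0, 50
u, iters = picard_1d(lambda v: 1 + v**2, lambda v: 0.0*v, 0.0, C, D, L, Nx)
x = np.linspace(0, L, Nx + 1)
err = np.max(np.abs(u + u**3/3 - (C*(x - L) + D + D**3/3)))
print(iters, err)
```

In a real code the dense matrix would be replaced by a tridiagonal data structure and a tailored solver, as the text describes.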

Mesh with two cells

To understand the details, it helps to write out all the mathematics in a specific case with a small mesh, say just two cells (\(N_{x}=2\)). We use \(u^{-}_{i}\) for the i-th component in \(u^{-}\).

The starting point is the basic expressions for the nonlinear equations at mesh point i = 0 and i = 1:

Equation (5.59) written out reads

We must then replace \(u_{-1}\) by (5.58). With Picard iteration we get

where

Equation (5.60) contains the unknown \(u_{2}\) for which we have a Dirichlet condition. In case we omit the condition as a separate equation, (5.60) with Picard iteration becomes

We must now move the \(u_{2}\) term to the right-hand side and replace all occurrences of \(u_{2}\) by D:

The two equations can be written as a 2 × 2 system:

where

The system with the Dirichlet condition becomes

with

Other entries are as in the 2 × 2 system.

Newton’s method

The Jacobian must be derived in order to use Newton’s method. Here it means that we need to differentiate \(F(u)=A(u)u-b(u)\) with respect to the unknown parameters \(u_{0},u_{1},\ldots,u_{m}\) (\(m=N_{x}\) or \(m=N_{x}-1\), depending on whether the Dirichlet condition is included in the nonlinear system \(F(u)=0\) or not). Nonlinear equation number i has the structure

Computing the Jacobian requires careful differentiation. For example,

The complete Jacobian becomes

The explicit expression for nonlinear equation number i, \(F_{i}(u_{0},u_{1},\ldots)\), arises from moving the \(f(u_{i})\) term in (5.56) to the left-hand side:

At the boundary point i = 0, \(u_{-1}\) must be replaced using the formula (5.58). When the Dirichlet condition at \(i=N_{x}\) is not a part of the equation system, the last equation \(F_{m}=0\) for \(m=N_{x}-1\) involves the quantity \(u_{N_{x}}\) which must be replaced by D. If \(u_{N_{x}}\) is treated as an unknown in the system, the last equation \(F_{m}=0\) has \(m=N_{x}\) and reads

\[ F_{m}\equiv u_{m}-D=0. \]

Similar replacement of \(u_{-1}\) and \(u_{N_{x}}\) must be done in the Jacobian for the first and last row. When \(u_{N_{x}}\) is included as an unknown, the last row in the Jacobian must help implement the condition \(\delta u_{N_{x}}=0\), since we assume that u contains the right Dirichlet value at the beginning of the iteration (\(u_{N_{x}}=D\)), and then the Newton update should be zero for \(i=N_{x}\), i.e., \(\delta u_{N_{x}}=0\). This also forces the right-hand side to be \(b_{i}=0\), \(i=N_{x}\).

We have seen, and can see from the present example, that the linear system in Newton’s method contains all the terms present in the system that arises in the Picard iteration method. The extra terms in Newton’s method can be multiplied by a factor such that it is easy to program one linear system and set this factor to 0 or 1 to generate the Picard or Newton system.

5.5 Multi-Dimensional Nonlinear PDE Problems

The fundamental ideas in the derivation of \(F_{i}\) and \(J_{i,j}\) in the 1D model problem are easily generalized to multi-dimensional problems. Nevertheless, the expressions involved are slightly different, with derivatives in x replaced by \(\nabla\), so we present some examples below in detail.

5.5.1 Finite Difference Discretization

A typical diffusion equation

can be discretized by (e.g.) a Backward Euler scheme, which in 2D can be written

We do not dive into the details of handling boundary conditions now. Dirichlet and Neumann conditions are handled as in corresponding linear, variable-coefficient diffusion problems.

Writing the scheme out, putting the unknown values on the left-hand side and known values on the right-hand side, and introducing \(\Delta x=\Delta y=h\) to save some writing, one gets

This defines a nonlinear algebraic system on the form \(A(u)u=b(u)\).

Picard iteration

The most recently computed values \(u^{-}\) of \(u^{n}\) can be used in α and f for a Picard iteration, or equivalently, we solve \(A(u^{-})u=b(u^{-})\). The result is a linear system of the same type as arising from \(u_{t}=\nabla\cdot(\alpha(\boldsymbol{x})\nabla u)+f(\boldsymbol{x},t)\).

The Picard iteration scheme can also be expressed in operator notation:

Newton’s method

As always, Newton’s method is technically more involved than Picard iteration. We first define the nonlinear algebraic equations to be solved, drop the superscript n (use u for \(u^{n}\)), and introduce \(u^{(1)}\) for \(u^{n-1}\):

It is convenient to work with two indices i and j in 2D finite difference discretizations, but it complicates the derivation of the Jacobian, which then gets four indices. (Make sure you really understand the 1D version of this problem as treated in Sect. 5.4.1.) The left-hand expression of an equation \(F_{i,j}=0\) is to be differentiated with respect to each of the unknowns \(u_{r,s}\) (recall that this is short notation for \(u_{r,s}^{n}\)), \(r\in\mathcal{I}_{x}\), \(s\in\mathcal{I}_{y}\):

The Newton system to be solved in each iteration can be written as

Given i and j, only a few r and s indices give nonzero contribution to the Jacobian since \(F_{i,j}\) contains \(u_{i\pm 1,j}\), \(u_{i,j\pm 1}\), and \(u_{i,j}\). This means that \(J_{i,j,r,s}\) has nonzero contributions only if (r, s) is one of \((i\pm 1,j)\), \((i,j\pm 1)\), or \((i,j)\). The corresponding terms in \(J_{i,j,r,s}\) are \(J_{i,j,i-1,j}\), \(J_{i,j,i+1,j}\), \(J_{i,j,i,j-1}\), \(J_{i,j,i,j+1}\) and \(J_{i,j,i,j}\). Therefore, the left-hand side of the Newton system, \(\sum_{r}\sum_{s}J_{i,j,r,s}\delta u_{r,s}\) collapses to

The specific derivatives become

The \(J_{i,j,i,j}\) entry has a few more terms and is left as an exercise. Inserting the most recent approximation \(u^{-}\) for u in the J and F formulas and then forming \(J\delta u=-F\) gives the linear system to be solved in each Newton iteration. Boundary conditions will affect the formulas when any of the indices coincide with a boundary value of an index.

5.5.2 Continuation Methods

Picard iteration or Newton’s method may diverge when solving PDEs with severe nonlinearities. Relaxation with ω < 1 may help, but in highly nonlinear problems it can be necessary to introduce a continuation parameter Λ in the problem: Λ = 0 gives a version of the problem that is easy to solve, while Λ = 1 is the target problem. The idea is then to increase Λ in steps, \(0=\Lambda_{0}<\Lambda_{1}<\cdots<\Lambda_{n}=1\), and use the solution from the problem with \(\Lambda_{i-1}\) as initial guess for the iterations in the problem corresponding to \(\Lambda_{i}\).

The continuation method is easiest to understand through an example. Suppose we intend to solve

\[ -\nabla\cdot\left(||\nabla u||^{q}\nabla u\right)=f, \]

which is an equation modeling the flow of a non-Newtonian fluid through a channel or pipe. For q = 0 we have the Poisson equation (corresponding to a Newtonian fluid) and the problem is linear. A typical value for pseudo-plastic fluids may be \(q_{n}=-0.8\). We can introduce the continuation parameter \(\Lambda\in[0,1]\) such that \(q=q_{n}\Lambda\). Let \(\{\Lambda_{\ell}\}_{\ell=0}^{n}\) be the sequence of Λ values in \([0,1]\), with corresponding q values \(\{q_{\ell}\}_{\ell=0}^{n}\). We can then solve a sequence of problems

\[ -\nabla\cdot\left(||\nabla u^{\ell}||^{q_{\ell}}\nabla u^{\ell}\right)=f,\quad\ell=0,\ldots,n, \]

where the initial guess for iterating on \(u^{\ell}\) is the previously computed solution \(u^{\ell-1}\). If a particular \(\Lambda_{\ell}\) leads to convergence problems, one may try a smaller increase in Λ: \(\Lambda_{*}=\frac{1}{2}(\Lambda_{\ell-1}+\Lambda_{\ell})\), and repeat halving the step in Λ until convergence is reestablished.
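The control logic of a continuation method, including the step halving, can be illustrated on a scalar toy problem. The example below is not the non-Newtonian flow problem: it uses the hypothetical equation \(F(u;\Lambda)=\arctan(u-5\Lambda)=0\), for which Newton’s method started at u = 0 diverges when Λ = 1 is attacked directly, but converges when Λ is increased gradually. All names and parameter values are assumptions made for the sketch.

```python
import math

def newton(F, dF, u0, tol=1e-12, max_iter=20):
    """Plain Newton iteration; returns (u, converged)."""
    u = u0
    for _ in range(max_iter):
        Fu = F(u)
        if abs(Fu) <= tol:
            return u, True
        d = dF(u)
        if d == 0.0 or not math.isfinite(u):   # iteration has blown up
            return u, False
        u = u - Fu/d
    return u, False

def continuation(F, dF, u0, step=0.5, min_step=1e-3):
    """Increase Lambda from 0 to 1, halving the step when Newton fails."""
    lam, u, history = 0.0, u0, [0.0]
    while lam < 1.0:
        target = min(lam + step, 1.0)
        u_new, ok = newton(lambda v: F(v, target),
                           lambda v: dF(v, target), u)
        if ok:                                 # accept, reuse as next guess
            lam, u = target, u_new
            history.append(lam)
        else:                                  # try a smaller increase
            step /= 2
            if step < min_step:
                raise RuntimeError("continuation failed")
    return u, history

F = lambda u, lam: math.atan(u - 5*lam)
dF = lambda u, lam: 1.0/(1.0 + (u - 5*lam)*(u - 5*lam))

u_direct, ok_direct = newton(lambda v: F(v, 1.0), lambda v: dF(v, 1.0), 0.0)
u_cont, lambdas = continuation(F, dF, 0.0)
print(ok_direct, u_cont, lambdas)
```

For a PDE problem, `newton` would be replaced by the Picard or Newton solver for the discretized equations, while the outer loop stays the same.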

5.6 Operator Splitting Methods

Operator splitting is a natural and old idea. When a PDE or system of PDEs contains different terms expressing different physics, it is natural to use different numerical methods for different physical processes. This can optimize and simplify the overall solution process. The idea was especially popularized in the context of the Navier-Stokes equations and reaction-diffusion PDEs. Common names for the technique are operator splitting, fractional step methods, and split-step methods. We shall stick to the first name, operator splitting. In the context of nonlinear differential equations, operator splitting can be used to isolate nonlinear terms and simplify the solution methods.

A related technique, often known as dimensional splitting or alternating direction implicit (ADI) methods, is to split the spatial dimensions and solve a 2D or 3D problem as two or three consecutive 1D problems, but this type of splitting is not to be further considered here.

5.6.1 Ordinary Operator Splitting for ODEs

Consider first an ODE where the right-hand side is split into two terms:

\[ u^{\prime}=f_{0}(u)+f_{1}(u). \]

In case \(f_{0}\) and \(f_{1}\) are linear functions of u, \(f_{0}=au\) and \(f_{1}=bu\), we have \(u(t)=Ie^{(a+b)t}\), if \(u(0)=I\). When going one time step of length \(\Delta t\) from \(t_{n}\) to \(t_{n+1}\), we have

\[ u(t_{n+1})=u(t_{n})e^{(a+b)\Delta t}. \]

This expression can also be written as

\[ u(t_{n+1})=u(t_{n})e^{a\Delta t}e^{b\Delta t}, \]

or

\[ u^{*}=u(t_{n})e^{a\Delta t}, \tag{5.72} \]

\[ u(t_{n+1})=u^{*}e^{b\Delta t}. \tag{5.73} \]

The first step (5.72) means solving \(u^{\prime}=f_{0}\) over a time interval \(\Delta t\) with \(u(t_{n})\) as start value. The second step (5.73) means solving \(u^{\prime}=f_{1}\) over a time interval \(\Delta t\) with the value at the end of the first step as start value. That is, we progress the solution in two steps and solve two ODEs \(u^{\prime}=f_{0}\) and \(u^{\prime}=f_{1}\). The order of the equations is not important. From the derivation above we see that solving \(u^{\prime}=f_{1}\) prior to \(u^{\prime}=f_{0}\) can equally well be done.

The technique is exact if the ODEs are linear. For nonlinear ODEs it is only an approximate method with error \(\mathcal{O}(\Delta t)\). The technique can be extended to an arbitrary number of steps; i.e., we may split the differential equation system into any number of subsystems. Examples will illuminate this principle.

5.6.2 Strang Splitting for ODEs

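The accuracy of the splitting method in Sect. 5.6.1 can be improved from \(\mathcal{O}(\Delta t)\) to \(\mathcal{O}(\Delta t^{2})\) using so-called Strang splitting, where we take half a step with the \(f_{0}\) operator, a full step with the \(f_{1}\) operator, and finally another half step with the \(f_{0}\) operator. During a time interval \(\Delta t\) the algorithm can be written as follows.

\[ \frac{du^{*}}{dt}=f_{0}(u^{*}),\quad u^{*}(t_{n})=u(t_{n}),\quad t\in[t_{n},t_{n}+\tfrac{1}{2}\Delta t], \]

\[ \frac{du^{***}}{dt}=f_{1}(u^{***}),\quad u^{***}(t_{n})=u^{*}(t_{n+\frac{1}{2}}),\quad t\in[t_{n},t_{n}+\Delta t], \]

\[ \frac{du^{**}}{dt}=f_{0}(u^{**}),\quad u^{**}(t_{n+\frac{1}{2}})=u^{***}(t_{n+1}),\quad t\in[t_{n}+\tfrac{1}{2}\Delta t,t_{n}+\Delta t]. \]

The global solution is set as \(u(t_{n+1})=u^{**}(t_{n+1})\).

There is no use in combining higher-order methods with ordinary splitting since the error due to splitting is \(\mathcal{O}(\Delta t)\), but for Strang splitting it makes sense to use schemes of order \(\mathcal{O}(\Delta t^{2})\).

With the notation introduced for Strang splitting, we may express ordinary first-order splitting as

\[ \frac{du^{*}}{dt}=f_{0}(u^{*}),\quad u^{*}(t_{n})=u(t_{n}),\quad t\in[t_{n},t_{n}+\Delta t], \]

\[ \frac{du^{**}}{dt}=f_{1}(u^{**}),\quad u^{**}(t_{n})=u^{*}(t_{n+1}),\quad t\in[t_{n},t_{n}+\Delta t], \]

with global solution set as \(u(t_{n+1})=u^{**}(t_{n+1})\).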
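The orders of the two splitting strategies can be observed numerically when each sub-problem is solved exactly, so that the splitting is the only error source. The sketch below assumes the hypothetical split \(f_{0}(u)=u\), \(f_{1}(u)=-u^{2}\) of the logistic equation \(u^{\prime}=u(1-u)\), \(u(0)=0.1\), whose sub-problems have the exact solutions \(ue^{\Delta t}\) and \(u/(1+u\Delta t)\) over one step. Function names are made up for the illustration.

```python
import numpy as np

def flow_f0(u, dt):            # exact solution of u' = u over a step dt
    return u*np.exp(dt)

def flow_f1(u, dt):            # exact solution of u' = -u**2 over a step dt
    return u/(1 + u*dt)

def ordinary_step(u, dt):      # full f0 step followed by full f1 step
    return flow_f1(flow_f0(u, dt), dt)

def strang_step(u, dt):        # half f0 step, full f1 step, half f0 step
    return flow_f0(flow_f1(flow_f0(u, dt/2), dt), dt/2)

def error_at_T(step, dt, T=1.0, u0=0.1):
    u = u0
    for _ in range(int(round(T/dt))):
        u = step(u, dt)
    return abs(u - 1/(9*np.exp(-T) + 1))   # exact logistic solution at T

dts = [0.1, 0.05, 0.025]
E_ord = [error_at_T(ordinary_step, dt) for dt in dts]
E_str = [error_at_T(strang_step, dt) for dt in dts]
r_ord = np.log(E_ord[-1]/E_ord[-2])/np.log(dts[-1]/dts[-2])
r_strang = np.log(E_str[-1]/E_str[-2])/np.log(dts[-1]/dts[-2])
print(r_ord, r_strang)
```

The estimated rates should come out close to 1 for ordinary splitting and close to 2 for Strang splitting.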

5.6.3 Example: Logistic Growth

Let us split the (scaled) logistic equation

\[ u^{\prime}=u(1-u),\quad u(0)=0.1, \]

with solution \(u=(9e^{-t}+1)^{-1}\), into

\[ u^{\prime}=f_{0}(u)+f_{1}(u),\quad f_{0}(u)=u,\quad f_{1}(u)=-u^{2}. \]

We solve \(u^{\prime}=f_{0}(u)\) and \(u^{\prime}=f_{1}(u)\) by a Forward Euler step. In addition, we add a method where we solve \(u^{\prime}=f_{0}(u)\) analytically, since the equation is actually \(u^{\prime}=u\) with solution \(u(t)=u(0)e^{t}\). The software that accompanies the following methods is the file split_logistic.py.

Splitting techniques

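Ordinary splitting takes a Forward Euler step for each of the ODEs according to

\[ \frac{u^{*,n+1}-u^{n}}{\Delta t}=f_{0}(u^{n}),\qquad\frac{u^{**,n+1}-u^{*,n+1}}{\Delta t}=f_{1}(u^{*,n+1}), \]

with \(u(t_{n+1})=u^{**,n+1}\).

Strang splitting takes the form

\[ \frac{u^{*,n+\frac{1}{2}}-u^{n}}{\frac{1}{2}\Delta t}=f_{0}(u^{n}),\qquad\frac{u^{***,n+1}-u^{*,n+\frac{1}{2}}}{\Delta t}=f_{1}(u^{*,n+\frac{1}{2}}),\qquad\frac{u^{**,n+1}-u^{***,n+1}}{\frac{1}{2}\Delta t}=f_{0}(u^{***,n+1}). \]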

Verbose implementation

The following function computes four solutions arising from the Forward Euler method, ordinary splitting, Strang splitting, as well as Strang splitting with exact treatment of \(u^{\prime}=f_{0}(u)\):

Compact implementation

We have used quite many lines for the steps in the splitting methods. Many will prefer to condense the code a bit, as done here:

Results

Figure 5.3 shows that the impact of splitting is significant. Interestingly, however, the Forward Euler method applied to the entire problem directly is much more accurate than any of the splitting schemes. We also see that Strang splitting is definitely more accurate than ordinary splitting and that it helps a bit to use an exact solution of \(u^{\prime}=f_{0}(u)\). With a large time step (\(\Delta t=0.2\), left plot in Fig. 5.3), the asymptotic values are off by 20–30 %. A more reasonable time step (\(\Delta t=0.05\), right plot in Fig. 5.3) gives better results, but still the asymptotic values are up to 10 % wrong.

As a technique for solving nonlinear ODEs, we realize that the present case study is not particularly promising, as the Forward Euler method both linearizes the original problem and provides a solution that is much more accurate than any of the splitting techniques. In complicated multi-physics settings, on the other hand, splitting may be the only feasible way to go, and sometimes you really need to apply different numerics to different parts of a PDE problem. But in very simple problems, like the logistic ODE, splitting is just an inferior technique. Still, the logistic ODE is ideal for introducing all the mathematical details and for investigating the behavior.
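The four methods compared in this section can be sketched compactly as follows. This is not the code from split_logistic.py; it is a minimal illustration that assumes the split \(f_{0}(u)=u\), \(f_{1}(u)=-u^{2}\) with \(u(0)=0.1\), and the function name `solve_logistic` and the returned dictionary are hypothetical.

```python
import numpy as np

def solve_logistic(dt, T, u0=0.1):
    """Forward Euler, ordinary splitting, and two Strang variants."""
    f = lambda u: u*(1 - u)
    f0 = lambda u: u
    f1 = lambda u: -u**2
    Nt = int(round(T/dt))
    t = np.linspace(0, Nt*dt, Nt + 1)
    u = {name: np.full(Nt + 1, u0) for name in
         ("FE", "split", "strang", "strang_exact")}
    for n in range(Nt):
        # Forward Euler on the entire problem
        u["FE"][n+1] = u["FE"][n] + dt*f(u["FE"][n])
        # Ordinary splitting: FE step for f0, then FE step for f1
        us = u["split"][n] + dt*f0(u["split"][n])
        u["split"][n+1] = us + dt*f1(us)
        # Strang splitting with FE: half f0, full f1, half f0
        us = u["strang"][n] + dt/2*f0(u["strang"][n])
        uss = us + dt*f1(us)
        u["strang"][n+1] = uss + dt/2*f0(uss)
        # Strang splitting with exact solution of u' = f0(u) = u
        us = u["strang_exact"][n]*np.exp(dt/2)
        uss = us + dt*f1(us)
        u["strang_exact"][n+1] = uss*np.exp(dt/2)
    return t, u

t, u = solve_logistic(dt=0.2, T=8)
u_e = 1/(9*np.exp(-t[-1]) + 1)
errors = {name: abs(values[-1] - u_e) for name, values in u.items()}
print(errors)
```

With \(\Delta t=0.2\), the end-time errors should be ordered as described above: Forward Euler smallest, then Strang splitting with exact \(f_{0}\) step, Strang splitting with Forward Euler, and ordinary splitting largest.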

5.6.4 Reaction-Diffusion Equation

Consider a diffusion equation coupled to chemical reactions modeled by a nonlinear term f(u):

\[ \frac{\partial u}{\partial t}=\alpha\nabla^{2}u+f(u). \]

This is a physical process composed of two individual processes: u is the concentration of a substance that is locally generated by a chemical reaction f(u), while u is spreading in space because of diffusion. There are obviously two time scales: one for the chemical reaction and one for diffusion. Typically, fast chemical reactions require much finer time stepping than slower diffusion processes. It could therefore be advantageous to split the two physical effects in separate models and use different numerical methods for the two.

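A natural splitting in the present case is

\[ \frac{\partial u^{*}}{\partial t}=\alpha\nabla^{2}u^{*}, \tag{5.79} \]

\[ \frac{\partial u^{**}}{\partial t}=f(u^{**}). \tag{5.80} \]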

Looking at these familiar problems, we may apply a θ rule (implicit) scheme for (5.79) over one time step and avoid dealing with nonlinearities by applying an explicit scheme for (5.80) over the same time step.

Suppose we have some solution u at time level \(t_{n}\). For flexibility, we define a θ method for the diffusion part (5.79) by

\[ [D_{t}u^{*}=\alpha(D_{x}D_{x}u^{*}+D_{y}D_{y}u^{*})]^{n+\theta}. \]

We use \(u^{n}\) as initial condition for \(u^{*}\).

The reaction part, which is defined at each mesh point (without coupling values in different mesh points), can employ any scheme for an ODE. Here we use an Adams-Bashforth method of order 2. Recall that the overall accuracy of the splitting method is maximum \(\mathcal{O}(\Delta t^{2})\) for Strang splitting, otherwise it is just \(\mathcal{O}(\Delta t)\). Higher-order methods for ODEs will therefore be a waste of work. The 2nd-order Adams-Bashforth method reads

\[ u^{**,n+1}_{i,j}=u^{**,n}_{i,j}+\frac{1}{2}\Delta t\left(3f(u^{**,n}_{i,j})-f(u^{**,n-1}_{i,j})\right). \]

We can use a Forward Euler step to start the method, i.e., compute \(u^{**,1}_{i,j}\).

The algorithm goes like this:

1. Solve the diffusion problem for one time step as usual.

2. Solve the reaction ODEs at each mesh point in \([t_{n},t_{n}+\Delta t]\), using the diffusion solution in 1. as initial condition. The solution of the ODEs constitutes the solution of the original problem at the end of each time step.

We may use a much smaller time step when solving the reaction part, adapted to the dynamics of the problem \(u^{\prime}=f(u)\). This gives great flexibility in splitting methods.

5.6.5 Example: Reaction-Diffusion with Linear Reaction Term

The methods above may be explored in detail through a specific computational example in which we compute the convergence rates associated with four different solution approaches for the reaction-diffusion equation with a linear reaction term, i.e. \(f(u)=-bu\). The methods comprise solving without splitting (just straight Forward Euler), ordinary splitting, first order Strang splitting, and second order Strang splitting. In all four methods, a standard centered difference approximation is used for the spatial second derivative. The methods share the error model \(E=Ch^{r}\), while differing in the step h (being either \(\Delta x^{2}\) or \(\Delta x\)) and the convergence rate r (being either 1 or 2).

All code commented below is found in the file split_diffu_react.py . When executed, a function convergence_rates is called, from which all convergence rate computations are handled:

Now, with respect to the error (\(E=Ch^{r}\)), the Forward Euler scheme, the ordinary splitting scheme and first order Strang splitting scheme are all first order (r = 1), with a step \(h=\Delta x^{2}=K^{-1}\Delta t\), where K is some constant. This implies that the ratio \(\frac{\Delta t}{\Delta x^{2}}\) must be held constant during convergence rate calculations. Furthermore, the Fourier number \(F=\frac{\alpha\Delta t}{\Delta x^{2}}\) is bounded above by F = 0.5, being the stability limit with explicit schemes. Thus, in these cases, we use the fixed value of F and a given (but changing) spatial resolution \(\Delta x\) to compute the corresponding value of \(\Delta t\) according to the expression for F. This assures that \(\frac{\Delta t}{\Delta x^{2}}\) is kept constant. The loop in convergence_rates runs over a chosen set of grid points (Nx_values) which gives a doubling of spatial resolution with each iteration (\(\Delta x\) is halved).

For the second order Strang splitting scheme, we have r = 2 and a step \(h=\Delta x=K^{-1}\Delta t\), where K again is some constant. In this case, it is thus the ratio \(\frac{\Delta t}{\Delta x}\) that must be held constant during the convergence rate calculations. From the expression for F, it is clear then that F must change with each halving of \(\Delta x\). In fact, since \(\frac{\Delta t}{\Delta x}=\frac{F\Delta x}{\alpha}\), the ratio \(\frac{\Delta t}{\Delta x}\) stays constant if F is doubled each time \(\Delta x\) is halved. This is utilized in our code.
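The two ways of choosing \(\Delta t\) can be checked with a few lines of Python. This is a minimal sketch under assumed values: the helper time_step, the starting value of F, the coefficient a (standing in for α), and the list Nx_values are all hypothetical and only mimic the idea described above.

```python
def time_step(F, a, dx):
    """Solve F = a*dt/dx**2 for dt."""
    return F * dx**2 / a

a = 1.0                          # diffusion coefficient (assumed value)
L = 1.0                          # domain length (assumed value)
Nx_values = [10, 20, 40, 80]     # hypothetical sequence, dx halved each time

# First order methods: F is fixed, so dt/dx**2 stays constant
ratios_fixed_F = []
for Nx in Nx_values:
    dx = L / Nx
    dt = time_step(0.5, a, dx)
    ratios_fixed_F.append(dt / dx**2)

# Second order Strang splitting: F is doubled per halving of dx,
# so dt/dx stays constant
ratios_doubled_F = []
F = 0.5                          # assumed starting value
for Nx in Nx_values:
    dx = L / Nx
    dt = time_step(F, a, dx)
    ratios_doubled_F.append(dt / dx)
    F *= 2

print(ratios_fixed_F)
print(ratios_doubled_F)
```

Note that doubling F quickly takes it past 0.5, which is only acceptable when the diffusion step is solved by an implicit scheme, as in the second order Strang variant that uses Crank-Nicolson.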

A solver diffusion_theta is used in each of the four solution approaches:

For the no splitting approach with Forward Euler in time, this solver handles both the diffusion and the reaction term. When splitting, diffusion_theta takes care of the diffusion term only, while the reaction term is handled either by a Forward Euler scheme in reaction_FE, or by a second order Adams-Bashforth scheme from Odespy. The reaction_FE function covers one complete time step dt during ordinary splitting, while Strang splitting (both first and second order) applies it with dt/2 twice during each time step dt. Since the reaction term typically represents a much faster process than the diffusion term, a further refinement of the time step is made possible in reaction_FE. It was implemented as
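The idea of refining the time step inside the reaction solver can be sketched as follows. This is not the reaction_FE function from split_diffu_react.py. It is a simplified stand-in with assumed names (reaction_fe_substeps, n_sub), an f that depends on u only, and plain Python lists to keep it dependency-free.

```python
def reaction_fe_substeps(u, f, dt, n_sub):
    """Advance u' = f(u) over one interval dt with n_sub Forward Euler sub-steps."""
    dt_local = dt / n_sub
    u = list(u)                       # work on a copy of the input values
    for _ in range(n_sub):
        u = [ui + dt_local * f(ui) for ui in u]
    return u

b = 2.0                               # assumed decay coefficient in f(u) = -b*u
f = lambda u: -b * u
u0 = [1.0, 0.5]
dt = 0.1

coarse = reaction_fe_substeps(u0, f, dt, 1)    # one step of length dt
fine = reaction_fe_substeps(u0, f, dt, 10)     # ten steps of length dt/10
print(coarse[0], fine[0])
```

For this linear f the exact solution after dt is \(u_{0}e^{-b\,dt}\), and the sub-stepped result lies closer to it than the single-step result.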

With the ordinary splitting approach, each time step dt is covered twice: first to compute the impact of the reaction term, then to compute the contribution from the diffusion term.
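A minimal sketch of one ordinary splitting step, assuming the linear reaction term \(f(u)=-bu\), Forward Euler in both stages, and homogeneous Dirichlet conditions. All function names and parameter values are hypothetical, and a stands in for α.

```python
import math

def reaction_step(u, b, dt):
    """Forward Euler for u' = -b*u over dt, applied pointwise."""
    return [ui - dt * b * ui for ui in u]

def diffusion_step(u, F):
    """Forward Euler for u_t = a*u_xx with u = 0 at both ends; F = a*dt/dx**2."""
    new = [0.0] * len(u)
    for i in range(1, len(u) - 1):
        new[i] = u[i] + F * (u[i+1] - 2*u[i] + u[i-1])
    return new

def ordinary_splitting_step(u, a, b, dx, dt):
    u_star = reaction_step(u, b, dt)               # stage 1: reaction over dt
    return diffusion_step(u_star, a * dt / dx**2)  # stage 2: diffusion over dt

# Hypothetical test problem: one sine wave on [0, L]
Nx, L, a, b = 20, 1.0, 1.0, 1.0
dx = L / Nx
dt = 0.4 * dx**2 / a                  # F = 0.4, below the stability limit
k = math.pi / L
u0 = [math.sin(k * i * dx) for i in range(Nx + 1)]
u1 = ordinary_splitting_step(u0, a, b, dx, dt)
```

For a sine wave the step multiplies every interior value by \((1-b\Delta t)(1-4F\sin^{2}(k\Delta x/2))\), which gives a convenient check of the implementation.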

For the two Strang splitting approaches, each time step dt is handled by first computing the reaction step for (the first) dt/2, followed by a diffusion step dt, before the reaction step is treated once again for (the remaining) dt/2. Since first order Strang splitting is no better than first order accurate, both the reaction and diffusion steps are computed explicitly. The solver was implemented as
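The reaction, diffusion, reaction sequence can be sketched as below. This is an illustration under assumptions (explicit Forward Euler in every stage, homogeneous Dirichlet conditions, hypothetical names and parameter values, a standing in for α), not the solver from split_diffu_react.py.

```python
import math

def reaction_fe(u, f, dt):
    """One Forward Euler step for u' = f(u), applied pointwise."""
    return [ui + dt * f(ui) for ui in u]

def diffusion_fe(u, F):
    """One Forward Euler step for u_t = a*u_xx with u = 0 at both ends."""
    interior = [u[i] + F * (u[i+1] - 2*u[i] + u[i-1])
                for i in range(1, len(u) - 1)]
    return [0.0] + interior + [0.0]

def strang_step(u, f, a, dx, dt):
    u = reaction_fe(u, f, dt / 2)          # reaction over the first dt/2
    u = diffusion_fe(u, a * dt / dx**2)    # diffusion over the full dt
    return reaction_fe(u, f, dt / 2)       # reaction over the remaining dt/2

# Hypothetical test problem with f(u) = -b*u
a, b, L, Nx = 1.0, 1.0, 1.0, 20
dx = L / Nx
dt = 0.4 * dx**2 / a
u0 = [math.sin(math.pi * i * dx / L) for i in range(Nx + 1)]
u1 = strang_step(u0, lambda u: -b * u, a, dx, dt)
```

With these explicit stages, one step scales a sine wave by \((1-b\Delta t/2)^{2}(1-4F\sin^{2}(k\Delta x/2))\).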

The second order version of the Strang splitting approach utilizes a second order Adams-Bashforth solver for the reaction part and a Crank-Nicolson scheme for the diffusion part. The solver has the same structure as the one for first order Strang splitting and was implemented as

When executing split_diffu_react.py, we find that the estimated convergence rates are as expected. The second order Strang splitting gives the least error (about 4e−5) and has second order convergence (r = 2), while the remaining three approaches have first order convergence (r = 1).

5.6.6 Analysis of the Splitting Method

Let us address a linear PDE problem for which we can develop analytical solutions of the discrete equations, with and without splitting, and discuss these. Choosing \(f(u)=-\beta u\) for a constant β gives a linear problem. We use the Forward Euler method for both the PDE and ODE problems.

We seek a 1D Fourier wave component solution of the problem, assuming homogeneous Dirichlet conditions at x = 0 and x = L:

This component fits the 1D PDE problem (f = 0). In complex form we can write

where \(i=\sqrt{-1}\) and the imaginary part is taken as the physical solution.

We refer to Sect. 3.3 and to the book [9] for a discussion of exact numerical solutions to diffusion and decay problems, respectively. The key idea is to search for solutions \(A^{n}e^{ikx}\) and determine A. For the diffusion problem solved by a Forward Euler method one has

where \(F=\alpha\Delta t/\Delta x^{2}\) is the mesh Fourier number and \(p=k\Delta x/2\) is a dimensionless number reflecting the spatial resolution (number of points per wave length in space). For the decay problem \(u^{\prime}=-\beta u\), we have A = 1 − q, where q is a dimensionless parameter for the resolution in the decay problem: \(q=\beta\Delta t\).

The original model problem can also be discretized by a Forward Euler scheme,

Assuming \(A^{n}e^{ikx}\) we find that

We are particularly interested in what happens at one time step. That is,

In the two stage algorithm, we first compute the diffusion step

Then we use this as input to the decay algorithm and arrive at

The splitting approximation over one step is therefore
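The chain of formulas in this analysis can be written out as follows. This is a reconstruction from the definitions of F, p, and q given above, assuming a Forward Euler discretization with a centered difference in space. The subscripts on A are notation introduced here for illustration.

```latex
\begin{align*}
u(x,t) &= e^{-\alpha k^{2}t}\sin(kx), \qquad
u(x,t) = e^{-\alpha k^{2}t+ikx} \quad\text{(complex form)},\\
A_{\text{diff}} &= 1-4F\sin^{2}p, \qquad A_{\text{decay}} = 1-q,\\
u_{i}^{n+1} &= u_{i}^{n}+F\left(u_{i+1}^{n}-2u_{i}^{n}+u_{i-1}^{n}\right)-q\,u_{i}^{n}
\;\Rightarrow\; A = 1-4F\sin^{2}p-q,\\
A_{\text{split}} &= (1-q)\left(1-4F\sin^{2}p\right)
= 1-4F\sin^{2}p-q+4qF\sin^{2}p,\\
A-A_{\text{split}} &= -4qF\sin^{2}p .
\end{align*}
```

Since \(q=\beta\Delta t\) and \(F=\alpha\Delta t/\Delta x^{2}\), the difference between the two one-step amplification factors is proportional to \(\Delta t^{2}\) for a fixed \(\Delta x\).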

5.7 Exercises

Problem 5.1 (Determine if equations are nonlinear or not)

Classify each term in the following equations as linear or nonlinear. Assume that u, \(\boldsymbol{u}\), and p are unknown functions and that all other symbols are known quantities.

1. \(mu^{\prime\prime}+\beta|u^{\prime}|u^{\prime}+cu=F(t)\)

2. \(u_{t}=\alpha u_{xx}\)

3. \(u_{tt}=c^{2}\nabla^{2}u\)

4. \(u_{t}=\nabla\cdot(\alpha(u)\nabla u)+f(x,y)\)

5. \(u_{t}+f(u)_{x}=0\)

6. \(\boldsymbol{u}_{t}+\boldsymbol{u}\cdot\nabla\boldsymbol{u}=-\nabla p+r\nabla^{2}\boldsymbol{u}\), \(\nabla\cdot\boldsymbol{u}=0\) (\(\boldsymbol{u}\) is a vector field)

7. \(u^{\prime}=f(u,t)\)

8. \(\nabla^{2}u=\lambda e^{u}\)

Filename: nonlinear_vs_linear.

Problem 5.2 (Derive and investigate a generalized logistic model)

The logistic model for population growth is derived by assuming a nonlinear growth rate,

and the logistic model arises from the simplest possible choice of a(u): \(a(u)=\varrho(1-u/M)\), where M is the maximum value of u that the environment can sustain, and \(\varrho\) is the growth rate under unlimited access to resources (as in the beginning when u is small). The idea is that \(a(u)\sim\varrho\) when u is small and that \(a(u)\rightarrow 0\) as \(u\rightarrow M\).

An a(u) that generalizes the linear choice is the polynomial form

where p > 0 is some real number.

a) Formulate a Forward Euler, Backward Euler, and a Crank-Nicolson scheme for (5.82).

Hint

Use a geometric mean approximation in the Crank-Nicolson scheme: \([a(u)u]^{n+1/2}\approx a(u^{n})u^{n+1}\).

b) Formulate Picard and Newton iteration for the Backward Euler scheme in a).

c) Implement the numerical solution methods from a) and b). Use logistic.py to compare the case p = 1 and the choice (5.83).

d) Implement unit tests that check the asymptotic limit of the solutions: \(u\rightarrow M\) as \(t\rightarrow\infty\).

Hint

You need to experiment to find what "infinite time" is (it increases substantially with p) and what the appropriate tolerance is for testing the asymptotic limit.

e) Perform experiments with Newton and Picard iteration for the model (5.83). See how sensitive the number of iterations is to \(\Delta t\) and p.

Filename: logistic_p.

Problem 5.3 (Experience the behavior of Newton’s method)

The program Newton_demo.py illustrates graphically each step in Newton’s method and is run like

Use this program to investigate potential problems with Newton's method when solving \(e^{-0.5x^{2}}\cos(\pi x)=0\). Try the starting points \(x_{0}=0.8\) and \(x_{0}=0.85\) and observe how differently the method behaves. Just run

and repeat with 0.85 replaced by 0.8.

Exercise 5.4 (Compute the Jacobian of a 2 × 2 system)

Write up the system (5.18)–(5.19) in the form \(F(u)=0\), \(F=(F_{0},F_{1})\), \(u=(u_{0},u_{1})\), and compute the Jacobian \(J_{i,j}=\partial F_{i}/\partial u_{j}\).

Problem 5.5 (Solve nonlinear equations arising from a vibration ODE)

Consider a nonlinear vibration problem

where m > 0 is a constant, b ≥ 0 is a constant, s(u) a possibly nonlinear function of u, and F(t) is a prescribed function. Such models arise from Newton’s second law of motion in mechanical vibration problems where s(u) is a spring or restoring force, \(mu^{\prime\prime}\) is mass times acceleration, and \(bu^{\prime}|u^{\prime}|\) models water or air drag.

a) Rewrite the equation for u as a system of two first-order ODEs, and discretize this system by a Crank-Nicolson (centered difference) method. With \(v=u^{\prime}\), we get a nonlinear term \(v^{n+\frac{1}{2}}|v^{n+\frac{1}{2}}|\). Use a geometric average for \(v^{n+\frac{1}{2}}\).

b) Formulate a Picard iteration method to solve the system of nonlinear algebraic equations.

c) Explain how to apply Newton's method to solve the nonlinear equations at each time level. Derive expressions for the Jacobian and the right-hand side in each Newton iteration.

Filename: nonlin_vib.

Exercise 5.6 (Find the truncation error of arithmetic mean of products)

In Sect. 5.3.4 we introduce alternative arithmetic means of a product. Say the product is \(P(t)Q(t)\) evaluated at \(t=t_{n+\frac{1}{2}}\). The exact value is

There are two obvious candidates for evaluating \([PQ]^{n+\frac{1}{2}}\) as a mean of values of P and Q at \(t_{n}\) and \(t_{n+1}\). Either we can take the arithmetic mean of each factor P and Q,

or we can take the arithmetic mean of the product PQ:

The arithmetic average approximates \(P(t_{n+\frac{1}{2}})\) with an error of \(\mathcal{O}(\Delta t^{2})\):

A fundamental question is whether (5.85) and (5.86) have different orders of accuracy in \(\Delta t=t_{n+1}-t_{n}\). To investigate this question, expand quantities at \(t_{n+1}\) and \(t_{n}\) in Taylor series around \(t_{n+\frac{1}{2}}\), and subtract the true value \([PQ]^{n+\frac{1}{2}}\) from the approximations (5.85) and (5.86) to see what the orders of the error terms are.

Hint

You may explore sympy for carrying out the tedious calculations. A general Taylor series expansion of \(P(t+\frac{1}{2}\Delta t)\) around t involving just a general function P(t) can be created as follows:

The error of the arithmetic mean, \(\frac{1}{2}(P(-\frac{1}{2}\Delta t)+P(\frac{1}{2}\Delta t))\), for t = 0 is then

Use these examples to investigate the error of (5.85) and (5.86) for n = 0. (Choosing n = 0 is necessary for not making the expressions too complicated for sympy, but there is of course no lack of generality by using n = 0 rather than an arbitrary n - the main point is the product and addition of Taylor series.)
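One way to set up such a calculation in sympy is sketched below. It is an assumption-laden variant, not necessarily the session the hint refers to: instead of expanding a general sympy Function, it builds a truncated Taylor polynomial by hand, with the hypothetical symbols P0, P1, P2, P3 standing for P and its first three derivatives at t = 0.

```python
import sympy as sp

dt = sp.symbols('dt')
# P0, P1, P2, P3 represent P(0), P'(0), P''(0), P'''(0) (assumed names)
P0, P1, P2, P3 = sp.symbols('P0 P1 P2 P3')

def taylor_P(t):
    """Taylor polynomial of P around t = 0, truncated after the cubic term."""
    return P0 + P1*t + P2*t**2/2 + P3*t**3/6

# Arithmetic mean of P at -dt/2 and dt/2, compared with the value at t = 0
mean = sp.Rational(1, 2)*(taylor_P(-dt/2) + taylor_P(dt/2))
error = sp.expand(mean - P0)
print(error)
```

The odd-order terms cancel, so the leading error term is proportional to \(\Delta t^{2}\). The same building blocks (two truncated polynomials, one for P and one for Q) can be multiplied and averaged to study the two product means.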

Filename: product_arith_mean.

Problem 5.7 (Newton’s method for linear problems)

Suppose we have a linear system \(F(u)=Au-b=0\). Apply Newton’s method to this system, and show that the method converges in one iteration.

Filename: Newton_linear.

Problem 5.8 (Discretize a 1D problem with a nonlinear coefficient)

We consider the problem

Discretize (5.87) by a centered finite difference method on a uniform mesh.

Filename: nonlin_1D_coeff_discretize.

Problem 5.9 (Linearize a 1D problem with a nonlinear coefficient)

We have a two-point boundary value problem

a) Construct a Picard iteration method for (5.88) without discretizing in space.

b) Apply Newton's method to (5.88) without discretizing in space.

c) Discretize (5.88) by a centered finite difference scheme. Construct a Picard method for the resulting system of nonlinear algebraic equations.

d) Discretize (5.88) by a centered finite difference scheme. Define the system of nonlinear algebraic equations, calculate the Jacobian, and set up Newton's method for solving the system.

Filename: nonlin_1D_coeff_linearize.

Problem 5.10 (Finite differences for the 1D Bratu problem)

We address the so-called Bratu problem

where λ is a given parameter and u is a function of x. This is a widely used model problem for studying numerical methods for nonlinear differential equations. The problem (5.89) has an exact solution

where θ solves

There are two solutions of (5.89) for \(0<\lambda<\lambda_{c}\) and no solution for \(\lambda>\lambda_{c}\). For \(\lambda=\lambda_{c}\) there is one unique solution. The critical value \(\lambda_{c}\) solves

A numerical value is \(\lambda_{c}=3.513830719\).

a) Discretize (5.89) by a centered finite difference method.

b) Set up the nonlinear equations \(F_{i}(u_{0},u_{1},\ldots,u_{N_{x}})=0\) from a). Calculate the associated Jacobian.

c) Implement a solver that can compute u(x) using Newton's method. Plot the error as a function of x in each iteration.

d) Investigate whether Newton's method gives second-order convergence by computing \(||u_{\text{e}}-u||/||u_{\text{e}}-u^{-}||^{2}\) in each iteration, where u is the solution in the current iteration and \(u^{-}\) is the solution in the previous iteration.

Filename: nonlin_1D_Bratu_fd.

Problem 5.11 (Discretize a nonlinear 1D heat conduction PDE by finite differences)

We address the 1D heat conduction PDE

for \(x\in[0,L]\), where \(\varrho\) is the density of the solid material, c(T) is the heat capacity, T is the temperature, and k(T) is the heat conduction coefficient. \(T(x,0)=I(x)\), and ends are subject to a cooling law:

where h(T) is a heat transfer coefficient and \(T_{s}\) is the given surrounding temperature.

a) Discretize this PDE in time using either a Backward Euler or Crank-Nicolson scheme.

b) Formulate a Picard iteration method for the time-discrete problem (i.e., an iteration method before discretizing in space).

c) Formulate a Newton method for the time-discrete problem in b).

-

d)