Abstract

A very wide range of physical processes lead to wave motion, where signals are propagated through a medium in space and time, normally with little or no permanent movement of the medium itself. The shape of the signals may undergo changes as they travel through matter, but usually not so much that the signals cannot be recognized at some later point in space and time. Many types of wave motion can be described by the equation \(u_{tt}=\nabla\cdot(c^{2}\nabla u)+f\), which we will solve in the forthcoming text by finite difference methods.


2.1 Simulation of Waves on a String

We begin our study of wave equations by simulating one-dimensional waves on a string, say on a guitar or violin. Let the string in the undeformed state coincide with the interval \([0,L]\) on the x axis, and let \(u(x,t)\) be the displacement at time t in the y direction of a point initially at x. The displacement function u is governed by the mathematical model

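\[\frac{\partial^{2}u}{\partial t^{2}}=c^{2}\frac{\partial^{2}u}{\partial x^{2}},\quad x\in(0,L),\ t\in(0,T],\tag{2.1}\]
\[u(x,0)=I(x),\quad x\in[0,L],\tag{2.2}\]
\[\frac{\partial}{\partial t}u(x,0)=0,\quad x\in[0,L],\tag{2.3}\]
\[u(0,t)=0,\quad t\in(0,T],\tag{2.4}\]
\[u(L,t)=0,\quad t\in(0,T].\tag{2.5}\]

The constant c and the function I(x) must be prescribed.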

Equation (2.1) is known as the one-dimensional wave equation. Since this PDE contains a second-order derivative in time, we need two initial conditions. The condition (2.2) specifies the initial shape of the string, I(x), and (2.3) expresses that the initial velocity of the string is zero. In addition, PDEs need boundary conditions, given here as (2.4) and (2.5). These two conditions specify that the string is fixed at the ends, i.e., that the displacement u is zero.

The solution \(u(x,t)\) varies in space and time and describes waves that move with velocity c to the left and right.

Sometimes we will use a more compact notation for the partial derivatives to save space:

\[u_{t}=\frac{\partial u}{\partial t},\quad u_{tt}=\frac{\partial^{2}u}{\partial t^{2}},\]

and similar expressions for derivatives with respect to other variables. Then the wave equation can be written compactly as \(u_{tt}=c^{2}u_{xx}\).

The PDE problem (2.1)–(2.5) will now be discretized in space and time by a finite difference method.

2.1.1 Discretizing the Domain

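The temporal domain \([0,T]\) is represented by a finite number of mesh points

\[0=t_{0} < t_{1} < t_{2} < \cdots < t_{N_{t}-1} < t_{N_{t}}=T.\]

Similarly, the spatial domain \([0,L]\) is replaced by a set of mesh points

\[0=x_{0} < x_{1} < x_{2} < \cdots < x_{N_{x}-1} < x_{N_{x}}=L.\]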

One may view the mesh as two-dimensional in the x,t plane, consisting of points \((x_{i},t_{n})\), with \(i=0,\ldots,N_{x}\) and \(n=0,\ldots,N_{t}\).

Uniform meshes

For uniformly distributed mesh points we can introduce the constant mesh spacings \(\Delta t\) and \(\Delta x\). We have that

\[x_{i}=i\Delta x,\ i=0,\ldots,N_{x},\quad t_{n}=n\Delta t,\ n=0,\ldots,N_{t}.\]

We also have that \(\Delta x=x_{i}-x_{i-1}\), \(i=1,\ldots,N_{x}\), and \(\Delta t=t_{n}-t_{n-1}\), \(n=1,\ldots,N_{t}\). Figure 2.1 displays a mesh in the x,t plane with \(N_{t}=5\), \(N_{x}=5\), and constant mesh spacings.

2.1.2 The Discrete Solution

The solution \(u(x,t)\) is sought at the mesh points. We introduce the mesh function \(u_{i}^{n}\), which approximates the exact solution at the mesh point \((x_{i},t_{n})\) for \(i=0,\ldots,N_{x}\) and \(n=0,\ldots,N_{t}\). Using the finite difference method, we shall develop algebraic equations for computing the mesh function.

2.1.3 Fulfilling the Equation at the Mesh Points

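In the finite difference method, we relax the condition that (2.1) holds at all points in the space-time domain \((0,L)\times(0,T]\) to the requirement that the PDE is fulfilled at the interior mesh points only:

\[\frac{\partial^{2}}{\partial t^{2}}u(x_{i},t_{n})=c^{2}\frac{\partial^{2}}{\partial x^{2}}u(x_{i},t_{n}),\tag{2.10}\]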

for \(i=1,\ldots,N_{x}-1\) and \(n=1,\ldots,N_{t}-1\). For n = 0 we have the initial conditions \(u=I(x)\) and \(u_{t}=0\), and at the boundaries \(i=0,N_{x}\) we have the boundary condition u = 0.

2.1.4 Replacing Derivatives by Finite Differences

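The second-order derivatives can be replaced by central differences. The most widely used difference approximation of the second-order derivative is

\[\frac{\partial^{2}}{\partial t^{2}}u(x_{i},t_{n})\approx\frac{u_{i}^{n+1}-2u_{i}^{n}+u^{n-1}_{i}}{\Delta t^{2}}.\]

It is convenient to introduce the finite difference operator notation

\[[D_{t}D_{t}u]^{n}_{i}=\frac{u_{i}^{n+1}-2u_{i}^{n}+u^{n-1}_{i}}{\Delta t^{2}}.\]

A similar approximation of the second-order derivative in the x direction reads

\[\frac{\partial^{2}}{\partial x^{2}}u(x_{i},t_{n})\approx\frac{u_{i+1}^{n}-2u_{i}^{n}+u^{n}_{i-1}}{\Delta x^{2}}=[D_{x}D_{x}u]^{n}_{i}.\]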

Algebraic version of the PDE

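We can now replace the derivatives in (2.10) and get

\[\frac{u_{i}^{n+1}-2u_{i}^{n}+u^{n-1}_{i}}{\Delta t^{2}}=c^{2}\frac{u_{i+1}^{n}-2u_{i}^{n}+u^{n}_{i-1}}{\Delta x^{2}},\tag{2.11}\]

or written more compactly using the operator notation:

\[[D_{t}D_{t}u=c^{2}D_{x}D_{x}u]^{n}_{i}.\tag{2.12}\]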

Interpretation of the equation as a stencil

A characteristic feature of (2.11) is that it involves u values from neighboring points only: \(u_{i}^{n+1}\), \(u^{n}_{i\pm 1}\), \(u^{n}_{i}\), and \(u^{n-1}_{i}\). The circles in Fig. 2.1 illustrate such neighboring mesh points that contribute to an algebraic equation. In this particular case, we have sampled the PDE at the point \((2,2)\) and constructed (2.11), which then involves a coupling of \(u_{1}^{2}\), \(u_{2}^{3}\), \(u_{2}^{2}\), \(u_{2}^{1}\), and \(u_{3}^{2}\). The term stencil is often used about the algebraic equation at a mesh point, and the geometry of a typical stencil is illustrated in Fig. 2.1. One also often refers to the algebraic equations as discrete equations, (finite) difference equations or a finite difference scheme.

Algebraic version of the initial conditions

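We also need to replace the derivative in the initial condition (2.3) by a finite difference approximation. A centered difference of the type

\[[D_{2t}u]^{0}_{i}=\frac{u_{i}^{1}-u_{i}^{-1}}{2\Delta t}=0\]

seems appropriate. Writing out this equation and ordering the terms gives

\[u_{i}^{-1}=u_{i}^{1},\quad i=0,\ldots,N_{x}.\tag{2.13}\]

The other initial condition can be computed by

\[u_{i}^{0}=I(x_{i}),\quad i=0,\ldots,N_{x}.\]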

2.1.5 Formulating a Recursive Algorithm

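We assume that \(u^{n}_{i}\) and \(u^{n-1}_{i}\) are available for \(i=0,\ldots,N_{x}\). The only unknown quantity in (2.11) is therefore \(u^{n+1}_{i}\), which we now can solve for:

\[u^{n+1}_{i}=-u^{n-1}_{i}+2u^{n}_{i}+C^{2}\left(u^{n}_{i+1}-2u^{n}_{i}+u^{n}_{i-1}\right).\tag{2.14}\]

We have here introduced the parameter

\[C=c\frac{\Delta t}{\Delta x},\tag{2.15}\]

known as the Courant number.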

C is the key parameter in the discrete wave equation

We see that the discrete version of the PDE features only one parameter, C, which is therefore the key parameter, together with \(N_{x}\), that governs the quality of the numerical solution (see Sect. 2.10 for details). Both the primary physical parameter c and the numerical parameters \(\Delta x\) and \(\Delta t\) are lumped together in C. Note that C is a dimensionless parameter.

Given that \(u^{n-1}_{i}\) and \(u^{n}_{i}\) are known for \(i=0,\ldots,N_{x}\), we find new values at the next time level by applying the formula (2.14) for \(i=1,\ldots,N_{x}-1\). Figure 2.1 illustrates the points that are used to compute \(u^{3}_{2}\). For the boundary points, i = 0 and \(i=N_{x}\), we apply the boundary conditions \(u_{i}^{n+1}=0\).

Even though sound reasoning leads up to (2.14), there is still a minor challenge with it that needs to be resolved. Think of the very first computational step to be made. The scheme (2.14) is supposed to start at n = 1, which means that we compute \(u^{2}\) from \(u^{1}\) and \(u^{0}\). Unfortunately, we do not know the value of \(u^{1}\), so how to proceed? A standard procedure in such cases is to apply (2.14) also for n = 0. This immediately seems strange, since it involves \(u^{-1}_{i}\), which is an undefined quantity outside the time mesh (and the time domain). However, we can use the initial condition (2.13) in combination with (2.14) when n = 0 to eliminate \(u^{-1}_{i}\) and arrive at a special formula for \(u_{i}^{1}\):

\[u_{i}^{1}=u^{0}_{i}+\frac{1}{2}C^{2}\left(u^{0}_{i+1}-2u^{0}_{i}+u^{0}_{i-1}\right).\tag{2.16}\]

Figure 2.2 illustrates how (2.16) connects four instead of five points: \(u^{1}_{2}\), \(u_{1}^{0}\), \(u_{2}^{0}\), and \(u_{3}^{0}\).

We can now summarize the computational algorithm:

1. Compute \(u^{0}_{i}=I(x_{i})\) for \(i=0,\ldots,N_{x}\).

2. Compute \(u^{1}_{i}\) by (2.16) for \(i=1,2,\ldots,N_{x}-1\) and set \(u_{i}^{1}=0\) for the boundary points given by i = 0 and \(i=N_{x}\).

3. For each time level \(n=1,2,\ldots,N_{t}-1\):

   a) apply (2.14) to find \(u^{n+1}_{i}\) for \(i=1,\ldots,N_{x}-1\),

   b) set \(u^{n+1}_{i}=0\) for the boundary points having i = 0, \(i=N_{x}\).

The algorithm essentially consists of moving a finite difference stencil through all the mesh points, which can be seen as an animation in a web page or a movie file.

2.1.6 Sketch of an Implementation

The algorithm only involves the three most recent time levels, so we need only three arrays for \(u_{i}^{n+1}\), \(u_{i}^{n}\), and \(u_{i}^{n-1}\), \(i=0,\ldots,N_{x}\). Storing all the solutions in a two-dimensional array of size \((N_{x}+1)\times(N_{t}+1)\) would be possible in this simple one-dimensional PDE problem, but is normally out of the question in three-dimensional (3D) and large two-dimensional (2D) problems. We shall therefore, in all our PDE solving programs, have the unknown in memory at as few time levels as possible.

In a Python implementation of this algorithm, we use the array elements u[i] to store \(u^{n+1}_{i}\), u_n[i] to store \(u^{n}_{i}\), and u_nm1[i] to store \(u^{n-1}_{i}\).

The following Python snippet realizes the steps in the computational algorithm.
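A minimal sketch of these steps is given here, assuming NumPy arrays for the three time levels. Wrapping the steps in a function, the name simulate_string, and the choice of computing \(\Delta t\) from \(N_{x}\) and C are assumptions made for this illustration only.

```python
import numpy as np

def simulate_string(I, c, L, Nx, C, T):
    """Sketch: solve u_tt = c^2 u_xx with fixed ends and zero initial velocity."""
    x = np.linspace(0, L, Nx + 1)        # mesh points in space
    dx = x[1] - x[0]
    dt = C*dx/c                          # time step implied by the Courant number
    Nt = int(round(T/dt))                # number of steps (T is hit approximately)
    t = np.linspace(0, Nt*dt, Nt + 1)    # mesh points in time
    C2 = C**2                            # help variable in the scheme

    u = np.zeros(Nx + 1)                 # u^{n+1}
    u_n = np.zeros(Nx + 1)               # u^{n}
    u_nm1 = np.zeros(Nx + 1)             # u^{n-1}

    # Step 1: initial condition u(x,0) = I(x)
    for i in range(0, Nx + 1):
        u_n[i] = I(x[i])

    # Step 2: special formula for the first time level (u_t = 0 at t = 0)
    for i in range(1, Nx):
        u[i] = u_n[i] + 0.5*C2*(u_n[i+1] - 2*u_n[i] + u_n[i-1])
    u[0] = 0; u[Nx] = 0                  # boundary conditions

    # Switch variables before the next step
    u_nm1[:] = u_n
    u_n[:] = u

    # Step 3: general formula at all later time levels
    for n in range(1, Nt):
        for i in range(1, Nx):
            u[i] = -u_nm1[i] + 2*u_n[i] + \
                   C2*(u_n[i+1] - 2*u_n[i] + u_n[i-1])
        u[0] = 0; u[Nx] = 0              # boundary conditions
        u_nm1[:] = u_n
        u_n[:] = u

    return u_n, x, t                     # u_n holds the last computed level
```

A call such as simulate_string(lambda x: np.sin(np.pi*x), c=1.0, L=1.0, Nx=20, C=1.0, T=1.0) returns the solution at the final time level together with the two meshes.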

2.2 Verification

Before implementing the algorithm, it is convenient to add a source term to the PDE (2.1), since that gives us more freedom in finding test problems for verification. Physically, a source term acts as a generator for waves in the interior of the domain.

2.2.1 A Slightly Generalized Model Problem

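We now address the following extended initial-boundary value problem for one-dimensional wave phenomena:

\[u_{tt}=c^{2}u_{xx}+f(x,t),\quad x\in(0,L),\ t\in(0,T],\tag{2.17}\]
\[u(x,0)=I(x),\quad x\in[0,L],\tag{2.18}\]
\[u_{t}(x,0)=V(x),\quad x\in[0,L],\tag{2.19}\]
\[u(0,t)=0,\quad t>0,\tag{2.20}\]
\[u(L,t)=0,\quad t>0.\tag{2.21}\]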

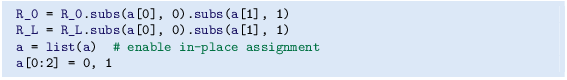

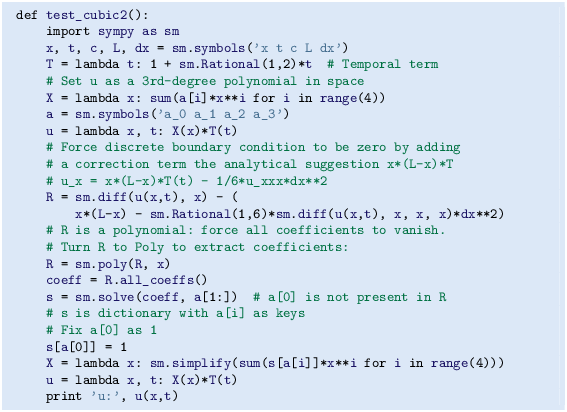

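Sampling the PDE at \((x_{i},t_{n})\) and using the same finite difference approximations as above yields

\[[D_{t}D_{t}u=c^{2}D_{x}D_{x}u+f]^{n}_{i}.\]

Writing this out and solving for the unknown \(u^{n+1}_{i}\) results in

\[u^{n+1}_{i}=-u^{n-1}_{i}+2u^{n}_{i}+C^{2}\left(u^{n}_{i+1}-2u^{n}_{i}+u^{n}_{i-1}\right)+\Delta t^{2}f^{n}_{i}.\tag{2.23}\]

The equation for the first time step must be rederived. The discretization of the initial condition \(u_{t}=V(x)\) at t = 0 becomes

\[[D_{2t}u=V]^{0}_{i}\quad\Rightarrow\quad u^{-1}_{i}=u^{1}_{i}-2\Delta tV_{i},\]

which, when inserted in (2.23) for n = 0, gives the special formula

\[u^{1}_{i}=u^{0}_{i}+\Delta tV_{i}+\frac{1}{2}C^{2}\left(u^{0}_{i+1}-2u^{0}_{i}+u^{0}_{i-1}\right)+\frac{1}{2}\Delta t^{2}f^{0}_{i}.\]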

2.2.2 Using an Analytical Solution of Physical Significance

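Many wave problems feature sinusoidal oscillations in time and space. For example, the original PDE problem (2.1)–(2.5) allows an exact solution

\[u_{\mbox{\footnotesize e}}(x,t)=A\sin\left(\frac{\pi}{L}x\right)\cos\left(\frac{\pi}{L}ct\right).\tag{2.25}\]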

This \(u_{\mbox{\footnotesize e}}\) fulfills the PDE with f = 0, boundary conditions \(u_{\mbox{\footnotesize e}}(0,t)=u_{\mbox{\footnotesize e}}(L,t)=0\), as well as initial conditions \(I(x)=A\sin\left(\frac{\pi}{L}x\right)\) and V = 0.

How to use exact solutions for verification

It is common to use such exact solutions of physical interest to verify implementations. However, the numerical solution \(u^{n}_{i}\) will only be an approximation to \(u_{\mbox{\footnotesize e}}(x_{i},t_{n})\). We have no knowledge of the precise size of the error in this approximation, and therefore we can never know if discrepancies between \(u^{n}_{i}\) and \(u_{\mbox{\footnotesize e}}(x_{i},t_{n})\) are caused by mathematical approximations or programming errors. In particular, if plots of the computed solution \(u^{n}_{i}\) and the exact one (2.25) look similar, many are tempted to claim that the implementation works. However, even if color plots look nice and the accuracy is “deemed good”, there can still be serious programming errors present!

The only way to use exact physical solutions like (2.25) for serious and thorough verification is to run a series of simulations on finer and finer meshes, measure the integrated error in each mesh, and from this information estimate the empirical convergence rate of the method.

An introduction to the computing of convergence rates is given in Section 3.1.6 in [9]. There is also a detailed example on computing convergence rates in Sect. 1.2.2.

In the present problem, one expects the method to have a convergence rate of 2 (see Sect. 2.10), so if the computed rates are close to 2 on a sufficiently fine mesh, we have good evidence that the implementation is free of programming mistakes.

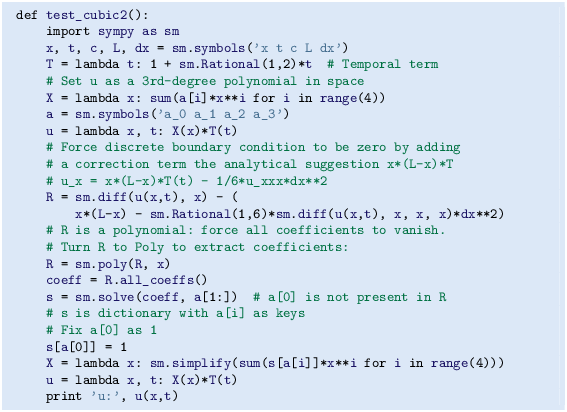

2.2.3 Manufactured Solution and Estimation of Convergence Rates

Specifying the solution and computing corresponding data

One problem with the exact solution (2.25) is that it requires a simplification (V = 0, f = 0) of the implemented problem (2.17)–(2.21). An advantage of using a manufactured solution is that we can test all terms in the PDE problem. The idea of this approach is to set up some chosen solution and fit the source term, boundary conditions, and initial conditions to be compatible with the chosen solution. Given that our boundary conditions in the implementation are \(u(0,t)=u(L,t)=0\), we must choose a solution that fulfills these conditions. One example is

\[u_{\mbox{\footnotesize e}}(x,t)=x(L-x)\sin t.\]

Inserted in the PDE \(u_{tt}=c^{2}u_{xx}+f\) we get

\[-x(L-x)\sin t=-2c^{2}\sin t+f\quad\Rightarrow\quad f=(2c^{2}-x(L-x))\sin t.\]

The initial conditions become

\[u(x,0)=I(x)=0,\quad u_{t}(x,0)=V(x)=x(L-x).\]

Defining a single discretization parameter

To verify the code, we compute the convergence rates in a series of simulations, letting each simulation use a finer mesh than the previous one. Such empirical estimation of convergence rates relies on an assumption that some measure E of the numerical error is related to the discretization parameters through

\[E=C_{t}\Delta t^{r}+C_{x}\Delta x^{p},\]

where \(C_{t}\), \(C_{x}\), r, and p are constants. The constants r and p are known as the convergence rates in time and space, respectively. From the accuracy in the finite difference approximations, we expect r = p = 2, since the error terms are of order \(\Delta t^{2}\) and \(\Delta x^{2}\). This is confirmed by truncation error analysis and other types of analysis.

By using an exact solution of the PDE problem, we will next compute the error measure E on a sequence of refined meshes and see if the rates r = p = 2 are obtained. We will not be concerned with estimating the constants \(C_{t}\) and \(C_{x}\), simply because we are not interested in their values.

It is advantageous to introduce a single discretization parameter \(h=\Delta t=\hat{c}\Delta x\) for some constant \(\hat{c}\). Since \(\Delta t\) and \(\Delta x\) are related through the Courant number, \(\Delta t=C\Delta x/c\), we set \(h=\Delta t\), and then \(\Delta x=hc/C\). Now the expression for the error measure is greatly simplified (taking r = p):

\[E=C_{t}\Delta t^{r}+C_{x}\Delta x^{r}=C_{t}h^{r}+C_{x}\left(\frac{c}{C}\right)^{r}h^{r}=Dh^{r},\quad D=C_{t}+C_{x}\left(\frac{c}{C}\right)^{r}.\]

Computing errors

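We choose an initial discretization parameter \(h_{0}\) and run experiments with decreasing h: \(h_{i}=2^{-i}h_{0}\), \(i=1,2,\ldots,m\). Halving h in each experiment is not necessary, but it is a common choice. For each experiment we must record E and h. Standard choices of error measure are the \(\ell^{2}\) and \(\ell^{\infty}\) norms of the error mesh function \(e^{n}_{i}\):

\[\|e^{n}_{i}\|_{\ell^{2}}=\left(\Delta t\Delta x\sum_{n=0}^{N_{t}}\sum_{i=0}^{N_{x}}(e^{n}_{i})^{2}\right)^{\frac{1}{2}},\quad e^{n}_{i}=u_{\mbox{\footnotesize e}}(x_{i},t_{n})-u^{n}_{i},\]
\[\|e^{n}_{i}\|_{\ell^{\infty}}=\max_{i,n}|e^{n}_{i}|.\]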

In Python, one can compute \(\sum_{i}(e^{n}_{i})^{2}\) at each time step and accumulate the value in some sum variable, say e2_sum. At the final time step one can do sqrt(dt*dx*e2_sum). For the \(\ell^{\infty}\) norm one must compare the maximum error at a time level (e.max()) with the global maximum over the time domain: e_max = max(e_max, e.max()).

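An alternative error measure is to use a spatial norm at one time step only, e.g., the end time T (\(n=N_{t}\)):

\[\|e^{n}\|_{\ell^{2}}=\left(\Delta x\sum_{i=0}^{N_{x}}(e^{n}_{i})^{2}\right)^{\frac{1}{2}},\quad\|e^{n}\|_{\ell^{\infty}}=\max_{0\leq i\leq N_{x}}|e^{n}_{i}|.\]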

The important point is that the error measure (E) for the simulation is represented by a single number.
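The accumulation of the two space-time norms can be sketched as a small callable object that is invoked once per time level. The class name ErrorNorms and its method names are assumptions made for this illustration; only the variables e2_sum and e_max follow the description above.

```python
import numpy as np

class ErrorNorms:
    """Accumulate l2 and l-infinity norms of the error mesh function e^n_i."""

    def __init__(self, u_exact):
        self.u_exact = u_exact   # callable u_exact(x, t)
        self.e2_sum = 0.0        # running sum of (e^n_i)^2 over all i and n
        self.e_max = 0.0         # running maximum of |e^n_i| over all i and n

    def __call__(self, u, x, t, n):
        # Error at time level n for all spatial mesh points
        e = self.u_exact(x, t[n]) - u
        self.e2_sum += np.sum(e**2)
        self.e_max = max(self.e_max, np.abs(e).max())

    def l2(self, dt, dx):
        """Return the l2 norm; call this after the final time level."""
        return np.sqrt(dt*dx*self.e2_sum)
```

Because the object has the call signature (u, x, t, n), it can be invoked at every time level of a simulation, and either l2(dt, dx) or e_max then serves as the single number E.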

Computing rates

Let \(E_{i}\) be the error measure in experiment (mesh) number i (not to be confused with the spatial index i) and let \(h_{i}\) be the corresponding discretization parameter (h). With the error model \(E_{i}=Dh_{i}^{r}\), we can estimate r by comparing two consecutive experiments:

\[E_{i+1}=Dh_{i+1}^{r},\quad E_{i}=Dh_{i}^{r}.\]

Dividing the two equations eliminates the (uninteresting) constant D. Thereafter, solving for r yields

\[r=\frac{\ln(E_{i+1}/E_{i})}{\ln(h_{i+1}/h_{i})}.\]

Since r depends on i, i.e., which simulations we compare, we add an index to r: \(r_{i}\), where \(i=0,\ldots,m-2\), if we have m experiments: \((h_{0},E_{0}),\ldots,(h_{m-1},E_{m-1})\).

In our present discretization of the wave equation we expect r = 2, and hence the \(r_{i}\) values should converge to 2 as i increases.

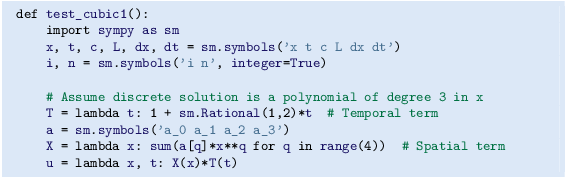

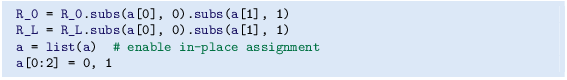

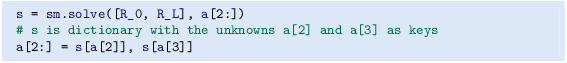

2.2.4 Constructing an Exact Solution of the Discrete Equations

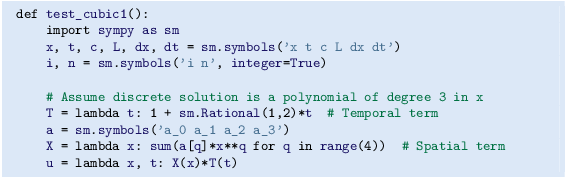

With a manufactured or known analytical solution, as outlined above, we can estimate convergence rates and see if they have the correct asymptotic behavior. Experience shows that this is a quite good verification technique in that many common bugs will destroy the convergence rates. A significantly better test though, would be to check that the numerical solution is exactly what it should be. This will in general require exact knowledge of the numerical error, which we do not normally have (although we in Sect. 2.10 establish such knowledge in simple cases). However, it is possible to look for solutions where we can show that the numerical error vanishes, i.e., the solution of the original continuous PDE problem is also a solution of the discrete equations. This property often arises if the exact solution of the PDE is a lower-order polynomial. (Truncation error analysis leads to error measures that involve derivatives of the exact solution. In the present problem, the truncation error involves 4th-order derivatives of u in space and time. Choosing u as a polynomial of degree three or less will therefore lead to vanishing error.)

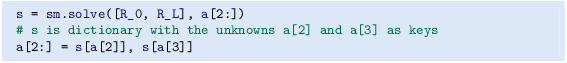

We shall now illustrate the construction of an exact solution to both the PDE itself and the discrete equations. Our chosen manufactured solution is quadratic in space and linear in time. More specifically, we set

\[u_{\mbox{\footnotesize e}}(x,t)=x(L-x)\left(1+\frac{1}{2}t\right),\]

which by insertion in the PDE leads to \(f(x,t)=2(1+{\frac{1}{2}}t)c^{2}\). This \(u_{\mbox{\footnotesize e}}\) fulfills the boundary conditions u = 0 and demands \(I(x)=x(L-x)\) and \(V(x)={\frac{1}{2}}x(L-x)\).

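To realize that the chosen \(u_{\mbox{\footnotesize e}}\) is also an exact solution of the discrete equations, we first remind ourselves that \(t_{n}=n\Delta t\) so that

\[[D_{t}D_{t}t^{2}]^{n}=\frac{t_{n+1}^{2}-2t_{n}^{2}+t_{n-1}^{2}}{\Delta t^{2}}=(n+1)^{2}-2n^{2}+(n-1)^{2}=2,\]
\[[D_{t}D_{t}t]^{n}=\frac{t_{n+1}-2t_{n}+t_{n-1}}{\Delta t^{2}}=\frac{((n+1)-2n+(n-1))\Delta t}{\Delta t^{2}}=0.\]

Hence,

\[[D_{t}D_{t}u_{\mbox{\footnotesize e}}]^{n}_{i}=x_{i}(L-x_{i})\left[D_{t}D_{t}\left(1+\frac{1}{2}t\right)\right]^{n}=x_{i}(L-x_{i})\frac{1}{2}[D_{t}D_{t}t]^{n}=0.\]

Similarly, we get that

\[[D_{x}D_{x}u_{\mbox{\footnotesize e}}]^{n}_{i}=\left(1+\frac{1}{2}t_{n}\right)[D_{x}D_{x}(xL-x^{2})]_{i}=\left(1+\frac{1}{2}t_{n}\right)[LD_{x}D_{x}x-D_{x}D_{x}x^{2}]_{i}=-2\left(1+\frac{1}{2}t_{n}\right).\]

Now, \(f^{n}_{i}=2(1+{\frac{1}{2}}t_{n})c^{2}\), which results in

\[[D_{t}D_{t}u_{\mbox{\footnotesize e}}-c^{2}D_{x}D_{x}u_{\mbox{\footnotesize e}}-f]^{n}_{i}=0+2c^{2}\left(1+\frac{1}{2}t_{n}\right)-2\left(1+\frac{1}{2}t_{n}\right)c^{2}=0.\]

Moreover, \(u_{\mbox{\footnotesize e}}(x_{i},0)=I(x_{i})\), \(\partial u_{\mbox{\footnotesize e}}/\partial t=V(x_{i})\) at t = 0, and \(u_{\mbox{\footnotesize e}}(x_{0},t)=u_{\mbox{\footnotesize e}}(x_{N_{x}},t)=0\). Also the modified scheme for the first time step is fulfilled by \(u_{\mbox{\footnotesize e}}(x_{i},t_{n})\).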

Therefore, the exact solution \(u_{\mbox{\footnotesize e}}(x,t)=x(L-x)(1+t/2)\) of the PDE problem is also an exact solution of the discrete problem. This means that we know beforehand what numbers the numerical algorithm should produce. We can use this fact to check that the computed \(u^{n}_{i}\) values from an implementation equal \(u_{\mbox{\footnotesize e}}(x_{i},t_{n})\), within machine precision. This result is valid regardless of the mesh spacings \(\Delta x\) and \(\Delta t\)! Nevertheless, there might be stability restrictions on \(\Delta x\) and \(\Delta t\), so the test can only be run for a mesh that is compatible with the stability criterion (which in the present case is C ≤ 1, to be derived later).
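The claim can also be checked numerically without any solver by inserting \(u_{\mbox{\footnotesize e}}\) directly into the discrete equations and measuring the residual. The following is a sketch under that idea; the function name max_discrete_residual and the mesh values in the example are arbitrary choices made here.

```python
import numpy as np

def max_discrete_residual(u_e, f, c, L, Nx, dt, Nt):
    """Largest |[D_t D_t u - c^2 D_x D_x u - f]^n_i| over interior mesh points."""
    x = np.linspace(0, L, Nx + 1)
    t = dt*np.arange(Nt + 1)
    dx = x[1] - x[0]
    # U[n, i] = u_e(x_i, t_n) via broadcasting
    U = u_e(x[np.newaxis, :], t[:, np.newaxis])
    DtDt = (U[2:, 1:-1] - 2*U[1:-1, 1:-1] + U[:-2, 1:-1])/dt**2
    DxDx = (U[1:-1, 2:] - 2*U[1:-1, 1:-1] + U[1:-1, :-2])/dx**2
    F = f(x[np.newaxis, 1:-1], t[1:-1, np.newaxis])
    return np.abs(DtDt - c**2*DxDx - F).max()

# Example with the quadratic solution (mesh parameters picked arbitrarily)
L = 2.5
c = 1.5
u_e = lambda x, t: x*(L - x)*(1 + 0.5*t)
f = lambda x, t: 2*(1 + 0.5*t)*c**2
residual = max_discrete_residual(u_e, f, c, L, Nx=6, dt=0.3, Nt=10)
```

The residual is expected to be at the level of rounding errors for any choice of Nx and dt, since no stability restriction enters when the scheme is only evaluated and not advanced in time.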

Notice

A product of quadratic or linear expressions in the various independent variables, as shown above, will often fulfill both the PDE problem and the discrete equations, and can therefore be very useful solutions for verifying implementations.

However, for 1D wave equations of the type \(u_{tt}=c^{2}u_{xx}\) we shall see that there is always another much more powerful way of generating exact solutions (which consists in just setting C = 1 (!), as shown in Sect. 2.10).

2.3 Implementation

This section presents the complete computational algorithm, its implementation in Python code, animation of the solution, and verification of the implementation.

A real implementation of the basic computational algorithm from Sect. 2.1.5 and 2.1.6 can be encapsulated in a function, taking all the input data for the problem as arguments. The physical input data consists of c, I(x), V(x), \(f(x,t)\), L, and T. The numerical input is the mesh parameters \(\Delta t\) and \(\Delta x\).

Instead of specifying \(\Delta t\) and \(\Delta x\), we can specify one of them and the Courant number C instead, since having explicit control of the Courant number is convenient when investigating the numerical method. Many find it natural to prescribe the resolution of the spatial grid and set \(N_{x}\). The solver function can then compute \(\Delta t=CL/(cN_{x})\). However, for comparing \(u(x,t)\) curves (as functions of x) for various Courant numbers it is more convenient to keep \(\Delta t\) fixed for all C and let \(\Delta x\) vary according to \(\Delta x=c\Delta t/C\). With \(\Delta t\) fixed, all frames correspond to the same time t, and this simplifies animations that compare simulations with different mesh resolutions. Plotting functions of x with different spatial resolution is trivial, so it is easier to let \(\Delta x\) vary in the simulations than \(\Delta t\).

2.3.1 Callback Function for User-Specific Actions

The solution at all spatial points at a new time level is stored in an array u of length \(N_{x}+1\). We need to decide what to do with this solution, e.g., visualize the curve, analyze the values, or write the array to file for later use. The decision about what to do is left to the user in the form of a user-supplied function user_action(u, x, t, n), where u is the solution at the spatial points x at time t[n]. The user_action function is called from the solver at each time level n.

If the user wants to plot the solution or store the solution at a time point, she needs to write such a function and take appropriate actions inside it. We will show examples on many such user_action functions.

Since the solver function makes calls back to the user’s code via such a function, this type of function is called a callback function. When writing general software, like our solver function, which also needs to carry out special problem- or solution-dependent actions (like visualization), it is a common technique to leave those actions to user-supplied callback functions.

The callback function can be used to terminate the solution process if the user returns True. For example, a user_action function that returns True when the largest value of |u| exceeds 10 terminates the solver function (given below) as soon as the wave amplitude grows beyond 10, which is here considered a numerical instability.
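A callback of this kind might be sketched as follows. The threshold 10 is taken from the text, while the use of NumPy for the maximum is an assumption about how u is stored.

```python
import numpy as np

def user_action(u, x, t, n):
    """Return True (stop the simulation) if the wave amplitude exceeds 10."""
    return bool(np.abs(u).max() > 10)
```

The arguments x, t, and n are unused here but are kept so that the function matches the call signature expected by the solver.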

2.3.2 The Solver Function

A first attempt at a solver function is listed below.
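One way such a solver might look is sketched here. The argument list follows the input data described in this section; accepting None for V and f, and recomputing the Courant number from the final mesh spacings, are choices made in this sketch rather than confirmed details of wave1D_u0.py.

```python
import numpy as np

def solver(I, V, f, c, L, dt, C, T, user_action=None):
    """Solve u_tt = c^2 u_xx + f on (0,L)x(0,T] with u = 0 at x = 0, L."""
    Nt = int(round(T/dt))
    t = np.linspace(0, Nt*dt, Nt + 1)    # mesh points in time
    dx = dt*c/float(C)
    Nx = int(round(L/dx))
    x = np.linspace(0, L, Nx + 1)        # mesh points in space
    # Make sure dx and dt are compatible with x and t
    dx = x[1] - x[0]
    dt = t[1] - t[0]
    C2 = (c*dt/dx)**2                    # squared Courant number of the actual mesh

    if f is None:
        f = lambda x, t: 0.0
    if V is None:
        V = lambda x: 0.0

    u = np.zeros(Nx + 1)                 # solution at new time level
    u_n = np.zeros(Nx + 1)               # solution 1 time level back
    u_nm1 = np.zeros(Nx + 1)             # solution 2 time levels back

    # Load initial condition into u_n
    for i in range(0, Nx + 1):
        u_n[i] = I(x[i])
    if user_action is not None:
        user_action(u_n, x, t, 0)

    # Special formula for the first time step
    for i in range(1, Nx):
        u[i] = u_n[i] + dt*V(x[i]) + \
               0.5*C2*(u_n[i-1] - 2*u_n[i] + u_n[i+1]) + \
               0.5*dt**2*f(x[i], t[0])
    u[0] = 0; u[Nx] = 0
    if user_action is not None:
        user_action(u, x, t, 1)
    u_nm1[:] = u_n; u_n[:] = u           # switch variables before next step

    for n in range(1, Nt):
        # Update all inner points at time t[n+1]
        for i in range(1, Nx):
            u[i] = -u_nm1[i] + 2*u_n[i] + \
                   C2*(u_n[i-1] - 2*u_n[i] + u_n[i+1]) + \
                   dt**2*f(x[i], t[n])
        u[0] = 0; u[Nx] = 0              # insert boundary conditions
        if user_action is not None:
            if user_action(u, x, t, n + 1):
                break                    # callback asked for termination
        u_nm1[:] = u_n; u_n[:] = u       # switch variables before next step

    return u, x, t
```

The function returns the solution at the last computed time level together with the meshes, so a caller can inspect the end state even when no callback is supplied.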

A couple of remarks about the above code are perhaps necessary:

- Although we give dt and compute dx via C and c, the resulting t and x meshes do not necessarily correspond exactly to these values because of rounding errors. To explicitly ensure that dx and dt correspond to the cell sizes in x and t, we recompute the values.

- According to the particular choice made in Sect. 2.3.1, a true value returned from user_action should terminate the simulation. This is here implemented by a break statement inside the for loop in the solver.

2.3.3 Verification: Exact Quadratic Solution

We use the test problem derived in Sect. 2.2.4 for verification. Below is a unit test based on this test problem and realized as a proper test function compatible with the unit test frameworks nose or pytest.
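A sketch of such a test is shown here. It assumes a solver with the call signature solver(I, V, f, c, L, dt, C, T, user_action) described in Sect. 2.3.2; passing the solver in as an argument, the helper name check_quadratic, the mesh values, and the tolerance are choices made for this illustration.

```python
import numpy as np

def check_quadratic(solver, L=2.5, c=1.5, C=0.75, Nx=6, T=18, tol=1e-10):
    """Check that solver reproduces u_e = x(L-x)(1+t/2) at all mesh points."""
    def u_exact(x, t):
        return x*(L - x)*(1 + 0.5*t)

    def I(x):
        return u_exact(x, 0)

    def V(x):
        return 0.5*u_exact(x, 0)

    def f(x, t):
        return 2*(1 + 0.5*t)*c**2

    dt = C*(L/Nx)/c          # choose dt such that dx = L/Nx
    max_diff = [0.0]         # list so the nested function can update it

    def record_error(u, x, t, n):
        diff = np.abs(u - u_exact(x, t[n])).max()
        max_diff[0] = max(max_diff[0], diff)

    solver(I, V, f, c, L, dt, C, T, user_action=record_error)
    # The tolerance is generous to allow for accumulated rounding errors
    assert max_diff[0] < tol, 'max deviation: %g' % max_diff[0]
    return max_diff[0]
```

In a file that defines solver, a wrapper such as def test_quadratic(): check_quadratic(solver) would make the check discoverable by pytest.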

When this function resides in the file wave1D_u0.py, one can run pytest -s -v wave1D_u0.py to check that all test functions with names test_*() in this file work.

2.3.4 Verification: Convergence Rates

A more general method, but not so reliable as a verification method, is to compute the convergence rates and see if they coincide with theoretical estimates. Here we expect a rate of 2 according to the various results in Sect. 2.10. A general function for computing convergence rates can be written like this:
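A sketch of such a function follows. It assumes a solver with the call signature of Sect. 2.3.2 and measures the error in the \(\ell^{\infty}\) norm over all mesh points; passing the solver as the first argument is a choice made here to keep the example independent of any particular file.

```python
import numpy as np

def convergence_rates(solver, u_exact, I, V, f, c, L,
                      dt0, num_meshes, C, T):
    """Halve the time step num_meshes-1 times and estimate the rates r_i."""
    E = []   # error measure in each experiment
    h = []   # discretization parameter (dt) in each experiment
    dt = dt0
    for _ in range(num_meshes):
        error = [0.0]   # list so the callback can update the value

        def compute_error(u, x, t, n):
            # Global maximum of |e^n_i| over the time levels visited so far
            e = np.abs(u - u_exact(x, t[n])).max()
            error[0] = max(error[0], e)

        solver(I, V, f, c, L, dt, C, T, user_action=compute_error)
        E.append(error[0])
        h.append(dt)
        dt /= 2   # halve the time step for the next experiment

    # Rates from two consecutive experiments: r = ln(E_i/E_{i-1})/ln(h_i/h_{i-1})
    r = [np.log(E[i]/E[i-1])/np.log(h[i]/h[i-1])
         for i in range(1, num_meshes)]
    return r
```

Since the solver keeps C fixed and adjusts dx to dt, halving dt halves dx as well, so h = dt acts as the single discretization parameter.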

Using the analytical solution from Sect. 2.2.2, we can call convergence_rates to see if we get a convergence rate that approaches 2 and use the final estimate of the rate in an assert statement such that this function becomes a proper test function:

Doing py.test -s -v wave1D_u0.py will run also this test function and show the rates 2.05, 1.98, 2.00, 2.00, and 2.00 (to two decimals).

2.3.5 Visualization: Animating the Solution

Now that we have verified the implementation it is time to do a real computation where we also display evolution of the waves on the screen. Since the solver function knows nothing about what type of visualizations we may want, it calls the callback function user_action(u, x, t, n). We must therefore write this function and find the proper statements for plotting the solution.

Function for administering the simulation

The following viz function

1. defines a user_action callback function for plotting the solution at each time level,

2. calls the solver function, and

3. combines all the plots (in files) to video in different formats.

Dissection of the code

The viz function can either use SciTools or Matplotlib for visualizing the solution. The user_action function based on SciTools is called plot_u_st, while the user_action function based on Matplotlib is a bit more complicated as it is realized as a class and needs statements that differ from those for making static plots. SciTools can utilize both Matplotlib and Gnuplot (and many other plotting programs) for doing the graphics, but Gnuplot is a relevant choice for large N x or in two-dimensional problems as Gnuplot is significantly faster than Matplotlib for screen animations.

A function inside another function, like plot_u_st in the above code segment, has access to and remembers all the local variables in the surrounding code inside the viz function (!). This is known in computer science as a closure and is very convenient to program with. For example, the plt and time modules defined outside plot_u_st are accessible for plot_u_st when the function is called (as user_action) in the solver function. Some may think, however, that a class instead of a closure is a cleaner and easier-to-understand implementation of the user action function, see Sect. 2.8.

The plot_u_st function just makes a standard SciTools plot command for plotting u as a function of x at time t[n]. To achieve a smooth animation, the plot command should take keyword arguments instead of being broken into separate calls to xlabel, ylabel, axis, title, and show. Several plot calls will automatically cause an animation on the screen. In addition, we want to save each frame in the animation to file. We then need a filename where the frame number is padded with zeros, here tmp_0000.png, tmp_0001.png, and so on. The proper printf construction is then tmp_%04d.png. Section 1.3.2 contains more basic information on making animations.

The solver is called with an argument plot_u as user_action. If the user chooses to use SciTools, plot_u is the plot_u_st callback function, but for Matplotlib it is an instance of the class PlotMatplotlib. Also this class makes use of variables defined in the viz function: plt and time. With Matplotlib, one has to make the first plot the standard way, and then update the y data in the plot at every time level. The update requires active use of the returned value from plt.plot in the first plot. This value would need to be stored in a local variable if we were to use a closure for the user_action function when doing the animation with Matplotlib. It is much easier to store the variable as a class attribute self.lines. Since the class is essentially a function, we implement the function as the special method __call__ such that the instance plot_u(u, x, t, n) can be called as a standard callback function from solver.

Making movie files

From the tmp_*.png files containing the frames in the animation we can make video files. Section 1.3.2 presents basic information on how to use the ffmpeg (or avconv) program for producing video files in different modern formats: Flash, MP4, WebM, and Ogg.

The viz function creates an ffmpeg or avconv command with the proper arguments for each of the formats Flash, MP4, WebM, and Ogg. The task is greatly simplified by having a codec2ext dictionary for mapping video codec names to filename extensions. As mentioned in Sect. 1.3.2, only two formats are actually needed to ensure that all browsers can successfully play the video: MP4 and WebM.

Some animations having a large number of plot files may not be properly combined into a video using ffmpeg or avconv. A method that always works is to play the PNG files as an animation in a browser using JavaScript code in an HTML file. The SciTools package has a function movie (or a stand-alone command scitools movie) for creating such an HTML player. The plt.movie call in the viz function shows how the function is used. The file movie.html can be loaded into a browser and features a user interface where the speed of the animation can be controlled. Note that the movie in this case consists of the movie.html file and all the frame files tmp_*.png.

Skipping frames for animation speed

Sometimes the time step is small and T is large, leading to an inconveniently large number of plot files and a slow animation on the screen. The solution to such a problem is to decide on a total number of frames in the animation, num_frames, and plot the solution only for every skip_frame frames. For example, setting skip_frame=5 leads to a plot of every fifth frame. The default value skip_frame=1 plots every frame. The total number of time levels (i.e., maximum possible number of frames) is the length of t, t.size (or len(t)), so if we want num_frames frames in the animation, we need to plot every t.size/num_frames frames, rounded down to an integer value skip_frame.

The initial condition (n=0) is included by n % skip_frame == 0, as well as every skip_frame-th frame. As n % skip_frame == 0 will very seldom be true for the very final frame, we must also check if n == t.size-1 to get the final frame included.

A simple choice of numbers may illustrate the formulas: say we have 801 frames in total (t.size) and we allow only 60 frames to be plotted. As n then runs from 0 to 800, we need to plot every 801/60 frame, which with integer division yields 13 as skip_frame. Using the mod function, n % skip_frame, this operation is zero every time n can be divided by 13 without a remainder. That is, the if test is true when n equals \(0,13,26,39,{\ldots},780,793,800\), where the last value comes from the extra test n == t.size-1. The associated code is included in the plot_u function, inside the viz function, in the file wave1D_u0.py.
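The frame selection can be sketched in isolation from the plotting code. The helper name frames_to_plot is hypothetical; only the test n % skip_frame == 0 or n == t.size-1 is taken from the text.

```python
def frames_to_plot(num_time_levels, num_frames):
    """Return skip_frame and the time level indices n that get plotted."""
    # Integer division; never let skip_frame drop below 1
    skip_frame = max(1, num_time_levels // num_frames)
    selected = [n for n in range(num_time_levels)
                if n % skip_frame == 0 or n == num_time_levels - 1]
    return skip_frame, selected

skip_frame, selected = frames_to_plot(801, 60)
```

Because of the rounding down and the extra final frame, the number of plotted frames can end up slightly above num_frames.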

2.3.6 Running a Case

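The first demo of our 1D wave equation solver concerns vibrations of a string that is initially deformed to a triangular shape, like when picking a guitar string:

\[I(x)=\left\{\begin{array}{ll}ax/x_{0},&x < x_{0},\\ a(L-x)/(L-x_{0}),&\mbox{otherwise}.\end{array}\right.\tag{2.33}\]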

We choose L = 75 cm, \(x_{0}=0.8L\), a = 5 mm, and a time frequency ν = 440 Hz. The relation between the wave speed c and ν is \(c=\nu\lambda\), where λ is the wavelength, taken as 2L because the longest wave on the string forms half a wavelength. There is no external force, so f = 0 (meaning we can neglect gravity), and the string is at rest initially, implying V = 0.

Regarding numerical parameters, we need to specify a \(\Delta t\). Sometimes it is more natural to think of a spatial resolution instead of a time step. A natural semi-coarse spatial resolution in the present problem is \(N_{x}=50\). We can then choose the associated \(\Delta t\) (as required by the viz and solver functions) as the stability limit: \(\Delta t=L/(N_{x}c)\). This is the \(\Delta t\) to be specified, but notice that if C < 1, the actual \(\Delta x\) computed in solver gets larger than \(L/N_{x}\): \(\Delta x=c\Delta t/C=L/(N_{x}C)\). (The reason is that we fix \(\Delta t\) and adjust \(\Delta x\), so if C gets smaller, the code implements this effect in terms of a larger \(\Delta x\).)

A function for setting the physical and numerical parameters and calling viz in this application goes as follows:
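The parameter setup can be sketched as below, using SI units and leaving out the call to viz. The function name guitar_case, the returned dictionary, and the choice of simulating a whole number of periods are assumptions made for this illustration.

```python
def guitar_case(C=1.0, Nx=50, num_periods=1):
    """Collect physical and numerical parameters for the plucked string."""
    L = 0.75               # string length [m]
    x0 = 0.8*L             # position of the peak of the initial shape [m]
    a = 0.005              # initial amplitude [m]
    freq = 440.0           # time frequency [Hz]
    wavelength = 2*L       # the longest wave forms half a wavelength on the string
    c = freq*wavelength    # wave speed [m/s]
    T = num_periods/freq   # simulation time: a whole number of periods [s]
    dt = L/(Nx*c)          # time step at the stability limit

    def I(x):
        """Triangular initial shape with peak value a at x0."""
        return a*x/x0 if x < x0 else a/(L - x0)*(L - x)

    # V = None and f = None signal zero initial velocity and no source term
    return dict(I=I, V=None, f=None, c=c, L=L, dt=dt, C=C, T=T)
```

With these values c becomes 660 m/s, and the returned dictionary holds the arguments that a solver or visualization function with the interface of Sect. 2.3 would need.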

The associated program has the name wave1D_u0.py. Run the program and watch the movie of the vibrating string. The string should ideally consist of straight segments, but these are somewhat wavy due to numerical approximation. Run the case with the wave1D_u0.py code and C = 1 to see the exact solution.

2.3.7 Working with a Scaled PDE Model

Depending on the model, it may be a substantial job to establish consistent and relevant physical parameter values for a case. The guitar string example illustrates the point. However, by scaling the mathematical problem we can often reduce the need to estimate physical parameters dramatically. The scaling technique consists of introducing new independent and dependent variables, with the aim that the absolute values of these lie in \([0,1]\). We introduce the dimensionless variables (details are found in Section 3.1.1 in [11])

Here, L is a typical length scale, e.g., the length of the domain, and a is a typical size of u, e.g., determined from the initial condition: \(a=\max_{x}|I(x)|\).

We get by the chain rule that

Similarly,

Inserting the dimensionless variables in the PDE gives, in case f = 0,

Dropping the bars, we arrive at the scaled PDE

which has no parameter \(c^{2}\) anymore. The initial conditions are scaled as

and

resulting in

In the common case V = 0 we see that there are no physical parameters to be estimated in the PDE model!
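For reference, the scaling steps can be written out as follows. This is a reconstruction under the assumption that the dimensionless variables are \(\bar{x}=x/L\), \(\bar{t}=ct/L\), and \(\bar{u}=u/a\), which matches the length scale L, the amplitude scale a, and the time scale L ∕ c used in this section.

$$\bar{x}=\frac{x}{L},\quad\bar{t}=\frac{c}{L}t,\quad\bar{u}=\frac{u}{a}\thinspace.$$

The chain rule gives

$$\frac{\partial u}{\partial t}=\frac{\partial}{\partial\bar{t}}\left(a\bar{u}\right)\frac{d\bar{t}}{dt}=\frac{ac}{L}\frac{\partial\bar{u}}{\partial\bar{t}},\quad\frac{\partial u}{\partial x}=\frac{\partial}{\partial\bar{x}}\left(a\bar{u}\right)\frac{d\bar{x}}{dx}=\frac{a}{L}\frac{\partial\bar{u}}{\partial\bar{x}}\thinspace.$$

Applying the same rule once more and inserting in \(u_{tt}=c^{2}u_{xx}\) yields

$$\frac{ac^{2}}{L^{2}}\frac{\partial^{2}\bar{u}}{\partial\bar{t}^{2}}=\frac{ac^{2}}{L^{2}}\frac{\partial^{2}\bar{u}}{\partial\bar{x}^{2}}\quad\Rightarrow\quad\frac{\partial^{2}\bar{u}}{\partial\bar{t}^{2}}=\frac{\partial^{2}\bar{u}}{\partial\bar{x}^{2}}\thinspace.$$

With \(a=\max_{x}|I(x)|\), the initial conditions \(u(x,0)=I(x)\) and \(u_{t}(x,0)=V(x)\) become

$$\bar{u}(\bar{x},0)=\frac{I(L\bar{x})}{\max_{x}|I(x)|},\quad\frac{\partial\bar{u}}{\partial\bar{t}}(\bar{x},0)=\frac{L}{ac}V(L\bar{x})\thinspace.$$

The only place where physical parameters survive is the factor \(L/(ac)\) in front of V, which is why the case V = 0 is parameter free.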

If we have a program implemented for the physical wave equation with dimensions, we can obtain the dimensionless, scaled version by setting c = 1. The initial condition of a guitar string, given in (2.33), gets its scaled form by choosing a = 1, L = 1, and \(x_{0}\in[0,1]\). This means that we only need to decide on the \(x_{0}\) value as a fraction of unity, because the scaled problem corresponds to setting all other parameters to unity. In the code we can just set a=c=L=1, x0=0.8, and there is no need to calculate with wavelengths and frequencies to estimate c!

The only non-trivial parameter to estimate in the scaled problem is the final end time of the simulation, or more precisely, how it relates to periods in periodic solutions in time, since we often want to express the end time as a certain number of periods. The period in the dimensionless problem is 2, so the end time can be set to the desired number of periods times 2.

Why the dimensionless period is 2 can be explained by the following reasoning. Suppose that u behaves as \(\cos(\omega t)\) in time in the original problem with dimensions. The corresponding period is then \(P=2\pi/\omega\), but we need to estimate ω. A typical solution of the wave equation is \(u(x,t)=A\sin(kx)\cos(\omega t)\), where A is an amplitude and k is related to the wave length λ in space: \(\lambda=2\pi/k\). Both λ and A will be given by the initial condition I(x). Inserting this \(u(x,t)\) in the PDE yields \(-\omega^{2}=-c^{2}k^{2}\), i.e., ω = kc. The period is therefore \(P=2\pi/(kc)\). If the boundary conditions are \(u(0,t)=u(L,t)=0\), we need to have \(kL=n\pi\) for integer n. The period becomes \(P=2L/(nc)\). The longest period is \(P=2L/c\). The dimensionless period \(\tilde{P}\) is obtained by dividing P by the time scale L ∕ c, which results in \(\tilde{P}=2\). Shorter waves in the initial condition will have a shorter dimensionless period \(\tilde{P}=2/n\) (n > 1).

2.4 Vectorization

The computational algorithm for solving the wave equation visits one mesh point at a time and evaluates a formula for the new value \(u_{i}^{n+1}\) at that point. Technically, this is implemented by a loop over array elements in a program. Such loops may run slowly in Python (and similar interpreted languages such as R and MATLAB). One technique for speeding up loops is to perform operations on entire arrays instead of working with one element at a time. This is referred to as vectorization, vector computing, or array computing. Operations on whole arrays are possible if the computations involving each element are independent of each other and therefore can, at least in principle, be performed simultaneously. That is, vectorization not only speeds up the code on serial computers, but also makes it easy to exploit parallel computing. Actually, there are Python tools like Numba (footnote 4) that can automatically turn vectorized code into parallel code.

2.4.1 Operations on Slices of Arrays

Efficient computing with numpy arrays demands that we avoid loops and compute with entire arrays at once (or at least large portions of them). Consider this calculation of differences \(d_{i}=u_{i+1}-u_{i}\):

All the differences here are independent of each other. The computation of d can therefore alternatively be done by subtracting the array \((u_{0},u_{1},\ldots,u_{n-1})\) from the array where the elements are shifted one index upwards: \((u_{1},u_{2},\ldots,u_{n})\), see Fig. 2.3. The former subset of the array can be expressed by u[0:n-1], u[0:-1], or just u[:-1], meaning from index 0 up to, but not including, the last element (-1). The latter subset is obtained by u[1:n] or u[1:], meaning from index 1 and the rest of the array. The computation of d can now be done without an explicit Python loop:

or with explicit limits if desired:

Indices with a colon, going from an index to (but not including) another index are called slices. With numpy arrays, the computations are still done by loops, but in efficient, compiled, highly optimized C or Fortran code. Such loops are sometimes referred to as vectorized loops. Such loops can also easily be distributed among many processors on parallel computers. We say that the scalar code above, working on an element (a scalar) at a time, has been replaced by an equivalent vectorized code. The process of vectorizing code is called vectorization.
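The loop and the slice expressions described above can be compared directly. The following is a minimal sketch with a small, arbitrarily chosen array; here n denotes the length of u, as in the slice limits u[0:n-1] and u[1:n].

```python
import numpy as np

u = np.array([1.0, 4.0, 9.0, 16.0, 25.0])
n = u.size

# Scalar version: visit one element at a time
d_scalar = np.zeros(n - 1)
for i in range(n - 1):
    d_scalar[i] = u[i + 1] - u[i]

# Vectorized version: subtract two shifted slices of length n-1
d_vec = u[1:] - u[:-1]

# Same computation with explicit slice limits
d_explicit = u[1:n] - u[0:n - 1]

print(d_scalar)
print(d_vec)
print(d_explicit)
```

All three arrays hold the same differences; only the first is computed by a Python-level loop.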

Test your understanding

Newcomers to vectorization are encouraged to choose a small array u, say with five elements, and simulate with pen and paper both the loop version and the vectorized version above.

Finite difference schemes basically contain differences between array elements with shifted indices. As an example, consider the updating formula

The vectorization consists of replacing the loop by arithmetic on slices of arrays of length n-2:

Note that the length of u2 becomes n-2. If u2 is already an array of length n and we want to use the formula to update all the "inner" elements of u2, as we will when solving a 1D wave equation, we can write

The first expression’s right-hand side is realized by the following steps, involving temporary arrays with intermediate results, since each array operation can only involve one or two arrays. The numpy package performs (behind the scenes) the first line above in four steps:

We need three temporary arrays, but a user does not need to worry about such temporary arrays.
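To make the role of the temporary arrays concrete, here is a small sketch. It assumes that the updating formula is the three-point expression u2[i] = u[i-1] - 2*u[i] + u[i+1]; the names temp1, temp2, and temp3 are illustrative, and numpy's internal handling may differ in detail.

```python
import numpy as np

u = np.linspace(0, 1, 6)**2
n = u.size

# Compact vectorized update of the inner points
u2 = np.zeros(n)
u2[1:-1] = u[:-2] - 2*u[1:-1] + u[2:]

# The same update spelled out with explicit temporary arrays
temp1 = 2*u[1:-1]
temp2 = u[:-2] - temp1
temp3 = temp2 + u[2:]
u2_steps = np.zeros(n)
u2_steps[1:-1] = temp3

# Scalar reference implementation
u2_loop = np.zeros(n)
for i in range(1, n - 1):
    u2_loop[i] = u[i - 1] - 2*u[i] + u[i + 1]

print(np.abs(u2 - u2_loop).max(), np.abs(u2_steps - u2_loop).max())
```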

Common mistakes with array slices

Array expressions with slices demand that the slices have the same shape. It is easy to make a mistake in, e.g.,

and write

Now u[1:n] has wrong length (n-1) compared to the other array slices, causing a ValueError and the message could not broadcast input array from shape 103 into shape 104 (if n is 105). When such errors occur one must closely examine all the slices. Usually, it is easier to get upper limits of slices right when they use -1 or -2 or empty limit rather than expressions involving the length.

Another common mistake, when u2 has length n, is to forget the slice in the array on the left-hand side,

This is really crucial: now u2 becomes a new array of length n-2, which is the wrong length as we have no entries for the boundary values. We meant to insert the right-hand side array into the original u2 array for the entries that correspond to the internal points in the mesh (1:n-1 or 1:-1).
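Both mistakes can be reproduced in a few lines. The sketch below assumes the same three-point formula as before and uses the hypothetical name u2_wrong for the array that results from forgetting the slice on the left-hand side; written as u2 = ..., the statement would rebind u2 itself.

```python
import numpy as np

n = 105
u = np.arange(n, dtype=float)**2
u2 = np.zeros(n)

# Mistake 1: u[1:n] has length n-1, the other slices have length n-2
shape_error = False
try:
    u2[1:n-1] = u[0:n-2] - 2*u[1:n-1] + u[1:n]
except ValueError as e:
    shape_error = True
    print('ValueError:', e)

# Mistake 2: with no slice on the left-hand side, the result is a
# brand new array of length n-2 instead of an update of u2
u2_wrong = u[0:n-2] - 2*u[1:n-1] + u[2:n]
print(u2_wrong.size, u2.size)

# Correct: insert the result into the inner entries of the existing array
u2[1:-1] = u[:-2] - 2*u[1:-1] + u[2:]
```

Using -1, -2, or empty limits in the last statement avoids any arithmetic with n in the slice limits.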

Vectorization may also work nicely with functions. To illustrate, we may extend the previous example as follows:

Assuming u2, u, and x all have length n, the vectorized version becomes

Obviously, f must be able to take an array as argument for f(x[1:-1]) to make sense.

2.4.2 Finite Difference Schemes Expressed as Slices

We now have the necessary tools to vectorize the wave equation algorithm as described mathematically in Sect. 2.1.5 and through code in Sect. 2.3.2. There are three loops: one for the initial condition, one for the first time step, and finally the loop that is repeated for all subsequent time levels. Since only the latter is repeated a potentially large number of times, we limit our vectorization efforts to this loop. Within the time loop, the space loop reads:

The vectorized version becomes

or

The program wave1D_u0v.py contains a new version of the function solver where both the scalar and the vectorized loops are included (the argument version is set to scalar or vectorized, respectively).
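As an illustration of the two variants, the sketch below advances the solution one time level with a scalar loop and with slices, and compares the results. The function names advance_scalar and advance_vectorized and the test data are assumptions made for this example; they are not the code in wave1D_u0v.py.

```python
import numpy as np

def advance_scalar(u, u_n, u_nm1, f, x, t_n, C2, dt):
    """Compute the new time level at the inner points with an explicit loop."""
    Nx = u.size - 1
    for i in range(1, Nx):
        u[i] = -u_nm1[i] + 2*u_n[i] + \
               C2*(u_n[i-1] - 2*u_n[i] + u_n[i+1]) + \
               dt**2*f(x[i], t_n)
    u[0] = 0; u[Nx] = 0   # Dirichlet conditions
    return u

def advance_vectorized(u, u_n, u_nm1, f, x, t_n, C2, dt):
    """Same update expressed with slices of length Nx-1."""
    u[1:-1] = -u_nm1[1:-1] + 2*u_n[1:-1] + \
              C2*(u_n[:-2] - 2*u_n[1:-1] + u_n[2:]) + \
              dt**2*f(x[1:-1], t_n)
    u[0] = 0; u[-1] = 0   # Dirichlet conditions
    return u

# Arbitrary test data (not a physical simulation)
x = np.linspace(0, 1, 11)
u_n = np.sin(np.pi*x)
u_nm1 = 0.9*np.sin(np.pi*x)
f = lambda x, t: x*(1 - x)*t      # must accept an array x
C2, dt, t_n = 0.64, 0.08, 0.3

u_s = advance_scalar(np.zeros_like(x), u_n, u_nm1, f, x, t_n, C2, dt)
u_v = advance_vectorized(np.zeros_like(x), u_n, u_nm1, f, x, t_n, C2, dt)
print(np.abs(u_s - u_v).max())
```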

2.4.3 Verification

We may reuse the quadratic solution \(u_{\mbox{\footnotesize e}}(x,t)=x(L-x)(1+{\frac{1}{2}}t)\) for verifying also the vectorized code. A test function can now verify both the scalar and the vectorized version. Moreover, we may use a user_action function that compares the computed and exact solution at each time level and performs a test:
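A minimal sketch of such a test is given below. It assumes a stripped-down vectorized solver with Dirichlet conditions u = 0 at both ends, written only for this illustration (it is not the solver in wave1D_u0v.py), and the parameter values are arbitrary choices.

```python
import numpy as np

def solver(I, V, f, c, L, dt, C, T, user_action=None):
    """Minimal vectorized solver for u_tt = c^2 u_xx + f, u=0 at x=0,L."""
    Nt = int(round(T/dt))
    t = np.linspace(0, Nt*dt, Nt+1)
    dx = dt*c/C
    Nx = int(round(L/dx))
    x = np.linspace(0, L, Nx+1)
    C2 = C**2
    u, u_n, u_nm1 = np.zeros(Nx+1), np.zeros(Nx+1), np.zeros(Nx+1)

    u_n[:] = I(x)                       # initial condition
    if user_action is not None:
        user_action(u_n, x, t, 0)

    # Special formula for the first time step
    u[1:-1] = u_n[1:-1] + dt*V(x[1:-1]) + \
              0.5*C2*(u_n[:-2] - 2*u_n[1:-1] + u_n[2:]) + \
              0.5*dt**2*f(x[1:-1], t[0])
    u[0] = 0; u[-1] = 0
    if user_action is not None:
        user_action(u, x, t, 1)
    u_nm1[:] = u_n; u_n[:] = u

    for n in range(1, Nt):
        u[1:-1] = -u_nm1[1:-1] + 2*u_n[1:-1] + \
                  C2*(u_n[:-2] - 2*u_n[1:-1] + u_n[2:]) + \
                  dt**2*f(x[1:-1], t[n])
        u[0] = 0; u[-1] = 0
        if user_action is not None:
            user_action(u, x, t, n+1)
        u_nm1[:] = u_n; u_n[:] = u
    return u, x, t

def test_quadratic():
    """The quadratic solution should be reproduced to machine precision."""
    L = 2.5; c = 1.5; C = 0.75; Nx = 6
    dt = C*(L/Nx)/c
    T = 6
    u_exact = lambda x, t: x*(L - x)*(1 + 0.5*t)
    I = lambda x: u_exact(x, 0)
    V = lambda x: 0.5*u_exact(x, 0)
    f = lambda x, t: 2*(1 + 0.5*t)*c**2 + 0*x   # source term fitted to u_exact

    errors = []
    def assert_no_error(u, x, t, n):
        diff = np.abs(u - u_exact(x, t[n])).max()
        errors.append(diff)
        assert diff < 1e-12

    solver(I, V, f, c, L, dt, C, T, user_action=assert_no_error)
    return errors

errors = test_quadratic()
print(max(errors))
```

The source term follows from inserting the quadratic solution in the PDE: \(u_{tt}=0\) and \(u_{xx}=-2(1+{\frac{1}{2}}t)\), so \(f=2c^{2}(1+{\frac{1}{2}}t)\).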

Lambda functions

The code segment above demonstrates how to achieve very compact code, without degraded readability, by use of lambda functions for the various input parameters that require a Python function. In essence,

is equivalent to

Note that lambda functions can just contain a single expression and no statements.

One advantage with lambda functions is that they can be used directly in calls:
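A short sketch of both points follows. The expression used for f and the helper max_on_mesh are hypothetical choices made for this illustration.

```python
import numpy as np

L = 2.5

# A lambda function bound to the name f ...
f = lambda x, t: L*(x - t)**2

# ... is equivalent to an ordinary function definition
def f_named(x, t):
    return L*(x - t)**2

def max_on_mesh(func, x):
    """Hypothetical helper: evaluate func on the mesh x, return the maximum."""
    return np.max(func(x))

# A lambda function can be written directly in the call
x = np.linspace(0, L, 6)
peak = max_on_mesh(lambda x: x*(L - x), x)
print(f(1.0, 0.5), f_named(1.0, 0.5), peak)
```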

2.4.4 Efficiency Measurements

The file wave1D_u0v.py contains our new solver function with both scalar and vectorized code. For comparing the efficiency of scalar versus vectorized code, we need a viz function as discussed in Sect. 2.3.5. All of this viz function can be reused, except the call to solver_function. This call lacks the parameter version, which we want to set to vectorized and scalar for our efficiency measurements.

One solution is to copy the viz code from wave1D_u0 into wave1D_u0v.py and add a version argument to the solver_function call. Taking into account how much animation code we then duplicate, this is not a good idea. Alternatively, introducing the version argument in wave1D_u0.viz, so that this function can be imported into wave1D_u0v.py, is not a good solution either, since version has no meaning in that file. We need better ideas!

Solution 1

Calling viz in wave1D_u0 with solver_function as our new solver in wave1D_u0v works fine, since this solver has version='vectorized' as default value. The problem arises when we want to test version='scalar'. The simplest solution is then to use wave1D_u0.solver instead. We make a new viz function in wave1D_u0v.py that has a version argument and that just calls wave1D_u0.viz:

Solution 2

There is a more advanced and fancier solution featuring a very useful trick: we can make a new function that will always call wave1D_u0v.solver with version='scalar'. The functools.partial function from standard Python takes a function func as argument and a series of positional and keyword arguments and returns a new function that will call func with the supplied arguments, while the user can control all the other arguments in func. Consider a trivial example,

We want to ensure that f is always called with c=3, i.e., f has only two "free" arguments a and b. This functionality is obtained by

Now f2 calls f with whatever the user supplies as a and b, but c is always 3.
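The behavior can be tried out with a small sketch. The body of f (a sum of its arguments) and the default value c=2 are assumptions made for this illustration.

```python
import functools

def f(a, b, c=2):
    """Trivial example function with one keyword argument."""
    return a + b + c

# New function where c is locked to 3; a and b remain free
f2 = functools.partial(f, c=3)

print(f(1, 2))    # uses the default c=2
print(f2(1, 2))   # calls f(1, 2, c=3)
```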

Back to our viz code, we can do

The new scalar_solver takes the same arguments as wave1D_u0.solver and calls wave1D_u0v.solver, but always supplies the extra argument version='scalar'. When sending this solver_function to wave1D_u0.viz, the latter will call wave1D_u0v.solver with all the I, V, f, etc., arguments we supply, plus version='scalar'.

Efficiency experiments

We now have a viz function that can call our solver function both in scalar and vectorized mode. The function run_efficiency_experiments in wave1D_u0v.py performs a set of experiments and reports the CPU time spent in the scalar and vectorized solver for the previous string vibration example with spatial mesh resolutions \(N_{x}=50,100,200,400,800\). Running this function reveals that the vectorized code runs substantially faster: the vectorized code runs approximately \(N_{x}/10\) times as fast as the scalar code!

2.4.5 Remark on the Updating of Arrays

At the end of each time step we need to update the u_nm1 and u_n arrays such that they have the right content for the next time step:

The order here is important: updating u_n first, makes u_nm1 equal to u, which is wrong!

The assignment u_n[:] = u copies the content of the u array into the elements of the u_n array. Such copying takes time, but that time is negligible compared to the time needed for computing u from the finite difference formula, even when the formula has a vectorized implementation. However, efficiency of program code is a key topic when solving PDEs numerically (particularly when there are two or three space dimensions), so it must be mentioned that there exists a much more efficient way of making the arrays u_nm1 and u_n ready for the next time step. The idea is based on switching references and explained as follows.

A Python variable is actually a reference to some object (C programmers may think of pointers). Instead of copying data, we can let u_nm1 refer to the u_n object and u_n refer to the u object. This is a very efficient operation (like switching pointers in C). A naive implementation like

will fail, however, because now u_nm1 refers to the u_n object, but then the name u_n refers to u, so that this u object has two references, u_n and u, while our third array, originally referred to by u_nm1, has no more references and is lost. This means that the variables u, u_n, and u_nm1 refer to two arrays and not three. Consequently, the computations at the next time level will be messed up, since updating the elements in u will imply updating the elements in u_n too, thereby destroying the solution at the previous time step.

While u_nm1 = u_n is fine, u_n = u is problematic, so the solution to this problem is to ensure that u points to the u_nm1 array. This is mathematically wrong, but new correct values will be filled into u at the next time step and make it right.

The correct switch of references is

We can get rid of the temporary reference tmp by writing

This switching of references for updating our arrays will be used in later implementations.

Caution

The update u_nm1, u_n, u = u_n, u, u_nm1 leaves wrong content in u at the final time step. This means that if we return u, as we do in the example codes here, we actually return u_nm1, which is obviously wrong. It is therefore important to adjust the content of u to u = u_n before returning u. (Note that the user_action function reduces the need to return the solution from the solver.)
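The difference between the naive and the correct switch of references can be inspected with id. This is a small sketch with arbitrary array contents; the helper make_arrays is introduced only for this illustration.

```python
import numpy as np

def make_arrays():
    """Return three distinct arrays playing the roles of u_nm1, u_n, u."""
    return np.zeros(3), np.ones(3), np.full(3, 2.0)

# Naive switch: two names end up on the same array, one array is lost
u_nm1, u_n, u = make_arrays()
u_nm1 = u_n
u_n = u
naive_distinct = len({id(u_nm1), id(u_n), id(u)})
naive_shared = u_n is u

# Correct switch: all three arrays stay alive; u reuses the old u_nm1 storage
u_nm1, u_n, u = make_arrays()
u_nm1, u_n, u = u_n, u, u_nm1
distinct = len({id(u_nm1), id(u_n), id(u)})

print(naive_distinct, distinct)
print(u_nm1, u_n, u)

# After the last time step, u holds old data, so point it at the newest level
u = u_n
```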

2.5 Exercises

Exercise 2.1 (Simulate a standing wave)

The purpose of this exercise is to simulate standing waves on \([0,L]\) and illustrate the error in the simulation. Standing waves arise from an initial condition

where m is an integer and A is a freely chosen amplitude. The corresponding exact solution can be computed and reads

a) Explain that for a function \(\sin kx\cos\omega t\) the wave length in space is \(\lambda=2\pi/k\) and the period in time is \(P=2\pi/\omega\). Use these expressions to find the wave length in space and period in time of \(u_{\mbox{\footnotesize e}}\) above.

b) Import the solver function from wave1D_u0.py into a new file where the viz function is reimplemented such that it plots either the numerical and the exact solution, or the error.

c) Make animations where you illustrate how the error \(e^{n}_{i}=u_{\mbox{\footnotesize e}}(x_{i},t_{n})-u^{n}_{i}\) develops and increases in time. Also make animations of u and \(u_{\mbox{\footnotesize e}}\) simultaneously.

Hint 1

Quite long time simulations are needed in order to display significant discrepancies between the numerical and exact solution.

Hint 2

A possible set of parameters is L = 12, m = 9, c = 2, A = 1, \(N_{x}=80\), C = 0.8. The error mesh function \(e^{n}\) can be simulated for 10 periods, while 20–30 periods are needed to show significant differences between the curves for the numerical and exact solution.

Filename: wave_standing.

Remarks

The important parameters for numerical quality are C and \(k\Delta x\), where \(C=c\Delta t/\Delta x\) is the Courant number and k is defined above (\(k\Delta x\) is inversely proportional to how many mesh points we have per wave length in space, see Sect. 2.10.4 for explanation).

Exercise 2.2 (Add storage of solution in a user action function)

Extend the plot_u function in the file wave1D_u0.py to also store the solutions u in a list. To this end, declare all_u as an empty list in the viz function, outside plot_u, and perform an append operation inside the plot_u function. Note that a function, like plot_u, inside another function, like viz, remembers all local variables in the viz function, including all_u, even when plot_u is called (as user_action) in the solver function. Test both all_u.append(u) and all_u.append(u.copy()). Why does one of these constructions fail to store the solution correctly? Let the viz function return the all_u list converted to a two-dimensional numpy array.

Filename: wave1D_u0_s_store.

Exercise 2.3 (Use a class for the user action function)

Redo Exercise 2.2 using a class for the user action function. Let the all_u list be an attribute in this class and implement the user action function as a method (the special method __call__ is a natural choice). With the class version, the user action function no longer depends on parameters defined outside the function (such as all_u in Exercise 2.2).

Filename: wave1D_u0_s2c.

Exercise 2.4 (Compare several Courant numbers in one movie)

The goal of this exercise is to make movies where several curves, corresponding to different Courant numbers, are visualized. Write a program that resembles wave1D_u0_s2c.py in Exercise 2.3, but with a viz function that can take a list of C values as argument and create a movie with solutions corresponding to the given C values. The plot_u function must be changed to store the solution in an array (see Exercise 2.2 or 2.3 for details), solver must be called for each value of the Courant number, and finally one must run through each time step and plot all the spatial solution curves in one figure and store it in a file.

The challenge in such a visualization is to ensure that the curves in one plot correspond to the same time point. The easiest remedy is to keep the time resolution constant and change the space resolution to change the Courant number. Note that each spatial grid is needed for the final plotting, so it is an option to store those grids too.

Filename: wave_numerics_comparison.

Exercise 2.5 (Implementing the solver function as a generator)

The callback function user_action(u, x, t, n) is called from the solver function (in, e.g., wave1D_u0.py) at every time level and lets the user perform desired actions with the solution, like plotting it on the screen. We have implemented the callback function in the typical way it would have been done in C and Fortran. Specifically, the code looks like

Many Python programmers, however, may claim that solver is an iterative process, and that iterative processes with callbacks to the user code are more elegantly implemented as generators. The rest of the text has little meaning unless you are familiar with Python generators and the yield statement.

Instead of calling user_action, the solver function issues a yield statement, which is a kind of return statement:

The program control is directed back to the calling code:

When the block is done, solver continues with the statement after yield. Note that the functionality of terminating the solution process if user_action returns a True value is not possible to implement in the generator case.

Implement the solver function as a generator, and plot the solution at each time step.

Filename: wave1D_u0_generator.

Project 2.6 (Calculus with 1D mesh functions)

This project explores integration and differentiation of mesh functions, both with scalar and vectorized implementations. We are given a mesh function \(f_{i}\) on a spatial one-dimensional mesh \(x_{i}=a+i\Delta x\), \(i=0,\ldots,N_{x}\), over the interval \([a,b]\).

a) Define the discrete derivative of \(f_{i}\) by using centered differences at internal mesh points and one-sided differences at the end points. Implement a scalar version of the computation in a Python function and write an associated unit test for the linear case \(f(x)=4x-2.5\) where the discrete derivative should be exact.

b) Vectorize the implementation of the discrete derivative. Extend the unit test to check the validity of the implementation.

c) To compute the discrete integral \(F_{i}\) of \(f_{i}\), we assume that the mesh function \(f_{i}\) varies linearly between the mesh points. Let f(x) be such a linear interpolant of \(f_{i}\). We then have

$$F_{i}=\int_{x_{0}}^{x_{i}}f(x)dx\thinspace.$$

The exact integral of a piecewise linear function f(x) is given by the Trapezoidal rule. Show that if \(F_{i}\) is already computed, we can find \(F_{i+1}\) from

$$F_{i+1}=F_{i}+\frac{1}{2}(f_{i}+f_{i+1})\Delta x\thinspace.$$

Make a function for the scalar implementation of the discrete integral as a mesh function. That is, the function should return \(F_{i}\) for \(i=0,\ldots,N_{x}\). For a unit test one can use the fact that the above defined discrete integral of a linear function (say \(f(x)=4x-2.5\)) is exact.

d) Vectorize the implementation of the discrete integral. Extend the unit test to check the validity of the implementation.

Hint

Interpret the recursive formula for \(F_{i+1}\) as a sum. Make an array with each element of the sum and use the cumsum (numpy.cumsum) operation to compute the cumulative sum: numpy.cumsum([1,3,5]) is [1,4,9].

e)

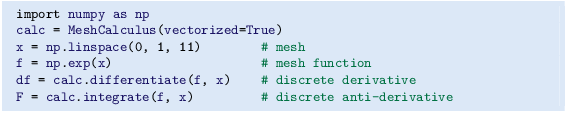

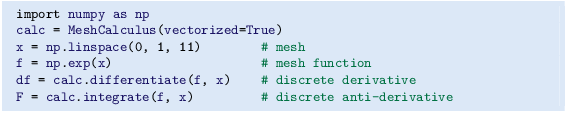

Create a class MeshCalculus that can integrate and differentiate mesh functions. The class can just define some methods that call the previously implemented Python functions. Here is an example on the usage:

Filename: mesh_calculus_1D.

2.6 Generalization: Reflecting Boundaries

The boundary condition u = 0 in a wave equation reflects the wave, but u changes sign at the boundary, while the condition \(u_{x}=0\) reflects the wave as a mirror and preserves the sign, see a web page (footnote 5) or a movie file (footnote 6) for demonstration.

Our next task is to explain how to implement the boundary condition \(u_{x}=0\), which is more complicated to express numerically and also to implement than a given value of u. We shall present two methods for implementing \(u_{x}=0\) in a finite difference scheme, one based on deriving a modified stencil at the boundary, and another one based on extending the mesh with ghost cells and ghost points.

2.6.1 Neumann Boundary Condition

When a wave hits a boundary and is to be reflected back, one applies the condition

The derivative \(\partial/\partial n\) is in the outward normal direction from a general boundary. For a 1D domain \([0,L]\), we have that

Boundary condition terminology

Boundary conditions that specify the value of \(\partial u/\partial n\) (or shorter \(u_{n}\)) are known as Neumann (footnote 7) conditions, while Dirichlet conditions (footnote 8) refer to specifications of u. When the values are zero (\(\partial u/\partial n=0\) or u = 0) we speak about homogeneous Neumann or Dirichlet conditions.

2.6.2 Discretization of Derivatives at the Boundary

How can we incorporate the condition (2.35) in the finite difference scheme? Since we have used central differences in all the other approximations to derivatives in the scheme, it is tempting to implement (2.35) at x = 0 and \(t=t_{n}\) by the difference

The problem is that \(u_{-1}^{n}\) is not a u value that is being computed since the point is outside the mesh. However, if we combine (2.36) with the scheme

for i = 0, we can eliminate the fictitious value \(u_{-1}^{n}\). We see that \(u_{-1}^{n}=u_{1}^{n}\) from (2.36), which can be used in (2.37) to arrive at a modified scheme for the boundary point \(u_{0}^{n+1}\):

Figure 2.4 visualizes this equation for computing \(u^{3}_{0}\) in terms of \(u^{2}_{0}\), \(u^{1}_{0}\), and \(u^{2}_{1}\).

Similarly, (2.35) applied at x = L is discretized by a central difference

Combined with the scheme for \(i=N_{x}\) we get a modified scheme for the boundary value \(u_{N_{x}}^{n+1}\):

The modification of the scheme at the boundary is also required for the special formula for the first time step. How the stencil moves through the mesh and is modified at the boundary can be illustrated by an animation in a web page (footnote 9) or a movie file (footnote 10).

2.6.3 Implementation of Neumann Conditions

We have seen in the preceding section that the special formulas for the boundary points arise from replacing \(u_{i-1}^{n}\) by \(u_{i+1}^{n}\) when computing \(u_{i}^{n+1}\) from the stencil formula for i = 0. Similarly, we replace \(u_{i+1}^{n}\) by \(u_{i-1}^{n}\) in the stencil formula for \(i=N_{x}\). This observation can conveniently be used in the coding: we just work with the general stencil formula, but write the code such that it is easy to replace u[i-1] by u[i+1] and vice versa. This is achieved by having the indices i+1 and i-1 as variables ip1 (i plus 1) and im1 (i minus 1), respectively. At the boundary we can easily define im1=i+1 while we use im1=i-1 in the internal parts of the mesh. Here are the details of the implementation (note that the updating formula for u[i] is the general stencil formula):

We can in fact create one loop over both the internal and boundary points and use only one updating formula:

The program wave1D_n0.py contains a complete implementation of the 1D wave equation with boundary conditions \(u_{x}=0\) at x = 0 and x = L.
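The idea of the single loop with the index variables ip1 and im1 can be sketched as follows. The function name advance_neumann, the choice f = 0, and the test data are assumptions for this illustration; the code is not copied from wave1D_n0.py.

```python
import numpy as np

def advance_neumann(u, u_n, u_nm1, C2):
    """One time step with u_x=0 at x=0 and x=L (f=0), using a single loop."""
    Nx = u.size - 1
    for i in range(0, Nx + 1):
        # Mirror the neighbor index at the boundaries
        ip1 = i + 1 if i < Nx else i - 1
        im1 = i - 1 if i > 0 else i + 1
        # General stencil formula, unchanged at the boundary
        u[i] = -u_nm1[i] + 2*u_n[i] + \
               C2*(u_n[im1] - 2*u_n[i] + u_n[ip1])
    return u

# Arbitrary data for illustration
x = np.linspace(0, 1, 9)
u_n = np.exp(-20*(x - 0.2)**2)
u_nm1 = u_n.copy()
C2 = 0.64
u = advance_neumann(np.zeros_like(x), u_n, u_nm1, C2)

# A constant solution should be preserved exactly by this scheme
const = advance_neumann(np.zeros(6), np.full(6, 3.0), np.full(6, 3.0), C2)
print(u[0], u[-1], const)
```

At i = 0 the stencil reduces to the modified boundary formula with the term \(2C^{2}(u_{1}^{n}-u_{0}^{n})\), since both neighbor indices point to i = 1.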

It would be nice to modify the test_quadratic test case from the wave1D_u0.py with Dirichlet conditions, described in Sect. 2.4.3. However, the Neumann conditions require the polynomial variation in the x direction to be of third degree, which causes challenging problems when designing a test where the numerical solution is known exactly. Exercise 2.15 outlines ideas and code for this purpose. The only test in wave1D_n0.py is to start with a plug wave at rest and see that the initial condition is reached again perfectly after one period of motion, but such a test requires C = 1 (so the numerical solution coincides with the exact solution of the PDE, see Sect. 2.10.4).

2.6.4 Index Set Notation

To improve our mathematical writing and our implementations, it is wise to introduce a special notation for index sets. This means that we write \(x_{i}\), followed by \(i\in\mathcal{I}_{x}\), instead of \(i=0,\ldots,N_{x}\). Obviously, \(\mathcal{I}_{x}\) must be the index set \(\mathcal{I}_{x}=\{0,\ldots,N_{x}\}\), but it is often advantageous to have a symbol for this set rather than specifying all its elements (all the time, as we have done up to now). This new notation saves writing and makes specifications of algorithms and their implementation as computer code simpler.

The first index in the set will be denoted \(\mathcal{I}_{x}^{0}\) and the last \(\mathcal{I}_{x}^{-1}\). When we need to skip the first element of the set, we use \(\mathcal{I}_{x}^{+}\) for the remaining subset \(\mathcal{I}_{x}^{+}=\{1,\ldots,N_{x}\}\). Similarly, if the last element is to be dropped, we write \(\mathcal{I}_{x}^{-}=\{0,\ldots,N_{x}-1\}\) for the remaining indices. All the indices corresponding to inner grid points are specified by \(\mathcal{I}_{x}^{i}=\{1,\ldots,N_{x}-1\}\). For the time domain we find it natural to explicitly use 0 as the first index, so we will usually write n = 0 and \(t_{0}\) rather than \(n=\mathcal{I}_{t}^{0}\). We also avoid notation like \(x_{\mathcal{I}_{x}^{-1}}\) and will instead use \(x_{i}\), \(i=\mathcal{I}_{x}^{-1}\).

The Python code associated with index sets applies the following conventions:

| Notation | Python |
|---|---|
| \(\mathcal{I}_{x}\) | Ix |
| \(\mathcal{I}_{x}^{0}\) | Ix[0] |
| \(\mathcal{I}_{x}^{-1}\) | Ix[-1] |
| \(\mathcal{I}_{x}^{-}\) | Ix[:-1] |
| \(\mathcal{I}_{x}^{+}\) | Ix[1:] |
| \(\mathcal{I}_{x}^{i}\) | Ix[1:-1] |

Why index sets are useful

An important feature of the index set notation is that it keeps our formulas and code independent of how we count mesh points. For example, the notation \(i\in\mathcal{I}_{x}\) or \(i=\mathcal{I}_{x}^{0}\) remains the same whether \(\mathcal{I}_{x}\) is defined as above or as starting at 1, i.e., \(\mathcal{I}_{x}=\{1,\ldots,Q\}\). Similarly, we can in the code define Ix=range(Nx+1) or Ix=range(1,Q+1), and expressions like Ix[0] and Ix[1:-1] remain correct. One application where the index set notation is convenient is conversion of code from a language where arrays have base index 0 (e.g., Python and C) to languages where the base index is 1 (e.g., MATLAB and Fortran). Another important application is implementation of Neumann conditions via ghost points (see next section).

For the current problem setting in the x,t plane, we work with the index sets

defined in Python as

A finite difference scheme can with the index set notation be specified as

The corresponding implementation becomes
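A minimal sketch of how index sets can drive the loops is shown below. It assumes Dirichlet conditions u = 0, f = 0, and arbitrary data for u_n and u_nm1; the variable names follow the conventions in the table above, but the code is an illustration and not taken from wave1D_dn.py.

```python
import numpy as np

Nx = 10
C2 = 0.81                      # squared Courant number (C = 0.9)
Ix = range(0, Nx + 1)          # all spatial mesh point indices

x = np.linspace(0, 1, Nx + 1)
u_n = np.sin(np.pi*x)          # arbitrary data for illustration
u_nm1 = u_n.copy()
u = np.zeros(Nx + 1)

# Scheme at the inner points
for i in Ix[1:-1]:
    u[i] = -u_nm1[i] + 2*u_n[i] + \
           C2*(u_n[i-1] - 2*u_n[i] + u_n[i+1])

# Dirichlet conditions at the boundary points
for i in (Ix[0], Ix[-1]):
    u[i] = 0

# The same inner update with slices built from the index set
u_vec = np.zeros(Nx + 1)
u_vec[Ix[1]:Ix[-1]] = (-u_nm1[Ix[1]:Ix[-1]] + 2*u_n[Ix[1]:Ix[-1]] +
                       C2*(u_n[Ix[0]:Ix[-2]] - 2*u_n[Ix[1]:Ix[-1]] +
                           u_n[Ix[2]:Ix[-1]+1]))

print(np.abs(u - u_vec).max())
```

No loop limit or slice limit refers to 0 or Nx directly, so the code keeps working if Ix is redefined with another base index, provided the arrays are sized accordingly.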

Notice

The program wave1D_dn.py applies the index set notation and solves the 1D wave equation \(u_{tt}=c^{2}u_{xx}+f(x,t)\) with quite general boundary and initial conditions:

- x = 0: \(u=U_{0}(t)\) or \(u_{x}=0\)
- x = L: \(u=U_{L}(t)\) or \(u_{x}=0\)
- t = 0: \(u=I(x)\)
- t = 0: \(u_{t}=V(x)\)

The program combines Dirichlet and Neumann conditions, scalar and vectorized implementation of schemes, and the index set notation into one piece of code. A lot of test examples are also included in the program:

- A rectangular plug-shaped initial condition. (For C = 1 the solution will be a rectangle that jumps one cell per time step, making the case well suited for verification.)
- A Gaussian function as initial condition.
- A triangular profile as initial condition, which resembles the typical initial shape of a guitar string.
- A sinusoidal variation of u at x = 0 and either u = 0 or \(u_{x}=0\) at x = L.
- An analytical solution \(u(x,t)=\cos(m\pi t/L)\sin({\frac{1}{2}}m\pi x/L)\), which can be used for convergence rate tests.

2.6.5 Verifying the Implementation of Neumann Conditions

How can we test that the Neumann conditions are correctly implemented? The solver function in the wave1D_dn.py program described in the box above accepts Dirichlet or Neumann conditions at x = 0 and x = L. It is tempting to apply a quadratic solution as described in Sect. 2.2.1 and 2.3.3, but it turns out that this solution is no longer an exact solution of the discrete equations if a Neumann condition is implemented on the boundary. A linear solution does not help since we only have homogeneous Neumann conditions in wave1D_dn.py, and we are consequently left with testing just a constant solution: \(u=\hbox{const}\).

The quadratic solution is very useful for testing, but it requires Dirichlet conditions at both ends.

Another test may utilize the fact that the approximation error vanishes when the Courant number is unity. We can, for example, start with a plug profile as initial condition, let this wave split into two plug waves, one in each direction, and check that the two plug waves come back and form the initial condition again after "one period" of the solution process. Neumann conditions can be applied at both ends. A proper test function reads

Other tests must rely on an unknown approximation error, so effectively we are left with tests on the convergence rate.

2.6.6 Alternative Implementation via Ghost Cells

Idea

Instead of modifying the scheme at the boundary, we can introduce extra points outside the domain such that the fictitious values \(u_{-1}^{n}\) and \(u_{N_{x}+1}^{n}\) are defined in the mesh. Adding the intervals \([-\Delta x,0]\) and \([L,L+\Delta x]\), known as ghost cells, to the mesh gives us all the needed mesh points, corresponding to \(i=-1,0,\ldots,N_{x},N_{x}+1\). The extra points with i = −1 and \(i=N_{x}+1\) are known as ghost points, and values at these points, \(u_{-1}^{n}\) and \(u_{N_{x}+1}^{n}\), are called ghost values.

The important idea is to ensure that we always have

because then the application of the standard scheme at a boundary point i = 0 or \(i=N_{x}\) will be correct and guarantee that the solution is compatible with the boundary condition \(u_{x}=0\).

Some readers may find it strange to just extend the domain with ghost cells as a general technique, because in some problems there is a completely different medium with different physics and equations right outside of a boundary. Nevertheless, one should view the ghost cell technique as a purely mathematical technique, which is valid in the limit \(\Delta x\rightarrow 0\) and helps us to implement derivatives.

Implementation

The u array now needs extra elements corresponding to the ghost points. Two new point values are needed:

The arrays u_n and u_nm1 must be defined accordingly.
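A minimal sketch of the allocation, assuming NumPy arrays and an illustrative value of Nx, could look as follows.

```python
import numpy as np

Nx = 10                       # number of cells (value assumed for illustration)
u     = np.zeros(Nx + 3)      # Nx+1 physical points plus two ghost points
u_n   = np.zeros(Nx + 3)      # solution at the previous time level
u_nm1 = np.zeros(Nx + 3)      # solution two time levels back
```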

Unfortunately, a major indexing problem arises with ghost cells. The reason is that Python indices must start at 0 and u[-1] will always mean the last element in u. This fact gives, apparently, a mismatch between the mathematical indices \(i=-1,0,\ldots,N_{x}+1\) and the Python indices running over u: 0,..,Nx+2. One remedy is to change the mathematical indexing of i in the scheme and write

\[ i=1,\ldots,N_{x}+1, \]

instead of \(i=0,\ldots,N_{x}\) as we have previously used. The ghost points now correspond to i = 0 and \(i=N_{x}+2\). A better solution is to use the ideas of Sect. 2.6.4: we hide the specific index value in an index set and operate with inner and boundary points using the index set notation.

To this end, we define u with proper length and Ix to be the corresponding indices for the real physical mesh points (\(1,2,\ldots,N_{x}+1\)):
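One possible way to set this up, assuming an illustrative value of Nx, is sketched here.

```python
import numpy as np

Nx = 10                              # value assumed for illustration
u = np.zeros(Nx + 3)                 # physical points plus two ghost points
Ix = range(1, u.shape[0] - 1)        # indices 1, ..., Nx+1 of the physical points
```

The first and last entries of u, with indices 0 and Nx+2, are then the ghost values and are not part of Ix.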

That is, the boundary points have indices Ix[0] and Ix[-1] (as before). We first update the solution at all physical mesh points (i.e., interior points in the mesh):

The indexing becomes a bit more complicated when we call functions like V(x) and f(x, t), as we must remember that the appropriate x coordinate is given as x[i-Ix[0]]:

It remains to update the solution at ghost points, i.e., u[0] and u[-1] (or u[Nx+2]). For a boundary condition \(u_{x}=0\), the ghost value must equal the value at the associated inner mesh point. Computer code makes this statement precise:
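The following sketch collects the two steps in one function for a single time level. The function name advance_with_ghosts and its argument list are assumptions made for this illustration, and x is taken to hold the physical mesh points only.

```python
import numpy as np

def advance_with_ghosts(u_n, u_nm1, x, tn, f, C2, dt):
    """Compute one new time level when u_n and u_nm1 carry one ghost
    point at each end and u_x = 0 holds at both boundaries."""
    u = np.zeros_like(u_n)
    Ix = range(1, u.shape[0] - 1)        # physical mesh points
    # Standard scheme at all physical points, boundary points included
    for i in Ix:
        u[i] = (-u_nm1[i] + 2*u_n[i]
                + C2*(u_n[i-1] - 2*u_n[i] + u_n[i+1])
                + dt**2*f(x[i - Ix[0]], tn))
    # Ghost values equal the values at the associated inner mesh points
    i = Ix[0]                            # x = 0 boundary
    u[i-1] = u[i+1]
    i = Ix[-1]                           # x = L boundary
    u[i+1] = u[i-1]
    return u
```

A constant solution is a simple check: with u_n = u_nm1 = const and f = 0, the function should return the same constant at all points, ghost points included.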

The physical solution to be plotted is now in u[1:-1], or equivalently u[Ix[0]: Ix[-1]+1], so this slice is the quantity to be returned from a solver function. A complete implementation appears in the program wave1D_n0_ghost.py .

Warning

We have to be careful with how the spatial and temporal mesh points are stored. Say we let x be the physical mesh points,

“Standard coding” of the initial condition,

becomes wrong, since u_n and x have different lengths and the index i corresponds to two different mesh points. In fact, x[i] corresponds to u[1+i]. A correct implementation is

Similarly, a source term usually coded as f(x[i], t[n]) is incorrect if x is defined to be the physical points, so x[i] must be replaced by x[i-Ix[0]].

An alternative remedy is to let x also cover the ghost points such that u[i] is the value at x[i].
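Both remedies can be sketched as follows. The initial shape I and the mesh parameters are chosen only for illustration, and x_g is a hypothetical name for a coordinate array that includes the ghost points.

```python
import numpy as np

L, Nx = 1.0, 4                          # values assumed for illustration
I = lambda x: x*(L - x)                 # assumed initial shape
x = np.linspace(0, L, Nx + 1)           # physical mesh points only
dx = x[1] - x[0]
u_n = np.zeros(Nx + 3)                  # includes two ghost points
Ix = range(1, u_n.shape[0] - 1)

# Remedy 1: shift the index into x, since x[i - Ix[0]] belongs to u_n[i]
for i in Ix:
    u_n[i] = I(x[i - Ix[0]])

# Remedy 2: let the coordinate array cover the ghost points too,
# so that u_alt[i] is the value at x_g[i]
x_g = np.linspace(-dx, L + dx, Nx + 3)
u_alt = np.zeros(Nx + 3)
for i in Ix:
    u_alt[i] = I(x_g[i])
```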

The ghost cell is only added to the boundary where we have a Neumann condition. Suppose we have a Dirichlet condition at x = L and a homogeneous Neumann condition at x = 0. One ghost cell \([-\Delta x,0]\) is added to the mesh, so the index set for the physical points becomes \(\{1,\ldots,N_{x}+1\}\). A relevant implementation is

The physical solution to be plotted is now in u[1:] or (as always) u[Ix[0]: Ix[-1]+1].
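A sketch of one time step for this mixed case is given below, without a source term for brevity. The function name advance_one_ghost and the argument U_L for the Dirichlet value at x = L are assumptions made for the illustration.

```python
import numpy as np

def advance_one_ghost(u_n, u_nm1, C2, U_L):
    """One time step with a ghost point at x = 0 only (u_x = 0 there)
    and the Dirichlet value U_L at x = L. Arrays have length Nx+2."""
    u = np.zeros_like(u_n)
    Ix = range(1, u.shape[0])            # physical points 1, ..., Nx+1
    for i in Ix[:-1]:                    # all physical points except x = L
        u[i] = (-u_nm1[i] + 2*u_n[i]
                + C2*(u_n[i-1] - 2*u_n[i] + u_n[i+1]))
    i = Ix[-1]
    u[i] = U_L                           # set Dirichlet value at x = L
    i = Ix[0]
    u[i-1] = u[i+1]                      # update ghost value at x = 0
    return u
```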

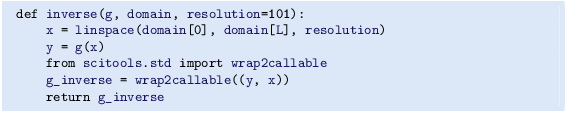

2.7 Generalization: Variable Wave Velocity

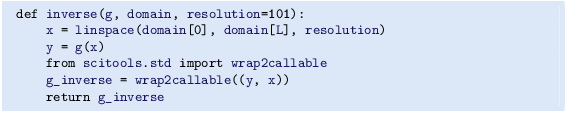

Our next generalization of the 1D wave equation (2.1) or (2.17) is to allow for a variable wave velocity c: \(c=c(x)\), usually motivated by wave motion in a domain composed of different physical media. When the media differ in physical properties like density or porosity, the wave velocity c is affected and will depend on the position in space. Figure 2.5 shows a wave propagating in one medium \([0,0.7]\cup[0.9,1]\) with wave velocity \(c_{1}\) (left) before it enters a second medium \((0.7,0.9)\) with wave velocity \(c_{2}\) (right). When the wave meets the boundary where c jumps from \(c_{1}\) to \(c_{2}\), a part of the wave is reflected back into the first medium (the reflected wave), while one part is transmitted through the second medium (the transmitted wave).

2.7.1 The Model PDE with a Variable Coefficient

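Instead of working with the squared quantity \(c^{2}(x)\), we shall for notational convenience introduce \(q(x)=c^{2}(x)\). A 1D wave equation with variable wave velocity often takes the form

\[ \frac{\partial^{2}u}{\partial t^{2}}=\frac{\partial}{\partial x}\left(q(x)\frac{\partial u}{\partial x}\right)+f(x,t). \tag{2.42} \]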

This is the most frequent form of a wave equation with variable wave velocity, but other forms also appear, see Sect. 2.14.1 and equation (2.125).

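As usual, we sample (2.42) at a mesh point,

\[ \frac{\partial^{2}}{\partial t^{2}}u(x_{i},t_{n})=\frac{\partial}{\partial x}\left(q(x_{i})\frac{\partial}{\partial x}u(x_{i},t_{n})\right)+f(x_{i},t_{n}), \]

where the only new term to discretize is

\[ \frac{\partial}{\partial x}\left(q(x_{i})\frac{\partial}{\partial x}u(x_{i},t_{n})\right)=\left[\frac{\partial}{\partial x}\left(q(x)\frac{\partial u}{\partial x}\right)\right]^{n}_{i}. \]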

2.7.2 Discretizing the Variable Coefficient

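The principal idea is to first discretize the outer derivative. Define

\[ \phi=q(x)\frac{\partial u}{\partial x}, \]

and use a centered derivative around \(x=x_{i}\) for the derivative of ϕ:

\[ \left[\frac{\partial\phi}{\partial x}\right]^{n}_{i}\approx\frac{\phi_{i+\frac{1}{2}}-\phi_{i-\frac{1}{2}}}{\Delta x}=[D_{x}\phi]^{n}_{i}. \]

Then discretize

\[ \phi_{i+\frac{1}{2}}=q_{i+\frac{1}{2}}\left[\frac{\partial u}{\partial x}\right]^{n}_{i+\frac{1}{2}}\approx q_{i+\frac{1}{2}}\frac{u^{n}_{i+1}-u^{n}_{i}}{\Delta x}=[qD_{x}u]^{n}_{i+\frac{1}{2}}. \]

Similarly,

\[ \phi_{i-\frac{1}{2}}=q_{i-\frac{1}{2}}\left[\frac{\partial u}{\partial x}\right]^{n}_{i-\frac{1}{2}}\approx q_{i-\frac{1}{2}}\frac{u^{n}_{i}-u^{n}_{i-1}}{\Delta x}=[qD_{x}u]^{n}_{i-\frac{1}{2}}. \]

These intermediate results are now combined to

\[ \left[\frac{\partial}{\partial x}\left(q(x)\frac{\partial u}{\partial x}\right)\right]^{n}_{i}\approx\frac{1}{\Delta x^{2}}\left(q_{i+\frac{1}{2}}\left(u^{n}_{i+1}-u^{n}_{i}\right)-q_{i-\frac{1}{2}}\left(u^{n}_{i}-u^{n}_{i-1}\right)\right). \tag{2.43} \]

With operator notation we can write the discretization as

\[ \left[\frac{\partial}{\partial x}\left(q(x)\frac{\partial u}{\partial x}\right)\right]^{n}_{i}\approx[D_{x}(qD_{x}u)]^{n}_{i}. \]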

Do not use the chain rule on the spatial derivative term!

Many are tempted to use the chain rule on the term \(\frac{\partial}{\partial x}\left(q(x)\frac{\partial u}{\partial x}\right)\), but this is not a good idea when discretizing such a term.

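The term with a variable coefficient expresses the net flux \(qu_{x}\) into a small volume (i.e., interval in 1D):

\[ \frac{\partial}{\partial x}\left(q(x)\frac{\partial u}{\partial x}\right)\approx\frac{1}{\Delta x}\left(q(x+{\textstyle\frac{1}{2}}\Delta x)u_{x}(x+{\textstyle\frac{1}{2}}\Delta x)-q(x-{\textstyle\frac{1}{2}}\Delta x)u_{x}(x-{\textstyle\frac{1}{2}}\Delta x)\right). \]

Our discretization reflects this principle directly: \(qu_{x}\) at the right end of the cell minus \(qu_{x}\) at the left end, because this follows from the formula (2.43) or \([D_{x}(qD_{x}u)]^{n}_{i}\).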

When using the chain rule, we get two terms \(qu_{xx}+q_{x}u_{x}\). The typical discretization is

\[ [qD_{x}D_{x}u+D_{2x}q\,D_{2x}u]^{n}_{i}. \tag{2.45} \]

Writing this out shows that it is different from \([D_{x}(qD_{x}u)]^{n}_{i}\) and lacks the physical interpretation of net flux into a cell. With a smooth and slowly varying q(x) the differences between the two discretizations are not substantial. However, when q exhibits (potentially large) jumps, \([D_{x}(qD_{x}u)]^{n}_{i}\) with harmonic averaging of q yields a better solution than arithmetic averaging or (2.45). In the literature, the discretization \([D_{x}(qD_{x}u)]^{n}_{i}\) totally dominates and very few mention the alternative in (2.45).

2.7.3 Computing the Coefficient Between Mesh Points

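If q is a known function of x, we can easily evaluate \(q_{i+\frac{1}{2}}\) simply as \(q(x_{i+\frac{1}{2}})\) with \(x_{i+\frac{1}{2}}=x_{i}+\frac{1}{2}\Delta x\). However, in many cases c, and hence q, is only known as a discrete function, often at the mesh points \(x_{i}\). Evaluating q between two mesh points \(x_{i}\) and \(x_{i+1}\) must then be done by interpolation techniques, of which three are of particular interest in this context:

\[ q_{i+\frac{1}{2}}\approx\frac{1}{2}\left(q_{i}+q_{i+1}\right)=[\overline{q}^{x}]_{i+\frac{1}{2}}\quad\hbox{(arithmetic mean)}, \tag{2.46} \]

\[ q_{i+\frac{1}{2}}\approx 2\left(\frac{1}{q_{i}}+\frac{1}{q_{i+1}}\right)^{-1}\quad\hbox{(harmonic mean)}, \]

\[ q_{i+\frac{1}{2}}\approx\left(q_{i}q_{i+1}\right)^{1/2}\quad\hbox{(geometric mean)}. \]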

The arithmetic mean in (2.46) is by far the most commonly used averaging technique and is well suited for smooth q(x) functions. The harmonic mean is often preferred when q(x) exhibits large jumps (which is typical for geological media). The geometric mean is less used, but popular in discretizations to linearize quadratic nonlinearities (see Sect. 1.10.2 for an example).
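As an illustration, the three means can be computed from mesh-point values with a few array operations. The function name q_half and its mean argument are hypothetical and introduced only for this sketch, which assumes that q is a NumPy array of positive values.

```python
import numpy as np

def q_half(q, mean='arithmetic'):
    """Return approximations to q_{i+1/2}, i = 0, ..., len(q)-2,
    computed from the mesh-point values q_i (assumed positive)."""
    ql, qr = q[:-1], q[1:]               # q_i and q_{i+1}
    if mean == 'arithmetic':
        return 0.5*(ql + qr)
    elif mean == 'harmonic':
        return 2.0/(1.0/ql + 1.0/qr)
    elif mean == 'geometric':
        return np.sqrt(ql*qr)
    raise ValueError('unknown mean: %s' % mean)

q = np.array([1.0, 1.0, 4.0])            # a jump between the last two points
print(q_half(q, 'arithmetic'))           # values 1 and 2.5
print(q_half(q, 'harmonic'))             # values 1 and 1.6
print(q_half(q, 'geometric'))            # values 1 and 2
```

Across the jump the harmonic mean stays closest to the smaller of the two q values, which is the property that makes it attractive for media with large contrasts.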

With the operator notation from (2.46) we can specify the discretization of the complete variable-coefficient wave equation in a compact way:

\[ [D_{t}D_{t}u=D_{x}\overline{q}^{x}D_{x}u+f]^{n}_{i}. \tag{2.49} \]

Strictly speaking, \([D_{x}\overline{q}^{x}D_{x}u]^{n}_{i}=[D_{x}(\overline{q}^{x}D_{x}u)]^{n}_{i}\).

From the compact difference notation we immediately see what kind of difference approximates each term. The notation \(\overline{q}^{x}\) also specifies that the variable coefficient is approximated by an arithmetic mean, the definition being \([\overline{q}^{x}]_{i+\frac{1}{2}}=(q_{i}+q_{i+1})/2\).

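Before implementing, it remains to solve (2.49) with respect to \(u_{i}^{n+1}\):

\[ u^{n+1}_{i}=-u_{i}^{n-1}+2u_{i}^{n}+\left(\frac{\Delta t}{\Delta x}\right)^{2}\left(\frac{1}{2}(q_{i}+q_{i+1})(u_{i+1}^{n}-u_{i}^{n})-\frac{1}{2}(q_{i}+q_{i-1})(u_{i}^{n}-u_{i-1}^{n})\right)+\Delta t^{2}f^{n}_{i}. \tag{2.50} \]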

2.7.4 How a Variable Coefficient Affects the Stability

The stability criterion derived later (Sect. 2.10.3) reads \(\Delta t\leq\Delta x/c\). If \(c=c(x)\), the criterion will depend on the spatial location. We must therefore choose a \(\Delta t\) that is small enough such that no mesh cell has \(\Delta t> \Delta x/c(x)\). That is, we must use the largest c value in the criterion:

\[ \Delta t\leq\beta\frac{\Delta x}{\max_{x\in[0,L]}c(x)}. \]

The parameter β is included as a safety factor: in some problems with a significantly varying c it turns out that one must choose β < 1 to have stable solutions (β = 0.9 may act as an all-round value).

A different strategy to handle the stability criterion with variable wave velocity is to use a spatially varying \(\Delta t\). While the idea is mathematically attractive at first sight, the implementation quickly becomes very complicated, so we stick to a constant \(\Delta t\) and a worst case value of c(x) (with a safety factor β).
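In code, the constant time step can be computed from the largest wave velocity on the mesh. The sketch assumes that c is available as an array of values at the mesh points; the function name stable_dt is hypothetical.

```python
import numpy as np

def stable_dt(c, dx, beta=0.9):
    """Return a constant time step based on the worst-case (largest)
    wave velocity in the array c and a safety factor beta <= 1."""
    return beta*dx/np.max(c)

c = np.array([1.0, 1.0, 2.0, 2.0, 1.0])  # assumed piecewise constant velocity
dt = stable_dt(c, dx=0.1)                # 0.9*0.1/2
```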

2.7.5 Neumann Condition and a Variable Coefficient

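Consider a Neumann condition \(\partial u/\partial x=0\) at \(x=L=N_{x}\Delta x\), discretized as

\[ [D_{2x}u]^{n}_{i}=\frac{u_{i+1}^{n}-u_{i-1}^{n}}{2\Delta x}=0\quad\Rightarrow\quad u_{i+1}^{n}=u_{i-1}^{n}, \]

for \(i=N_{x}\). Using the scheme (2.50) at the end point \(i=N_{x}\) with \(u_{i+1}^{n}=u_{i-1}^{n}\) results in

\begin{align*}
u^{n+1}_{i} &=-u_{i}^{n-1}+2u_{i}^{n}+\left(\frac{\Delta t}{\Delta x}\right)^{2}\left(q_{i+\frac{1}{2}}(u_{i-1}^{n}-u_{i}^{n})-q_{i-\frac{1}{2}}(u_{i}^{n}-u_{i-1}^{n})\right)+\Delta t^{2}f^{n}_{i} \tag{2.53}\\
&=-u_{i}^{n-1}+2u_{i}^{n}+\left(\frac{\Delta t}{\Delta x}\right)^{2}\left(q_{i+\frac{1}{2}}+q_{i-\frac{1}{2}}\right)(u_{i-1}^{n}-u_{i}^{n})+\Delta t^{2}f^{n}_{i}\\
&\approx-u_{i}^{n-1}+2u_{i}^{n}+\left(\frac{\Delta t}{\Delta x}\right)^{2}2q_{i}(u_{i-1}^{n}-u_{i}^{n})+\Delta t^{2}f^{n}_{i}.
\end{align*}

Here we used the approximation

\begin{align*}
q_{i+\frac{1}{2}}+q_{i-\frac{1}{2}} &=q_{i}+\left(\frac{dq}{dx}\right)_{i}\frac{\Delta x}{2}+\left(\frac{d^{2}q}{dx^{2}}\right)_{i}\frac{\Delta x^{2}}{8}+\cdots+q_{i}-\left(\frac{dq}{dx}\right)_{i}\frac{\Delta x}{2}+\left(\frac{d^{2}q}{dx^{2}}\right)_{i}\frac{\Delta x^{2}}{8}+\cdots\\
&=2q_{i}+\left(\frac{d^{2}q}{dx^{2}}\right)_{i}\frac{\Delta x^{2}}{4}+{\cal O}(\Delta x^{4})\approx 2q_{i}.
\end{align*}

An alternative derivation may apply the arithmetic mean of \(q_{i-\frac{1}{2}}\) and \(q_{i+\frac{1}{2}}\) in (2.53), leading to the term

\[ \left(q_{i}+\frac{1}{2}(q_{i+1}+q_{i-1})\right)(u_{i-1}^{n}-u_{i}^{n}). \]

Since \(\frac{1}{2}(q_{i+1}+q_{i-1})=q_{i}+{\cal O}(\Delta x^{2})\), we can approximate with \(2q_{i}(u_{i-1}^{n}-u_{i}^{n})\) for \(i=N_{x}\) and get the same term as we did above.

A common technique when implementing \(\partial u/\partial x=0\) boundary conditions is to assume \(dq/dx=0\) as well. This implies \(q_{i+1}=q_{i-1}\) and \(q_{i+1/2}=q_{i-1/2}\) for \(i=N_{x}\). The implications for the scheme are

\begin{align*}
u^{n+1}_{i} &=-u_{i}^{n-1}+2u_{i}^{n}+\left(\frac{\Delta t}{\Delta x}\right)^{2}\left(q_{i+\frac{1}{2}}(u_{i-1}^{n}-u_{i}^{n})-q_{i-\frac{1}{2}}(u_{i}^{n}-u_{i-1}^{n})\right)+\Delta t^{2}f^{n}_{i}\\
&=-u_{i}^{n-1}+2u_{i}^{n}+\left(\frac{\Delta t}{\Delta x}\right)^{2}2q_{i-\frac{1}{2}}(u_{i-1}^{n}-u_{i}^{n})+\Delta t^{2}f^{n}_{i}.
\end{align*}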

2.7.6 Implementation of Variable Coefficients

The implementation of the scheme with a variable wave velocity \(q(x)=c^{2}(x)\) may assume that q is available as an array q[i] at the spatial mesh points. The following loop is a straightforward implementation of the scheme (2.50):

The coefficient C2 is now defined as (dt/dx)**2, i.e., not as the squared Courant number, since the wave velocity is variable and appears inside the parenthesis.
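A sketch of such a loop over the interior points, with arithmetic means of q written out, is shown here. The function name advance_vc_scalar and the array f_n, holding the source term at the current time level, are assumptions made for this illustration.

```python
import numpy as np

def advance_vc_scalar(u_n, u_nm1, q, f_n, dt, dx):
    """One time step of the variable-coefficient scheme at the interior
    points, with q given as an array of mesh-point values."""
    Nx = len(u_n) - 1
    C2 = (dt/dx)**2                      # not the squared Courant number
    u = np.zeros_like(u_n)
    for i in range(1, Nx):
        u[i] = (-u_nm1[i] + 2*u_n[i]
                + C2*(0.5*(q[i] + q[i+1])*(u_n[i+1] - u_n[i])
                      - 0.5*(q[i] + q[i-1])*(u_n[i] - u_n[i-1]))
                + dt**2*f_n[i])
    return u                             # boundary values are left untouched
```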

With Neumann conditions \(u_{x}=0\) at the boundary, we need to combine this scheme with the discrete version of the boundary condition, as shown in Sect. 2.7.5. Nevertheless, it would be convenient to reuse the formula for the interior points and just modify the indices ip1=i+1 and im1=i-1 as we did in Sect. 2.6.3. Assuming dq ∕ dx = 0 at the boundaries, we can implement the scheme at the boundary with the following code.
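A minimal sketch of this idea for both ends of the domain follows. The function name update_neumann_ends and the array f_n with source-term values at the current time level are hypothetical; the index substitution itself is the one described in the text.

```python
import numpy as np

def update_neumann_ends(u, u_n, u_nm1, q, f_n, dt, dx):
    """Apply the interior formula at i = 0 and i = Nx, replacing the
    index outside the mesh by its mirror point (u_x = 0 and q_x = 0)."""
    C2 = (dt/dx)**2
    Nx = len(u_n) - 1
    # (i, ip1, im1): at x = 0 im1 is set to ip1, at x = L ip1 is set to im1
    for i, ip1, im1 in [(0, 1, 1), (Nx, Nx - 1, Nx - 1)]:
        u[i] = (-u_nm1[i] + 2*u_n[i]
                + C2*(0.5*(q[i] + q[ip1])*(u_n[ip1] - u_n[i])
                      - 0.5*(q[i] + q[im1])*(u_n[i] - u_n[im1]))
                + dt**2*f_n[i])
    return u
```

At i = 0 the two flux terms collapse to C2*(q[0] + q[1])*(u_n[1] - u_n[0]), which corresponds to the boundary formula with \(q_{i+1/2}=q_{i-1/2}\).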

With ghost cells we can just reuse the formula for the interior points also at the boundary, provided that the ghost values of both u and q are correctly updated to ensure \(u_{x}=0\) and \(q_{x}=0\).

A vectorized version of the scheme with a variable coefficient at internal mesh points becomes
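One way to write the vectorized update with array slices is sketched below, again with hypothetical names for the function and for the source-term array f_n.

```python
import numpy as np

def advance_vc_vectorized(u_n, u_nm1, q, f_n, dt, dx):
    """Vectorized update of the variable-coefficient scheme at all
    internal mesh points; boundary values are left untouched."""
    C2 = (dt/dx)**2
    u = np.zeros_like(u_n)
    u[1:-1] = (-u_nm1[1:-1] + 2*u_n[1:-1]
               + C2*(0.5*(q[1:-1] + q[2:])*(u_n[2:] - u_n[1:-1])
                     - 0.5*(q[1:-1] + q[:-2])*(u_n[1:-1] - u_n[:-2]))
               + dt**2*f_n[1:-1])
    return u
```

The slices 1:-1, 2:, and :-2 play the roles of the indices i, i+1, and i-1 in the scalar loop.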

2.7.7 A More General PDE Model with Variable Coefficients

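Sometimes a wave PDE has a variable coefficient in front of the time-derivative term:

\[ \varrho(x)\frac{\partial^{2}u}{\partial t^{2}}=\frac{\partial}{\partial x}\left(q(x)\frac{\partial u}{\partial x}\right)+f(x,t). \tag{2.58} \]

One example appears when modeling elastic waves in a rod with varying density, cf. Sect. 2.14.1 with \(\varrho(x)\).

A natural scheme for (2.58) is

\[ [\varrho D_{t}D_{t}u=D_{x}\overline{q}^{x}D_{x}u+f]^{n}_{i}. \]

We realize that the \(\varrho\) coefficient poses no particular difficulty, since \(\varrho\) enters the formula just as a simple factor in front of a derivative. There is hence no need for any averaging of \(\varrho\). Often, \(\varrho\) will be moved to the right-hand side, also without any difficulty:

\[ [D_{t}D_{t}u=\varrho^{-1}D_{x}\overline{q}^{x}D_{x}u+\varrho^{-1}f]^{n}_{i}. \]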

2.7.8 Generalization: Damping

Waves die out by two mechanisms. In 2D and 3D the energy of the wave spreads out in space, and energy conservation then requires the amplitude to decrease. This effect is not present in 1D. Damping is another cause of amplitude reduction. For example, the vibrations of a string die out because of damping due to air resistance and non-elastic effects in the string.

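The simplest way of including damping is to add a first-order derivative to the equation (in the same way as friction forces enter a vibrating mechanical system):

\[ \frac{\partial^{2}u}{\partial t^{2}}+b\frac{\partial u}{\partial t}=c^{2}\frac{\partial^{2}u}{\partial x^{2}}+f(x,t), \tag{2.61} \]

where b ≥ 0 is a prescribed damping coefficient.

A typical discretization of (2.61) in terms of centered differences reads

\[ [D_{t}D_{t}u+bD_{2t}u=c^{2}D_{x}D_{x}u+f]^{n}_{i}. \]

Writing out the equation and solving for the unknown \(u^{n+1}_{i}\) gives the scheme

\[ u^{n+1}_{i}=\left(1+\frac{1}{2}b\Delta t\right)^{-1}\left(\left(\frac{1}{2}b\Delta t-1\right)u^{n-1}_{i}+2u^{n}_{i}+C^{2}\left(u^{n}_{i+1}-2u^{n}_{i}+u^{n}_{i-1}\right)+\Delta t^{2}f^{n}_{i}\right), \]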

for \(i\in\mathcal{I}_{x}^{i}\) and n ≥ 1. New equations must be derived for \(u^{1}_{i}\), and for boundary points in case of Neumann conditions.
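The update at the interior points can be sketched in vectorized form as follows. The code assumes centered differences for both time derivatives, so that solving for the new value introduces the factor \((1+\frac{1}{2}b\Delta t)^{-1}\); the function name advance_damped and the array f_n are hypothetical.

```python
import numpy as np

def advance_damped(u_n, u_nm1, C2, b, dt, f_n):
    """One time step of the damped wave equation at the interior points.
    C2 is the squared Courant number and b >= 0 the damping coefficient."""
    B = 0.5*b*dt
    u = np.zeros_like(u_n)
    u[1:-1] = ((B - 1)*u_nm1[1:-1] + 2*u_n[1:-1]
               + C2*(u_n[2:] - 2*u_n[1:-1] + u_n[:-2])
               + dt**2*f_n[1:-1])/(1 + B)
    return u
```

With b = 0 the formula reduces to the standard undamped scheme, which is a convenient first check of an implementation.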

The damping is very small in many wave phenomena and thus only evident for very long time simulations. This makes the standard wave equation without damping relevant for a lot of applications.

2.8 Building a General 1D Wave Equation Solver

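The program wave1D_dn_vc.py is a fairly general code for 1D wave propagation problems that targets the following initial-boundary value problem

\begin{align*}
u_{tt} &=(c^{2}(x)u_{x})_{x}+f(x,t), & x\in(0,L),\ t\in(0,T],\\
u(x,0) &=I(x), & x\in[0,L],\\
u_{t}(x,0) &=V(x), & x\in[0,L],\\
u(0,t) &=U_{0}(t)\hbox{ or }u_{x}(0,t)=0, & t\in(0,T],\\
u(L,t) &=U_{L}(t)\hbox{ or }u_{x}(L,t)=0, & t\in(0,T].
\end{align*}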

The only new feature here is the time-dependent Dirichlet conditions, but they are trivial to implement:
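A sketch of how such conditions may be set at the new time level is given below. The function name set_dirichlet and the callables U_0 and U_L for the boundary values at x = 0 and x = L are assumptions made for this illustration; passing None leaves the corresponding boundary value untouched, for instance when a Neumann condition is handled elsewhere.

```python
import numpy as np

def set_dirichlet(u, t_np1, U_0=None, U_L=None):
    """Insert time-dependent Dirichlet values at the new time level
    t_np1. U_0 and U_L are functions of time, or None if no Dirichlet
    condition applies at that end."""
    Ix = range(0, u.shape[0])
    if U_0 is not None:
        u[Ix[0]] = U_0(t_np1)            # x = 0
    if U_L is not None:
        u[Ix[-1]] = U_L(t_np1)           # x = L
    return u

u = np.zeros(6)
u = set_dirichlet(u, 0.5, U_0=lambda t: np.sin(t))
```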

The solver function is a natural extension of the simplest solver function in the initial wave1D_u0.py program, extended with Neumann boundary conditions (\(u_{x}=0\)), time-varying Dirichlet conditions, as well as a variable wave velocity. The different code segments needed to make these extensions have been shown and commented upon in the preceding text. We refer to the solver function in the wave1D_dn_vc.py file for all the details. Note in that solver function, however, that the technique of “hashing” is used to check whether a certain simulation has been run before, or not. This technique is further explained in Sect. C.2.3.

The vectorization is only applied inside the time loop, not for the initial condition or the first time steps, since this initial work is negligible for long time simulations in 1D problems.

The following sections explain various more advanced programming techniques applied in the general 1D wave equation solver.

2.8.1 User Action Function as a Class