Abstract

European companies today are involved in many stages of the product life cycle. There is a trend towards viewing their business as a complex industrial product-service system (IPSS). This trend shifts the business focus from a traditional product-oriented one to a function-oriented one. With the function in focus, the seller shares responsibility with the buyer for, for example, maintenance of the product. As such, IPSS has been praised for supporting sustainable practices. This shift in focus also promotes product longevity and life-extending work on the products, such as adaptation and upgrades. Staying competitive requires continuous improvement of manufacturing and services to make them more flexible and adaptive to external changes. The adaptation itself needs to be performed efficiently without disrupting ongoing operations, and it needs to result in an acceptable after-state. Virtual planning models are a key technology to enable planning and design of future operations in parallel with ongoing operations. This chapter presents an approach that combines digitalization and virtual reality (VR) technologies to create the next generation of virtual planning environments. By incorporating digitalization techniques such as 3D imaging, the models reach a new level of fidelity and realism, which in turn makes them accessible to a broader group of users and stakeholders. Increased accessibility facilitates a collaborative decision-making process that invites and includes cross-functional teams. Through such involvement, a broader range of experts, with their skills and their operational and tacit knowledge, can be leveraged towards better planning of the upgrade process. This promises to shorten lead times and reduce risk in upgrade projects through better expert involvement and shorter iterations in the upgrade planning cycle.

Keywords

- 3D-imaging

- Collaboration

- Cross-functional teams

- Manufacturing

- Virtual reality

- Simulation and modelling

- Layout planning

1 Introduction

As stated by Reyes in Chapter “The Use-It-Wisely (UIW) Approach”, European industries face significant challenges due to global off-shoring, rapid business environment change, shrinking investment budgets, and environmental pressures (Schuh et al. 2011). Companies that work with high investment assets need strategies and tools to enable prolonged service life and even upgrades of functionality and capability over time. A high investment asset is typically something whose return on investment is expected over several years or even decades. Its operation typically includes providing some sort of service to internal or external customers. There are plenty of examples, most of which can be modelled as industrial product-service systems (IPSS). These systems consist of Products, the physical objects that are being offered, and Services, the additional business proposals that are offered alongside the physical products (Mont 2002). Also included in this system view, and generally thought to add to the complexity, are the different actors who interact directly and indirectly with the system. A common denominator for the IPSS discussed in this project is that their physical objects or entities exist to provide some service or function over a reasonably long time span. These systems tend to be complex in nature and are often operated on and interacted with by a large number of actors. Each of the involved actors tends to have individual needs and requirements to fulfil their tasks and purposes, which makes alignment towards a common, holistically optimal goal complex. There are many examples of the type of system mentioned here, and the clusters in the Use-it-Wisely project represent a subset of them, for example a communications satellite put into orbit, a passenger vessel for the shipping industry, or an automotive production facility.

This chapter explores the use of VR and 3D-imaging technologies to support such upgrades and thereby extend the operational phase of the IPSS life cycle. Specific emphasis is put on how they can support maintenance, upgrade design, and implementation processes. VR technology provides immersive access to life-sized models so that they can be experienced by end users in the design and upgrade stage. These users can be domain experts from the use phase of the system who traditionally are not deeply involved in the development phase. When it comes to upgrades of existing systems, 3D imaging generates realistic, accurate, and up-to-date data which can be used as visualization models of the current system configuration. By merging these models with the upgrade design suggestions, realistic scenarios for the future system state can be created. Finally, by using VR technologies, these future-state models can be reviewed by domain experts early in the design phase, giving them a tool to voice their needs and requirements in a concrete way. The involvement of cross-functional actor teams is key to achieving a holistic approach to problem solving and ideation (Ahn et al. 2005; Song et al. 1998).

Section 2 gives an overview of the state of the art in the involved technologies. Section 3 presents the combined 3D-imaging and VR tool that was developed during the Use-it-Wisely project. Finally, Sect. 4 concludes the findings and lessons learned from this endeavour.

2 Generic Overview of Manufacturing Adaptation Processes and Related Technologies

The introduction of computers and digital tools into the design process has enabled an ever faster rate of development of products and services. It allows many engineers and other actors to work in parallel and to share, replicate, and combine their results across a practically unlimited number of recipients with little added effort. Additions and changes to the design can be made without any physical remake or rebuild of the objects. Thus, a development process can easily be shared between many actors and engineers in order to gain feedback and improvement suggestions. As the technology has been refined, more and more of the development and planning work can be conducted without any physical prototype. This reduces the need for multiple time-consuming iterations of prototype building for verification and validation. This section serves as an introduction to VR, digital models, and 3D imaging in the upgrade design process.

2.1 Virtual Reality

Most commonly known as virtual reality (VR), the technology is sometimes also referred to as telepresence (Steuer 1992). The use of presence in the wording alludes to the experience of being present in a virtual environment. In other words, the mind is perceiving another surrounding and setting than the actual physical environment that surrounds the body. Steuer phrases the following definition:

A “virtual reality” is defined as a real or simulated environment in which a perceiver experiences telepresence

VR Definition, Steuer (1992)

Steuer presents a framework of dimensions to appraise the quality of a given VR technology. These dimensions are Vividness and Interactivity. Vividness signifies the breadth of the VR medium, i.e. how many senses are exposed to stimuli, and also encompasses the depth of the stimuli, meaning their level of detail. Interactivity denotes the user’s ability to navigate or affect the VR environment, as well as how realistic that interaction is in terms of responsiveness and accuracy of movements (Steuer 1992).

In general, the term virtual reality refers to an immersive, interactive experience generated by a computer.

VR Definition, Pimentel and Texeira (1993)

Many authors have tried to characterize and measure VR technologies in terms of the quality of the experience. It is, however, an elusive quality that is hard to quantify. Gibson, for example, who predates Steuer (1992), also talks of presence as the measure (Gibson 1979). In present terminology the word immersion is often used to describe the quality of the VR system. Immersion denotes the quality of the sensory stimuli that the system can produce. It is related, although not directly, to the user’s subjective feeling of “presence”. Logically, the greater the quality of the stimuli, the higher the probability of achieving a high level of presence. However, as many researchers in the field note, presence is highly dependent on the individual, and some individuals have a greater capacity to experience presence. Presence can be interpreted as a measure of the extent to which the user forgets the medium to the benefit of the experience of “being” in the virtual environment (Loomis 1992).

Another example is Loeffler and Anderson (1994), who define VR as “a 3D virtual environment that is rendered in real time and controlled by the users”. Similarly to Steuer’s (1992) framework, they include the concepts of vividness (rendering) and interactivity (control). Their definition is, however, narrower in the sense that it only alludes to visual stimuli, i.e. rendering.

There have been attempts at quantifying both immersion and presence. Pausch attempted to quantify the level of immersion in VR (Pausch et al. 1997). Meehan et al. (2002) wrote about physiological measurements of the VR experience, inducing stress in the subjects to grasp the fleeting aspect of presence. The measurements covered heart rate, skin conductance, and skin temperature, to determine the reaction of the test subject and compare it to the change in the same measures in a real situation. The logic is that if our reactions to a situation in the virtual environment mimic our reactions to the same situation in the real world, our minds and bodies are likely believing the experience. The topic is debated from a different standpoint by Bowman and McMahan (2007), who pose the question of how much immersion is enough. This is indeed an interesting aspect when the purpose is to facilitate work tasks in industry. There, immersion lacks value in and of itself, as opposed to VR for entertainment purposes, where elevated immersion is fiercely sought. Teyseyre and Campo (2009) represent one attempt at identifying the strengths and weaknesses of 3D visualisation in general. Their findings are shown in Table 1.

A general motivation to start using VR is the limited amount of information that can be presented by traditional 2D models (Smith and Heim 1999). The same authors argue that VR makes it possible to make accurate and rapid decisions through the added understanding that an immersive virtual environment gives (Smith and Heim 1999). Another strong driver for using VR technology, compared to traditional visualization of 3D models, is the increased spatial understanding that is achieved in a VR environment. This helps experts in domains outside 3D modelling and CAD to reach the same, or close to the same, understanding of the models as the model developer.

2.2 Virtual Reality in the Adaptation Process

Systems are designed to fulfil some function or need for their users. Inevitably, the needs or functions will change over time, and to keep fulfilling them the system has to adapt accordingly. This adaptation can be achieved either by improving the system’s current functions or by adding new functionality to the system. When designing and implementing adaptations to existing systems, it is desirable to plan ahead and foresee any problems that might arise. This is done to ensure good quality and to reduce the implementation time, minimizing the downtime of the system during the adaptation process (Groover 2007).

Accessing models through VR gives a better understanding of them, and the models can be accessed from various places. Many companies operate on a global scale and need to be able to align and synchronize their efforts efficiently. This chapter is concerned with upgrades and changes to long-life assets, and specifically with how to plan and optimize these upgrades in a collaborative way that makes use of the many skills and areas of expertise that exist in a company. In a sense, all the perceivable actors that interact with the IPSS should contribute their aspects and needs. This supports a holistic approach to the upgrade and reduces the risk of costly oversights of critical functions and/or aspects.

The idea of utilizing VR to support engineering work in general has been around for a long time. Deitz wrote in 1995 about the state of VR as a mechanical engineering tool, concluding that it has the potential to “reduce the number of prototypes and engineering change orders”, “simplify design reviews”, and “make it easier for non-engineers to contribute to the design process” (Deitz 1995). High investment assets by nature tend to have many users and actors, many of them non-engineers, who interact with them over time. Often non-engineers hold valuable tacit knowledge about the operational phase and maintenance of the asset. Enabling these individuals to be part of the upgrade process can potentially bring about a better end result that considers more aspects than a pure engineering solution would.

This section goes into detail about VR and how it can be classified and described, and gives examples of the technological solutions that exist today. Further, it introduces the field of 3D imaging as a technology to provide accurate digital 3D surface representations of existing assets, and discusses how these can be used in the ideation and design phase of an upgrade.

2.3 VR Technologies Related to Adaptation of Manufacturing Processes

For the purpose of the research presented in this project, the focus has been on 3D environments for planning and evaluation of upcoming changes and updates of high investment assets. For this purpose, only a limited range of the field of VR has been considered and investigated. The aspects included are visual stimuli, movement/locomotion in the environment, and to some extent the ability to interact with modelled objects inside the virtual environment. For the extent of the implementation, VR is defined as a 3D environment, rendered in real time, which the user has some ability to navigate around in and interact with. Apart from the addition in italics, this is much like the VR definition given by Loeffler and Anderson (1994).

When applying this scope to the field of VR there are a number of technologies to choose from. A number of them will be presented here. The selection is based on the purpose of using VR which is to give users a feeling of being inside the virtual environment, using some sort of display to visualise the 3D virtual environment (Korves and Loftus 1999).

Menck et al. (2012) list general technologies used to create VR interfaces: computer display, head-mounted display (HMD), power wall, and cave automatic virtual environment (CAVE).

The above technologies differ on a number of factors. They present different inherent capabilities, and their cost also varies significantly, which can steer or limit the choice depending on the application. From a capability perspective many aspects can be identified, for example multi-user functionality, stereoscopy, real-world blending or strictly virtual content, passive or (inter)active use, and representation of the user’s (or users’) body, to name a few. These capabilities will have an effect on the level of immersion, or presence, that the users experience, as well as on their ability to conduct meaningful tasks in the virtual environment.

Computer displays are the most basic and least costly technology to interface with the virtual environment (VE). Movement is controlled using, e.g., a 3D manipulator or even a regular computer mouse (Menck et al. 2012). Many users can be present at the same screen, but all of them share the same viewpoint and are in that sense passengers to the main user, who controls the navigation.

Head-mounted displays (HMDs) have been available for a long time, but only recently have they developed to a level that can be said to trick the human senses well enough for an immersive experience. The HMD is worn over the head of the user and shuts out any external visual stimuli (Duarte Filho et al. 2010). Therefore the user is not inherently able to see his or her own body. There are ways of recording the user’s body and posture and rendering them back into the virtual environment in real time, for example using VR gloves or 3D imaging sensors to map the user’s movements (Korves and Loftus 2000; Mohler et al. 2010). If such a mapping is performed, this solution can support multi-user environments by rendering the mapped bodies and postures, or avatar representations of them, back into the virtual environment (Beck et al. 2013; Mohler et al. 2010). Recent technological development has significantly decreased the cost of HMDs compared to when the cited work was written. In Chapter “Sustainable Furniture That Grows with End-Users” of this publication, Berglund et al. state that the industrial partner views HMDs as a scalable solution based on the price point.

Power wall is an umbrella term for large-scale back-projected displays. Traditionally they are limited to one point of view in the same way as a computer screen, although there are recent examples where this limitation is overcome through a combination of DLP projectors and shutter glasses (Kulik et al. 2011). The size of power walls makes them suitable for team collaboration and allows for both active participants and passive spectators in a larger forum (Waurzyniak 2002).

CAVEs are room environments, encapsulated by screens on all (or at least three) sides. The user stands in-between the walls and the virtual environment is projected around him or her. Tracking equipment is used to manipulate the environment to constantly match the user’s viewpoint (Duarte Filho et al. 2010).

With the many available solutions, choosing the appropriate one can be a challenging task. Mohler et al. (2010) stress the importance of body representation in VR environments and show that it significantly improves the users’ ability to accurately judge scale and distance. Kulik et al. (2011) focus on the importance of multi-user support in VR, and even state that it is not VR if it is not multi-user. Figure 1 depicts an abstraction of the main components of a VR system, incorporating 3D imaging data.

2.4 3D Imaging Introduction

Capturing spatial data can be done in a number of ways, utilizing a wide variety of technologies. These technologies are often categorised as tactile or non-tactile (Varady et al. 1997). Tactile technologies require physical contact with the measurand, while non-tactile technologies rely on some non-material medium for their interaction with the measurand. While tactile technologies are often characterized by high precision, they also risk influencing the measured object during the measurement process. The inherent requirement of movement tends to result in comparatively low data capture speeds and a limit on the maximum measurement area. These drawbacks can create difficulties if the measurand has a soft or yielding surface, or is above a certain size (Varady et al. 1997). An industrially proven and frequently used type of tactile sensor is the coordinate measurement machine (CMM). CMMs rely on linear movement axes which provide three degrees of freedom, coupled with a probe unit with three degrees of freedom. CMMs are programmable and can be used as an integrated resource in a production facility to conduct in-line automated measurement of products.

Non-tactile technologies exist in a number of forms; a common classification is into active and passive non-contact sensors. Passive sensors make use of the existing background signals of the environment, such as light or noise. Active sensors emit some signal into the environment and use the returned signal to map the surroundings. 3D imaging describes the field of capturing spatial data from the real world and making it available in a digital form. It exists on a wide range of scales and for different purposes. The digital spatial data can be stored for future reference, or be processed in order to perform analysis for some specific purpose. The ASTM Subcommittee E57.01 on Terminology for 3D Imaging Systems defines 3D imaging systems as (ASTM 2011):

A non-contact measurement instrument used to produce a 3D representation (e.g., point cloud) of an object or a site.

The term point cloud in the definition deserves a closer explanation. It is descriptive of the contents of the data set that results from a 3D imaging procedure. The data are recorded as coordinates in space, i.e. points. The word cloud can be traced to the fact that these coordinate points are unstructured (although it can be argued that their sampling pattern is a direct function of the operational parameters of the 3D imaging technology). The cloud can also be said to relate to the lack of any semantic information: the point cloud generated from a measurement holds no explicit concept of objects or relationships between points. These may of course be generated or extracted using various techniques in a post-processing or analysis operation.

There exists a multitude of measurement instruments for 3D imaging. Several surveys of the field exist that classify and describe the available technologies for 3D imaging (Besl 1988; Beraldin et al. 2007). Figure 2 presents one such classification.

Fig. 2 Spatial measurements and their suitability/application on scales of size and complexity (adapted from Boeheler 2005)

Since the publication of the work on which Fig. 2 is based, the circles have widened considerably. An example is photogrammetry, which is now capable of capturing the surface geometry of very complex and feature-rich objects.

3D imaging is a technology used in many different fields. Some examples are given in Fig. 3a–d. The chosen technology is related both to the scale of the objects and to the data requirements connected to the intended use of the data.

- Figure 3a. Product scan: 3D imaging is used in product development to digitalize, for example, clay models of product designs. It is also used in production to validate process output, e.g. shape conformance of the physical product to the designed tolerances (Yao 2005; Druve 2016).

- Figure 3b. 3D scanning of a building: Building Information Modelling (BIM) is an area within facilities management that has adopted 3D imaging, both to map the existing facility more accurately and to improve visualization quality and real-world likeness.

- Figure 3c. 3D imaging of cultural heritage: In cultural heritage preservation and archaeology, 3D imaging has made a significant impact in the last decade. By digitalizing artefacts in a museum, entire structures, or archaeological excavation sites, they can be shared among researchers or the public on a global scale. Archaeology students anywhere in the world can access a digital version of the Cheops pyramid or the Incan temples of Machu Picchu (Pieraccini et al. 2001; Sansoni et al. 2009).

- Figure 3d. Pipe fitting to 3D imaging data: Reverse engineering of, for example, pipes is used frequently in the process industry. Typically it provides current-state input data for installing new pipes and retrofitting old pipes (Olofsson et al. 2013).

2.4.1 3D Laser Scanning in the Adaptation Process

3D laser scanning, or Laser Detection and Ranging (LADAR), is a non-contact measurement technology for the capture of spatial data. The technology was developed within the field of surveying as a tool to map terrain as well as to control and monitor the status of construction jobs. Today it is used in a variety of fields, such as building and construction, tunnel and road surveying, robot cell verification, layout planning, and forensics (Slob and Hack 2004; Sansoni et al. 2009).

When capturing spatial data with a 3D scanner, the scanner is placed within the environment of interest; this could be an existing production system or a brownfield factory floor. A laser pulse or beam is emitted around the environment and its reflection is logged as time of flight or phase shift. Today’s scanners are able to map their entire field of view up to eighty metres away in a matter of minutes, with a positional accuracy of a few millimetres (FARO 2012). The resulting data is often referred to as a point cloud: a set of coordinates in 3D space, typically numbering in the tens of millions. The latest 3D scanners are equipped with RGB sensors that add colour information to the coordinates to further improve visualization.
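The two ranging principles mentioned above can be illustrated with a small sketch. It is not taken from any scanner vendor’s software: the function names are hypothetical, the modulation frequency is an assumed parameter, and the relations are the standard textbook ones. A pulse travels to the surface and back, so the range is half the round-trip distance; for an amplitude-modulated beam, the range follows from the measured phase shift and is only unambiguous within half a modulation wavelength.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second, in vacuum


def range_from_time_of_flight(round_trip_seconds: float) -> float:
    """Distance to the surface from the round-trip travel time of a pulse."""
    # The pulse travels to the surface and back, hence the division by two.
    return SPEED_OF_LIGHT * round_trip_seconds / 2.0


def range_from_phase_shift(phase_shift_rad: float, modulation_hz: float) -> float:
    """Distance from the phase shift of an amplitude-modulated beam.

    Assumes the true distance lies within the unambiguous range.
    """
    return SPEED_OF_LIGHT * phase_shift_rad / (4.0 * math.pi * modulation_hz)


def unambiguous_range(modulation_hz: float) -> float:
    """Largest distance measurable before the phase wraps around."""
    return SPEED_OF_LIGHT / (2.0 * modulation_hz)


def to_cartesian(distance: float, azimuth_rad: float, elevation_rad: float):
    """Convert one range/angle reading into an (x, y, z) point of the cloud."""
    horizontal = distance * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        distance * math.sin(elevation_rad),
    )
```

Under these assumptions, a surface at the eighty metre limit mentioned above corresponds to a round-trip time of roughly 0.53 microseconds, which gives a feeling for the timing resolution a time-of-flight scanner needs in order to reach millimetre accuracy.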

As this technology matures and the tools and methods to capture data become more readily available there is also a steadily growing range of software tools to support its usage (Bi and Wang 2010). These tools are either specialized to visualize and edit point cloud data sets or they are extensions of traditional CAD and simulation tools able to integrate point cloud data. The integration into existing tools enables hybrid modelling environments where CAD and point cloud data are used in parallel. Using hybrid models, CAD models of new machine equipment or products in design stage are put into existing scanned production facilities for planning verification.
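A planning verification in such a hybrid model can be sketched, in a much simplified form, as a clearance check between a new machine and the scanned facility. The sketch below is an illustration only: it assumes the CAD model is reduced to an axis-aligned bounding box, the point cloud is a plain list of (x, y, z) tuples in the same coordinate frame, and the function name and `margin` parameter are hypothetical. Commercial tools work with the full geometry.

```python
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


def points_inside_box(
    cloud: Iterable[Point],
    box_min: Point,
    box_max: Point,
    margin: float = 0.0,
) -> List[Point]:
    """Return the scanned points that fall inside the box grown by `margin`.

    A non-empty result means that something in the scanned facility
    occupies the space planned for the new equipment.
    """
    hits = []
    for point in cloud:
        # A point is inside when every coordinate lies within the box limits.
        if all(
            low - margin <= value <= high + margin
            for value, low, high in zip(point, box_min, box_max)
        ):
            hits.append(point)
    return hits


# Hypothetical scan: two floor points, one point on a pillar, one on a rack.
scan = [(0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (2.0, 2.0, 1.0), (5.0, 5.0, 0.5)]

# Planned machine envelope, lifted 0.1 m so the floor itself is not reported.
conflicts = points_inside_box(scan, (1.5, 1.5, 0.1), (3.0, 3.0, 2.0))
```

Here `conflicts` contains the pillar point only, which signals that the planned position collides with existing structure. Increasing `margin` turns the same check into a test for required service or safety clearance.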

Some challenges with this new technology are the size of the data and issues with interoperability between vendor-specific data formats. However, several research efforts strive to automate the translation of point cloud data into CAD surfaces to reduce data size (Bosche and Haas 2008; Huang et al. 2009), and new optimized software for visualization of this data format is being developed (Rusu and Cousins 2011). Ongoing standards activities are developing neutral processing algorithms and data formats to ensure repeatability, traceability, and interoperability when working with point cloud data (ASTM 2011).
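To give a feeling for the data size problem, the sketch below shows voxel-grid downsampling, a common generic way of thinning a point cloud. Note that this is a different and much simpler technique than the translation into CAD surfaces cited above, and it is not claimed to be what any of the cited works or tools implement. The function name and the plain tuple representation are assumptions made for illustration.

```python
import math
from collections import defaultdict
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


def voxel_downsample(points: Iterable[Point], voxel_size: float) -> List[Point]:
    """Replace all points that share a cubic voxel with their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")

    # Group the points by the integer index of the voxel they fall into.
    buckets = defaultdict(list)
    for x, y, z in points:
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        buckets[key].append((x, y, z))

    # Emit one averaged point per occupied voxel.
    reduced = []
    for members in buckets.values():
        count = len(members)
        reduced.append(tuple(sum(axis) / count for axis in zip(*members)))
    return reduced
```

The voxel size sets the trade-off: a larger voxel gives a smaller data set but removes fine detail, so the value would have to be chosen with the intended use of the model in mind.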

Figure 4 gives an insight into the nature of 3D laser-scanning data by zooming further in on the model until the individual measurement points are distinguishable. The measurement points are singular positions plotted in 3D space; thus the software visualising them gives them an arbitrary pixel size.

3 3D-Imaging and Virtual Reality Integration Tool

This section describes the tool for integration of 3D imaging and virtual reality which was developed during the UIW-project. The description includes how the tool should be applied, the expected result of such an application, along with the detected limitations.

3.1 Introduction

The purpose of the tool is to understand reality through improved models and model exploration/visualization. 3D imaging provides a realistic and accurate model of current conditions. Virtual models can be accessed and viewed simultaneously by several actors regardless of physical location. The model also acts as a basis for modelling and designing additions and upgrades, both for visualizing them and for designing the physical properties of interfaces and connections to the existing system. The tool aims to give users an experience that closely imitates physical presence and the possibilities associated with it, to be shareable over time and space, and to support collaborative work in cross-functional, decentralised project teams. Current status of the development can be found in Chapter “Sustainable Furniture That Grows with End-Users” Adaptation of high variant automotive production system: A collaborative approach supported by 3D-imaging.

3.2 The Application Process

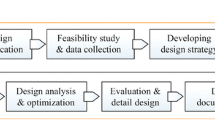

Following Fig. 5 from left to right including the feedback loop from the stakeholders/actors, the following steps can be identified:

3.2.1 Tools/Virtual Technologies Available as Input Data for Expert Tool

The current state of the system is mapped using the PLM/MRP/MES systems, as well as existing 3D imaging technologies in combination with CAD. The choice of technologies and approach is determined by the objectives as well as by the size and complexity of the related objects in the system.

3.2.2 Expert Tool

The currently available input data are then re-engineered: design documents and files for solutions are brought into the environment and combined, using the expert development tool/programming solutions, to reach an upgraded system with new functionalities. This usually involves processes such as post-processing the scanned data to make it compatible with the expert tool, preparing the CAD data that are needed, and integrating with the PLM/MES system if necessary.

3.2.3 Preparation of Testable Solutions

Based on the requirements of the proposed upgrade, testable solutions can be developed using the current-state data that has been collected. The potential solutions are prepared by topic experts using the expert tool and are then ready for evaluation by all the actors involved in the upgrade process.

3.2.4 Accessing Solutions via Different Interfaces

The prepared testable solutions can be accessed on different platforms, such as a desktop web browser, a desktop projector, or a virtual reality HMD. Depending on the purpose and context of the solutions to be evaluated, one can choose any one platform or any combination of the available platforms to facilitate better understanding of the proposed solutions.

3.2.5 Interactions/Functionalities

Various interactions are available to support the evaluation of the proposed solutions, from the basic functions like visualization and navigation through walking around and teleporting, to the more advanced functions such as new layout planning and feedback.

3.2.6 Evaluation Result/Feedback

All the involved actors give feedback based on their knowledge and experience. The feedback is then gathered and reviewed to decide whether to approve or reject the proposed solutions. The synchronized feedback and improvement suggestions are sent upstream in the process to the designer, who consolidates the information and, if needed, creates a new and improved set of solutions.

3.2.7 Concept Refinement

Based on the feedback, an iteration may be appropriate in which the expert tool synchronisation goes through another round of improvements of functionality, visual aids, interfacing, or similar. The improved design is then prepared for a new iteration in which the involved actors re-evaluate it.

3.2.8 Implementation

Once the concept and solution give substantial benefits for the actors/stakeholders and they are satisfied with the tool, the next step is to move towards implementation: structured use in real-world cases, incorporated in the everyday work of the actors and stakeholders who benefit most from the developed tool.
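The evaluation, feedback, and refinement steps described above form a loop, which can be sketched as follows. This is a schematic illustration and not the UIW tool itself: the class and function names are hypothetical, and the rule that a design is approved only when every actor approves is an assumption, since the text does not prescribe a decision rule.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Feedback:
    actor: str            # e.g. operator, maintenance, logistics
    approves: bool
    comment: str = ""


def consolidate(feedback: List[Feedback]) -> List[str]:
    """Collect the open issues, i.e. comments from actors who did not approve."""
    return [f"{item.actor}: {item.comment}" for item in feedback if not item.approves]


def review_cycle(
    evaluate: Callable[[int], List[Feedback]],
    max_iterations: int = 5,
) -> Tuple[Optional[int], List[List[str]]]:
    """Repeat evaluation and refinement until every actor approves.

    `evaluate` stands in for a review session: it receives the design
    revision number and returns the feedback gathered for that revision.
    Returns the approved revision (or None) and the open issues per round.
    """
    history = []
    for revision in range(1, max_iterations + 1):
        open_issues = consolidate(evaluate(revision))
        history.append(open_issues)
        if not open_issues:
            return revision, history  # ready to move towards implementation
    return None, history


def demo_session(revision: int) -> List[Feedback]:
    """Hypothetical sessions: the operator objects to the first revision only."""
    if revision == 1:
        return [
            Feedback("operator", False, "aisle too narrow"),
            Feedback("maintenance", True),
        ]
    return [Feedback("operator", True), Feedback("maintenance", True)]


approved_revision, issue_history = review_cycle(demo_session)
```

In this example the second revision is approved, and the issue history records what had to change on the way. Keeping such a history is one simple way of making the consolidated feedback traceable between iterations.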

3.3 Expected Results from Application of the Tool

The results expected from the application of this tool are many. In response to the challenges faced by modern-day industries, the tool is expected to reduce the lead times for design iterations in projects. These iterations can otherwise be costly, but with the use of VR technology early in the process, problems with designs are also expected to be found earlier, when they cost less to correct. Being able to update designs quickly is also believed to reduce the risk of faulty input data to other processes, as outdated models will occur less often. As VR immerses people in an alternate reality (Ref), it is further expected that project members will gain an improved understanding of the project and the design, and thus improve the overall quality of the system and products. Further, the tool could be used for marketing, where designs can be communicated in an unambiguous manner. Last but not least, the realistic virtual environment not only widens the accessibility of the data to all the involved actors, but can also substantially reduce the travel that used to be needed. Therefore, further cost reductions and sustainability improvements are expected.

3.4 Limitations of the Tool

The technologies involved are currently available as off-the-shelf products and can be purchased or rented as needed with few foreseeable issues. However, the usage and operation of these tools are not yet commonplace. Expert users are needed both for collecting 3D imaging data and for processing and preparing the data into a testable model that can be evaluated by topic experts. Navigating and using VR tools also requires a fairly experienced user to reach full efficiency. The medium should not take over and become the central part of the experience when viewing a model, or else the results of the actual study will be muddled and potentially biased.

3.4.1 3D Imaging Related Limitations

A 3D imaging data set is not the same as a full-fledged CAD representation. At present, 3D imaging data does not include any semantic information and has to be interpreted by a human to make sense. This reduces the amount of automated analysis and optimisation that is possible. The limitation extends to the scope of the data: 3D imaging often captures no data from the internal structure of the objects. Unless two technologies are combined, the user has to choose between capturing surface geometries and capturing internal geometries through, e.g., X-ray or CT scanning.

It is also clear that, despite the added realism that comes from integrating 3D imaging and VR, the result is not equivalent to a physical model. The strength of 3D imaging comes from the possibility of capturing reality, i.e. what is actually there rather than what was meant to be there according to a design model. However, this does not eliminate the risk of having bad or outdated data. In some cases the high fidelity and accuracy of the data may even increase this risk, since the data appears trustworthy. It is necessary to put processes in place that verify the relevance of the data sets, for example with respect to date of capture and scope of capture.
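As an illustration only, a relevance check of this kind could be sketched as below. The metadata fields, the function name and the one-year age limit are hypothetical assumptions made for the example; they are not part of the tool described in this chapter.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ScanMetadata:
    """Hypothetical metadata record stored alongside a 3D imaging data set."""
    name: str
    capture_date: date
    areas_covered: frozenset


def relevance_issues(meta, required_areas, today, max_age_days=365):
    """Return reasons why a data set may be unsuitable (empty list if none)."""
    issues = []
    # Date of capture: flag data sets older than the assumed age limit.
    age_days = (today - meta.capture_date).days
    if age_days > max_age_days:
        issues.append(f"captured {age_days} days ago (limit {max_age_days})")
    # Scope of capture: flag required areas that the scan does not cover.
    missing = sorted(set(required_areas) - meta.areas_covered)
    if missing:
        issues.append("scope does not cover: " + ", ".join(missing))
    return issues


# Example values are invented for illustration.
scan = ScanMetadata(
    name="assembly_hall_scan",
    capture_date=date(2015, 3, 1),
    areas_covered=frozenset({"line 1", "line 2"}),
)
issues = relevance_issues(scan, {"line 2", "line 3"}, today=date(2016, 9, 1))
for issue in issues:
    print(issue)
```

A check like this would only flag candidate problems; whether a flagged data set is still usable remains a judgment for the people who know the facility.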

While there is a lot of ongoing research into the reverse engineering process and its automation, there is currently no complete way of creating CAD data from 3D imaging data sets. This means that converting the data for use in conventional design software could be costly. Organizations may therefore have to broaden their design software to include 3D imaging data capabilities. This is a business decision to be taken at present, but it might soon be unnecessary as more and more software developers integrate 3D imaging data support into their existing software.

Another potential issue is that some 3D imaging technologies require the captured object to be completely at rest during data capture. In some cases, this is either infeasible or associated with a large cost.

3.4.2 VR Related Limitations

The current technology for viewing and interacting with VR environments is perhaps not sufficiently powerful to handle large-scale 3D imaging models smoothly. If users experience lag or other graphical glitches, it might detract from the immersion and involvement during design review sessions. For instance, some observers may experience motion sickness as a result of these limitations (Kennedy et al. 1993). Ergonomics-related issues are another obstacle that needs further study and improvement, as current VR solutions are not suitable for prolonged usage (Cobb et al. 1999). There is also currently a limitation on physical interaction between persons while immersed in VR. At the moment, multi-user interaction is not possible, something that may prove crucial when evaluating models for feasibility or suitability.

4 Conclusion

Promising technological developments have recently been made in the field of 3D imaging and VR technologies. These developments facilitate both widespread access (all employees through web interfaces) and detailed modelling and analysis of questions and decisions that matter to several actors (maintenance staff, designers, operators etc.). UIW is one of the first applied science projects in direct collaboration with industry to actually make use of these new opportunities. Acceptance and diffusion of innovation in this field is not a fast process, since the actual beneficiary initially does not even know that the technology exists, and the methodologies and work tasks to be performed have yet to be tailor-made and then standardised, which is some of the work UIW provides to European industry. This project provides an insight into the use of these technologies in a wide range of industries and services.

References

Ahn, H. J., Lee, H. J., Cho, K., & Park, S. J. (2005). Utilizing knowledge context in virtual collaborative work. Decision Support Systems, 39(4), 563–582.

ASTM E2544-11a. (2011). Standard terminology for three-dimensional (3D) imaging systems. West Conshohocken, PA: ASTM International. www.astm.org

ASTM Standard E2807. (2011). Standard specification for 3D imaging data exchange, Version 1.0. West Conshohocken, PA: ASTM International. www.astm.org

Beck, S., Kunert, A., Kulik, A., & Froehlich, B. (2013). Immersive group-to-group telepresence. IEEE Transactions on Visualization and Computer Graphics, 19(4), 616–625.

Beraldin, J. A., Blais, F., El-Hakim, S., Cournoyer, L., & Picard, M. (2007). Traceable 3D imaging metrology: Evaluation of 3D digitizing techniques in a dedicated metrology laboratory. In Proceedings of the 8th Conference on Optical 3-D Measurement Techniques.

Besl, P. J. (1988). Active, optical range imaging sensors. Machine Vision and Applications, 1(2), 127–152.

Bi, Z. M., & Wang, L. (2010). Advances in 3D data acquisition and processing for industrial applications. Robotics and Computer-Integrated Manufacturing, 26(5), 403–413.

Boeheler, W. (2005). Comparison of 3D laser scanning and other 3D measurement techniques. In Recording, modeling and visualization of cultural heritage (pp. 89–100). London: Taylor and Francis.

Bosche, F., & Haas, C. T. (2008). Automated retrieval of 3D CAD model objects in construction range images. Automation in Construction, 17(4), 499–512.

Bowman, D. A., & McMahan, R. P. (2007). Virtual reality: How much immersion is enough? Computer, 40(7), 36–43.

Cobb, S. V., Nichols, S., Ramsey, A., & Wilson, J. R. (1999). Virtual reality-induced symptoms and effects (VRISE). Presence, 8(2), 169–186.

Deitz, D. (1995). Real engineering in a virtual world. Mechanical Engineering, 117(7), 78.

Druve, J. (2016). Reverse engineering av 3D-skannad data. Göteborg: Chalmers University of Technology.

Duarte Filho, N., Costa Botelho, S., Tyska Carvalho, J., Botelho Marcos, P., Queiroz Maffei, R., Remor Oliveira, R., et al. (2010). An immersive and collaborative visualization system for digital manufacturing. The International Journal of Advanced Manufacturing Technology, 50(9–12), 1253–1261.

FARO Technologies. (2012). FARO Technologies Inc.

Gibson, J. J. (1979). The ecological approach to visual perception. Boston: Houghton Mifflin.

Groover, M. P. (2007). Automation, production systems, and computer-integrated manufacturing. Prentice Hall Press.

Huang, H., Li, D., Zhang, H., Ascher, U., & Cohen-Or, D. (2009). Consolidation of unorganized point clouds for surface reconstruction. ACM Transactions on Graphics (TOG), 28(5), 176.

Kennedy, R. S., Lane, N. E., Berbaum, K. S., & Lilienthal, M. G. (1993). Simulator sickness questionnaire: An enhanced method for quantifying simulator sickness. The International Journal of Aviation Psychology, 3(3), 203–220.

Korves, B., & Loftus, M. (1999). The application of immersive virtual reality for layout planning of manufacturing cells. Proceedings of the Institution of Mechanical Engineers, Part B: Journal of Engineering Manufacture, 213(1), 87–91.

Korves, B., & Loftus, M. (2000). Designing an immersive virtual reality interface for layout planning. Journal of Materials Processing Technology, 107(1), 425–430.

Kulik, A., Kunert, A., Beck, S., Reichel, R., Blach, R., Zink, A., et al. (2011). C1x6: A stereoscopic six-user display for co-located collaboration in shared virtual environments. ACM Transactions on Graphics (TOG), 30(6), 188.

Loeffler, C., & Anderson, T. (1994). The virtual reality casebook. Wiley.

Loomis, J. M. (1992). Distal attribution and presence. Presence: Teleoperators and Virtual Environments, 1, 113–119.

Meehan, M., Insko, B., Whitton, M., & Brooks Jr, F. P. (2002). Physiological measures of presence in stressful virtual environments. ACM Transactions on Graphics (TOG), 21(3), 645–652.

Menck, N., Yang, X., Weidig, C., Winkes, P., Lauer, C., Hagen, H., et al. (2012). Collaborative factory planning in virtual reality. In 45th CIRP Conference on Manufacturing Systems (pp. 317–322).

Mohler, B. J., Creem-Regehr, S. H., Thompson, W. B., & Bülthoff, H. H. (2010). The effect of viewing a self-avatar on distance judgments in an HMD-based virtual environment. Presence: Teleoperators and Virtual Environments, 19(3), 230–242.

Mont, O. (2002). Clarifying the concept of product–service system. Journal of Cleaner Production, 10, 237–245. doi:10.1016/S0959-6526(01)00039-7

Neugebauer, R., Purzel, F., Schreiber, A., & Riedel, T. (2011). Virtual reality-aided planning for energy-autonomous factories. In Proceedings of the 9th IEEE International Conference on Industrial Informatics (INDIN) (pp. 250–254).

Olofsson, A., Sandgren, M., Stridsberg, L., & Westberg, R. (2013). Användning av punktmolnsdata från laserskanning vid redigering, simulering och reverse engineering i en digital produktionsmiljö. Göteborg: Chalmers University of Technology.

Pausch, R., Dennis, P., & Williams, G. (1997). Quantifying immersion in virtual reality. In Proceedings of the 24th Annual Conference on Computer Graphics and Interactive Techniques (pp. 13–18). ACM Press/Addison-Wesley Publishing Co.

Pieraccini, M., Guidi, G., & Atzeni, C. (2001). 3D digitizing of cultural heritage. Journal of Cultural Heritage, 2(1), 63–70.

Pimentel, K., & Texeira, K. (1993). Virtual reality: Through the new looking-glass. Intel/Windcrest McGraw Hill.

Rusu, R. B., & Cousins, S. (2011). 3D is here: Point Cloud Library (PCL). In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA) (pp. 1–4). IEEE.

Sansoni, G., Trebeschi, M., & Docchio, F. (2009). State-of-the-art and applications of 3D imaging sensors in industry, cultural heritage, medicine, and criminal investigation. Sensors, 9(1), 568–601.

Schuh, G., Aghassi, S., Orilski, S., Schubert, J., Bambach, M., Freudenberg, R., et al. (2011). Technology roadmapping for the production in high-wage countries. Production Engineering, 5(4), 463–473.

Slob, S., & Hack, R. (2004). 3D terrestrial laser scanning as a new field measurement and monitoring technique. In Engineering geology for infrastructure planning in Europe (pp. 179–189). Berlin, Heidelberg: Springer.

Smith, R. P., & Heim, J. A. (1999). Virtual facility layout design: The value of an interactive three-dimensional representation. International Journal of Production Research, 37(17), 3941–3957.

Song, X. M., Thieme, R. J., & Xie, J. (1998). The impact of cross-functional joint involvement across product development stages: An exploratory study. Journal of Product Innovation Management, 15(4), 289–303.

Steuer, J. (1992). Defining virtual reality: Dimensions determining telepresence. Journal of Communication, 42(4), 73–93.

Teyseyre, A., & Campo, R. M. (2009). An overview of 3D software visualization. IEEE Transactions on Visualization and Computer Graphics, 15(1), 87–105.

Varady, T., Martin, R. R., & Cox, J. (1997). Reverse engineering of geometric models—An introduction. Computer Aided Design, 29(4), 255–268.

Waurzyniak, P. (2002). Going virtual. Manufacturing Engineering, 128(5), 77–88.

Yao, A. W. L. (2005). Applications of 3D scanning and reverse engineering techniques for quality control of quick response products. The International Journal of Advanced Manufacturing Technology, 26(11–12), 1284–1288.

Rights and permissions

Open Access This chapter is licensed under the terms of the Creative Commons Attribution-NonCommercial 4.0 International License (http://creativecommons.org/licenses/by-nc/4.0/), which permits any noncommercial use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license and indicate if changes were made.

The images or other third party material in this chapter are included in the chapter's Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the chapter's Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

Copyright information

© 2017 The Author(s)

About this chapter

Cite this chapter

Berglund, J., Gong, L., Sundström, H., Johansson, B. (2017). Virtual Reality and 3D Imaging to Support Collaborative Decision Making for Adaptation of Long-Life Assets. In: Grösser, S., Reyes-Lecuona, A., Granholm, G. (eds) Dynamics of Long-Life Assets. Springer, Cham. https://doi.org/10.1007/978-3-319-45438-2_7

DOI: https://doi.org/10.1007/978-3-319-45438-2_7

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-45437-5

Online ISBN: 978-3-319-45438-2

eBook Packages: Business and Management (R0)