Abstract

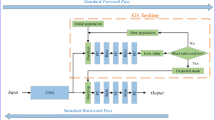

Dropout is a powerful technique for preventing model overfitting. However, it is inefficient because it ignores neurons at random. Although many Dropout variants exist, they are still either inefficient at improving generalization ability or not effective enough. In this paper, we propose Mutual Information Dropout, an efficient Dropout variant that drops neurons with low mutual information. Instead of ignoring neurons at random, Mutual Information Dropout first evaluates the mutual information of each neuron and then drops the neurons whose mutual information falls below a certain threshold. In this way, it improves generalization ability effectively by evaluating neurons rather than selecting them at random. Extensive experiments on three datasets show that Mutual Information Dropout is much more efficient than many existing Dropout methods while achieving comparable or even better generalization ability.

The code is available at https://github.com/shjdjjfi/MI-Dropout.git.
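The thresholding idea described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation. The abstract does not say which quantity the mutual information is measured against or how it is estimated, so this sketch assumes mutual information between each neuron's activation and the class label, estimated with a simple equal-width histogram. The function names `neuron_label_mi` and `mi_dropout_mask`, the bin count, and the threshold value are all hypothetical.

```python
import numpy as np


def neuron_label_mi(activations, labels, n_bins=8):
    """Histogram estimate (in nats) of I(activation_j; label) for each neuron j.

    Assumption: MI is measured against the class label; the abstract does not
    specify the reference variable or the estimator.
    """
    activations = np.asarray(activations, dtype=float)
    labels = np.asarray(labels).ravel()
    n_samples, n_neurons = activations.shape
    classes, y = np.unique(labels, return_inverse=True)
    mi = np.zeros(n_neurons)
    for j in range(n_neurons):
        a = activations[:, j]
        # Equal-width bins over the observed activation range.
        edges = np.linspace(a.min(), a.max(), n_bins + 1)
        # Use interior edges only, so bin indices fall in 0..n_bins-1.
        b = np.digitize(a, edges[1:-1])
        # Empirical joint distribution of (activation bin, class).
        joint = np.zeros((n_bins, len(classes)))
        np.add.at(joint, (b, y), 1.0)
        joint /= n_samples
        p_a = joint.sum(axis=1, keepdims=True)
        p_y = joint.sum(axis=0, keepdims=True)
        nz = joint > 0
        mi[j] = np.sum(joint[nz] * np.log(joint[nz] / (p_a @ p_y)[nz]))
    return mi


def mi_dropout_mask(activations, labels, threshold, n_bins=8):
    """Return a 0/1 mask that keeps neurons whose estimated MI is >= threshold."""
    mi = neuron_label_mi(activations, labels, n_bins)
    return (mi >= threshold).astype(np.asarray(activations).dtype)


# Toy data: neuron 0 tracks the label closely, neuron 1 is pure noise.
rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=2000)
acts = np.stack(
    [y + 0.05 * rng.standard_normal(2000), rng.standard_normal(2000)], axis=1
)
mi = neuron_label_mi(acts, y)
mask = mi_dropout_mask(acts, y, threshold=0.1)  # hypothetical threshold
dropped = acts * mask  # low-MI neurons are zeroed, the others pass through
```

In this toy setup the informative neuron is kept and the noise neuron is dropped. Whether the surviving activations should be rescaled, as in standard Dropout, is not stated in the abstract, so the sketch leaves them unscaled.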

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Song, Z., Ma, S. (2023). Mutual Information Dropout: Mutual Information Can Be All You Need. In: Iliadis, L., Papaleonidas, A., Angelov, P., Jayne, C. (eds) Artificial Neural Networks and Machine Learning – ICANN 2023. ICANN 2023. Lecture Notes in Computer Science, vol 14262. Springer, Cham. https://doi.org/10.1007/978-3-031-44201-8_8

DOI: https://doi.org/10.1007/978-3-031-44201-8_8

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-44200-1

Online ISBN: 978-3-031-44201-8

eBook Packages: Computer Science, Computer Science (R0)