Abstract

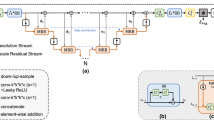

Multimodal 2D-3D co-registration is a challenging problem with numerous clinical applications, including diagnosis, radiation therapy, and interventional radiology. In this paper, we present StructuRegNet, a deep-learning framework that addresses this problem with three novel contributions. First, we combine a 2D-3D deformable registration network with an adversarial modality translation module, allowing each block to benefit from the signal of the other. Second, we solve the initialization challenge for 2D-3D registration by leveraging tissue structure through cascaded rigid areas guidance and distance field regularization. Third, StructuRegNet handles out-of-plane deformation without requiring any 3D reconstruction, thanks to recursive plane selection. We quantitatively evaluate StructuRegNet on head and neck cancer by registering 3D CT scans with 2D histopathological slides, which enables pixel-wise mapping of low-quality radiologic imaging to the gold-standard tumor extent and brings biological insights toward homogenized clinical guidelines. Additionally, our method can be used in radiation therapy by mapping the 3D planning CT into the 2D MR frame of the treatment day for accurate positioning and dose delivery. Our framework outperforms traditional methods in both applications. It accommodates different locations and magnitudes of deformation and can serve as a backbone for any relevant clinical context.
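To make the 2D-3D setting concrete, the sketch below resamples an arbitrarily oriented 2D plane from a 3D volume with trilinear interpolation. This is the generic slice-to-volume building block that a plane selection step could call repeatedly; it is not StructuRegNet's recursive plane selection, which the abstract does not detail. The function names (`trilinear`, `extract_plane`), the `(z, y, x)` voxel convention, and the zero fill outside the volume are all assumptions made for illustration.

```python
import math


def trilinear(volume, z, y, x):
    """Interpolate volume[z][y][x] at a fractional voxel position.

    Returns 0.0 outside the volume (an assumed padding choice).
    """
    nz, ny, nx = len(volume), len(volume[0]), len(volume[0][0])
    if not (0 <= z <= nz - 1 and 0 <= y <= ny - 1 and 0 <= x <= nx - 1):
        return 0.0
    z0, y0, x0 = math.floor(z), math.floor(y), math.floor(x)
    # Clamp the upper neighbours so positions on the last voxel stay in range.
    z1, y1, x1 = min(z0 + 1, nz - 1), min(y0 + 1, ny - 1), min(x0 + 1, nx - 1)
    dz, dy, dx = z - z0, y - y0, x - x0
    value = 0.0
    for zi, wz in ((z0, 1 - dz), (z1, dz)):
        for yi, wy in ((y0, 1 - dy), (y1, dy)):
            for xi, wx in ((x0, 1 - dx), (x1, dx)):
                value += wz * wy * wx * volume[zi][yi][xi]
    return value


def extract_plane(volume, origin, u, v, height, width):
    """Sample a height x width image on the plane origin + r*v + c*u.

    origin, u and v are (z, y, x) triples in voxel units (hypothetical
    parameterisation): u is the step per image column, v the step per row.
    """
    image = []
    for r in range(height):
        row = []
        for c in range(width):
            z, y, x = (origin[k] + r * v[k] + c * u[k] for k in range(3))
            row.append(trilinear(volume, z, y, x))
        image.append(row)
    return image


# Toy volume whose intensity equals its z index, so results are easy to check.
volume = [[[float(z) for _ in range(4)] for _ in range(4)] for z in range(4)]

# Axial plane halfway between slices 1 and 2.
axial = extract_plane(volume, (1.5, 0, 0), u=(0, 0, 1), v=(0, 1, 0), height=4, width=4)

# Tilted plane: each image row climbs half a voxel along z (out-of-plane tilt).
tilted = extract_plane(volume, (0, 0, 0), u=(0, 0, 1), v=(0.5, 1, 0), height=4, width=4)
```

On this toy volume every pixel of `axial` is halfway between slices 1 and 2, while the rows of `tilted` step through increasing depths. In a learning-based pipeline the same resampling would typically be done with a differentiable sampler so that plane parameters can be optimised, which this pure-Python version does not attempt.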

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Leroy, A. et al. (2023). StructuRegNet: Structure-Guided Multimodal 2D-3D Registration. In: Greenspan, H., et al. Medical Image Computing and Computer Assisted Intervention – MICCAI 2023. MICCAI 2023. Lecture Notes in Computer Science, vol 14229. Springer, Cham. https://doi.org/10.1007/978-3-031-43999-5_73

DOI: https://doi.org/10.1007/978-3-031-43999-5_73

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-43998-8

Online ISBN: 978-3-031-43999-5

eBook Packages: Computer Science, Computer Science (R0)