Abstract

This paper discusses structure-model-based correction of thermally induced errors in machine tools. Using a machine model evaluated in thermal real time, the thermally induced errors at the tool center point (TCP) are calculated from information obtained from the machine control (e.g., axis velocities, positions, and motor currents) and from the ambient temperature. The machine model describes the physical relationships and takes into account the structure and the structural variability resulting from traverse movements of the feed axes; it is therefore called a structure model. To create it, finite element models are used as thermal and thermo-elastic models, and model order reduction (MOR) techniques enable high-resolution models to be calculated in thermal real time. Subsequent parameter updates can improve the accuracy of the initial parameter set of the thermal models. A systematic procedure developed for this purpose and its application to a demonstrator machine are presented. For the update, parameters are selected that can change over the operating time, e.g., due to wear. Temperature sensor positions are chosen that are sensitive to changes in these parameters. Simulations with parameters varied within a plausible range are used to determine whether a parameter optimization is reasonable. The parameter optimization runs in a trusted execution environment (TEE) on a server in parallel to the calculation of the correction model on the machine control, because the confidential input data of the model and the model itself have to be protected from unauthorized access. Efficient model calculation combined with parameter optimization in a secure server environment results in an adaptive thermal model (digital twin).


1 Introduction

Conventional countermeasures against thermal errors of machine tools, such as machine cooling, consume energy [1]. Model-based error correction is an alternative approach that requires no additional energy [2]. One such approach is the structure-model-based correction [3]. The structure model is a physically based model of the machine tool, e.g., a finite element model. Data accessible in the control (e.g., axis velocities, axis positions, motor currents) are used as input to the model. Based on this information, the heat sources (e.g., friction) in the machine tool are calculated. Additionally, the heat transfer that depends on this information, e.g., heat conduction in bearings, is determined. Empirical models are normally used for these calculations. A physically based model then calculates the temperature field of the machine tool from the heat sources and the heat transfer, and a further physically based model calculates the thermal deformation of the machine tool from the temperature field. In this way, the error at the tool center point is determined and corrected. This paper focuses on the thermal model as part of the structure model.

The thermal behavior of machine tools changes over their lifetime due to wear and changes in the preload and lubrication of machine components. For example, in [4] significant changes in the friction of linear axes were detected after four and seven years: the friction was reduced by up to 70%. The reasons are the run-in process and the wear-induced reduction of the rolling element diameter, which leads to a reduced preload. The reduced friction lowers the heat flow and thus changes the temperature field. Other examples are a reduction of the preload between ball screw nut and ball screw spindle of up to 75% over the lifetime [5] and a preload degradation of up to 82% for linear rolling guides [6]. Preload degradation reduces the friction in these machine components.

The model parameters should therefore be updated over the lifetime to match these changes, in order to maintain and improve the accuracy of the calculated temperature field and thus the quality of the correction. In this paper, a systematic procedure is developed for this purpose. First, a workflow for creating models that can be calculated efficiently is introduced. Afterwards, the approach for the parameter update is explained. Additionally, the trusted execution environment (TEE) is described, which is used to run the parameter optimization securely on a server in parallel to the calculation of the correction model on the machine control. Finally, the approach is applied to a demonstrator machine.

2 Thermo-Elastic Model

2.1 Fundamentals

All structure models are based on physically founded model formulations that require the solution of partial differential equations. Discretization, here by FEA (Finite Element Analysis), results in a system of coupled ordinary differential equations, Eq. (1), which can be simulated by numerical time integration.
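Assuming the block structure that is usual for coupled thermo-elastic FE systems, and with \(\mathbf{u}\) denoting the vector of nodal displacements, Eq. (1) can be sketched in terms of the matrices listed below as follows; the sign of the coupling term is chosen to agree with the output matrix \(\mathbf{C}_{\text{Rel}}\) given in Sect. 2.3.

```latex
\begin{bmatrix} \mathbf{M}_{\text{uu}} & \mathbf{0} \\ \mathbf{0} & \mathbf{0} \end{bmatrix}
\begin{bmatrix} \ddot{\mathbf{u}} \\ \ddot{\mathbf{T}} \end{bmatrix}
+
\begin{bmatrix} \mathbf{D}_{\text{uu}} & \mathbf{0} \\ \mathbf{C}_{\text{Tu}} & \mathbf{C}_{\text{TT}} \end{bmatrix}
\begin{bmatrix} \dot{\mathbf{u}} \\ \dot{\mathbf{T}} \end{bmatrix}
+
\begin{bmatrix} \mathbf{K}_{\text{uu}} & \mathbf{K}_{\text{uT}} \\ \mathbf{0} & \mathbf{L}_{\text{TT}} \end{bmatrix}
\begin{bmatrix} \mathbf{u} \\ \mathbf{T} \end{bmatrix}
=
\begin{bmatrix} \mathbf{F} \\ \dot{\mathbf{Q}} \end{bmatrix}
\qquad (1)
```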

The coefficients are:

\(\mathbf{M}_{\text{uu}}\) – the matrix of inertia properties

\(\mathbf{D}_{\text{uu}}\) – the matrix of damping properties

\(\mathbf{K}_{\text{uu}}\) – the matrix of stiffness properties

\(\mathbf{K}_{\text{uT}}\) – the coupling matrix to consider the thermally induced strain

\(\mathbf{C}_{\text{TT}}\) – the matrix of heat capacities

\(\mathbf{L}_{\text{TT}}\) – the matrix of heat conduction and heat transfer within the system

\(\mathbf{C}_{\text{Tu}}\) – the coupling matrix to consider the heat generation by deformation

\(\mathbf{u}\) – the vector of displacements

\(\mathbf{T}\) – the vector of temperatures

\(\mathbf{F}\) – the vector of external forces and moments

\(\dot{\mathbf{Q}}\) – the vector of heat flows at the system boundaries

Eliminating the sub-matrices of Eq. (1) that are not required from a technical point of view, given their characteristics, enables the thermal and the mechanical problem to be solved separately and with different time steps (Eqs. (2) and (3)).
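Assuming that the eliminated sub-matrices are the inertia and damping terms (\(\mathbf{M}_{\text{uu}}\), \(\mathbf{D}_{\text{uu}}\)) and the heat generation by deformation (\(\mathbf{C}_{\text{Tu}}\)), which is the usual quasi-static simplification for slow thermal processes, the coupled system separates into a transient thermal part and a static mechanical part:

```latex
\mathbf{C}_{\text{TT}}\,\dot{\mathbf{T}} + \mathbf{L}_{\text{TT}}\,\mathbf{T} = \dot{\mathbf{Q}} \qquad (2)

\mathbf{K}_{\text{uu}}\,\mathbf{u} = \mathbf{F} - \mathbf{K}_{\text{uT}}\,\mathbf{T} \qquad (3)
```

Eq. (2) is integrated in time, whereas Eq. (3) only has to be solved at the points in time for which deformations are required, with the current temperature field acting as load.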

2.2 Modeling Conception

The aim of the modeling is to integrate a state space model into a CNC in the form of process-parallel program code. This code calculates correction values from the current thermal state and the resulting thermally induced deformation of the machine and applies them to the axis set points of the machine control. For this purpose, a programming environment with its own model description language (here MATLAB® with its programming language “M”) constitutes the central working environment. The FEA software environment (here ANSYS®) is used exclusively to create the state space model and the associated system matrices.

From a programmer’s perspective, the modeling follows an object-oriented concept in the form of a class library. The individual classes encapsulate various model information and provide methods for managing a structure model. The class library is divided into four categories:

- Elements and parts represent the sub-models in the sense of an FEA environment and provide, among other things, the import and conversion routines.

- Groups and axes allow parts and elements to be combined into assemblies that are stationary or movable relative to each other.

- Configurations represent the physical domains in the model as well as the individual variants of the model’s pose dependencies in the workspace.

- Matrices and additional information contain the mathematical representation of the sub-models and manage the assignments to the finite elements and nodes as well as their degrees of freedom.

From the user’s perspective, the modeling follows an assembly-oriented concept. The instantiation of the mentioned classes allows the construction of a model hierarchy, which in turn follows the kinematic chain of a machine tool.
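To illustrate the class-library concept, the following Python sketch models the four categories as small data classes and builds a hierarchy along a kinematic chain. All class names, fields and the example layout are hypothetical; the original library is written in MATLAB, and its interface is not given here.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class SystemMatrices:
    """Matrices and additional information of a sub-model (hypothetical container)."""
    capacity: Any                      # e.g. C_TT as a sparse matrix
    conduction: Any                    # e.g. L_TT
    node_ids: List[int]                # assignment of matrix rows to FE nodes


@dataclass
class Part:
    """Sub-model imported from the FEA environment."""
    name: str
    matrices: Optional[SystemMatrices] = None


@dataclass
class Group:
    """Assembly of parts that are stationary relative to each other."""
    name: str
    parts: List[Part] = field(default_factory=list)
    children: List["Group"] = field(default_factory=list)

    def add(self, child: "Group") -> "Group":
        """Attach the next link of the kinematic chain and return it."""
        self.children.append(child)
        return child

    def chain(self) -> List[str]:
        """Names of this group and all groups below it, depth-first."""
        names = [self.name]
        for child in self.children:
            names.extend(child.chain())
        return names


@dataclass
class Axis(Group):
    """Group that is movable relative to its parent along one direction."""
    direction: str = "x"
    position: float = 0.0


@dataclass
class Configuration:
    """One physical domain combined with one discrete pose of the axes."""
    domain: str                        # e.g. "thermal" or "elastic"
    axis_positions: Dict[str, float]
```

Because `Axis` derives from `Group`, movable and stationary assemblies can be nested freely, so the object tree mirrors the kinematic chain from the machine bed to the tool.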

2.3 Modeling Procedure

The setup of a model, the computation of its temperature fields and resulting elastic deformations can be summarized in the following basic procedure, see Fig. 1:

1. Preparation of the CAD geometry for all machine parts: This includes geometry defeaturing, segmentation of functional surfaces, etc., and assembly.

2. Definition of all surfaces where thermal and/or mechanical boundary conditions or coupling conditions will be modeled later.

3. Discretization of the geometry into finite elements, parametrization of the time-invariant quantities (e.g., material parameters) and creation of the system matrices in the FEA software.

4. Export of the required data from the FEA environment.

5. Import of these data into the programming environment mentioned above with structured data storage, and separation of the thermal (Eq. (2)) and mechanical (Eq. (3)) model equations.

6. Supplementing the parameterization functions for the time-dependent parameters and boundary conditions: This includes motion profiles of the machine axes, power loss models, free and forced convection, etc.

7. Simulation of the temperature field in the time domain.

8. Computation of the deformation field from the temperature field at discrete points in time.

9. Calculation and application of the axis correction values and/or visualization and evaluation of the results, either in the programming environment or after re-transmission to the FEA environment.
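Steps 7 and 8 can be illustrated on a toy problem. The sketch below integrates the thermal equation \(\mathbf{C}_{\text{TT}}\dot{\mathbf{T}} + \mathbf{L}_{\text{TT}}\mathbf{T} = \dot{\mathbf{Q}}\) with the implicit Euler method and solves the static mechanical equation \(\mathbf{K}_{\text{uu}}\mathbf{u} = -\mathbf{K}_{\text{uT}}\mathbf{T}\) (no external forces) only at selected output times. The 1-D bar, its material values, the function names and the choice of implicit Euler are assumptions for illustration, not the demonstrator model or the solver used in the paper.

```python
import numpy as np


def bar_model(n=21, length=1.0):
    """Toy 1-D steel bar: returns C_TT, L_TT, K_uu and K_uT (hypothetical values)."""
    h = length / (n - 1)                            # node spacing in m
    area, perimeter = 1e-3, 0.13                    # cross-section in m^2, perimeter in m
    lam, rho_c = 50.0, 3.6e6                        # W/(m K), J/(m^3 K)
    h_conv, E, alpha = 10.0, 2.1e11, 1.2e-5         # W/(m^2 K), Pa, 1/K
    C = np.diag(np.full(n, rho_c * area * h))       # lumped heat capacities (uniform, toy)
    L = np.diag(np.full(n, h_conv * perimeter * h)) # convection to ambient
    K = np.zeros((n, n))
    K_uT = np.zeros((n, n))
    pair = np.array([[1.0, -1.0], [-1.0, 1.0]])
    therm = E * area * alpha / 2 * np.array([[1.0, 1.0], [-1.0, -1.0]])
    for i in range(n - 1):
        nodes = np.ix_([i, i + 1], [i, i + 1])
        L[nodes] += lam * area / h * pair           # heat conduction between neighbours
        K[nodes] += E * area / h * pair             # axial stiffness
        K_uT[nodes] += therm                        # thermal strain coupling
    return C, L, K, K_uT


def simulate(C, L, K, K_uT, q, dt, steps, output_every):
    """Step 7: implicit Euler for the temperature rise T (K above ambient).
    Step 8: displacements u (m) from the temperature field every `output_every` steps."""
    n = C.shape[0]
    A = C / dt + L                                  # constant; would be factorized once in practice
    free = np.arange(1, n)                          # node 0 is clamped (u = 0)
    K_free = K[np.ix_(free, free)]
    T = np.zeros(n)
    results = []
    for k in range(1, steps + 1):
        T = np.linalg.solve(A, C @ T / dt + q)
        if k % output_every == 0:
            u = np.zeros(n)
            u[free] = np.linalg.solve(K_free, -(K_uT @ T)[free])
            results.append((k * dt, T.copy(), u))
    return results
```

The deformation solve is only performed every `output_every` thermal steps, mirroring the separate time steps of the thermal and mechanical problems.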

To limit computation times, especially in steps 7 and 8, it is recommended to reduce the system’s degrees of freedom (DoF) by applying model order reduction (MOR) procedures. These generate the projection matrices \(\mathbf{V}\) and \(\mathbf{W}\) for the subspaces (denoted by index R). The reduced state space model then has the input (control) matrix \(\mathbf{B}_{\text{R}} = \mathbf{W}^{\text{T}}\) and the output (observer) matrices \(\mathbf{C}_{\text{Rth}} = \mathbf{V}\) for the temperatures and \(\mathbf{C}_{\text{Rel}} = -\mathbf{K}_{\text{uu}}^{-1} \cdot \mathbf{K}_{\text{uT}} \cdot \mathbf{V}\) for the elastic displacements.
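A minimal Python sketch of the projection step, assuming a one-sided (Galerkin) projection with \(\mathbf{W} = \mathbf{V}\) and a block Krylov basis that matches moments of the transfer function around s = 0. The paper does not state which MOR procedure is used, so the Krylov choice, the function names and the toy chain model are assumptions. Heat-flow inputs enter here through a matrix B, so the reduced input matrix is \(\mathbf{W}^{\text{T}}\mathbf{B}\), which equals \(\mathbf{W}^{\text{T}}\) when the full heat-flow vector is the input.

```python
import numpy as np


def krylov_basis(C, L, B, order):
    """Orthonormal V spanning {L^-1 B, (L^-1 C) L^-1 B, ...} (block Krylov, s0 = 0)."""
    Q, _ = np.linalg.qr(np.linalg.solve(L, B))
    blocks = [Q]
    for _ in range(order - 1):
        Q, _ = np.linalg.qr(np.linalg.solve(L, C @ blocks[-1]))
        blocks.append(Q)
    V, _ = np.linalg.qr(np.hstack(blocks))          # re-orthonormalize all blocks together
    return V


def reduce_model(C, L, B, V):
    """Galerkin projection (W = V): reduced C_R, L_R and input matrix B_R = W^T B."""
    W = V
    return W.T @ C @ V, W.T @ L @ V, W.T @ B


def output_matrices(V, K_uu, K_uT):
    """C_Rth = V maps reduced states to temperatures, C_Rel maps them to displacements."""
    return V, -np.linalg.solve(K_uu, K_uT @ V)


def chain_model(n=200):
    """Toy thermal chain: heat capacities, conduction + convection, two heat inputs."""
    C = np.diag(np.linspace(150.0, 250.0, n))       # J/K
    L = 0.05 * np.eye(n)                            # convection to ambient, W/K
    i = np.arange(n - 1)
    L[i, i] += 1.0
    L[i + 1, i + 1] += 1.0
    L[i, i + 1] -= 1.0
    L[i + 1, i] -= 1.0                              # conduction between neighbours, W/K
    B = np.zeros((n, 2))
    B[0, 0] = B[150, 1] = 1.0                       # heat sources at nodes 0 and 150
    return C, L, B
```

Because \(\mathbf{L}_{\text{TT}}^{-1}\mathbf{B}\) lies in the span of \(\mathbf{V}\), this reduced model reproduces the full steady-state temperatures exactly; increasing the Krylov order matches further moments and typically improves the transient accuracy.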

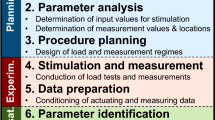

3 Parameter Update

In this section, the approach for the parameter update is explained. Fig. 2 shows the flowchart for the parameter update. On the left, the results of the thermal simulation are cyclically compared with the measured temperatures at specific points (marked with red dots). If the difference between simulated and measured temperatures exceeds the limits required for the model accuracy, measures have to be taken to increase the accuracy of the model. These limits depend on the requirements of the specific model application. Since the model is used to correct thermally induced errors, the goal is to maintain its accuracy throughout the lifetime of the machine. The next step is to check whether a parameter optimization is reasonable. To this end, the parameters in question are varied within a plausible range to determine the minimum and maximum temperatures that the simulation model can reach. This calculation can be done on a server. If the measured temperature lies outside these extrema, a parameter optimization is not reasonable because no plausible parameters allow the model to reach the measured value. Possible reasons are a defective sensor, a defective machine component or an insufficient model. In either case, the user receives a warning ((6) in Fig. 2), and no parameter optimization or update is conducted. If the measured value is within range, a parameter optimization is performed, and its results are fed back to the cyclically running thermal model on the machine control.
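The decision logic of the flowchart can be sketched in Python as follows. The function names and the toy model are hypothetical; in practice, each call of `model` would be a simulation over the time window on the server. Evaluating only the corners of the parameter box assumes that the temperature is monotonic in each parameter, which holds, for example, for scaling factors of heat sources in a linear thermal model.

```python
from itertools import product
from typing import Callable, Sequence, Tuple


def variant_range(model: Callable[[Tuple[float, ...]], float],
                  bounds: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Minimum and maximum sensor temperature reachable with plausible parameters.

    Evaluates the model at every corner of the parameter box; this is only exhaustive
    if the temperature is monotonic in each parameter (assumption).
    """
    temps = [model(corner) for corner in product(*bounds)]
    return min(temps), max(temps)


def update_decision(measured: float, simulated: float, limit: float,
                    model: Callable[[Tuple[float, ...]], float],
                    bounds: Sequence[Tuple[float, float]]) -> str:
    """Return 'ok', 'optimize' or 'warn' for one monitored sensor position."""
    if abs(measured - simulated) <= limit:
        return "ok"                 # model accuracy still within the required limit
    t_min, t_max = variant_range(model, bounds)
    if t_min <= measured <= t_max:
        return "optimize"           # plausible parameters can reproduce the measurement
    return "warn"                   # defective sensor/component or insufficient model
```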

To perform the described procedure, certain information is required:

First, the model parameters ((1) in Fig. 2) are determined that are uncertain over the lifetime of the machine tool and have a significant influence on its temperature field. Heat sources and heat sinks have a dominant influence and are modeled by empirical functions, whose parameters are usually uncertain [7]. Components in which friction occurs can change their behavior during their lifetime due to wear (e.g., pitting, micro-pitting, cracks), changes in lubrication (e.g., aging, regreasing, a different lubricant, filling quantity), changed preload, and fouling with dust and dirt particles.

In the second step, sensor positions have to be selected for the temperature measurement ((2) in Fig. 2). A sensitivity analysis has to be conducted to find the positions at which the temperature is dominantly influenced by a heat source or heat sink. In addition, the influences of the individual heat sources and sinks should be separable at these positions. The datasets required for the analysis can be gathered by simulation or experiments. The analysis uses the temperature field in the thermal steady state, because in this state the heat capacity, which is a reliably known model parameter, has no effect, and the temperature field is determined by the heat conduction (partially uncertain) and the heat sources and sinks (uncertain). As load cases, combined and separate loads of the heat sources and sinks at different levels should be used. One possible method for the sensitivity analysis is the “Pearson partial correlation coefficients adjusted for internal variables” [8, 9]. For each heat source or sink, the temperature sensor position with the highest Pearson partial correlation coefficient is selected. In addition, the p-value has to be checked: if it is above the significance level of 5%, the influence of the heat sources or sinks cannot be separated.
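A minimal Python sketch of the sensor selection, assuming that the partial correlation is computed by regressing out the other loads (with intercept) and that the two-sided p-value comes from Fisher’s z-transformation, which is a large-sample approximation rather than the exact t-test. The function names are hypothetical.

```python
import math

import numpy as np


def partial_corr(x, y, Z):
    """Pearson partial correlation of x and y, controlling for the columns of Z.

    Returns (r, p) with a two-sided p-value from Fisher's z-transformation.
    """
    n = len(x)
    Zc = np.column_stack([np.ones(n), Z])                    # intercept + control variables
    rx = x - Zc @ np.linalg.lstsq(Zc, x, rcond=None)[0]      # residuals of x
    ry = y - Zc @ np.linalg.lstsq(Zc, y, rcond=None)[0]      # residuals of y
    r = float(np.corrcoef(rx, ry)[0, 1])
    k = Zc.shape[1] - 1                                      # number of control variables
    r_c = min(max(r, -1 + 1e-15), 1 - 1e-15)                 # keep atanh finite
    z = math.atanh(r_c) * math.sqrt(n - k - 3)
    return r, math.erfc(abs(z) / math.sqrt(2))


def select_sensor(temps, loads, source, alpha=0.05):
    """Index of the sensor most strongly linked to heat source `source`.

    temps: (cases, sensors) steady-state temperatures, loads: (cases, sources).
    Returns None if the best p-value is not below alpha (influence not separable).
    """
    others = np.delete(loads, source, axis=1)
    stats = [partial_corr(temps[:, s], loads[:, source], others)
             for s in range(temps.shape[1])]
    best = max(range(len(stats)), key=lambda s: stats[s][0])
    return best if stats[best][1] < alpha else None
```

With steady-state temperatures from combined and separate load cases as rows of `temps` and the corresponding load levels as rows of `loads`, `select_sensor(temps, loads, source)` returns the candidate position for one heat source or sink.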

After the selection of the sensor positions, the maximum permissible difference (limit) between measurement and simulation at these positions has to be defined ((3) in Fig. 2). These values determine the accuracy of the correction over the whole lifetime of the machine tool. The first parameterization of the model, based on literature values, is usually uncertain. Therefore, experiments are conducted to adjust the model parameters before the correction is activated. The maximum absolute difference between simulation and measurement after this adjustment is the model accuracy that should be maintained. Whenever the difference between simulated and measured temperatures exceeds these limits, the variant calculations are conducted and, if reasonable, the parameter optimization.

A reasonable range of the model parameters ((4) in Fig. 2) has to be estimated for the calculation of the maximum and minimum reachable temperatures at the measurement positions. This range has to be taken from literature that investigates the behavior of machine components over their lifetime. It also defines the lower and upper bounds for the optimization of the model parameters.

The time window ((5) in Fig. 2) for which the variant calculation and the parameter optimization have to be conducted depends on the thermal time behavior of the machine tool components, which therefore has to be characterized. This behavior can be approximated by first-order lag elements, a property also used by correction approaches that use transfer functions as models [10,11,12]. The time behavior of a first-order lag element depends on its time constant τ, which is determined by fitting a lag element to a simulated step response for a load step. Since the thermal time behavior can be load-dependent, different load steps are applied to the machine component models, e.g., 10%, 50% and 100% of the maximum load. The loads are divided into internal and external influences on the machine component [13]. Typical internal heat sources and sinks are the power losses in the drives, friction and machine cooling. The environment of the machine tool acts as an external influence via heat conduction to the machine foundation, convection to the surrounding air and radiation to the background. The largest time constant \(\tau_{\max}\) determines how large the time window for the variant calculation and the parameter optimization has to be. The permissible error resulting from using only this time window for the calculation of the temperature field should be an order of magnitude smaller than the reasonable parameter range. The temperature error \(\Delta T_{E}\) is quantified relative to the thermal steady-state temperature after a load step, \(\Delta T_{S}\) (e.g., \(\Delta T_{E}/\Delta T_{S} = 1\%\)). With the permissible error and Eq. (4), the time window \(t_{\text{Window}}\) for the variant calculation and parameter optimization can be determined.
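Assuming first-order lag behavior \(\Delta T(t) = \Delta T_{S}\,(1 - e^{-t/\tau})\), any influence that lies further back than the time window has decayed to the fraction \(e^{-t_{\text{Window}}/\tau_{\max}}\) of its steady-state effect. Setting this fraction equal to the permissible relative error gives one plausible form of Eq. (4):

```latex
\frac{\Delta T_{E}}{\Delta T_{S}} = e^{-t_{\text{Window}}/\tau_{\max}}
\quad\Longrightarrow\quad
t_{\text{Window}} = -\tau_{\max}\,\ln\!\left(\frac{\Delta T_{E}}{\Delta T_{S}}\right) \qquad (4)
```

For \(\Delta T_{E}/\Delta T_{S} = 1\%\), this yields \(t_{\text{Window}} = \tau_{\max}\ln 100 \approx 4.6\,\tau_{\max}\); with \(\tau_{\max} = 116\) min it gives 534 min, the value reported in Sect. 5.2.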

In the optimization ((7) in Fig. 2), the sum of squared errors between measured and simulated temperatures is minimized. This requires an optimization method for nonlinear multivariable problems with constrained variables. In this paper, the interior point method [14] is chosen; previous studies [3, 15] have shown this method to be suitable for the parameter optimization of thermal models. All parameters of the machine component belonging to the sensor that exceeds the permitted difference to the simulated value are optimized simultaneously. The optimization is computationally expensive, which is why it should be performed on a server within a trusted execution environment.
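The paper uses the interior point method of [14]. As a simplified stand-in, the Python sketch below minimizes the sum of squared temperature errors over bounded scaling factors with a basic log-barrier interior-point scheme (Newton steps with a decreasing barrier weight). It assumes that the simulated temperatures depend linearly on the scaling factors, which holds when the factors scale heat sources of a linear thermal model; the function name and tolerances are hypothetical, and this is not the algorithm of [14].

```python
import numpy as np


def barrier_least_squares(A, b, lb, ub, mu=1.0, shrink=0.1, mu_min=1e-10):
    """Minimize ||A s - b||^2 subject to lb < s < ub with a log-barrier interior-point method.

    A: sensitivity of the monitored temperatures to each scaling factor (samples x params),
    b: measured temperatures minus the parameter-independent part of the simulation.
    """
    def phi(x, mu):
        """Barrier objective: squared error minus mu * sum of log distances to the bounds."""
        return np.sum((A @ x - b) ** 2) - mu * np.sum(np.log(x - lb) + np.log(ub - x))

    s = (lb + ub) / 2.0                                  # strictly feasible start
    while mu > mu_min:
        for _ in range(100):                             # Newton iterations for fixed mu
            g = 2 * A.T @ (A @ s - b) - mu * (1 / (s - lb) - 1 / (ub - s))
            H = 2 * A.T @ A + mu * np.diag(1 / (s - lb) ** 2 + 1 / (ub - s) ** 2)
            step = -np.linalg.solve(H, g)
            decrement = -(g @ step)                      # squared Newton decrement
            if decrement < 1e-14:
                break
            t = 1.0
            while np.any(s + t * step <= lb) or np.any(s + t * step >= ub):
                t *= 0.5                                 # keep the iterate strictly feasible
            while t > 1e-12 and phi(s + t * step, mu) > phi(s, mu) - 0.25 * t * decrement:
                t *= 0.5                                 # Armijo backtracking line search
            s = s + t * step
        mu *= shrink                                     # tighten the barrier
    return s
```

Here `lb` and `ub` would be the plausible parameter bounds, e.g., 0.1 and 1.45 for the friction scaling factors in Sect. 5.2.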

4 Trusted Execution Environment

Servers located in data centers provide a cost-efficient yet powerful option for executing parameter optimizations at machine runtime and during simulations. To address security concerns, e.g., data theft or manipulation by co-located, potentially malicious software or personnel, modern technologies offer protection beyond encrypting data on a hard drive. Software can now be shielded even against privileged software, e.g., the operating system, which could otherwise use its elevated permissions for attacks. During execution, when code and data have been loaded into memory, other (malicious) software could try to read, steal or corrupt data. Such data include machine models and parameter settings, whose manipulation could have disastrous effects in production.

Among other technology providers, Intel has developed dedicated hardware instructions called Software Guard Extensions (Intel SGX) [16] that allow the creation of a trusted execution environment (TEE). A TEE executes software in an enclave whose encryption is handled solely by the processor. Both the executed code and the data loaded into main memory are encrypted directly by the processor, which effectively shields them from access by any other application. Combined with encrypted communication, parameter optimizations can thus be executed on a server remote from the machines in production without sacrificing security.

While encryption increases security, it also requires additional operations. Because all data and code accessed in memory must be decrypted and encrypted by the processor during execution, a potentially non-negligible performance reduction can result. Reducing the performance penalty imposed by Intel SGX has been the focus of many researchers; optimized code can be executed with a 10% to 20% slowdown, while non-optimized code can face a 100% slowdown (calculations take twice as long).

5 Application at Demonstrator Machine

The approach for the parameter update is demonstrated on the model of a Cartesian 3-axis machine, shown in Fig. 3. The slides of the machine are built from braced aluminum plates to obtain a lightweight structure. Three ball screw axes drive the Z-slide, two linear direct drives drive the Y-slide, and one linear direct drive drives the X-slide. The components are connected via profile rail guides and bearings. Except for the main spindle, the machine has no built-in cooling. Owing to the aluminum plate construction and the uncooled heat sources in the machine structure, the machine is susceptible to thermally induced errors, which makes it an interesting demonstration object.

5.1 Model of the Demonstrator Machine

The entire structure model is divided into the main assemblies named above. The geometry models of the main assemblies are created and prepared from the original CAD data, with the following simplifications for the subsequent FE model:

- the removal of all screw holes,

- the removal of all tension rods, because no suitable multi-physics elements exist for them in the FEA system used,

- the reduction of the cross-sections of the profile rail guides to a rectangular profile and of the ball screw spindles to a circular profile, and

- the segmentation of the functional surfaces, especially on the guiding elements, so that they correspond to the actual travel lengths of the axes.

Each main assembly has its own FE submodel. These submodels define the solid bodies of the components, all surfaces for the boundary and transition conditions (for convection, thermal transition, contact stiffness, etc.), the finite element mesh and the resulting system matrices according to Eq. (1). In this state, the FE model of the complete machine including the test arbor has 345,256 degrees of freedom in the thermal submodel and 1,035,768 in the elastic submodel.

Importing the model data into MATLAB, instantiating the model objects and grouping them according to the kinematic chain results in the model structure shown in Fig. 4. The tension rods, the contacts in bearings and guides, and the machine grounding are supplemented by additional finite elements from the library. For clarity, these elements and all interface objects for the boundary conditions are omitted from the figure; the interface objects are assigned to the configurations.

Because calculating the thermally induced displacements in the elastic model at every time step is time-consuming, the workspace is divided into 3 × 3 × 3 discrete grid points, and the solution is computed only for these points. Figure 5 shows three of the resulting 27 configurations in MATLAB.
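The following Python sketch enumerates the 27 poses and, as an assumption not stated in the text, interpolates a correction value trilinearly between them for intermediate axis positions. Only the Y range of ±190 mm appears in this paper; the X and Z ranges, the names and the interpolation scheme are hypothetical.

```python
import itertools

import numpy as np

# Hypothetical grid positions in mm (only the Y range of +/-190 mm appears in the text)
GRID = {"X": np.array([-200.0, 0.0, 200.0]),
        "Y": np.array([-190.0, 0.0, 190.0]),
        "Z": np.array([0.0, 100.0, 200.0])}


def configurations():
    """All 3 x 3 x 3 = 27 axis poses for which the elastic model is solved."""
    return list(itertools.product(GRID["X"], GRID["Y"], GRID["Z"]))


def interpolate(values, pos):
    """Trilinear interpolation of a correction value between the grid poses.

    values: array of shape (3, 3, 3) with one TCP displacement per pose,
    pos: (x, y, z) axis position inside the workspace.
    """
    idx, w = [], []
    for axis, p in zip("XYZ", pos):
        g = GRID[axis]
        i = int(np.clip(np.searchsorted(g, p) - 1, 0, len(g) - 2))  # lower grid cell index
        idx.append(i)
        w.append((p - g[i]) / (g[i + 1] - g[i]))                    # weight of upper node
    result = 0.0
    for corner in itertools.product((0, 1), repeat=3):
        weight = np.prod([wk if c else 1 - wk for wk, c in zip(w, corner)])
        result += weight * values[tuple(i + c for i, c in zip(idx, corner))]
    return float(result)
```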

Figure 6 shows two simulated temperature fields of the demonstrator machine as an example. The left temperature field results from a continuous movement of the Y-axis over 15 min; the right one shows the temperature field after 120 min of movement. The temperature at the linear direct drives and the profile rail guides increases significantly, which results in a temperature rise in the Y- and Z-slides. Therefore, the model parameters of the power losses in the profile rail guides and linear direct drives have a large impact on the temperature field.

5.2 Parameter Update of Friction Model of Profile Rail Guides

In the demonstrator machine model, the parameters that are uncertain over the lifetime belong to components subject to friction, wear and changes in lubrication: the profile rail guides, the bearings, and the contacts between ball screw spindles and ball screw nuts. The profile rail guides in the Y-direction are chosen as an example in this paper.

A total of 47 Pt100 resistance temperature sensors are installed in the demonstrator machine, 26 of them in the Y- and Z-slides. For these 26 sensors, a sensitivity analysis is conducted with the “Pearson partial correlation coefficients adjusted for internal variables” and a wide range of simulated load cases. Two sensors under the profile rail guides in the Y-direction (Y1 and Y2 in Fig. 7) are selected. Their correlation coefficients to the heat source friction in the guides are 0.9978 for both positions, and the p-values are 1.5 × 10⁻²¹ (sensor Y1) and 1.8 × 10⁻²¹ (sensor Y2), far below the significance level of 5%. Therefore, the two sensors are suitable for monitoring the friction in the profile rail guides.

An initial parameter adjustment was carried out based on experiments with different movement speeds of the Y-axis. After the adjustment, the maximum error between measurement and simulation was ±0.82 K for Y1 and ±0.76 K for Y2. These errors are selected as the limits for the deviation between simulation and measurement during further operation of the machine, so a parameter update is initiated whenever they are exceeded. The friction is qualitatively well described by an empirical function [17] but has to be adjusted to match the real behavior quantitatively. Therefore, scaling factors for the calculated friction of both guides are introduced as parameters. The plausible range of these scaling factors is defined based on literature and assumptions. In [18], the friction of the investigated profile rail guides is reduced by up to 90% over the lifetime, including run-in effects at the beginning of the lifetime. Hence, the lower limit of the scaling factor is 0.1. Regreasing the guides can increase the friction. It is assumed that this increase is similar for guides and bearings; newly greased bearings can show up to 45% higher friction [19]. Therefore, the upper limit of the scaling factor is estimated as 1.45.

The thermal time behavior of the Y-slide and the Z-slide is investigated as described in Sect. 3. The maximum time constant is 75 min for the Y-slide and 116 min for the Z-slide. The larger time constant is used to calculate the time window (see Eq. (4)) for the variant calculation and parameter optimization. The maximum error is defined as \(\Delta T_{E} /\Delta T_{S} = 1\%\). The resulting time window is \(t_{\text{Window}} = 534\) min.
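The numbers above can be reproduced with a short Python sketch, assuming Eq. (4) has the first-order-lag form \(t_{\text{Window}} = -\tau_{\max}\ln(\Delta T_{E}/\Delta T_{S})\). The log-linear fit of τ to a step response and the function names are illustrative assumptions; the original fitting procedure is not specified.

```python
import math

import numpy as np


def fit_time_constant(t, temp):
    """Fit a first-order lag temp(t) = dT_s * (1 - exp(-t / tau)) to a step response.

    Uses the last sample as dT_s and a log-linear least-squares fit through the origin;
    a nonlinear fit would be more robust to measurement noise.
    """
    dT_s = temp[-1]
    mask = temp < 0.95 * dT_s                    # avoid the log of values close to zero
    y = np.log(1.0 - temp[mask] / dT_s)          # equals -t / tau for an ideal lag
    slope = np.dot(t[mask], y) / np.dot(t[mask], t[mask])
    return -1.0 / slope


def time_window(tau_max, rel_error):
    """Time window in the form t_window = -tau_max * ln(dT_E / dT_S)."""
    return -tau_max * math.log(rel_error)
```

For the Z-slide, `time_window(116.0, 0.01)` evaluates to about 534.2 min, which matches the time window stated above.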

The initial parameter adjustment was conducted in 2017. In the following, an experiment conducted three years later, in 2020, is evaluated. During the experiment, the Y-slide is moved over nearly the whole axis length, from axis position −190 mm to 190 mm. The movement parameters are a maximum velocity of 0.75 m/s, a maximum acceleration of 1.8 m/s² and a maximum jerk of 400 m/s³. Each movement phase lasts 25 min and is followed by a measurement cycle of 6 min, during which the displacement of the tool center point (TCP) is measured. The measurement cycle involves only slow movements and therefore applies only a small load to the guides. Movement and measurement cycles are repeated until the thermal steady state is nearly reached.

The temperature curves at the sensor positions of both profile rail guides are shown in Fig. 8. The limit (blue dotted line) is exceeded at sensor Y1 after about 49 min of movement (marked with a vertical dotted line), whereas the simulated values (dashed line) at sensor position Y2 stay below the upper limit. After the limit is exceeded, different parameter variants are calculated to determine the minimum and maximum temperatures (marked with blue triangles) reachable with plausible parameters. The measured value at Y1 is 22.83 °C, while the minimum temperature is 21.98 °C and the maximum temperature is 24.58 °C. Since the measured value lies within this range, the parameter adjustment is reasonable. The optimization decreases the friction in the model by 46%. After the optimization, the simulated values fit the measured values (solid line) well; three hours later, the simulated values are slightly lower than the measured values.

Fig. 9 shows the accuracy improvement based on the residual temperature error. The maximum and mean residual errors are compared for the simulation model without parameter update (solid line) and with parameter update (dashed line), evaluated over the 26 temperature sensors in the Y- and Z-slides. After the optimization and the parameter update (dotted vertical line), both the maximum and the mean residual error at these sensor positions are reduced. The mean residual error is reduced from 0.457 K to 0.342 K (about 25%) for the period shown. In conclusion, the parameter update clearly improves the model accuracy with respect to the changes in thermal behavior three years after the initial parameter adjustment.

5.3 Performance in Trusted Execution Environment

In addition to the evaluation above, we evaluated the calculation of the temperature model as an example of a computationally intensive task executed within a trusted execution environment (TEE, see Sect. 4). To use the dedicated hardware instructions that create the encrypted enclave, we used the SCONE framework [20]. SCONE allows applications to be executed within a TEE on Intel CPUs without code modifications or recompilation. However, some limitations apply: SCONE is only available for Linux and currently does not support MATLAB. Therefore, the MATLAB code for the temperature model calculations was ported to Python. The Python code was then executed in different variants on a server running Ubuntu Linux 20.04. As it is common practice to run applications on servers in virtualized environments, we additionally used Docker to run the application and evaluated the increase in runtime for the different variants.

Docker is a lightweight and performant alternative to a virtual machine. Similar to virtual machines, Docker containers provide an operating system environment in which the desired application runs, although they share the host’s kernel. Figure 10 depicts the different variants for executing the Python application in different Docker containers and without Docker, as well as the performance impact of the trusted execution environment (SCONE). The bars in Fig. 10 are split into the main calculation of the temperature model with model order reduction (MOR) and the retransformation from the reduced model to the full model. The fastest execution is achieved without Docker virtualization and without the added security of the SCONE framework (TEE): calculating the machine temperature fields for a full hour with a step size of 10 s takes 53.4 s + 6.2 s = 59.6 s. Executing the calculation in a Docker container with Ubuntu Linux as operating system increases the execution time by 17% to 70.2 s. Executing the Python application inside a TEE in a Docker container increases the duration by a further 10% to 77.8 s (or by 30% in total).

6 Summary and Outlook

In this paper, a modeling workflow for the efficient creation and calculation of thermal models is introduced. Based on this kind of model, a workflow for updating the parameters of the machine model over the machine’s lifetime is presented. The approach is demonstrated using the example of a 3-axis machine in lightweight design. It is shown that the approach can help to maintain the accuracy of the thermal model even over long operating times of the machine tool. The parameter optimization can be conducted securely in a trusted execution environment on a server with acceptable additional computation time. Further performance improvements for calculations within trusted execution environments are anticipated from using MATLAB, as it provides higher efficiency than Python. The current execution overhead of about 30% for the trusted execution environment can be reduced further by code optimization.

In future work, the parameters of the empirical friction models (e.g., the lubricant viscosity) should be updated separately. Furthermore, the convection description of the model needs to be improved to increase its overall accuracy.

References

Denkena, B., Abele, E., Brecher, C., Dittrich, M.-A., Kara, S., Mori, M.: Energy efficient machine tools. CIRP Ann. 69, 646–667 (2020). https://doi.org/10.1016/j.cirp.2020.05.008

Großmann, K. (ed.): Thermo-Energetic Design of Machine Tools. LNPE, Springer, Cham (2015). https://doi.org/10.1007/978-3-319-12625-8

Thiem, X., Kauschinger, B., Ihlenfeldt, S.: Online correction of thermal errors based on a structure model. Int. J. Mechatron. Manuf. Syst. 12, 49–62 (2019). https://doi.org/10.1504/IJMMS.2019.097852

Reuss, M., Dadalau, A., Verl, A.: Friction variances of linear machine tool axes. Procedia CIRP 4, 115–119 (2012). https://doi.org/10.1016/j.procir.2012.10.021

Zhou, H.-X., Zhou, C.-G., Wang, X.-Y., Feng, H.-T., Xie, J.-L.: Degradation reliability modeling for two-stage degradation ball screws. Precis. Eng. 73, 347–362 (2022). https://doi.org/10.1016/j.precisioneng.2021.09.018

Zhou, C.-G., Ren, S.-H., Feng, H.-T., Shen, J.-W., Zhang, Y.-S., Chen, Z.-T.: A new model for the preload degradation of linear rolling guide. Wear 482–483, 203963 (2021). https://doi.org/10.1016/j.wear.2021.203963

Schroeder, S., Kauschinger, B., Hellmich, A., Ihlenfeldt, S., Phetsinorath, D.: Identification of relevant parameters for the metrological adjustment of thermal machine models. Int. J. Interact. Des. Manuf. 13(3), 873–883 (2019). https://doi.org/10.1007/s12008-019-00529-y

Stuart, A., Ord, J.K., Arnold, S., Kendall, M.G.: Classical Inference and the Linear Model. 6th edn., Wiley, Chichester (2008)

Fisher, R.A.: The distribution of the partial correlation coefficient. Metron, pp. 329–332 (1924)

Mareš, M., Horejš, O., Havlík, L.: Thermal error compensation of a 5-axis machine tool using indigenous temperature sensors and CNC integrated Python code validated with a machined test piece. Precis. Eng. 66, 21–30 (2020). https://doi.org/10.1016/j.precisioneng.2020.06.010

Bitar-Nehme, E., Mayer, J.R.R.: Modelling and compensation of dominant thermally induced geometric errors using rotary axes’ power consumption. CIRP Ann. 67, 547–550 (2018). https://doi.org/10.1016/j.cirp.2018.04.080

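Wennemer, M.: Methode zur messtechnischen Analyse und Charakterisierung volumetrischer thermo-elastischer Verlagerungen von Werkzeugmaschinen [Method for the metrological analysis and characterization of volumetric thermo-elastic displacements of machine tools]. Apprimus Wissenschaftsverlag, Aachen (2018)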

Weck, M., McKeown, P., Bonse, R., Herbst, U.: Reduction and compensation of thermal errors in machine tools. CIRP Ann. Manuf. Technol. 44, 589–598 (1995). https://doi.org/10.1016/S0007-8506(07)60506-X

Waltz, R.A., Morales, J.L., Nocedal, J., Orban, D.: An interior algorithm for nonlinear optimization that combines line search and trust region steps. Math. Program. 107, 391–408 (2006). https://doi.org/10.1007/s10107-004-0560-5

Thiem, X., Kauschinger, B., Ihlenfeldt, S.: Structure model based correction of machine tools. In: Conference on Thermal Issues in Machine Tools. Verlag Wissenschaftliche Scripten, Auerbach/Vogtl, pp. 309–318 (2018)

Costan, V., Devadas, S.: Intel SGX explained. IACR Cryptol. ePrint Arch. (2016)

Jungnickel, G.: Simulation des thermischen Verhaltens von Werkzeugmaschinen. Modellierung und Parametrierung [Simulation of the thermal behavior of machine tools: modelling and parameterization]. Schriftenreihe des Lehrstuhls für Werkzeugmaschinen, Dresden (2010)

Wang, X.-Y., Zhou, C., Ou, Y.: Experimental analysis of the wear coefficient for the rolling linear guide. Adv. Mech. Eng. 11 (2019). https://doi.org/10.1177/1687814018821744

Schaeffler: Reibung und Erwärmung [Friction and increases in temperature]. Schaeffler medias. https://medias.schaeffler.de/de/friction-and-increases-in-temperature. Accessed 2 Sep 2022

Arnautov, S., et al.: SCONE: secure Linux containers with Intel SGX. In: OSDI, vol. 16, pp. 689–703 (2016)

Acknowledgements

Funded by the German Research Foundation – Project-ID 174223256 – TRR 96.

Rights and permissions

Open Access This chapter is licensed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license and indicate if changes were made.

The images or other third party material in this chapter are included in the chapter's Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the chapter's Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

Copyright information

© 2023 The Author(s)

About this paper

Cite this paper

Thiem, X., Rudolph, H., Krahn, R., Ihlenfeldt, S., Fetzer, C., Müller, J. (2023). Adaptive Thermal Model for Structure Model Based Correction. In: Ihlenfeldt, S. (ed.) 3rd International Conference on Thermal Issues in Machine Tools (ICTIMT2023). ICTIMT 2023. Lecture Notes in Production Engineering. Springer, Cham. https://doi.org/10.1007/978-3-031-34486-2_6

DOI: https://doi.org/10.1007/978-3-031-34486-2_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-34485-5

Online ISBN: 978-3-031-34486-2

eBook Packages: Engineering, Engineering (R0)