Abstract

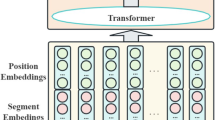

To address the need for better semantic representation, classification accuracy, and efficiency in text sentiment analysis methods, this paper proposes an optimized Transformer-based text sentiment classification model (Shap-PreBiNT). In the text embedding stage, a Shap-Word model is devised to improve text semantic representation: the Shapley-value method is introduced to calculate the contribution weight of each word in a sentence, and these weights are fused with Word2Vec word vectors. In the feature extraction stage, a bidirectional normalization layer is designed to regulate the feature distribution along multiple dimensions. In the network structure optimization stage, a pre-normalization structure is adopted to stabilize the gradient norm and accelerate convergence. Experimental results show that the proposed model outperforms other related models. On the IMDB English dataset, classification accuracy and F1-score reach 94.87% and 94.83%, which are 1.48% and 1.47% higher than those of the Transformer. On the ChnSentiCorp Chinese dataset, the model achieves the highest accuracy and F1-score, 91.82% and 91.66%, respectively, increases of 2.43% and 2.51%.
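The abstract does not spell out how Shap-Word computes or fuses the word weights, so the following is only a minimal sketch of the general idea: exact Shapley values over the words of a short sentence, followed by a simple fusion with word vectors. The value function `toy_value`, the lexicon `TOY_LEXICON`, the example vectors, and the multiplicative fusion in `fuse` are all assumptions made for illustration and are not taken from the paper.

```python
from itertools import permutations
from math import factorial

# Hypothetical lexicon standing in for a trained sentiment scorer (assumption).
TOY_LEXICON = {"great": 2.0, "boring": -1.5}


def toy_value(words):
    """Assumed value function v(S): lexicon score, sign-flipped if 'not' is present."""
    score = sum(TOY_LEXICON.get(w, 0.0) for w in words)
    return -score if "not" in words else score


def shapley_values(words, value_fn):
    """Exact Shapley value of each word position, averaged over all orderings.

    The cost grows as n!, so this is only practical for very short sentences.
    """
    n = len(words)
    phi = [0.0] * n
    for order in permutations(range(n)):
        chosen = []
        prev = value_fn([])
        for i in order:
            chosen.append(i)
            # Evaluate the coalition in original word order.
            cur = value_fn([words[j] for j in sorted(chosen)])
            phi[i] += cur - prev  # marginal contribution of word i
            prev = cur
    return [p / factorial(n) for p in phi]


def fuse(phi, vectors):
    """Assumed fusion: scale each word vector by its normalized |Shapley value|."""
    total = sum(abs(p) for p in phi) or 1.0
    return [[abs(p) / total * x for x in vec] for p, vec in zip(phi, vectors)]


words = ["movie", "not", "great"]
phi = shapley_values(words, toy_value)

# Hypothetical 2-dimensional vectors; a real pipeline would use Word2Vec embeddings.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weighted = fuse(phi, vectors)
```

In this toy game the contributions sum to the score of the full sentence, which is the efficiency property of Shapley values. For sentences of realistic length, the exact enumeration would have to be replaced by sampling a subset of orderings.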

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Zhang, K., Feng, L., Yu, X. (2023). Shap-PreBiNT: A Sentiment Analysis Model Based on Optimized Transformer. In: Li, B., Yue, L., Tao, C., Han, X., Calvanese, D., Amagasa, T. (eds) Web and Big Data. APWeb-WAIM 2022. Lecture Notes in Computer Science, vol 13422. Springer, Cham. https://doi.org/10.1007/978-3-031-25198-6_33

DOI: https://doi.org/10.1007/978-3-031-25198-6_33

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-25197-9

Online ISBN: 978-3-031-25198-6

eBook Packages: Computer Science, Computer Science (R0)