Abstract

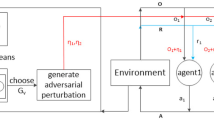

Cooperative Multi-Agent Reinforcement Learning (c-MARL) enables a team of agents to learn a globally optimal policy that maximizes the sum of their accumulated rewards. This paper investigates the robustness of c-MARL to a novel adversarial threat in which we target and weaponize a single agent, termed the compromised agent, so that its actions create natural observations that are adversarial for its team. The goal is to lure the compromised agent into following an adversarial policy that pushes the activations of its cooperative teammates' policy networks off distribution. We mathematically formalize the steps by which such an adversarial policy exploits the centralized-learning, decentralized-execution paradigm of c-MARL. We also empirically demonstrate the susceptibility of two state-of-the-art c-MARL algorithms, MADDPG and QMIX, to the compromised-agent threat by deploying four attack strategies in three environments under both white-box and black-box settings. By targeting a single agent, our attacks substantially degrade the overall team reward in every environment, reducing it by between 33% and 89.6%. Finally, we provide recommendations for improving the robustness of c-MARL.
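To make the threat model above more concrete, here is a minimal toy sketch. Everything in it is a hypothetical assumption, not the paper's setup: a 1-D landmark task, hand-written rule policies standing in for trained MADDPG/QMIX networks, a "scout" convention that teammates are assumed to have learned during training, and a hand-coded adversarial scout in place of a learned adversarial policy. It only illustrates the core idea that, with teammates' policies frozen at decentralized execution, the attacker acts solely through the compromised agent's actions, which reach teammates as ordinary observations. It also shows how a relative team-reward reduction is computed.

```python
"""Toy sketch of a compromised-agent attack in a cooperative team.

Hypothetical setting (NOT the paper's environments or algorithms): agents
move on a 1-D line and earn reward for being near a landmark. We assume that
during cooperative training agent 0 (the "scout") always headed to the
landmark, so teammates learned the convention "follow agent 0". Their
policies are frozen at execution time, so an attacker controlling only
agent 0 can steer the whole team through its actions alone.
"""

from typing import Callable, List

LANDMARK = 0.0
RADIUS = 0.5    # an agent earns reward 1 per step while within this distance
MAX_STEP = 1.0  # maximum movement per step for every agent

Policy = Callable[[float, float], float]  # (own position, observation) -> move


def clip(v: float, lo: float, hi: float) -> float:
    return max(lo, min(hi, v))


def teammate_policy(own: float, scout_pos: float) -> float:
    """Frozen cooperative policy (hypothetical): move toward the observed scout."""
    return clip(scout_pos - own, -MAX_STEP, MAX_STEP)


def benign_scout(own: float, _obs: float) -> float:
    """Cooperative scout: head to the landmark."""
    return clip(LANDMARK - own, -MAX_STEP, MAX_STEP)


def adversarial_scout(own: float, _obs: float) -> float:
    """Compromised scout (hand-coded, not learned): walk away from the landmark."""
    return MAX_STEP if own >= LANDMARK else -MAX_STEP


def team_return(scout: Policy, starts: List[float], horizon: int) -> int:
    """Sum over steps of the team reward (agents within RADIUS of the landmark)."""
    pos = list(starts)
    total = 0
    for _ in range(horizon):
        scout_pos = pos[0]  # every teammate observes the scout's current position
        moves = [scout(pos[0], scout_pos)] + [
            teammate_policy(p, scout_pos) for p in pos[1:]
        ]
        pos = [p + m for p, m in zip(pos, moves)]
        total += sum(1 for p in pos if abs(p - LANDMARK) <= RADIUS)
    return total


def reward_reduction(benign: float, attacked: float) -> float:
    """Relative drop in team return, in percent."""
    return 100.0 * (benign - attacked) / benign


if __name__ == "__main__":
    starts = [2.0, -3.0, 4.0, 1.0]
    r_benign = team_return(benign_scout, starts, horizon=10)
    r_attack = team_return(adversarial_scout, starts, horizon=10)
    print(r_benign, r_attack, f"{reward_reduction(r_benign, r_attack):.1f}%")
```

The reduction this toy prints depends entirely on its made-up dynamics and is not comparable to the 33% to 89.6% range reported for the paper's environments. The toy also omits the off-distribution mechanism on neural policy networks that the paper targets.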

Notes

- Our code and demos are available here: https://github.com/SarraAlqahtani22/MARL-Robustness.

Acknowledgment

This material is based upon work supported by the National Science Foundation (NSF) under grant no. 2105007.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Liu, T., McCalmon, J., Rahman, M.A., Lischke, C., Halabi, T., Alqahtani, S. (2023). Weaponizing Actions in Multi-Agent Reinforcement Learning: Theoretical and Empirical Study on Security and Robustness. In: Aydoğan, R., Criado, N., Lang, J., Sanchez-Anguix, V., Serramia, M. (eds) PRIMA 2022: Principles and Practice of Multi-Agent Systems. PRIMA 2022. Lecture Notes in Computer Science, vol 13753. Springer, Cham. https://doi.org/10.1007/978-3-031-21203-1_21

DOI: https://doi.org/10.1007/978-3-031-21203-1_21

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-21202-4

Online ISBN: 978-3-031-21203-1

eBook Packages: Computer Science, Computer Science (R0)