Abstract

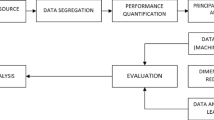

Identifying groups of player behavior is a crucial step in understanding the player base of a game. In this work, we use a recurrent autoencoder to create representations of players from sequential game data. We then apply two clustering algorithms, k-means and archetypal analysis, to identify groups, or clusters, of player behavior. The main contributions of this work are to determine the efficacy of the Wasserstein loss in the autoencoder, to evaluate the loss’s effect on clustering, and to provide a methodology that game analysts can apply to their own games. We perform quantitative and qualitative analyses of combinations of autoencoder models and clustering algorithms and find that using the Wasserstein loss results in better clustering.
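
The abstract does not state how the Wasserstein loss is computed inside the autoencoder. As a hedged illustration of the underlying quantity only, the sketch below computes the Wasserstein-1 (earth mover's) distance between two one-dimensional empirical distributions. It assumes equal sample sizes and uniform weights, in which case the optimal transport plan simply pairs the i-th smallest value of one sample with the i-th smallest value of the other. The function name `wasserstein_1d` and the toy values are hypothetical.

```python
from typing import Sequence


def wasserstein_1d(u: Sequence[float], v: Sequence[float]) -> float:
    """Wasserstein-1 distance between two 1-D empirical distributions.

    Assumes both samples have the same number of points with uniform weights,
    so the optimal transport plan matches sorted values position by position.
    """
    if len(u) != len(v) or not u:
        raise ValueError("samples must be non-empty and of equal length")
    u_sorted, v_sorted = sorted(u), sorted(v)
    # Average distance each unit of probability mass has to move.
    return sum(abs(a - b) for a, b in zip(u_sorted, v_sorted)) / len(u)


# Hypothetical values of one player feature: observed vs. reconstructed.
observed = [0.0, 1.0, 2.0]
reconstructed = [3.0, 1.0, 2.0]
print(wasserstein_1d(observed, reconstructed))  # → 1.0
```

A training loss would need the same computation expressed in differentiable tensor operations (and, for multidimensional distributions, an estimator such as a WGAN-style critic); this plain-Python version only shows the arithmetic.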
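
k-means is one of the two clustering algorithms the abstract applies to the learned player representations. The following is a minimal sketch of Lloyd's algorithm in plain Python, assuming the embeddings are already available as fixed-length tuples of floats; the random initialization, the function names, and the toy data are illustrative assumptions, not the paper's configuration.

```python
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: Sequence[Point], k: int, iters: int = 100,
           seed: int = 0) -> Tuple[List[int], List[Point]]:
    """Lloyd's algorithm: alternate nearest-centroid assignment and mean update."""
    rng = random.Random(seed)
    # Initialize centroids with k distinct input points chosen at random.
    centroids: List[Point] = [tuple(p) for p in rng.sample(list(points), k)]
    labels: List[int] = [-1] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: squared_distance(p, centroids[j]))
            for p in points
        ]
        if new_labels == labels:
            break  # assignments are stable, so centroids will not move
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the old centroid if a cluster becomes empty
                dim = len(members[0])
                centroids[j] = tuple(
                    sum(m[d] for m in members) / len(members) for d in range(dim)
                )
    return labels, centroids


# Hypothetical 2-D player embeddings, e.g. autoencoder latent codes.
embeddings = [(0.0, 0.0), (0.0, 1.0), (10.0, 10.0), (10.0, 11.0)]
labels, centroids = kmeans(embeddings, k=2)
print(labels, centroids)
```

Cluster indices are arbitrary, so only the grouping is meaningful. A production analysis would more typically use a library implementation with k-means++ initialization and several restarts.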

Copyright information

© 2022 IFIP International Federation for Information Processing

About this paper

Cite this paper

Tan, J., Katchabaw, M. (2022). A Reusable Methodology for Player Clustering Using Wasserstein Autoencoders. In: Göbl, B., van der Spek, E., Baalsrud Hauge, J., McCall, R. (eds) Entertainment Computing – ICEC 2022. ICEC 2022. Lecture Notes in Computer Science, vol 13477. Springer, Cham. https://doi.org/10.1007/978-3-031-20212-4_24

DOI: https://doi.org/10.1007/978-3-031-20212-4_24

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-20211-7

Online ISBN: 978-3-031-20212-4

eBook Packages: Computer Science, Computer Science (R0)