Abstract

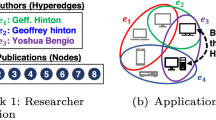

Many problems in network data, such as node classification and link prediction, can be solved using graph embeddings. However, it is difficult to use graphs to capture non-binary relations such as communities of nodes. These kinds of complex relations are expressed more naturally as hypergraphs. Although hypergraphs are a generalization of graphs, state-of-the-art graph embedding techniques are not adequate for solving prediction and classification tasks on large hypergraphs accurately in reasonable time. In this paper, we introduce HyperNetVec, a novel hierarchical framework for scalable unsupervised hypergraph embedding. HyperNetVec exploits shared-memory parallelism and can generate high-quality embeddings for real-world hypergraphs with millions of nodes and hyperedges in only a couple of minutes, whereas existing hypergraph systems either fail on such large hypergraphs or may take days to produce the embeddings.
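The difficulty of capturing non-binary relations with ordinary graphs can be illustrated with a minimal Python sketch. It is not part of HyperNetVec; the function name `clique_expansion` and the toy node labels are assumptions made for illustration only. A hypergraph is stored as a list of node sets, and each hyperedge is flattened into pairwise edges to obtain a plain graph.

```python
from itertools import combinations


def clique_expansion(hyperedges):
    """Replace every hyperedge by all pairwise edges among its nodes.

    Hypothetical helper for illustration; not an API of HyperNetVec.
    """
    edges = set()
    for hyperedge in hyperedges:
        # Sort so that each undirected edge has one canonical orientation.
        for u, v in combinations(sorted(hyperedge), 2):
            edges.add((u, v))
    return edges


# One three-way relation, e.g. a community of three nodes.
community = [{"a", "b", "c"}]
# Three separate pairwise relations over the same nodes.
pairs = [{"a", "b"}, {"b", "c"}, {"a", "c"}]

# Both hypergraphs flatten to the same triangle, so the graph view
# can no longer tell the single community from the three pairs.
same_graph = clique_expansion(community) == clique_expansion(pairs)
print(same_graph)
```

Under these assumptions, the two distinct hypergraphs become indistinguishable once they are flattened, which is one reason to embed the hypergraph directly rather than a graph derived from it.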

Copyright information

© 2022 Springer Nature Switzerland AG

About this paper

Cite this paper

Maleki, S., Saless, D., Wall, D.P., Pingali, K. (2022). HyperNetVec: Fast and Scalable Hierarchical Embedding for Hypergraphs. In: Ribeiro, P., Silva, F., Mendes, J.F., Laureano, R. (eds) Network Science. NetSci-X 2022. Lecture Notes in Computer Science, vol 13197. Springer, Cham. https://doi.org/10.1007/978-3-030-97240-0_13

DOI: https://doi.org/10.1007/978-3-030-97240-0_13

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-97239-4

Online ISBN: 978-3-030-97240-0

eBook Packages: Computer Science, Computer Science (R0)