Abstract

In contrast to mainstream research methods in psychology, the project Children’s Drawings of Gods draws on computer vision and mathematical methods to analyse its data (drawings and drawing annotations). The first part of the present work describes a set of methods designed to extract measures, namely features, directly from the drawings and from their annotations. The dissimilarities between the drawings are then computed from particular features (such as the centre of gravity of the coloured pixels, the smallest image units, or the annotated position of god) and combined in order to measure the differences between the drawings numerically. In the second part, we conduct an exploratory data analysis based on these dissimilarities, including multidimensional scaling and clustering, to determine whether the chosen features allow us to distinguish the different strategies that children used to draw god.



Keywords

- Computer vision

- Children’s drawings

- Mathematical methods

- K-means clustering

- Squared Euclidean dissimilarities

- (Semi-)automatic analysis of drawings

A crucial and original aspect of the project Children’s Drawings of Gods (Footnote 1) is its intercultural and interfaith nature. Intercultural and interfaith comparisons require a large number of drawings. However, it becomes difficult to analyse each drawing with the methods that are standard in psychological research, because these methods are very resource-intensive, especially when a second rater is needed to establish inter-rater agreement. In that case, moreover, the results can be partly subjective, because they depend to some extent on the raters. This project makes an original contribution by drawing on computer vision and mathematical methods to analyse the drawings and to provide (semi-)automatic methods for processing large numbers of drawings.

While computer vision methods are well developed for analysing natural images (see, e.g., Szeliski, 2011), such as aerial photography, human portraits, or natural landscapes, they are less developed for artistic work, such as paintings or drawings, with a few exceptions (see, e.g., Stork, 2009; Manovich, 2012; Romero et al., 2018). Only a few psychological studies of drawings use computer vision (see, e.g., Kim et al., 2007; Kim et al., 2012; Ahi, 2017). Ahi (2017) uses the ImageJ program, a tool widely used in biological imaging and discussed in detail in Schneider et al. (2012). As for annotation tools, they are often developed for specific purposes, and their usage tends to be either too restrictive or too permissive for the aims of a given project (Cocco et al., 2018).

We therefore developed specific methods to answer various research questions (see Table 9.1 for the relation between the research questions, the methods, and the chapters of this volume). In a nutshell, we developed two main types of methods to extract features from the drawings: methods based on annotations and computer vision methods. Both types enable the extraction of counts and measurements (namely, features) from the drawings.

First, regarding the methods based on annotations, we created a specific annotation tool for this project, dubbed Gauntlet (Dessart et al., 2016; Footnote 2). This tool offers a fixed list of items that can be annotated (with a box or a point, depending on the item). Its output is a list of the annotated items with the coordinates of their boxes or points. Two types of annotations were produced with this tool in the present project and its sequel: annotations for anthropomorphism and annotations for position. For anthropomorphism, we annotated all anthropomorphic items in the drawings (Footnote 3); only the names of the items were used here. For position, we placed a rectangular box around the representations of god (Footnote 4) in the drawings, following the instructions detailed in Chaps. 6 and 7 (this volume). From this box, we extracted features, namely the vertical and the horizontal position of god.

Second, we developed various computer vision methods that extract features directly from the drawings. Three general features are discussed here: gravity, which mainly consists of the mean position of the coloured pixels; colour frequencies; and colour organisation (the latter two are based on the RGB (red, green and blue) colour space representation of the drawings).

Although we developed these methods to answer a variety of questions (see Table 9.1), a method can sometimes serve more than one purpose, and more than one method can help answer a single question. For instance, to understand where children draw god on the page, we can: (1) consider the complete composition of the drawing through the mean position of the coloured pixels (gravity features); or (2) focus on the annotated position of the figure of god (position features).

The aim of this work is to push the automated processes further: to see whether expected patterns can be recovered and new patterns discovered with clustering methods that were not used in the other analyses, and to add new features and combine them. In the first part, we present the method: first, the feature extraction; second, the transformation of features into dissimilarities; and third, the clustering method, namely K-means. The next part presents results (illustrations) of clustering applied to the previously defined features and their combinations. Finally, we discuss the contribution of this method to the psychological research questions.

Method

In this section, we first describe the dataset of drawings and drawing annotations. Then we present various features that characterise the drawings (gravity, colour frequencies, colour organisation, god position, and anthropomorphism), features that have been extracted from the drawings and/or from the annotations. We explain how those features are used in computations to measure the dissimilarities between each pair of drawings (dissimilarities based on features). Finally, the clustering technique is described (clustering based on dissimilarities).

Dataset

The dataset contains N = 1211 drawings, indexed i = 1, …, N, of different origins, as summarised in Table 9.2. The drawings were collected between 2003 and 2016 from small groups of children of compulsory school age.

Not all drawings were annotated for position: in drawings with multiple figures, it was difficult, or even impossible, to identify which figure or how many figures represented god. By contrast, all drawings could be annotated for anthropomorphism, except those from Russia, for which this work is still in progress. To date, we have annotated 745 drawings for anthropomorphic characteristics and 1162 for the position of the god figure.

Each drawing i is defined here as a mathematical object: a three-dimensional array of size n × m × d, with d = 3, whose axes code the vertical position on the Y-axis, the horizontal position on the X-axis, and the colour, respectively (think of a regular 3D grid). With y = 1, …, n and x = 1, …, m, each element \( p_{yx} \) represents the colour value of the pixel at coordinates (x, y). More precisely, \( p_{yx} \) is a triplet of values in the RGB colour space.

Features

We extracted two types of features from the drawings in the dataset: features derived from manual annotations (god’s position and anthropomorphism) and features computed automatically from the drawings with computer vision approaches (gravity, colour frequencies, and colour organisation).

Gravity

The binary configuration of the coloured pixels (presence or absence) allows us to extract their so-called mean position, based on the weighted-mean gravity computation proposed by Konyushkova et al. (2015). First, the pixel colour space is converted from RGB to HSV (Hue, Saturation and Value), and the retained coloured pixels \( p_{yx}^b \in \{0, 1\} \) follow

Then, the standardised mean position coordinates \( \left(\overline{x^b},\overline{y^b}\right)\in \left[0,1\right]^2 \) of the coloured pixels are obtained with

where the normalisation factor \( f^b=1/\sum_{xy} p_{yx}^b \) is the inverse of the number of coloured pixels (Footnote 5).

Moreover, the inertia \( \Delta^b \), which measures the dispersion of the coloured pixels, is computed as:

where \( \mathrm{var}(x^b) \) and \( \mathrm{var}(y^b) \) are defined as:

and

Thus, both the weighted mean position and the inertia, hereafter jointly called gravity features, are computed from the coloured pixels only. Consequently, uncoloured areas, for example a part of the page that a child left white, influence these features. A next step in this work will be to find a way to account for such cases, using a method such as the one developed by Seong-in Kim et al. (2012).

To keep the process fully automatic, the whole dataset was included in the analysis of these gravity features. Although we expected some drawings (such as sheets left blank) to be excluded automatically from this part of the analysis, they were not, because there is always at least one coloured pixel, sometimes due to the scanning process or to noise.
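The gravity features can be sketched in Python with NumPy as follows. This is a minimal sketch under stated assumptions, not the chapter's implementation: the coloured-pixel rule is not given in this text, so a pixel is treated as coloured when its HSV saturation exceeds `s_min` or its value is below `v_max` (hypothetical thresholds); pixel indices are standardised to [0, 1] by dividing by m − 1 and n − 1; and the inertia of Eq. (9.2) is taken as var(x^b) + var(y^b). The function names are hypothetical.

```python
import numpy as np


def coloured_mask(rgb, s_min=0.1, v_max=0.9):
    """Binary map p^b_yx: 1 where a pixel is treated as coloured, 0 otherwise.

    rgb: uint8 array of shape (n, m, 3). The "saturated or dark" rule and the
    thresholds s_min, v_max are illustrative assumptions.
    """
    x = rgb.astype(float) / 255.0
    v = x.max(axis=2)                                             # HSV value
    chroma = v - x.min(axis=2)
    s = np.divide(chroma, v, out=np.zeros_like(v), where=v > 0)   # HSV saturation
    return ((s > s_min) | (v < v_max)).astype(float)


def gravity_features(rgb):
    """Return (x_bar, y_bar, inertia) of the coloured pixels, or None if there are none."""
    pb = coloured_mask(rgb)
    n, m = pb.shape
    total = pb.sum()
    if total == 0:
        return None                        # no coloured pixel: features undefined
    fb = 1.0 / total                       # f^b, inverse of the number of coloured pixels
    # Standardised coordinate grids; X[y, x] = x / (m - 1), Y[y, x] = y / (n - 1) (assumption).
    X, Y = np.meshgrid(np.arange(m) / max(m - 1, 1), np.arange(n) / max(n - 1, 1))
    x_bar = fb * (pb * X).sum()
    y_bar = fb * (pb * Y).sum()
    var_x = fb * (pb * (X - x_bar) ** 2).sum()
    var_y = fb * (pb * (Y - y_bar) ** 2).sum()
    return x_bar, y_bar, var_x + var_y     # inertia assumed to be var(x^b) + var(y^b)
```

On a white sheet with two black pixels in opposite corners, the mean position is the centre (0.5, 0.5) and the inertia is 0.5.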

Colour Frequencies

At a finer level, the colour frequencies (in pixel counts) can be extracted automatically for each drawing with the method proposed by Cocco et al. (2019). This method uses a two-step procedure that first assigns every pixel \( p_{yx} \) to one of 117 micro-colours, and then maps these to a palette of G = 10 colours: grey-black scale (achromatic), blue, cyan, green, orange, pink, purple, red, white, and yellow (Footnote 6). For each colour g = 1, …, G, this yields a binary matrix \( b_{yx}^g \) with the same dimensions as the drawing, whose entries are 1 where the pixel has colour g and 0 otherwise. Thus, for each drawing, \( c^g=\sum_{xy} b_{yx}^g \) is the number of pixels of colour g. This allowed us to build a contingency table \( V^{COL} \), with drawings as rows and colours as columns.
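Once each pixel carries a palette index, the counts \( c^g \) and the table \( V^{COL} \) reduce to a histogram per drawing. The sketch below assumes such a per-pixel label image is already available; the two-step micro-colour procedure of Cocco et al. (2019) is not reproduced, and the index order of `PALETTE` and the function names are hypothetical.

```python
import numpy as np

# Palette of G = 10 colours listed in the text; the index order is an assumption.
PALETTE = ["achromatic", "blue", "cyan", "green", "orange",
           "pink", "purple", "red", "white", "yellow"]


def colour_counts(labels, G=len(PALETTE)):
    """c^g = sum_xy b^g_yx for one drawing.

    labels: (n, m) integer array giving, for each pixel, its palette index g.
    Counting label occurrences is equivalent to summing the binary maps b^g_yx.
    """
    return np.bincount(labels.ravel(), minlength=G)


def colour_contingency_table(label_images, G=len(PALETTE)):
    """V^COL: one row per drawing, one column per palette colour."""
    return np.vstack([colour_counts(lab, G) for lab in label_images])
```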

Colour Organization

Looking deeper, each drawing expresses a particular organisation of colours, which can be quantified by a measure of entropy (Parker, 2011): the higher the entropy, the more dispersed or “random” the corresponding colour distribution. Conversely, a lower entropy corresponds to the use of fewer colours and may indicate a more organised state of colours.

The triplet defining the colour of a pixel in the RGB space is first linearly converted to the so-called grey level \( \tilde{p}=0.2125\,p_R+0.7154\,p_G+0.0721\,p_B \), which yields 256 discrete grey levels t = 0, …, 255. Then, for each drawing, the relative frequency with which each grey level t occurs is

with P = n × m the number of pixels in the drawing. Thus, the entropy (in bits) of the drawing’s distribution over the 256 grey levels is

and obeys 0 ≤ H(T) ≤ log2(256) = 8 bits.
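The grey-level entropy can be sketched as below, assuming the standard Shannon entropy \( H(T)=-\sum_t f_t \log_2 f_t \) over the relative frequencies \( f_t \) (the symbol \( f_t \) is introduced here for illustration) and rounding each grey value to the nearest of the 256 integer levels (an assumption). The function names are hypothetical.

```python
import numpy as np


def grey_levels(rgb):
    """Grey level 0.2125 R + 0.7154 G + 0.0721 B, rounded to 256 integer levels."""
    x = rgb.astype(float)
    grey = 0.2125 * x[..., 0] + 0.7154 * x[..., 1] + 0.0721 * x[..., 2]
    return np.clip(np.rint(grey), 0, 255).astype(int)


def grey_entropy(rgb):
    """Shannon entropy (in bits) of the grey-level distribution, between 0 and 8."""
    t = grey_levels(rgb)
    P = t.size                                        # P = n * m pixels
    freq = np.bincount(t.ravel(), minlength=256) / P  # relative frequency f_t
    nz = freq[freq > 0]                               # convention: 0 * log 0 = 0
    return float(-(nz * np.log2(nz)).sum())
```

A uniform sheet gives 0 bits, a half-black, half-white image gives 1 bit, and an image using all 256 levels equally gives the maximum of 8 bits.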

God Position

We annotated drawings in order to locate the god figure in the pictorial space (god position in the image). At this stage, only drawings with a single god figure have been analysed (see Chap. 7, this volume). For each drawing, god’s representation is delimited by a box defined by two points, \( (x_{\min}, y_{\min}) \) in the upper-left corner and \( (x_{\max}, y_{\max}) \) in the lower-right corner. The position is given by the standardised centroid coordinates \( (x^c, y^c) \in [0, 1]^2 \), defined as:
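A minimal sketch of the position feature, assuming that the centroid of the annotated box is standardised by dividing by the image width and height (the exact standardisation is not spelled out in this text). The function name is hypothetical.

```python
def god_position(box, width, height):
    """Standardised centroid (x^c, y^c) of an annotated bounding box.

    box = (x_min, y_min, x_max, y_max) in pixels, with the origin at the
    upper-left corner. Dividing by the image width and height is an assumption.
    """
    x_min, y_min, x_max, y_max = box
    if not (0 <= x_min <= x_max <= width and 0 <= y_min <= y_max <= height):
        raise ValueError("box must lie inside the image")
    x_c = (x_min + x_max) / (2 * width)
    y_c = (y_min + y_max) / (2 * height)
    return x_c, y_c
```

For a 200 × 100 pixel drawing with god boxed in its upper-left quarter, `god_position((0, 0, 100, 50), 200, 100)` returns (0.25, 0.25).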

Anthropomorphism

We annotated drawings with various labels and positions related to anthropomorphism (Footnote 7). For this task, we kept only the labels (positions were not used), and only those directly connected to anthropomorphism. This yields a contingency table \( V^{ANT} = (v_{il}) \), where \( v_{il} \) counts the number of occurrences of the l-th anthropomorphic feature in the i-th drawing. We considered 13 labels, namely:

- heads,

- eyes,

- noses,

- mouths,

- ears,

- hair,

- beard,

- clothes,

- arms,

- hands,

- legs,

- feet and

- no anthropomorphic item.

The last label (l = “no anthropomorphic item”) was assigned (\( v_{il} = 1 \)) when no other label applied to drawing i. This ensures that every drawing has at least one label, so that all drawings can be included in the dissimilarity computation detailed in the section below.
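The construction of \( V^{ANT} \), including the fallback label, can be sketched as follows. The input format (one list of label names per drawing) and the exact label strings are assumptions; the real Gauntlet output format is not described here, and the names are hypothetical.

```python
import numpy as np

# Label names follow the list in the text; the strings stored by the annotation
# tool are an assumption.
ANTHRO_LABELS = ["heads", "eyes", "noses", "mouths", "ears", "hair", "beard",
                 "clothes", "arms", "hands", "legs", "feet"]
NO_ITEM = "no anthropomorphic item"


def anthropomorphism_table(annotations):
    """Build V^ANT (N x 13) from one list of annotated label names per drawing.

    Labels not related to anthropomorphism are ignored; a drawing with no
    anthropomorphic label gets v_il = 1 in the "no anthropomorphic item" column.
    """
    columns = ANTHRO_LABELS + [NO_ITEM]
    index = {name: l for l, name in enumerate(ANTHRO_LABELS)}
    V = np.zeros((len(annotations), len(columns)), dtype=int)
    for i, items in enumerate(annotations):
        for name in items:
            if name in index:
                V[i, index[name]] += 1
        if V[i].sum() == 0:
            V[i, -1] = 1                   # every row gets at least one count
    return V, columns
```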

Dissimilarities Based on Features

Once each drawing has been characterised by univariate or multivariate features, computing the dissimilarity between each pair of drawings i, j is a natural way to reveal their differences within a large dataset.

We computed two types of dissimilarities, depending on the nature of the features. Numerical features yield the N × N symmetric dissimilarity matrix D = (d_ij) with \( d_{ij}^2=\lVert \vec{x}_i-\vec{x}_j\rVert^2 \), the squared Euclidean distances. Categorical features with L modalities instead yield a contingency table \( V = (q_{il}) \) of size N × L, counting the number of occurrences of modality l in drawing i. From the latter, a chi-squared dissimilarity, denoted here χ², can be computed. However, the categorical features under investigation include over-represented modalities that hide subtler, less frequent, yet relevant ones (consider the count of white pixels compared with the counts of pink or yellow pixels). The generalised χ² defined below (Ceré & Egloff, 2018) provides a parameter θ ≥ 0 that adapts the sensitivity of the measure to the high or low frequencies in the distribution of each modality l, with θ > 1 or θ < 1, respectively, while θ = 1 gives the usual χ². Then \( d_{ij}^{\chi^2}=\sum_l v_l\left(\rho_{il}^{\theta}-\rho_{jl}^{\theta}\right)^2 \), where \( v_l=\frac{q_{\bullet l}}{q_{\bullet\bullet}} \) is the modality weight, \( \rho_{il}=\frac{q_{il}\,q_{\bullet\bullet}}{q_{i\bullet}\,q_{\bullet l}} \) is the independence quotient, and a bullet denotes summation over the corresponding index.
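The generalised χ² formula translates directly into NumPy. The sketch below is an illustration under stated assumptions, not the authors' code: rows must be non-empty (which the “no anthropomorphic item” label guarantees for \( V^{ANT} \)), never-observed modalities are dropped to avoid dividing by zero, and the function name is hypothetical.

```python
import numpy as np


def generalized_chi2(Q, theta=1.0):
    """Generalised chi-squared dissimilarities between the rows of a contingency table.

    d_ij = sum_l v_l (rho_il**theta - rho_jl**theta)**2, with v_l = q_.l / q_..
    and rho_il = q_il q_.. / (q_i. q_.l). Rows must be non-empty.
    """
    Q = np.asarray(Q, dtype=float)
    Q = Q[:, Q.sum(axis=0) > 0]            # drop modalities that never occur
    total = Q.sum()                        # q_..
    row = Q.sum(axis=1)                    # q_i.
    col = Q.sum(axis=0)                    # q_.l
    v = col / total                        # modality weights v_l
    rho = Q * total / np.outer(row, col)   # independence quotients rho_il
    R = rho ** theta
    # All pairs at once: an N x N x L array (a Gram-matrix form saves memory for large N).
    diff = R[:, None, :] - R[None, :, :]
    return (v * diff ** 2).sum(axis=-1)
```

With θ = 1 this reduces to the usual χ² distance between row profiles, \( \sum_l (q_{il}/q_{i\bullet}-q_{jl}/q_{j\bullet})^2/v_l \); drawings with proportional rows are at distance 0 for any θ.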

In both cases, these dissimilarities are squared Euclidean, and so are their p-variate mixtures \( D'_{ij}=\sum_{k=1}^{p}\alpha_k D_{ij}^{(k)} \), where \( D^{(k)} \) is the k-th dissimilarity matrix divided by its inertia Δ, and the free coefficients \( \alpha_k \ge 0 \), with \( \sum_{k=1}^{p}\alpha_k=1 \), allow us to tune the relative weight of each contribution.
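A sketch of the standardised mixture follows. It assumes the standard weighted inertia of a dissimilarity matrix, \( \Delta=\tfrac12\sum_{ij} f_i f_j D_{ij} \), which is not spelled out in this section; after division, every matrix has inertia 1, so the coefficients \( \alpha_k \) alone set each feature's influence. Function names are hypothetical.

```python
import numpy as np


def inertia(D, f):
    """Weighted inertia of a squared Euclidean dissimilarity matrix: 1/2 sum_ij f_i f_j D_ij."""
    return 0.5 * f @ D @ f


def mix_dissimilarities(Ds, alphas, f):
    """D' = sum_k alpha_k D^(k), where D^(k) is the k-th matrix divided by its inertia."""
    alphas = np.asarray(alphas, dtype=float)
    if np.any(alphas < 0) or not np.isclose(alphas.sum(), 1.0):
        raise ValueError("alphas must be non-negative and sum to 1")
    return sum(a * D / inertia(D, f) for a, D in zip(alphas, Ds))
```

Under this standardisation, the combination used in the Results, D′ = ½ D^GRAV + ½ D^POS, would correspond to `mix_dissimilarities([D_grav, D_pos], [0.5, 0.5], f)` for hypothetical matrices `D_grav` and `D_pos`.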

Therefore, of the five dissimilarity matrices of Table 9.1, three are straightforward squared Euclidean dissimilarities:

- \( D^{VAR}=\left({\left\Vert H_i(T)-H_j(T)\right\Vert}^2\right) \) from the colour variety feature,

- \( {D}^{GRAV}=\left({\left\Vert \overline{x_i^b}-\overline{x_j^b}\right\Vert}^2+{\left\Vert \overline{y_i^b}-\overline{y_j^b}\right\Vert}^2+{\left\Vert {\varDelta}_i^b-{\varDelta}_j^b\right\Vert}^2\right) \) from the gravity features, and

- \( {D}^{POS}=\left({\left\Vert {x}_i^c-{x}_j^c\right\Vert}^2+{\left\Vert {y}_i^c-{y}_j^c\right\Vert}^2\right) \) from the position features;

and two are chi-squared dissimilarities:

- \( D^{COL} \) from the colour frequencies features and

- \( D^{ANT} \) from the anthropomorphism features.
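The three squared Euclidean matrices share one computation: stack the relevant features per drawing and take all pairwise squared differences. A minimal sketch with a hypothetical function name:

```python
import numpy as np


def squared_euclidean(X):
    """Matrix of squared Euclidean distances d_ij^2 = ||x_i - x_j||^2 between the rows of X."""
    X = np.asarray(X, dtype=float)
    if X.ndim == 1:                        # univariate feature, e.g. the entropy H(T)
        X = X[:, None]
    diff = X[:, None, :] - X[None, :, :]
    return (diff ** 2).sum(axis=-1)
```

For instance, \( D^{POS} \) would be `squared_euclidean(np.column_stack([xc, yc]))` and \( D^{VAR} \) would be `squared_euclidean(H)`, for hypothetical per-drawing arrays `xc`, `yc` and `H`.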

Clustering Based on Dissimilarities

Because the dataset is large (and N will increase substantially as the project continues), a clustering method is needed to identify possibly homogeneous groups of drawings. Among the many available methods, the well-known iterative K-means approach was adapted here, following Cocco (2014), to the formalism D = (d_ij), and used to assign each drawing to one of c < N clusters (Footnote 8). We give each drawing i a uniform weight \( f_i = 1/N \), so that \( \sum_i f_i=1 \).

In contrast with common practice for this method (Footnote 9), we start from a uniformly random binary partition matrix \( Z = (z_{ik}) \), with \( \sum_{k=1}^{c} z_{ik}=1 \): each drawing is initially assigned to a cluster k drawn at random, with \( z_{ik} = 1 \) if drawing i belongs to cluster k and 0 otherwise.

At each iteration, the distance \( D_i^k \) between drawing i and the intermediate centroid of cluster k is

where \( f_i^k = f_i z_{ik}/\rho_k \) is the distribution of drawing i within cluster k and obeys \( \sum_i f_i^k=1 \), and \( \rho_k=\sum_i f_i z_{ik} \) is the relative weight of cluster k. At each iteration, drawing i is assigned to the nearest cluster, \( k_i=\arg\min_{k=1,\dots,c} D_i^k \), that is, \( z_{ik} = 1 \) if k = k_i and \( z_{ik} = 0 \) otherwise, and the process is repeated until the partition Z no longer changes.
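The iteration can be sketched as below. The sketch assumes the standard expression for the distance to a weighted centroid written with squared Euclidean dissimilarities, \( D_i^k=\sum_j f_j^k d_{ij}-\tfrac12\sum_{j,h} f_j^k f_h^k d_{jh} \), which equals the squared Euclidean distance from drawing i to the weighted mean of cluster k. Empty clusters are given an infinite distance (a design choice of this sketch), and the optional `init` argument is a hypothetical addition for reproducible runs.

```python
import numpy as np


def centroid_distances(D, labels, f, c):
    """D_i^k = sum_j f_j^k D_ij - 1/2 sum_jh f_j^k f_h^k D_jh for each cluster k (inf if empty)."""
    Dk = np.full((D.shape[0], c), np.inf)
    for k in range(c):
        members = labels == k
        if not members.any():
            continue                        # empty cluster: keep distance at +inf
        fk = np.where(members, f, 0.0)
        fk = fk / fk.sum()                  # f_i^k = f_i z_ik / rho_k
        Dfk = D @ fk                        # sum_j D_ij f_j^k
        Dk[:, k] = Dfk - 0.5 * (fk @ Dfk)   # subtract half the within-cluster term
    return Dk


def kmeans_dissimilarity(D, c, init=None, seed=None, max_iter=100):
    """K-means on a squared Euclidean dissimilarity matrix, starting from a random partition."""
    N = D.shape[0]
    f = np.full(N, 1.0 / N)                 # uniform weights f_i = 1/N
    if init is None:
        labels = np.random.default_rng(seed).integers(c, size=N)
    else:
        labels = np.asarray(init)
    for _ in range(max_iter):
        new_labels = centroid_distances(D, labels, f, c).argmin(axis=1)  # k_i
        if np.array_equal(new_labels, labels):
            break                           # partition Z no longer changes
        labels = new_labels
    return labels, centroid_distances(D, labels, f, c)
```

On two well-separated groups of one-dimensional points, the returned distances coincide with the squared distances to each cluster mean.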

The number of clusters c is chosen according to the heuristic rule of Hartigan (Chiang & Mirkin, 2010; Hartigan, 1975; Sablatnig et al., 1998), which defines the optimal number of clusters c⋆ as the smallest c for which the Hartigan index \( HK(c)=\left(\frac{w_c}{w_{c+1}}-1\right)\left(N-c-1\right) \) satisfies HK(c) ≤ 10, where \( w_c=\sum_{i,k} z_{ik} D_i^k \) is the sum of the distances of the drawings to the centroids of their clusters when c clusters are used. This criterion stops increasing c once adding a cluster no longer reduces \( w_c \) substantially. If HK(c) ≤ 10 is never reached, the number of clusters is chosen as \( c^{\star}=\arg\min_{2\le c\le 10}\lvert w_c-w_{c+1}\rvert \) (de Amorim & Hennig, 2015).
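The selection rule can be sketched as a small function over precomputed within-cluster sums. The input is assumed to be a dictionary mapping c to \( w_c \), for example obtained as \( \sum_i D_i^{k_i} \) from one clustering run per value of c; the function name is hypothetical, and the fallback requires \( w_c \) for at least one consecutive pair with 2 ≤ c ≤ 10.

```python
def hartigan_choice(w, N, threshold=10.0):
    """Choose c* from within-cluster sums w (a dict c -> w_c).

    Returns the smallest c with HK(c) = (w_c / w_{c+1} - 1)(N - c - 1) <= threshold;
    otherwise falls back to argmin_{2 <= c <= 10} |w_c - w_{c+1}|.
    """
    for c in sorted(w):
        if c + 1 not in w:
            continue
        hk = (w[c] / w[c + 1] - 1.0) * (N - c - 1)
        if hk <= threshold:
            return c
    candidates = [c for c in range(2, 11) if c in w and c + 1 in w]
    return min(candidates, key=lambda c: abs(w[c] - w[c + 1]))
```

For example, with N = 20 and w = {1: 100, 2: 40, 3: 38, 4: 37}, HK(1) = 27 exceeds 10 but HK(2) ≈ 0.89 does not, so c⋆ = 2.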

The procedure above yields distinct and homogeneous clusters of drawings, whereas multidimensional scaling (MDS) allows us to explore the dissimilarities between drawings by representing and visualising the dataset in a lower number of dimensions. Although dimension reduction implies a loss of information (Footnote 10), combining the labelled drawings and their dissimilarities in a 2-dimensional plot is a particularly intuitive way to understand patterns in a large dataset. It is useful for analysing two aspects:

1. The similarities between drawings within a given cluster and between differing clusters,

2. The identification of the uni- or multivariate profile most contributing to the distinction between drawings, or groups of drawings.

To perform the MDS, the matrix of scalar products B is computed from the dissimilarity matrix (Bavaud, 2011; Cocco, 2014) as:

which allows us to define the matrix of weighted scalar products \( K_{ij}=\sqrt{f_i f_j}\,B_{ij} \), whose trace equals the inertia Δ of the configuration. The spectral decomposition K = UΛU′ (where Λ is diagonal and U orthogonal) finally defines the factor coordinates of each drawing i on the new α-th dimension as

where the eigenvalue \( \lambda_\alpha \) represents the inertia explained by the α-th dimension. Ideally, the first dimensions express a large part of the inertia, which justifies the 2-dimensional plots illustrated in the section below.
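A sketch of this weighted MDS, assuming the standard weighted double centring \( B_{ij}=-\tfrac12\left(D_{ij}-D_{i\bullet}-D_{j\bullet}+D_{\bullet\bullet}\right) \), with \( D_{i\bullet}=\sum_k f_k D_{ik} \) and \( D_{\bullet\bullet}=\sum_{k,l} f_k f_l D_{kl} \), and the coordinates \( x_{i\alpha}=\sqrt{\lambda_\alpha}\,u_{i\alpha}/\sqrt{f_i} \). Both formulas are assumptions consistent with the definitions above rather than a transcription of the chapter's equations; the function name is hypothetical.

```python
import numpy as np


def weighted_mds(D, f, dims=2):
    """Weighted classical MDS of a squared Euclidean dissimilarity matrix D.

    Returns coordinates (N x dims) and the share of inertia of each kept dimension.
    """
    f = np.asarray(f, dtype=float)
    Df = D @ f                                        # D_i. = sum_k f_k D_ik
    Dff = f @ Df                                      # D.. = sum_kl f_k f_l D_kl
    B = -0.5 * (D - Df[:, None] - Df[None, :] + Dff)  # weighted double centring
    s = np.sqrt(f)
    K = s[:, None] * B * s[None, :]                   # K_ij = sqrt(f_i f_j) B_ij
    lam, U = np.linalg.eigh(K)                        # K = U Lambda U', ascending order
    order = np.argsort(lam)[::-1][:dims]              # keep the largest eigenvalues
    lam, U = lam[order], U[:, order]
    coords = U * np.sqrt(np.clip(lam, 0.0, None)) / s[:, None]
    return coords, lam / np.trace(K)                  # trace(K) = inertia Delta
```

For points on a line, the first dimension recovers the centred positions (up to sign) and explains 100% of the inertia, mirroring the position-feature case discussed in the Results.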

Results

In this section, we propose some possible answers to the questions listed in Table 9.1. For each feature or combination of features, an MDS and a K-means clustering were computed, as described in the “Method” section, and the MDS was used to plot the results (Footnote 11). For each cluster obtained with K-means, four types of proportions were additionally computed from the metadata of the drawings (country, sex, age and context):

- The proportion of children from each country;

- The proportion of males and females;

- The proportion of children in each age group:

  - Group “young”: until 9 years and 5 months,

  - Group “middle”: between 9 years and 6 months and 12 years and 5 months,

  - Group “old”: at least 12 years and 6 months; and

- The proportion of children met in a religious or in a public context.

Because the number of drawings differs across metadata groups, we adjusted these proportions for sample size: each plot of frequencies by cluster below shows the relative group proportion, which balances the number of subjects in each metadata group. For instance, we re-weighted the number of children in each country so that, for the calculations, the three countries contain the same number of children.
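One way to implement this re-weighting, sketched here as an assumption about the computation rather than the authors' code, is to divide each group's count in a cluster by the group's total size and then normalise within the cluster; this is equivalent to giving every group the same number of drawings. The function name is hypothetical.

```python
from collections import Counter


def relative_group_proportions(clusters, groups):
    """Per-cluster proportions of metadata groups, with every group re-weighted
    to the same total size (e.g. as if each country had the same number of children).
    """
    group_size = Counter(groups)
    result = {}
    for k in sorted(set(clusters)):
        in_k = Counter(g for c, g in zip(clusters, groups) if c == k)
        # Share of each group that falls in cluster k: removes group-size effects.
        rates = {g: in_k[g] / n for g, n in group_size.items()}
        total = sum(rates.values())
        result[k] = {g: r / total for g, r in rates.items()}
    return result
```

For example, if group A has 4 drawings and group B has 2, a cluster holding 3 drawings of A and 1 of B gets relative proportions 0.6 for A and 0.4 for B, rather than the raw 0.75 and 0.25.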

Anthropomorphism

To understand whether children represent god(s) as human, we applied K-means to the χ² dissimilarities \( D^{ANT} \) computed from the anthropomorphism contingency table, with the free parameter θ = 0.5 (Footnote 12); the results are detailed in Figs. 9.1, 9.2, and 9.3. As explained before, these results cannot be used to answer the research questions directly, because the features include the anthropomorphic items of the whole drawing, not only those of the god figure. However, they do allow us to distinguish some patterns that characterise the available dataset.

For each cluster obtained with the K-means applied to anthropomorphic features, this figure shows relative group proportions of children from each country (top left), of males and females (top right), of children in each age group (bottom left), and of children from religious or public school contexts (bottom right)

For instance, the most remarkable cluster (Cluster 3) includes drawings without human items (Fig. 9.2). As expected, this cluster is very distant from the others (Fig. 9.1). Moreover, it contains a majority of drawings by Swiss children, males, and older children and/or collected in a religious context (Fig. 9.3). Cluster 2 is also clearly distinct from the others, containing drawings with on average more than 10 heads, 20 arms and legs, but fewer than five noses or mouths (Fig. 9.2). It contains drawings with many small anthropomorphic figures that lack details, namely mouths and noses. All the drawings in Cluster 2 come from Switzerland and were produced primarily by females and older children in a religious context (Fig. 9.3). Figure 9.2 also shows that Cluster 9 contains drawings with anthropomorphic figures without hands; Cluster 4, more heads than arms or legs; Cluster 7, more than two heads on average; Clusters 5, 6, and 8, one head on average; and Cluster 1, less than one head on average. Cluster 1 is composed of drawings with one main anthropomorphic figure without eyes, as in the drawing by a Japanese boy presented in Fig. 9.4. Children from Japan, from the religious context, and from the older group drew most of the representations in this cluster.

Drawing by a Japanese boy of 13 years and 11 months old in 2003 in a public school context, part of Cluster 1 in the anthropomorphic clustering (see Fig. 9.1) http://ark.dasch.swiss/ark:/72163/1/0105/MIRjVt_RRZuLljX5gHR6rgY.20200318T123648872549Z

Position and Gravity

To understand the position of children’s god representation on the page, we developed two techniques. The first one is based on the position of coloured pixels and takes into account the whole drawing (gravity). The second one is based on an annotation that delimits only the figure of god (position). As explained in the method, the K-means can be applied to a single set of features, such as position or gravity, or to a combination of feature sets, such as position and gravity.

Position

As this feature has only two components, \( x^c \) and \( y^c \), the MDS reproduces the positions exactly, up to the orientation of the axes; thus, the two first dimensions together explain 100% of the variance (see Fig. 9.5).

The first quadrant of Fig. 9.5 (top-right) corresponds to the fourth quadrant of the drawing (bottom-right), the second quadrant of Fig. 9.5 (top-left) to the first quadrant of the drawing (top-right), and so on. The plot is thus the drawing rotated 90° counter-clockwise: Cluster 5 represents drawings in which god’s representation is in the upper-left part of the drawing, while in Cluster 7, god is represented in the lower part of the drawing. This rotation arises because the vertical position of god, represented by the first dimension, explains 71% of the variance, compared with only 29% for the horizontal position: the vertical position of god turns out to differentiate drawings more effectively than the horizontal position.

As shown in Fig. 9.6, the majority of drawings in Cluster 5 (god in the upper-left part of the drawing) were drawn by Russian children, by boys, by children aged between 10 and 12 years and/or by children met in a public context. Cluster 4 represents drawings in which god was drawn on the right part of the page, near the bottom; they were mainly produced by Swiss children and by children met in the religious context. Cluster 7 contains drawings in which god was drawn at the bottom. As in Cluster 4, the majority of these representations were drawn by Swiss children; moreover, they were mainly produced by young children. As in Cluster 5, Clusters 8 and 9, with god drawn in the left middle portion of the page, contain mainly Russian drawings. Moreover, the representations in Cluster 8, where god is depicted slightly below the centre, were mainly drawn by girls, and those in Cluster 9, where god is situated above the centre, were mainly drawn by children in the middle age group.

For each cluster obtained with the K-means applied to position features, this figure shows relative group proportions of children from each country (top left), of males and females (top right), of children in each age group (bottom left), and of children from religious or public school contexts (bottom right)

Gravity

In contrast to the position features, the gravity features include not only the coordinates \( \left(\overline{x^b},\overline{y^b}\right) \) on the X- and Y-axes, Eq. (9.1), but also the inertia \( \Delta^b \), Eq. (9.2). Therefore, the two first dimensions no longer explain 100% of the variance (see Fig. 9.7), and the axes are more difficult to interpret. However, Cluster 1 contains drawings in which the coloured pixels are, on average, at the bottom of the page. These were produced mainly by Swiss children (relative group proportion of 50.2%) and young children (56%). In Cluster 3, drawings in which the coloured pixels are, on average, on the left and rather towards the top of the page were mainly drawn by boys (62.4%) and/or by children in a public school context (63.7%). Finally, in Cluster 5, drawings in which the coloured pixels are, on average, at the top were mainly produced by males (59%).

Combination of Position and Gravity

Although the position and gravity features are obtained with completely different procedures (annotations for the former and computer vision for the latter), the illustrations above suggest that they capture the same type of information. We therefore combined these two features as \( D' = \tfrac{1}{2}D^{GRAV} + \tfrac{1}{2}D^{POS} \), in order to see whether together they provide more information.

Fig. 9.8 looks similar to Figs. 9.5 and 9.7. This is consistent with the fact that \( x^c \) is positively correlated with \( \overline{x^b} \), and \( y^c \) with \( \overline{y^b} \), from Eqs. (9.1) and (9.3), as shown in Table 9.5. Moreover, the MDS seems to capture the position of god better than the centre of gravity of the drawing. Indeed, we again see that the further right a drawing appears in the plot, the higher god is represented on the page; likewise, the further left a drawing appears, the lower god is represented. Drawings in the upper part of the plot seem to show god on the left, but for drawings in the lower part of the plot, the location of god is less predictable.

With the combined features, the clusters become harder to interpret. However, Clusters 1 and 7 (Fig. 9.8) contain drawings in which god is depicted in the lower part of the page. More precisely, Cluster 7 contains drawings with god at the very bottom of the page, mainly produced by Swiss children and younger children (Fig. 9.9). This result is consistent with Cluster 7 obtained above with the position features alone (Figs. 9.5 and 9.6). Cluster 1 (Fig. 9.8) is made up of drawings in which god is depicted farther from the bottom than in Cluster 7; they were again mainly produced by younger children, but also by children from the religious schooling context (Fig. 9.9).

For each cluster obtained with the K-means applied to the combination of position and gravity features, this figure shows relative group proportions of children from each country (top left), of males and females (top right), of children in each age group (bottom left), and of children from religious or public school contexts (bottom right)

Cluster 4 is distinctive: it consists of 39 drawings in which god is situated in the upper-left part of the page (Fig. 9.8). The majority of these drawings were produced by Russian children, by boys and/or by children from a public school context (Fig. 9.9). Finally, the drawings in Cluster 5 (Fig. 9.8), in which god is depicted between the centre and the upper part of the page, were mainly produced by children from a public school context.

Colour

Colour Frequencies

To answer the question about the colours that children chose for their drawings (see Chap. 8, this volume, for more details), K-means was applied to the χ² dissimilarities \( D^{COL} \), computed from the colour contingency table with the free parameter θ = 0.9 (Fig. 9.10).

To better understand the clusters obtained with K-means, we computed, for each cluster, the proportion of pixels of each colour out of the number of coloured pixels (treating all drawings of the cluster as a single bag of pixels). Results are presented in Fig. 9.11.
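The “bag of pixels” proportions can be sketched from the colour counts and the cluster labels. Two assumptions are made here: white is excluded from both the numerator and the denominator (the text excludes it from Fig. 9.11 but does not say whether it enters the denominator), and white has index 8 in the palette order given in the text. The function name is hypothetical.

```python
import numpy as np


def cluster_colour_proportions(V, labels, exclude=(8,)):
    """Per-cluster colour proportions, pooling all drawings of a cluster as one bag of pixels.

    V: (N x G) colour counts; labels: cluster of each drawing; exclude: palette
    indices left out of numerator and denominator (white by default, an assumption).
    """
    V = np.asarray(V, dtype=float)
    labels = np.asarray(labels)
    keep = np.setdiff1d(np.arange(V.shape[1]), exclude)
    out = {}
    for k in np.unique(labels):
        pooled = V[labels == k][:, keep].sum(axis=0)   # bag of pixels for cluster k
        out[int(k)] = pooled / pooled.sum()
    return out, keep
```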

Colour distribution for the seven clusters obtained with K-means (Fig. 9.10). White is not represented in this graph, since it accounts for a large number of pixels in most drawings and would blur the results for the other colours

As we can see, the first cluster is characterised by a high proportion of yellow (Fig. 9.11); these drawings were mainly produced by females and/or collected in a religious context (Fig. 9.13). A sample of drawings from this cluster is presented in Fig. 9.12. In Cluster 4 (only 30 drawings), orange (Footnote 13) is the most important colour, even though it represents only a third of the coloured pixels (Fig. 9.11). Here, we find more Japanese drawings, mostly by girls, young children, and children in a religious context (Fig. 9.13). Clusters 5 and 6 are characterised by a high proportion of blue, especially Cluster 5, in which a blue background (the sky) is drawn (Fig. 9.11). The drawings of both clusters were mainly produced by girls; those of Cluster 5 were produced primarily by young children and/or by children in the context of religious schooling (Fig. 9.13). Finally, Clusters 2, 3, and 7 are characterised by a higher proportion of achromatic colours. Cluster 7, with 31 drawings, has an especially high percentage (more than 70%) of achromatic colours (Fig. 9.11). These drawings were mainly produced by Swiss children, by boys and by children in the religious schooling context (Fig. 9.13).

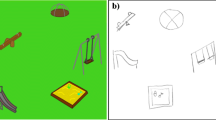

Examples of drawings included in the first cluster of the cluster groups on colours. This figure shows a drawing by a Swiss girl of 10 years and 3 months old in 2009 collected in a public school context (top left) http://ark.dasch.swiss/ark:/72163/1/0105/3eerzOZERRy=S4hVKX5iRwN.20201008T081948966918Z, a drawing by a Swiss boy of 12 years and 8 months old in 2016 collected in a religious context (top right) http://ark.dasch.swiss/ark:/72163/1/0105/QW2PW3EaSb=RYRO999KkxAu.20180702T163238646Z, a drawing by a Japanese girl of 13 years and 9 months old in 2003 collected in a religious context (bottom left) http://ark.dasch.swiss/ark:/72163/1/0105/2EguYRzfSc6KAJN5aEZ1=Q5.20200311T145859927809Z, and a drawing by a Russian girl of 7 years and 1 month in 2009 collected in a religious context (bottom right) http://ark.dasch.swiss/ark:/72163/1/0105/Y96k8K5HT3KUb8hnwq4Y0AU.20180702T194155712Z

Colour Gravity

While dissimilarities can be combined before applying the K-means algorithm, feature-creation methods can also be mixed to obtain new features. To see whether all colours were distributed in the same way on the page, we computed, for each colour, the mean gravity position (centre of gravity) along the X- and Y-axes. The result is presented in Fig. 9.14. First, some colours, such as purple or pink, appear in fewer drawings than others, such as yellow or achromatic. Indeed, the gravity position of a colour is computed only if at least one pixel of the drawing was recognised as being of that colour, and some drawings do not contain certain colours. Second, the mean gravity positions of the different colours (diamond symbols in Fig. 9.14) do not differ substantially. Finally, while the gravity position of white is fairly centred, which is expected since the children used blank sheets of paper, the gravity positions of the other colours are widely dispersed across the sheets.
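A per-colour gravity centre of the kind plotted in Fig. 9.14 can be sketched as follows. This is an illustration under stated assumptions, not the chapter's implementation: the function name `colour_gravity_centres` is hypothetical, each pixel is represented by its centre (index + 0.5 with 0-based indices, mirroring Note 5), and coordinates are divided by the length of the longest side (an assumption loosely based on Note 6).

```python
import numpy as np


def colour_gravity_centres(labels: np.ndarray) -> dict[str, tuple[float, float]]:
    """Mean (x, y) position of the pixels of each colour, in relative units.

    Assumptions (illustrative only):
    - `labels` is a 2-D array of colour categories (rows = y, columns = x);
    - a pixel is located at its centre, i.e. 0-based index + 0.5;
    - positions are divided by the longest side, so they lie in [0, 1].
    Colours absent from the drawing get no entry: their gravity centre
    is undefined for that drawing.
    """
    height, width = labels.shape
    n = max(height, width)
    ys, xs = np.indices(labels.shape)  # row and column index of every pixel
    centres = {}
    for colour in np.unique(labels):
        mask = labels == colour
        x_bar = (xs[mask] + 0.5).mean() / n
        y_bar = (ys[mask] + 0.5).mean() / n
        centres[str(colour)] = (float(x_bar), float(y_bar))
    return centres
```

Returning no entry for absent colours reproduces the observation above that rarer colours such as purple or pink contribute fewer points to the plot.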

Colour Organisation

The last research question we aimed to answer with these automatic methods concerns the overall composition of the page: do children use the whole sheet for their drawings, or do they draw a lone figure with no background (see Table 9.1)? To answer this question, the colour variety (colour organisation) feature seems the most appropriate. The results of K-means clustering on this feature are presented in Fig. 9.15. First, almost 100% of the variance is explained by the first dimension, which is expected since the feature is unidimensional.

As shown in Table 9.3, this first dimension represents colour organisation, and especially the proportion of coloured surface. Drawings with a coloured background are on the left and drawings without a background are on the right. In the middle are drawings whose background is only partly coloured.

Clusters 2 and 5 contain drawings with completely and partially coloured backgrounds, respectively. Cluster 2, at the extreme, consists mainly of drawings produced by young children and by children in the religious schooling context (Fig. 9.16); Cluster 5 contains drawings produced by Japanese children and/or by females. Unlike Clusters 2 and 5, the drawings in Cluster 1 have no background (Fig. 9.15 and Table 9.3). They were mainly drawn by Swiss children, by males, and by children from a public school context (Fig. 9.16).

For each cluster obtained with K-means applied to the colour organisation feature, this figure shows the relative proportions of children from each country (top left), of males and females (top right), of children in each age group (bottom left), and of children from religious or public school contexts (bottom right)
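Because the colour organisation feature is unidimensional, K-means on its squared Euclidean dissimilarities \( (x_i - x_j)^2 \) amounts to K-means on the raw values. The sketch below assumes, hypothetically, that the feature can be approximated by the share of non-white pixels, and uses a plain Lloyd K-means with several random starts; it draws random initial centroids rather than a random initial partition, and keeps the best start, whereas the chapter reports a single start (Note 11). The function names and the `"white"` label are illustrative.

```python
import numpy as np


def coloured_proportion(labels: np.ndarray, white: str = "white") -> float:
    """Hypothetical proxy for colour organisation: share of non-white pixels."""
    return float(np.mean(labels != white))


def kmeans(points: np.ndarray, k: int, n_init: int = 10, max_iter: int = 100,
           seed: int = 0) -> tuple[np.ndarray, float]:
    """Plain Lloyd K-means; returns the labels and within-cluster sum of
    squares of the best of `n_init` random starts."""
    rng = np.random.default_rng(seed)
    x = np.asarray(points, dtype=float).reshape(len(points), -1)  # 1-D -> (n, 1)
    best_labels, best_wss = None, np.inf
    for _ in range(n_init):
        # Random start: k distinct observations serve as initial centroids.
        centroids = x[rng.choice(len(x), size=k, replace=False)]
        for _ in range(max_iter):
            # Squared Euclidean distance of every point to every centroid.
            d2 = ((x[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
            labels = d2.argmin(axis=1)
            # Recompute centroids; an empty cluster keeps its previous centroid.
            new_centroids = np.array([
                x[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                for j in range(k)
            ])
            if np.allclose(new_centroids, centroids):
                break
            centroids = new_centroids
        wss = float(((x - centroids[labels]) ** 2).sum())
        if wss < best_wss:
            best_labels, best_wss = labels, wss
    return best_labels, best_wss
```

Keeping the best of several starts reduces the dependence on the random initialisation that Note 11 mentions.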

Combination of Features Directly Extracted from the Drawing

We combined all of the features extracted directly from the drawings (and thus without direct human intervention) to see whether this combination would enable us to cluster the drawings in a meaningful way. We used three types of features, numeric or categorical (colour organisation, colour counts, and gravity features), so that the dissimilarities for this combination are \( D' = \tfrac{1}{3} D_{\mathrm{VAR}} + \tfrac{1}{3} D_{\mathrm{COL}} + \tfrac{1}{3} D_{\mathrm{GRAV}} \), with \( \theta = 0.5 \) for \( D_{\mathrm{COL}} \). As shown in Fig. 9.17, the first two dimensions explain only 56.65% of the variance, so the plot is difficult to interpret. The same holds for the clusters obtained with K-means. Nevertheless, some clusters display clearer patterns than others.

For instance, Clusters 1, 5, 6, and 8 contain drawings in which only a small surface area is coloured, mostly in the centre. In these drawings, the main elements and some secondary elements appear to be coloured, but not the whole background. By contrast, the drawings in Cluster 9 have a large coloured surface area, often covering the entire sheet.

While the position and gravity features, treated separately, enabled us to distinguish types of drawings, the combination selected here does not seem to produce interesting patterns. As explained above, we chose two types of parameters: \( \alpha_k = 1/3 \) for \( k = 1, \dots, 3 \), and \( \theta = 0.5 \) for \( D_{\mathrm{COL}} \). A next step could be to modify these parameters to investigate whether a more discriminative clustering can be obtained.
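The weighted combination \( D' = \sum_k \alpha_k D_k \) and the share of variance carried by each MDS dimension can be illustrated with a small sketch. It assumes that each \( D_k \) is already a matrix of squared Euclidean dissimilarities on a comparable scale and uses unweighted classical (Torgerson) MDS; the chapter's own MDS may differ (for instance by weighting the drawings), and the function names are hypothetical.

```python
import numpy as np


def combine_dissimilarities(matrices, weights):
    """Weighted sum D' = sum_k alpha_k * D_k of dissimilarity matrices."""
    weights = np.asarray(weights, dtype=float)
    if not np.isclose(weights.sum(), 1.0):
        raise ValueError("weights are expected to sum to 1")
    return sum(w * np.asarray(d, dtype=float) for w, d in zip(weights, matrices))


def classical_mds(d2: np.ndarray, n_dims: int = 2):
    """Classical MDS of a squared Euclidean dissimilarity matrix.

    Returns the coordinates and the proportion of variance (inertia)
    carried by each retained dimension.
    """
    n = d2.shape[0]
    j = np.eye(n) - np.ones((n, n)) / n      # centring matrix
    b = -0.5 * j @ d2 @ j                    # matrix of scalar products
    eigval, eigvec = np.linalg.eigh(b)       # ascending eigenvalues
    order = np.argsort(eigval)[::-1]         # sort in decreasing order
    eigval, eigvec = eigval[order], eigvec[:, order]
    positive = np.clip(eigval, 0.0, None)    # drop tiny negative round-off
    coords = eigvec[:, :n_dims] * np.sqrt(positive[:n_dims])
    explained = positive[:n_dims] / positive.sum()
    return coords, explained
```

With three feature types, the setting above corresponds to `combine_dissimilarities([d_var, d_col, d_grav], [1/3, 1/3, 1/3])`, and `explained.sum()` plays the role of the percentage reported for the first two dimensions. Varying the weights is one way to explore the parameter changes suggested above.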

Combination of All Features

As a final step, all features (those obtained from annotations and those obtained directly from the drawings) are combined with \( \alpha_k = 1/5 \) for \( k = 1, \dots, 5 \), and \( \theta = 0.5 \) for \( D_{\mathrm{COL}} \) and \( D_{\mathrm{ANT}} \). Again, the aim is to determine whether the combination of all the methods (those with and those without human intervention) permits us to cluster the drawings in a meaningful way. The number of drawings included in this analysis is lower than in the previous one because, as mentioned above, some of the drawings were not annotated for the position of the god’s representation and/or for anthropomorphism.

The clustering yielded nine clusters (Fig. 9.18) that are rather difficult to interpret (Table 9.4). Perhaps more salient results could be obtained with a high-dimensional embedding of the dissimilarities based on Schoenberg transformations (Schoenberg, 1938), which transform squared Euclidean dissimilarities into other squared Euclidean dissimilarities (Bavaud, 2011). Yet Schoenberg transformations constitute an infinite family of parametric functional forms, and selecting a particular Schoenberg transformation suited to our purpose is thus beyond the scope of this chapter.
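To make the idea concrete: a Schoenberg transformation \( \varphi \) maps any matrix of squared Euclidean dissimilarities to another such matrix. Two standard members of the family are the power transformation \( D^q \) with \( 0 < q \le 1 \) and \( (1 - e^{-\lambda D})/\lambda \) with \( \lambda > 0 \). The sketch below is not part of the chapter's analysis; it applies both and checks Euclidean embeddability through the positive semi-definiteness of \( B = -\tfrac{1}{2} J D J \). The function names are hypothetical.

```python
import numpy as np


def power_transform(d2: np.ndarray, q: float) -> np.ndarray:
    """Power Schoenberg transformation D -> D**q, valid for 0 < q <= 1."""
    if not 0 < q <= 1:
        raise ValueError("q must lie in (0, 1]")
    return d2 ** q


def exponential_transform(d2: np.ndarray, lam: float) -> np.ndarray:
    """Schoenberg transformation D -> (1 - exp(-lam * D)) / lam, lam > 0."""
    return (1.0 - np.exp(-lam * d2)) / lam


def is_squared_euclidean(d2: np.ndarray, tol: float = 1e-9) -> bool:
    """True if B = -1/2 J D J is positive semi-definite (up to round-off)."""
    n = d2.shape[0]
    j = np.eye(n) - np.ones((n, n)) / n
    b = -0.5 * j @ d2 @ j
    return bool(np.linalg.eigvalsh(b).min() >= -tol * max(1.0, np.abs(b).max()))
```

Squaring instead (q = 2, outside the admissible range) can break embeddability: for three collinear points at 0, 1 and 2, the matrix with entries 1, 1 and 16 fails the check.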

Correlation Between Numeric Features

From the perspective of clustering methods, combining features is worthwhile only if each feature provides additional information to distinguish between the elements. As seen above, including a large number of features in the analysis makes the results harder to interpret. To measure the usefulness of each numeric feature in the clustering, we computed their correlations (Table 9.5; see Note 14). As expected and as already mentioned above, there is a high correlation between the horizontal gravity centre \( \overline{x^b} \) and the horizontal position of god \( x^c \), and likewise between the vertical gravity centre \( \overline{y^b} \) and the vertical position \( y^c \). Thus, we expect that removing either the gravity features or the position features would not substantially change the kind of results obtained.
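A correlation screening of the kind summarised in Table 9.5 can be sketched with NumPy's `np.corrcoef`. The threshold of 0.8, the feature names and the function `correlated_pairs` are illustrative assumptions, not values taken from the chapter.

```python
import numpy as np


def correlated_pairs(features: np.ndarray, names: list[str], threshold: float = 0.8):
    """Pairs of numeric features whose |Pearson r| exceeds `threshold`.

    `features` is an (n_drawings, n_features) array; drawings with missing
    values (e.g. unannotated position) are assumed to be removed beforehand.
    """
    r = np.corrcoef(features, rowvar=False)  # columns are the variables
    pairs = []
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            if abs(r[i, j]) > threshold:
                pairs.append((names[i], names[j], float(r[i, j])))
    return pairs
```

A pair flagged this way, such as a gravity centre and the corresponding annotated position, is a candidate for dropping one of its two members before combining dissimilarities.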

Discussion

The present work has demonstrated the use of computer vision and mathematical methods to process a large number of drawings in research on children’s drawings of god. The results illustrate how these methods can answer specific psychological questions, which are treated in more detail, using different methods, in other chapters of this volume. While our methods yield consistent findings (the majority of conclusions obtained with our methods match those in the related chapters), they also allow us to go further and easily process larger datasets, as described below.

Although only a limited set of drawings has been annotated for anthropomorphism to date, and although the whole drawing was considered (not only the god figure), we can identify various strategies at work. Moreover, we find that these strategies are related to those described in related work on anthropomorphism (see Chap. 4, this volume). For instance, a cluster of drawings without human features emerged. Dessart and Brandt (Chap. 3, this volume) found that the percentage of non-anthropomorphic drawings increases with age and in the religious context. Consistent with their conclusions, we found that this cluster of drawings (those lacking human figures) was composed mostly of drawings by children from the older age group and from the context of religious schooling. In contrast with the results presented by Dessart and Brandt in their research on anthropomorphism, most of the drawings in this cluster were drawn by boys. It should be noted that our work considers drawings from three countries, whereas Dessart and Brandt used only the Swiss dataset for their research on anthropomorphism. Moreover, we did not employ statistical tests in the current chapter because they fell outside the scope of our research focus. Another cluster corresponds to the incomplete strategy of de-anthropomorphization proposed by Dessart and Brandt in their chapter on anthropomorphism. This cluster consists of drawings that contain one main anthropomorphic figure drawn without eyes. The majority of the drawings in this cluster were drawn by older children, consistent with the conclusions of Dessart and Brandt, but they were also mostly drawn by children in the context of religious schooling. Additional research is needed to improve the techniques for isolating and processing the god figure separately from the drawn background that surrounds it. This could possibly be done by adapting the way researchers annotate the drawings.

Regarding the position and gravity features, we have shown that the vertical axis explains more of the variance in position than the horizontal axis does. This finding explains why Dandarova-Robert et al. studied only the vertical position in their research on position (see Chap. 7, this volume). Also consistent with Dandarova-Robert et al., we found that children rarely draw all the way to the edges of the paper or place a god figure at the very edge of the paper. Finally, without contradicting Dandarova-Robert et al.’s findings, we noted that representations of god (or the average pixels) located at the bottom of the page were mainly drawn by young children, while those in the upper part of the page were mainly produced by boys.

The features involving colour frequencies were extracted in the same way as in the work of Cocco et al. on colours (Chap. 8, this volume). They, too, considered only coloured pixels (not the white pixels), but they did not use clustering. Although the method of analysis in Cocco et al. is more detailed, we observe, as expected, the same general results. First, the discriminative colours for the clusters seem to be yellow, orange, blue, and achromatic. This is consistent with Cocco et al.’s finding that the main colours in all countries are, in order of rank, achromatic, yellow, orange, and blue. Second, the cluster with the highest proportion of orange contains more Japanese drawings, which corresponds to our observation during the research that orange is the third most used colour (based on the amount of colour) in children’s drawings from Japan, but not in the drawings from other countries. While the colour organisation features cannot be directly related to Cocco et al.’s work, they allow us to detect, automatically, two different strategies that children use in their drawings: a coloured background or no background.

The results illustrated here are not only consistent with those found in other chapters of this volume; they also enable us to go further. For instance, the anthropomorphism clusters reveal a cluster of drawings with numerous representations of human figures, and the position clusters show that some children choose to draw god more toward the left side of the paper. Moreover, these methods make it possible to deal with a large amount of data, and, for the features that can be extracted directly from the drawings, the analysis can be accomplished without additional human intervention.

In conclusion, there are several ways to differentiate the drawings, depending on the research questions: with or without a background, a high proportion of a specific colour, the number of humans in the drawing, the position of god, and so on. As explained above, this chapter illustrates the results that can be obtained with this type of method. As a next step, a more detailed analysis could be carried out by splitting the dataset by country (as has been done in other chapters of this volume), provided there are enough data, because sharper data-analytic patterns can be expected with larger amounts of data.

Notes

- 1.

The international project, Drawings of Gods: A Multicultural and Interdisciplinary Approach to Children’s Representations of Supernatural Agents, is also known in French as Dessins de dieux (DDD), and referred to in this volume simply as Children’s Drawings of Gods.

- 2.

The annotation tool has since been replaced by another annotation tool based on VIA (VGG Image Annotator), https://www.robots.ox.ac.uk/~vgg/software/via

- 3.

This annotation process included several other types of annotations. However, these were not used in the remainder of this work and are therefore not described here.

- 4.

Why the term god sometimes begins with an uppercase G and sometimes with a lowercase g, and why it sometimes appears in the singular and sometimes in the plural, is explained in the introductory chapter of this book (Chap. 1, this volume).

- 5.

The centre of the pixel is taken as the reference point; thus, we subtract 0.5.

- 6.

For these features, the images were resized so that the length of the drawing’s longest side is normalised to n = 320.

- 7.

It is important to note that the whole drawing was annotated for anthropomorphism. Therefore, the anthropomorphic features refer to the whole drawing, including angels, bishops, etc., and not only to the god character.

- 8.

Our choice of this method is motivated mainly by the goal of providing, as far as possible, an automatic and reproducible procedure that will facilitate further research upon similar datasets.

- 9.

Current practice deals directly with the features rather than the dissimilarities.

- 10.

The proportion of variance explained by each dimension is indicated directly on the plot.

- 11.

Recall that the initial partition in the K-means algorithm is chosen randomly. Thus, the results below correspond to a single start and could differ (although presumably not by much) if another initial partition were chosen.

- 12.

The free parameter θ was determined through numerical experiments using Hartigan’s heuristic rule.

- 13.

As explained in Cocco et al. (2019), the orange category can include brown and beige.

- 14.

The categorical features are not included in this analysis.

References

Ahi, B. (2017). The world of plants in children’s drawings: The color preference and the effect of age and gender on these preferences. Journal of Baltic Science Education, 16(1), 32–42.

Bavaud, F. (2011). On the Schoenberg transformations in data analysis: Theory and illustrations. Journal of Classification, 28(3), 297–314. https://doi.org/10.1007/s00357-011-9092-x

Ceré, R., & Egloff, M. (2018). An illustrated approach to Soft Textual Cartography. Applied Network Science, 3(1), 3–27. https://doi.org/10.1007/s41109-018-0087-y

Chiang, M. M.-T., & Mirkin, B. (2010). Intelligent choice of the number of clusters in K-means clustering: An experimental study with different cluster spreads. Journal of Classification, 27(1), 3–40. https://doi.org/10.1007/s00357-010-9049-5

Cocco, C. (2014). Typologies textuelles et partitions musicales: Dissimilarités, classification et autocorrélation (PhD thesis). Université de Lausanne. Retrieved from https://tel.archives-ouvertes.fr/tel-01074904/document

Cocco, C., Ceré, R., Xanthos, A., & Brandt, P.-Y. (2019). Identification and quantification of colours in children’s drawings. In M. Piotrowski (Ed.), Proceedings of the Workshop on Computational Methods in the Humanities 2018, June 4–5, 2018 (CEUR Workshop Proceedings, Vol. 2314, pp. 11–21). Retrieved from http://ceur-ws.org/Vol-2314/paper1.pdf

Cocco, C., Dessart, G., Serbaeva, O., Brandt, P.-Y., Vinck, D., & Darbellay, F. (2018). Potentialités et difficultés d’un projet en humanités numériques (DH): confrontation aux outils et réorientations de recherche. Digital Humanities Quarterly, 12(1). Retrieved from http://www.digitalhumanities.org/dhq/vol/12/1/000359/000359.html

de Amorim, R. C., & Hennig, C. (2015). Recovering the number of clusters in data sets with noise features using feature rescaling factors. Information Sciences, 324, 126–145. https://doi.org/10.1016/j.ins.2015.06.039

Dessart, G., Sankar, M., Chasapi, A., Bologna, G., Dandarova Robert, Z., & Brandt, P.-Y. (2016). A web-based tool called Gauntlet: From iterative design to interactive drawings annotation. In Digital Humanities 2016: Conference Abstracts (pp. 778–779). Jagiellonian University & Pedagogical University.

Hartigan, J. A. (1975). Clustering algorithms. Wiley.

Kim, S., Bae, J., & Lee, Y. (2007). A computer system to rate the color-related formal elements in art therapy assessments. The Arts in Psychotherapy, 34(3), 223–237.

Kim, S., Han, J., & Oh, Y.-J. (2012). A computer art assessment system for the evaluation of space usage in drawings with application to the analysis of its relationship to level of dementia. New Ideas in Psychology, 30(3), 300–307. https://doi.org/10.1016/j.newideapsych.2012.02.002

Konyushkova, K., Arvanitopoulos, N., Dandarova Robert, Z., Brandt, P.-Y., & Süsstrunk, S. (2015). God(s) know(s): Developmental and cross-cultural patterns in children drawings. arXiv:1511.03466 [cs]. Retrieved from http://arxiv.org/abs/1511.03466

Manovich, L. (2012). How to compare one million images? In D. M. Berry (Ed.), Understanding digital humanities (pp. 249–278). Palgrave Macmillan. https://doi.org/10.1057/9780230371934_14

Parker, J. R. (2011). Algorithms for image processing and computer vision (2nd ed.). Wiley Computer Pub.

Romero, J., Gómez-Robledo, L., & Nieves, J. (2018). Computational color analysis of paintings for different artists of the XVI and XVII centuries. Color Research & Application, 43(3), 296–303. https://doi.org/10.1002/col.22211

Sablatnig, R., Kammerer, P., & Zolda, E. (1998). Hierarchical classification of paintings using face- and brush stroke models. In Proceedings. Fourteenth International Conference on Pattern Recognition (Vol. 1, pp. 172–174). IEEE.

Schneider, C. A., Rasband, W. S., & Eliceiri, K. W. (2012). NIH Image to ImageJ: 25 years of image analysis. Nature Methods, 9, 671–675. https://doi.org/10.1038/nmeth.2089

Schoenberg, I. J. (1938). Metric spaces and positive definite functions. Transactions of the American Mathematical Society, 44(3), 522–536. https://doi.org/10.2307/1989894

Stork, D. G. (2009). Computer vision and computer graphics analysis of paintings and drawings: An introduction to the literature. In X. Jiang & N. Petkov (Eds.), Computer analysis of images and patterns (pp. 9–24). Springer.

Szeliski, R. (2011). Computer vision: Algorithms and applications. Springer.

Acknowledgments

We would like to thank the reviewers for their constructive feedback. We would also like to thank Pierre-Yves Brandt, Zhargalma Dandarova-Robert and François Bavaud for the stimulating discussions about this paper. The research presented in this chapter was partially supported by the Swiss National Science Foundation (SNSF), grant no. CR11I1_156383.

Rights and permissions

Open Access This chapter is licensed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license and indicate if changes were made.

The images or other third party material in this chapter are included in the chapter's Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the chapter's Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

Copyright information

© 2023 The Author(s)

About this chapter

Cite this chapter

Cocco, C., Ceré, R. (2023). Computer Vision and Mathematical Methods Used to Analyse Children’s Drawings of God(s). In: Brandt, PY., Dandarova-Robert, Z., Cocco, C., Vinck, D., Darbellay, F. (eds) When Children Draw Gods. New Approaches to the Scientific Study of Religion, vol 12. Springer, Cham. https://doi.org/10.1007/978-3-030-94429-2_9

DOI: https://doi.org/10.1007/978-3-030-94429-2_9

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-94428-5

Online ISBN: 978-3-030-94429-2

eBook Packages: Religion and Philosophy, Philosophy and Religion (R0)