Abstract

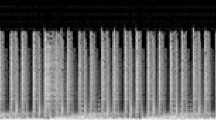

Embedding a music genre classifier in a music recommendation system improves the user experience: such a system predicts tracks that match the user’s taste in music. In this paper, we propose a preprocessing approach for generating short-time Fourier transform (STFT) spectrograms, together with upgrades to a CNN-based music classifier named Bottom-up Broadcast Neural Network (BBNN). The upgrades increase the number of inception and dense blocks and enhance the inception block by adding reduction blocks. The proposed approach outperforms state-of-the-art music genre classifiers in terms of accuracy, achieving 97.51% on the GTZAN dataset and 74.39% on the FMA dataset. Code is available at https://github.com/elachkarcharbel/music-genre-classifier.
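To make the spectrogram input concrete, the following is a minimal sketch of how a log-magnitude STFT spectrogram can be computed from a raw waveform with NumPy. It is not the preprocessing pipeline of the paper: the function name `stft_spectrogram`, the Hann window, the frame size `n_fft=1024`, the hop `hop_length=512`, and the 22050 Hz test tone are all assumptions chosen for illustration.

```python
import numpy as np


def stft_spectrogram(signal, n_fft=1024, hop_length=512):
    """Return a log-magnitude STFT spectrogram in dB.

    Output shape is (n_fft // 2 + 1, n_frames). The window type and the
    default sizes are illustrative assumptions, not values from the paper.
    """
    signal = np.asarray(signal, dtype=np.float64)
    # Zero-pad very short inputs so that at least one full frame exists.
    if signal.size < n_fft:
        signal = np.pad(signal, (0, n_fft - signal.size))

    window = np.hanning(n_fft)
    n_frames = 1 + (signal.size - n_fft) // hop_length

    # Slice the waveform into overlapping frames and apply the window.
    frames = np.stack([
        signal[i * hop_length: i * hop_length + n_fft] * window
        for i in range(n_frames)
    ])

    # One real-input FFT per frame, then transpose to (frequency, time).
    magnitude = np.abs(np.fft.rfft(frames, axis=1)).T

    # Convert to decibels; the small constant avoids log(0).
    return 20.0 * np.log10(magnitude + 1e-10)


# Usage on a synthetic one-second 440 Hz tone (hypothetical test signal).
sample_rate = 22050
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2.0 * np.pi * 440.0 * t)

spec = stft_spectrogram(tone)
peak_bin = int(np.argmax(spec.mean(axis=1)))
peak_hz = peak_bin * sample_rate / 1024  # bin index -> frequency in Hz
```

The resulting two-dimensional array can be treated like a single-channel image, which is what makes a CNN-based classifier such as BBNN applicable to audio. In this sketch the strongest frequency bin of the test tone lies within one bin width of 440 Hz.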

Acknowledgments

This work was funded by the “Agence universitaire de la Francophonie” (AUF) and supported by the EIPHI Graduate School (contract ANR-17-EURE-0002). Computations have been performed on the supercomputer facilities of the “Mésocentre de Franche-Comté”.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

El Achkar, C., Couturier, R., Atéchian, T., Makhoul, A. (2021). Combining Reduction and Dense Blocks for Music Genre Classification. In: Mantoro, T., Lee, M., Ayu, M.A., Wong, K.W., Hidayanto, A.N. (eds) Neural Information Processing. ICONIP 2021. Communications in Computer and Information Science, vol 1517. Springer, Cham. https://doi.org/10.1007/978-3-030-92310-5_87

DOI: https://doi.org/10.1007/978-3-030-92310-5_87

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-92309-9

Online ISBN: 978-3-030-92310-5

eBook Packages: Computer Science, Computer Science (R0)