Abstract

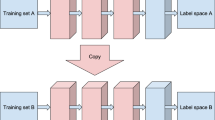

Deep neural networks tend to be accurate but computationally expensive, whereas ensembles tend to be fast but do not capitalize on hierarchical representations. This paper proposes an approach that attempts to combine the advantages of both. Hierarchical ensembles represent an effort in this direction; however, they are not compositional in a representational sense, since they only combine classifier decisions and/or outputs. We propose to take this effort one step further in the form of compositional ensembles, which exploit the composition of the hidden representations of classifiers. The classifiers are here defined as tiny networks, that is, neural networks of significantly limited scale. Accordingly, our particular instance of compositional ensembles is called Compositional Committee of Tiny Networks (CoCoTiNe). We experimented with different CoCoTiNe variants involving different types of composition, input usage, and ensemble decisions. The best variant was more accurate than standard hierarchical committees and comparable in accuracy to vanilla Convolutional Neural Networks, whilst being 25.7 times faster on a standard CPU setup. In conclusion, the paper demonstrates that compositional ensembles, especially in the context of tiny networks, are a viable and efficient approach for combining the advantages of deep networks and ensembles.
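The distinction between combining classifier outputs and composing hidden representations can be illustrated with a minimal sketch. It is not the CoCoTiNe architecture: the `TinyNet` class, the layer sizes, composition by concatenation, and the linear `head` are all assumptions chosen for illustration, and the weights are random and untrained.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Random weight matrix (n_out rows, n_in columns) plus zero biases."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def linear(layer, x):
    """Affine map: one weighted sum plus bias per output unit."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]

def softmax(z):
    m = max(z)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

class TinyNet:
    """Hypothetical tiny network: one small hidden layer and a softmax output."""

    def __init__(self, n_in, n_hidden, n_classes, rng):
        self.hidden_layer = make_layer(n_in, n_hidden, rng)
        self.output_layer = make_layer(n_hidden, n_classes, rng)

    def hidden(self, x):
        return [math.tanh(v) for v in linear(self.hidden_layer, x)]

    def predict(self, x):
        return softmax(linear(self.output_layer, self.hidden(x)))

def output_level_committee(nets, x):
    """Combine outputs only: average the members' class probabilities."""
    probs = [net.predict(x) for net in nets]
    return [sum(col) / len(nets) for col in zip(*probs)]

def compositional_committee(nets, head, x):
    """Compose hidden representations (here by concatenation), then classify."""
    composed = [h for net in nets for h in net.hidden(x)]
    return softmax(linear(head, composed))

# Illustrative sizes only; none of these values come from the paper.
rng = random.Random(0)
n_in, n_hidden, n_classes, n_members = 8, 4, 3, 5
nets = [TinyNet(n_in, n_hidden, n_classes, rng) for _ in range(n_members)]
head = make_layer(n_hidden * n_members, n_classes, rng)
x = [rng.uniform(-1.0, 1.0) for _ in range(n_in)]

p_output = output_level_committee(nets, x)
p_composed = compositional_committee(nets, head, x)
```

In the output-level committee, each member's hidden layer is visible only to that member's own output layer. In the compositional version, the final classifier sees every member's hidden representation at once, which is the sense of "compositional" the abstract describes.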

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Seng, G.H., Maul, T., Kapadnis, M.N. (2021). Compositional Committees of Tiny Networks. In: Mantoro, T., Lee, M., Ayu, M.A., Wong, K.W., Hidayanto, A.N. (eds) Neural Information Processing. ICONIP 2021. Communications in Computer and Information Science, vol 1517. Springer, Cham. https://doi.org/10.1007/978-3-030-92310-5_45

DOI: https://doi.org/10.1007/978-3-030-92310-5_45

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-92309-9

Online ISBN: 978-3-030-92310-5

eBook Packages: Computer Science, Computer Science (R0)