Abstract

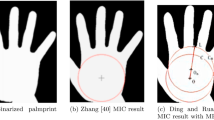

Bimodal palmprint recognition uses palm vein and palmprint images simultaneously, which can achieve high accuracy and is intrinsically resistant to falsification. For bimodal palmprint recognition and verification, detecting and aligning the palmprint region of interest (ROI) are two crucial steps for bimodal palmprint matching. Most existing palmprint ROI detection methods are based on keypoint detection algorithms; however, the intrinsic difficulty of keypoint detection makes their results inaccurate. Moreover, these methods do not fully investigate ROI alignment or image-level feature fusion. To improve performance and bridge this gap, we propose the Bimodal Palmprint Fusion Network (BPFNet), which focuses on ROI localization, alignment, and bimodal image fusion. BPFNet is an end-to-end deep learning framework with two parts. The detection network directly regresses the palmprint ROIs and performs alignment by estimating a translation. Downstream, the fusion network fuses the bimodal ROI images using a novel cross-modal selection scheme. To demonstrate the effectiveness of BPFNet, we conduct experiments on two touchless palmprint datasets, on which the proposed framework achieves state-of-the-art performance.
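The abstract says the detection network aligns the two modalities by estimating a translation, but it does not give the exact parameterization. The sketch below is only an illustration under stated assumptions: the ROI is an axis-aligned square described by a center (cx, cy) and a side length, and the estimated translation (dx, dy) is the pixel offset of the palm vein ROI relative to the palmprint ROI. The helper names `crop_roi` and `aligned_bimodal_rois` are hypothetical and are not taken from the paper.

```python
import numpy as np


def crop_roi(image: np.ndarray, cx: float, cy: float, size: int) -> np.ndarray:
    """Crop a size x size square from a 2-D image, centered at (cx, cy).

    Pixels of the window that fall outside the image are zero-padded.
    (Hypothetical helper; assumes a grayscale, 2-D image.)
    """
    half = size // 2
    x0 = int(round(cx)) - half  # left edge of the crop window
    y0 = int(round(cy)) - half  # top edge of the crop window
    out = np.zeros((size, size), dtype=image.dtype)
    h, w = image.shape
    # Intersection of the crop window with the image bounds.
    sx0, sy0 = max(x0, 0), max(y0, 0)
    sx1, sy1 = min(x0 + size, w), min(y0 + size, h)
    if sx0 < sx1 and sy0 < sy1:
        out[sy0 - y0:sy1 - y0, sx0 - x0:sx1 - x0] = image[sy0:sy1, sx0:sx1]
    return out


def aligned_bimodal_rois(print_img, vein_img, center, size, translation):
    """Crop the palmprint ROI at `center` and the palm vein ROI at
    `center` shifted by the assumed translation (dx, dy)."""
    cx, cy = center
    dx, dy = translation
    print_roi = crop_roi(print_img, cx, cy, size)
    vein_roi = crop_roi(vein_img, cx + dx, cy + dy, size)
    return print_roi, vein_roi


# Usage: simulate a vein image offset by 1 row down and 2 columns right.
print_img = np.arange(100, dtype=np.float32).reshape(10, 10)
vein_img = np.roll(print_img, shift=(1, 2), axis=(0, 1))
p_roi, v_roi = aligned_bimodal_rois(
    print_img, vein_img, center=(5, 5), size=4, translation=(2, 1)
)
```

In this toy example the two crops contain identical pixels once the translation is applied, which is the property an alignment step is meant to provide before image-level fusion. A learned detector would predict the center, size, and translation rather than receive them as inputs.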



Acknowledgement

This work is supported by the Shenzhen Institute of Artificial Intelligence and Robotics for Society. It is also supported in part by the NSFC under Grants 6217070450 and 62076086, the Shenzhen Science and Technology Program (RCBS20200714114910193), and the Open Project Fund from the Shenzhen Institute of Artificial Intelligence and Robotics for Society (AC01202005017).


Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Li, Z., Liang, X., Fan, D., Li, J., Zhang, D. (2021). BPFNet: A Unified Framework for Bimodal Palmprint Alignment and Fusion. In: Mantoro, T., Lee, M., Ayu, M.A., Wong, K.W., Hidayanto, A.N. (eds) Neural Information Processing. ICONIP 2021. Communications in Computer and Information Science, vol 1517. Springer, Cham. https://doi.org/10.1007/978-3-030-92310-5_4


DOI: https://doi.org/10.1007/978-3-030-92310-5_4

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-92309-9

Online ISBN: 978-3-030-92310-5

eBook Packages: Computer Science, Computer Science (R0)