Abstract

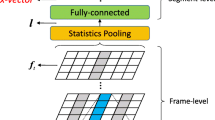

Time Delay Neural Network (TDNN)-based speaker embeddings extraction have become the dominant approach for text-independent speaker verification. Several single and hybrid deep learning architectures have been proposed for improving the performance. In this paper, we propose yet another hybrid configuration that employs Convolution Neural Network (CNN), TDNN and Long Short-Term Memory (LSTM) for training and extraction of speaker discriminant utterance level representations. In the proposed framework, we also use SpecAugment for on the fly data augmentation and multi-level statistics pooling from across CNN, LSTM and TDNN layers for aggregating the frame level information into utterance level speaker embeddings via fully connected layers. For performance evaluation of the proposed framework, speaker verification experiments are carried out across NIST SRE 2016, Voxceleb and Short duration Speaker Verification (SdSV) challenge 2021 corpora. Evaluation metrics chosen for performance evaluation are equal error rate (EER), minimum decision cost function (minDCF), and actual decision cost function (actDCF). Experimental results depict the proposed hybrid approach yielding improvements over the original TDNN, TDNN-LSTM hybrid architecture, as well as some other previously-proposed approaches.

Access this chapter

Tax calculation will be finalised at checkout

Purchases are for personal use only

Similar content being viewed by others

References

Bhattacharya, G., Alam, J., Kenny, P.: Deep speaker recognition: modular or monolithic?. In: Proceedings Interspeech 2019, pp. 1143–1147 (2019)

Chen, C., Zhang, S., Yeh, C., Wang, J., Wang, T., Huang, C.: Speaker characterization using TDNN-LSTM based speaker embedding. In: ICASSP 2019–2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 6211–6215 (2019)

Chung, J.S., Nagrani, A., Zisserman, A.: Voxceleb2: deep speaker recognition. arXiv preprint arXiv:1806.05622 (2018)

Dehak, N., Kenny, P.J., Dehak, R., Dumouchel, P., Ouellet, P.: Front-end factor analysis for speaker verification. IEEE Trans. Audio Speech Lang. Process. 19(4), 788–798 (2011)

Desplanques, B., Thienpondt, J., Demuynck, K.: Ecapa-tdnn: emphasized channel attention, propagation and aggregation in tdnn based speaker verification. Interspeech 2020, October 2020. http://dx.doi.org/10.21437/Interspeech.2020-2650

Gao, S., Cheng, M., Zhao, K., Zhang, X., Yang, M., Torr, P.H.S.: Res2net: a new multi-scale backbone architecture. CoRR abs/1904.01169 (2019). http://arxiv.org/abs/1904.01169

Garcia-Romero, D., McCree, A.: Supervised domain adaptation for i-vector based speaker recognition. In: 2014 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 4047–4051 (2014)

Garcia-Romero, D., Sell, G., Mccree, A.: MagNetO: x-vector magnitude estimation network plus offset for improved speaker recognition. In: Proceedings Odyssey 2020 The Speaker and Language Recognition Workshop, pp. 1–8 (2020)

Gusev, A., et al.: Deep speaker embeddings for far-field speaker recognition on short utterances (2020)

Hajavi, A., Etemad, A.: A deep neural network for short-segment speaker recognition. In: Proceedings Interspeech (2019), pp. 2878–2882 (2019)

Hansen, J.H.L., Hasan, T.: Speaker recognition by machines and humans: a tutorial review. IEEE Sig. Process. Mag. 32(6), 74–99 (2015)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. CoRR abs/1512.03385 (2015). http://arxiv.org/abs/1512.03385

Hu, J., Shen, L., Sun, G.: Squeeze-and-excitation networks. CoRR abs/1709.01507 (2017). http://arxiv.org/abs/1709.01507

Huang, C.L.: Speaker characterization using TDNN, TDNN-LSTM, TDNN-LSTM-attention based speaker embeddings for NIST SRE 2019. In: Proceedings Odyssey 2020 The Speaker and Language Recognition Workshop, pp. 423–427 (2020)

Li, N., Tuo, D., Su, D., Li, Z., Yu, D.: Deep discriminative embeddings for duration robust speaker verification. In: Proceedings Interspeech (2018), pp. 2262–2266 (2018)

Liang, T., Liu, Y., Xu, C., Zhang, X., He, L.: Combined vector based on factorized time-delay neural network for text-independent speaker recognition. In: Proceedings Odyssey 2020 The Speaker and Language Recognition Workshop, pp. 428–432 (2020)

Lin, W., Mak, M.W., Yi, L.: Learning mixture representation for deep speaker embedding using attention. In: Proceedings Odyssey 2020 The Speaker and Language Recognition Workshop, pp. 210–214 (2020)

Monteiro, J., Albuquerque, I., Alam, J., Hjelm, R.D., Falk, T.: An end-to-end approach for the verification problem: learning the right distance. In: International Conference on Machine Learning (2020)

Novoselov, S., Shulipa, A., Kremnev, I., Kozlov, A., Shchemelinin, V.: On deep speaker embeddings for text-independent speaker recognition. CoRR abs/1804.10080 (2018). http://arxiv.org/abs/1804.10080

Park, D.S., et al.: SpecAugment: a simple data augmentation method for automatic speech recognition. In: Proceedings Interspeech (2019), pp. 2613–2617 (2019)

Povey, D., et al.: The kaldi speech recognition toolkit (2011)

Sadjadi, S.O., et al.: The 2016 NIST speaker recognition evaluation. In: Proceedings Interspeech (2017), pp. 1353–1357 (2017)

Snyder, D., Garcia-Romero, D., Sell, G., Povey, D., Khudanpur, S.: X-vectors: robust dnn embeddings for speaker recognition. In: 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5329–5333 (2018)

Sztahó, D., Szaszák, G., Beke, A.: Deep learning methods in speaker recognition: a review (2019)

Tang, Y., Ding, G., Huang, J., He, X., Zhou, B.: Deep speaker embedding learning with multi-level pooling for text-independent speaker verification. In: ICASSP 2019–2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 6116–6120 (2019)

Wang, S., Rohdin, J., Plchot, O., Burget, L., Yu, K., Černocký, J.: Investigation of specaugment for deep speaker embedding learning. In: ICASSP 2020–2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 7139–7143 (2020)

Xiang, X., Wang, S., Huang, H., Qian, Y., Yu, K.: Margin matters: Towards more discriminative deep neural network embeddings for speaker recognition. arXiv preprint arXiv:1906.07317 (2019)

Xie, W., Nagrani, A., Chung, J.S., Zisserman, A.: Utterance-level aggregation for speaker recognition in the wild. In: ICASSP 2019–2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5791–5795. IEEE (2019)

Zeinali, H., Lee, K.A., Alam, J., Burget, L.: Short-duration speaker verification (SdSV) challenge 2021: the challenge evaluation plan (2021)

Zhang, R., et al.: ARET: aggregated residual extended time-delay neural networks for speaker verification. In: Proceedings Interspeech (2020), pp. 946–950 (2020)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Alam, J., Fathan, A., Kang, W.H. (2021). Text-Independent Speaker Verification Employing CNN-LSTM-TDNN Hybrid Networks. In: Karpov, A., Potapova, R. (eds) Speech and Computer. SPECOM 2021. Lecture Notes in Computer Science(), vol 12997. Springer, Cham. https://doi.org/10.1007/978-3-030-87802-3_1

Download citation

DOI: https://doi.org/10.1007/978-3-030-87802-3_1

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-87801-6

Online ISBN: 978-3-030-87802-3

eBook Packages: Computer ScienceComputer Science (R0)