Abstract

Uncertainty affects all phases of the product life cycle of technical systems, from design and production to their usage, even beyond the phase boundaries. Its identification, analysis and representation are discussed in the previous chapter. Based on the knowledge gained, our specific approach to mastering uncertainty can be applied. The approaches follow common strategies that are described in the subsequent chapter, but require individual methods and technologies. In this chapter, legal and technical aspects of mastering uncertainty are discussed first. Then, techniques for product design of technical systems under uncertainty are presented. The propagation of uncertainty is analysed for particular examples of process chains. Finally, semi-active and active technical systems and their relation to uncertainty are discussed.


The first chapters of this book provide the conceptual basis for mastering uncertainty in design, production and usage of load-bearing structures in mechanical engineering. This forms the fundamental floor of our house, presented in Chap. 1. Besides presenting our motivation, Chap. 1 reflects on the source and quality of models, as well as on data and the term “structures”. Chapter 2 provides a consistent classification and definition of uncertainty. This is essential for our specific approach to mastering uncertainty, which is described in Chap. 3, making use of three exemplary technical systems, which are introduced in Sect. 3.6. On the middle floor of the framework of mastering uncertainty, presented in Fig. 1.12, we discuss methods for analysis, quantification and evaluation of uncertainty in Chap. 4 and now introduce methods and technologies to apply our approach to technical systems.

Therefore, we first cover the technical and legal requirements for mastering uncertainty with a focus on product safety and liability in Sect. 5.1. Although legal uncertainty usually expresses itself during product usage, it has to be considered from the very beginning of the product life cycle during design and production. The technical specification of products, which has to define the technical requirements on the product, affects the uncertainty at an early stage of the development process. From a legal perspective, two points are important: firstly, to meet product safety requirements, sufficient knowledge of the legal framework regarding the specific application must be available, which might be challenging, especially for new developments; secondly, liability risks have to be minimised during the design and production phase.

In Sect. 5.2, we propose several methods to uncover and master uncertainty during the design phase. To this end, we first introduce a method for the estimation of uncertainty occurring during the whole product life cycle, and a generic process model used to uncover uncertainty in production or application processes. Furthermore, uncertainty arising from models, which are indispensable for product design, is discussed, and different ways to deal with it are presented. Our specific approach to mastering uncertainty in the product development process is presented using our three demonstrator systems: the Modular Active Spring-Damper System, see Sect. 3.6.1, the Active Air Spring, see Sect. 3.6.2, and the 3D Servo Press, see Sect. 3.6.3.

The production phase usually takes place in the form of a process chain. Each single process, as well as the material passing through the process chain, is subject to uncertainty. The uncertainty is propagated through the process chain, see Sect. 3.2. In Sect. 5.3, we give examples of how to master this propagation of uncertainty in process chains.

Section 5.4 deals with methods and technologies to manipulate single processes and their application to both the production and usage phase. Based on the definition of semi-active and active processes, which is given in Sect. 3.3, we cover the manipulation of production processes using innovative components. Furthermore, we present several semi-active and active technologies to master uncertainty within the usage phase.

5.1 Technical and Legal Requirements for Mastering Uncertainty

Defining requirements in order to master uncertainty is the main goal of the following section. Both technical and legal requirements may be established. Regarding the product phases design (A), production (B) and usage (C) presented in Fig. 1.3, legal uncertainty usually manifests itself during the usage phase if the product causes damage. Ideally, legal requirements influence technical requirements and are already considered during the design and production phase of the product in order to achieve the highest possible certainty regarding legal liability of producers. The economic impact of legal requirements can be derived from the legal framework. We will focus on product safety and product liability. In the following, we understand product development as the totality of all steps leading to a marketable product, including design and production.

In Sect. 1.6 product design was discussed as a constrained optimisation problem, where the objective function has three challenging aspects, namely (i) minimal effort, (ii) maximal availability and (iii) maximal acceptability. Conformity with product liability is the formal aspect of acceptance.

The process of defining specifications in engineering design can be understood as a socio-technical system, whereby the functionality and quality of a product are the result of a complex and dynamic interaction of different stakeholder groups. In Sect. 5.1.1, we examine how specifications are formulated. Furthermore, we consider the way specifications are used in product development. For this purpose, a classification of specifications into objectives and constraints is introduced.

From the technical perspective, defining a precise and complete technical specification is the basis for the subsequent development process of any new product. In classical engineering design, uncertainty in the use of the product can derive from the misinterpretation of product requirements during product development. This uncertainty can potentially be addressed at a very early stage of the development process. We will discuss the general problem of specification uncertainty, as well as the impact technical specifications may have on the overall uncertainty of a complex load-bearing system in Sect. 5.1.2.

In order to clarify product requirements from a legal perspective, we need to apply the abstract knowledge of the legal framework to specific applications. Combining legal expertise with the fields of engineering and mathematics allows a more user-oriented approach to discuss the problem of legal uncertainty where it occurs.

Therefore, product safety requirements and the importance of technical standards will be addressed in order to prevent cost-intensive product recalls. For innovative products in particular, the problem arises that producers may not be able to rely on technical standards in their development process, as such standards do not yet exist. The question of how producers should cope with this uncertainty is also part of our discussion in Sect. 5.1.3.

From a product liability perspective, many liability-causing legal risks can be avoided in the design of the product and during the organisation of the production process. We take an application-oriented approach to answer the question of which measures the producer needs to implement in order to minimise liability risks. The specific requirements for producers using algorithmic optimisation techniques in product development (Sect. 5.1.5) are of a different nature than the requirements for producers implementing an autonomous production process (Sect. 5.1.4). In both cases, the difficult question arises of how liability risks can be minimised when using innovative development techniques or production methods.

Technical standards, although not always legally binding, are often the basis of contracts when selling products. Compliance with standards is therefore a requirement for products. However, technical standards must apply to many different occasions, and the language used is therefore ambiguous. In Sect. 5.1.6, an information system is proposed that detects semantic uncertainty in standards and provides suggestions to the users of standards to resolve the uncertainty.

Uncertainty does not only affect product development, but planning processes in general. In the scientific discipline of project management, concepts, methods and practices for dealing with uncertainty have been developed during the last decades. In Sect. 5.1.7, we reflect on these approaches from a historical perspective in order to provide a better understanding of current tools for planning processes.

In addition to the general technical methods for mastering uncertainty, we hope to provide another dimension to the tools which are discussed in Sect. 3.3.

5.1.1 ‘Just Good Enough’ Versus ‘as Good as It Gets’: Negotiating Specifications in a Conflict of Interest of the Stakeholders

Technical specifications provide requirements of the product or services that are considered in the design process and are discussed, quantified and checked by the stakeholders involved. In classical engineering design, they are supposed to be defined at the beginning of the product life cycle. This classic approach, which dates back to Pahl and Beitz [215] and forms the foundation of the guideline VDI 2221 [271], is related to uncertainty for two reasons. Firstly, at the beginning of the engineering design process, various ways of using and misusing the product have to be anticipated. Secondly, in retrospect, the product functionality and quality resulting from every engineering design process can be seen as the outcome of a complex and dynamic interaction of the three stakeholders (1) supplier, (2) customer, (3) society. For us, the supplier is in most cases identical with the manufacturer. Several strategies are known for mastering this uncertainty, such as user-centred design, requirement management and agile project management. A review of these methods exceeds the scope of this section. Instead, the following pages reflect on how specifications are used as a design method in the context of Sustainable Systems Design (SSD) and of mathematical optimisation. Moreover, they outline the process of how specifications are formulated by the three stakeholders mentioned above.

Classification of specifications into objectives and constraints

In SSD, see Sect. 1.6, Figs. 1.9 and 1.10, a constrained optimisation problem in the form of “maximise quality subject to functionality” is solved in the design process. Quality corresponds to the objectives and the functionality to the constraints of the optimisation problem.

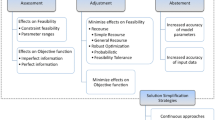

The tree diagram in Fig. 5.1 provides a classification into two branches, objectives and constraints, which have four specification types in the leaves of the tree. There is one objective (a) and three different types of constraints (b), (c), (d):

- (a) quality objective,
- (b) quality constraints,
- (c) functional constraints,
- (d) restricted design space.

The assignment to constraints and sub-objectives is done by each stakeholder individually, even though the same system is addressed, as we will see in the following. The term “constraints” originates from the field of mathematical optimisation and describes the restricted solution space of the optimisation problem. In the context of technical specifications, it describes possible restrictions and limitations, but also demands and requirements.

- (a) Quality objectives are specified by weighted sub-objectives. Weights are impact-specific weighting factors often known as cost factors (e.g. pricing the environmental impact by \(\mathrm{CO}_2\) taxation). Also, impact-specific incentives (e.g. financial subsidies for improvement) can be used as weights. The sub-objectives are the three quality directions minimal effort, maximal availability and maximal acceptability, see Sect. 1.6. The three quality directions are in agreement with Taguchi’s quality engineering methodology [258]. Taguchi demands that manufacturers also consider the effort, i.e. the economic costs and the costs to society, as a quality measure. The weighted sub-objectives are approximated by agents (humans and/or machines) in an incremental Continuous Improvement Process (CIP) controlled by the target and impact-specific weighting factors. Hence, a quality objective defines a direction and not the required quality level.
- (b) Quality constraints ensure such a required quality level. The advantage of quality constraints over quality objectives is the clear commitment. The disadvantage is that constraints are fixed and not optimised. Hence, optimal quality, and thus sustainability, will never be reached by quality constraints. A typical quality specification is a cost limit to be reached in a design-to-cost engineering process or the setting of a pollution limit.
- (c) Functional constraints are specified on the basis of expressed or anticipated customer needs.
- (d) Restricted design space is given by available technologies, resources, operating modes and services. The design space may be extended by innovations or restricted by banning technologies, such as demanding carbon-free energy supply.

Fig. 5.1 The tree diagram (solid line) classifies system specifications in the context of SSD, as presented in Sect. 1.6, into objectives and constraints. There are (a) quality objectives, (b) quality constraints and (c) functional constraints specified on the basis of named or anticipated customer needs. A restriction of the design space (d) is the third and last constraint
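The four specification types can be illustrated with a minimal sketch of “maximise quality subject to functionality”. The sketch is an illustration under stated assumptions, not the SSD formulation of Sect. 1.6: the `Design` fields, the weights, the limits and the candidate values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Design:
    name: str
    technology: str        # checked against the restricted design space (d)
    effort: float          # e.g. cost; lower is better
    availability: float    # fraction in [0, 1]; higher is better
    acceptability: float   # score in [0, 1]; higher is better
    load_capacity: float   # functional performance, e.g. in kN


# (a) Quality objective: weighted sub-objectives (hypothetical weights).
WEIGHTS = {"effort": -1.0, "availability": 50.0, "acceptability": 20.0}
# (b) Quality constraint: a fixed limit, e.g. a design-to-cost limit.
MAX_EFFORT = 100.0
# (c) Functional constraint: derived from an expressed customer need.
MIN_LOAD_CAPACITY = 10.0
# (d) Restricted design space: technologies that may be used.
ALLOWED_TECHNOLOGIES = {"fuel_cell", "battery"}


def quality(d: Design) -> float:
    """Weighted sum of the three quality directions."""
    return (WEIGHTS["effort"] * d.effort
            + WEIGHTS["availability"] * d.availability
            + WEIGHTS["acceptability"] * d.acceptability)


def is_feasible(d: Design) -> bool:
    """Check constraints (b), (c) and (d)."""
    return (d.effort <= MAX_EFFORT
            and d.load_capacity >= MIN_LOAD_CAPACITY
            and d.technology in ALLOWED_TECHNOLOGIES)


def best_design(candidates: Sequence[Design]) -> Optional[Design]:
    """Return the feasible candidate with the highest quality, if any."""
    feasible = [d for d in candidates if is_feasible(d)]
    return max(feasible, key=quality) if feasible else None


# Hypothetical candidates for illustration only.
CANDIDATES = [
    Design("A", "diesel", 60.0, 0.95, 0.5, 12.0),
    Design("B", "fuel_cell", 90.0, 0.90, 0.8, 11.0),
    Design("C", "battery", 120.0, 0.97, 0.9, 15.0),
    Design("D", "fuel_cell", 80.0, 0.85, 0.7, 10.0),
    Design("E", "battery", 70.0, 0.90, 0.8, 8.0),
]

if __name__ == "__main__":
    print(best_design(CANDIDATES))
```

In this sketch the constraints only filter candidates, whereas the objective ranks the remaining ones. This mirrors the statement that a quality objective defines a direction and not a required quality level.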

Section 1.6 classifies acceptability into informal and formal acceptability. Formal acceptability is achieved by ensuring legal conformity or compliance with a contract between stakeholders. Hence, formal acceptability is assigned to quality specifications in the first place. Informal acceptability is fostered through positive user experience or positively perceived functional performance. The quality objective in Robust Design is a prominent example as presented in the third case study of Sect. 3.5. But how does the process of specifying objectives and constraints work? How are quality directions given and assigned to the different stakeholder groups?

Product functionality and quality are negotiated in a cybernetic system of the three external stakeholder groups (1) supplier, (2) customer, (3) society. Internal stakeholders, i.e. the employees of a company, are not the primary focus here. To understand the basic dynamics and outcome of this negotiating process, the external stakeholders can be modelled as agents in a cybernetic control system. The purpose of this section is not to provide and evaluate a detailed model, but to illustrate how the boundaries of SSD can be extended from a techno-economic system to a socio-technical system, see Fig. 5.2.

Cybernetic stakeholder system

The values of various parameters relevant in the process of negotiating and formulating the specifications (quality objectives, quality constraints, ...) in SSD are subject to processes that transcend the purely technical system. In order to understand how specifications are determined, we suggest that the socio-technical system can be modelled as a cybernetic system, see Fig. 5.2. We begin by identifying three stakeholders that are coupled in a control loop: (1) supplier, (2) customer of products and services like owners and operators and (3) society represented by one or several governments and non-governmental organisations.

This concept is now applied to analyse the dynamics of specifications using the example of the so-called “energy ship”, cf. 1st case study in Sect. 3.3. Here, the supplier (1) is the ship manufacturer. The customer (2) is the owner and operator of the energy ships. The customer follows the Friedman doctrine, “the social responsibility of business is to increase profit” [109]. This is reflected in the customer’s quality objective: minimising the levelised costs of hydrogen (LCOH).

Tolerated functional and quality constraints are usually communicated to the supplier by feedforward control. For example, quality constraints such as tolerated efficiency limits or service life guarantees can be required at the lowest possible price, which is a quality objective assigned to the supplier by the customer. However, formulating the quality constraints by the customer does not give the supplier any incentive to deliver the best possible product, but only to act according to the precept “just good enough”. On the one hand, this attitude is welcome in order to avoid overachievement. On the other hand, this attitude limits sustainability. Another possibility would be to map the quality constraint to a quality objective, i.e. to accept a higher price if the component efficiency is higher. This leads to the principle “as good as it gets”, which is essential for the development of sustainable products: quality objectives are followed in an incremental feedback control strategy, as Fig. 5.3 implies. As discussed in Chap. 1, feedback control is robust with respect to uncertainty in the controlled systems. In contrast, feedforward control demands models of the controlled systems.
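The difference between the two control strategies can be made tangible with a small numerical sketch. It assumes a deliberately simple static system whose output is proportional to its input, with a true gain that deviates from the modelled gain. The function names, the gain values and the step size are hypothetical and serve only as an illustration.

```python
def plant(u: float, gain: float) -> float:
    """Static controlled system: the output is proportional to the input."""
    return gain * u


def feedforward(target: float, model_gain: float, true_gain: float) -> float:
    """Compute the input once from a model; no measurement is used."""
    u = target / model_gain
    return plant(u, true_gain)


def incremental_feedback(target: float, true_gain: float,
                         step: float = 0.5, iterations: int = 50) -> float:
    """Improve the input step by step from the measured deviation."""
    u = 0.0
    y = plant(u, true_gain)
    for _ in range(iterations):
        u += step * (target - y)   # incremental correction, no model needed
        y = plant(u, true_gain)
    return y


if __name__ == "__main__":
    TARGET = 10.0
    MODEL_GAIN = 1.0   # gain assumed by the designer
    TRUE_GAIN = 0.8    # actual, uncertain gain of the system
    print("feedforward:", feedforward(TARGET, MODEL_GAIN, TRUE_GAIN))
    print("feedback:   ", incremental_feedback(TARGET, TRUE_GAIN))
```

With these values, the feedforward result inherits the full model error, while the incremental feedback loop converges towards the target because the deviation shrinks by the factor `1 - step * true_gain` in every iteration. The loop only converges if that factor has a magnitude below one, so the step size is itself a design choice.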

Society has many options for intervening, as shown in Figs. 5.2 and 5.3. It may manipulate the weighting factors in the quality objectives, resulting in (i) incentivisation, i.e. certain technologies or solutions are incentivised, for example by discounted loans for fuel cell technology, or (ii) penalisation, i.e. certain technologies or impacts are penalised, for example by fiscal policies such as a \(\mathrm{CO}_2\) tax. It may set limits in the quality constraints by (iii) regulation, i.e. legal constraints are introduced to limit impact, such as minimum efficiency levels for fuel cells. Finally, it may reduce the design space, leading to (iv) restriction, i.e. the use of a certain technology is restricted, such as banning Diesel engines, which leads to an increased use of hydrogen and more profitability for the customer. These interventions concern both the customer and the supplier. As there is constant interaction, the respective specifications are negotiated in the market and they influence all stakeholders.

This classification is not strict and absolute. For example, companies can voluntarily over-fulfil the normative requirements of the government, which is done in the context of Corporate Social Responsibility (CSR), and thus deviate from the Friedman doctrine [212]. However, this unnecessary over-fulfilment of quality constraints can lead to an increased reputation and, in turn, to increased profit, and thus improve the quality objective of the company, namely to maximise profit [212]. Companies of the Value Balancing Alliance [267], founded by BASF SE, Deutsche Bank, SAP and other companies in 2019, go one step further by defining the impact-specific cost and the positive impact of their actions themselves. The high level of uncertainty provides scope for interpretation and raises the suspicion of greenwashing [251]. From the perspective of the presented model, the responsibilities of the stakeholders are mixed up, which can cause unintended dynamics. This is why transparent and measurable Key Performance Indicators for the impact are necessary.

Summary

It has been shown that specifications can be grouped into four categories: quality objectives, quality constraints, functional constraints and restrictions of the design space. To understand the process of establishing specifications in socio-technical systems, we interpreted the system from a cybernetic point of view. The analysis shows that the wide range of stakeholders generates further sources of uncertainty in the specification, which are located outside of the product design itself. This must be taken into account and adequately modelled, especially when anticipating future developments as done in feedforward control. Compliance with the Friedman doctrine, which has been dominant in the past two decades, has failed with regard to the development of sustainable socio-technical systems. Both suppliers and customers have to adopt the functional constraints of the other stakeholders as quality objectives. Only a clear commitment to this, together with a transparent design process, will enable sustainable socio-technical systems in the future. To shift the paradigm from “just good enough” to “as good as it gets”, the CIP, a well-known tool in business management, can be used.

5.1.2 Technical Specification

Uncertainty occurs in load-bearing systems in all phases of their virtual and real product life cycle, cf. Chap. 1. In particular, the development of new products is associated with high uncertainty [216]. One important source of uncertainty is the technical specification of products, which is discussed in the following.

Uncertainty in product development can only be mastered in the long term if it is considered on an equal footing with technical, economic and ecological requirements from the very beginning. Correspondingly, reliable information from the technical specification supports decisions with far-reaching consequences in the development process.

New product ideas are often described by prospective users based on the intended application process or their contribution to the functionality of an overall system. However, the description of new product ideas is usually very imprecise. Thus, at the beginning of the development process, developers have to define the product properties in such a way that the resulting products are equipped with the desired properties in the use phase, cf. [224, 225].

The development task is analysed and detailed with regard to functionality and quality, effort, availability and acceptance, cf. Sect. 1.6. Usually, the development task is formally described in the form of requirements and summarised in a document for the entire development team. This formal documentation is called the technical specification. The document is frequently referred to as a requirements list, specification sheet, product concept catalogue or similar [69].

Although the technical solution is still largely unknown in the design phase, load assumptions must already be made, and product functions and basic dimensions have to be defined, as well as restrictions, e.g. with respect to costs and dimensions. Product development teams then use the technical specification throughout the development process as an information store and a basis for decisions.

The technical specification contributes to mastering uncertainty in the development process by (i) defining requirements which are subject to uncertainty and (ii) directly specifying the requirements of the system in later use, including foreseen uncertainties. Both contributions lead to a reduced modelling uncertainty.

The complete and detailed specification of the functional requirements helps product developers to reduce uncertainty during the creation of technical solutions. The completeness of the technical specification prevents important functionalities from being overlooked, whereas a sufficient level of detail prevents misinterpretations during product development. Both reduce model uncertainty, see Sect. 2.2, and structural uncertainty, see Sect. 2.3, in the development process, as well as uncertainty regarding the expectations of product use.

The models we have developed for the identification, classification and evaluation of uncertainty help designers to foresee influences relevant to uncertainty and to quantify an acceptable level of uncertainty, especially in product use. These can be formulated and documented as requirements in the technical specification. With the methods we have developed to master uncertainty in product development, uncertainty in life cycle processes can be predicted and the specified uncertainty requirements are met during product development.

Mastering uncertainty due to modelling

The development of new products is characterised by gradually more detailed models, such as functional structures, effect relationships, embodiment designs and geometry representations. The developers create these models based on assumptions, e.g. for loads, performance and available assembly space. These assumptions are based on information that is documented in the technical specification. Thus, the guidelines we developed to reduce modelling uncertainty [283] also serve as a guide to reduce uncertainty through the technical specification.

We exemplify the role of technical specifications by the development of a new bearing concept, presented in Sect. 5.4.2. The bearing is used as a key element in the challenging development of the 3D Servo Press, enabling a force of 1600 kN, see Sect. 3.6.3. It consists of a combination of roller and plain bearings. The use of bearings in such a special application leads to uncertainty in the development process, due to the complex load and installation conditions.

The choice of an appropriate and meaningful generic model for a development step is supported in particular by the most detailed possible documentation of critical system states and load cases.

For the description of the bearing function, we use the model according to Holland [158], described in Sect. 5.4.2, which was adapted to the mode of operation of the combined bearing concept [247]. The model assumes a force equilibrium between the operating force \(F_{\text {op}}\), the plain bearing force \(F_{\text {pb}}\) and the roller bearing forces \(F_{\text {rb}}\). The plain bearing force \(F_{\text {pb}}\) is based on a pressure build-up in the lubricant. This build-up depends on the dynamic viscosity \(\eta \) of the lubricant, which is supplied with pressure \(p_{\text {in}}\) and volume flow \(Q_{\text {in}}\), on the speeds of the shaft, \(\omega _{\text {shaft}}\), and bearing shell, \(\omega _{\text {shell}}\), and on the position of the shaft inside the bearing shell.

For common plain bearing designs, a quasi-static load condition of the rotating shaft by a constant load is assumed. In this case, the rotation speeds of shaft and bearing shell cause a non-constant pressure distribution \(p_{\text {rot}}\) in the circumferential direction due to the radial shaft displacement \(e\). However, the detailed specification of the force curve over the angle of rotation, which occurs in mechanical presses, indicates that a significant temporal change of the shaft displacement occurs due to a force peak at the operating point. This leads to an additional pressure build-up \(p_{\text {sq}}\) in the area of the minimal bearing gap \(h_\mathrm {min}\), which is not covered by the lubrication model for steady operation. The resulting forces on the shaft can be expressed by the Sommerfeld numbers for rotation, \(\text {So}_{\text {rot}}\), and squeezing, \(\text {So}_{\text {sq}}\).
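For orientation, the two Sommerfeld numbers are commonly written in the following form in the Holland method. This is a sketch based on the usual textbook convention and not necessarily the exact notation of [158] or [247]: the force components \(F_{\text {rot}}\) and \(F_{\text {sq}}\), the bearing width \(B\), the bearing diameter \(D\), the relative bearing clearance \(\psi \) and the effective angular velocity \(\bar{\omega }\) are symbols assumed here for illustration.

\[
\text {So}_{\text {rot}} = \frac{F_{\text {rot}}\,\psi ^{2}}{B\,D\,\eta \,|\bar{\omega }|}, \qquad \bar{\omega } = \omega _{\text {shaft}} + \omega _{\text {shell}} - 2\,\dot{\delta }, \qquad \text {So}_{\text {sq}} = \frac{F_{\text {sq}}\,\psi ^{2}}{B\,D\,\eta \,\dot{\varepsilon }}
\]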

Here, \(\delta \) describes the position of the minimal bearing gap in polar coordinates while \(\dot{\varepsilon }\) is the squeezing rate. The consideration of these two forces in the model is of decisive importance for the specific application. In Sect. 4.3.5 we have seen how large the effects of model uncertainty on the results can be when a model is applied inappropriately because of ignorance. Initiated by the specification of the force curve over the shaft’s angle of rotation, which varies considerably over time, the development of the combined roller and plain bearing system of the 3D Servo Press was based on the assumption that, in addition to the hydrodynamic effect, a displacement of the oil must be taken into account when designing the plain bearing. Since the relevant load conditions are thus recorded much more accurately, the uncertainty of the press development could be significantly reduced by the choice of the model.

In general, when specialising a generic model for the current development task, e.g. a functional structure, the developers define an appropriate system boundary and granularity of the model. The system boundary represents the part of reality that is considered as relevant. The granularity results from the scope and thus the number of components for modelling the system, see Sect. 1.3.

The technical specification influences uncertainty in modelling the system by information that acts as a basis for assumptions and decisions of the developers. In particular, detailed requirements regarding load assumptions, disturbance variables, resources and boundary conditions enable the developer to choose a model horizon that takes into account essential uncertainty-critical influencing parameters. The identification of critical uncertainty in the technical specification supports the definition of relevant components with adequate complexity. This ensures the conciseness of the model as a very important model feature and a prerequisite for successful product design.

We use the specification of the viscosity range of the system element lubricant, which is part of the combined roller and plain bearing, as an example. The combined roller and plain bearing system is exposed to high loads. Due to its significant influence, the temperature dependence of the dynamic lubricant viscosity \(\eta \) must be taken into account.

Another example concerning the adequate mapping of the combined roller plain bearing system in a model is the deviation of the assumed loading situation. A possible deviation from assumed conditions is given by an inclination of the operating load caused by uncertainty outside the system boundary of the combined bearing. The behaviour of the combined bearing under such disturbing influences is investigated in [132].

The force conduction through the roller bearings is calculated by means of \({F_{\text {rb}}=c_{\text {rb}}\, e}\) with \(c_{\text {rb}}\) representing the stiffness of the rolling elements, neglecting elastic deformations of the shaft and the plain bearing shell.
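The load sharing implied by the force equilibrium and the linear roller bearing model can be sketched numerically. The sketch assumes a scalar equilibrium \(F_{\text {op}} = F_{\text {pb}} + F_{\text {rb}}\) along the load direction, which is a simplification introduced here. The stiffness, displacement and load values are hypothetical and are not taken from the 3D Servo Press.

```python
def roller_bearing_force(c_rb: float, e: float) -> float:
    """Roller bearing force F_rb = c_rb * e in N (rigid shaft and shell)."""
    return c_rb * e


def plain_bearing_force(f_op: float, c_rb: float, e: float) -> float:
    """Plain bearing force from the assumed scalar equilibrium
    F_op = F_pb + F_rb, in N."""
    return f_op - roller_bearing_force(c_rb, e)


# Hypothetical values for illustration only.
F_OP = 500e3   # operating force in N
C_RB = 2.0e9   # stiffness of the rolling elements in N/m
E = 20e-6      # radial shaft displacement in m

f_rb = roller_bearing_force(C_RB, E)
f_pb = plain_bearing_force(F_OP, C_RB, E)

if __name__ == "__main__":
    print(f"F_rb = {f_rb / 1e3:.1f} kN, F_pb = {f_pb / 1e3:.1f} kN")
```

The sketch makes the role of the shaft displacement explicit: for a given operating force, a larger displacement shifts load from the plain bearing to the roller bearings, so any uncertainty in \(e\) or \(c_{\text {rb}}\) translates directly into uncertainty in the load share.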

This example shows that neglecting insignificant influences contributes to simplifying models and improving their conciseness. The differentiation and detailing of critical requirements urges developers to decide explicitly on the admissibility of simplifying assumptions that affect particularly critical system properties, see Sect. 4.3.

In the example of the combined bearing system, the dependencies of viscosity on pressure and temperature were recognised through references in the technical specification, but the uncertainty introduced by neglecting these dependencies was assumed to be acceptable.

In summary, the modelling of the system is based on the information in the technical specification. By specifically documenting critical functional requirements, the technical specification supports the developers in making relevant assumptions and in working out appropriate system models. Thus, it can reduce the influence of uncertainty. Model uncertainty can be effectively controlled by incorporating all relevant system areas and system properties into the development process to optimally meet customer expectations, despite the simplification of reality.

Mastering uncertainty due to requirements

As shown in Sect. 1.6, informal acceptance can represent the fulfilment of customer expectations, which has a significant influence on the success of the product. As described there, a high level of acceptance is achieved by reducing effort and increasing availability. In order to ensure a high level of availability in product development, product developers detail this objective right from the start in the form of requirements that are as precise as possible or even quantified. They document these requirements in the technical specification as a basis for decision-making during product development.

However, the quantification of availability depends strongly on product usage and is not always possible, as already stated in Sect. 1.6. The models and methods we have developed for the identification, evaluation and quantification of uncertainty and uncertainty influences allow in these cases the indirect specification of availability requirements without the technical solutions being known.

The type of product to be developed and its conditions of use determine the scope and description of the uncertainty that must be taken into account in the development. Depending on the degree of uncertainty, these are documented in the technical specification in the form of requirements regarding reliability or robustness properties.

Systems and machines for stationary use in production are usually operated in factory buildings with largely determined environmental and process conditions. The reliability specification defines “the probability that the required function of a product will not fail during a defined period of time and under given working conditions” [143]. Reliability is indicated in the technical specification by performance indicators such as Mean Time Between Failures (MTBF), Mean Time To Repair (MTTR), Uptime or Production Yield. Reliability is therefore based on the assumption that the causes of uncertainty are largely known and vary only slightly. The specification must therefore ensure that disturbance influences are defined or eliminated during the operation of the systems.

When developing robust systems, information regarding the causes of uncertainty in the specification is a prerequisite for guaranteeing high availability in use, cf. [192].
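The performance indicators named above can be related to one another. The following is a minimal sketch, assuming the commonly used steady-state relation availability = MTBF / (MTBF + MTTR); this relation, the function name and the numerical values are illustrative assumptions and are not taken from the technical specification discussed here.

```python
def steady_state_availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Return the steady-state availability MTBF / (MTBF + MTTR).

    Assumption (not from the chapter): operation and repair alternate,
    so one average cycle lasts MTBF + MTTR.
    """
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("MTBF must be positive and MTTR non-negative")
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical machine: on average 990 h between failures, 10 h per repair.
availability = steady_state_availability(990.0, 10.0)
print(f"availability = {availability:.3f}")
```

Such a relation only holds as long as the causes of failure are largely known and vary only slightly, which is exactly the assumption underlying a reliability specification.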

In our case of highly loaded roller bearings, lubrication must be ensured so that the calculated service life and frictional torques (and thus power loss) are achieved during operation. Regarding the combined roller and plain bearing presented in Sect. 5.4.2, the volume flow \(Q_{\text {pb}}\) of the lubricant exiting the plain bearing in the axial direction serves to lubricate the roller bearings. This coupling of the lubricant flows of the plain bearing and the rolling bearings has a considerable influence on the system behaviour and must therefore be taken into account in the development. However, the lubricant properties are predetermined by the design of the plain bearing, while the lubricant temperature and the lubricant flow vary depending on the operating conditions of the plain bearing. The developer can only master the undefined lubrication conditions by a robust design of the rolling bearing. A prerequisite for mastering the uncertainty in the robust design of roller bearings is therefore comprehensive information on the properties of the lubricant and the lubricant flow in the technical specification.

Conclusion

In a nutshell, during the development of new products, developers may significantly influence the uncertainty of the product to be developed with the technical specification. The specified properties of the system can have an indirect or direct influence.

Above all, complete coverage of the causes of uncertainty contributes to mastering uncertainty in the product development process. A largely complete specification of functionality and uncertainty influences can reduce model uncertainty and thereby indirectly improve the correctness of predictions, the availability of the system and thus its informal acceptance.

Uncertainty can be directly reduced if uncertainty effects from and on the system environment are captured and specified comprehensively and in as much detail as possible, preferably quantitatively. The checklists of the robust design methods of the early phases of product development can be particularly helpful in this respect.

When elaborating the technical specification, it is useful to anticipate development steps and to support the developers in making assumptions by providing specific information structured according to model characteristics, cf. [283].

5.1.3 Product Safety Requirements for Innovative Products

Product recalls, as discussed in Sect. 1.1, are expensive and harmful to the producer’s image and therefore should be avoided. Although product recalls become relevant in the usage phase of a product, the root cause often traces back to the design and production phase. Figure 1.3 presents an overview of the three product phases and how they are linked. But product recalls are only necessary for products that can be dangerous, i.e. they present potential risks to the body, health or life of consumers, users or third parties in general. Reasons for recalls can be manifold. For example, in certain sectors, the product quality decreases due to economic factors and strong competition [278, p. 62].

Another reason for product quality not complying with product safety requirements can be that the requirements are becoming more and more diverse and complex, see Sect. 1.1, or even that no technical standards are applicable to new and innovative products.

If a product is in use and it turns out that it poses a safety issue, it needs to be taken off the market. Legal product safety is this strict due to its preventive character. Its aim is to ensure that only safe products are in use and on the market. Together with product liability, product safety requirements provide full protection of the consumers’ legally protected rights. In this subsection we aim at clarifying what is required for the producer to prove the safety of his product.

Product safety

The Product Safety Act is the main legal framework for product safety in Germany. It is accompanied by numerous technical standards, which specify requirements for the practical application [173, marg. 2]. Furthermore, it is subject to European regulation, which is why many of the technical standards are developed by European Standardisation Organisations. Basically, two groups of products need to be distinguished: those which fall into the category of harmonised products and those which do not. In the case of harmonised products, the producer is obliged to comply with the provisions applicable to the specific case. These provisions are mostly based on European Standards. If the product complies with said provisions, it is assumed to be safe and can therefore be placed on the market [195, marg. 3]. National provisions for harmonised products often only regulate a minimum of safety which the product has to achieve. They are often accompanied by industry standards which are more detailed but not obligatory since they lack legal quality [66, p. 42]; [117, p. 1492]. In the case of non-harmonised products, the producer can exclusively follow national standards. As with harmonised products, compliance with these standards implies that the product is safe. However, the application of these technical standards is not mandatory. Nevertheless, producers need to prove the compliance of the product with safety standards set by the national provision and technical standards, as well as state-of-the-art technology. Conversely, non-compliance with said standards does not automatically imply that the product is unsafe. Unsafe products are rather those which pose a potential risk to the legal rights the Product Safety Act aims to protect, namely a person’s health, body and life.

The Product Safety Act is the hurdle which anyone placing a non-food product on the market for the first time needs to clear. At the same time the product needs to be made available on the market for commercial use. Further, the Product Safety Act is not only applicable to products, but also to facilities which are deemed hazardous and thus require monitoring and inspection. For this discussion, we will solely focus on products.

Market surveillance

In cases of non-compliance with the safety requirements set by provisions and technical standards, Market Surveillance Authorities can order producers to take measures to avert the danger the respective product poses to consumers. These measures may consist of requesting the producer to take action in order to end the risk associated with the product. The authorities can also prohibit the further distribution of the product or, in particularly risky cases, even demand that the product be recalled. Any of the mentioned measures is likely to have a negative impact on the producer’s reputation and is certain to cost money. Therefore, the objective is to avoid authority intervention at all times.

RAPEX

The so-called RAPEX-System (Rapid exchange of Information) enables both member states and the European Commission to exchange information on dangerous products which are on the European market, but are being taken off the market, or are part of a product recall.

In addition, the RAPEX-System determines the level of risk of a product [174, p. 38]. Producers need to apply this assessment system to their product. The level of risk also determines possible measures taken by the market surveillance authorities.

Technical standards and the proof of safety

Technical standards are developed by private institutions, which collect knowledge from industry standards and compile it into one comprehensive document. These standards are more user-friendly than the requirements set by legal provisions, since they can have a very specific scope of application. Legal provisions, in contrast, are vaguer in order to be applicable to a wide variety of use cases. As mentioned before, technical standards concerning non-harmonised products have no legally binding character, as they do not undergo a legislative procedure. Nevertheless, they represent the state-of-the-art technology. Therefore, when the producer designs a product following the technical standards, it implies that the product complies with the current minimum safety standard existing in the industry. As a result, the product is deemed to be safe and can be distributed on the market.

Non-compliance with existing technical standards

If producers do not comply with existing and applicable technical standards, this does not per se indicate that the product is unsafe [165, p. 722]. The reasons for this non-compliance can vary. The producer could just have overlooked the existence of the technical standard or he could have consciously neglected it. The reason behind this could be that the producer found a better, easier or cheaper option to provide the same level of safety of the product with a different construction than recommended by the technical standard. Therefore, it is necessary for the producer to prove that the minimum safety, set by the technical standards applicable, is met otherwise.

Missing technical standards

Another problem arises if no technical standards are available to the producer when designing the product. This can be the case for innovative products for which no clear industry standard has been developed yet. Without technical standards, there is no implication for the safety of the product in terms of the Product Safety Act [117, p. 1493]. So, how can the safety of the product be determined? This question is particularly important for the producer, as the product cannot be marketed without the positive safety implication.

In most cases, it is neither practical nor possible to apply technical standards developed for a different scope of application to another product, even if the products are similar [213].

It becomes apparent that the effort for providing proof of the safety of the product is much higher than in the case of existing technical standards. The producer must apply some kind of risk analysis to prove the safety of the product. But no official guidelines exist for how this analysis should be executed and what information needs to be taken into account. This uncertainty increases the efforts of the producer to provide the necessary proof of safety even more.

Practical guidelines would help the producer to do what is expected. They would also make products safer compared to the case where a producer sets his or her own standards to prove the safety of an innovative product. German case law offers guidance only insofar as it was decided that sampling inspections are not a permissible basis for providing proof for the product series as a whole [213].

Machinery directive

The Machinery Directive [97] also demands a risk analysis and gives guidance as to which aspects the producer needs to consider during the analysis. For example, the producer needs to consider the intended use in addition to the foreseeable misuse of the product and determine the limits of the product. In the case of the Machinery Directive, the product is a machine and the Directive is only applicable to machines. Additionally, the producer needs to identify potential hazards and dangerous situations caused by the machine. Just by mentioning these requirements, it becomes clear that the risk analysis described by the Machinery Directive is not very specific and does not specify how the information needed to evaluate said aspects should be obtained.

Product safety and market surveillance package

The requirements stated in Article 8 of the Proposal for a Product Safety and Market Surveillance Package [96] are similarly vague. In this proposal, technical documentation for the product is required and should contain, amongst others, an analysis of the possible risks related to the product. But again, it contains no guidance as to which information is relevant for the analysis.

Non-legislative guidelines

The decision of the European Commission concerning the RAPEX-Guidelines [95] is a non-legislative and therefore non-binding document. Unlike the legislative acts mentioned above, however, this guideline provides useful information on how to perform a risk analysis for products and recommends a three-part assessment.

The foundation of this assessment is to describe the product and its inherent danger and to identify the group of potential users. Intended as well as unintended users need to be considered. Potential users should also be categorised according to their vulnerability. For instance, children and elderly people are vulnerable users in most cases. Depending on the use case or type of product, however, users who are usually not particularly vulnerable might become vulnerable, for example if warnings and instructions on the product are written in a foreign language. If different types of users can be identified, the risk assessment might have to be performed for different scenarios considering the different users in order to identify the highest risk combination. For the actual risk analysis, the producer should first anticipate a situation in which the product-inherent risk manifests itself by injuring a person. This scenario will mostly revolve around the potential defect the product can have during its lifetime. This defect then causes the injury of a user or another person who comes into contact with the product.

Furthermore, it is significant for the assessment how severe the anticipated injury would be. According to the RAPEX-Guideline, factors for the evaluation can be the duration for which the hazard of the product acts on the potential victim, the body part which would potentially be injured and the impact on the respective body part. For example, losing a finger is a much more severe injury than lightly burning the skin on one finger. The severity of the injury can then be used as an indicator for the level of danger the product poses and vice versa. In case different scenarios are possible, the one causing the severest injury should be considered first, as preventing this scenario provides the greatest safety for potential users. The second step is to determine the probability of the scenario and therefore the probability of the person getting injured due to the hazardousness of the product. The RAPEX-Guideline recommends separating the anticipated scenario into smaller steps, identifying the probability of each individual step and then multiplying the individual probabilities to obtain the overall probability of the scenario.

Lastly, the RAPEX-Guideline recommends that the producer combine the two steps, joining hazardousness and probability to obtain the risk of the product. Four risk categories are intended: serious, high, medium and low. Figure 5.4 demonstrates the combination of the probability of the injury and the severity of the expected injury, as well as the resulting risk classification. Depending on the risk category, the producer is expected to apply a suitable effort to prevent the calculated risk. The higher the risk, the more important it is that the whole process behind the described risk analysis is documented, in particular in case market surveillance authorities request this information. Figure 5.5 provides a simplified overview of the recommended procedure.
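The multiplication of step probabilities can be sketched in a few lines. This is a minimal illustration only: the function name, the three steps and their probability values are hypothetical and not taken from the RAPEX-Guideline, and each value is assumed to be the probability of a step given that the preceding steps have occurred.

```python
import math


def scenario_probability(step_probabilities):
    """Multiply the probabilities of the individual steps of an injury scenario."""
    probabilities = list(step_probabilities)
    for p in probabilities:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"not a probability: {p}")
    return math.prod(probabilities)


# Hypothetical scenario split into three steps (illustrative values only).
steps = {
    "defect occurs during the product lifetime": 0.01,
    "user is exposed to the defective part": 0.5,
    "exposure leads to the anticipated injury": 0.1,
}
overall = scenario_probability(steps.values())
print(f"overall probability of the scenario: {overall:.1e}")
```

Because every factor is at most one, each additional step can only lower the overall probability, so the choice of how finely a scenario is split should be documented together with the result.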

Risk level from the combination of the severity of injury and probability derived from RAPEX-Guideline [95], Table 4

Risk analysis derived from RAPEX-Guideline [95]

Finally, the recommended sources of information for all the considerations above are accident statistics, knowledge gained from previous and similar products, as well as experts’ opinions. As the problem discussed regards the question of how producers of innovative products can provide proof for the safety of their product in case no technical standards exist, it is highly likely that neither knowledge nor accident statistics exist that would be applicable to the product in question. Therefore, producers should base their assessments on information gained from experts’ opinions, but not without checking the obtained information for plausibility.

Conclusion

We conclude that technical standards are a useful tool for mastering uncertainty. Compliance with these standards implies that the minimum safety requirements are met and that the product is safe enough to be distributed on the market. In some cases of new technology, technical standards do not exist and therefore no guidance is available to the producer. As a result, the producer must provide proof of the safety of his product in other ways. As no legally binding documents exist, we found that following the risk assessment described in the RAPEX-Guideline is currently the safest way for the producer to demonstrate the safety of his product.

5.1.4 Legal Uncertainty and Autonomous Manufacturing Processes

In the future of manufacturing, more and more autonomous systems will be implemented to raise product quality and product safety. But first and foremost, they are implemented to make production processes more cost- and material-efficient. Although fully autonomously acting manufacturing processes are still to come, the discussion of relevant legal issues is very present. Without autonomous manufacturing processes on the market, there can be no case law to base legal requirements on. Uncertainty regarding legal requirements and legal risks can be one reason for the stagnation of innovation. The general issue and characterisation of uncertainty is discussed in Chap. 2. If the legal requirements that manufacturers must comply with could be specified today, innovation could be promoted and guided in the right direction. This tool for mastering legal uncertainty accompanies the methods for mastering uncertainty in a general technical sense as discussed in Sect. 3.3.

Legal literature often discusses whether or not our existing liability regime is applicable to new and innovative technology [285]. This question arises because the current legal liability framework was established long before automated processes were conceivable. Without going into more detail, it can be stated that the current liability regime has such a broad spectrum that even autonomous systems generally fall into its scope. It goes without saying that some alterations will have to be made to the way we interpret regulations. Without these alterations, liability gaps can occur.

For the sake of a liability regime that is well suited for the innovative technology to come, and provides guidance as to what is legally expected from innovators and producers, this interpretation needs to take a practical approach. What are the specific problems that occur when using, e.g., autonomous production processes, and how do we interpret the existing liability regime accordingly? Regarding liability risks in manufacturing processes, both conventional and autonomous, we are concerned with two main rules: Sect. 1 paragraph 1 of the Product Liability Act and Sect. 823 paragraph 1 of the German Civil Code. Contracts between the parties can also result in compensation if they are violated. In the context of this discussion, however, we will exclusively focus on the aforementioned rules. Firstly, contracts are only binding between the parties, so that the risk of uncertainty is much lower. Secondly, the real legal uncertainty lies in the liability for damages inflicted on users and third parties.

Section 823 paragraph 1 of the German Civil Code

Section 823 paragraph 1 of the German Civil Code applies to damages done to life, body, health and property of a person. It is the key rule of German tort law. Manufacturers’ liability is only one facet of this rule; for a historical account see [36]. Its broad scope applies to many different actions causing the damages mentioned above. The first requirement for this rule to be applicable is that damage is done to one of the protected rights. Secondly, the damage must be caused by an intentional or negligent action. Finally, this action needs to be unlawful, meaning that it does not comply with the general principles of the law. Assuming that, in most cases, the manufacturer does not intend to harm another person’s rights, which would be an intentional action, the majority of cases will revolve around the question of how to determine negligence. A negligent action is based on the violation of a security obligation. Generally, anyone who uses or creates a source of danger to others needs to ensure that no harm is done [37, marg. 10]; [33, marg. 21 f.]. The security requirements that derive from this obligation depend on different factors: the potential users, whether they are consumers or professionals, and the intended and unintended but foreseeable use cases, which are limited by intentional misuse. All of these criteria need to be taken into consideration by the manufacturer. Users’ safety expectations must also be taken into account as far as they are reasonable.

In addition, the product has to comply with the current state-of-the-art safety standards. In this context, current refers to the point in time at which the product is marketed. German legislation has developed specifications for the safety obligations the manufacturer has to comply with. They can be categorised in the areas of design, construction, instruction and monitoring of the product during its use phase. During the design phase, the producer is expected to choose the final product design with the required safety in mind. At the same time, he needs to document his decisions in this respect. In the event of liability claims, the producer needs to prove that no other design alternative would have been better suited to prevent the damage that occurred [32, marg. 16].

Product liability act

For damages done to body, health or property of a person, Sect. 1 paragraph 1 of the Product Liability Act is applicable besides the general provisions of tort law. The Product Liability Act explicitly addresses producers of defective products. A product is defective, if it does not meet the expected safety standard. Safety requirements can be established similarly to Sect. 823 paragraph 1 of the German Civil Code. The same categorisation applies, except there is no obligation to monitor the product during its use phase. This is due to the fact that the aim of the Product Liability Act is to ensure that only safe products are placed on the market. Therefore, its relevance ends with the first placement of the product onto the market. Everything past this moment can only be regulated by Sect. 823 paragraph 1 of the German Civil Code. The main difference between the two provisions is the fact that the liability stated by the Product Liability Act is not based on fault, making it more consumer-friendly.

Automated deep drawing

For discussion purposes, we are going to evaluate the product liability issues concerning autonomous production processes referencing an autonomous deep drawing process. In order to get a better understanding of the problem, it is necessary to evaluate current state-of-the-art closed-loop controlled processes.

The demands on geometry and ever new materials pose a challenge for conventional deep drawing processes. The wall thickness of the final part has to be further reduced in order to make better use of the material, which increases the risk of wrinkling and cracking [7]. The use of more robust and thinner materials contributes to this effect as well. In addition, there are the fluctuations inherent in the process or the fluctuations in the material. All of this narrows the process window [168]. The deep drawing process consists of different steps, starting with the production of a blank cut from supplier coil material, followed by the actual deep drawing step and the quality control. The highly automated process has the ability to take data into account, which is provided by the supplier of the coil material. Also, the process can forward information from each production step to the next. Fluctuations and uncertainty in materials and between the individual steps of the process can therefore be taken into consideration. Information on the quality of the final product can then be fed back to adapt blank holder forces in the deep drawing process. Achieving the maximum drawing ratio, as displayed in Fig. 5.6, enables better material utilisation and the deep drawing of more complex geometries. Drawing ratio in general refers to the ratio of the blank diameter to the punch diameter. In conventional deep drawing processes, the maximum drawing ratio is linked to a higher risk of wrinkling or tearing, and therefore, to potential failure of the final product. With highly automated processes, the desired maximum drawing ratio becomes more achievable without taking higher risks.
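The definition of the drawing ratio given above can be written as a formula. The symbols are assumptions introduced here for illustration and are not taken from the chapter: \(\beta\) for the drawing ratio, \(D_0\) for the blank diameter, \(d_{\text {p}}\) for the punch diameter and \(\beta _{\max }\) for the maximum drawing ratio at the edge of the process window.

\[
\beta = \frac{D_0}{d_{\text {p}}}, \qquad \beta \le \beta _{\max }
\]

For a hypothetical blank with \(D_0 = 200\,\text {mm}\) and a punch with \(d_{\text {p}} = 100\,\text {mm}\), this gives \(\beta = 2.0\). Operating closer to \(\beta _{\max }\) uses the material better but leaves less margin against wrinkling and tearing.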

Process window for deep drawing following [111]

Quality control checks random samples for compliance with specified product requirements. This is achieved by both optical measurements for the geometrical properties and destructive testing for robustness. Further, it is possible to measure blank holder forces, draw-in ratio of the blank, as well as wrinkling and tearing with real-time sensors in the tools. However, it is not possible to detect thinning of sheet metal or microscopic cracks without destructive testing. Thus, although the process can adapt to material fluctuations so that the geometry produced stays constant, other material properties that have never been problematic before can fluctuate. These undiscovered property fluctuations can have a great impact on the safety of a product, e.g. for load-bearing car components, such as car doors. Therefore, products can pass optical quality control and still fail during their use.

State-of-the-art deep drawing processes are based on the control of blank holder force in order to prevent wrinkling and tearing. Still, these offline closed-loop processes do not feed back information from the quality control or crash test data. As a consequence, the next step in the evolution of the deep drawing process must include such a feedback loop, as well as the ability to adapt to an online closed-loop process. This will also require processes to be able to learn. But while learning from geometry data alone will prevent wrinkling and tearing, necking, for example, might remain undetected. In addition, the learning process needs to consider strength parameters. Since measuring strength has not yet been feasible inline, the measuring effort would be high. For the process to learn according to given strength parameters, measurements have to be taken frequently, for example every 100 parts.
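The interplay of force adaptation and periodic strength measurement can be sketched as follows. This is a deliberately simplified, hypothetical illustration and not the control strategy of any real press: the function names, the fixed step size and the sensor readings are assumptions, and the adjustment rule only encodes the common rule of thumb that too little blank holder force promotes wrinkling while too much promotes tearing.

```python
def adapt_blank_holder_force(force_kn, wrinkling, tearing, step_kn=5.0):
    """Return the blank holder force (in kN) to use for the next part.

    Hypothetical rule: raise the force after wrinkling, lower it after
    tearing, and leave it unchanged otherwise.
    """
    if wrinkling and tearing:
        # Conflicting signals: no force within the process window helps.
        return force_kn
    if wrinkling:
        return force_kn + step_kn
    if tearing:
        return max(0.0, force_kn - step_kn)
    return force_kn


def needs_strength_test(part_index, interval=100):
    """True for every `interval`-th part (1-based), e.g. parts 100, 200, ..."""
    return part_index > 0 and part_index % interval == 0


# Hypothetical inline sensor readings (wrinkling, tearing) for three parts.
force = 100.0
for wrinkling, tearing in [(True, False), (False, False), (False, True)]:
    force = adapt_blank_holder_force(force, wrinkling, tearing)
print(f"blank holder force after three parts: {force} kN")
```

The sketch reacts only to defects that inline sensors can see. Strength-related properties enter solely through the periodic destructive test, which is why the measuring interval determines how quickly such a process could learn from them.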

Outlook on future autonomous processes

The autonomous production process will be able to make many relevant decisions by itself without human interaction, assuming that enough data from the use phase and from failures of the previous product are available. Even decisions concerning the design will be made by the process itself, for example choosing the coil material for deep drawing. In line with the desire to produce more light-weight products, the process might decide to use a material that has not been used before, applying the knowledge it has acquired from previous iterations with other materials. Errors might occur if the new material behaves differently, for example because it is more brittle. Microscopic cracks may then occur in the final product that cannot yet be detected with complete reliability by optical quality control. Consequently, the process could not adapt its production parameters, as there is no data base to learn from. The process might have to be limited to materials that have already been tested before. In this sense, the process could not act fully autonomously. Nevertheless, the ability to learn and adapt production parameters for every product counteracts material fluctuations as well as fluctuations in tolerances from upstream processes, and therefore copes better with many of the uncertainties that equally occur in current production processes.

Discussion

If products manufactured by an autonomous production chain cause harm to a person’s rights, the question arises who will take responsibility for the damages done. In most cases both Sect. 823 paragraph 1 of the German Civil Code and Sect. 1 paragraph 1 of the Product Liability Act apply, and the manufacturer of the product is held liable. Section 823 paragraph 1 of the German Civil Code requires a breach of duty of care, whereas liability under the Product Liability Act requires a defective product. Therefore, different categories of defects have been established: errors in the design, the fabrication, instruction or product monitoring. Errors in fabrication refer to deviations between the designed product and the final product after manufacturing. However, a 100 % error-free production is not expected of the producer. Therefore, he is not held liable for so-called outliers in production [35, marg. 12]. For autonomous production processes, this category is no longer useful. Therefore, we focus on the other categories.

The interesting and, from a legal perspective, important feature of the autonomous production process is its ability to learn. During the deep drawing process, the parameters can be adapted to produce a product with a defined geometry. The process decides on the material used, the applied blank holder force and the duration of the deep drawing process. All these choices influence not only the geometry of the final product, but also its safety, for instance in the event of a crash. Conventionally, these choices would be made by the manufacturer, but in autonomous production processes, decisions are transferred to the process itself. Consequently, legal obligations concerning the safe product design can no longer be ascribed to a specific choice of the manufacturer. In order to identify legal obligations for the use of an autonomous production process, we have to consider the production process in its entirety, rather than the individual product. For a deeper discussion, see also [163].

Use of autonomous production processes

The first question to answer is whether autonomous production processes should be used at all, which is a crucial question for all new technologies that pose new risks. In order to give an answer, the interests at stake need to be considered. In an autonomous production process, parameters are set automatically during the process itself, rather than manually as in conventional production processes after a product inspection. Ideally, autonomous production processes minimise the risks of human misbehaviour and thus the risk of product failure.

In reality, there is still a lot of uncertainty when trying to identify the actual risk of a product failure. Taking the automated deep drawing process as an example, manufacturing close to the process limit also poses the risk of material thinning, which cannot yet be detected in a non-destructive way. At the same time, completely new failures could occur which have not been relevant for conventional production processes so far. The manufacturer needs to ensure that the products manufactured by such a process are as safe as the current state-of-the-art products [32]. The learning phase during the development of the production process needs to produce a steady product quality complying with the current state-of-the-art safety standards. Even then, a residual risk cannot be eradicated completely. Therefore, manufacturers have to find a way to quantify the risks as well as the safety gain inherent to the autonomous production process. At which level of residual risk the autonomous production process may be used is not clear yet. This depends on the acceptance of such processes in the public and political opinion. Nevertheless, setting a standard for the permissible risk should not be left to the industry. Instead, clear guidelines should be developed by political institutions.

Obligations during the use of autonomous production processes

When applying the currently applicable legal framework to the technological innovation of the autonomous production process, two problems become apparent. Firstly, the learning character of the process is based on failure. Learning can only come from failure, whereas the legal obligation is based on avoiding such failure. Secondly, the functionality of the process is based on software. It is acknowledged that software cannot be produced 100 % free of errors. Knowing this, the manufacturer needs to take particular care in monitoring the functionality of the production process [253, p. 3147]. This obligation is conventionally not prioritised from a legal perspective, but serves as a compensation for the fact that all relevant design choices are made by the process, not the manufacturer. The producer’s obligation to design a safe product is therefore less demanding.

The question then is how this obligation to monitor the process should be implemented. It is also worth mentioning that one cannot always conclude the safety of the product from the fact that the process functions correctly. As a result, the manufacturer should also identify safety-relevant properties of the product and monitor them as well. The effort the manufacturer needs to put into monitoring the process and the product depends on how the process learns and adapts. The frequency of the learning impulses is particularly relevant, since the process only changes and adapts the process parameters after such impulses. If the process is based on the data of every single product, parameters can change after each product. Thus, the manufacturer would also have to monitor the safety-relevant properties of each product. If, by contrast, the data of only every hundredth product is used as a learning impulse, a change in the process parameters only occurs at this frequency. Then, it would be sufficient to check the properties of every hundredth product, i.e. the product after the learning impulse.
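The relation between learning frequency and inspection effort described above can be illustrated with a minimal sketch. It is not a prescribed monitoring scheme: the function name and the indexing convention are assumptions made for illustration. In particular, we assume that a learning impulse occurs after every `learning_interval`-th product, that the first product manufactured with the adapted parameters is the one to be inspected, and that the very first product is inspected as well as a baseline for the initial parameters.

```python
def products_to_inspect(n_products: int, learning_interval: int) -> list[int]:
    """Return 1-based indices of products whose safety-relevant properties
    should be checked.

    Assumed convention (illustrative only): the process parameters can change
    only directly after products learning_interval, 2 * learning_interval, ...,
    so product 1 and the first product after each learning impulse are checked.
    """
    if n_products < 0 or learning_interval < 1:
        raise ValueError("n_products must be >= 0 and learning_interval >= 1")
    # range yields 1, 1 + interval, 1 + 2 * interval, ... up to n_products
    return list(range(1, n_products + 1, learning_interval))


# Learning from every single product: every product has to be checked.
every_product = products_to_inspect(5, 1)

# Learning from every hundredth product: one check per learning impulse.
every_hundredth = products_to_inspect(300, 100)
```

Under these assumptions, the inspection effort for a batch of 300 products drops from 300 checks to 3 when the learning interval is raised from 1 to 100.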

Obligations concerning the products of autonomous production processes

Lastly, the manufacturer has an obligation to monitor the product during its use phase. This way, defects that occur after a certain time in combination with other products or in general “in the field” can be detected. The information derived from this monitoring process is valuable and must be used to either prevent damage to protected legal rights or simply as input for future product development processes. The measures that need to be taken to monitor the product are manifold and depend on the specific product. But in general, it can be stated that the manufacturer needs to implement a complaint management system [103, marg. 379]. In addition to managing this passively achieved information, the manufacturer needs to actively inform himself about possible defects and errors concerning his product by checking newspapers, relevant journals [34, marg. 34], test reports or internet forums [146, p. 2729]. With the advancing technological innovation, it could also be possible to monitor products in real time. This would pose new legal questions which have to be discussed in the future.

Conclusions

In summary, autonomous production processes enable different and more efficient ways of production. The current legal framework adapts to the new production technology. Manufacturers need to “train” the autonomous process until reliable results are achieved. The residual risks and the safety gained are to be quantified in some way. Guidelines on what level of residual risk is permissible should be developed by political institutions. When using an autonomous production process, the focus must be on monitoring the process and the resulting products during the production process, as well as on monitoring the products during the use phase.

5.1.5 Optimisation Methods and Legal Obligations

Computer software and hardware have improved to such an extent that the use of simulation and optimisation tools in product development processes has become common. These tools allow for more complex designs and larger systems, as well as improvements in development time, accuracy and safety. At the same time, optimisation methods are becoming more and more popular in developing product parts or a system topology. A new trend in mathematical optimisation is the treatment of resilient systems. Methods for developing resilient systems explicitly take failures of components into consideration, trying to guarantee at least a predefined limited function of the system itself. Although these methods are highly promising, the implementation is very complex and is usually done in the context of data, model and structural uncertainty, see Chap. 2. This uncertainty can then result in legal liability if a person’s rights are harmed. In order to minimise legal uncertainty in the development process using optimisation methods as well as resilience optimisation methods, we need to understand the way these methods work and how their implementation affects the legal product development requirements we know. The concept and use of resilience for mastering uncertainty is described in Sects. 3.5 and 6.3.1.

Legal literature usually takes a more abstract approach to discussing the impact new technologies and new algorithmic design methods have on the existing legal framework. This is why we take a rather technical approach in order to gain a more specific and concrete idea as to how our current liability regime adapts to new algorithmic design methods. We also want to discuss which legal requirements can be derived for producers to comply with, and if and how the current legal framework might have to be adapted.

Legal liability

As seen in Sect. 5.1.4, Sect. 823 paragraph 1 of the German Civil Code is the key liability rule. In addition to producer liability, the provision generally covers cases in which a person is injured or killed, or the person’s property is impaired, by a source of danger in circulation. The basis of this liability is the violation of a duty of care. Anyone who benefits from a dangerous product has to ensure that this product does not harm others [28]. In this sense, dangerous products are those which are potentially dangerous for others simply because of their nature, for example cars. The determination of necessary precautions depends on a number of factors. On the one hand, it depends on who the potential users are and therefore who is potentially at risk. On the other hand, it depends on which dangers can potentially occur. All this has to be considered by the manufacturer of a product or the operator of a system.

It should be emphasised, however, that only what is reasonable can be expected. The manufacturer or operator does not have to eliminate every residual risk at any price in order to be spared from liability. What can reasonably be expected varies from case to case. In principle, however, it can be said that the safety standard set by state-of-the-art technology has to be satisfied. The state-of-the-art is represented on the one hand by norms and standards, and on the other hand by the solutions available on the market. Still, compliance with the current norms and standards alone does not necessarily protect against liability. In some cases, it will rather be necessary to take safety measures beyond norms and standards that are available according to the state-of-the-art technology. Norms and standards thus form a kind of minimum safety that should not be undercut. Although they are not legally binding, non-compliance with them has an indicative effect for non-compliance with safety requirements, which would have been possible according to state-of-the-art technology [31, with further references, marg. 16].

Resilience

The anticipation of several possible failures of the system or a product is a promising step towards making products safer. The idea is to ensure a pre-determined minimum functionality that the system or the product will still be able to achieve in case of disturbance or the malfunctioning of a specific number of product components. Resilience can be implemented in the product development process, for instance by only considering design options that achieve the minimum functionality in the event of k component failures [9]. The product or system then has a so-called buffering capacity of k. For more than k component failures, no reliable outcome can be predicted. This is, in a way, a restriction when designing a product using resilience optimisation methods. Nevertheless, it is possible to concentrate on a defined number of component failures, rather than what causes a failure of the product or system at large. Furthermore, it is worth mentioning that the number of possible failure scenarios increases with the number of components and design options. Hence, the enumeration of all possible failure scenarios becomes practically impossible. More details on resilient system design can be found in Sect. 6.3.
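To make the notion of a buffering capacity of k and the growth of the number of failure scenarios more tangible, the following minimal sketch checks a design by brute-force enumeration. It is an illustration under stated assumptions, not the method of [9]: the function names, the interpretation of "k component failures" as "at most k failed components", and the toy system of three pumps with a minimum functionality of two working pumps are all hypothetical.

```python
from itertools import combinations
from math import comb


def has_buffering_capacity(components, k, still_functional):
    """Check by enumeration whether the minimum functionality is kept for
    every scenario with at most k failed components (assumed interpretation).

    still_functional is a user-supplied predicate that receives the set of
    working components and returns True if the minimum functionality holds.
    """
    all_components = set(components)
    for n_failed in range(k + 1):
        for failed in combinations(components, n_failed):
            if not still_functional(all_components - set(failed)):
                return False
    return True


def count_failure_scenarios(n_components, k):
    """Number of scenarios with at most k failed components."""
    return sum(comb(n_components, i) for i in range(k + 1))


# Hypothetical toy system: three pumps, at least two must keep working.
pumps = ["pump_a", "pump_b", "pump_c"]
two_pumps_needed = lambda working: len(working) >= 2

survives_one_failure = has_buffering_capacity(pumps, 1, two_pumps_needed)
survives_two_failures = has_buffering_capacity(pumps, 2, two_pumps_needed)

# Scenario counts for a single design option.
scenarios_small = count_failure_scenarios(3, 1)
scenarios_larger = count_failure_scenarios(20, 3)
```

In this toy case the design has a buffering capacity of 1 but not of 2. The scenario count rises from 4 for three components and k = 1 to 1351 for twenty components and k = 3, and it applies to each design option separately, which indicates why full enumeration quickly becomes impractical.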

Review of legal obligations

In order to identify the manufacturer’s duty of care when using optimisation methods, we take a closer look at the selection of the model on which algorithmic design methods are based and differentiate between optimisation methods in general and resilience optimisation methods.

Selection of the model

All algorithmic design methods are based on a model of the system. In order to specify the legal obligations when using algorithmic design methods, considering the selection of the model of the system should be the starting point. These models always represent reality in a simplified way, see Sect. 1.3. Therefore, one model only applies to the specific application for which it is designed. If a model is used for the development of an application for which it does not provide reliable information, considerable deviations between the modelled system behaviour and the actual behaviour can occur during the usage phase.

It is important to note that in most cases many models are available, some of which represent reality better than others. An important restriction is that the most reliable models are often too complex and thus too time-consuming to compute. In this respect, the developer needs to find a balance between model accuracy and acceptable computation time.

When discussing legal obligations, the level of risk to third parties’ protected rights has to be determined and should be foreseen by the developer of a product [26, marg. 6]. First of all, the developer would have to ensure that the model he uses can generally provide reliable information about the product. The developer also needs to take the boundaries of the model into consideration, cf. Sect. 2.2. Furthermore, he has to have some kind of proof that the approximations made on the basis of the underlying model transfer into reality. This is usually achieved by model validation and verification. With the help of either experimental data or simulation results, it can be shown that the predicted behaviour is in accordance with the actual behaviour of the product. This procedure can be expected from the developer. Validating the model can imply that the producer of the product has fulfilled the safety obligations in the development phase. But in order to fully protect the developer from liability claims, safety aspects must be reproduced by the model as well.
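The comparison of predicted and actual behaviour mentioned above can be sketched as a simple tolerance check. This is only one conceivable validation criterion: the function name, the use of the maximum relative deviation, the 5 % tolerance and the sample values are assumptions for illustration and are not taken from any standard or from the cited literature.

```python
def validate_model(predicted, measured, rel_tolerance):
    """Compare model predictions with experimental measurements.

    Returns a pair (is_valid, worst_deviation), where worst_deviation is the
    largest relative deviation |predicted - measured| / |measured|.
    Assumes that all measured values are non-zero.
    """
    if len(predicted) != len(measured) or len(predicted) == 0:
        raise ValueError("predicted and measured must be non-empty and of equal length")
    if any(m == 0 for m in measured):
        raise ValueError("relative deviation is undefined for measured values of zero")
    deviations = [abs(p - m) / abs(m) for p, m in zip(predicted, measured)]
    worst_deviation = max(deviations)
    return worst_deviation <= rel_tolerance, worst_deviation


# Hypothetical data: model output and test-rig measurements of the same quantity.
predicted_values = [10.2, 19.5, 30.9]
measured_values = [10.0, 20.0, 30.0]

is_valid, worst = validate_model(predicted_values, measured_values, rel_tolerance=0.05)
```

Such a check only covers the operating points that were actually measured. It therefore says nothing about the behaviour of the model outside its boundaries, which the developer has to consider separately.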

Use of optimisation methods

Optimisation methods allow the development of more complex systems or products, as they simplify the design choices for the development process. These methods are based on mathematical models of the system and its environment. As already mentioned, one of the causes of uncertainty in using such methods is that models can never be an exact representation of reality, as the latter is too complex to be computed. The outcome of the design with optimisation methods depends on the chosen model and on the chosen input parameters. These parameters are mostly economic and structural factors set by the developer. Moreover, the number of input parameters is finite. Safety of the product as such cannot be an input parameter. In fact, an optimal solution for the given optimisation problem can be structurally unsafe. This becomes clear when considering optimisation problems that are used to minimise the material usage of a product.
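The material-minimisation case can be illustrated with a deliberately simple sketch of a rod under tensile load, where the stress is load divided by cross-sectional area. The example, the function names and all numerical values are assumptions chosen for illustration. The required safety factor used here is merely one possible proxy that a developer might add as a constraint; it does not represent the safety of the product as such.

```python
def minimal_cross_section(load_n, yield_strength_pa, required_safety_factor=1.0):
    """Smallest cross-sectional area in m^2 that satisfies
    required_safety_factor * load / area <= yield_strength.

    Minimising the area makes this constraint active, so the optimum lies
    exactly on its boundary.
    """
    if load_n <= 0 or yield_strength_pa <= 0 or required_safety_factor < 1.0:
        raise ValueError("load and strength must be positive, safety factor >= 1")
    return required_safety_factor * load_n / yield_strength_pa


def achieved_safety_factor(area_m2, load_n, yield_strength_pa):
    """Ratio of yield strength to the actual stress in the rod."""
    return yield_strength_pa * area_m2 / load_n


LOAD_N = 10_000.0   # assumed tensile load of 10 kN
YIELD_PA = 250e6    # assumed yield strength of 250 MPa

# Pure material minimisation: the rod is sized exactly at the yield limit.
area_pure = minimal_cross_section(LOAD_N, YIELD_PA)
margin_pure = achieved_safety_factor(area_pure, LOAD_N, YIELD_PA)

# A margin exists only if the developer adds it as an explicit requirement.
area_with_margin = minimal_cross_section(LOAD_N, YIELD_PA, required_safety_factor=1.5)
margin_with_margin = achieved_safety_factor(area_with_margin, LOAD_N, YIELD_PA)
```

Under these assumptions, the pure minimisation yields an area of 40 mm² with no margin against yielding, whereas the explicitly constrained problem yields 60 mm². The optimiser itself provides no margin beyond what the problem formulation demands.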

As the producer cannot rely on the optimisation method to choose a particularly safe product design, he still needs to ensure that safety standards are met. So far, this process does not deviate from a development process which does not rely on optimisation methods. In a conventional development process, the developer would consider different design alternatives which solve the problem defined. These are the alternatives the developer would then have to choose from [274, marg. 972]. The decision would be made considering functionality and economic factors. Simultaneously, safety requirements can and should be considered when choosing the final product design.