Abstract

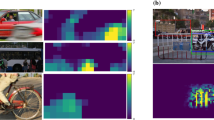

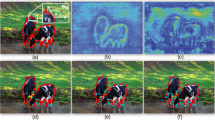

Despite the great success of deep convolutional neural networks in object classification, recent work has shown that their generalization performance drops severely under occlusion conditions that do not appear in the training data. Because occluders vary widely in shape and appearance, training data can hardly cover all possible occlusion conditions. In practice, however, we expect models to generalize reliably to novel occlusion conditions rather than being limited to those seen in training. In this work, we integrate inductive priors including prototypes, partial matching, and top-down modulation into deep neural networks to achieve robust object classification under novel occlusion conditions with limited occlusion in the training data. We first introduce prototype learning, whose regularization encourages compact data clusters and thereby improves generalization. Then, for partial matching, we estimate a visibility map at an intermediate layer based on a feature dictionary and activation scale; this prior filters out irrelevant information when features are compared with prototypes. Further, inspired by the important role of feedback connections in neuroscience for object recognition under occlusion, we introduce a structural prior, i.e. top-down modulation, into the convolution layers to reduce contamination by occlusion during feature extraction. Experimental results on partially occluded MNIST, vehicles from the PASCAL3D+ dataset, and vehicles from the cropped COCO dataset demonstrate improvements under both simulated and real-world novel occlusion conditions, as well as under dataset transfer.
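
The partial-matching idea in the abstract can be illustrated with a small sketch. It is not the authors' implementation: the squared Euclidean distance, the normalization by total visibility, and the names `masked_distance` and `classify` are all assumptions made for illustration, and the visibility map is supplied by hand here rather than estimated from a feature dictionary and activation scale.

```python
from typing import Dict, Sequence

# One feature vector per spatial position (hypothetical toy representation).
Feature = Sequence[Sequence[float]]


def masked_distance(feature: Feature, prototype: Feature,
                    visibility: Sequence[float]) -> float:
    """Visibility-weighted mean squared distance to a prototype.

    Positions with visibility 0 (assumed occluded) do not contribute.
    """
    total = 0.0
    weight = 0.0
    for f_p, m_p, v_p in zip(feature, prototype, visibility):
        squared = sum((a - b) ** 2 for a, b in zip(f_p, m_p))
        total += v_p * squared
        weight += v_p
    if weight == 0.0:
        return float("inf")  # nothing visible, so no evidence for a match
    return total / weight


def classify(feature: Feature, prototypes: Dict[str, Feature],
             visibility: Sequence[float]) -> str:
    """Return the class whose prototype is nearest under the masked distance."""
    return min(prototypes,
               key=lambda c: masked_distance(feature, prototypes[c], visibility))


# Toy prototypes: three spatial positions, two channels each.
prototypes = {
    "car": [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]],
    "bus": [[0.0, 1.0], [0.0, 1.0], [0.0, 1.0]],
}

# A "car" whose last two positions are contaminated by an occluder.
occluded_car = [[1.0, 0.0], [0.0, 3.0], [0.0, 3.0]]

no_mask = classify(occluded_car, prototypes, [1.0, 1.0, 1.0])
with_mask = classify(occluded_car, prototypes, [1.0, 0.0, 0.0])
print(no_mask, with_mask)
```

In this toy case the unmasked comparison is pulled toward the wrong prototype by the occluded positions, while the masked comparison uses only the visible position. How well this works in practice depends on the quality of the visibility estimate, which the sketch does not model.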

M. Xiao and R. Wu—Work done at Johns Hopkins University.

Acknowledgements

This work was partly supported by ONR N00014-18-1-2119.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Xiao, M., Kortylewski, A., Wu, R., Qiao, S., Shen, W., Yuille, A. (2020). TDMPNet: Prototype Network with Recurrent Top-Down Modulation for Robust Object Classification Under Partial Occlusion. In: Bartoli, A., Fusiello, A. (eds) Computer Vision – ECCV 2020 Workshops. ECCV 2020. Lecture Notes in Computer Science, vol 12536. Springer, Cham. https://doi.org/10.1007/978-3-030-66096-3_31

DOI: https://doi.org/10.1007/978-3-030-66096-3_31

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-66095-6

Online ISBN: 978-3-030-66096-3

eBook Packages: Computer Science, Computer Science (R0)