Abstract

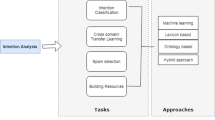

With the rapid development of artificial intelligence and big data technology, intelligent chatbots have become a major technical means of reducing labor costs and improving service quality in the insurance industry. The core technology of this application is the accurate understanding and classification of users’ intentions. However, because insurance is a product with a complex knowledge system and a long service cycle, users’ intentions and the corresponding corpus are rather scattered, and the initial corpus is especially scarce in the early stage of a new business. It is therefore very important to classify customers’ intentions accurately from a scarce corpus. This paper offers an empirical case study on intention classification of insurance data using the transfer learning model BERT. The comparative experimental analysis shows that the BERT-based method reduces the error rate more than the other existing models compared (TextCNN, HAN, ELMo).

References

Pan, S.J., Yang, Q.: A survey on transfer learning. IEEE Trans. Knowl. Data Eng. 22(10), 1345–1359 (2010)

Kim, Y.: Convolutional neural networks for sentence classification. In: Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP 2014), Doha, Qatar, pp. 1746–1751 (2014)

Zhang, X., Zhao, J., LeCun, Y.: Character-level convolutional networks for text classification. In: Proceedings of the International Conference on Neural Information Processing Systems (NIPS 2015), Montreal, Canada, pp. 1–9 (2015)

Yang, Z., Yang, D., Dyer, C., He, X., Smola, A., Hovy, E.: Hierarchical attention networks for document classification. In: Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2016), San Diego, USA, pp. 1480–1489 (2016)

Bojanowski, P., Grave, E., Joulin, A., Mikolov, T.: Enriching word vectors with subword information. Trans. Assoc. Comput. Linguist. 5, 135–146 (2017)

Peters, M.E., et al.: Deep contextualized word representations. In: Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2018), New Orleans, USA, pp. 2227–2237 (2018)

Kant, N., Puri, R., Yakovenko, N., Catanzaro, B.: Practical Text Classification with Large Pretrained Language Models. https://arxiv.org/abs/1812.01207, submitted on 4 December 2018

Devlin, J., Chang, M.-W., Lee, K., Toutanova, K.: BERT: pre-training of deep bidirectional transformers for language understanding. In: Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2019), Minneapolis, USA, pp. 4171–4186 (2019)

Sun, C., Qiu, X., Xu, Y., Huang, X.: How to Fine-Tune BERT for Text Classification? https://arxiv.org/abs/1905.05583, submitted on 14 May 2019

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., et al.: Attention Is All You Need. https://arxiv.org/abs/1706.03762, submitted on 12 June 2017

Acknowledgment

This work is sponsored by Shanghai Pujiang Program under Grant No. 18PJ1433400, Key Disciplines of Computer Science and Technology of Shanghai Polytechnic University under Grant No. XXKZD1604, and Leap Funding of SSPU Scientific Research under Grant No. EGD19XQD09.

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Tang, S., Liu, Q., Tan, Wa. (2019). Intention Classification Based on Transfer Learning: A Case Study on Insurance Data. In: Milošević, D., Tang, Y., Zu, Q. (eds) Human Centered Computing. HCC 2019. Lecture Notes in Computer Science, vol 11956. Springer, Cham. https://doi.org/10.1007/978-3-030-37429-7_36

DOI: https://doi.org/10.1007/978-3-030-37429-7_36

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-37428-0

Online ISBN: 978-3-030-37429-7

eBook Packages: Computer Science (R0)