Abstract

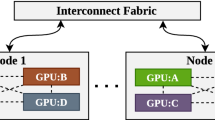

Training deep neural networks on large datasets remains a computational challenge. Training can take hundreds of hours, and accelerating it requires distributed computing systems. Common distributed data-parallel approaches share a single model across multiple workers, train each worker on different batches, aggregate the gradients, and redistribute the updated model. In this work, we propose NoSync, an alternative inspired by particle swarm optimization in which each worker trains a separate model and pressure is applied to force the models to converge. NoSync explores a greater portion of the parameter space and provides resilience to overfitting. It consistently achieves higher accuracy than a single worker, offers linear speedup on smaller clusters, and is orthogonal to existing data-parallel approaches.
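The idea of separate models that are pushed toward each other can be illustrated with a small toy sketch. The abstract does not specify the form of the pressure, so the code below makes several assumptions that are not taken from the paper: the pressure is modeled as a pull toward the mean of all workers' parameters, the loss is a simple quadratic, and the names `swarm_sgd`, `lr`, and `pull` are hypothetical. It should be read as an illustration of the general idea, not as the NoSync algorithm itself.

```python
import numpy as np


def swarm_sgd(num_workers=4, dim=2, steps=200, lr=0.1, pull=0.05, seed=0):
    """Toy sketch: each worker keeps its own parameters and is pulled toward the swarm mean.

    Assumption (not from the paper): the 'pressure' is a linear pull toward
    the mean of all workers' parameters.
    """
    rng = np.random.default_rng(seed)
    target = np.ones(dim)  # minimizer of the toy loss 0.5 * ||w - target||^2
    # Each row is one worker's separate model, started far apart.
    params = 3.0 * rng.normal(size=(num_workers, dim))
    for _ in range(steps):
        # Noisy gradients stand in for workers seeing different mini-batches.
        grads = (params - target) + 0.1 * rng.normal(size=params.shape)
        center = params.mean(axis=0)
        # Local gradient step plus the assumed pull toward the swarm center.
        params = params - lr * grads - pull * (params - center)
    return params, target


params, target = swarm_sgd()
print(np.abs(params - target).max())  # largest remaining distance to the minimizer
```

In this sketch, setting `pull=0` leaves the workers fully independent, while larger values make them behave more like a single shared model; how the real system balances the two is described in the paper, not here.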

Copyright information

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Isakov, M., Kinsy, M.A. (2018). NoSync: Particle Swarm Inspired Distributed DNN Training. In: Kůrková, V., Manolopoulos, Y., Hammer, B., Iliadis, L., Maglogiannis, I. (eds) Artificial Neural Networks and Machine Learning – ICANN 2018. ICANN 2018. Lecture Notes in Computer Science, vol 11140. Springer, Cham. https://doi.org/10.1007/978-3-030-01421-6_58

DOI: https://doi.org/10.1007/978-3-030-01421-6_58

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-01420-9

Online ISBN: 978-3-030-01421-6

eBook Packages: Computer Science (R0)