Abstract

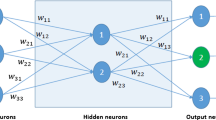

We present an analytic solution to the problem of on-line gradient-descent learning for two-layer neural networks with an arbitrary number of hidden units in both teacher and student networks. The technique, demonstrated here for the case of adaptive input-to-hidden weights, becomes exact as the dimensionality of the input space increases.
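The following is a minimal simulation sketch of the learning scenario the abstract describes. It is not the analytic solution itself. It simply runs the stochastic on-line dynamics that the analysis characterises in the large-input-dimension limit. Several things here are assumptions and do not come from this page: the soft committee machine form (hidden-to-output weights fixed to 1, so only input-to-hidden weights adapt), the activation g(u) = erf(u/√2), i.i.d. Gaussian inputs, a learning rate scaled as η/N, and a squared-error generalization measure. The input size, hidden-unit counts, η, initialisation, and training length are illustrative choices, and the helper names are hypothetical.

```python
import math
import random


def g(u):
    # Assumed hidden-unit activation: g(u) = erf(u / sqrt(2))
    return math.erf(u / math.sqrt(2.0))


def g_prime(u):
    # Derivative of erf(u / sqrt(2)): sqrt(2 / pi) * exp(-u^2 / 2)
    return math.sqrt(2.0 / math.pi) * math.exp(-0.5 * u * u)


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def random_vectors(rng, count, n, scale):
    # Components ~ N(0, scale^2 / n), so each vector has squared norm close to scale^2
    sd = scale / math.sqrt(n)
    return [[rng.gauss(0.0, sd) for _ in range(n)] for _ in range(count)]


def committee(weights, x):
    # Assumed soft committee machine: output is the plain sum of hidden activations
    return sum(g(dot(w, x)) for w in weights)


def gen_error(student, teacher, n, rng, samples=2000):
    # Monte Carlo estimate of eps_g = 1/2 <(student output - teacher output)^2>
    total = 0.0
    for _ in range(samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(n)]
        total += 0.5 * (committee(student, x) - committee(teacher, x)) ** 2
    return total / samples


def train(n=50, k=2, m=2, eta=0.5, alpha=100, seed=0):
    # n inputs, k student and m teacher hidden units; alpha = examples seen / n
    rng = random.Random(seed)
    teacher = random_vectors(rng, m, n, 1.0)   # fixed teacher weights, roughly unit norm
    student = random_vectors(rng, k, n, 0.1)   # small random student start
    eps_start = gen_error(student, teacher, n, rng)
    for _ in range(alpha * n):
        x = [rng.gauss(0.0, 1.0) for _ in range(n)]     # one fresh example per step
        fields = [dot(w, x) for w in student]           # student hidden fields
        err = committee(teacher, x) - sum(g(f) for f in fields)
        for w, f in zip(student, fields):
            step = (eta / n) * g_prime(f) * err         # gradient step on input-to-hidden weights
            for j in range(n):
                w[j] += step * x[j]
    eps_end = gen_error(student, teacher, n, rng)
    return eps_start, eps_end


if __name__ == "__main__":
    start, end = train()
    print(f"eps_g before: {start:.4f}  after: {end:.4f}")
```

Each example is used once and then discarded, which is what makes the learning "on-line". Measuring time as α = (examples seen)/N is the usual convention in this line of work, and it is the scaling under which a large-N description becomes deterministic.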

Copyright information

© 1997 Springer Science+Business Media New York

About this chapter

Cite this chapter

Saad, D., Solla, S.A. (1997). On-Line Learning in Multilayer Neural Networks. In: Ellacott, S.W., Mason, J.C., Anderson, I.J. (eds) Mathematics of Neural Networks. Operations Research/Computer Science Interfaces Series, vol 8. Springer, Boston, MA. https://doi.org/10.1007/978-1-4615-6099-9_53

DOI: https://doi.org/10.1007/978-1-4615-6099-9_53

Publisher Name: Springer, Boston, MA

Print ISBN: 978-1-4613-7794-8

Online ISBN: 978-1-4615-6099-9

eBook Packages: Springer Book Archive