Abstract

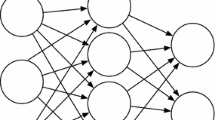

Text categorization and retrieval tasks often depend on a good representation of textual data. Departing from the classical vector space model, several probabilistic models, such as PLSA, have been proposed recently. In this paper, we propose a non-probabilistic, neural-network-based solution that jointly captures a rich representation of words and documents. Experiments on two information retrieval tasks, using the TDT2 database and the TREC-8 and TREC-9 query sets, yielded better performance for the proposed neural network model than for PLSA and the classical TFIDF representation.
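For readers unfamiliar with the classical TFIDF baseline named above, the following is a minimal sketch of vector space retrieval with TF-IDF weights and cosine similarity. It is an illustration only, not the authors' experimental setup: the function names (`tfidf_vectors`, `cosine`, `rank`), the plain `log(N / df)` weighting, and the toy documents are all assumptions made for this example.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Build one sparse TF-IDF vector (dict: term -> weight) per tokenized document.

    Assumption: raw term counts times log(N / df); many other weightings exist.
    """
    n_docs = len(docs)
    # Document frequency: number of documents that contain each term.
    df = Counter(term for doc in docs for term in set(doc))
    idf = {term: math.log(n_docs / count) for term, count in df.items()}
    vectors = [{t: tf * idf[t] for t, tf in Counter(doc).items()} for doc in docs]
    return vectors, idf


def cosine(u, v):
    """Cosine similarity between two sparse vectors; 0.0 if either is all zeros."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def rank(query, docs):
    """Return document indices sorted by decreasing similarity to the query."""
    vectors, idf = tfidf_vectors(docs)
    # Query terms unseen in the collection get zero weight.
    q = {t: tf * idf.get(t, 0.0) for t, tf in Counter(query).items()}
    scores = [cosine(q, d) for d in vectors]
    return sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)


# Hypothetical toy collection, already tokenized.
docs = [
    ["neural", "network", "text"],
    ["stock", "market", "news"],
    ["text", "retrieval", "neural"],
]
ranking = rank(["stock", "market"], docs)
```

In this sketch a document is scored only through the exact terms it shares with the query, which is the limitation that latent models such as PLSA, and the neural representation proposed here, aim to address.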

An erratum to this chapter can be found at http://dx.doi.org/10.1007/11550907_163.

Copyright information

© 2005 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Keller, M., Bengio, S. (2005). A Neural Network for Text Representation. In: Duch, W., Kacprzyk, J., Oja, E., Zadrożny, S. (eds) Artificial Neural Networks: Formal Models and Their Applications – ICANN 2005. ICANN 2005. Lecture Notes in Computer Science, vol 3697. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11550907_106

DOI: https://doi.org/10.1007/11550907_106

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-28755-1

Online ISBN: 978-3-540-28756-8

eBook Packages: Computer Science, Computer Science (R0)