Abstract

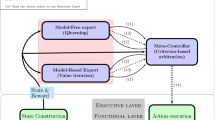

The design and implementation of a robot brain often requires choosing between different modules with similar functionality. Many implementations and components are easy to create or can be downloaded, but it is difficult to assess which combinations of modules work well and which do not. This paper discusses a reinforcement learning mechanism in which the robot chooses between the different components using empirical feedback and optimization criteria. Using the interval estimation algorithm, the robot deselects poorly functioning modules and retains only the best ones. A discount factor ensures that the robot keeps adapting to new circumstances in the real world. This allows the robot to adapt itself continuously at the architecture level. It also lets large development teams create several different implementations with similar functionality, giving the robot the best chance of solving a task. The architecture was tested in the RoboCup@Home setting and can handle failure situations.
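
The abstract names the interval estimation algorithm and a discount factor but does not give their exact form. The following is a minimal Python sketch of how such a module selector could work. It assumes binary success/failure feedback each time a module is used, Kaelbling's upper confidence bound on a Bernoulli success rate, and exponential discounting of every module's accumulated evidence after each trial. The class names, the module names `nav_a`/`nav_b`, and the parameters `z` and `gamma` are hypothetical and are not taken from the paper.

```python
import math
from dataclasses import dataclass
from typing import Iterable


@dataclass
class ModuleStats:
    """Discounted success statistics for one candidate module (hypothetical structure)."""
    n: float = 0.0          # discounted number of trials
    successes: float = 0.0  # discounted number of successful trials

    def upper_bound(self, z: float) -> float:
        """Upper end of a confidence interval on the module's success rate.

        Uses the interval estimation bound for a Bernoulli variable, which stays
        above zero even after only failures, so weak modules keep some chance.
        """
        if self.n <= 0.0:
            return math.inf  # untried modules are treated optimistically
        p = self.successes / self.n
        z2 = z * z
        centre = p + z2 / (2.0 * self.n)
        spread = z * math.sqrt(p * (1.0 - p) / self.n + z2 / (4.0 * self.n ** 2))
        return (centre + spread) / (1.0 + z2 / self.n)


class IntervalEstimationSelector:
    """Chooses among functionally similar modules by their optimistic success bound."""

    def __init__(self, modules: Iterable[str], z: float = 1.96, gamma: float = 0.99):
        self.z = z          # confidence parameter (assumed value)
        self.gamma = gamma  # discount factor applied to old evidence (assumed value)
        self.stats = {m: ModuleStats() for m in modules}

    def select(self) -> str:
        # max() returns the first maximal key, so ties go to the earliest module.
        return max(self.stats, key=lambda m: self.stats[m].upper_bound(self.z))

    def update(self, module: str, success: bool) -> None:
        # Discount all evidence so that the selector keeps adapting over time.
        for s in self.stats.values():
            s.n *= self.gamma
            s.successes *= self.gamma
        chosen = self.stats[module]
        chosen.n += 1.0
        chosen.successes += 1.0 if success else 0.0


# Usage: a hypothetical module that always fails vs. one that always succeeds.
selector = IntervalEstimationSelector(["nav_a", "nav_b"], z=1.96, gamma=0.99)
picks = []
for _ in range(20):
    chosen = selector.select()
    picks.append(chosen)
    selector.update(chosen, success=(chosen == "nav_b"))
print(picks.count("nav_a"), picks.count("nav_b"))  # prints: 1 19
```

After a single failure, `nav_a` is deselected in favour of `nav_b`. Because `gamma < 1` shrinks the failed module's trial count, its bound slowly rises again. If the preferred module starts failing, the other one is eventually re-tried, which is the kind of continuous adaptation the abstract describes.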

Copyright information

© 2012 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

van der Zant, T. (2012). Adaptivity on the Robot Brain Architecture Level Using Reinforcement Learning. In: Röfer, T., Mayer, N.M., Savage, J., Saranlı, U. (eds) RoboCup 2011: Robot Soccer World Cup XV. RoboCup 2011. Lecture Notes in Computer Science, vol 7416. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-32060-6_45

DOI: https://doi.org/10.1007/978-3-642-32060-6_45

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-32059-0

Online ISBN: 978-3-642-32060-6

eBook Packages: Computer Science, Computer Science (R0)