Abstract

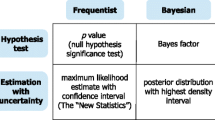

The power fallacy refers to the misconception that what holds on average (across an ensemble of hypothetical experiments) also holds for each case individually. According to the fallacy, high-power experiments always yield more informative data than do low-power experiments. Here we expose the fallacy with concrete examples, demonstrating that a particular outcome from a high-power experiment can be completely uninformative, whereas a particular outcome from a low-power experiment can be highly informative. Although power is useful in planning an experiment, it is less useful, and sometimes even misleading, for making inferences from observed data. To make inferences from data, we recommend the use of likelihood ratios or Bayes factors, which are the extension of likelihood ratios beyond point hypotheses. These methods of inference do not average over hypothetical replications of an experiment, but instead condition on the data that have actually been observed. In this way, likelihood ratios and Bayes factors rationally quantify the evidence that a particular data set provides for or against the null or any other hypothesis.
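The contrast between power and a likelihood ratio can be sketched numerically. The setup below is a hypothetical illustration, not one of the article's own examples: it assumes a one-sided z test whose statistic is N(0, 1) under the null hypothesis and N(`shift`, 1) under a point alternative, and the names `power_one_sided`, `likelihood_ratio`, and `shift` are introduced here only for illustration.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: mean 0, sd 1


def power_one_sided(shift, alpha=0.05):
    """Power of a one-sided z test when the statistic is N(shift, 1) under H1."""
    critical_z = STD_NORMAL.inv_cdf(1 - alpha)
    return 1 - STD_NORMAL.cdf(critical_z - shift)


def likelihood_ratio(z_observed, shift):
    """Density of the observed z under H1 (mean = shift) divided by its density under H0 (mean = 0)."""
    return NormalDist(mu=shift).pdf(z_observed) / STD_NORMAL.pdf(z_observed)


critical_z = STD_NORMAL.inv_cdf(0.95)

# Assumed high-power design: the shift is chosen so that power equals 0.95.
high_shift = 2 * critical_z
high_power = power_one_sided(high_shift)
# Observed outcome that only just reaches significance.
lr_high = likelihood_ratio(critical_z, high_shift)

# Assumed low-power design: a small shift, but a strikingly large observed z.
low_shift = 1.0
low_power = power_one_sided(low_shift)
lr_low = likelihood_ratio(4.0, low_shift)

print(f"high-power design: power = {high_power:.2f}, likelihood ratio = {lr_high:.2f}")
print(f"low-power design:  power = {low_power:.2f}, likelihood ratio = {lr_low:.2f}")
```

Under these assumptions, the just-significant outcome from the high-power design lies exactly halfway between the two hypothesized means, so the likelihood ratio is 1 and the data do not discriminate between the hypotheses. The large observed z from the low-power design yields a likelihood ratio of about 33 in favor of the alternative, even though power is only about 0.26.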

Notes

While odds lie on a naturally meaningful scale calibrated by betting, characterizing evidence through verbal labels such as “moderate” and “strong” is necessarily subjective (Kass & Raftery, 1995). We believe the labels are useful because they facilitate scientific communication, but they should only be considered an approximate descriptive articulation of different standards of evidence.

In order to obtain a t value of 5 with a sample size of only 10 participants, the precognition score needs to have a large mean or a small variance.

In order to obtain a t value of 1.7 with a sample size of 100, the precognition score needs to have a small mean or a high variance.
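The arithmetic behind these two notes can be sketched as follows, assuming a one-sample t test in which t equals the sample mean divided by (sample standard deviation / √n). The notes do not state the test explicitly, so this form is an assumption, and `standardized_effect` is a hypothetical helper name.

```python
import math


def standardized_effect(t_value, n):
    """Sample mean divided by sample sd, recovered from a one-sample t value (assumed form)."""
    return t_value / math.sqrt(n)


# t = 5 with only 10 participants requires a mean that is large relative to the sd.
small_sample_effect = standardized_effect(5.0, 10)

# t = 1.7 with 100 participants requires a mean that is small relative to the sd.
large_sample_effect = standardized_effect(1.7, 100)

print(f"n = 10,  t = 5.0: mean/sd = {small_sample_effect:.2f}")
print(f"n = 100, t = 1.7: mean/sd = {large_sample_effect:.2f}")
```

Under this assumption the mean-to-standard-deviation ratio is about 1.58 in the first case and 0.17 in the second.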

References

Bakker, M., van Dijk, A., & Wicherts, J. M. (2012). The rules of the game called psychological science. Perspectives on Psychological Science, 7, 543–554.

Bem, D. J. (2011). Feeling the future: experimental evidence for anomalous retroactive influences on cognition and affect. Journal of Personality and Social Psychology, 100, 407–425.

Berger, J. O., & Wolpert, R. L. (1988). The likelihood principle Vol. 2. Hayward (CA): Institute of Mathematical Statistics.

Button, K. S., Ioannidis, J. P. A., Mokrysz, C., Nosek, B. A., Flint, J., Robinson, E. S. J., & Munafò, M. R. (2013). Power failure: why small sample size undermines the reliability of neuroscience. Nature Reviews Neuroscience, 14, 1–12.

Cohen, J. (1990). Things I have learned (thus far). American Psychologist, 45, 1304–1312.

Faul, F., Erdfelder, E., Lang, A.-G., & Buchner, A. (2007). G*Power 3: a flexible statistical power analysis program for the social, behavioral, and biomedical sciences. Behavior Research Methods, 39, 175–191.

Galak, J., LeBoeuf, R. A., Nelson, L. D., & Simmons, J. P. (2012). Correcting the past: failures to replicate Psi. Journal of Personality and Social Psychology, 103, 933–948.

Gönen, M., Johnson, W. O., Lu, Y., & Westfall, P. H. (2005). The Bayesian two-sample t test. The American Statistician, 59, 252–257.

Ioannidis, J. P. A. (2005). Why most published research findings are false. PLoS Medicine, 2, 696–701.

Jaynes, E. T. (2003). Probability theory: the logic of science. Cambridge: Cambridge University Press.

Jeffreys, H. (1961). Theory of probability (3rd ed.). Oxford: Oxford University Press.

Johnson, V. E. (2013). Revised standards for statistical evidence. Proceedings of the National Academy of Sciences of the United States of America, 110, 19313–19317.

Kass, R. E., & Raftery, A. E. (1995). Bayes factors. Journal of the American Statistical Association, 90, 773–795.

Lee, M. D., & Wagenmakers, E.-J. (2013). Bayesian modeling for cognitive science: a practical course. Cambridge University Press.

Morey, R. D., & Wagenmakers, E.-J. (2014). Simple relation between Bayesian order-restricted and point-null hypothesis tests. Statistics and Probability Letters, 92, 121–124.

Pratt, J. W. (1965). Bayesian interpretation of standard inference statements. Journal of the Royal Statistical Society B, 27, 169–203.

Ritchie, S. J., Wiseman, R., & French, C. C. (2012). Failing the future: three unsuccessful attempts to replicate Bem’s ‘retroactive facilitation of recall’ effect. PLoS ONE, 7, e33423.

Sellke, T., Bayarri, M. J., & Berger, J. O. (2001). Calibration of p values for testing precise null hypotheses. The American Statistician, 55, 62–71.

Sham, P. C., & Purcell, S. M. (2014). Statistical power and significance testing in large-scale genetic studies. Nature Reviews Genetics, 15, 335–346.

Author Note

This work was supported by an ERC grant from the European Research Council. Correspondence concerning this article may be addressed to Eric-Jan Wagenmakers, University of Amsterdam, Department of Psychology, Weesperplein 4, 1018 XA Amsterdam, the Netherlands. Email address: EJ.Wagenmakers@gmail.com.

Cite this article

Wagenmakers, EJ., Verhagen, J., Ly, A. et al. A power fallacy. Behav Res 47, 913–917 (2015). https://doi.org/10.3758/s13428-014-0517-4

DOI: https://doi.org/10.3758/s13428-014-0517-4