Abstract

The majority of research on visual memory has taken a compartmentalized approach, focusing exclusively on memory over shorter or longer durations, that is, visual working memory (VWM) or visual episodic long-term memory (VLTM), respectively. This tutorial provides a review spanning the two areas, with readers in mind who may only be familiar with one or the other. The review is divided into six sections. It starts by distinguishing VWM and VLTM from one another, in terms of how they are generally defined and their relative functions. This is followed by a review of the major theories and methods guiding VLTM and VWM research. The final section is devoted to identifying points of overlap and distinction across the two literatures to provide a synthesis that will inform future research in both fields. By more intimately relating methods and theories from VWM and VLTM to one another, new advances can be made that may shed light on the kinds of representational content and structure supporting human visual memory.

Introduction

Human visual memory is impressive and fundamental to cognition, and it remains one of the core areas of human cognition that artificial intelligence (AI) systems have yet to replicate (Andreopoulos & Tsotsos, 2013; DiCarlo, Zoccolan, & Rust, 2012; Pinto, Cox, & DiCarlo, 2008). We can recognize objects we have seen before when given incredibly brief exposures (Biederman & Ju, 1988; Potter, 1976; Potter & Levy, 1969), despite drastic image-level differences across encounters (through variability in factors such as orientation and lighting: Cox & DiCarlo, 2008; Cox, Meier, Oertelt, & DiCarlo, 2005; DiCarlo & Cox, 2007; Rust & Stocker, 2010; Wallis & Bülthoff, 2001), and even when we have not seen an object for months, years, or even decades (e.g., many people can distinctly remember what subway tokens or an Atari game system look like). The exact computational and neural explanations of this ability remain unknown and are likely key to understanding disorders that impair visual memory and recognition, such as dementia.

The majority of research on visual memory has taken a compartmentalized approach, focusing exclusively on memory over shorter or longer durations, that is, on working or episodic long-term memory, respectively. The purpose of the present review is simply to supply a primer that spans the two areas, with readers in mind who may only be familiar with one or the other. Through this survey, I aim to identify points of overlap and distinction across the two literatures to provide a synthesis that will inform future research investigating the major questions remaining on human visual memory.

Distinguishing visual working memory and visual episodic long-term memory

Memories for visual information are typically distinguished as belonging to either visual working memory (VWM) or visual episodic long-term memory (VLTM). VWM is considered an online system that retains and manipulates information over the short term (Baddeley & Hitch, 1974; Cowan, 2008; Ma, Husain, & Bays, 2014; Vogel, Woodman, & Luck, 2006), whereas VLTM is typically defined as the passive storage of visual information over longer periods of time (Brady, Konkle, & Alvarez, 2011; Cowan, 2008; Squire, 2004). Further distinguishing these two systems is that they appear to rely on distinct neural substrates. For example, VWM tends to be associated with activity in the occipital and parietal cortex (Harrison & Tong, 2009; Pessoa, Gutierrez, Bandettini, & Ungerleider, 2002; Todd & Marois, 2004), whereas VLTM is associated with the use of the medial temporal lobe and hippocampus (Squire, 2004; Yassa et al., 2011; Yonelinas, Aly, Wang, & Koen, 2010).

Broadly speaking, it is intuitive to distinguish different memory systems, especially VWM and VLTM, by the time scale over which the memory takes place. However, while time may constrain systems in different ways, it may not be sufficient to understand all possible differences and similarities between them. A more holistic approach may be to distinguish these systems in terms of their functions. If we know the function of a system, we can clearly identify the specific challenges facing such functions, even if we do not know the specific solutions to these challenges a system may choose to employ.

The function of VWM

When establishing the function of VWM, researchers early on defined it as a space divided between storage and other processing demands (Baddeley & Hitch, 1974). It is thought to be the combination of multiple processes, providing an interface between perception, short-term memory, and other mechanisms such as attention (Cowan, 2008). VWM’s most notable characteristic is that it has a limited capacity (Awh, Barton, & Vogel, 2007; Vogel, Woodman, & Luck, 2001), and it is thought to be a core cognitive process underlying a wide range of behaviors (Baddeley, 2003; Ma et al., 2014). Given these descriptions, the function of VWM may be best described as supporting complex cognitive behaviors that require temporarily storing and manipulating information in order to produce actions.

VWM representations must therefore be in a format that is easily amendable and malleable, while remaining general enough to support a wide range of behaviors: it needs to output representations that can be taken as input by relevant processes. For example, an important role of VWM is to bridge gaps in our perception (both spatially and temporally) created by eye movements. Indeed, VWM has been shown to store features of saccade targets for subsequent comparisons (Hollingworth, Richard, & Luck, 2008), and the contents of VWM influence even simple saccades (Hollingworth, Matsukura, & Luck, 2013). In order to act as this bridge, representations in VWM must be content neutral (i.e., not specific to any one feature or class of objects) and also malleable (in order to integrate and compare information across time).

The function of VLTM

VLTM is typically described in terms of episodic recognition, which is commonly defined as the conscious recognition of visual events (Brady et al., 2011; Squire, 2004). This is a generally vague description that does not elucidate the function of VLTM. However, we can find insight into the function of VLTM from the study of object recognition. The goal of any object recognition system is to identify an object based on some previous input. Clearly, when VLTM studies utilize images of real-world objects in their tasks, the situation is analogous to an object recognition task. When referring to object recognition here, I mean it explicitly in the context of episodic long-term memories for objects, as opposed to other kinds of learning utilized by long-term memory, such as semantic or categorical information about those objects.

The fundamental challenge facing object recognition is the need for the system to demonstrate both tolerance and discrimination (see Footnote 1). When we see an object again, we never encounter that same object exactly the same way twice. We see things briefly, in different contexts, with differences in lighting, viewpoint, or orientation that make direct comparisons of the perceptual input to our long-term memory representations next to impossible (Cox & DiCarlo, 2008; Cox et al., 2005; DiCarlo & Cox, 2007; Rust & Stocker, 2010; Wallis & Bülthoff, 1999). In order to recognize objects over the long term, our representations need to demonstrate tolerance. By tolerance, I am referring to our ability to recognize the same object across different encounters.

When perceiving or recognizing objects over the short term, your visual system has multiple cues to assist in acquiring tolerance. For example, if you toss a ball and watch it move through the air, the image hitting your retina transforms considerably over the ball’s journey. However, despite drastic changes in the appearance of the ball from moment to moment, you are still able to recognize that the ball is the same at each point in time. This is because your visual system can exploit expectations of spatiotemporal continuity (where an object is in space and time) and as a consequence can demonstrate tolerance to these changes (Kahneman, Treisman, & Gibbs, 1992; Scholl & Flombaum, 2010; Schurgin & Flombaum, 2018). However, over the long term, spatiotemporal continuity and other cues used to facilitate tolerance are unavailable, and thus tolerance must be reflected within long-term memory representations themselves.

While tolerance allows us to identify previous experiences despite considerable changes across encounters, there is a potential danger of overtolerance. One could imagine that if a representation is too tolerant, then it risks an observer mistaking every object she encounters as one that she’s seen before. To address this problem, our representations must also demonstrate a certain level of discrimination. In contrast to tolerance, discrimination refers to our ability to distinguish similar but distinct inputs from one another.

For example, imagine one day my coworker and I are having lunch in a break room and we each have an apple. We place our lunches beside each other and then both exit the room to grab some napkins. When we return, there are two apples on the table that are quite similar in appearance, but one apple is mine and the other is my coworker’s. In order to know which is mine, I must have a representation that is discriminating. Despite the similarities between both apples, I should be able to identify which apple belongs to me.

Similar to issues created by overtolerance, if a memory system is too discriminating then it would operate poorly since an observer would likely fail to recognize any previously seen input unless it was exactly the same as when it was first encountered. Given the variability in our visual inputs described earlier (e.g., due to differences in size, lighting, orientation, etc.), as well as the general assumption that our visual experience is noisy, this is simply an impossible threshold to meet. As a result, there is a natural tension that VLTM must manage between tolerance and discrimination. This is the function of VLTM—to manage this tension in order to recognize a previous visual experience given new input.

Core concepts of VLTM

As discussed previously, VLTM is typically defined as the passive storage system of visual episodic memory. The function of this system is to recognize a past visual experience given new input, while balancing the need for both tolerance and discrimination. Having defined VLTM and identified its function, we can expand our understanding of VLTM by establishing what core concepts researchers have identified in the field.

Familiarity and recollection

When trying to understand the nature of VLTM, researchers have focused on investigating the kinds of information VLTM may utilize. One particularly influential distinction in VLTM (and episodic long-term memory research generally) has been recollection and familiarity. Recollection refers to an observer accessing specific details about a previously experienced item. For example, let us say you happen to have gone to a friend’s house for a party the other night and met a variety of new people. In particular, you happened to meet a man named Bob, with whom you talked at length about the Chicago Cubs. The next day, you enter a coffee shop and as you get in line you happen to see that Bob is in front of you. Despite seeing Bob after a long delay, and in a completely new context, you remember all the specific details associated with him—his funky hairstyle, the color of his eyes, along with all the details of when you last met (i.e., your conversation about the Cubs, etc.).

In contrast to recollection, familiarity refers to an observer knowing an item is old or new, without having specific details associated with that memory—“familiarity is the process of recognizing an item on the basis of its perceived memory strength but without retrieval of any specific details about the study episode” (Diana, Yonelinas, & Ranganath, 2007, p. 379). This notion of familiarity is quite common, and perhaps something we have all experienced. Given the example above, consider an alternate universe where you see Bob the next day in the coffee shop. While you recognize him as someone you know, you cannot remember any of the specific details related to this knowledge, including where you met him or what context you know him from. This is familiarity.

Dual-process signal-detection model

One model used to explain recollection and familiarity processes is the dual-process signal-detection (DPSD) model (Yonelinas et al., 2010). The DPSD model specifies that familiarity is primarily a signal-detection process of discriminating between two Gaussian distributions of memory-match strength that differ on average between old and new items, where familiarity occurs when a signal exceeds a decision criterion (see Fig. 1). Therefore, your ability to be familiar with something you have seen before depends on the strength of the memory signal and an individual’s decision criterion (how liberal or conservative that individual is being with their decisions). Experiences that are very different (i.e., new) should produce very little to no overlap, so familiarity should not occur. But if given visual inputs that are highly similar (even if you have not necessarily encountered them before), this will likely result in a familiarity signal.
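
The familiarity component described above can be sketched numerically. The following is a minimal illustration, not the fitting procedure used in the cited work: it assumes equal-variance Gaussian distributions with unit standard deviation, with new items centered at 0 and old items centered at a hypothetical sensitivity value `d_prime`. The function name and parameter values are illustrative assumptions.

```python
from statistics import NormalDist


def familiarity_rates(d_prime: float, criterion: float) -> tuple[float, float]:
    """Return (hit_rate, false_alarm_rate) for a familiarity judgment.

    Assumption: memory-match strength is Gaussian with unit variance,
    centered at 0 for new items and at d_prime for old items.
    """
    new_items = NormalDist(mu=0.0, sigma=1.0)
    old_items = NormalDist(mu=d_prime, sigma=1.0)
    # An item feels familiar when its strength exceeds the decision criterion.
    hit_rate = 1.0 - old_items.cdf(criterion)
    false_alarm_rate = 1.0 - new_items.cdf(criterion)
    return hit_rate, false_alarm_rate


# A more conservative (higher) criterion lowers both hits and false alarms.
for criterion in (0.25, 0.75, 1.25):
    hits, false_alarms = familiarity_rates(d_prime=1.5, criterion=criterion)
    print(f"criterion={criterion:.2f}  hits={hits:.3f}  false alarms={false_alarms:.3f}")
```

Under these assumptions, a new item that closely resembles studied items corresponds to a strength value drawn from the upper tail of the new-item distribution, which is how a familiarity signal can arise for something never encountered.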

Fig. 1. Illustration of the dual-process signal-detection (DPSD) and continuous dual-process (CDP) models. In the DPSD model, familiarity and recollection are two distinct but parallel processes. Familiarity is a signal-detection process of discriminating between two Gaussian distributions of old and new items, where familiarity occurs when the signal exceeds a decision criterion (i.e., participants “know” they saw the item). Recollection is a threshold-based process in which an item is either recollected or not, depending on whether its signal strength passes a certain threshold. In the diagram, both Stimuli A and B pass the threshold and would thus be recollected with the same amount of detail, even though each stimulus may elicit a different amount of memory-match signal strength. Stimulus C does not pass the threshold and would thus not be recollected. In the CDP model, both familiarity and recollection vary continuously and operate using signal-detection-based processes. These processes are interactive and are combined during decision-making, resulting in a single distribution for studied items. In the simplest version of the model, familiarity occurs when the memory-match signal exceeds a lower decision criterion (i.e., “know”), whereas recollection occurs when the signal exceeds a higher decision criterion (i.e., participants “remember” the details of the item).

The DPSD model defines recollection as a threshold-based process. In order to recollect an item, an observer must collect enough information. Once enough information is collected, it has passed the “threshold,” and specific details will then be recollected. Recollection is therefore an “all-or-nothing” process: an item is either recollected or it is not. It does not matter if an observer collects more information as long as the threshold has been passed. However, if an observer does not accumulate enough information, the threshold is not passed and recollection will not occur. Figure 1 schematizes this process. For example, let us imagine you have a memory associated with your favorite coffee mug, which has a certain recollection threshold. If you see your coffee mug clearly on your desk, then you receive a strong memory signal (Stimulus A), so the threshold is passed and your memory is recollected. The next day you see the coffee mug on your desk, but it is in a different place and is partially occluded (Stimulus B). While the perceptual information and signal provided are not as strong, you still gain enough information to pass the threshold, and your memory is recollected with the same amount of detail as the day before. However, later in the week, you see your coffee mug from very far away (Stimulus C). While there is some overlapping perceptual information here and you do receive some memory signal, it is not enough to pass your threshold and so you do not recollect the memory.

It is important to note that the example described above is provided to illustrate how a threshold process might operate (specifically within one potential visual context). While sensory overlap may drive recollection automatically in some cases, it can operate in many other circumstances as well. Indeed, in many studies of recollection, subjects are instructed to attempt recall, with varying levels of success. In these studies, a subject’s success or failure to recall information may depend on higher or lower thresholds relative to the memories being recalled.

The DPSD model is based on the claim that familiarity and recollection are two distinct but parallel processes. Despite their distinction from one another, humans likely utilize both processes in a variety of long-term memory tests and behaviors. Familiarity or recollection would both be sufficient to recognize something you have seen before. For example, in order to recognize someone you have previously met, you could rely on familiarity (i.e., a feeling that you have seen that person before) or recollection (i.e., specific details related to that person). Along similar lines, many common long-term memory tests ask observers to classify images as ones they saw during encoding (old) or as completely new images. An observer could use either familiarity or recollection to correctly classify an image as old.
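
The idea that either process alone is sufficient for an “old” response can be written as a simple probability calculation. The sketch below is an illustration under stated assumptions rather than the authors' implementation: recollection succeeds with a hypothetical probability `recollection`, familiarity is the equal-variance Gaussian process with hypothetical parameters `d_prime` and `criterion`, the two processes are treated as independent, and new items are assumed never to be recollected.

```python
from statistics import NormalDist


def dpsd_old_rate(recollection: float, d_prime: float,
                  criterion: float, studied: bool) -> float:
    """Probability of responding "old" in a DPSD-style sketch.

    Assumptions: unit-variance Gaussian familiarity, independent
    all-or-none recollection, and no recollection of new items.
    """
    mean_strength = d_prime if studied else 0.0
    familiarity = 1.0 - NormalDist(mu=mean_strength, sigma=1.0).cdf(criterion)
    if not studied:
        return familiarity
    # Recollection settles the decision when it succeeds; otherwise the
    # observer falls back on the familiarity signal alone.
    return recollection + (1.0 - recollection) * familiarity


print(dpsd_old_rate(recollection=0.4, d_prime=1.0, criterion=0.5, studied=True))
print(dpsd_old_rate(recollection=0.4, d_prime=1.0, criterion=0.5, studied=False))
```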

Continuous dual-process model

Numerous studies exist in support of the DPSD, extending past humans (Bowles et al., 2010; Yonelinas et al., 2010) to research involving rodents (Fortin, Wright, & Eichenbaum, 2004) and monkeys (Miyamoto et al., 2014). However, this is not the only computational approach to understanding the possible distinction between familiarity and recollection. For example, Dede, Squire, and Wixted (2014) suggested these processes are better described computationally by the continuous dual-process (CDP) model. In this model, familiarity operates exactly as defined in the DPSD model. However, unlike the DPSD, the CDP model proposes that recollection can vary continuously using the same signal-detection process as familiarity. This means recollection is not an all-or-nothing process, and that different memories may elicit different memory-match signals that will vary in detail. Furthermore, during memory decision-making, familiarity and recollection are combined and are thus interactive. Since both familiarity and recollection can vary in confidence and accuracy, observers can combine both sources of information to inform subsequent performance. As a result, the CDP model has only a single distribution for studied items, reflecting variable information from both familiarity and recollection. In the simplest version of this model, familiarity occurs when the memory-match signal exceeds a lower decision criterion (i.e., participants “know” they saw an item), whereas recollection occurs when the signal exceeds a higher decision criterion (i.e., participants “remember” specific details of the item; Dunn, 2004; Wixted & Stretch, 2004; see Fig. 1).
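
The simplest version of the CDP model, with one studied-item distribution and two decision criteria, can be sketched as follows. This is an illustrative reading of the description above, assuming a unit-variance Gaussian for the combined memory-match signal; the function names and numerical values are hypothetical.

```python
from statistics import NormalDist


def cdp_response(strength: float, know_criterion: float,
                 remember_criterion: float) -> str:
    """Map one combined memory-match strength onto a response label."""
    if know_criterion > remember_criterion:
        raise ValueError("the 'know' criterion must not exceed the 'remember' criterion")
    if strength >= remember_criterion:
        return "remember"
    if strength >= know_criterion:
        return "know"
    return "new"


def cdp_response_rates(d_prime: float, know_criterion: float,
                       remember_criterion: float) -> dict[str, float]:
    """Expected response proportions for studied items.

    Assumption: the combined signal for studied items is Gaussian with
    mean d_prime and unit variance.
    """
    if know_criterion > remember_criterion:
        raise ValueError("the 'know' criterion must not exceed the 'remember' criterion")
    studied = NormalDist(mu=d_prime, sigma=1.0)
    below_know = studied.cdf(know_criterion)
    below_remember = studied.cdf(remember_criterion)
    return {
        "new": below_know,
        "know": below_remember - below_know,
        "remember": 1.0 - below_remember,
    }


print(cdp_response_rates(d_prime=1.5, know_criterion=0.5, remember_criterion=1.5))
```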

In contrast, the DPSD model assumes these two processes are independent but operate in parallel. As a result, they should not interact. For example, if recollection is successful, then an observer would not use any familiarity information at test, as recollection would provide all the evidence needed to make a decision. However, if recollection failed, then an observer would rely solely on the strength of the familiarity signal.

Both the CDP and DPSD models make similar predictions about performance in recognition tasks but differ in the types of systematic errors they would produce. By modeling systematic errors, Dede et al. (2014) found that human data were better fit by the CDP model than by a DPSD equivalent. This demonstrates that the CDP model is consistent with previous research in support of the DPSD model, but may more accurately capture the specific computations used in recognition decision-making. It also alters the previous distinctions between familiarity and recollection processes by suggesting an interactive relationship, rather than one that is orthogonal.

Potential limitations

It is unclear if researchers intend for the familiarity/recollection dichotomy to designate different types of representations, or different affordances from a single VLTM representation. In general, a feature of these models that leaves them difficult to interpret is that they are content neutral; the same models are applied in the same way regardless of what is to be remembered, and without any claims about how those things are described in symbolic or activation terms.

For example, it is possible that familiarity and recollection each reflect memories with different contents, something like a gist (familiarity) and a more detailed representation (recollection). Yonelinas et al. (2010) appear to endorse this kind of view, as they argue that recollection and familiarity are separate processes that make independent contributions to recognition memory. This is an intuitive conclusion under the DPSD model, as it specifies that familiarity is a signal-detection-based process whereas recollection is a threshold-based process, and the two are thus likely to differ in their content and format. This would suggest that individuals are able to make accurate familiarity-based judgments even when detailed representations fail to consolidate.

But under this view, we should then want specific accounts of the contents and formats in each representation. What goes into a gist? One possibility is that it is simply categorical or semantic knowledge for something you have seen before. Such knowledge would be able to interact with more detailed representations, allowing for observers to utilize both types of information when making memory judgments. However, defining gist in this way seems problematic, since almost any information related to a category should therefore create some familiarity signal.

Defining recollection and familiarity as exclusive processes also creates potential limitations in the kinds of information that gist and more detailed representations can provide. Can an observer have familiarity with some aspects of an object and recollect others? Given a DPSD framework, this is not possible. Familiarity is recognition without any specific details, and recollection is an all-or-none process. What happens then when an observer confuses a previously seen object with a similar-looking item? Does this mean that they incorrectly recollected that object? Or does it mean that they relied only on familiarity to make their judgment? It is not clear in the DPSD model what information an observer may be relying on when making such errors.

In contrast, the CDP model would be able to account for such differences, as recollection can vary continuously (and is thus not all-or-none) and is also integrated into familiarity-based information when making a decision. Given that both familiarity and recollection can vary continuously, this creates more flexibility for different kinds of memory performance. It is possible for observers to have a strong familiarity signal (and more confidently report they “know” they saw an item), or other experiences where recollection occurred but the signal was weak, resulting in an observer reporting they “know” they saw an item rather than they “remember” all the details related to that item (Ingram, Mickes, & Wixted, 2012). For example, an observer could recollect varying amounts of specific details related to an object, which is not possible under the DPSD model.

However, the CDP model has its own limitations. The CDP provides a framework to explain the nature of memory signals but does not explain their underlying representations. The information and structure contained in memory remains unspecified (both in the CDP and DPSD). Again, it would be useful to specify the contents and formats of the representation in some detail to understand how it produces the response patterns taken to indicate familiarity and recollection.

Pattern separation and pattern completion

Another way researchers have tried to understand the nature of VLTM is to take an approach informed by neuroanatomy. The idea is that certain brain areas related to memory have specific properties that may make them amenable to completing different types of processes. Pattern completion and pattern separation together form one such model, which seeks to explain distinctions in memory and is also supported by brain localization properties.

Pattern completion is the process by which incomplete or degraded signals are filled in based on previously stored representations (Yassa & Stark, 2011). After you see an object, the next time you encounter that object the image hitting your retina is not going to be exactly the same (due to differences in viewpoint, orientation, lighting, etc.). Pattern completion is the process that would assist in VLTM being tolerant to variability in inputs related to the same object across encounters.

Pattern separation is the process that reduces the overlap between similar inputs in order to reduce interference at later recall (Yassa & Stark, 2011; see Fig. 2). This process supports the discriminatory ability of VLTM. It seeks to parse overlapping signals into distinct representations in order to assist in the individual recall of both items.

Fig. 2. Illustration of pattern separation and pattern completion processes. Pattern separation is the process by which two overlapping signals are recognized as distinct from one another. Pattern completion is the process by which two overlapping signals are recognized as being from the same source and are combined into a single representation.

This model was in part designed to address the issue that we want to be able to remember certain events as related but other events as distinct (i.e., tolerance and discrimination). For example, even when an observer is given different stimuli, considerable overlap might exist between the inputs into the system, causing different items to be incorrectly identified as related to one another (i.e., the problem of saturation). One possible way to address this issue would be to make the input representation very sparse, with a large and distributed network of neurons creating multiple potential pathways of activation for inputs. The hippocampus, which previous research has shown is critical to episodic and recognition memory, has a region with such properties: the dentate gyrus (DG). This is consistent with pattern separation, which seeks to separate overlapping representations as distinct from one another.

Another issue this model sought to address is how we remember certain events as being related to one another. Specifically, what is the process that recognizes two partially overlapping signals as arising from the same source? One possible solution would be to have a set of pathways that feeds back into itself to fill in potential missing information, so that partial or incomplete signals can be recognized. Another area of the hippocampus, CA3, has such properties, through recurrent collaterals that form a feedback loop (i.e., the axons of neurons in the circuit circle back to the inputs [dendrites] of neighboring neurons), consistent with pattern completion computations (Yassa & Stark, 2011).
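
To make the notion of a feedback loop filling in missing information concrete, the sketch below uses a Hopfield-style autoassociative network. This is a swapped-in textbook illustration of recurrent pattern completion, not a model specified by Yassa and Stark (2011) and not a claim about how CA3 actually computes. The stored patterns, the Hebbian learning rule, and all names are assumptions chosen for the example.

```python
def train_hebbian(patterns: list[list[int]]) -> list[list[float]]:
    """Build symmetric recurrent weights from +1/-1 patterns (Hebbian rule)."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += pattern[i] * pattern[j] / n
    return weights


def complete(weights: list[list[float]], cue: list[int],
             max_sweeps: int = 10) -> list[int]:
    """Feed the state back through the recurrent weights until it is stable."""
    state = list(cue)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(state)):
            total = sum(w * s for w, s in zip(weights[i], state))
            updated = 1 if total >= 0 else -1
            if updated != state[i]:
                state[i] = updated
                changed = True
        if not changed:
            break
    return state


# Two hypothetical stored "memories" (chosen to be orthogonal).
memory_a = [1, 1, 1, 1, -1, -1, -1, -1]
memory_b = [1, -1, 1, -1, 1, -1, 1, -1]
weights = train_hebbian([memory_a, memory_b])

# A degraded cue: memory_a with its first two elements corrupted.
degraded_cue = [-1, -1, 1, 1, -1, -1, -1, -1]
print(complete(weights, degraded_cue) == memory_a)
```

In this toy network the recurrent connections restore the corrupted elements of the cue, which is the sense in which a partial or incomplete signal can be recognized as arising from a stored source.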

Even though there can be many different computations in support of distinctions in memory, the framework of pattern separation and pattern completion is made more viable by taking into account the specific properties of brain areas that may implement such processes. In fact, recent evidence using rats in a spatial maze has shown that the CA3 demonstrated coherent responses during conflict (when the global cues of the maze were rotated but local information remained the same), consistent with pattern completion, whereas the DG demonstrated disrupted responses during such conflict, consistent with pattern separation (Neunuebel & Knierim, 2014). While the contents of these processes are not specified, they provide a useful example for how a distinction supported by brain localization properties may inform our understanding of memory.

Potential limitations

Unfortunately, many aspects of pattern separation and pattern completion remain unspecified. This results in a host of potential limitations. Often, this framework is referred to as a “neurocomputational” approach, but the computations of these processes are never defined. There are many possible ways one could design a system to parse or complete the same overlapping inputs that would result in vastly different outputs. In addition, the content and format of these inputs are not specified. This information would greatly affect the kinds of computations one might use to address these types of processes. While the specific representational content and format of pattern separation and completion remains elusive, researchers have begun using new approaches to further define these processes. For example, Schapiro, Turk-Browne, Botvinick, and Norman (2017) used hippocampal neural network modeling to make more specific assumptions for how pattern separation and completion may be instantiated. Although these assumptions remain primarily in neural terms, further specificity in the neural pathways and areas involved in these processes may help inform their potential representational content and format.

Pattern separation and pattern completion are also limited by their exclusive reliance on properties of the hippocampus to explain memory-based processes. Pattern completion shares similarities with familiarity, as both processes could be used to explain how an observer might recognize a previously seen image with degraded input. But pattern completion is specified to occur in the CA3 region of the hippocampus. Thus, it is unable to explain why amnesic patients with brain damage to the hippocampus can still demonstrate unimpaired associative (or familiarity-based) memory performance (Gabrieli, Fleischman, Keane, Reminger, & Morrell, 1995).

Theoretical overlap and conclusion

Theories of familiarity and recollection bear some resemblance to pattern separation and completion. For example, familiarity refers to some sort of gist knowledge, when an observer knows they have seen something before but without specific details associated with that memory. And pattern completion is the process through which two overlapping signals are recognized as arising from the same source. One way the gist knowledge of familiarity could be expressed is through a mechanism such as pattern completion, that is, recognizing overlap between signals to recognize an item as previously seen, without necessarily extracting specific details associated with a previous memory.

Along similar lines, recollection refers to an observer accessing specific details about a previously experienced item. This process is critical for our discriminatory ability to distinguish similar-looking items from ones we have seen before. Indeed, in order to assess recollection-based memory, researchers often give participants lures (very similar-looking items) at test and assess their ability to discriminate these lures. Pattern separation describes exactly the process through which a lure may be distinguished as being distinct from a previously studied item. Thus, while pattern separation has distinct functions and neural predictions in comparison to recollection, there is clearly overlap between these two theories of memory.

Regardless of the potential similarities between these models, and despite disagreements in the field as to which better explains VLTM, there is a critical consensus among these models that there are different kinds of information VLTM may rely on. This generally involves some sort of gist information associated with a previous item (familiarity, pattern completion), as well as more specific visual details related to that same item (recollection, pattern separation). Both kinds of information likely affect decision-making in a variety of VLTM tasks. As a result, it is important to keep in mind that some tasks may rely on one process or kind of information more than another.

Common methods in VLTM

Old/similar/new judgment

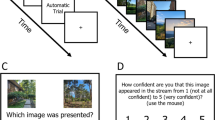

One way to measure the discriminatory ability of VLTM is to utilize a paradigm often referred to as the old/similar/new judgment. Participants first view and encode images of objects, typically while doing an incidental encoding cover task (e.g., would this object fit in a shoe box?). At test, participants serially view objects that were present in the encoding task (old images), objects similar but not identical to ones in the encoding task (similar images), and completely new objects that were not present during encoding (new images). Participants then judge whether the images they see are old, similar, or new (see Fig. 3).

Illustration of typical VLTM methods. In a general incidental encoding task, participants see a stream of images of real-world objects and make some judgment about those objects (e.g., indoor/outdoor, does this fit in a shoe box?). Afterward, they may be tested using several methods. Using old/similar/new judgment, participants are shown objects that are exactly the same as at encoding (old), similar but not identical to images at encoding (similar), and completely new images (new), and are asked to classify them accordingly. In two-alternative forced-choice tests, they are shown two images, one they have seen before and either a completely new (old–new comparison) or similar-looking object (old–similar comparison), and are asked to judge which of the two images they have previously seen. In delayed-estimation tasks, participants are initially shown a grayscale image of a previously seen object and are asked to report its color using a color wheel. (Color figure online)

The primary purpose of this task is to evaluate the discriminatory ability of VLTM, and how memories may acquire different levels of discriminability. For example, correctly classifying a similar item likely requires an observer to have more specific details in memory (i.e., recollection-based) than correctly identifying an old item, which could be accomplished using a gist-based or familiarity-based process (Kensinger, Garoff-Eaton, & Schacter, 2006; Kim & Yassa, 2013; Schurgin & Flombaum, 2017; Schurgin, Reagh, Yassa, & Flombaum, 2013; Stark, Yassa, Lacy, & Stark, 2013).

A potential limitation of old/similar/new judgments is that it is not clear what constitutes a false alarm for similar items. In contrast, when using a simpler old/new procedure researchers can obtain an unbiased measure of memory discriminability (d') by taking the difference between the normalized proportion of hits (correctly classifying an old item as old) and the normalized proportion of false alarms (incorrectly classifying a new item as old; Green & Swets, 1966). However, what constitutes a false alarm for a similar judgment? Is it classifying an old item as similar? Or a new item as similar? Or a similar item as old? Without a clear understanding of what constitutes a false alarm, it is difficult to normalize responses given to similar items for potential biases. While different analyses have attempted to address this issue, research suggests analyzing old/similar/new responses using a signal-detection-based framework (d_a) may provide an accurate, unbiased measure of memory performance for similar items (see Loiotile & Courtney, 2015).
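
The old/new d' computation described above can be sketched in a few lines. This is a minimal illustration rather than any particular study's analysis pipeline: the function name `d_prime`, the example counts, and the log-linear correction (adding 0.5 to counts so that rates of exactly 0 or 1 remain usable) are assumptions of this sketch.

```python
from statistics import NormalDist


def d_prime(hits, n_old, false_alarms, n_new):
    """Old/new discriminability: z(hit rate) - z(false-alarm rate)."""
    # Log-linear correction (an assumption of this sketch): keeps rates
    # strictly between 0 and 1, where the inverse normal CDF is defined.
    hit_rate = (hits + 0.5) / (n_old + 1)
    fa_rate = (false_alarms + 0.5) / (n_new + 1)
    z = NormalDist().inv_cdf  # "normalizing" a proportion = z-transform
    return z(hit_rate) - z(fa_rate)


# Hypothetical counts: 70 of 100 old items called "old",
# 26 of 100 new items called "old".
print(round(d_prime(70, 100, 26, 100), 2))
```

Note that the function needs a single, well-defined false-alarm rate, which is exactly what is ambiguous for "similar" responses in the old/similar/new task.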

How does emotion affect visual memory?

One study that utilized this method sought to investigate how negative emotional context may affect the likelihood of remembering an item’s specific visual details. Participants first completed an incidental encoding task, where they were exposed to hundreds of images of real-world objects (for 250 ms or 500 ms) and had to judge whether each object would fit in a shoebox. Half of the images were rated as being negative and arousing, and the other objects were rated as neutral. Two days after incidental encoding, participants were then given a surprise test. Participants viewed old images (exactly the same as encoding), similar images (similar but not identical), and completely new images relative to encoding. They were told to classify them accordingly (old/similar/new; Kensinger et al., 2006).

At test, it was observed that for old items, negative emotional context led to an increase in correct classification. This was true for items presented both for 250 ms and 500 ms, but was stronger as encoding time increased. However, for similar items there was no main effect of emotional context or encoding time. As a result of the main effect of emotional context for old images, the researchers concluded that negatively arousing content increased the likelihood that visual details of an object would be remembered (Kensinger et al., 2006). However, given the lack of an effect for similar images, it would be more accurate to conclude that emotion may have enhanced certain aspects of visual memory (i.e., for old images).

How do familiarity and recollection contribute to responses?

This method has also been used to investigate the relative contributions that familiarity and recollection processes may have in the behavioral responses of old, similar, and new judgments. In the study, participants completed a two-stage recognition test. In the first phase, participants saw 128 images of real-world objects on a computer screen for 2 seconds each, and were asked to report whether an object was an “indoor” or “outdoor” object. In the second phase, participants were given a surprise test where they viewed old images (exactly the same as encoding), similar images (similar but not identical), and completely new images. They were told to classify the images as either old, similar, or new. Additionally, after indicating what category an image belonged to, participants were then instructed to indicate whether they “remember” seeing the same image in the study session or if they just “know” that they have seen the same image without any conscious recollection of its original presentation (Kim & Yassa, 2013). It is theorized that “remember” judgments reflect recollection-based processes, whereas “know” judgments reflect familiarity-based processes, although there exist several criticisms that these are not true indices of these processes but rather reflect subjective states of awareness or differences in confidence (Yonelinas, 2002).

As expected, at test they found very different accuracy for classifying old (70% correct), similar (53% correct), and new (74% correct) images. When analyzing these responses according to whether a participant reported they “remembered” (recollection based) or “knew” (familiarity based), a few interesting patterns emerged. When judging old items correctly, observers made primarily “remember” responses, suggesting that correct classifications of old items were primarily driven by recollection. For similar items, there was a slight trend to report “remember” rather than “know,” both when the item was judged as old and when it was judged as similar. This suggests that observers can classify similar items with or without recollection, and that incorrectly identifying similar items (i.e., misclassifying them as old) is not simply driven by familiarity signals (Kim & Yassa, 2013).

Source localization judgments

One approach to evaluating the strength of memory and further distinguishing potential errors is to use a paradigm combining old/new judgments with source localization. At encoding, participants view images of objects, each typically presented in one of four quadrants of the display. Then, at test, participants are shown old, similar, and new images in the center of the screen. They must classify each image as old (previously seen images) or new (similar or new images), and indicate which of the four quadrants the object originally appeared in (Cansino, Maquet, Dolan, & Rugg, 2002; Reagh & Yassa, 2014; see Fig. 4).

Illustration of typical localization VLTM methods. In a general incidental encoding task, participants see a stream of images of real-world objects and make some judgment about those objects (i.e., Does this fit in a shoe box?). Critically, every image is presented in one of four (or more) possible quadrants. After, they may be tested using several methods. Using old/new and localization tasks, participants are shown objects that were exactly the same as encoding (old) and similar-looking or completely new images (new), and are asked to classify them accordingly. If they classify an object as old, they are then asked to indicate which of the four quadrants the image originally appeared in. In classification tasks, participants are shown either repetitions (old image, same location), object lures (similar images, same location), spatial lures (old image, different location), or new images. They are asked to classify the images accordingly

The underlying assumption of source localization manipulations is that more episodic information is retrieved on trials when the source judgment was successful than on trials when it was not. This is similar to the logic behind the remember/know procedure discussed previously. When an observer makes a correct classification and source judgment, the assumption is that this indicates a recollection-like memory, whereas if an observer makes a correct classification of an image but an incorrect source judgment, this may indicate a familiarity-based memory. However, unlike the remember/know procedure, source localization judgments do not rely on the observer’s own introspection in order to classify memory quality. Thus, the aim of the paradigm is to evaluate what percentage of responses using old/new judgments may rely on memories that contain more or less information, and what brain areas may be involved in these processes.

What brain areas and behaviors are involved in source judgments?

Cansino et al. (2002) were interested in using this method to investigate what brain regions may be involved in different memory processes beyond simple item recognition tasks. To accomplish this, participants first viewed images of real-world objects and were asked to judge whether the objects were natural or artificial. Critically, each image was presented in one of four quadrants in the display. After completing the task, participants were then administered a surprise test where previously shown images (old images) were mixed with completely new images. These images were shown in the center of the screen. Participants had to judge whether each image was old or new. They were instructed to press a single key if an image was new, and if an image was old participants indicated which position the image was presented during encoding using one of four keys. If a participant did not know which quadrant an old image originated from, they were instructed to guess.

At test it was found that when classifying previously seen (old) items, observers correctly identified 87% of items presented. Specifically, 60.7% of old items were recognized with a correct source response, and 26.3% were recognized with an incorrect source response. This suggests that even when classifying old and new objects in a typical retrieval task, memories for these items likely contain additional information beyond simply categorical or familiarity-based knowledge. Additionally, they observed via collected fMRI data that when recognizing an old object with a correct versus incorrect source judgment, there was greater activity observed in the right hippocampus and left prefrontal cortex (Cansino et al., 2002). This suggests that memories containing more information may elicit greater memory signals and decision-making coordination.

Potential neural correlates of “what” and “where” memory

Source localization judgments have also been used to explore possible dissociations between object (what) and spatial (where) memories and their potential neural correlates. In the experiment, participants first completed an encoding task where images of real-world objects were presented in one of 31 possible locations on the screen for 3 seconds each. They were instructed to first judge whether the object was an indoor or outdoor object, and then whether the object appeared on the left or right relative to the center of the screen. Afterward, participants were given a surprise test with four possible trial types: repeated images (old images in the same location), lure images (similar images in the original object’s location), spatial lure images (old images in a slightly different location), or new images (not shown during encoding). Participants were instructed to indicate whether an image showed no change, object change, location change, or new (Reagh & Yassa, 2014).

Behaviorally, there was no difference in lure discrimination whether it was an object trial (i.e., similar image) or spatial trial (i.e., old image in slightly different location). This effect was consistent across both high-similarity and low-similarity stimuli. Neuroimaging data were also collected via fMRI and demonstrated unique differences based on lure type. It was observed that the lateral entorhinal cortex (LEC) was more engaged during object lure discrimination than during spatial lure discrimination, whereas the opposite pattern was observed in the medial entorhinal cortex (MEC). Additionally, the perirhinal cortex (PRC) was more active during correct rejections of object than spatial lures, whereas the parahippocampal cortex (PHC) was more active during correct rejections of spatial than object lures. Regardless of lure type, the dentate gyrus (DG) and subregion CA3 demonstrated greater activity during lure discrimination. Overall, this suggests two parallel but interacting networks in the hippocampus and related regions for managing object identity and spatial interference (Reagh & Yassa, 2014).

Two-alternative forced-choice test (2AFC)

A paradigm referred to as the two-alternative forced-choice (2AFC) test has been primarily used to study the capacity of visual episodic long-term memory. In a typical test, observers see two objects on the screen during test, one that they have seen before and another object they have not encountered previously. The other may be completely novel (old–new comparison) or a similarly related lure (old–similar comparison; Brady, Konkle, Alvarez, & Oliva, 2008; Brady, Konkle, Oliva, & Alvarez, 2009; Konkle, Brady, Alvarez, & Oliva, 2010b). The logic of this test is that it can tap into even “weak” memories that other methods may fail to reveal. It makes sense that a 2AFC judgment is easier than other kinds of responses because it is a binary response.

Typically, 2AFC is conceptualized using a signal-detection-theory framework (Green & Swets, 1966; Loiotile & Courtney, 2015). The logic is that observers have a memory representation that creates a normally distributed signal in a “memory strength” space. At test, when observers are shown an old item, that item elicits a normally distributed memory-match signal, which due to noise and other factors will vary in strength. If an observer were shown only the old object, then depending on the decision criterion, the old object might be incorrectly identified as new. However, giving observers a foil in the 2AFC task, whether that foil is a completely new or similar-looking object, provides a second normally distributed signal to assist in the comparison process. This second signal should be centered around a lower memory-match value (i.e., zero) than that of the old image. As a result, observers can simply pick the maximum of the two signals to correctly identify the old image (Macmillan & Creelman, 2004; see Fig. 5). This framework demonstrates from a modeling perspective why 2AFC tasks should be easier and can yield better performance for items that may otherwise fail to be remembered or classified correctly in other types of memory tasks: it is always easier to pick the maximum of two things (see Footnote 2). Moreover, this performance is higher by a fixed amount, suggesting 2AFC taps into the same underlying memory signal as old/new testing procedures (Macmillan & Creelman, 2004).

Visualization of 2AFC logic. At test, observers are shown both an old and new image, which elicit their own normally distributed memory-match signals. While the old item signal may vary in the strength of its memory signal, it is more likely to be higher than the signal of the new item. By simply picking the item with the maximum signal, observers are likely to choose the old item. Thus, with the same underlying memory strength, 2AFC test procedures produce better memory performance compared to testing procedures where observers are only shown the old image
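
The 2AFC logic can be illustrated with a small simulation. The sketch below assumes the standard equal-variance signal-detection setup (old items drawn from a unit-variance normal centered on d', new items from one centered on zero) and an unbiased old/new criterion midway between the two means; the function name `simulate` and all parameter values are hypothetical. Under these assumptions, old/new accuracy should approach Φ(d'/2), whereas 2AFC accuracy should approach Φ(d'/√2), the "fixed amount" advantage of picking the maximum.

```python
import random
from statistics import NormalDist


def simulate(d_prime=1.0, n_trials=20000, seed=0):
    """Return (old/new accuracy, 2AFC accuracy) for one memory strength."""
    rng = random.Random(seed)
    criterion = d_prime / 2  # unbiased criterion (assumption of this sketch)
    old_new_correct = 0
    two_afc_correct = 0
    for _ in range(n_trials):
        old_signal = rng.gauss(d_prime, 1.0)  # memory match for the old item
        new_signal = rng.gauss(0.0, 1.0)      # memory match for the foil
        # Old/new: each item is judged alone against the criterion.
        old_new_correct += (old_signal > criterion) + (new_signal <= criterion)
        # 2AFC: pick whichever item has the larger signal.
        two_afc_correct += old_signal > new_signal
    return old_new_correct / (2 * n_trials), two_afc_correct / n_trials


old_new, two_afc = simulate()
phi = NormalDist().cdf
print(f"old/new: {old_new:.3f} (theory {phi(0.5):.3f})")
print(f"2AFC:    {two_afc:.3f} (theory {phi(1 / 2 ** 0.5):.3f})")
```

The same underlying `d_prime` drives both accuracies, which is why the two procedures are thought to tap the same memory signal.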

In addition to traditional 2AFC tasks, which involve an old item paired with a new or similar-looking item, researchers have also expanded the number of potential options, creating 3AFC and 4AFC-type tasks. Generally, these tasks involve the addition of multiple similar-looking lures at test, in order to further evaluate the ability to discriminate between different kinds of lures. A potential limitation of 3AFC or 4AFC tasks is that adding lures may create interference and increase task difficulty, for example by increasing decision noise (Holdstock et al., 2002). Additionally, varying the kinds of lures available at test (such as providing two similar lures, or a similar lure and a completely new image) creates conditions where the information available to an observer is not equivalent (Guerin, Robbins, Gilmore, & Schacter, 2012). This means performance across different testing conditions cannot be directly compared.

The capacity of VLTM

Brady et al. (2008) sought to investigate the capacity of VLTM using a 2AFC method. Participants were presented 2,500 images of real-world objects for 3 seconds each and told to remember all the details of each image. After completing this study portion, participants were then given a 2AFC task where they saw two images on the screen. One was a previously encountered image from the study session, whereas the other was either a novel image, an exemplar of an object they had previously encountered, or an image of an object they had previously encountered in a new state (e.g., changed orientation). Participants were instructed to indicate which of the two images they had previously encountered. Overall, performance was quite high, with significantly better accuracy for novel comparisons (92% correct), a replication of previous work by Standing (1973) showing incredibly high performance for novel test comparisons even when encoding 10,000 items into VLTM (see also Shepard, 1967). However, quite surprisingly they observed extremely accurate performance for state and exemplar comparisons (87%–88% correct; Brady et al., 2008). These results demonstrate that even when given only brief exposure to each image, humans are able to remember thousands of objects (seemingly with no limit) with extremely high accuracy. Furthermore, they suggest that humans’ VLTM representations contain visual information necessary to assist in making difficult state and exemplar comparisons, beyond simply categorical or semantic knowledge of previous encounters.

Similar results have been found not just for objects but for the visual memory of scenes as well. Konkle et al. (2010a) demonstrated that after studying thousands of images of scenes, participants were able to recognize 96% of the previously seen images in a novel comparison test. Again, they also observed that performance for test comparisons with a similar foil was quite high, with participants 84% correct even when they had studied four exemplars from the same scene category (a potential source of interference) during encoding. This suggests that the incredibly large capacity and high-fidelity representations observed in visual long-term memory are not isolated to a specific stimulus class (i.e., objects or scenes), but rather appear to be general properties of the system.

Given the logic of 2AFC discussed previously, one could assume that if participants had been given a single image of an object at test and told to discriminate whether it was old or new, performance would be worse (despite the same underlying memory strength). Thus, the results discussed above are best described as a potential upper bound of VLTM performance. Under different testing procedures, performance will likely differ. However, these results still demonstrate that under potentially ideal testing conditions, visual long-term memory not only has a massive capacity but also contains visually rich and detailed representations.

Delayed estimation (continuous report)

In order to estimate the fidelity of VLTM (i.e., the amount of information in memory), experiments have utilized a delayed estimation paradigm. At encoding, participants observe objects embedded in unique colors. Then, at a subsequent test, observers see grayscale versions of the objects they observed previously and use a color wheel to indicate each object's original color. By taking the error in degrees between the response and the true value, researchers can create a distribution of long-term color memory responses and measure the standard deviation of the distribution to understand the fidelity of that representation (Brady, Konkle, Gill, Oliva, & Alvarez, 2013).
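
The error computation described above can be sketched as follows. Because color-wheel positions are circular, the difference between response and target must be wrapped so that, for example, a response of 350° to a target of 10° counts as a 20° error rather than 340°. The function names and example values are hypothetical, and taking the plain standard deviation of the wrapped errors is a simplifying assumption of this sketch; published analyses may instead fit a model to the error distribution.

```python
from statistics import stdev


def signed_error(response_deg, target_deg):
    """Signed color-wheel error in degrees, wrapped to [-180, 180)."""
    return (response_deg - target_deg + 180.0) % 360.0 - 180.0


def error_sd(responses_deg, targets_deg):
    """Sample SD of wrapped errors; a larger SD means lower fidelity."""
    errors = [signed_error(r, t) for r, t in zip(responses_deg, targets_deg)]
    return stdev(errors)


# Hypothetical responses and true colors (degrees on the color wheel).
responses = [12.0, 355.0, 181.0, 90.0, 300.0]
targets = [0.0, 10.0, 170.0, 100.0, 280.0]
print([signed_error(r, t) for r, t in zip(responses, targets)])
print(round(error_sd(responses, targets), 1))
```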

The precision of information in VLTM representations

Brady et al. (2013) used this method to understand the precision of color memory representations across VWM and VLTM. In the study, researchers gave participants two separate tasks. In the VWM condition, participants saw three real-world objects simultaneously for 3 seconds, arranged in a circle around fixation. Participants were instructed to remember the color of all the objects. After a 1-second delay, one of the objects reappeared in grayscale, and participants could alter the color of the image using their mouse and were told to click the mouse when it matched the original color. In the VLTM condition, participants first underwent a study block viewing images sequentially for 1 second each, with a 1-second blank interval between images. Similar to the VWM condition, participants were instructed to remember the color of the object.

After the study block, the color of the items was tested one at a time in a randomly chosen sequence, and participants reported the image color using the same response mechanism used in the VWM condition. The precision of participants’ memory representations was determined by calculating the distribution of the degree error of each response in color space (with larger mistakes represented by greater degree error). In the VWM condition, at Set Size 3 (and above), participants’ precision was 17.8 degrees, which did not significantly differ from the precision observed in the VLTM condition, 19.3 degrees (Brady et al., 2013). Therefore, it appears the precision of color representations across VWM and VLTM has equivalent limits, suggesting they may share or be constrained by similar processes.

Interactions between VLTM and perception

Delayed estimation has also been used to investigate the potential role long-term memories may have when biasing new perceptual information. In a series of experiments by Fan, Hutchinson, and Turk-Browne (2016), participants completed a set of initial exposure trials, where they encountered unique shapes embedded in specific colors. Each shape was shown for half a second, and after a brief delay (1.5 seconds) an achromatic version of the shape reappeared, and participants reported the color of the image using their mouse. During the initial exposure session, participants encountered the same shape three times on separate trials (randomly assorted), always in the same color. As a result, each unique shape was associated with a specific color in long-term memory.

After completing the initial exposure trials, participants were then given final test trials. These final test trials were similar, except now each shape was shown in an unrelated color, and participants had to judge the appearance of the new colors. Researchers found that participants’ responses for the final test trials were best characterized as a mixture of the original and current-color representations, suggesting participants had anchored their responses to their representations in long-term memory. Moreover, this anchoring effect increased when perceptual input became more degraded (for example, by shortening the stimulus presentation during the final test trials). These results demonstrate that while perceptual judgments do reflect the current state of the environment, they can be affected by previous experience and long-term memory (Fan et al., 2016).

Core concepts of VWM

As previously discussed, VWM is typically thought of as the interface of multiple processes including perception, short-term memory, and attention (Baddeley & Hitch, 1974; Cowan, 2008). Due to this conception, researchers have generally described the function of VWM as supporting complex cognitive behaviors that require temporarily storing and manipulating information in order to produce actions (Baddeley, 2003; Ma et al., 2014). In particular, a large body of research over the past decade has focused on the capacity limitations of VWM. As a result, many of the models proposed to explain VWM have focused on this limitation.

Fixed slot model

When trying to understand the inherent capacity limitations of VWM, a particularly influential model has been the fixed slot model. It suggests that VWM can only store a discrete number of integrated object representations (see Fig. 6). This model was proposed in a highly influential study conducted by Luck and Vogel (1997), who used a change-detection task to quantify VWM capacity. In the task, participants were instructed to remember an array consisting of items defined by a single feature or a conjunction of features (color, orientation, etc.). After a brief delay (900 ms), a test array was presented that was either identical to the previous array or differed in terms of a single feature. Participants were instructed to indicate whether a change had occurred. Accuracy was assessed as a function of the number of items in the stimulus array in order to determine how many items could be accurately maintained in VWM.

Illustration of VWM models. According to the fixed slot model, VWM can only store a discrete number of integrated objects. The continuous resource model proposes that VWM has a finite resource that becomes more thinly distributed as the number of items in a display increases. And the flexible slot model is an integration of the latter two, stating VWM has a discrete number of slots, but that a finite resource can be flexibly allocated to each slot

In a series of experiments, Luck and Vogel (1997) gave participants change-detection tasks that varied the number of colored squares presented in an array (one to 12). They observed that performance was at ceiling for arrays of one to three items, and then declined systematically as set size increased from four to 12 items. Overall, the average K value (an estimate of VWM capacity) among participants was around three or four items. This finding led to the foundation of the slot model, which posits that individuals can only store between three and four objects in VWM.
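
For readers unfamiliar with K, the sketch below shows two estimators that are commonly used to convert change-detection performance into a capacity estimate: one usually applied when the whole array reappears at test (often called Pashler's K) and one usually applied when a single item is probed (often called Cowan's K). The text above does not state which estimator the original study used, so this is an illustration of the general idea only; the function names and the hit and false-alarm rates are hypothetical.

```python
def pashler_k(set_size, hit_rate, false_alarm_rate):
    """Capacity estimate typically used for whole-display change detection."""
    return set_size * (hit_rate - false_alarm_rate) / (1.0 - false_alarm_rate)


def cowan_k(set_size, hit_rate, false_alarm_rate):
    """Capacity estimate typically used for single-probe change detection."""
    return set_size * (hit_rate - false_alarm_rate)


# Hypothetical rates: accuracy falls as set size grows, yet K levels off.
for n, hits, fas in [(2, 0.98, 0.02), (4, 0.85, 0.10), (8, 0.55, 0.15)]:
    print(n, round(pashler_k(n, hits, fas), 2), round(cowan_k(n, hits, fas), 2))
```

Both estimators correct the hit rate for guessing, so K can plateau even as raw accuracy keeps declining with set size.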

In addition to experiments consisting of arrays of single features, Luck and Vogel (1997) also presented participants with arrays consisting of a conjunction of features (i.e., lines differing in orientation and color). Participants completed a change-detection task, but the researchers varied whether participants had to remember a single feature or a conjunction of features. For example, participants would see an array consisting of lines of different orientations and colors. In the color condition, only a color change could occur, and participants were instructed to look for a color change. In the orientation condition, only an orientation change could occur, and participants were instructed to look for an orientation change. And in the conjunction condition, either a color or orientation change could occur, and participants were instructed to remember both features of each item. Thus, in the conjunction condition, participants had to remember eight features but only four integrated objects. If VWM storage capacity is limited by individual features (e.g., color, orientation), then performance should decline at lower set sizes in the conjunction compared to the single feature conditions. However, if VWM storage capacity is limited by integrated objects (e.g., one red, horizontal line), then the same pattern of results should be observed throughout all three conditions.

Consistent with the latter case, they observed that VWM capacities were the same for single feature and conjunction items. Altogether, this provided the basis for the fixed slot model that VWM capacity was constrained by slots of ~3–4 integrated objects. While further research has expanded upon these initial findings, it is also important to note that several experiments have failed to replicate the critical conjunction experiments. These follow-up studies have observed that VWM capacity is, in fact, reduced as feature load increases, irrespective of the number of objects (Fougnie, Asplund, & Marois, 2010; Olson & Jiang, 2002; Wheeler & Treisman, 2002). To explain performance for conjunction conditions, researchers must consider both feature load (i.e., number of features to be remembered) and object load (i.e., number of objects in the display; Hardman & Cowan, 2015). Taken together, the current consensus in the field is that there is a cost to maintaining integrated features in VWM.

A key component of this model is that these VWM slots are considered all or nothing—an observer either remembers every object with the same fidelity (i.e., amount of information) within the capacity limit or fails to remember the object completely. This all-or-nothing component is potentially problematic, as it suggests that an observer has the same amount of information per item whether they viewed single or multiple items. What if an observer sees two encoding displays in a typical change-detection task: one with a single image of an apple and another with four images of very similar looking apples? In each test array, there is either no change, or one of the objects is replaced with another very similar looking image of an apple. According to the fixed slot model, the precision of information available to the observer is the same in both conditions. It does not account for the potential interference four very similar objects may have in terms of their representations in VWM, or how they may affect the decision-making process when an observer decides whether a change has occurred.

Continuous resource model

Another model used to explain apparent limitations in VWM is referred to as the continuous resource model. Unlike the fixed slot model, which defines capacity as being constrained by all-or-nothing slots of integrated objects, the continuous resource model conceptualizes VWM capacity as information based and limited by a finite resource (see Fig. 6). Furthermore, this finite resource can vary unevenly across different items in a display. This unequal division of resources across representations can differ due to a variety of factors, such as top-down goals (e.g., attention) or the total information load of the display (i.e., set size; Bays & Husain, 2008; Wilken & Ma, 2004).

Support for the continuous resource model came from Wilken and Ma (2004), who developed the continuous report method as a way of measuring the fidelity, or amount of information, contained in VWM representations. In the continuous report paradigm, participants are instructed to remember an array consisting of items of a single feature (color, orientation, etc.). After a brief delay (1.5 seconds), a square cue appears centered at the location of one of the previously presented items. At the same time, a test probe is displayed in the center of the screen, which allows for continuous report of the probed feature. For example, in the color condition, a color wheel containing all possible color values appears in the center of the screen, and participants indicate the color of the probed item by clicking a color on the wheel, using a mouse. Responses are then reported as the degree error from the true color value, and a distribution can be made based on a participant’s responses. The standard deviation (SD) of this distribution can then be used to estimate a participant’s precision of color information in VWM.

In a series of experiments, Wilken and Ma (2004) varied the set size of displays as well as the type of feature being probed in memory. Regardless of the type of feature probed, they observed that as set size increased, the precision of VWM representations decreased. However, even at large set sizes, the distribution of responses was still centered around the true value of an item; the SD was large (i.e., precision was low), but performance was still well above chance. This led the researchers to conclude that individuals could store a continuous amount of information in VWM, but the precision with which an individual item was represented varied as a function of the total information load of the display (i.e., set size). On this account, when set size exceeds four items, observers can still store more than four items in memory, but fewer resources are available to allocate to each item. Given the constraints of a typical change-detection task, an item may still be represented in VWM, but without the necessary amount of information to make a successful comparison. This suggests that the ~3–4 object limit proposed by the fixed slot model may simply be a behavioral artifact of the tasks being used to assess such limits.
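The qualitative prediction described above, that report SD grows as a fixed resource is divided among more items, can be illustrated with a toy calculation. The sketch below assumes an even split of the resource and assumes that precision (the inverse of variance) is proportional to each item's share. Both are simplifying assumptions made here for illustration and are not taken from Wilken and Ma (2004); the function name and the single-item SD of 10° are hypothetical.

```python
import math

def resource_sd(set_size, sd_one_item=10.0):
    """Predicted report SD (degrees) when one resource is split evenly.

    Assumption: precision (1 / variance) is proportional to the share of
    the resource each item receives, so SD grows with sqrt(set_size).
    """
    if set_size < 1:
        raise ValueError("set_size must be at least 1")
    return sd_one_item * math.sqrt(set_size)

for n in (1, 2, 4, 8):
    print(n, round(resource_sd(n), 1))
```

Under these assumptions no set size produces a sudden failure of memory: precision degrades smoothly, which is the pattern the continuous resource model uses to reinterpret the apparent ~3–4 object limit.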

A potential limitation of the research used to support the continuous resource model is that variable precision has not been shown for holistic representations of objects. Studies in support of this model typically probe memory along a single feature dimension (e.g., color), even if observers are viewing images of real-world objects. It remains possible that representations for objects in VWM rely on different kinds of information, some of which may be variable and some of which may not. For example, when an observer sees a single image of a real-world object, their representation of that object may contain categorical knowledge (e.g., teddy bear) in addition to other knowledge such as color (e.g., brown). The color information available to the observer may vary as a function of set size, but it is likely that categorical knowledge does not: observers either know the categorical identity of an object or they do not.

Work by Schurgin and Flombaum (2015, 2018) provides evidence that this may be the case. In their task, participants saw a display of two images of real-world objects, which was then briefly masked. Participants then made a two-alternative forced-choice (2AFC) judgment between a previously seen object and a completely new object, indicating which of the two was the old object. Critically, the researchers added image noise to stimuli at test by randomly scrambling up to 75% of the pixels in each image. They observed that VWM performance was unaffected by noise at test, with 0% noise and 75% noise demonstrating the same level of performance. The continuous resource model predicts that noise at test should make comparisons in memory harder, as observers would be comparing a noisy internal representation to a noisy external representation (i.e., test stimuli). Thus, when noise is added at test, performance should decrease. In contrast, the fixed slot model would predict no performance decrease. Observers would have no noise in their internal representation, which would allow them to manage noise at test when making a comparison. Altogether, this work suggests that while resolution for memory could vary along a continuous resource for a single feature, such as color, the same may not be true along all dimensions, such as holistic object representations.

Flexible slot model

Contrasting evidence exists that may support either the fixed slot or continuous resource models. The flexible slot model provides a middle ground between the two, proposing that VWM is constrained to a maximum of ~3–4 representations, but that this capacity may also be limited by the amount of information load in the display. In short, VWM is constrained by slots, but there is flexibility within the system for distributing limited resources across these slots.

One study interpreted by some to support the flexible slot model was conducted by Alvarez and Cavanagh (2004). They utilized a typical change-detection task but varied the information load of displays by changing the type of stimuli presented. On each trial, one to 15 objects were presented for 500 ms, followed by a brief delay (900 ms), and then a test array. On half the trials, one of the objects changed identity, and on the other half, the displays were identical. Participants were instructed to indicate whether one of the objects had changed. Critically, trials could contain stimuli pertaining to a single stimulus class that each differed in their visual complexity: line drawings, shaded cubes, random polygons, Chinese characters, and colored squares.

If VWM is limited by a fixed number of representations (i.e., slots), then performance should be equivalent across all stimulus categories, but if VWM capacity is limited by information load, then these estimates should vary across stimulus categories. After converting responses to K estimates of VWM capacity, Alvarez and Cavanagh (2004) observed that capacity estimates varied across stimulus classes, ranging from 1.6 for shaded cubes to 4.4 for colored squares. This provided support that VWM is limited in its number of representations (~4 objects), but also limited by the amount of information (i.e., stimulus complexity) of what is being remembered. However, while some may interpret these results in support of the flexible slot model, a limited number of representations in VWM is not necessarily the same as objects being stored in a slot-like way.

There is disagreement as to whether these differences reflect storage limitations, therefore supporting a flexible slot model, or rather reflect comparison errors made during the decision-making process. It could be that items with higher visual complexity also have higher similarity to one another, and this will lead to greater errors at test even though overall memory capacity for items is the same. Awh et al. (2007) investigated this possibility using Alvarez and Cavanagh’s (2004) method and stimuli, but with one critical modification: categorical change. When a change occurred at test, it could be either a within-category change (as in Alvarez & Cavanagh; i.e., a Chinese character replaced with a different Chinese character) or a between-category change (i.e., a Chinese character replaced with a line drawing). They observed that for within-category changes, performance varied across stimulus categories, consistent with Alvarez and Cavanagh. Conversely, for between-category changes, they observed no difference in performance relative to stimulus category. This was taken to suggest that variable performance across different kinds of stimuli may be due to differences in similarity, and not information load (consistent with the fixed slot model). However, recent research has found that detection of these between-category changes is primarily driven by the use of global ensemble or texture representations. When objects are clustered in a display by type, individuals can discriminate based on a change in clustering (i.e., using an ensemble or texture representation) rather than one based on individual item memory (Brady & Alvarez, 2015). These results suggest the need for more flexible models of VWM that integrate a role for spatial ensemble representations.

Considerations affecting VWM beyond capacity

An important consideration to our theoretical understanding of working memory is that there are large individual differences in performance, specifically in relation to capacity. Previous research has found capacity estimates of VWM vary substantially across individuals, ranging from 1.5 to 5.0 objects (Vogel et al., 2001; Brady et al., 2011), and that these individual differences in capacity correlate strongly with broad measures of cognitive function, such as academic performance (Alloway & Alloway, 2010) and fluid intelligence (Fukuda, Vogel, Mayr, & Awh, 2010). These individual differences in capacity and relationships with other cognitive functions are likely because VWM is the combination of multiple processes, including short-term memory and executive control mechanisms, many of which vary in performance across individuals (Baddeley, 2003; Conway, Kane, & Engle, 2003).

In the context of the models discussed above, each could be easily amended to account for individual differences. Whether VWM capacity is limited by a fixed or continuous resource, either could conceivably vary across individuals. However, understanding and explaining these individual differences remains key in theoretical discussions of working memory, as they could be the result of potentially different sources. For example, it could be that individual differences in capacity are the result of individual differences across a resource (whether fixed or continuous). Alternatively, these capacity limitations could arise from other components contributing to working memory performance, such as executive function or attention (Baddeley, 2003; Conway et al., 2003). Indeed, research has found potential limitations or biases in VWM can arise from the combination of different sources of information, such as the memory of an item in combination with categorical information (Huttenlocher, Hedges, & Duncan, 1991), nonuniformities in attention (Schurgin & Flombaum, 2014), or ensemble information (Brady & Alvarez, 2011). As a result, understanding the source of individual differences in working memory capacity remains critical to our overall understanding of how capacity limitations arise.

In an effort to explain the capacity limitations of VWM, all the models discussed above also make an implicit assumption that the fidelity of VWM representations is directly affected by the number of objects to be remembered. However, recent research has demonstrated that even with a fixed number of items in a display there is variability across trials in the precision of VWM (Bae, Olkkonen, Allred, Wilson, & Flombaum, 2014; Fougnie, Suchow, & Alvarez, 2012; Van den Berg, Shin, Chou, George, & Ma, 2012). This suggests there are not only differences in visual working memory limits across individuals but that the quality of working memory representations varies within an individual as well. Moreover, this variability cannot be explained by fluctuations in attention or capacity resources (i.e., slots, continuous resource, etc.; Fougnie et al., 2012).

To better account for these results, researchers have proposed different kinds of variable precision models. These models account for random fluctuations in encoding precision that tend to occur from trial to trial and have been shown to fit data better than traditional fixed slot or continuous resource models (Fougnie et al., 2012; Van den Berg et al., 2012). However, it is important to note that variable precision does not necessarily favor either a slot or continuous resource account of working memory capacity (Van den Berg & Ma, 2017). As a result, the role of variability affecting VWM fidelity should be treated as separate from the source of capacity limitations described by the models above.
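The core idea of a variable precision account can be sketched with a short simulation in which encoding precision is redrawn on every trial. The specific choices below are assumptions made for illustration only and are not the fitted models from the cited papers: a gamma distribution over precision, Gaussian rather than circular report noise, and all function names and parameter values.

```python
import math
import random

def simulate_errors(n_trials, mean_precision, shape=None, seed=0):
    """Simulate report errors (degrees) with fixed or trial-varying precision.

    shape=None gives fixed precision on every trial. Otherwise precision is
    drawn per trial from a gamma distribution with the given shape and a
    scale chosen so that its mean equals mean_precision (an assumption).
    """
    rng = random.Random(seed)
    errors = []
    for _ in range(n_trials):
        if shape is None:
            precision = mean_precision
        else:
            precision = rng.gammavariate(shape, mean_precision / shape)
        errors.append(rng.gauss(0.0, 1.0 / math.sqrt(precision)))
    return errors

def excess_kurtosis(xs):
    """Excess kurtosis; values above 0 indicate heavier tails than a Gaussian."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m4 / (m2 * m2) - 3.0

fixed = simulate_errors(20000, mean_precision=0.01)
variable = simulate_errors(20000, mean_precision=0.01, shape=6.0)
print(excess_kurtosis(fixed))
print(excess_kurtosis(variable))
```

Because the variable-precision errors are a mixture of narrow and wide distributions, they show a sharper peak and heavier tails than the fixed-precision errors, even though set size never changes. Note that nothing in the sketch specifies whether precision is capped by slots or drawn from a shared resource, in line with the point that variable precision is separate from the source of capacity limits.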

Common methods in VWM

Change-detection task

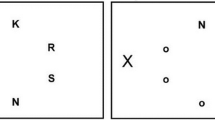

In order to understand the capacity of VWM, many experiments have utilized a change-detection task. In this paradigm, observers first see an array of items (whether a single feature, conjunction of features, or images of real-world objects) and are told to remember what they saw. The array then disappears and after a brief delay reappears with either all the items exactly as before or with a single item changed. Observers are asked to identify whether a change occurred or not (see Fig. 7). The general goal of this paradigm is that by varying the number of items in a display (two, four, eight, etc.), researchers can investigate what kinds of constraints may be limiting VWM capacity (Awh et al., 2007; Luck & Vogel, 1997; Vogel et al., 2001, 2006; Xu, 2002).

Illustration of typical VWM methods. In a change-detection task, participants are briefly shown an initial array of items (colors, objects, etc.). After a delay, they are then shown a test display. Half of the time the display is exactly the same, whereas in the other half one of the items in the display has changed. Participants are told to indicate whether a change occurred. In the single probe report task, at test participants are only shown one item instead of the entire display (with or without a change). In the continuous report task, at test one of the previous items is probed and participants indicate their memory for a single feature in a continuous space (i.e., report the previous color of the item using a color wheel). (Color figure online)

A variation of the change-detection task uses a single probe report, showing only one item at test instead of the entire display (with or without a change). A potential advantage of single probe change-detection tasks is that it may be harder for observers to use relational or summary statistical information (e.g., how “blueish” the overall display was and whether the test display differs in its overall “blueishness”) to inform their judgments. A potential disadvantage of this procedure is that if visual memory for certain stimuli (objects, scenes, etc.) relies on relational information (i.e., position, layout, etc.), then probing a single item may remove important information and erroneously reduce performance. It may also be the case that when participants are given the entire display at test, this encourages them to remember the entire display. It is worth noting that VWM capacity estimates have been found to be comparable whether they were based on a whole-display or a single-probe procedure (Luck & Vogel, 1997; Jiang, Olson, & Chun, 2000).
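Change-detection accuracy is commonly converted to a capacity estimate K using one of two standard formulas: Cowan's K for single-probe displays and Pashler's K for whole-display tests. The sketch below implements both. The function names and example rates are hypothetical, and the text above does not specify which formula any particular cited study used, so this should be read as an illustration of the general approach.

```python
def cowan_k(set_size, hit_rate, false_alarm_rate):
    """Cowan's K, typically applied to single-probe change detection."""
    return set_size * (hit_rate - false_alarm_rate)

def pashler_k(set_size, hit_rate, false_alarm_rate):
    """Pashler's K, typically applied to whole-display change detection."""
    if false_alarm_rate >= 1.0:
        raise ValueError("false_alarm_rate must be below 1")
    return set_size * (hit_rate - false_alarm_rate) / (1.0 - false_alarm_rate)

# Hypothetical observer: set size 8, 60% hits, 20% false alarms.
print(cowan_k(8, 0.60, 0.20))
print(pashler_k(8, 0.60, 0.20))
```

Both formulas correct the hit rate for guessing and scale it by set size. They differ only in how the false-alarm rate enters the guessing correction, which reflects the different information available at test in the two procedures.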

Time course of consolidation in VWM