Abstract

Despite recent progress in understanding the factors that determine where an observer will eventually look in a scene, we know very little about what determines how an observer decides where he or she will look next. We investigated the potential roles of object-level representations in the direction of subsequent shifts of gaze. In five experiments, we considered whether a fixated object’s spatial orientation, implied motion, and perceived animacy affect gaze direction when shifting overt attention to another object. Eye movements directed away from a fixated object were biased in the direction it faced. This effect was not modified by implying a particular direction of inanimate or animate motion. Together, these results suggest that decisions regarding where one should look next are in part determined by the spatial, but not by the implied temporal, properties of the object at the current locus of fixation.

We move our eyes three to four times each second as we investigate our visual surroundings. Each one of these eye movements requires a deceptively simple decision: Where should we look next? Despite recent progress in understanding the factors that determine where an observer will eventually look in a scene, we know very little about what determines how an observer decides where he or she will specifically look next. Some evidence suggests that visual salience (Itti & Koch, 2000), oculomotor biases (Tatler & Vincent, 2009), the locations of prior fixations (Dodd, Van der Stigchel, & Hollingworth, 2009; Klein & MacInnes, 1999), semantic content (Underwood & Foulsham, 2006), and momentary task relevance (Hayhoe, Shrivastava, Mruczek, & Pelz, 2003) likely play roles, but identifying the factors that influence saccade sequencing remains an underdeveloped area of research that requires much greater scrutiny (Henderson, 2011). In this article, we consider the degree to which one’s representation of a fixated object’s spatio-temporal properties influences the direction in which a subsequent eye movement will travel.

When viewing a scene, an observer forms several representations regarding objects’ spatial position and temporal dynamics. For example, we use spatial reference frames to define objects’ fronts, backs, left sides, right sides, and so forth. By doing so, we are able to describe a chair as “facing” a certain direction, being to the left of a table, or being behind a stool. In addition to defining the current spatial position of an object in a scene, it is also important to consider the ways in which an object’s position might be altered over time. For example, some objects may be more or less likely to move. Similarly, considering the potential causes of an object’s motion allows one to predict future events. Some objects may require an external force to act upon them, whereas others may be capable of volitional self-movement. One’s assignment of reference frames (e.g., Carlson-Radvansky & Jiang, 1998; Logan, 1996; Logan & Sadler, 1996), representation of any possible motion (e.g., Gervais, Reed, Beall, & Roberts, 2010), and interpretation of animacy (e.g., Pratt, Radulescu, Guo, & Abrams, 2010) each affect covert aspects of attentional control. However, their impacts on overt attention (i.e., gaze control) have not been investigated.

Experiment 1

The purpose of Experiment 1 was to assess whether a fixated object’s spatial orientation in a display influences subsequent shifts of gaze. Our empirical approach was based on the logic of previous work conducted by Marwan Daar and Jay Pratt (2008) examining response selection processes within the context of the spatial–numerical association of response codes (SNARC) effect. The SNARC effect refers to an interaction between numerical magnitude and spatially mapped responses in number-processing tasks. In its initial demonstration, observers were shown to be faster to respond to low numbers with leftward responses (e.g., a keypress with the left hand) and faster to respond to high numbers with rightward responses (Dehaene, Bossini, & Giraux, 1993). Daar and Pratt further showed that in addition to altering the speed with which a spatially mapped response is made, numerical magnitude biases which of several spatially mapped responses an observer will volitionally select. They presented observers with low digits (1 or 2), high digits (8 or 9), or a neutral symbol (@, #, &, or *) and asked them to make one of two keypresses (left or right). Crucially, on each trial, the observers were given free choice of which key to press. When presented with neutral stimuli, observers chose the right keypress approximately 56% of the time, indicating a slight baseline bias to press the right key. Importantly, when high digits were presented, this rightward bias increased to 63%. However, when low digits were presented, the baseline rightward bias decreased (52%). Because the semantic identity of the digit (or symbol) was irrelevant to the task (which was simply to press a key of one’s own choice), Daar and Pratt concluded that an observer’s processing of numerical magnitude influences subsequent decisions regarding response selection.

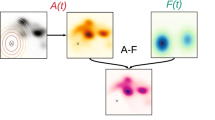

We applied Daar and Pratt’s (2008) logic to our investigation into the possible influence of an object’s spatio-temporal properties on saccadic decision making (see Fig. 1a). Participants’ eye movements were monitored as they viewed inanimate objects one by one on a computer screen. Viewing single objects allowed us to isolate and manipulate the effects of spatial orientation independently of other factors that might be present in a full scene. Each object was oriented so that it faced the left or the right side of the monitor. Two small squares flanked each object, such that one was in front of the object and the other was behind it. When presented with a go signal, participants moved their eyes from the object to the square of their choice. This eye movement was analogous to the left/right buttonpress made by Daar and Pratt’s (2008) participants. If interpretations of spatial orientation are used to make gaze control decisions, then gaze should be biased to shift in the same direction the object is facing, given the behavioral and functional importance of the area of space in front of an object.

Fig. 1. Panel A: An example stimulus is illustrated that depicts one of the neutral nonobjects used across all five experiments. Panel B: A schematic illustration of the procedure. The position of the observer’s gaze is depicted throughout the trial by the cartoon of an eye. The observer was free to shift gaze to either peripheral square.

Method

Participants

A group of 36 University of Notre Dame undergraduates participated in exchange for course credit or monetary compensation.

Stimuli and apparatus

Example stimuli are illustrated in Fig. 2. Each display consisted of a line drawing, presented in the center of the screen, that was flanked to its left and right by two solid squares. The drawings consisted of inanimate objects (a clothespin, kettle, trumpet, and whistle) adapted from Snodgrass and Vanderwart (1980) and symmetrical nonobjects adapted from Kroll and Potter (1984). In addition to the originals, mirror-reversed versions were created to produce left- and right-facing versions of each drawing. The drawings were adjusted to be subjectively the same size (approximately 7° horizontally and 6° vertically), and the flanking squares subtended 0.55° horizontally and vertically and were positioned 9° to the left or right of center. All items were drawn in black on a gray background.

Fig. 2. Objects used in the experiments. Only right-facing objects are depicted. Mirror-reversed versions were created to face left. Row A: Nonobjects used in all experiments. Row B: Objects used in Experiment 1. Row C: Objects used in Experiment 2. Row D: Objects used in Experiment 3. Row E: Objects used in Experiment 4. Row F: Objects used in Experiment 5.

Stimuli were presented on a 21-in. CRT display with a refresh rate of 120 Hz. Chin and forehead rests maintained a viewing distance of 81 cm. Eye movements were sampled from each participant’s right eye at a rate of 1000 Hz by an EyeLink 2K eyetracking system (SR Research, Inc.), which provided an average spatial accuracy of 0.15°.
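To give a sense of scale, the visual angles reported above can be converted to approximate on-screen sizes at the 81-cm viewing distance. The following is a minimal sketch, assuming a flat screen perpendicular to the line of sight; the function names are hypothetical and are not part of the original method.

```python
import math

VIEWING_DISTANCE_CM = 81.0  # viewing distance reported in the text


def extent_cm(angle_deg, distance_cm=VIEWING_DISTANCE_CM):
    """Physical extent of a stimulus centered on the line of sight
    that subtends angle_deg of visual angle (flat-screen assumption)."""
    return 2.0 * distance_cm * math.tan(math.radians(angle_deg) / 2.0)


def eccentricity_cm(angle_deg, distance_cm=VIEWING_DISTANCE_CM):
    """Distance from screen center of a point angle_deg away from fixation."""
    return distance_cm * math.tan(math.radians(angle_deg))


if __name__ == "__main__":
    print(f"Drawing width (7 deg): {extent_cm(7.0):.2f} cm")
    print(f"Square width (0.55 deg): {extent_cm(0.55):.2f} cm")
    print(f"Square eccentricity (9 deg): {eccentricity_cm(9.0):.2f} cm")
```

Under these assumptions, the saccade targets sat roughly 13 cm to either side of screen center.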

Procedure

The procedure is illustrated in Fig. 1b. Participants began the experiment by completing a calibration procedure that mapped the output of the eyetracker onto the display. Calibration was monitored throughout the experiment and was adjusted if necessary. Participants initiated each trial by pressing a button. A fixation cross was presented for a randomly determined interval between 500 and 1,500 ms, after which a stimulus was presented. Participants were instructed to maintain fixation on the central image. After 50, 500, or 1,000 ms had elapsed, a small white square subtending 0.2° horizontally and vertically appeared at the center of the screen for 150 ms. This onset served as a “go signal” and indicated that the observer was to shift his or her gaze from the central figure to one of the peripheral squares. The go signal did not inform the observer which square he or she was to look toward; observers were told to move their eyes to the saccade target of their choice. The variable timing of the go signal’s onset was used to prevent participants from automatizing their saccades across trials. The trial was terminated once the participant fixated one of the peripheral squares. A trial was also terminated if a participant shifted gaze away from the central figure prior to the go signal (such trials were recycled at the end of the experiment).
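The timing of a single trial can be summarized in a short sketch. The durations come from the procedure described above; the uniform sampling of the fixation interval, the equal-probability choice of SOA, and all names are assumptions made for illustration only.

```python
import random
from dataclasses import dataclass

SOAS_MS = (50, 500, 1000)   # object onset to go-signal onset (from the text)
GO_SIGNAL_MS = 150          # go-signal duration (from the text)


@dataclass
class TrialTiming:
    fixation_ms: int                  # fixation cross duration
    soa_ms: int                       # delay between object and go signal
    go_signal_ms: int = GO_SIGNAL_MS  # how long the go signal stays on


def sample_timing(rng):
    """Draw the timing for one trial.

    Assumption: the fixation interval is uniform over 500-1,500 ms and
    each SOA is equally likely; the text does not specify either.
    """
    return TrialTiming(
        fixation_ms=rng.randint(500, 1500),
        soa_ms=rng.choice(SOAS_MS),
    )


if __name__ == "__main__":
    print(sample_timing(random.Random(0)))
```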

In the experiment, we employed an entirely within-subjects design. The eight figure identities (kettle, trumpet, Nonobject 1, Nonobject 2, etc.) and two figure orientations (left- vs. right-facing) were fully crossed with a repetition factor of 12, yielding 192 experimental trials. Trials were presented in different random orders across participants.
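The trial count follows directly from the crossing: 8 identities × 2 orientations × 12 repetitions = 192. A minimal sketch of such a trial list is given below. The nonobject labels are placeholders (four nonobjects are assumed, to complete the eight identities), and the shuffling scheme is an assumption rather than the procedure actually used.

```python
import itertools
import random

OBJECTS = ["clothespin", "kettle", "trumpet", "whistle"]  # named in the text
# Assumption: four nonobjects complete the eight identities; labels are placeholders.
NONOBJECTS = [f"nonobject_{i}" for i in range(1, 5)]
ORIENTATIONS = ["left", "right"]
REPETITIONS = 12


def build_trials(rng):
    """Fully cross identity and orientation, repeat each cell, and shuffle."""
    cells = itertools.product(OBJECTS + NONOBJECTS, ORIENTATIONS)
    trials = [cell for cell in cells for _ in range(REPETITIONS)]
    rng.shuffle(trials)  # a fresh random order per participant
    return trials


if __name__ == "__main__":
    trials = build_trials(random.Random(1))
    print(len(trials))  # 8 * 2 * 12 trials
```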

Results and discussion

Because leftward and rightward saccades together accounted for 100% of the data in each cell of the experimental design, we arbitrarily focused our analyses on the frequency with which observers looked to the right after viewing each image. These frequencies were contrasted across three conditions: objects that faced to the left, neutral nonobjects, and objects that faced to the right (Fig. 3). The nonobject condition established each observer’s baseline tendency to shift gaze to the right. To avoid floor and ceiling effects, seven participants who looked to the right on less than 5% or more than 95% of these neutral trials were excluded from our analyses.
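The dependent measure and the exclusion rule can be expressed compactly. The sketch below assumes each trial is recorded as a (condition, direction) pair with the condition labels "left", "neutral", and "right"; this data layout and the function names are hypothetical.

```python
def percent_rightward(trials):
    """Return {condition: percentage of rightward saccades}.

    trials: iterable of (condition, direction) pairs, where direction
    is "left" or "right" (hypothetical data layout).
    """
    counts = {}
    for condition, direction in trials:
        right, total = counts.get(condition, (0, 0))
        counts[condition] = (right + (direction == "right"), total + 1)
    return {c: 100.0 * r / t for c, (r, t) in counts.items()}


def keep_participant(trials, low=5.0, high=95.0):
    """Apply the exclusion rule from the text: drop participants who look
    right on less than 5% or more than 95% of neutral (nonobject) trials."""
    neutral = percent_rightward(trials)["neutral"]
    return low <= neutral <= high


if __name__ == "__main__":
    # Hypothetical participant: 46 of 100 neutral trials end in a rightward saccade.
    demo = [("neutral", "right")] * 46 + [("neutral", "left")] * 54
    print(percent_rightward(demo), keep_participant(demo))
```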

Fig. 3. Results from all five experiments. Percentages of rightward saccades are illustrated as a function of object identity (objects, tilted objects, vehicles, animals looking at the participant, or animals looking in the direction of travel) and object type (leftward-facing, neutral, or rightward-facing).

A one-way repeated measures analysis of variance (ANOVA) indicated that the frequency of rightward saccades depended on the nature of the object at fixation, F(2, 56) = 20.2, p < .001, ω² = .244. Observers looked to the right on 46% of the neutral nonobject trials. This slight leftward bias increased when objects faced to the left (32% rightward saccades) and decreased when objects faced to the right (60% rightward saccades). In other words, relative to their neutral baseline tendencies, observers were biased to shift their gaze in the direction the object faced.
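For readers unfamiliar with the test, the following is a minimal sketch of a one-way repeated measures ANOVA in plain Python. It is a textbook computation under the assumption of a balanced design with no missing cells, not the authors' analysis code, and the example values are invented purely to illustrate the calculation.

```python
def rm_anova_oneway(scores):
    """One-way repeated measures ANOVA.

    scores: list of per-participant lists, one value per condition.
    Returns (F, df_condition, df_error).
    """
    n = len(scores)      # number of participants
    k = len(scores[0])   # number of conditions
    grand = sum(sum(row) for row in scores) / (n * k)
    cond_means = [sum(row[j] for row in scores) / n for j in range(k)]
    subj_means = [sum(row) / k for row in scores]

    # Partition the total variability into condition, participant, and error.
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_error = ss_total - ss_cond - ss_subj

    df_cond = k - 1
    df_error = (k - 1) * (n - 1)
    f_value = (ss_cond / df_cond) / (ss_error / df_error)
    return f_value, df_cond, df_error


if __name__ == "__main__":
    # Invented percentages of rightward saccades for three participants
    # (left-facing, neutral, right-facing); not data from the experiment.
    example = [[30, 45, 60], [40, 50, 60], [20, 40, 60]]
    f_value, df_cond, df_error = rm_anova_oneway(example)
    print(f"F({df_cond}, {df_error}) = {f_value:.2f}")
```

Removing the between-participant variability (`ss_subj`) from the error term is what distinguishes this test from a between-subjects one-way ANOVA.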

A subsequent analysis considered the strength of the gaze bias as a function of the stimulus onset asynchrony (SOA) between the onset of the object and the go signal. Although SOA was manipulated to prevent participants from automatizing their saccades across trials, longer SOAs nevertheless provided participants more time to process each object before executing an outbound saccade. This afforded us an opportunity to examine a potential link between viewing time and the degree to which gaze decisions were based on spatial orientation. In this experiment, the time spent viewing the object did not alter gaze control decisions: The main effect of SOA was not reliable, F(2, 56) = 1.65, p = .20, nor did it interact with object orientation, F(4, 112) = 1.09, p = .36. This suggests that the processing time needed for spatial orientation to inform the location of the next saccade was fully completed even in our shortest SOA condition.

To place this timing in context, we determined the average saccade latency (i.e., the elapsed time between the onset of the go signal and the start of the saccade) in the shortest SOA condition (50 ms). The average saccade latency was 527 ms, indicating a total object viewing time of 577 ms (the 50-ms SOA plus the 527-ms latency) before the saccade was executed. This is substantially longer than the saccade latencies typically observed under normal scene-viewing conditions, in which the eyes move three or four times per second (Rayner, 1998). It is perhaps not surprising, then, that SOA had no effect on the gaze biases observed in this experiment.

In summary, the results of Experiment 1 suggest that the determination of a fixated object’s front and back through the application of a spatial reference frame can influence subsequent shifts of gaze. Experiment 2 was designed to provide further evidence for this conclusion.

Experiment 2

In Experiment 1, the objects were directly aligned with the saccade target squares. In Experiment 2, we rotated the objects 45° so that they now faced the lower left and lower right corners of the monitor (Fig. 2). We did not, however, alter the locations of the saccade target squares. As a result, the alignment between the facing direction of the objects and the saccade direction choices available to the participants was reduced. If participants are biased to look in the direction an object faces, they should do so more often when the experiment permits them to do so (Exp. 1) than when it does not (Exp. 2). To take one concrete example, an object facing down-and-to-the-left may still bias leftward saccades, but it should do so at a rate smaller than that observed for objects facing directly to the left.

Method

Participants

A group of 34 University of Notre Dame undergraduates participated in exchange for course credit or monetary compensation. None had participated in Experiment 1.

Stimuli, apparatus, and procedure

The stimuli, apparatus, and procedure were the same as in Experiment 1, except that the objects used in Experiment 1 (clothespin, kettle, trumpet, and whistle) were rotated 45°, so that they either faced down-and-to-the-left or down-and-to-the-right. Although the stimuli could have also been rotated to face up-and-to-the-left and up-and-to-the-right, we elected not to include these additional directions, in order to keep the number of possible object orientations (i.e., two) the same across Experiments 1 and 2.

Results and discussion

The initial analyses paralleled those in Experiment 1 (Fig. 3). Five participants were excluded because they looked to the right on less than 5% or more than 95% of the neutral nonobject trials. As in Experiment 1, the frequency of rightward saccades depended on the nature of the fixated objects, F(2, 56) = 6.27, p = .003, ω² = .061. Observers looked to the right on 50% of the neutral nonobject trials. This bias decreased when objects faced to the left (42% rightward saccades) and increased when objects faced to the right (53% rightward saccades). As in Experiment 1, the strength of this gaze bias did not vary as a function of the SOA between the onset of the object and the go signal: The main effect of SOA was not reliable, F(2, 56) < 1, nor was the interaction with object orientation, F(4, 112) < 1.

To compare the results of Experiment 2 with those of Experiment 1, we conducted a 2 (Experiment) × 3 (Cue Direction) mixed-model ANOVA. As expected, the main effect of cue direction was reliable, F(2, 112) = 25.3, p < .001. Importantly, the magnitudes of this effect were different across the experiments, as indicated by a reliable interaction term, F(2, 112) = 4.55, p = .013. Specifically, the orientation-based biases on saccade direction were reduced when the objects were misaligned with the available saccade targets. Experiments 1 and 2, therefore, provide strong evidence that in the absence of other pictorial cues, a representation of an object’s spatial orientation can influence an individual’s gaze control decisions. In Experiment 3, we considered whether an object’s potential for motion can augment this bias.
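The degrees of freedom reported for Experiments 1 and 2 can be traced back to the sample sizes after exclusions (36 − 7 = 29 and 34 − 5 = 29). The sketch below uses the standard formulas for balanced designs; the function names are hypothetical.

```python
def rm_df(n_conditions, n_participants):
    """(df_effect, df_error) for a one-way repeated measures ANOVA."""
    df_effect = n_conditions - 1
    return df_effect, df_effect * (n_participants - 1)


def mixed_df(n_conditions, group_sizes):
    """(df_within_effect, df_interaction, df_error) for a
    groups x conditions mixed-model ANOVA (within-subjects error term)."""
    n_total = sum(group_sizes)
    n_groups = len(group_sizes)
    df_within = n_conditions - 1
    df_interaction = (n_groups - 1) * (n_conditions - 1)
    df_error = (n_total - n_groups) * (n_conditions - 1)
    return df_within, df_interaction, df_error


if __name__ == "__main__":
    print(rm_df(3, 36 - 7))                  # Experiment 1: three cue directions
    print(rm_df(3, 34 - 5))                  # Experiment 2
    print(mixed_df(3, [36 - 7, 34 - 5]))     # Experiment 1 vs. Experiment 2
```

With 29 participants per experiment, these formulas give the F(2, 56) and F(2, 112) degrees of freedom reported above.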

Experiment 3

In Experiments 1 and 2, the objects were stationary and no implication of upcoming or ongoing motion was provided. Some objects, however, are strongly associated with motion (e.g., vehicles). Implied motion affects visual cognition at multiple levels, ranging from perception (e.g., Pavan, Cuturi, Maniglia, Casco, & Campana, 2011; Winawer, Huk, & Boroditsky, 2008), to attention (Shi, Weng, He, & Jiang, 2010), to memory (e.g., Freyd & Finke, 1984). For example, still images of people engaging in activities such as throwing a ball have been shown to direct spatial attention in the direction of the implied motion (Gervais, Reed, Beall, & Roberts, 2010). If representations of possible or implied motion similarly influence gaze, then the biases associated with the direction an object is facing and a potential bias to shift gaze in the direction it is moving should be additive.

Method

Participants

A group of 35 University of Notre Dame undergraduates participated in exchange for course credit or monetary compensation. None had participated in Experiment 1 or 2.

Stimuli, apparatus, and procedure

The stimuli, apparatus, and procedure were the same as in Experiment 1, except that the immobile objects used in Experiment 1 (clothespin, kettle, trumpet, and whistle) were replaced by drawings of vehicles (airplane, bus, car, and truck). These stimuli were also adapted from Snodgrass and Vanderwart (1980), and both left- and right-facing versions were created.

Results and discussion

The initial analyses paralleled those in prior experiments (Fig. 3). Five participants were excluded because they looked to the right on less than 5% or more than 95% of the neutral nonobject trials. As in Experiment 1, the frequency of rightward saccades depended on the nature of the fixated objects, F(2, 58) = 17.7, p < .001, ω² = .159. Observers looked to the right on 52% of the neutral nonobject trials. This bias decreased when objects faced to the left (45% rightward saccades) and increased when objects faced to the right (66% rightward saccades). As in previous experiments, the strength of this gaze bias did not vary as a function of the SOA between the onset of the object and the go signal: The main effect of SOA was not reliable, F(2, 62) = 1.24, p = .30, nor was the interaction with object orientation, F(4, 124) < 1.

To consider possible additive effects of spatial orientation and implied motion, the results of Experiment 3 were compared to those of Experiment 1 with a 2 (Experiment) × 3 (Cue Direction) mixed-model ANOVA. As expected, the main effect of cue direction was reliable, F(2, 114) = 37.6, p < .001. Importantly, the magnitudes of this effect were not different across the experiments, as indicated by a nonreliable interaction term, F(2, 114) = 1.03, p = .36. That is, vehicles did not cue gaze in the direction of implied motion more frequently than did stationary objects in the direction of their orientation. A representation of motion, therefore, does not necessarily influence an individual’s gaze control decisions. In Experiment 4, we considered whether one’s representation of the potential cause(s) of an object’s motion can enhance the saccade direction bias observed in Experiment 1.

Experiment 4

In Experiment 3, the objects were associated with motion, but could not move unless acted upon by an external force. Some objects, however, are capable of volitional self-motion (e.g., animals). The visual system seems to be tuned to detect animacy in the environment (Scholl & Tremoulet, 2000), and observers freely anthropomorphize abstract shapes that move in ways reminiscent of chasing or stalking behavior (Gao & Scholl, 2011). Such “animate” motion seems to capture covert attention more efficiently than inanimate motion (Pratt et al., 2010). Thus, if representations of animacy similarly influence gaze, then the biases observed in Experiment 1 should be larger after viewing objects capable of animate motion.

Method

Participants

In all, 30 University of Notre Dame undergraduates participated in exchange for course credit or monetary compensation. None had participated in Experiments 1–3.

Stimuli, apparatus, and procedure

The stimuli, apparatus, and procedure were the same as in Experiment 3, except that the vehicles (airplane, bus, car, and truck) were replaced by drawings of animals (bear, deer, fox, and raccoon). These stimuli were again adapted from Snodgrass and Vanderwart (1980), and both left- and right-facing versions were created. In the original versions of each drawing, each animal was itself looking in the direction of its motion. To avoid confounding each animal’s direction of motion and direction of gaze (cf. Friesen & Kingstone, 1998), an artist redrew the head of each animal so that it was looking at the participant.

Results and discussion

The analyses paralleled those in previous experiments (see Fig. 3). Two participants were excluded because they looked to the right on less than 5% or more than 95% of the neutral nonobject trials. As in Experiments 1–3, the frequency of rightward saccades depended on the nature of the fixated objects, F(2, 54) = 21.8, p < .001, ω² = .197. Observers looked to the right on 56% of the neutral nonobject trials. This bias decreased when objects faced to the left (42% rightward saccades) and increased when objects faced to the right (67% rightward saccades). As in the previous experiments, the strength of this gaze bias did not vary as a function of the SOA between the onset of the object and the go signal: The main effect of SOA was not reliable, F(2, 54) < 1, nor was the interaction with object orientation, F(4, 108) < 1.

To consider possible additive effects of implied motion and animate motion, the results of Experiment 4 were compared to those of Experiment 1 with a 2 (Experiment) × 3 (Cue Direction) mixed-model ANOVA. As expected, the main effect of cue direction was reliable, F(2, 110) = 41.4, p < .001. Once again, however, the magnitudes of this effect were not different across the experiments, F(2, 110) < 1. Thus, neither vehicles (nonanimate) nor animals (animate) cued gaze in the direction of motion more frequently than did other cues. A representation of animacy, therefore, did not independently influence individuals’ gaze control decisions in this experiment.

Experiment 5

In Experiment 4, we had intentionally decoupled the animals’ implied directions of motion and gaze. Gaze cues, however, can create strong biases of both covert and overt attention (e.g., Friesen & Kingstone, 1998; Mansfield, Farroni, & Johnson, 2003; see Frischen, Bayliss, & Tipper, 2007, for a review). Thus, we repeated Experiment 4 under conditions in which the depicted animals were looking in their own direction of travel, instead of at the participant.

Method

Participants

A group of 32 University of Notre Dame undergraduates participated in exchange for course credit or monetary compensation. None had participated in Experiments 1–4.

Stimuli, apparatus, and procedure

The stimuli, apparatus, and procedure were the same as in Experiment 4, except that the original Snodgrass and Vanderwart (1980) versions of each animal drawing were used, such that each animal was itself looking in the direction of its motion. As in previous experiments, both left- and right-facing versions were created.

Results and discussion

The analyses paralleled those in previous experiments (see Fig. 3). One participant was excluded from the analysis because he or she looked to the right on less than 5% or more than 95% of the neutral nonobject trials. As in all previous experiments, the frequency of rightward saccades depended on the nature of the fixated objects, F(2, 60) = 10.1, p < .001, ω² = .123. Observers looked to the right on 55% of the neutral nonobject trials. This bias decreased when objects faced to the left (43% rightward saccades) and increased when objects faced to the right (64% rightward saccades). As in previous experiments, the strength of this gaze bias did not vary as a function of the SOA between the onset of the object and the go signal: The main effect of SOA was not reliable, F(2, 60) < 1, nor was the interaction with object orientation, F(4, 120) < 1.

To consider possible additive effects of implied motion, animacy, and gaze direction, the results of Experiment 5 were compared to those of Experiment 1 with a 2 (Experiment) × 3 (Cue Direction) mixed-model ANOVA. As expected, the main effect of cue direction was reliable, F(2, 116) = 28.7, p < .001. Importantly, the magnitudes of this effect were not different across the experiments, F(2, 116) < 1. Hence, even the combination of implied motion, animacy, and depicted intention (through gaze) did not influence gaze control decisions more than spatial orientation alone. One potential caveat to this final conclusion, however, is our use of animals instead of humans as the stimuli in Experiment 5. Our selection of animals was practical, given our use of the Snodgrass and Vanderwart (1980) stimulus set. It remains possible, and even likely, that gaze cues in illustrations of human faces could produce stronger effects within the present paradigm. Indeed, such human-based gaze cues have been strong predictors of behavior in a variety of perceptual and attentional tasks (see Frischen et al., 2007).

Conclusions

Research over the past several decades has unequivocally shown that gaze control is not the product of random fixation point selection (e.g., Buswell, 1935; Yarbus, 1967). By blending image-based measures of visual salience (e.g., Itti & Koch, 2001), computations of local image statistics (e.g., Parkhurst & Niebur, 2003), episodic memory for specific scene content (e.g., Brockmole & Henderson, 2006), memory for semantic or structural redundancies within scene categories (e.g., Torralba, Oliva, Castelhano, & Henderson, 2006), and task knowledge (e.g., Land, Mennie, & Rusted, 1999), observers are able to efficiently control gaze in the service of completing visually guided tasks. An underdeveloped area of investigation within this program of research is work specifically addressing the sequencing of saccades. In this article, we demonstrated that one’s representations of an object’s spatio-temporal properties contribute to volitional decisions regarding where one will look next.

All else being equal, after fixating an object, observers are biased to shift gaze toward the area in front of objects. We suspect that this bias is adaptive, because it focuses gaze on areas of space that are likely to be important in eventual actions involving the object. For example, functional attributes of objects are often used to determine the fronts of objects (drawers are in the front of the dresser, the seat is in the front of the chair, etc.). Perhaps surprisingly, implied or potential motion does not augment this bias. This suggests that the direction of motion does not, in isolation, provide additional information to the process of fixation point selection beyond orientation alone. For example, the orientation of a car may be sufficient to indicate regions of a scene that are likely to change, without the need to additionally compute its motion capabilities. Furthermore, the lack of an effect of implied motion on gaze control was observed regardless of whether that motion could be expected to arise from external forces or animate self-movement. Together, these results suggest that implied motion and animacy may only affect covert shifts of attention. Understanding how these biases interact with other factors already known to affect saccade sequencing and those yet to be discovered is an important avenue for future research.

References

Brockmole, J. R., & Henderson, J. M. (2006). Recognition and attention guidance during contextual cueing in real-world scenes: Evidence from eye movements. Quarterly Journal of Experimental Psychology, 59, 1177–1187. doi:10.1080/17470210600665996

Buswell, G. T. (1935). How people look at pictures: A study of the psychology and perception in art. Chicago: University of Chicago Press.

Carlson-Radvansky, L. A., & Jiang, Y. (1998). Inhibition accompanies reference-frame selection. Psychological Science, 9, 386–391. doi:10.1111/1467-9280.00072

Daar, M., & Pratt, J. (2008). Digits affect actions: The SNARC effect and response selection. Cortex, 44, 400–405. doi:10.1016/j.cortex.2007.12.003

Dehaene, S., Bossini, S., & Giraux, P. (1993). The mental representation of parity and number magnitude. Journal of Experimental Psychology: General, 122, 371–396. doi:10.1037/0096-3445.122.3.371

Dodd, M. D., Van der Stigchel, S., & Hollingworth, A. (2009). Novelty is not always the best policy: Inhibition of return and facilitation of return as a function of visual task. Psychological Science, 20, 333–339. doi:10.1111/j.1467-9280.2009.02294.x

Freyd, J. J., & Finke, R. A. (1984). Representational momentum. Journal of Experimental Psychology: Learning, Memory, and Cognition, 10, 126–132. doi:10.1037/0278-7393.10.1.126

Friesen, C. K., & Kingstone, A. (1998). The eyes have it! Reflexive orienting is triggered by nonpredictive gaze. Psychonomic Bulletin & Review, 5, 490–495. doi:10.3758/BF03208827

Frischen, A., Bayliss, A. P., & Tipper, S. P. (2007). Gaze cueing of attention: Visual attention, social cognition, and individual differences. Psychological Bulletin, 133, 694–724. doi:10.1037/0033-2909.133.4.694

Gao, T., & Scholl, B. J. (2011). Chasing vs. stalking: Interrupting the perception of animacy. Journal of Experimental Psychology: Human Perception and Performance, 37, 669–684. doi:10.1037/a0020735

Gervais, W. M., Reed, C. L., Beall, P. M., & Roberts, R. J. (2010). Implied body action directs spatial attention. Attention, Perception, & Psychophysics, 72, 1437–1443. doi:10.3758/APP.72.6.1437

Hayhoe, M. M., Shrivastava, A., Mruczek, R., & Pelz, J. B. (2003). Visual memory and motor planning in a natural task. Journal of Vision, 3(1), 49–63. doi:10.1167/3.1.6

Henderson, J. M. (2011). Eye movements and scene perception. In S. P. Liversedge, I. D. Gilchrist, & S. Everling (Eds.), Oxford handbook of eye movements (pp. 593–606). Oxford: Oxford University Press.

Itti, L., & Koch, C. (2000). A saliency-based search mechanism for overt and covert shifts of visual attention. Vision Research, 40, 1489–1506. doi:10.1016/S0042-6989(99)00163-7

Itti, L., & Koch, C. (2001). Computational modeling of visual attention. Nature Reviews Neuroscience, 2, 194–203. doi:10.1038/35058500

Klein, R. M., & MacInnes, W. J. (1999). Inhibition of return is a foraging facilitator in visual search. Psychological Science, 10, 346–352. doi:10.1111/1467-9280.00166

Kroll, J. F., & Potter, M. C. (1984). Recognizing words, pictures, and concepts: A comparison of lexical, object, and reality decisions. Journal of Verbal Learning and Verbal Behavior, 23, 39–66.

Land, M., Mennie, N., & Rusted, J. (1999). The roles of vision and eye movements in the control of activities in daily living. Perception, 28, 1311–1328. doi:10.1068/p2935

Logan, G. D. (1996). The CODE theory of visual attention: An integration of space-based and object-based attention. Psychological Review, 103, 603–649. doi:10.1037/0033-295X.103.4.603

Logan, G. D., & Sadler, D. D. (1996). A computational analysis of the apprehension of spatial relations. Cambridge: MIT Press.

Mansfield, E. M., Farroni, T., & Johnson, M. H. (2003). Does gaze perception facilitate overt orienting? Visual Cognition, 10, 7–14.

Parkhurst, D. J., & Niebur, E. (2003). Scene content selected by active vision. Spatial Vision, 16, 125–154. doi:10.1163/15685680360511645

Pavan, A., Cuturi, L. F., Maniglia, M., Casco, C., & Campana, G. (2011). Implied motion from static photographs influences the perceived position of stationary objects. Vision Research, 51, 187–194. doi:10.1016/j.visres.2010.11.004

Pratt, J., Radulescu, P. V., Guo, R. M., & Abrams, R. A. (2010). It’s alive! Animate motion captures visual attention. Psychological Science, 21, 1724–1730. doi:10.1177/0956797610387440

Rayner, K. (1998). Eye movements in reading and information processing: 20 years of research. Psychological Bulletin, 124, 372–422. doi:10.1037/0033-2909.124.3.372

Scholl, B. J., & Tremoulet, P. D. (2000). Perceptual causality and animacy. Trends in Cognitive Sciences, 4, 299–309. doi:10.1016/S1364-6613(00)01506-0

Shi, J., Weng, X., He, S., & Jiang, Y. (2010). Biological motion cues trigger reflexive attentional orienting. Cognition, 117, 348–354. doi:10.1016/j.cognition.2010.09.001

Snodgrass, J. G., & Vanderwart, M. (1980). A standardized set of 260 pictures: Norms for name agreement, image agreement, familiarity, and visual complexity. Journal of Experimental Psychology: Human Learning and Memory, 6, 174–215. doi:10.1037/0278-7393.6.2.174

Tatler, B. W., & Vincent, B. T. (2009). The prominence of behavioral biases in eye guidance. Visual Cognition, 17, 1029–1054. doi:10.1080/13506280902764539

Torralba, A., Oliva, A., Castelhano, M. S., & Henderson, J. M. (2006). Contextual guidance of eye movements and attention in real-world scenes: The role of global features in object search. Psychological Review, 113, 766–786. doi:10.1037/0033-295X.113.4.766

Underwood, G., & Foulsham, T. (2006). Visual saliency and semantic incongruency influence eye movements when inspecting pictures. Quarterly Journal of Experimental Psychology, 59, 1931–1949. doi:10.1080/17470210500416342

Winawer, J., Huk, A. C., & Boroditsky, L. (2008). A motion aftereffect from still photographs depicting motion. Psychological Science, 19, 276–283. doi:10.1111/j.1467-9280.2008.02080.x

Yarbus, A. L. (1967). Eye movements and vision. New York: Plenum Press.

Author note

Portions of this research were conducted by D.A.C. as part of a senior thesis at the University of Notre Dame. We thank Ryan O’Donnell and Madden Zappa for their help with data collection. We also thank James Turza for his help creating the stimuli used in Experiment 4. Funding was provided by Notre Dame’s Institute for Scholarship in the Liberal Arts.

Cite this article

Cronin, D.A., Brockmole, J.R. Evaluating the influence of a fixated object’s spatio-temporal properties on gaze control. Atten Percept Psychophys 78, 996–1003 (2016). https://doi.org/10.3758/s13414-016-1072-0

DOI: https://doi.org/10.3758/s13414-016-1072-0