Abstract

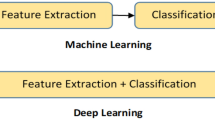

In this paper, we analyze effective methods for multi-label classification of image sets in the development of visual recommender systems. We propose a two-stage algorithm. At the first stage, a convolutional neural network is fine-tuned to extract visual features. At the second stage, the algorithm combines the feature vectors of all images in the input set into a single descriptor using modifications of a neural aggregation module based on linear squeezing of the feature space and an attention mechanism. We perform an experimental study on the Amazon Product dataset, solving the problem of classifying customer interests from photos of the products they have purchased. We show that one of the highest F1-measures is achieved by a one-level attention block with squeezing of the feature vectors.
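The second stage can be illustrated with a minimal sketch. It assumes that the attention block scores each squeezed feature vector against a query vector and forms the set descriptor as the softmax-weighted sum of the squeezed vectors, in the spirit of a neural aggregation module. The names `squeeze`, `attention_pool`, `squeeze_weights`, and `query` are hypothetical, and the toy numbers stand in for CNN features and learned parameters; this is not the authors' implementation.

```python
import math


def squeeze(vector, weights):
    """Linearly project a feature vector to a lower dimension.

    `weights` holds one row per output dimension (assumed learned elsewhere).
    """
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


def attention_pool(features, squeeze_weights, query):
    """Aggregate a set of feature vectors into one descriptor.

    features:        list of d-dimensional feature vectors, one per image
    squeeze_weights: matrix (list of rows) mapping d -> d_squeezed
    query:           d_squeezed-dimensional attention query (assumed learned)
    """
    # Step 1: linear squeezing of every feature vector.
    squeezed = [squeeze(f, squeeze_weights) for f in features]
    # Step 2: one attention score per image (dot product with the query).
    scores = [sum(q * s for q, s in zip(query, vec)) for vec in squeezed]
    # Step 3: numerically stable softmax over the image set.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    attn = [e / total for e in exps]
    # Step 4: descriptor is the attention-weighted sum of squeezed vectors.
    descriptor = [
        sum(a * vec[j] for a, vec in zip(attn, squeezed))
        for j in range(len(query))
    ]
    return descriptor, attn


# Toy image set: three 4-dimensional "features" squeezed to 2 dimensions.
features = [
    [1.0, 0.0, 2.0, 1.0],
    [0.0, 1.0, 1.0, 3.0],
    [2.0, 2.0, 0.0, 1.0],
]
squeeze_weights = [
    [0.5, 0.0, 0.5, 0.0],
    [0.0, 0.5, 0.0, 0.5],
]
query = [1.0, -1.0]

descriptor, attn = attention_pool(features, squeeze_weights, query)
```

The resulting descriptor has a fixed size regardless of how many images the set contains, so it can be passed to a multi-label classifier (for example, a layer with independent sigmoid outputs per interest category).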

Funding

The article was prepared within the framework of the Academic Fund Program at the National Research University Higher School of Economics (HSE University) in 2019–2020 (grant no. 19-04-004) and within the framework of the Russian Academic Excellence Project “5-100”.

Conflict of Interest

The authors declare that they have no conflicts of interest.

About this article

Cite this article

Savchenko, A.V., Demochkin, K.V. & Savchenko, L.V. Neural Attention Mechanism and Linear Squeezing of Descriptors in Image Classification for Visual Recommender Systems. Opt. Mem. Neural Networks 29, 297–304 (2020). https://doi.org/10.3103/S1060992X20040050

DOI: https://doi.org/10.3103/S1060992X20040050