Abstract

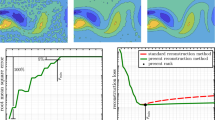

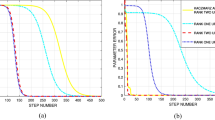

By introducing an arbitrary diagonal matrix, a generalized energy function (GEF) is proposed for finding the optimum weights of a two-layer linear neural network. From the GEF, we derive a recursive least squares (RLS) algorithm that extracts multiple principal components of the input covariance matrix in parallel without designing an asymmetrical circuit. The local stability of the GEF algorithm at the equilibrium is verified analytically. Simulation results show that the GEF algorithm for parallel extraction of multiple principal components exhibits fast convergence and improved robustness to the eigenvalue spread of the input covariance matrix compared with the well-known lateral inhibition model (APEX) and least mean square error reconstruction (LMSER) algorithms.
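To make the terms in the abstract concrete, the following is a minimal batch sketch of the quantities an adaptive extractor aims to track: the leading eigenvectors of the input covariance matrix (the principal components) and the eigenvalue spread, taken here as the ratio of the largest to the smallest eigenvalue. It is not the GEF or RLS algorithm from the paper, whose update equations are not given in the abstract. The function names, the synthetic data, and the chosen standard deviations are assumptions made purely for illustration.

```python
import numpy as np


def principal_components(samples, num_components):
    """Batch reference (not the paper's method): top eigenvectors of the sample covariance."""
    centered = samples - samples.mean(axis=0)
    covariance = centered.T @ centered / len(samples)
    # eigh returns eigenvalues of a symmetric matrix in ascending order.
    eigenvalues, eigenvectors = np.linalg.eigh(covariance)
    order = np.argsort(eigenvalues)[::-1]  # reorder to descending
    eigenvalues = eigenvalues[order]
    eigenvectors = eigenvectors[:, order]
    return eigenvalues, eigenvectors[:, :num_components]


def eigenvalue_spread(eigenvalues):
    """Ratio of the largest to the smallest covariance eigenvalue."""
    return eigenvalues.max() / eigenvalues.min()


# Hypothetical synthetic input: independent coordinates with very different scales,
# so the true covariance is diagonal with eigenvalues 100, 9, 1, 0.01.
rng = np.random.default_rng(0)
true_std = np.array([10.0, 3.0, 1.0, 0.1])
samples = rng.standard_normal((5000, 4)) * true_std

eigenvalues, components = principal_components(samples, 2)
spread = eigenvalue_spread(eigenvalues)
```

An adaptive algorithm would estimate the columns of `components` one sample at a time instead of forming the covariance matrix explicitly. A large `spread` is the regime in which the abstract reports that the compared algorithms differ in robustness.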




Cite this article

Ouyang, S., Bao, Z. & Liao, G. Fast adaptive principal component extraction based on a generalized energy function. Sci China Ser F 46, 250–261 (2003). https://doi.org/10.1360/01yf0114
