Abstract

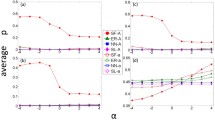

The average abundance function under aspiration rule has received much attention in recent years due to the fact that it reflects the level of cooperation of the population. Under aspiration rule, individuals will adjust their strategies based on a comparison of their payoffs from the evolutionary game to a value they aspire, called the aspiration level. This means it is important to analyze how to increase the average abundance function in order to facilitate the proliferation of cooperative behavior. However, further analytical insights have been lacking so far. In addition, the theoretical basis of the corresponding data simulation results is still lacking sufficient understanding. Therefore, this article analyses the characteristics of average abundance function \({X_A}(\omega )\) of multi-player threshold public goods evolutionary game model under aspiration rule through analytical analysis and numerical simulation. In general, the main work of this article contains three aspects. (1) The concrete expression of expected payoff function and the intuitive expression of average abundance function has been obtained. (2) The approximate expressions of average abundance function when selection intensity is large enough has been deduced. The range of summation for average abundance function will be reduced because of this approximation expression. (3) We analyze the influence of the size of group d, multiplication factor r, cost (and initial endowment) c, aspiration level \(\alpha \) on average abundance function through numerical simulation. On the one hand, the influence of parameters on average abundance function when selection intensity is small is slight. On the other hand, average abundance function will decrease with d. It will show an U-shaped trend or an upward trend when \({X_A}(\omega )\) changes with r. The \({X_A}(\omega )\) basically remains stable with the increase of c. It will show a stair-like trend when \({X_A}(\omega )\) changes with \(\alpha \). Furthermore, these conclusions have been explained on the basis of expected payoff function \(\pi \left( \centerdot \right) \) and function \(h(i,\omega )\).

Similar content being viewed by others

Data Availability Statement

This manuscript has associated data in a data repository. [Authors’ comment: The data for this article is stored in the Figshare Database: 10.6084/m9.figshare.12153519.]

References

X. Chen, F. Fu, L. Wang, Could feedback-based self-learning help solve networked Prisoner’s Dilemma? in IEEE Conference on Decision & Control (2009)

Y. Liu, X. Chen, L. Wang, B. Li, W. Zhang, H. Wang, Aspiration-based learning promotes cooperation in spatial Prisoner’s dilemma games. EPL 94(6), 60002 (2011)

W. Zeng, M. Li, N. Feng, The effects of heterogeneous interaction and risk attitude adaptation on the evolution of cooperation. J. Evol. Econ. 27(3), 1–25 (2017)

J. Zhang, Y.P. Fang, W.B. Du, X.B. Cao, Promotion of cooperation in aspiration-based spatial Prisoner’s dilemma game. Phys. A 390(12), 2258–2266 (2011)

A. Szolnoki, M. Perc, Impact of critical mass on the evolution of cooperation in spatial public goods games. Phys. Rev. E 81(5), 57101 (2010)

M. Perc, J.J. Jordan, D.G. Rand, Z. Wang, S. Boccaletti, A. Szolnoki, Statistical Physics of Human Cooperation. Phys. Rep. S144137040 (2017)

M. Perc, J. Gomez-Gardenes, A. Szolnoki, L.M. Floria, Y. Moreno, Evolutionary dynamics of group interactions on structured populations: a review. J. R. Soc. Interface 10, 20120997 (2013)

Y. Liu, X. Chen, L. Zhang, L. Wang, M. Perc, Win-stay-lose-learn promotes cooperation in the spatial Prisoner’s dilemma game. PLoS ONE 7(2), e30689 (2012)

X. Chen, F. Fu, L. Wang, Promoting cooperation by local contribution under stochastic win-stay-lose-shift mechanism. Phys. A 387(22), 5609–5615 (2008)

X. Chen, L. Wang, Promotion of cooperation induced by appropriate payoff aspirations in a small-world networked game. Phys. Rev. E 77, 01710312 (2008)

L. Zhou, B. Wu, V.V. Vasconcelos, L. Wang, Simple property of heterogeneous aspiration dynamics: beyond weak selection. Phys. Rev. E 98(6), 147 (2018)

J. Du, Redistribution promotes cooperation in spatial public goods games under aspiration dynamics. Appl. Math. Comput. 393, 121 (2019)

F.A. Matsen, M.A. Nowak, Win-stay, lose-shift in language learning from peers. Proc. Natl. Acad. Sci. USA 101(52), 18053–18057 (2004)

H. Lin, D.P. Yang, J.W. Shuai, Cooperation among mobile individuals with payoff expectations in the spatial Prisoner’s dilemma game. Chaos Soliton. Fract. 44(1), 153–159 (2011)

H.X. Yang, Z.X. Wu, B.H. Wang, Role of aspiration-induced migration in cooperation. Phys. Rev. E 81(2), 65101 (2010)

Y. Peng, X. Wang, Q. Lu, Q. Zeng, B. Wang, Effects of aspiration-induced adaptation and migration on the evolution of cooperation. Int. J. Mod. Phys. C 25(07), 69 (2014)

C. Tang, Y. Wang, L. Cao, X. Li, Y. Yang, Towards the role of social connectivity and aspiration level on evolutionary game. Eur. Phys. J. B 86(1), 1–6 (2013)

B. Wu, L. Zhou, Individualised aspiration dynamics: calculation by proofs. PLoS Comput. Biol. 14(9), 156 (2018)

T. Platkowski, Aspiration-based full cooperation in finite systems of players. Appl. Math. Comput. 251, 46–54 (2015)

X. Feng, B. Wu, L. Wang, Voluntary vaccination dilemma with evolving psychological perceptions. J. Theor. Biol. 439, 65–75 (2018)

T. Platkowski, P. Bujnowski, Cooperation in aspiration-based N-person Prisoner’s dilemmas. Phys. Rev. E 79(2), 36103 (2009)

T. Platkowski, Enhanced cooperation in Prisoner’s dilemma with aspiration. Appl. Math. Lett. 22(8), 1161–1165 (2009)

C.P. Roca, D. Helbing, Emergence of social cohesion in a model society of greedy, mobile individuals. Proc. Natl. Acad. Sci. USA 108(28), 11370–11374 (2011)

Z.X. Wu, Z. Rong, Boosting cooperation by involving extortion in spatial Prisoner’s dilemma games. Phys. Rev. E 90(6), 62102 (2014)

J.Y. Wakano, N. Yamamura, A simple learning strategy that realizes robust cooperation better than pavlov in iterated Prisoners’ dilemma. J. Ethol. 19(1), 1–8 (2001)

Z.H. Rong, Q. Zhao, Z.X. Wu, T. Zhou, K.T. Chi, Proper aspiration level promotes generous behavior in the spatial Prisoner’s dilemma game. Eur. Phys. J. B 89(7), 1–7 (2016)

H.F. Zhang, R.R. Liu, Z. Wang, H.X. Yang, B.H. Wang, Aspiration-induced reconnection in spatial public goods game. EPL 94(1), 73–79 (2011)

P. Matjaz, Z. Wang, Heterogeneous aspirations promote cooperation in the Prisoner’s dilemma game. PLoS ONE 5(12), e15117 (2010)

J. Du, B. Wu, L. Wang, Evolutionary game dynamics of multi-agent cooperation driven by self-learning, in 9th Asian Control Conference (2013)

J. Du, B. Wu, L. Wang, Aspiration dynamics and the sustainability of resources in the public goods dilemma. Phys. Lett. A 380(16), 1432–1436 (2016)

J. Du, B. Wu, L. Wang, Aspiration dynamics in structured population acts as if in a well-mixed one. Sci. Rep. UK 5, 8014 (2015)

Z. Li, Z. Yang, T. Wu, L. Wang, Aspiration-based partner switching boosts cooperation in social dilemmas. PLoS ONE 9, e978666 (2014)

M. Li, C.X. Jia, R.R. Liu, B.H. Wang, Emergence of cooperation in spatial public goods game with conditional participation. Phys. A 392(8), 1840–1847 (2013)

X. Liu, M. He, Y. Kang, Q. Pan, Aspiration promotes cooperation in the Prisoner’s dilemma game with the imitation rule. Phys. Rev. E. 94, 0121241 (2016)

W. Chen, T. Wu, Z. Li, L. Wang, Coevolution of aspirations and cooperation in spatial Prisoner’s dilemma game. J. Stat. Mech. (P01032) (2015)

K. Xu, K. Li, R. Cong, L. Wang, Cooperation guided by the coexistence of imitation dynamics and aspiration dynamics in structured populations. EPL 117, 480024 (2017)

T. Yakushkina, D.B. Saakian, New versions of evolutionary models with lethal mutations. Phys. A 507, 470–477 (2018)

B. Wu, A. Traulsen, C.S. Gokhale, Dynamic properties of evolutionary multi-player games in finite populations. Games 4(2), 182–199 (2013)

B. Wu, D. Zhou, L. Wang, Evolutionary dynamics on stochastic evolving networks for multiple-strategy games. Phys. Rev. E 84(2), 46111 (2011)

J. Du, B. Wu, P.M. Altrock, L. Wang, Aspiration dynamics of multi-player games in finite populations. J. R. Soc. Interface 11(94), 20140077 (2014)

T. Wu, L. Wang, Adaptive play stabilizes cooperation in continuous public goods games. Phys. A 495, 427–435 (2018)

J. Tu, Contribution inequality in the spatial public goods game: should the rich contribute more? Phys. A 496, 9–14 (2018)

J. Yang, Y. Chen, Y. Sun, H. Yang, Y. Liu, Group formation in the spatial public goods game with continuous strategies. Phys. A 505, 737–743 (2018)

A. McAvoy, C. Hauert, Structure coefficients and strategy selection in multiplayer games. J. Math. Biol. 72(1–2), 203–238 (2016)

X. Sui, B. Wu, L. Wang, Multiple tolerances dilute the second order cooperative dilemma. Phys. Lett. A 381(45), 3785–3797 (2017)

Y.T. Lin, H.X. Yang, Z.X. Wu, B.H. Wang, Promotion of cooperation by aspiration-induced migration. Phys. A 390(1), 77–82 (2011)

J. Tanimoto, M. Nakata, A. Hagishima, N. Ikegaya, Spatially correlated heterogeneous aspirations to enhance network reciprocity. Phys. A 391(3), 680–685 (2012)

W. Xianjia, X. Ke, Extended average abundance function of multi-player snowdrift evolutionary game under aspiration driven rule. Syst. Eng. Theory Pract. 5(39), 1128–1136 (2019)

M.A. Amaral, L. Wardil, M. Perc, J.K.L. Da Silva, Stochastic win-stay-lose-shift strategy with dynamic aspirations in evolutionary social dilemmas. Phys. Rev. E 94, 0323173 (2016)

Y.S. Chen, H.X. Yang, W.Z. Guo, Aspiration-induced dormancy promotes cooperation in the spatial Prisoner’s dilemma games. Phys. A 469, 251 (2017)

B. Wu, J. Garcia, C. Hauert, A. Traulsen, Extrapolating weak selection in evolutionary games. PLoS Comput. Biol. 9, e100338112 (2013)

P.M. Altrock, A. Traulsen, Fixation times in evolutionary games under weak selection. New J. Phys. 11, 013012 (2009)

C. Hauert, M. Holmes, M. Doebeli, Evolutionary games and population dynamics: maintenance of cooperation in public goods games. Proc. R. Soc. B Biol. Sci. 273(1600), 2565–2570 (2006)

S. Attila, P. Matja, Competition of tolerant strategies in the spatial public goods game. New J. Phys. 18(8), 83021 (2016)

A. Szolnoki, X. Chen, Competition and partnership between conformity and payoff-based imitations in social dilemmas. New J. Phys. 20(9), 568 (2018)

K. Li, R. Cong, T. Wu, L. Wang, Social exclusion in finite populations. Phys. Rev. E 91, 0428104 (2015)

Y. Dong, H. Xu, S. Fan, Memory-based stag hunt game on regular lattices. Phys. A 519, 247–255 (2019)

D. Sun, X. Kou, Punishment effect of prisoner dilemma game based on a new evolution strategy rule. Math. Probl. Eng. 57, 108024 (2014)

L. Zhang, L. Ying, J. Zhou, S. Guan, Y. Zou, Fixation probabilities of evolutionary coordination games on two coupled populations. Phys. Rev. E 94, 0323073 (2016)

R. Cong, T. Wu, Y.Y. Qiu, L. Wang, Time scales in evolutionary game on adaptive networks. Phys. Lett. A 378(13), 950–955 (2014)

M.L. Pinsky, Species coexistence through competition and rapid evolution. Proc. Natl. Acad. Sci. USA 116(7), 2407–2409 (2019)

X. Wang, S. Lv, J. Quan, The evolution of cooperation in the Prisoner’s dilemma and the snowdrift game based on particle swarm optimization. Phys. A 482, 286–295 (2017)

B. Wu, P.M. Altrock, L. Wang, A. Traulsen, Universality of weak selection. Phys. Rev. E 82, 04610642 (2010)

Acknowledgements

This research is supported by the National Natural Science Foundation of China (Nos. 72031009, 71871171) and the Key Technology Research and Development Program of Henan Province (No. 202102310312). I thank the Editors and Reviewers’ warm work earnestly. All the authors declare no conflict of interest. The data for figures in this article can be seen in 10.6084/m9.figshare.12153519.

Author information

Authors and Affiliations

Corresponding author

Appendices

Appendices

1.1 Appendix A: The proof for formula (2.3)

Formula (2.3) reveals the concrete expression of expected payoff function of multi-player threshold public goods evolutionary game model. On the basis of formula (2.3), it can be deduced that the expected payoff function \({{\pi }_{A}}(i)\) or \({{\pi }_{B}}(i)\) can be divided into two parts: the linear function related to i and the complex function related to i ( \(\varDelta {{\varepsilon }_{1}}(i)\) or \(\varDelta {{\varepsilon }_{2}}(i)\) ). In addition, the \(\varDelta {{\varepsilon }_{1}}(i)\) or \(\varDelta {{\varepsilon }_{2}}(i)\) will get close to 0 when \(i>N-d+m-1\). This means the threshold public goods game will generate to linear public goods game when i is large enough.

By inserting the expression of \({{a}_{k}}\) and \({{b}_{k}}\) defined by formula (2.2) into formula (2.1), it can be deduced that:

By using the commutative law and associative law of addition, formula (A.1a) can be rewritten as follows:

Based on the identity \(kC_{i-1}^{k}=(i-1)C_{i-2}^{k-1}\), formula (A.2) can be simplified as follows:

Based on the Vandermonde identity \(\sum \nolimits _{k=0}^{d-1}{\left( C_{i-2}^{k-1}C_{N-i}^{d-1-k} \right) }=C_{N-2}^{d-2}\) and \(\sum \nolimits _{k=0}^{d-1}{\left( C_{i-1}^{k}C_{N-i}^{d-1-k} \right) }=C_{N-1}^{d-1}\), formula (A.3) can be simplified. Then we can obtain formula (2.3a) and (2.4a).

Similarly, by using the commutative law and associative law of addition, the formula (A.2b) can be rewritten as follows:

Based on the identity \(kC_{i}^{k}=iC_{i-1}^{k-1}\), formula (A.4) can be simplified as follows:

Based on the Vandermonde identity \(\sum \nolimits _{k=0}^{d-1}{\left( C_{i-2}^{k-1}C_{N-i}^{d-1-k} \right) }=C_{N-2}^{d-2}\) and \(\sum \nolimits _{k=0}^{d-1}{\left( C_{i-1}^{k}C_{N-i}^{d-1-k} \right) }=C_{N-1}^{d-1}\), formula (A.5) can be simplified. Then we can obtain formula (2.3b) and (2.4b).

Furthermore, the characteristics of the \(\varDelta {{\varepsilon }_{1}}(i)=-\sum \nolimits _{k=0}^{m-2}{\frac{C_{i-1}^{k}C_{N-i}^{d-1-k}}{C_{N-1}^{d-1}}\left( \frac{k+1}{d}rc \right) }\) will be taken into consideration. Based on the properties of permutation and combination function, it can be deduced that:

By combining formula (A.6a) and (A.6b), it can be deduced that there will be \(\varDelta {{\varepsilon }_{1}}(i)=0\) if \(i<k+1\) or \(i>N-d+k+1\). Because \(0\le k\le m-2\), it can be deduced that there will be \(\varDelta {{\varepsilon }_{1}}(i)=0\) if \(i<1\) or \(i>N-d+m-1\).

Moreover, the characteristics of the \(\varDelta {{\varepsilon }_{2}}(i)=-\sum \nolimits _{k=0}^{m-1}{\frac{C_{i}^{k}C_{N-i-1}^{d-1-k}}{C_{N-1}^{d-1}}\left( \frac{k}{d}rc+c \right) }\) will be taken into account. Based on the properties of permutation and combination function, it can be deduced that:

By analyzing formula (A.7a) and (A.7b), it can be deduced that there will be \(\varDelta {{\varepsilon }_{2}}(i)=0\) if \(i<k\) or \(i>N-d+k\). Because \(0\le k\le m-2\), it can be deduced that there will be \(\varDelta {{\varepsilon }_{2}}(i)=0\) if \(i<0\) or \(i>N-d+m-1\).

Then, it can be concluded from the above discussions that there will be \(\varDelta {{\varepsilon }_{1}}(i)=0\) and \(\varDelta {{\varepsilon }_{2}}(i)=0\) if \(i<0\) or \(i>N-d+m-1\). Because the inequality \(i<0\) can not hold, it can be deduced that there will be \(\varDelta {{\varepsilon }_{1}}(i)=0\) and \(\varDelta {{\varepsilon }_{2}}(i)=0\) if \(i>N-d+m-1\). In this situation, both \({{\pi }_{A}}(i)\) and \({{\pi }_{B}}(i)\) will change linearly with i.

1.2 Appendix B: The proof for formula (3.5)

On the basis of formula (3.5), we can obtain the approximate expression of average abundance function when selection intensity is large. Because of the approximation formula (3.5), the range of summation will be reduced. In addition, the number of operations for average abundance function \({{X}_{A}}(\omega )\) will be reduced and the operating efficiency for numerical simulation will be improved. Furthermore, when analyzing the properties of average abundance function \({{X}_{A}}(\omega )\), we only need to focus on the case for \(i\in \left[ 1,{{i}^{*}}+1 \right] \) (not the \(i\in \left[ 1,N \right] \)).

We have already deduced that when i is large enough, the following relationship will hold:

We will set the critical i for this situation as \(i_0\). In other words, that formula (B.1) will hold if \(i>i_0\).

Based on result 3.1, it can be deduced that the \({\pi _B}(i)\) will increase with the increase of i when i is large. This means that the inequality \({\pi _B}(i) > \alpha + \varepsilon \) will hold if i is large enough.

Furthermore, it can be deduced that if i is large enough to make sure the inequality \({\pi _B}(i) > \alpha + \varepsilon \) holds, at the same time selection intensity \(\omega \) is large enough, the following inequality will hold:

We will set the critical i for formula (B.2) as \(i_1\) and set the critical \(\omega \) for formula (B.2) as \(\omega _1\). In other words, formula (B.2) will hold when \(i>i_1\) and \(\omega >\omega _1\).

We have already deduced that the formula (B.1) will hold when \(i>i_0\). In addition, formula (B.2) will hold when \(i>i_1\) and \(\omega >\omega _1\). So it can be concluded that when \(i>{{i}^{*}}\) and \(\omega >\omega _1\), both formula (B.1) and (B.2) will hold. It should be noted that \({{i}^{*}}\) can be defined as follows:

Then, by combining formula (B.1b) and (B.2), it can be deduced that:

For the convenience of description, we will set:

Based on the above discussions, it can deduced that when \(i>{{i}^{*}}\) and \(\omega >\omega _1\), the following formula will hold:

Then we will consider how to use formula (B.6) to simplify the \({\mathop {\prod }}_{i=0}^{j-1}\,h(i,\omega )\) in formula (2.7). It can be deduced that when \(j>{{i}^{*}}\text {+}1\), the \({\mathop {\prod }}_{i=0}^{j-1}\,h(i,\omega )\) can be rewritten as:

By inserting formula (B.6) into (B.7), the following result can be obtained:

Then, the \({\mathop {\prod }}_{i=0}^{j-1}\,h(i,\omega )\) can be rewritten as :

Based on formula (B.9), it can be deduced that:

Then it can be deduced that the first item on the right side of formula (B.10) is the summation for the case of \(i\le {{i}^{*}}\), and the second item is the summation for the case of \(i>{{i}^{*}}\). Furthermore, by inserting formula (B.6) into (B.10), it can be deduced that:

In addition, it should be noticed that in formulas (B.11a) and (B.11b), the \({\mathop {\prod }}_{i=0}^{{{i}^{*}}}\,h(i,\omega )\) in \(\sum \nolimits _{j={{i}^{*}}+2}^{N}{j\mathop {\prod }}_{i=0}^{{{i}^{*}}}\,h(i,\omega )\underset{i={{i}^{*}}+1}{\overset{j-1}{\mathop {\prod }}}\,\frac{N-i}{i+1}{{e}^{-\omega \varepsilon }}\) and \(\sum \nolimits _{j={{i}^{*}}+2}^{N}{\mathop {\prod }}_{i=0}^{{{i}^{*}}}\,h(i,\omega ){\mathop {\prod }}_{i={{i}^{*}}+1}^{j-1}\,\frac{N-i}{i+1}{{e}^{-\omega \varepsilon }}\) can be moved out of the summation. So formulas (B.11a) and (B.11b) can be rewritten as follows:

By analyzing the \(\underset{i={{i}^{*}}+1}{\overset{j-1}{\mathop {\prod }}}\,\frac{N-i}{i+1}{{e}^{-\omega \varepsilon }}\) in formula (B.12), it can be deduced that:

By inserting formula (B.13) into (B.12), it can be deduced that:

Moreover, we should attach importance to the item \(\sum \nolimits _{j={{i}^{*}}+2}^{N}{jC_{N}^{j}{{e}^{-\omega \varepsilon \left( j-{{i}^{*}}-1 \right) }}}\) and \(\sum \nolimits _{j={{i}^{*}}+2}^{N}{C_{N}^{j}{{e}^{-\omega \varepsilon \left( j-{{i}^{*}}-1 \right) }}}\) in formulas (B.14a) and (B.14b). Based on the fact that \({{i}^{*}}+2\le j\le N\), it can be deduced that \(j-{{i}^{*}}-1>0\). So the \({{e}^{-\omega \varepsilon \left( j-{{i}^{*}}-1 \right) }}\) will be sufficiently small when selection intensity \(\omega \) is large enough. We will set the critical \(\omega \) for this relationship as \(\omega _2\). In other words, the relationship \({{e}^{-\omega \varepsilon \left( j-{{i}^{*}}-1 \right) }}\rightarrow 0\) will hold when \(\omega >\omega _2\).

Considering the analyses above, it can be deduced that when \(i>{{i}^{*}}\) and \(\omega >{{\omega }^{*}}\), formula (B.15) will hold:

It should be noted that the expressions for \({{\omega }^{*}}\) is defined as follows:

Then, by inserting formula (B.15) into (2.7), the approximate expression of average abundance function can be obtained:

1.3 Appendix C: The proof for Property 5.1 and formula (5.1)

On the basis of Property 5.1, we can analyze how average abundance function changes with m when \(m=0.5\). On the basis of formula (5.1), we can obtain the concrete expression for the approximation formula of average abundance function of multi-player threshold public goods evolutionary game model when selection intensity \(\omega \) is small. Furthermore, formula (5.1) lays a foundation for the formula (5.5), (5.8), (5.10) and so on. This means formula (5.1) will play an important role in the following research on the influence of parameters (\(d, r, c, \alpha \)) on average abundance function.

Many scholars have deduced the approximation expression of average abundance function when \(\omega \rightarrow 0\) [63]:

Since \({{\pi }_{A}}(i)=\sum \nolimits _{k=0}^{d-1}{\frac{C_{i-1}^{k}C_{N-i}^{d-1-k}}{C_{N-1}^{d-1}}{{a}_{k}}}\) and \({{\pi }_{B}}(i)=\sum \nolimits _{k=0}^{d-1}{\frac{C_{i}^{k}C_{N-i-1}^{d-1-k}}{C_{N-1}^{d-1}}{{b}_{k}}}\) , it can be deduced that the \({{a}_{k}}-{{b}_{k}}\) in formula (C.1) reflects the direct connection between \({\pi _A}\left( i \right) \) and \({\pi _B}\left( i \right) \). Furthermore, it can be deduced that when selection intensity is sufficient small (\(\omega \rightarrow 0\)), the indirect connection between \({\pi _A}\left( i \right) \) and \({\pi _B}\left( i \right) \) brought by aspiration level \(\alpha \) will become the direct connection between \({\pi _A}\left( i \right) \) and \({\pi _B}\left( i \right) \) reflected by \({{a}_{k}}-{{b}_{k}}\) in formula (C.1).

By inserting formula (2.2) into (C.1), we can get:

Based on formula (C.2), it can be deduced that:

From the above analysis, formula (5.1) can be proved. In addition, this will lay a foundation for proving Property 5.1. Based on formula (C.3), it can be deduced that:

The function g(m) in formula (C.4) can be defined as follows:

It can be deduced that whether formula (C.4) is positive or negative depends on function \(g\left( m \right) \) because the inequality \(1<m<d\) holds. In other words, whether \({{\left. {{X}_{A}}(\omega ) \right| }_{m+1}}-{{\left. {{X}_{A}}(\omega ) \right| }_{m}}\) is positive or negative will be determined by function \(g\left( m \right) \).

Furthermore, it can be deduced that the function \(g\left( m \right) \) is a monadic quadratic equation related to m and the two roots of this equation can be defined as follows:

Based on the inequality \(r>1\), it can be deduced that:

It can be concluded that \({{m}_{1}}<0\) and \(0<{{m}_{2}}<d\). Then, the \({{m}_{1}}\) will be discarded and \(0<{{m}_{2}}<d\) will be retained because the condition \(1<m<d\) must be satisfied in the threshold public goods evolutionary game model.

Moreover, it can be deduced based on the properties of monadic quadratic equation that the function \(g\left( m \right) \) will be larger than 0 when \(0<m<{{m}_{2}}\). In addition, the function \(g\left( m \right) \) will be smaller than 0 when \({{m}_{2}}<m<d\). Also, the function \(g\left( m \right) \) will be equal to 0 when \(m={{m}_{2}}\). Combining the discussions above, it can be concluded that:

It has been deduced that whether \({{\left. {{X}_{A}}(\omega ) \right| }_{m+1}}-{{\left. {{X}_{A}}(\omega ) \right| }_{m}}\) is positive or negative will be determined by function \(g\left( m \right) \). So there will be:

Then it can be deduced that the \({{X}_{A}}(\omega )\) will increase with m when \(0<m<{{m}_{2}}\). In addition, the \({{X}_{A}}(\omega )\) will decrease with m when \({{m}_{2}}+1<m<d\). Moreover, the \({{X}_{A}}(\omega )\) will reach the maximum value when \(m={{m}_{2}}\) or \(m={{m}_{2}}+1\).

It should be noted that \({{m}_{2}}\) is a decimal. Since the threshold m must be an integer, it can be deduced that \({{X}_{A}}(\omega )\) will increase with m when \(0<m\le {\text {int}}\left( \frac{d}{4r}\left[ r-1+\sqrt{{{r}^{2}}+6r+1} \right] \right) +1\) and decrease with m when \({\text {int}}\left( \frac{d}{4r}\left[ r-1+\sqrt{{{r}^{2}}+6r+1} \right] \right) +1<m<d\). In addition, \({{X}_{A}}(\omega )\) will reach the maximum value when \(m={\text {int}}\left( \frac{d}{4r}\left[ r-1+\sqrt{{{r}^{2}}+6r+1} \right] \right) +1\). Then Property 5.1 will be proved.

1.4 Appendix D: The mathematical interpretations of Result 5.1.1–5.1.3

1.4.1 The mathematical interpretation of Result 5.1.1

Based on formula (5.1), it can be deduced that:

On the one hand, based on formula (D.1), it is easy to deduce that the average abundance function will be larger than 0.5 when \(d\le r\) :

On the other hand, we will consider the situation when \(d>r\). Then it is necessary to compare \(mC_{d-1}^{m-1}r\) and \(\left( d-r \right) \sum \nolimits _{k=m}^{d-1}{C_{d-1}^{k}}\) in formula (D.1). It can be deduced that there will be \(mC_{d-1}^{m-1}r<\left( d-r \right) \sum \nolimits _{k=m}^{d-1}{C_{d-1}^{k}}\) because d is large enough. So average abundance function will be smaller than 0.5 in this situation. Moreover, it can be deduced that the \({{2}^{d+2}}d\) as part of the denominator is very large. So average abundance function will change slowly.

1.4.2 The mathematical interpretation of Result 5.1.2

The result 5.1.2 can be explained on the basis of formula (5.5):

Because \(d>r\), it is necessary to compare \(mC_{d-1}^{m-1}r\) and \(\left( d-r \right) \sum \nolimits _{k=m}^{d-1}{C_{d-1}^{k}}\) in formula (5.5).

On the one hand, when \(d=11\), it can be deduced that \(\omega \frac{1}{{{2}^{d+2}}}\frac{c}{d}\left[ mC_{d-1}^{m-1}r-\left( d-r \right) \sum \nolimits _{k=m}^{d-1}{C_{d-1}^{k}} \right] \) will be small and \(\omega \frac{1}{{{2}^{d+2}}}\frac{c}{d}\left[ mC_{d-1}^{m-1}r-\left( d-r \right) \sum \nolimits _{k=m}^{d-1}{C_{d-1}^{k}} \right] >0\).

On the other hand, when d keeps increasing, it can be deduced that \(C_{d-1}^{m-1}>C_{d-1}^{k}\) (\(m\le k\le d-1\)). So we can obtain that:

Based on formula (D.3), it can be deduced that the average abundance function will be larger than 0.5. In addition, the \({{2}^{d+2}}d\) as part of the denominator is very large. So average abundance function will be slightly larger than 0.5.

1.4.3 The mathematical interpretation of Result 5.1.3

On the one hand, it can be deduced that individuals will become more rational when selection intensity \(\omega \) becomes larger. In other words, more individuals in the group will choose strategy B when selection intensity \(\omega \) increases from \(\omega =0.5\) to \(\omega =4\) or \(\omega =9\). In addition, it can be deduced that with the increase of the size of group d, the proportion of individuals choosing strategy A in the group will decrease. Combining the discussions above, it can be deduced that the average abundance function will decrease with d when \(\omega =4\) or \(\omega =9\).

1.5 Appendix E: The mathematical interpretations of Result 5.2.1-5.2.3

1.5.1 The mathematical interpretation of Result 5.2.1

The result 5.2.1 when \(\omega =0.5\) and \(m=4\) can be explained based on formula (5.1):

Based on formula (5.1), it can be deduced that:

So average abundance function will increase with r when \(\omega =0.5\). In addition, the trend is close to linear change.

The reason why average abundance function will increase with r when \(\omega =0.5\) if \(m=10\) is similar to the situation when \(m=4\). But it should be noted the corresponding trend is not linear change. Furthermore, it can be deduced that the applicability of formula (5.1) is limited when \(m=10\). It indicates that the complete applicability of formula (5.1) requires that m should be smaller (at least \(m<10\)).

1.5.2 The mathematical interpretation of Result 5.2.2

First of all, we will try to explain why average abundance function will decrease with r when \(r<4\). Based on the characteristics of \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\), it can be deduced that the inequality \({{\pi }_{B}}(i)>{{\pi }_{A}}(i+1)\) holds in most cases when \(r<4\). This means that in the process of the increase of r, the \({{\pi }_{B}}(i)\) will become larger than aspiration level \(\alpha \) soon, while the \({{\pi }_{A}}(i+1)\) is still smaller than aspiration level \(\alpha \) at the same time. In other words, \({{\pi }_{B}}(i)>\alpha \) and \({{\pi }_{A}}(i+1)<\alpha \). Therefore, more individuals will choose strategy B. So average abundance function will decrease with r when \(r<4\).

Then, we will try to explain why average abundance function will increase with r when \(r>4\). Based on the expected payoff function \(\pi \left( \centerdot \right) \) defined by formula (3.1) and the function \(h\left( i,\omega \right) \) defined by formula (2.7), this phenomenon can be understood.

Based on formula (3.1), we can obtain the derivative of \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) with respect to r. In addition, we can compare the derivative of \({{\pi }_{A}}(i+1)\) with the derivative of \({{\pi }_{B}}(i)\) :

Based on formula (E.2), it can be concluded that both \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) will increase with r. In addition, the increasing rate of \({{\pi }_{A}}(i+1) \) will be higher than that of \({{\pi }_{B}}(i)\).

Based on the discussions above, it can be deduced that both \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) will become larger than aspiration level \(\alpha \) when r increases to a very high level (\(r>4\) ). In other words, \({{\pi }_{A}}(i+1)>\alpha , {{\pi }_{B}}(i)>\alpha \). In addition, the increasing rate of \({{\pi }_{A}}(i+1)\) with respect to r is larger than that of \({{\pi }_{B}}(i)\). Furthermore, combining the discussions above, it can be concluded that \(\frac{1+{{e}^{-\omega (\alpha -{{\pi }_{A}}(i+1))}}}{1+{{e}^{-\omega (\alpha -{{\pi }_{B}}(i))}}}\) will increase with r. Moreover, it can be deduced that \(h\left( i,\omega \right) \) will increase with r because \(h\left( i,\omega \right) =\frac{N-i}{i+1}\times \frac{1+{{e}^{-\omega (\alpha -{{\pi }_{A}}(i+1))}}}{1+{{e}^{-\omega (\alpha -{{\pi }_{B}}(i))}}}\). So average abundance function will increase with r when \(r>4\) .

1.5.3 The mathematical interpretation of Result 5.2.3

Based on the characteristics of \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\), it can be deduced that the inequality \({{\pi }_{B}}(i)<{{\pi }_{A}}(i+1)\) holds in most cases when \(m=10\). This means that in the process of the increase of r, the \({{\pi }_{A}}(i+1)\) will become larger than aspiration level \(\alpha \) and the \({{\pi }_{B}}(i)\) is still smaller than aspiration level \(\alpha \) at the same time. In other words, \({{\pi }_{A}}(i+1)>\alpha \) and \({{\pi }_{B}}(i)< \alpha \). Therefore, more individuals will choose strategy A. So average abundance function will increase with r.

1.6 Appendix F: The mathematical interpretations of Result 5.3.1-5.3.3

1.6.1 The mathematical interpretation of Result 5.3.1

The result 5.3.1 can be explained based on formula (5.1):

Based on formula (5.1), it can be deduced that:

When \(m=4\), by inserting the values of parameters into the \(\frac{{\partial {X_A}(\omega )}}{{\partial c}}\), it can be deduced that:

Based on the properties of permutation and combination function, the following inequality will hold:

Based on formulas (F.1)–(F.3), it can be deduced that \(\frac{{\partial {X_A}(\omega )}}{{\partial c}} < 0\). So average abundance function will decrease with c when \(\omega =0.5\). In addition, the trend is close to linear change.

1.6.2 The mathematical interpretation of Result 5.3.2

When \(m=10\), by inserting the values of parameters into the \(\frac{{\partial {X_A}(\omega )}}{{\partial c}}\), it can be deduced that:

Based on the properties of permutation and combination function, the following inequality will hold:

Based on formulas (F.4)–(F.5), it can be deduced that \(\frac{{\partial {X_A}(\omega )}}{{\partial c}} > 0\). So average abundance function will increase with c when \(\omega =0.5\).

It should be noted the corresponding trend is not linear change when \(m=10\). Furthermore, it can be deduced that the applicability of formula (5.1) is limited when \(m=10\). It indicates that the complete applicability of formula (5.1) requires that m should be smaller (at least \(m<10\)).

1.6.3 The mathematical interpretation of Result 5.3.3

When \(m=4\), based on formula (3.1), we can obtain the derivative of \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) with respect to c. In addition, we can compare the derivative of \({{\pi }_{A}}(i+1)\) with the derivative of \({{\pi }_{B}}(i)\):

Furthermore, by inserting the values of parameters into formula (F.6c), it can be deduced that:

Based on the discussions above, it can be deduced that both \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) will increase with c. In addition, the difference between \(\frac{{\partial {\pi _A}(i + 1)}}{{\partial c}}\) and \(\frac{{\partial {\pi _B}(i)}}{{\partial c}}\) is so small that it can be ignored because \(\frac{{\partial {\pi _A}(i + 1)}}{{\partial c}} - \frac{{\partial {\pi _B}(i)}}{{\partial c}} < \frac{r}{d} - 1 \approx - 0.47\) and \(-0.47\) is very small. Furthermore, it can be deduced that \(h\left( i,\omega \right) \) will basically remain unchanged with c because \(h\left( i,\omega \right) =\frac{N-i}{i+1}\times \frac{1+{{e}^{-\omega (\alpha -{{\pi }_{A}}(i+1))}}}{1+{{e}^{-\omega (\alpha -{{\pi }_{B}}(i))}}}\). So average abundance function will basically remain unchanged with c when \(m=4\). The tendency for the situation when \(m=10\) is similar to this.

1.7 Appendix G: The mathematical interpretations of Result 5.4.1-5.4.4

1.7.1 The mathematical interpretation of Result 5.4.1

The result 5.4.1 can be explained based on formula (5.1):

Based on formula (5.1), it can be deduced that \(\frac{{\partial {X_A}(\omega )}}{{\partial \alpha }} = 0\). So average abundance function will basically remain stable with the increase of \(\alpha \) when \(\omega =0.5\) if \(m=4\).

1.7.2 The mathematical interpretation of Result 5.4.2

It should be noted that average abundance function will decrease with \(\alpha \) when \(\omega =0.5\) if \(m=10\). This phenomenon is inconsistent with the conclusion that \(\frac{{\partial {X_A}(\omega )}}{{\partial \alpha }} = 0\). Furthermore, it can be deduced that the applicability of formula (5.1) is limited when \(m=10\). It indicates that the complete applicability of formula (5.1) requires that m should be smaller (at least \(m<10\)).

1.7.3 The mathematical interpretation of Result 5.4.3

When \(m=4\), based on formula (3.1), we can obtain the derivative of \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) with respect to \(\alpha \). In addition, we can compare the derivative of \({{\pi }_{A}}(i+1)\) with the derivative of \({{\pi }_{B}}(i)\):

Based on formula (G.1), it can be concluded that neither \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) is related to \(\alpha \). This result lays a foundation for the following discussions.

At the beginning, we will try to explain why average abundance function will basically remain stable with \(\alpha \) when \(\alpha <4.1\). When \(\alpha \) is small (\(\alpha <4.1\)), both \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) will become larger than aspiration level \(\alpha \). In other words, \({{\pi }_{A}}(i+1)>\alpha , {{\pi }_{B}}(i)>\alpha \). Furthermore, the following inequality will be established:

Because of the establishment of formula (G.2), the function \(h\left( i,\omega \right) \) will gradually degenerate to the following form:

Based on formula (3.1) and (G.3), it can be deduced that:

Based on formula (G.4), it can be deduced that function \(h(i,\omega )\) is not related to \(\alpha \). Moreover, it can be deduced that the corresponding average abundance function will be not related to \(\alpha \). In other words, average abundance function will remain stable when \(\alpha \) is small (\(\alpha <4.1\)).

Then, we will try to explain why average abundance function will increase with \(\alpha \) and get close to 1/2 when \(\alpha >4.1\). It can be deduced that both \({{\pi }_{A}}(i+1)\) and \({{\pi }_{B}}(i)\) will become smaller than aspiration level \(\alpha \) when \(\alpha \) increases to a very high level (\(\alpha >4.1\)). In other words, \({{\pi }_{A}}(i+1)<\alpha , {{\pi }_{B}}(i)<\alpha \). Furthermore, the following inequality will be established:

Because of the establishment of formula (G.5), the function \(h\left( i,\omega \right) \) will gradually degenerate to the first part:

Based on formula (G.6), it can be deduced that average abundance function will gradually get close to 1/2 when \(\alpha \) is large enough(\(\alpha >4.1\)). In addition, it is easy to deduce that \({X_A}(\omega )\left| {_{m = 4}} \right. <0.5\) when \(\alpha <4.1\). Based on the discussions above, it can be concluded that average abundance function will increase and get close to 1/2 when \(\alpha >4.1\) (from \({X_A}(\omega )<0.5\) to \({X_A}(\omega ) \rightarrow 0.5\)).

On the whole, if \(m=4\), average abundance function will remain stable at first and then increase with \(\alpha \) when \(\omega =4\) or \(\omega =9\). In addition, average abundance function will get close to 1/2.

1.7.4 The mathematical interpretation of Result 5.4.4

The reason for result 5.4.4 is similar to the reason for result 5.4.3. It can be deduced that average abundance function will remain stable when \(\alpha \) is small (\(\alpha <4.1\)). In addition, there will be \({X_A}(\omega ) \rightarrow 0.5\) when \(\alpha \) is large enough(\(\alpha >4.1\)). Furthermore, it is easy to deduce that \({X_A}(\omega )\left| {_{m = 10}} \right. >0.5\) when \(\alpha <4.1\). Based on the discussions above, it can be concluded that average abundance function will decrease and get close to 1/2 when \(\alpha >4.1\) (from \({X_A}(\omega )>0.5\) to \({X_A}(\omega ) \rightarrow 0.5\)).

On the whole, if \(m=10\), average abundance function will remain stable at first and then decrease with \(\alpha \) when \(\omega =4\) or \(\omega =9\). In addition, average abundance function will get close to 1/2.

Rights and permissions

About this article

Cite this article

Xia, K. The average abundance function of multi-player threshold public goods evolutionary game model. Eur. Phys. J. Plus 136, 242 (2021). https://doi.org/10.1140/epjp/s13360-021-01218-0

Received:

Accepted:

Published:

DOI: https://doi.org/10.1140/epjp/s13360-021-01218-0