Abstract

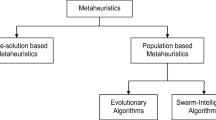

Swarm algorithms belong to the class of population-based metaheuristic optimization methods. Although they draw on a variety of metaphors, most swarm algorithms share a similar structure with common components: initialization of the solution population, diversification of solutions, and intensification of solutions. Starting from this common structure, we analyze the key approaches and methods for increasing the efficiency of swarm optimization algorithms. The survey treats swarm optimization algorithms as sets of operators and focuses on their key components rather than discussing each algorithm in detail. The main idea behind efficiency improvement is to maintain a balance between diversification and intensification. In this context, we consider mechanisms for supporting population diversity, methods for tuning and adjusting the parameters of swarm algorithms, and approaches to the hybridization of algorithms. We also indicate several open problems related to the topic of the survey.
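The operator view described above can be pictured with a small sketch. It is an illustration only and not an algorithm taken from the survey: the function names (`initialize`, `diversify`, `intensify`, `swarm_minimize`), the sphere test function, the greedy selection rule, and all parameter values are assumptions chosen for brevity.

```python
import random


def sphere(x):
    """Toy objective (assumed for illustration): sum of squares, minimum at 0."""
    return sum(v * v for v in x)


def initialize(n, dim, low, high, rng):
    """Initialization operator: uniform random population inside the bounds."""
    return [[rng.uniform(low, high) for _ in range(dim)] for _ in range(n)]


def diversify(x, low, high, rate, rng):
    """Diversification operator: resample each coordinate with probability `rate`."""
    return [rng.uniform(low, high) if rng.random() < rate else v for v in x]


def intensify(x, best, step, rng):
    """Intensification operator: move a random fraction of the way toward `best`."""
    return [v + step * rng.random() * (b - v) for v, b in zip(x, best)]


def swarm_minimize(f, dim=5, n=20, iters=200, low=-5.0, high=5.0,
                   rate=0.1, step=0.5, seed=0):
    """Generic swarm loop built only from the three operators above."""
    rng = random.Random(seed)
    pop = initialize(n, dim, low, high, rng)
    best = min(pop, key=f)
    initial_best = f(best)
    for _ in range(iters):
        new_pop = []
        for x in pop:
            candidate = intensify(diversify(x, low, high, rate, rng), best, step, rng)
            # Greedy selection: keep the candidate only if it improves on x.
            new_pop.append(candidate if f(candidate) < f(x) else x)
        pop = new_pop
        # Elitism: the best solution found so far is never lost.
        best = min(pop + [best], key=f)
    return best, f(best), initial_best


best, value, initial_value = swarm_minimize(sphere)
print(f"initial best: {initial_value:.4f}, final best: {value:.6f}")
```

In this sketch, `rate` controls how much diversification is applied and `step` controls how strongly solutions are pulled toward the current best, so varying the two gives a rough feel for the balance between diversification and intensification.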

Funding

This work was supported by the Russian Foundation for Basic Research, project no. 19-17-50050.

Additional information

Translated by V. Potapchouck

Cite this article

Hodashinsky, I.A. Methods for Improving the Efficiency of Swarm Optimization Algorithms. A Survey. Autom Remote Control 82, 935–967 (2021). https://doi.org/10.1134/S0005117921060011

DOI: https://doi.org/10.1134/S0005117921060011