Abstract

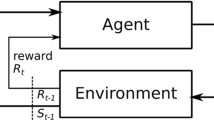

This article considers adaptive control architectures that integrate active sensory-motor systems with decision systems based on reinforcement learning. One unavoidable consequence of active perception is that the agent's internal representation often confounds external world states. We call this phenomenon perceptual aliasing and show that it destabilizes existing reinforcement learning algorithms with respect to the optimal decision policy. We then describe a new decision system that overcomes these difficulties for a restricted class of decision problems. The system incorporates a perceptual subcycle within the overall decision cycle and uses a modified learning algorithm to suppress the effects of perceptual aliasing. The result is a control architecture that learns not only how to solve a task but also where to focus its visual attention in order to collect the necessary sensory information.
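To make the claim about perceptual aliasing concrete, here is a minimal sketch in Python. It is not the decision system the article describes. It assumes a hypothetical two-state problem (world states `A` and `B` that may share one internal state `x`) and uses plain one-step tabular Q-learning with a constant step size. All names (`train`, `greedy_return`, `count_flips`, `see_true`, `see_aliased`) are illustrative.

```python
# Toy illustration of perceptual aliasing (hypothetical setup, not the
# article's decision system): world states "A" and "B" may both be
# perceived as the internal state "x". In A the rewarding action is
# "left"; in B it is "right". Every episode is a single terminal step.

ACTIONS = ("left", "right")
REWARD = {
    ("A", "left"): 1.0, ("A", "right"): 0.0,
    ("B", "left"): 0.0, ("B", "right"): 1.0,
}


def greedy(q, s):
    """Action with the highest Q-value in internal state s (ties -> first)."""
    return max(ACTIONS, key=lambda a: q.get((s, a), 0.0))


def train(perceive, episodes=2000, alpha=0.1):
    """Tabular Q-learning whose table is indexed by the *perceived* state.

    Episodes alternate between world states A and B, and every action is
    tried each episode so the run is deterministic. Returns the Q-table and
    the greedy action for A's percept after each episode.
    """
    q = {}
    trace = []
    for t in range(episodes):
        world = "A" if t % 2 == 0 else "B"
        s = perceive(world)
        for a in ACTIONS:
            old = q.get((s, a), 0.0)
            # Terminal step: the target is the immediate reward alone.
            q[(s, a)] = old + alpha * (REWARD[(world, a)] - old)
        trace.append(greedy(q, perceive("A")))
    return q, trace


def greedy_return(q, perceive):
    """Mean reward of the greedy policy over the two world states."""
    return sum(REWARD[(w, greedy(q, perceive(w)))] for w in ("A", "B")) / 2


def count_flips(trace):
    """Number of times the greedy action changes between episodes."""
    return sum(prev != cur for prev, cur in zip(trace, trace[1:]))


def see_true(world):
    # The agent perceives the true world state.
    return world


def see_aliased(world):
    # The agent confounds A and B: both map to the same internal state.
    return "x"


q_full, trace_full = train(see_true)
q_alias, trace_alias = train(see_aliased)
print(greedy_return(q_full, see_true), count_flips(trace_full))        # 1.0 0
print(greedy_return(q_alias, see_aliased), count_flips(trace_alias))   # 0.5 1999
```

When the agent perceives the true state, the greedy policy is optimal and never changes. When both states are perceived as `x`, no single action can be right in both, so the best achievable mean reward drops to 0.5. With a constant step size, the greedy action for `x` switches after every episode rather than settling. This is a small-scale version of the instability the abstract describes.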

Cite this article

Whitehead, S.D., Ballard, D.H. Learning to Perceive and Act by Trial and Error. Machine Learning 7, 45–83 (1991). https://doi.org/10.1023/A:1022619109594

DOI: https://doi.org/10.1023/A:1022619109594