Abstract

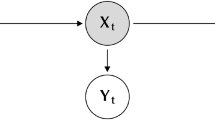

Hidden Markov models (HMMs) have proven to be one of the most widely used tools for learning probabilistic models of time series data. In an HMM, information about the past is conveyed through a single discrete variable, the hidden state. We discuss a generalization of HMMs in which this state is factored into multiple state variables and is therefore represented in a distributed manner. We describe an exact algorithm for inferring the posterior probabilities of the hidden state variables given the observations, and relate it to the forward–backward algorithm for HMMs and to algorithms for more general graphical models. Due to the combinatorial nature of the hidden state representation, this exact algorithm is intractable. As in other intractable systems, approximate inference can be carried out using Gibbs sampling or variational methods. Within the variational framework, we present a structured approximation in which the state variables are decoupled, yielding a tractable algorithm for learning the parameters of the model. Empirical comparisons suggest that these approximations are efficient and provide accurate alternatives to the exact methods. Finally, we use the structured approximation to model Bach's chorales and show that factorial HMMs can capture statistical structure in this data set which an unconstrained HMM cannot.
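To make the combinatorial blow-up concrete, here is a minimal pure-Python sketch, under stated assumptions, that treats M hidden chains of K states each as one ordinary HMM over all K^M joint states and runs the standard forward recursion on it. Because the chains evolve independently a priori, each joint initial and transition probability is a product of per-chain probabilities. The observation model (a scalar Gaussian whose mean is the sum of per-chain contributions `W[m][s_m]`) and all function and parameter names are hypothetical choices for illustration. This naive flattening is not the exact algorithm or the structured variational approximation described in the abstract; it only shows why exact inference becomes expensive, since each time step sums over (K^M)^2 pairs of joint states.

```python
import itertools
import math


def gaussian_pdf(y, mean, var):
    """Density of a scalar Gaussian N(mean, var) evaluated at y."""
    return math.exp(-0.5 * (y - mean) ** 2 / var) / math.sqrt(2 * math.pi * var)


def joint_states(M, K):
    """All K**M joint configurations (s_1, ..., s_M) of the M chains."""
    return list(itertools.product(range(K), repeat=M))


def emission(y, state, W, var):
    """Hypothetical observation model: y ~ N(sum_m W[m][s_m], var)."""
    mean = sum(W[m][s] for m, s in enumerate(state))
    return gaussian_pdf(y, mean, var)


def factorial_forward(ys, pis, Ps, W, var):
    """Likelihood p(y_1, ..., y_T) from the forward recursion on the
    flattened K**M-state HMM (naive exact computation).

    pis[m]: initial distribution of chain m (length K)
    Ps[m]:  K x K transition matrix of chain m, Ps[m][i][j] = P(next=j | cur=i)
    """
    M, K = len(pis), len(pis[0])
    states = joint_states(M, K)
    n = len(states)
    # Independent chains: joint probabilities factor across chains.
    init = [math.prod(pis[m][s[m]] for m in range(M)) for s in states]
    trans = [[math.prod(Ps[m][a[m]][b[m]] for m in range(M)) for b in states]
             for a in states]
    alpha = [init[i] * emission(ys[0], s, W, var) for i, s in enumerate(states)]
    for y in ys[1:]:
        # Each step sums over all n * n pairs of joint states.
        alpha = [sum(alpha[i] * trans[i][j] for i in range(n))
                 * emission(y, s, W, var)
                 for j, s in enumerate(states)]
    return sum(alpha)


if __name__ == "__main__":
    pis = [[0.6, 0.4], [0.5, 0.5]]
    Ps = [[[0.9, 0.1], [0.2, 0.8]], [[0.7, 0.3], [0.3, 0.7]]]
    W = [[0.0, 1.0], [0.0, 2.0]]
    ys = [0.1, 2.9, 1.2]
    print(factorial_forward(ys, pis, Ps, W, var=0.5))
```

Even small models show the growth: with M = 3 chains of K = 4 states there are already 4^3 = 64 joint states, and each forward step touches 64^2 = 4096 state pairs.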




Cite this article

Ghahramani, Z., Jordan, M.I. Factorial Hidden Markov Models. Machine Learning 29, 245–273 (1997). https://doi.org/10.1023/A:1007425814087
