Abstract

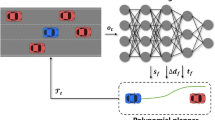

This paper proposes an improved decision-making method based on deep reinforcement learning to address on-ramp merging in highway autonomous driving. A novel safety indicator, time difference to merging (TDTM), is introduced and used together with the classic time to collision (TTC) indicator to evaluate driving safety and help the merging vehicle find a suitable gap in traffic. The autonomous driving agent is trained with the Deep Deterministic Policy Gradient (DDPG) algorithm, and an action-masking mechanism prevents unsafe actions during the policy exploration phase. The proposed DDPG + TDTM + TTC solution is tested in SUMO in on-ramp merging scenarios with different driving speeds and achieves a success rate of 99.96% without significantly impacting traffic efficiency on the main road. This success rate is higher than that of both DDPG + TTC and DDPG alone.
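To make the roles of the two indicators and the action mask concrete, the following is a minimal Python sketch, not the authors' implementation. The abstract does not define TDTM, so the sketch assumes it is the absolute difference between the times at which the merging vehicle and a main-road vehicle would reach the merge point at their current speeds. The function names, the thresholds `ttc_min` and `tdtm_min`, and the fallback braking value `brake` are all hypothetical.

```python
import math


def time_to_collision(gap_m: float, follower_speed: float, leader_speed: float) -> float:
    """Standard TTC in seconds: gap divided by closing speed.

    Returns infinity when the follower is not closing the gap.
    """
    closing_speed = follower_speed - leader_speed
    if closing_speed <= 0.0:
        return math.inf
    return gap_m / closing_speed


def time_difference_to_merging(ego_dist_m: float, ego_speed: float,
                               other_dist_m: float, other_speed: float) -> float:
    """ASSUMED definition of TDTM (not given in the abstract):
    absolute difference between the two vehicles' arrival times
    at the merge point, at constant current speeds.
    """
    if ego_speed <= 0.0 or other_speed <= 0.0:
        return math.inf
    return abs(ego_dist_m / ego_speed - other_dist_m / other_speed)


def mask_acceleration(accel: float, ttc_s: float, tdtm_s: float,
                      ttc_min: float = 3.0, tdtm_min: float = 1.5,
                      brake: float = -2.0) -> float:
    """Hypothetical action mask: if either indicator falls below its
    threshold, cap the proposed acceleration at a braking value;
    otherwise pass the agent's action through unchanged.
    """
    if ttc_s < ttc_min or tdtm_s < tdtm_min:
        return min(accel, brake)
    return accel
```

In a training loop, a mask of this kind would sit between the DDPG actor's output (plus exploration noise) and the simulator, so that unsafe accelerations are overridden before they are executed. Always braking is only one possible override; a real mask might instead choose whichever action widens the gap.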

Abbreviations

- DDPG: Deep Deterministic Policy Gradient
- DQN: Deep Q network
- DRL: Deep reinforcement learning
- MDP: Markov Decision Process
- SAC: Soft actor-critic
- TTC: Time to collision
- TDTM: Time difference to merging
- TD3: Twin delayed DDPG

Acknowledgements

This study is supported by the National Natural Science Foundation of China (Grant No. 52272421) and the Shenzhen Fundamental Research Fund (Grant No. JCYJ20190808142613246).

Ethics declarations

Conflict of interest

On behalf of all the authors, the corresponding author states that there is no conflict of interest.

Additional information

Academic Editor: Xudong Zhang

About this article

Cite this article

Li, G., Zhou, W., Lin, S. et al. On-Ramp Merging for Highway Autonomous Driving: An Application of a New Safety Indicator in Deep Reinforcement Learning. Automot. Innov. 6, 453–465 (2023). https://doi.org/10.1007/s42154-023-00235-2

DOI: https://doi.org/10.1007/s42154-023-00235-2